Haoyu Chen

Micro-AU CLIP: Fine-Grained Contrastive Learning from Local Independence to Global Dependency for Micro-Expression Action Unit Detection

Mar 17, 2026

Abstract: Micro-expression (ME) action units (Micro-AUs) provide objective clues for fine-grained genuine emotion analysis. Most existing Micro-AU detection methods learn AU features from the whole facial image or video, which conflicts with the inherent locality of AUs and results in insufficient perception of AU regions. In fact, each AU independently corresponds to specific localized facial muscle movements (local independence), while there is an inherent dependency between some AUs under specific emotional states (global dependency). Thus, this paper explores the effectiveness of the independence-to-dependency pattern and proposes a novel Micro-AU detection framework, Micro-AU CLIP, that uniquely decomposes the AU detection process into local semantic independence (LSI) modeling and global semantic dependency (GSD) modeling. In LSI, Patch Token Attention (PTA) is designed to map several local features within the AU region to the same feature space. In GSD, Global Dependency Attention (GDA) and Global Dependency Loss (GDLoss) are presented to model the global dependency relationships between different AUs, thereby enhancing each AU feature. Furthermore, considering CLIP's native limitations in micro-semantic alignment, a Micro-AU contrastive loss (MiAUCL) is designed to learn AU features through fine-grained alignment of visual and text features. Micro-AU CLIP is also effectively applied to ME recognition in an emotion-label-free way. The experimental results demonstrate that Micro-AU CLIP can fully learn fine-grained Micro-AU features, achieving state-of-the-art performance.
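For background on the visual-text alignment mentioned in this abstract, the following is a minimal sketch of the standard symmetric CLIP-style contrastive loss. It is not MiAUCL, whose formulation the abstract does not give. The function names and the `temperature=0.07` default are assumptions chosen for illustration.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum - row[i]
    return total / len(logits)


def clip_contrastive_loss(image_feats, text_feats, temperature=0.07):
    """Symmetric InfoNCE loss: pair i of image_feats and text_feats is the positive."""
    imgs = [normalize(v) for v in image_feats]
    txts = [normalize(v) for v in text_feats]
    # Cosine similarities scaled by the temperature (hypothetical default).
    logits = [[dot(i, t) / temperature for t in txts] for i in imgs]
    logits_t = [list(col) for col in zip(*logits)]
    # Average the image-to-text and text-to-image directions.
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))
```

With matched pairs the loss is close to zero, and it grows when the text features are paired with the wrong images. A fine-grained variant would presumably apply a similar objective to AU-level rather than whole-image features, but that is a guess rather than something the abstract states.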

FG-SGL: Fine-Grained Semantic Guidance Learning via Motion Process Decomposition for Micro-Gesture Recognition

Mar 17, 2026

Abstract: Micro-gesture recognition (MGR) is challenging due to subtle inter-class variations. Existing methods rely on category-level supervision, which is insufficient for capturing subtle and localized motion differences. Thus, this paper proposes a Fine-Grained Semantic Guidance Learning (FG-SGL) framework that jointly integrates fine-grained and category-level semantics to guide vision-language models in perceiving local MG motions. FG-SA adopts fine-grained semantic cues to guide the learning of local motion features, while CP-A enhances the separability of MG features through category-level semantic guidance. To support fine-grained semantic guidance, this work constructs a fine-grained textual dataset with human annotations that describes the dynamic process of MGs in four refined semantic dimensions. Furthermore, a Multi-Level Contrastive Optimization strategy is designed to jointly optimize both modules in a coarse-to-fine pattern. Experiments show that FG-SGL achieves competitive performance, validating the effectiveness of fine-grained semantic guidance for MGR.

iMiGUE-Speech: A Spontaneous Speech Dataset for Affective Analysis

Feb 25, 2026

Abstract: This work presents iMiGUE-Speech, an extension of the iMiGUE dataset that provides a spontaneous affective corpus for studying emotional and affective states. The new release focuses on speech and enriches the original dataset with additional metadata, including speech transcripts, speaker-role separation between interviewer and interviewee, and word-level forced alignments. Unlike existing emotional speech datasets that rely on acted or laboratory-elicited emotions, iMiGUE-Speech captures spontaneous affect arising naturally from real match outcomes. To demonstrate the utility of the dataset and establish initial benchmarks, we introduce two evaluation tasks for comparative assessment: speech emotion recognition and transcript-based sentiment analysis. These tasks leverage state-of-the-art pre-trained representations to assess the dataset's ability to capture spontaneous affective states from both acoustic and linguistic modalities. iMiGUE-Speech can also be synchronously paired with micro-gesture annotations from the original iMiGUE dataset, forming a uniquely multimodal resource for studying speech-gesture affective dynamics. The extended dataset is available at https://github.com/CV-AC/imigue-speech.

M-Loss: Quantifying Model Merging Compatibility with Limited Unlabeled Data

Feb 09, 2026

Abstract: Training of large-scale models is both computationally intensive and often constrained by the availability of labeled data. Model merging offers a compelling alternative by directly integrating the weights of multiple source models without requiring additional data or extensive training. However, conventional model merging techniques, such as parameter averaging, often suffer from the unintended combination of non-generalizable features, especially when source models exhibit significant weight disparities. By comparison, model ensembling, which aggregates multiple models by averaging their outputs, generally provides more stable and superior performance. However, it incurs higher inference costs and increased storage requirements. While previous studies experimentally showed the similarities between model merging and ensembling, theoretical evidence and evaluation metrics remain lacking. To address this gap, we introduce Merging-ensembling loss (M-Loss), a novel evaluation metric that quantifies the compatibility of merging source models using very limited unlabeled data. By measuring the discrepancy between parameter averaging and model ensembling at layer and node levels, M-Loss facilitates more effective merging strategies. Specifically, M-Loss serves both as a quantitative criterion of the theoretical feasibility of model merging and as a guide for parameter significance in model pruning. Our theoretical analysis and empirical evaluations demonstrate that incorporating M-Loss into the merging process significantly improves the alignment between merged models and model ensembling, providing a scalable and efficient framework for accurate model consolidation.
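To make the idea of a merging-versus-ensembling discrepancy concrete, here is a minimal sketch for a single ReLU layer. It is not the paper's M-Loss definition, which the abstract does not spell out. The mean-squared gap and all function names are assumptions made for illustration.

```python
def relu_layer(weights, x):
    """Apply one fully connected layer (no bias) followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in weights]


def average_weights(w_a, w_b):
    """Parameter averaging: element-wise mean of two weight matrices."""
    return [[(a + b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(w_a, w_b)]


def merge_ensemble_gap(w_a, w_b, unlabeled_inputs):
    """Mean squared gap between the merged layer and the two-model ensemble.

    Only inputs are needed, so the data can be unlabeled. The squared-error
    form is a hypothetical choice, not taken from the paper.
    """
    merged = average_weights(w_a, w_b)
    total, count = 0.0, 0
    for x in unlabeled_inputs:
        out_merged = relu_layer(merged, x)
        out_a = relu_layer(w_a, x)
        out_b = relu_layer(w_b, x)
        for m, a, b in zip(out_merged, out_a, out_b):
            total += (m - (a + b) / 2) ** 2  # ensemble = average of outputs
            count += 1
    return total / count
```

Without the nonlinearity, averaging weights and averaging outputs would coincide exactly. The gap is zero for identical models and grows as the source weights diverge, for example when one model activates a node that the other suppresses.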

SayNext-Bench: Why Do LLMs Struggle with Next-Utterance Prediction?

Jan 30, 2026

Abstract: We explore the use of large language models (LLMs) for next-utterance prediction in human dialogue. Despite recent advances in LLMs demonstrating their ability to engage in natural conversations with users, we show that even leading models surprisingly struggle to predict a human speaker's next utterance. In contrast, humans can readily anticipate forthcoming utterances based on multimodal cues, such as gestures, gaze, and emotional tone, from the context. To systematically examine whether LLMs can reproduce this ability, we propose SayNext-Bench, a benchmark that evaluates LLMs and Multimodal LLMs (MLLMs) on anticipating context-conditioned responses from multimodal cues spanning a variety of real-world scenarios. To support this benchmark, we build SayNext-PC, a novel large-scale dataset containing dialogues with rich multimodal cues. Building on this, we further develop a dual-route prediction MLLM, SayNext-Chat, that incorporates cognitively inspired design to emulate predictive processing in conversation. Experimental results demonstrate that our model outperforms state-of-the-art MLLMs in terms of lexical overlap, semantic similarity, and emotion consistency. Our results prove the feasibility of next-utterance prediction with LLMs from multimodal cues and emphasize (i) the indispensable role of multimodal cues and (ii) actively predictive processing as the foundation of natural human interaction, which is missing in current MLLMs. We hope that this exploration offers a new research entry toward more human-like, context-sensitive AI interaction for human-centered AI. Our benchmark and model can be accessed at https://saynext.github.io/.

Vision Large Language Models Are Good Noise Handlers in Engagement Analysis

Nov 18, 2025

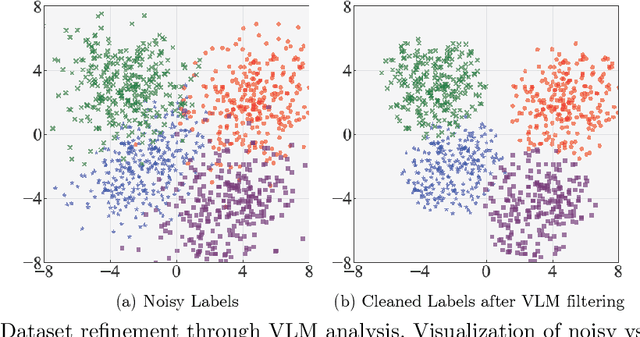

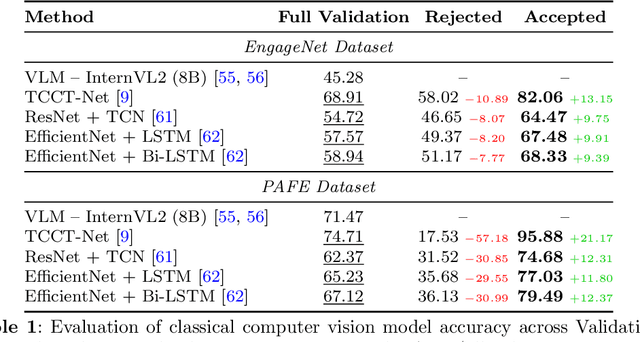

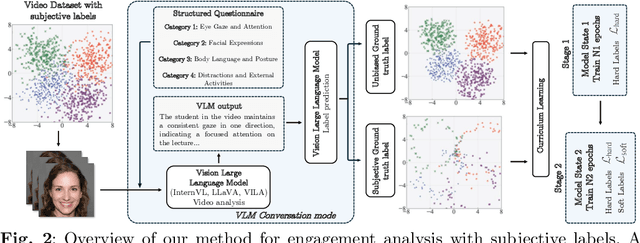

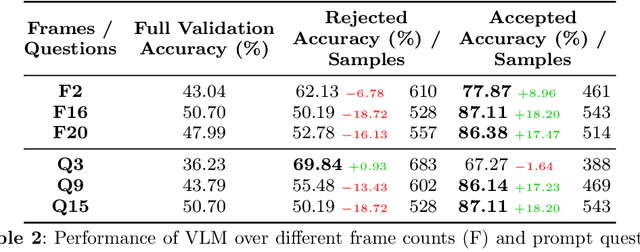

Abstract: Engagement recognition in video datasets, unlike traditional image classification tasks, is particularly challenged by subjective labels and noise that limit model performance. To overcome the challenges of subjective and noisy engagement labels, we propose a framework leveraging Vision Large Language Models (VLMs) to refine annotations and guide the training process. Our framework uses a questionnaire to extract behavioral cues and split data into high- and low-reliability subsets. We also introduce a training strategy combining curriculum learning with soft label refinement, gradually incorporating ambiguous samples while adjusting supervision to reflect uncertainty. We demonstrate that classical computer vision models trained on refined high-reliability subsets and enhanced with our curriculum strategy show improvements, highlighting the benefits of addressing label subjectivity with VLMs. This method surpasses the prior state of the art across engagement benchmarks such as EngageNet (three of six feature settings, maximum improvement of +1.21%) and DREAMS / PAFE, with F1 gains of +0.22 / +0.06.

On Evaluating the Poisoning Robustness of Federated Learning under Local Differential Privacy

Sep 05, 2025

Abstract: Federated learning (FL) combined with local differential privacy (LDP), referred to as LDPFL, enables privacy-preserving model training across decentralized data sources. However, the decentralized data-management paradigm leaves LDPFL vulnerable to participants with malicious intent. The robustness of LDPFL protocols, particularly against model poisoning attacks (MPA), where adversaries inject malicious updates to disrupt global model convergence, remains insufficiently studied. In this paper, we propose a novel and extensible model poisoning attack framework tailored for LDPFL settings. Our approach is driven by the objective of maximizing the global training loss while adhering to local privacy constraints. To counter robust aggregation mechanisms such as Multi-Krum and trimmed mean, we develop adaptive attacks that embed carefully crafted constraints into a reverse training process, enabling evasion of these defenses. We evaluate our framework across three representative LDPFL protocols, three benchmark datasets, and two types of deep neural networks. Additionally, we investigate the influence of data heterogeneity and privacy budgets on attack effectiveness. Experimental results demonstrate that our adaptive attacks can significantly degrade the performance of the global model, revealing critical vulnerabilities and highlighting the need for more robust LDPFL defense strategies against MPA. Our code is available at https://github.com/ZiJW/LDPFL-Attack
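For readers unfamiliar with the trimmed-mean defense named in this abstract, the following is a minimal sketch of coordinate-wise trimmed-mean aggregation. It shows only the defense, not the paper's attack. The function name and the `trim_k` parameter are assumptions for illustration.

```python
def trimmed_mean(updates, trim_k):
    """Coordinate-wise trimmed mean of client updates.

    updates: list of equal-length vectors, one per client.
    trim_k:  number of smallest and largest values dropped per coordinate
             (hypothetical parameter name).
    """
    if 2 * trim_k >= len(updates):
        raise ValueError("trim_k removes every client update")
    aggregated = []
    for coord in zip(*updates):  # iterate over coordinates across clients
        kept = sorted(coord)[trim_k:len(coord) - trim_k]
        aggregated.append(sum(kept) / len(kept))
    return aggregated
```

A crude outlier such as `[100.0, -100.0]` among otherwise similar updates is discarded by the trimming step. An adaptive attack of the kind the abstract describes would instead have to keep its malicious values within the range that survives trimming.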

EventRR: Event Referential Reasoning for Referring Video Object Segmentation

Aug 10, 2025

Abstract: Referring Video Object Segmentation (RVOS) aims to segment the object in a video referred to by an expression. Current RVOS methods view referring expressions as unstructured sequences, neglecting the semantic structure that is essential for referent reasoning. Moreover, in contrast to image-referring expressions, whose semantics focus only on object attributes and object-object relations, video-referring expressions also encompass event attributes and event-event temporal relations. This complexity challenges traditional image-based structured reasoning approaches. In this paper, we propose the Event Referential Reasoning (EventRR) framework. EventRR decouples RVOS into an object summarization part and a referent reasoning part. The summarization phase begins by summarizing each frame into a set of bottleneck tokens, which are then efficiently aggregated in the video-level summarization step to exchange the global cross-modal temporal context. For the reasoning part, EventRR extracts the semantic eventful structure of a video-referring expression into a highly expressive Referential Event Graph (REG), which is a single-rooted directed acyclic graph. Guided by a topological traversal of the REG, we propose Temporal Concept-Role Reasoning (TCRR) to accumulate the referring score of each temporal query from the REG leaf nodes to the root node. Each reasoning step can be interpreted as a question-answer pair derived from the concept-role relations in the REG. Extensive experiments across four widely recognized benchmark datasets show that EventRR quantitatively and qualitatively outperforms state-of-the-art RVOS methods. Code is available at https://github.com/bio-mlhui/EventRR
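The leaf-to-root accumulation over a single-rooted directed acyclic graph can be sketched as below. This is not the paper's TCRR. The multiplicative combination rule, the node names, and the per-node local scores are assumptions made for illustration, and the sketch handles a single query.

```python
from graphlib import TopologicalSorter


def accumulate_scores(children, local_score):
    """Accumulate scores from leaves to root along a topological order.

    children:    dict mapping a node to the nodes it depends on.
    local_score: dict mapping every node to its own score for one query.
    The product rule below is a hypothetical soft conjunction.
    """
    # TopologicalSorter treats the dict values as predecessors, so every
    # child is visited before its parent and the root comes last.
    order = TopologicalSorter(children).static_order()
    accumulated = {}
    for node in order:
        score = local_score[node]
        for child in children.get(node, ()):
            score *= accumulated[child]
        accumulated[node] = score
    return accumulated
```

For a toy graph where `root` depends on `jump` and `run`, which in turn depend on `dog` and `cat`, the root's accumulated score is the product of all local scores along both branches. Repeating this per temporal query would rank queries by how well they satisfy the whole expression.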

Learning Transferable Facial Emotion Representations from Large-Scale Semantically Rich Captions

Jul 28, 2025

Abstract: Current facial emotion recognition systems are predominantly trained to predict a fixed set of predefined categories or abstract dimensional values. This constrained form of supervision hinders generalization and applicability, as it reduces the rich and nuanced spectrum of emotions into oversimplified labels or scales. In contrast, natural language provides a more flexible, expressive, and interpretable way to represent emotions, offering a much broader source of supervision. Yet, leveraging semantically rich natural language captions as supervisory signals for facial emotion representation learning remains relatively underexplored, primarily due to two key challenges: 1) the lack of large-scale caption datasets with rich emotional semantics, and 2) the absence of effective frameworks tailored to harness such rich supervision. To this end, we introduce EmoCap100K, a large-scale facial emotion caption dataset comprising over 100,000 samples, featuring rich and structured semantic descriptions that capture both global affective states and fine-grained local facial behaviors. Building upon this dataset, we further propose EmoCapCLIP, which incorporates a joint global-local contrastive learning framework enhanced by a cross-modal guided positive mining module. This design facilitates the comprehensive exploitation of multi-level caption information while accommodating semantic similarities between closely related expressions. Extensive evaluations on over 20 benchmarks covering five tasks demonstrate the superior performance of our method, highlighting the promise of learning facial emotion representations from large-scale semantically rich captions. The code and data will be available at https://github.com/sunlicai/EmoCapCLIP.

SWIFT: A General Sensitive Weight Identification Framework for Fast Sensor-Transfer Pansharpening

Jul 27, 2025

Abstract: Pansharpening aims to fuse high-resolution panchromatic (PAN) images with low-resolution multispectral (LRMS) images to generate high-resolution multispectral (HRMS) images. Although deep learning-based methods have achieved promising performance, they generally suffer from severe performance degradation when applied to data from unseen sensors. Adapting these models through full-scale retraining or designing more complex architectures is often prohibitively expensive and impractical for real-world deployment. To address this critical challenge, we propose a fast and general-purpose framework for cross-sensor adaptation, SWIFT (Sensitive Weight Identification for Fast Transfer). Specifically, SWIFT employs an unsupervised sampling strategy based on data manifold structures to balance sample selection while mitigating the bias of traditional Farthest Point Sampling, efficiently selecting only 3% of the most informative samples from the target domain. This subset is then used to probe a source-domain pre-trained model by analyzing the gradient behavior of its parameters, allowing for the quick identification and subsequent update of only the weight subset most sensitive to the domain shift. As a plug-and-play framework, SWIFT can be applied to various existing pansharpening models. Extensive experiments demonstrate that SWIFT reduces the adaptation time from hours to approximately one minute on a single NVIDIA RTX 4090 GPU. The adapted models not only substantially outperform direct-transfer baselines but also achieve performance competitive with, and in some cases superior to, full retraining, establishing a new state of the art on cross-sensor pansharpening tasks for the WorldView-2 and QuickBird datasets.
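As a reference point for the sampling step, here is a minimal sketch of traditional Farthest Point Sampling, the baseline whose bias the abstract says SWIFT mitigates. It is not SWIFT's own manifold-based strategy. The function name and the `start` parameter are assumptions for illustration.

```python
import math


def farthest_point_sampling(points, k, start=0):
    """Greedy Farthest Point Sampling over a list of coordinate tuples.

    Returns the indices of k points, beginning with index `start`
    (a hypothetical parameter) and repeatedly adding the point that is
    farthest from everything selected so far.
    """
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and len(points)")
    selected = [start]
    # Distance from each point to its nearest selected point.
    min_dist = [math.dist(p, points[start]) for p in points]
    while len(selected) < k:
        nxt = max(range(len(points)), key=lambda i: min_dist[i])
        selected.append(nxt)
        for i, p in enumerate(points):
            min_dist[i] = min(min_dist[i], math.dist(p, points[nxt]))
    return selected
```

Because each step picks the most distant remaining point, this procedure favors extreme or isolated samples over dense regions, which is one plausible reading of the bias mentioned in the abstract.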
