Fang Wan

University of Chinese Academy of Sciences, Beijing, China

Thinking with Images via Self-Calling Agent

Dec 11, 2025

Abstract: Thinking-with-images paradigms have showcased remarkable visual reasoning capability by integrating visual information as dynamic elements into the Chain-of-Thought (CoT). However, optimizing interleaved multimodal CoT (iMCoT) through reinforcement learning remains challenging, as it relies on scarce high-quality reasoning data. In this study, we propose Self-Calling Chain-of-Thought (sCoT), a novel visual reasoning paradigm that reformulates iMCoT as a language-only CoT with self-calling. Specifically, a main agent decomposes the complex visual reasoning task into atomic subtasks and invokes its virtual replicas, i.e., parameter-sharing subagents, to solve them in isolated contexts. sCoT offers substantial training effectiveness and efficiency, as it requires no explicit interleaving between modalities. To further enhance optimization, sCoT employs group-relative policy optimization to reinforce effective reasoning behavior. Experiments on HR-Bench 4K show that sCoT improves overall reasoning performance by up to 1.9% with ~75% fewer GPU hours compared to strong baseline approaches. Code is available at https://github.com/YWenxi/think-with-images-through-self-calling.
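
The abstract names group-relative policy optimization as the training signal for sCoT without detailing it. As background only, the core step of GRPO scores each sampled response against the other responses drawn for the same prompt. A minimal sketch, assuming scalar rewards (e.g., 1 for a correct final answer, 0 otherwise) and the population standard deviation; both are illustrative choices, not details taken from the paper:

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each response's reward against its own sampling group.

    rewards: scalar rewards for G responses sampled from the same prompt.
    Returns (r - mean(r)) / (std(r) + eps), using the population std.
    """
    r = np.asarray(rewards, dtype=np.float64)
    return (r - r.mean()) / (r.std() + eps)

# Four sampled reasoning traces for one question; reward 1 if the answer is correct.
adv = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(adv)
```

Because advantages are normalized within each group, no learned value function is needed: responses that beat their group average receive positive advantages and the rest receive negative ones.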

One-DoF Robotic Design of Overconstrained Limbs with Energy-Efficient, Self-Collision-Free Motion

Sep 26, 2025

Abstract: While it is natural to build robotic limbs with multiple degrees of freedom (DoF) inspired by nature, a single-DoF design remains fundamental, providing benefits that include, but are not limited to, simplicity, robustness, cost-effectiveness, and efficiency. Mechanisms, especially those with multiple links and revolute joints connected in closed loops, play an enabling role in introducing motion diversity to 1-DoF systems, which are usually constrained by self-collision over a full-cycle range of motion. This study presents a novel computational approach to designing 1-DoF overconstrained robotic limbs for a desired spatial trajectory while achieving energy-efficient, self-collision-free motion in full-cycle rotations. First, we present the geometric optimization problem of linkage-based robotic limbs in a generalized formulation for self-collision-free design. Next, we formulate the spatial trajectory generation problem with overconstrained linkages by optimizing similarity and dynamics-related metrics. We further optimize the geometric shape of the overconstrained linkage to ensure smooth, collision-free motion driven by a single actuator. We validated the proposed method through various experiments, including personalized automata and bio-inspired hexapod robots. The resulting hexapod robot, featuring overconstrained robotic limbs, demonstrated outstanding energy efficiency during forward walking.

Geometric-Mean Policy Optimization

Jul 28, 2025

Abstract: Recent advancements, such as Group Relative Policy Optimization (GRPO), have enhanced the reasoning capabilities of large language models by optimizing the arithmetic mean of token-level rewards. However, GRPO suffers from unstable policy updates when processing tokens with outlier importance-weighted rewards, which manifest as extreme importance sampling ratios during training, i.e., ratios between the sampling probabilities assigned to a token by the current and old policies. In this work, we propose Geometric-Mean Policy Optimization (GMPO), a stabilized variant of GRPO. Instead of optimizing the arithmetic mean, GMPO maximizes the geometric mean of token-level rewards, which is inherently less sensitive to outliers and keeps importance sampling ratios within a more stable range. In addition, we provide comprehensive theoretical and experimental analysis to justify the design and stability benefits of GMPO. Beyond improved stability, GMPO-7B outperforms GRPO by an average of 4.1% on multiple mathematical benchmarks and by 1.4% on a multimodal reasoning benchmark; the benchmarks include AIME24, AMC, MATH500, OlympiadBench, Minerva, and Geometry3K. Code is available at https://github.com/callsys/GMPO.
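
The difference between the two objectives is easiest to see numerically. The sketch below compares an arithmetic mean of token-level importance-weighted rewards (GRPO-style) with a geometric mean (GMPO-style) for one sequence whose tokens share a single advantage. It is a simplified illustration, assuming no clipping and a sequence-level advantage; the function names are hypothetical and this is not the GMPO implementation.

```python
import numpy as np

def arithmetic_objective(ratios, advantage):
    """GRPO-style: arithmetic mean over tokens of rho_t * A."""
    rho = np.asarray(ratios, dtype=np.float64)
    return float(np.mean(rho * advantage))

def geometric_objective(ratios, advantage):
    """GMPO-style (simplified): sign(A) * geometric mean of |rho_t * A|.

    With one advantage A shared by all tokens and rho_t > 0, this equals
    A * (prod_t rho_t) ** (1 / n); the product is computed in log space
    to avoid overflow or underflow on long sequences.
    """
    rho = np.asarray(ratios, dtype=np.float64)
    return float(advantage * np.exp(np.mean(np.log(rho))))

# Nine well-behaved tokens and one outlier token whose ratio is 10.
ratios = [1.0] * 9 + [10.0]
print(arithmetic_objective(ratios, 1.0))  # 1.9
print(geometric_objective(ratios, 1.0))   # about 1.26 (10 ** 0.1)
```

The single outlier raises the arithmetic objective by 90% but the geometric one by only about 26%, which illustrates the abstract's point that the geometric mean is less sensitive to outlier tokens.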

Timestep Embedding Tells: It's Time to Cache for Video Diffusion Model

Nov 28, 2024

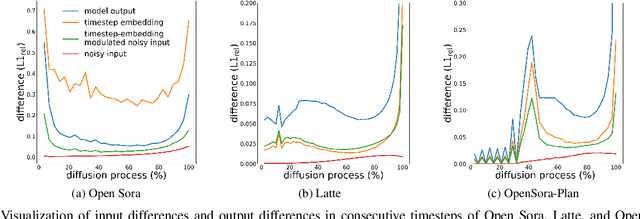

Abstract: As a fundamental backbone for video generation, diffusion models are challenged by low inference speed due to the sequential nature of denoising. Previous methods speed up the models by caching and reusing model outputs at uniformly selected timesteps. However, such a strategy neglects the fact that differences among model outputs are not uniform across timesteps, which hinders selecting the appropriate model outputs to cache and leads to a poor balance between inference efficiency and visual quality. In this study, we introduce Timestep Embedding Aware Cache (TeaCache), a training-free caching approach that estimates and leverages the fluctuating differences among model outputs across timesteps. Rather than directly using the time-consuming model outputs, TeaCache focuses on model inputs, which correlate strongly with the model outputs while incurring negligible computational cost. TeaCache first modulates the noisy inputs using the timestep embeddings so that their differences better approximate those of the model outputs. TeaCache then introduces a rescaling strategy to refine the estimated differences and uses them to guide output caching. Experiments show that TeaCache achieves up to 4.41x acceleration over Open-Sora-Plan with negligible degradation of visual quality (-0.07% VBench score).
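
A minimal sketch of the caching decision as the abstract describes it: measure how much the timestep-modulated input changes between consecutive steps, rescale that change into an estimate of the output change, accumulate it, and recompute the model only when the accumulated estimate crosses a threshold. The class name, the relative-L1 metric, the polynomial coefficients, and the threshold are illustrative assumptions, not fitted values from the paper.

```python
import numpy as np

def relative_l1(curr, prev):
    """Relative L1 distance between consecutive modulated inputs."""
    return float(np.abs(curr - prev).mean() / (np.abs(prev).mean() + 1e-8))

class CacheDecider:
    """Hypothetical helper: decides per timestep whether to recompute.

    coeffs: polynomial coefficients (highest degree first, as np.polyval
            expects) that rescale input distance into estimated output distance.
    threshold: accumulated-distance budget before a full recompute.
    """

    def __init__(self, coeffs, threshold):
        self.coeffs = coeffs
        self.threshold = threshold
        self.prev_input = None
        self.accumulated = 0.0

    def should_compute(self, modulated_input):
        if self.prev_input is None:
            self.prev_input = modulated_input
            return True  # always run the model on the first step
        d = relative_l1(modulated_input, self.prev_input)
        self.prev_input = modulated_input
        self.accumulated += float(np.polyval(self.coeffs, d))
        if self.accumulated >= self.threshold:
            self.accumulated = 0.0  # recompute and reset the budget
            return True
        return False  # reuse the cached output

decider = CacheDecider(coeffs=[1.0, 0.0], threshold=0.09)  # identity rescaling
inputs = [np.full(4, v) for v in (1.0, 1.05, 1.10, 1.15, 1.20)]
decisions = [decider.should_compute(x) for x in inputs]
print(decisions)  # [True, False, True, False, False]
```

Accumulating the rescaled distance, rather than thresholding each step in isolation, lets several small changes add up until they justify a fresh model evaluation.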

Correspondence-Guided SfM-Free 3D Gaussian Splatting for NVS

Aug 16, 2024

Abstract: Novel View Synthesis (NVS) without Structure-from-Motion (SfM) pre-processed camera poses, referred to as SfM-free methods, is crucial for promoting rapid response capabilities and enhancing robustness against variable operating conditions. Recent SfM-free methods have integrated pose optimization, designing end-to-end frameworks for joint camera pose estimation and NVS. However, most existing works rely on per-pixel image loss functions, such as L2 loss. In SfM-free methods, inaccurate initial poses lead to misalignment, which, under the constraints of per-pixel image loss functions, results in excessive gradients, causing unstable optimization and poor convergence for NVS. In this study, we propose a correspondence-guided SfM-free 3D Gaussian splatting method for NVS. We use correspondences between the target and the rendered result to achieve better pixel alignment, facilitating the optimization of relative poses between frames. We then apply the learned poses to optimize the entire scene. Each 2D screen-space pixel is associated with its corresponding 3D Gaussians through approximated surface rendering to facilitate gradient backpropagation. Experimental results underline the superior performance and time efficiency of the proposed approach compared to state-of-the-art baselines.

On Flange-based 3D Hand-Eye Calibration for Soft Robotic Tactile Welding

Jul 27, 2024

Abstract: This paper investigates the direct application of standardized designs on the robot to robot hand-eye calibration, employing 3D scanners with collaborative robots. The well-established geometric features of the robot flange are exploited by directly capturing its point cloud data. In particular, an iterative method is proposed to facilitate point cloud processing toward a refined calibration outcome. Extensive experiments are conducted over a range of collaborative robots, including Universal Robots UR5 & UR10 e-series, Franka Emika, and AUBO i5, using an industrial-grade 3D scanner (Photoneo Phoxi S & M) and a commercial-grade 3D scanner (Microsoft Azure Kinect DK). Experimental results show that translational and rotational errors converge efficiently to less than 0.28 mm and 0.25 degrees, respectively, achieving a hand-eye calibration accuracy as high as the camera's resolution and probing the hardware limit. A welding seam tracking system is presented that combines the flange-based calibration method with soft tactile sensing. Experimental results show that the system enables the robot to adjust its motion in real time, ensuring consistent weld quality and paving the way for more efficient and adaptable manufacturing processes.
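
The abstract reports calibration accuracy as a translational error (mm) and a rotational error (degrees). A common way to compute these two numbers for a pair of 4x4 homogeneous transforms, for example an estimated hand-eye transform against a reference, is sketched below. The paper's exact error definition is not given in the abstract, so this formulation and the helper names are assumptions.

```python
import numpy as np

def pose_errors(T_est, T_ref):
    """Translational error (input units) and rotational error (degrees)
    between two 4x4 homogeneous transforms."""
    T_est = np.asarray(T_est, dtype=np.float64)
    T_ref = np.asarray(T_ref, dtype=np.float64)
    t_err = float(np.linalg.norm(T_est[:3, 3] - T_ref[:3, 3]))
    R_delta = T_est[:3, :3].T @ T_ref[:3, :3]  # relative rotation
    # Rotation angle from the trace; clipping guards against rounding error.
    cos_theta = np.clip((np.trace(R_delta) - 1.0) / 2.0, -1.0, 1.0)
    r_err = float(np.degrees(np.arccos(cos_theta)))
    return t_err, r_err

def rot_z(deg):
    """Rotation matrix about the z-axis by `deg` degrees."""
    a = np.radians(deg)
    c, s = np.cos(a), np.sin(a)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def make_T(R, t):
    """Assemble a 4x4 homogeneous transform from rotation R and translation t."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T

T_ref = make_T(np.eye(3), [100.0, 0.0, 50.0])   # reference pose (mm)
T_est = make_T(rot_z(0.2), [100.1, 0.2, 50.0])  # slightly perturbed estimate
t_err, r_err = pose_errors(T_est, T_ref)
print(f"{t_err:.3f} mm, {r_err:.3f} deg")
```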

Close the Sim2real Gap via Physically-based Structured Light Synthetic Data Simulation

Jul 17, 2024

Abstract: Despite the substantial progress in deep learning, its adoption in industrial robotics projects remains limited, primarily due to challenges in data acquisition and labeling. Previous sim2real approaches using domain randomization require extensive scene and model optimization. To address these issues, we introduce an innovative physically-based structured light simulation system that generates both RGB and physically realistic depth images, surpassing previous dataset generation tools. We create an RGBD dataset tailored to robotic industrial grasping scenarios and evaluate it across various tasks, including object detection, instance segmentation, and embedding sim2real visual perception in industrial robotic grasping. By reducing the sim2real gap and enhancing deep learning training, we facilitate the application of deep learning models in industrial settings. Project details are available at https://baikaixinpublic.github.io/structured light 3D synthesizer/.

Evolutionary Morphology Towards Overconstrained Locomotion via Large-Scale, Multi-Terrain Deep Reinforcement Learning

Jul 01, 2024

Abstract: While the fin-to-limb evolution of animals has been well researched in biology, such morphological transformation remains under-adopted in the modern design of advanced robotic limbs. This paper investigates a novel class of overconstrained locomotion from a design and learning perspective inspired by evolutionary morphology, aiming to integrate the concept of "intelligent design under constraints", hereafter referred to as constraint-driven design intelligence, into the development of modern robotic limbs with superior energy efficiency. We propose a 3D-printable design of robotic limbs that is parametrically reconfigurable as a classical planar 4-bar linkage, an overconstrained Bennett linkage, or a spherical 4-bar linkage. These limbs adopt co-axial actuation, identical to that of modern legged robot platforms, with the added capability of upgrading into a wheel-legged system. We then implemented a large-scale, multi-terrain deep reinforcement learning framework to train these reconfigurable limbs for a comparative analysis of overconstrained locomotion in energy efficiency. Results show that the overconstrained limbs exhibit more efficient locomotion than planar limbs during forward and sideways walking over different terrains, including floors, slopes, and stairs, with or without random noise, saving at least 22% of mechanical energy in completing the traverse task, with the spherical limbs being the least efficient. The overconstrained limbs also achieve the highest average speed of 0.85 meters per second on flat terrain, which is 20% faster than the planar limbs. This study paves the way for future research in overconstrained robotics that leverages evolutionary morphology and reconfigurable mechanism intelligence in combination with state-of-the-art deep reinforcement learning methods.
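
The abstract reports savings in mechanical energy but does not define the quantity. A common proxy, assumed here rather than taken from the paper, integrates the absolute joint power |tau * omega| over time and sums over joints; the relative saving against a baseline limb is then 1 - E_candidate / E_baseline. The function names and array shapes are hypothetical.

```python
import numpy as np

def mechanical_energy(torques, joint_velocities, dt):
    """Approximate mechanical energy (J) as the time integral of |tau * omega|.

    torques, joint_velocities: arrays of shape (T, J) sampled every dt seconds
    (T time steps, J joints). Uses a simple rectangle-rule integral.
    """
    power = np.abs(np.asarray(torques) * np.asarray(joint_velocities))
    return float(power.sum() * dt)

def relative_saving(e_candidate, e_baseline):
    """Fraction of energy saved by the candidate relative to the baseline."""
    return 1.0 - e_candidate / e_baseline

# Toy trajectory: 10 steps, 3 joints, 1 N*m at 2 rad/s, sampled at 100 Hz.
E = mechanical_energy(np.ones((10, 3)), np.full((10, 3), 2.0), dt=0.01)
print(E)
print(relative_saving(78.0, 100.0))  # a 22% saving, up to float rounding
```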

Evaluation of Text-to-Video Generation Models: A Dynamics Perspective

Jul 01, 2024

Abstract: Comprehensive and constructive evaluation protocols play an important role in the development of sophisticated text-to-video (T2V) generation models. Existing evaluation protocols primarily focus on temporal consistency and content continuity, yet largely ignore the dynamics of video content. Dynamics are an essential dimension for measuring the visual vividness of video content and its faithfulness to text prompts. In this study, we propose an effective evaluation protocol, termed DEVIL, which centers on the dynamics dimension to evaluate T2V models. For this purpose, we establish a new benchmark comprising text prompts that fully reflect multiple dynamics grades, and define a set of dynamics scores corresponding to various temporal granularities to comprehensively evaluate the dynamics of each generated video. Based on the new benchmark and the dynamics scores, we assess T2V models using three metrics: dynamics range, dynamics controllability, and dynamics-based quality. Experiments show that DEVIL achieves a Pearson correlation exceeding 90% with human ratings, demonstrating its potential to advance T2V generation models. Code is available at https://github.com/MingXiangL/DEVIL.
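
The abstract validates DEVIL by its Pearson correlation with human ratings. For reference, Pearson's r between per-video metric scores and human scores can be computed as below; the score lists are invented toy values for illustration only, not data from the paper.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length score lists."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    xc, yc = x - x.mean(), y - y.mean()
    return float((xc * yc).sum() / np.sqrt((xc ** 2).sum() * (yc ** 2).sum()))

metric_scores = [0.12, 0.35, 0.50, 0.71, 0.90]  # hypothetical per-video dynamics scores
human_ratings = [1, 2, 3, 4, 5]                  # hypothetical human ratings
r = pearson_r(metric_scores, human_ratings)
print(round(r, 3))  # should match np.corrcoef(metric_scores, human_ratings)[0, 1]
```

A "correlation exceeding 90%" in the abstract's wording corresponds to r > 0.9 on this scale.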

One Fling to Goal: Environment-aware Dynamics for Goal-conditioned Fabric Flinging

Jun 20, 2024

Abstract: Dynamic fabric manipulation is common in manufacturing and domestic settings. While dynamically manipulating a piece of fabric to reach a target state is highly efficient, the task presents considerable challenges due to the varying properties of different fabrics, complex dynamics when interacting with environments, and the need to meet required goal conditions. To address these challenges, we present One Fling to Goal, an algorithm capable of handling fabric pieces with diverse shapes and physical properties across various scenarios. Our method learns a graph-based dynamics model equipped with environmental awareness. With this dynamics model, we devise a real-time controller that enables high-speed fabric manipulation in a single attempt, requiring less than 3 seconds to complete the goal-conditioned task. We experimentally validate our method on a goal-conditioned manipulation task in five diverse scenarios. Our method significantly improves performance on this goal-conditioned task, achieving an average error of 13.2 mm in complex scenarios. Our method can be seamlessly transferred to real-world robotic systems and generalizes to unseen scenarios in a zero-shot manner.
