Haibin He

GoMatching++: Parameter- and Data-Efficient Arbitrary-Shaped Video Text Spotting and Benchmarking

May 28, 2025

Abstract:Video text spotting (VTS) extends image text spotting (ITS) by adding text tracking, significantly increasing task complexity. Despite progress in VTS, existing methods still fall short of the performance seen in ITS. This paper identifies a key limitation in current video text spotters: limited recognition capability, even after extensive end-to-end training. To address this, we propose GoMatching++, a parameter- and data-efficient method that transforms an off-the-shelf image text spotter into a video specialist. The core idea lies in freezing the image text spotter and introducing a lightweight, trainable tracker, which can be optimized efficiently with minimal training data. Our approach includes two key components: (1) a rescoring mechanism to bridge the domain gap between image and video data, and (2) the LST-Matcher, which enhances the frozen image text spotter's ability to handle video text. We explore various architectures for LST-Matcher to ensure efficiency in both parameters and training data. As a result, GoMatching++ sets new performance records on challenging benchmarks such as ICDAR15-video, DSText, and BOVText, while significantly reducing training costs. To address the lack of curved text datasets in VTS, we introduce ArTVideo, a new benchmark featuring over 30% curved text with detailed annotations. We also provide a comprehensive statistical analysis and experimental results for ArTVideo. We believe that GoMatching++ and the ArTVideo benchmark will drive future advancements in video text spotting. The source code, models and dataset are publicly available at https://github.com/Hxyz-123/GoMatching.

Adapting Segment Anything Model for Power Transmission Corridor Hazard Segmentation

May 28, 2025Abstract:Power transmission corridor hazard segmentation (PTCHS) aims to separate transmission equipment and surrounding hazards from complex background, conveying great significance to maintaining electric power transmission safety. Recently, the Segment Anything Model (SAM) has emerged as a foundational vision model and pushed the boundaries of segmentation tasks. However, SAM struggles to deal with the target objects in complex transmission corridor scenario, especially those with fine structure. In this paper, we propose ELE-SAM, adapting SAM for the PTCHS task. Technically, we develop a Context-Aware Prompt Adapter to achieve better prompt tokens via incorporating global-local features and focusing more on key regions. Subsequently, to tackle the hazard objects with fine structure in complex background, we design a High-Fidelity Mask Decoder by leveraging multi-granularity mask features and then scaling them to a higher resolution. Moreover, to train ELE-SAM and advance this field, we construct the ELE-40K benchmark, the first large-scale and real-world dataset for PTCHS including 44,094 image-mask pairs. Experimental results for ELE-40K demonstrate the superior performance that ELE-SAM outperforms the baseline model with the average 16.8% mIoU and 20.6% mBIoU performance improvement. Moreover, compared with the state-of-the-art method on HQSeg-44K, the average 2.9% mIoU and 3.8% mBIoU absolute improvements further validate the effectiveness of our method on high-quality generic object segmentation. The source code and dataset are available at https://github.com/Hhaizee/ELE-SAM.

Reasoning-OCR: Can Large Multimodal Models Solve Complex Logical Reasoning Problems from OCR Cues?

May 19, 2025Abstract:Large Multimodal Models (LMMs) have become increasingly versatile, accompanied by impressive Optical Character Recognition (OCR) related capabilities. Existing OCR-related benchmarks emphasize evaluating LMMs' abilities of relatively simple visual question answering, visual-text parsing, etc. However, the extent to which LMMs can deal with complex logical reasoning problems based on OCR cues is relatively unexplored. To this end, we introduce the Reasoning-OCR benchmark, which challenges LMMs to solve complex reasoning problems based on the cues that can be extracted from rich visual-text. Reasoning-OCR covers six visual scenarios and encompasses 150 meticulously designed questions categorized into six reasoning challenges. Additionally, Reasoning-OCR minimizes the impact of field-specialized knowledge. Our evaluation offers some insights for proprietary and open-source LMMs in different reasoning challenges, underscoring the urgent to improve the reasoning performance. We hope Reasoning-OCR can inspire and facilitate future research on enhancing complex reasoning ability based on OCR cues. Reasoning-OCR is publicly available at https://github.com/Hxyz-123/ReasoningOCR.

One Fling to Goal: Environment-aware Dynamics for Goal-conditioned Fabric Flinging

Jun 20, 2024

Abstract:Fabric manipulation dynamically is commonly seen in manufacturing and domestic settings. While dynamically manipulating a fabric piece to reach a target state is highly efficient, this task presents considerable challenges due to the varying properties of different fabrics, complex dynamics when interacting with environments, and meeting required goal conditions. To address these challenges, we present \textit{One Fling to Goal}, an algorithm capable of handling fabric pieces with diverse shapes and physical properties across various scenarios. Our method learns a graph-based dynamics model equipped with environmental awareness. With this dynamics model, we devise a real-time controller to enable high-speed fabric manipulation in one attempt, requiring less than 3 seconds to finish the goal-conditioned task. We experimentally validate our method on a goal-conditioned manipulation task in five diverse scenarios. Our method significantly improves this goal-conditioned task, achieving an average error of 13.2mm in complex scenarios. Our method can be seamlessly transferred to real-world robotic systems and generalized to unseen scenarios in a zero-shot manner.

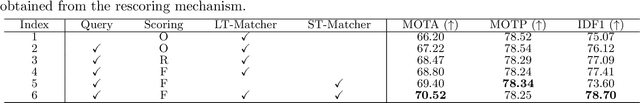

GoMatching: A Simple Baseline for Video Text Spotting via Long and Short Term Matching

Jan 13, 2024Abstract:Beyond the text detection and recognition tasks in image text spotting, video text spotting presents an augmented challenge with the inclusion of tracking. While advanced end-to-end trainable methods have shown commendable performance, the pursuit of multi-task optimization may pose the risk of producing sub-optimal outcomes for individual tasks. In this paper, we highlight a main bottleneck in the state-of-the-art video text spotter: the limited recognition capability. In response to this issue, we propose to efficiently turn an off-the-shelf query-based image text spotter into a specialist on video and present a simple baseline termed GoMatching, which focuses the training efforts on tracking while maintaining strong recognition performance. To adapt the image text spotter to video datasets, we add a rescoring head to rescore each detected instance's confidence via efficient tuning, leading to a better tracking candidate pool. Additionally, we design a long-short term matching module, termed LST-Matcher, to enhance the spotter's tracking capability by integrating both long- and short-term matching results via Transformer. Based on the above simple designs, GoMatching achieves impressive performance on two public benchmarks, e.g., setting a new record on the ICDAR15-video dataset, and one novel test set with arbitrary-shaped text, while saving considerable training budgets. The code will be released at https://github.com/Hxyz-123/GoMatching.

Scalable Mask Annotation for Video Text Spotting

May 02, 2023Abstract:Video text spotting refers to localizing, recognizing, and tracking textual elements such as captions, logos, license plates, signs, and other forms of text within consecutive video frames. However, current datasets available for this task rely on quadrilateral ground truth annotations, which may result in including excessive background content and inaccurate text boundaries. Furthermore, methods trained on these datasets often produce prediction results in the form of quadrilateral boxes, which limits their ability to handle complex scenarios such as dense or curved text. To address these issues, we propose a scalable mask annotation pipeline called SAMText for video text spotting. SAMText leverages the SAM model to generate mask annotations for scene text images or video frames at scale. Using SAMText, we have created a large-scale dataset, SAMText-9M, that contains over 2,400 video clips sourced from existing datasets and over 9 million mask annotations. We have also conducted a thorough statistical analysis of the generated masks and their quality, identifying several research topics that could be further explored based on this dataset. The code and dataset will be released at \url{https://github.com/ViTAE-Transformer/SAMText}.

Diff-Font: Diffusion Model for Robust One-Shot Font Generation

Dec 12, 2022

Abstract:Font generation is a difficult and time-consuming task, especially in those languages using ideograms that have complicated structures with a large number of characters, such as Chinese. To solve this problem, few-shot font generation and even one-shot font generation have attracted a lot of attention. However, most existing font generation methods may still suffer from (i) large cross-font gap challenge; (ii) subtle cross-font variation problem; and (iii) incorrect generation of complicated characters. In this paper, we propose a novel one-shot font generation method based on a diffusion model, named Diff-Font, which can be stably trained on large datasets. The proposed model aims to generate the entire font library by giving only one sample as the reference. Specifically, a large stroke-wise dataset is constructed, and a stroke-wise diffusion model is proposed to preserve the structure and the completion of each generated character. To our best knowledge, the proposed Diff-Font is the first work that developed diffusion models to handle the font generation task. The well-trained Diff-Font is not only robust to font gap and font variation, but also achieved promising performance on difficult character generation. Compared to previous font generation methods, our model reaches state-of-the-art performance both qualitatively and quantitatively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge