Chenyu Zhang

Diff-PC: Identity-preserving and 3D-aware Controllable Diffusion for Zero-shot Portrait Customization

Jan 31, 2026

Abstract:Portrait customization (PC) has recently garnered significant attention due to its potential applications. However, existing PC methods lack precise identity (ID) preservation and face control. To address these issues, we propose Diff-PC, a diffusion-based framework for zero-shot PC, which generates realistic portraits with high ID fidelity, specified facial attributes, and diverse backgrounds. Specifically, our approach employs a 3D face predictor to reconstruct 3D-aware facial priors encompassing the reference ID, target expressions, and poses. To capture fine-grained face details, we design an ID-Encoder that fuses local and global facial features. Subsequently, we devise ID-Ctrl, which uses the 3D face to guide the alignment of ID features. We further introduce an ID-Injector to enhance ID fidelity and facial controllability. Finally, training on our collected ID-centric dataset improves face similarity and text-to-image (T2I) alignment. Extensive experiments demonstrate that Diff-PC surpasses state-of-the-art methods in ID preservation, facial control, and T2I consistency. Furthermore, our method is compatible with multi-style foundation models.

HeterCSI: Channel-Adaptive Heterogeneous CSI Pretraining Framework for Generalized Wireless Foundation Models

Jan 26, 2026

Abstract:Wireless foundation models promise transformative capabilities for channel state information (CSI) processing across diverse 6G network applications, yet face fundamental challenges due to the inherent dual heterogeneity of CSI across both scale and scenario dimensions. However, current pretraining approaches either constrain inputs to fixed dimensions or isolate training by scale, limiting the generalization and scalability of wireless foundation models. In this paper, we propose HeterCSI, a channel-adaptive pretraining framework that reconciles training efficiency with robust cross-scenario generalization via a new understanding of gradient dynamics in heterogeneous CSI pretraining. Our key insight reveals that CSI scale heterogeneity primarily causes destructive gradient interference, while scenario diversity actually promotes constructive gradient alignment when properly managed. Specifically, we formulate heterogeneous CSI batch construction as a partitioning optimization problem that minimizes zero-padding overhead while preserving scenario diversity. To solve this, we develop a scale-aware adaptive batching strategy that aligns CSI samples of similar scales, and design a double-masking mechanism to isolate valid signals from padding artifacts. Extensive experiments on 12 datasets demonstrate that HeterCSI establishes a generalized foundation model without scenario-specific finetuning, achieving superior average performance over full-shot baselines. Compared to the state-of-the-art zero-shot benchmark WiFo, it reduces NMSE by 7.19 dB, 4.08 dB, and 5.27 dB for CSI reconstruction, time-domain, and frequency-domain prediction, respectively. The proposed HeterCSI framework also reduces training latency by 53% compared to existing approaches while improving generalization performance by 1.53 dB on average.
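
The idea of grouping CSI samples of similar scale to cut zero-padding can be illustrated with a minimal sketch. This is not the HeterCSI algorithm: the function names (`make_batches`, `padding_overhead`, `padding_mask`), the one-dimensional "length" standing in for CSI scale, and the toy values are all assumptions made for illustration, and the sketch ignores the scenario-diversity constraint and the double-masking mechanism described in the abstract.

```python
from typing import List, Sequence


def make_batches(lengths: Sequence[int], batch_size: int, sort_by_scale: bool) -> List[List[int]]:
    """Group sample indices into batches, optionally sorting by length first."""
    order = list(range(len(lengths)))
    if sort_by_scale:
        # Hypothetical stand-in for scale-aware batching: similar lengths end up together.
        order.sort(key=lambda i: lengths[i])
    return [order[i:i + batch_size] for i in range(0, len(order), batch_size)]


def padding_overhead(lengths: Sequence[int], batches: List[List[int]]) -> int:
    """Count zero-padded positions when each batch is padded to its longest sample."""
    total = 0
    for batch in batches:
        longest = max(lengths[i] for i in batch)
        total += sum(longest - lengths[i] for i in batch)
    return total


def padding_mask(lengths: Sequence[int], batch: List[int]) -> List[List[int]]:
    """Return 1 for valid positions and 0 for padded positions in one batch."""
    longest = max(lengths[i] for i in batch)
    return [[1] * lengths[i] + [0] * (longest - lengths[i]) for i in batch]


# Toy sample lengths (assumed values, not taken from the paper).
lengths = [64, 512, 32, 256, 64, 512, 32, 256]
naive = make_batches(lengths, batch_size=2, sort_by_scale=False)
grouped = make_batches(lengths, batch_size=2, sort_by_scale=True)

print("padding (arrival order):", padding_overhead(lengths, naive))
print("padding (grouped by scale):", padding_overhead(lengths, grouped))
```

Sorting purely by length removes padding in this toy case but would also tend to cluster samples from the same scenario, which is why the abstract frames batch construction as a partitioning problem with a diversity term rather than a plain sort.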

Kinematics-Aware Diffusion Policy with Consistent 3D Observation and Action Space for Whole-Arm Robotic Manipulation

Dec 19, 2025

Abstract:Whole-body control of robotic manipulators with awareness of full-arm kinematics is crucial for many manipulation scenarios involving body collision avoidance or body-object interactions, which makes it insufficient to consider only the end-effector poses in policy learning. The typical approach for whole-arm manipulation is to learn actions in the robot's joint space. However, the misalignment between the joint space and the actual task space (i.e., 3D space) increases the complexity of policy learning, as generalization in task space requires the policy to intrinsically understand the non-linear arm kinematics, which is difficult to learn from limited demonstrations. To address this issue, this letter proposes a kinematics-aware imitation learning framework with consistent task, observation, and action spaces, all represented in the same 3D space. Specifically, we represent both robot states and actions using a set of 3D points on the arm body, naturally aligned with the 3D point cloud observations. This spatially consistent representation improves the policy's sample efficiency and spatial generalizability while enabling full-body control. Built upon the diffusion policy, we further incorporate kinematics priors into the diffusion processes to guarantee the kinematic feasibility of output actions. The joint angle commands are finally calculated through an optimization-based whole-body inverse kinematics solver for execution. Simulation and real-world experimental results demonstrate higher success rates and stronger spatial generalizability of our approach compared to existing methods in body-aware manipulation policy learning.

Harnessing Deep LLM Participation for Robust Entity Linking

Nov 18, 2025

Abstract:Entity Linking (EL), the task of mapping textual entity mentions to their corresponding entries in knowledge bases, constitutes a fundamental component of natural language understanding. Recent advancements in Large Language Models (LLMs) have demonstrated remarkable potential for enhancing EL performance. Prior research has leveraged LLMs to improve entity disambiguation and input representation, yielding significant gains in accuracy and robustness. However, these approaches typically apply LLMs to isolated stages of the EL task, failing to fully integrate their capabilities throughout the entire process. In this work, we introduce DeepEL, a comprehensive framework that incorporates LLMs into every stage of the entity linking task. Furthermore, we identify that disambiguating entities in isolation is insufficient for optimal performance. To address this limitation, we propose a novel self-validation mechanism that utilizes global contextual information, enabling LLMs to rectify their own predictions and better recognize cohesive relationships among entities within the same sentence. Extensive empirical evaluation across ten benchmark datasets demonstrates that DeepEL substantially outperforms existing state-of-the-art methods, achieving an average improvement of 2.6% in overall F1 score and a remarkable 4% gain on out-of-domain datasets. These results underscore the efficacy of deep LLM integration in advancing the state-of-the-art in entity linking.

Scaling Equitable Reflection Assessment in Education via Large Language Models and Role-Based Feedback Agents

Nov 14, 2025

Abstract:Formative feedback is widely recognized as one of the most effective drivers of student learning, yet it remains difficult to implement equitably at scale. In large or low-resource courses, instructors often lack the time, staffing, and bandwidth required to review and respond to every student reflection, creating gaps in support precisely where learners would benefit most. This paper presents a theory-grounded system that uses five coordinated role-based LLM agents (Evaluator, Equity Monitor, Metacognitive Coach, Aggregator, and Reflexion Reviewer) to score learner reflections with a shared rubric and to generate short, bias-aware, learner-facing comments. The agents first produce structured rubric scores, then check for potentially biased or exclusionary language, add metacognitive prompts that invite students to think about their own thinking, and finally compose a concise feedback message of at most 120 words. The system includes simple fairness checks that compare scoring error across lower and higher scoring learners, enabling instructors to monitor and bound disparities in accuracy. We evaluate the pipeline in a 12-session AI literacy program with adult learners. In this setting, the system produces rubric scores that approach expert-level agreement, and trained graders rate the AI-generated comments as helpful, empathetic, and well aligned with instructional goals. Taken together, these results show that multi-agent LLM systems can deliver equitable, high-quality formative feedback at a scale and speed that would be impossible for human graders alone. More broadly, the work points toward a future where feedback-rich learning becomes feasible for any course size or context, advancing long-standing goals of equity, access, and instructional capacity in education.
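
A fairness check of the kind the abstract mentions, comparing scoring error between lower and higher scoring learners, might look like the sketch below. The abstract does not specify the metric or the grouping rule, so the median split on expert scores, the use of mean absolute error, and the function name `error_gap` are all assumptions; the scores are made-up toy values.

```python
from statistics import mean, median
from typing import Sequence, Tuple


def error_gap(expert: Sequence[float], model: Sequence[float]) -> Tuple[float, float, float]:
    """Return (MAE for lower scorers, MAE for higher scorers, absolute gap).

    Learners are split at the median expert score (an assumed grouping rule).
    """
    if len(expert) != len(model):
        raise ValueError("expert and model scores must have the same length")
    cut = median(expert)
    low = [abs(m - e) for e, m in zip(expert, model) if e <= cut]
    high = [abs(m - e) for e, m in zip(expert, model) if e > cut]
    if not low or not high:
        raise ValueError("both groups need at least one learner")
    low_mae, high_mae = mean(low), mean(high)
    return low_mae, high_mae, abs(low_mae - high_mae)


# Toy rubric scores (hypothetical): the model over-scores one low-scoring learner.
expert_scores = [1, 2, 3, 4]
model_scores = [3, 2, 3, 4]
low_mae, high_mae, gap = error_gap(expert_scores, model_scores)
print(f"low-group MAE={low_mae}, high-group MAE={high_mae}, gap={gap}")
```

An instructor could flag a run for review whenever the gap exceeds a chosen tolerance; the tolerance itself would be a course-specific choice.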

T2I-RiskyPrompt: A Benchmark for Safety Evaluation, Attack, and Defense on Text-to-Image Model

Oct 25, 2025

Abstract:Using risky text prompts, such as pornographic and violent prompts, to test the safety of text-to-image (T2I) models is a critical task. However, existing risky prompt datasets are limited in three key areas: 1) limited risky categories, 2) coarse-grained annotation, and 3) low effectiveness. To address these limitations, we introduce T2I-RiskyPrompt, a comprehensive benchmark designed for evaluating safety-related tasks in T2I models. Specifically, we first develop a hierarchical risk taxonomy, which consists of 6 primary categories and 14 fine-grained subcategories. Building upon this taxonomy, we construct a pipeline to collect and annotate risky prompts. Finally, we obtain 6,432 effective risky prompts, where each prompt is annotated with both hierarchical category labels and detailed risk reasons. Moreover, to facilitate the evaluation, we propose a reason-driven risky image detection method that explicitly aligns the MLLM with safety annotations. Based on T2I-RiskyPrompt, we conduct a comprehensive evaluation of eight T2I models, nine defense methods, five safety filters, and five attack strategies, offering nine key insights into the strengths and limitations of T2I model safety. Finally, we discuss potential applications of T2I-RiskyPrompt across various research fields. The dataset and code are available at https://github.com/datar001/T2I-RiskyPrompt.

Comparative Performance Analysis of Different Hybrid NOMA Schemes

Sep 18, 2025

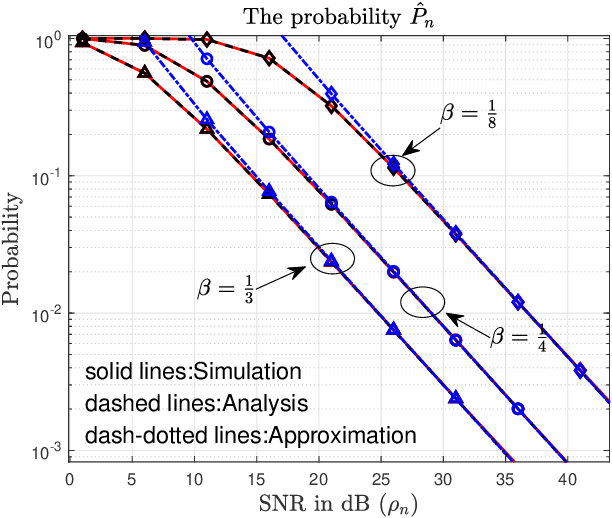

Abstract:Hybrid non-orthogonal multiple access (H-NOMA), which organically combines the advantages of pure NOMA and conventional OMA, has emerged as a highly promising multiple access technology for future wireless networks. Recent studies have proposed various H-NOMA systems by employing different successive interference cancellation (SIC) methods for the NOMA transmission phase. However, existing analyses typically assume a fixed channel gain order between paired users, even though channel coefficients follow random distributions, which makes their magnitude relationships inherently stochastic and time-varying. This paper analyzes the performance of three H-NOMA schemes under stochastic channel gain ordering: a) fixed order SIC (FSIC) aided H-NOMA scheme; b) hybrid SIC with non-power adaptation (HSIC-NPA) aided H-NOMA scheme; c) hybrid SIC with power adaptation (HSIC-PA) aided H-NOMA scheme. Theoretical analysis derives closed-form expressions for the probability that H-NOMA schemes underperform conventional OMA. Asymptotic results in the high signal-to-noise ratio (SNR) regime are also developed. Simulation results validate our analysis and demonstrate the performance of H-NOMA schemes across different SNR scenarios, providing a theoretical foundation for the deployment of H-NOMA in next-generation wireless systems.

MoFE-Time: Mixture of Frequency Domain Experts for Time-Series Forecasting Models

Jul 09, 2025

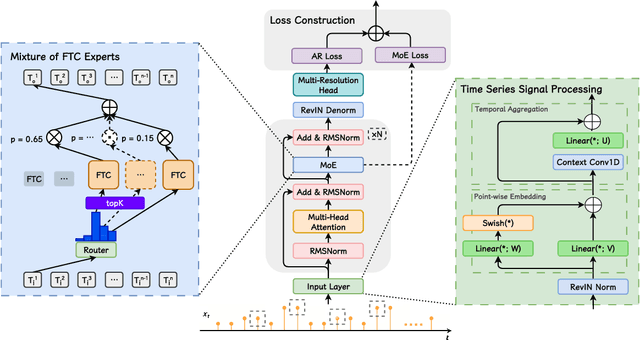

Abstract:As a prominent data modality task, time series forecasting plays a pivotal role in diverse applications. With the remarkable advancements in Large Language Models (LLMs), the adoption of LLMs as the foundational architecture for time series modeling has gained significant attention. Although existing models achieve some success, they rarely model both time and frequency characteristics within a pretraining-finetuning paradigm, leading to suboptimal performance in predicting complex time series, which requires modeling both the periodicity and the prior pattern knowledge of signals. We propose MoFE-Time, an innovative time series forecasting model that integrates time and frequency domain features within a Mixture of Experts (MoE) network. Moreover, we use the pretraining-finetuning paradigm as our training framework to effectively transfer prior pattern knowledge across pretraining and finetuning datasets with different periodicity distributions. Our method introduces both frequency and time cells as experts after attention modules and leverages the MoE routing mechanism to construct multidimensional sparse representations of input signals. In experiments on six public benchmarks, MoFE-Time achieves new state-of-the-art performance, reducing MSE and MAE by 6.95% and 6.02% compared to the representative method Time-MoE. Beyond the existing evaluation benchmarks, we have developed a proprietary dataset, NEV-sales, derived from real-world business scenarios. Our method achieves outstanding results on this dataset, underscoring the effectiveness of the MoFE-Time model in practical commercial applications.

Spreading Depolarization Detection in Electrocorticogram Spectrogram Imaging by Deep Learning: Is It Just About Delta Band?

May 01, 2025

Abstract:Prevention of secondary brain injury is a core aim of neurocritical care, with Spreading Depolarizations (SDs) recognized as a significant independent cause. SDs are typically monitored through invasive, high-frequency electrocorticography (ECoG); however, detection remains challenging due to signal artifacts that obscure critical SD-related electrophysiological changes, such as power attenuation and DC drifting. Recent studies suggest spectrogram analysis could improve SD detection; however, brain injury patients often show power reduction across all bands except delta, causing class imbalance. Previous methods that focus solely on delta mitigate the imbalance but overlook features in other frequencies, limiting detection performance. This study explores multi-frequency spectrogram analysis, revealing that essential SD-related features span multiple frequency bands beyond the most active delta band. This study demonstrates that further integration of both alpha and delta bands could enhance the SD detection accuracy of a deep learning model.

A Reactive Framework for Whole-Body Motion Planning of Mobile Manipulators Combining Reinforcement Learning and SDF-Constrained Quadratic Programmi

Mar 31, 2025

Abstract:As an important branch of embodied artificial intelligence, mobile manipulators are increasingly applied in intelligent services, but their redundant degrees of freedom also limit efficient motion planning in cluttered environments. To address this issue, this paper proposes a hybrid learning and optimization framework for reactive whole-body motion planning of mobile manipulators. We develop the Bayesian distributional soft actor-critic (Bayes-DSAC) algorithm to improve the quality of value estimation and the convergence performance of learning. Additionally, we introduce a quadratic programming method constrained by the signed distance field to enhance the safety of obstacle avoidance motion. We conduct experiments and compare against a standard benchmark. The experimental results verify that our proposed framework significantly improves the efficiency of reactive whole-body motion planning, reduces the planning time, and improves the success rate of motion planning. Additionally, the proposed reinforcement learning method ensures a rapid learning process in the whole-body planning task. The novel framework allows mobile manipulators to adapt to complex environments more safely and efficiently.
