Weizhi Nie

Mask-Guided Attention Regulation for Anatomically Consistent Counterfactual CXR Synthesis

Mar 04, 2026Abstract:Counterfactual generation for chest X-rays (CXR) aims to simulate plausible pathological changes while preserving patient-specific anatomy. However, diffusion-based editing methods often suffer from structural drift, where stable anatomical semantics propagate globally through attention and distort non-target regions, and unstable pathology expression, since subtle and localized lesions induce weak and noisy conditioning signals. We present an inference-time attention regulation framework for reliable counterfactual CXR synthesis. An anatomy-aware attention regularization module gates self-attention and anatomy-token cross-attention with organ masks, confining structural interactions to anatomical ROIs and reducing unintended distortions. A pathology-guided module enhances pathology-token cross-attention within target lung regions during early denoising and performs lightweight latent corrections driven by an attention-concentration energy, enabling controllable lesion localization and extent. Extensive evaluations on CXR datasets show improved anatomical consistency and more precise, controllable pathological edits compared with standard diffusion editing, supporting localized counterfactual analysis and data augmentation for downstream tasks.

Organ-Agents: Virtual Human Physiology Simulator via LLMs

Aug 20, 2025Abstract:Recent advances in large language models (LLMs) have enabled new possibilities in simulating complex physiological systems. We introduce Organ-Agents, a multi-agent framework that simulates human physiology via LLM-driven agents. Each Simulator models a specific system (e.g., cardiovascular, renal, immune). Training consists of supervised fine-tuning on system-specific time-series data, followed by reinforcement-guided coordination using dynamic reference selection and error correction. We curated data from 7,134 sepsis patients and 7,895 controls, generating high-resolution trajectories across 9 systems and 125 variables. Organ-Agents achieved high simulation accuracy on 4,509 held-out patients, with per-system MSEs <0.16 and robustness across SOFA-based severity strata. External validation on 22,689 ICU patients from two hospitals showed moderate degradation under distribution shifts with stable simulation. Organ-Agents faithfully reproduces critical multi-system events (e.g., hypotension, hyperlactatemia, hypoxemia) with coherent timing and phase progression. Evaluation by 15 critical care physicians confirmed realism and physiological plausibility (mean Likert ratings 3.9 and 3.7). Organ-Agents also enables counterfactual simulations under alternative sepsis treatment strategies, generating trajectories and APACHE II scores aligned with matched real-world patients. In downstream early warning tasks, classifiers trained on synthetic data showed minimal AUROC drops (<0.04), indicating preserved decision-relevant patterns. These results position Organ-Agents as a credible, interpretable, and generalizable digital twin for precision diagnosis, treatment simulation, and hypothesis testing in critical care.

Structure Causal Models and LLMs Integration in Medical Visual Question Answering

May 05, 2025

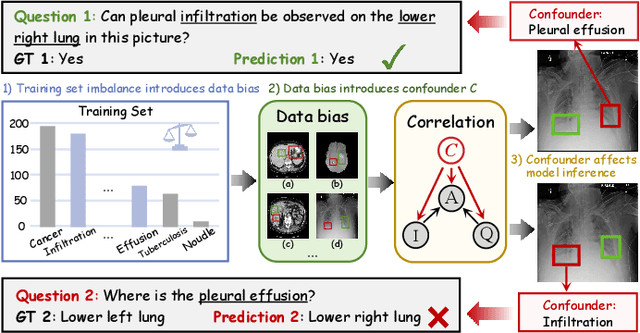

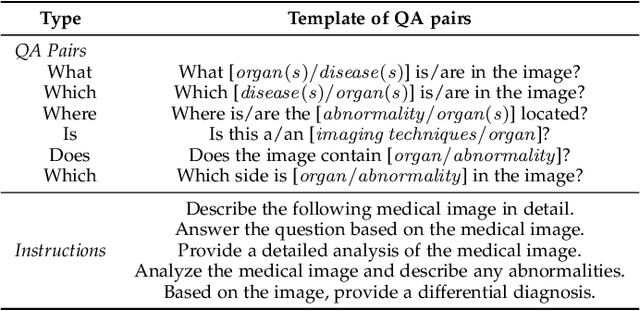

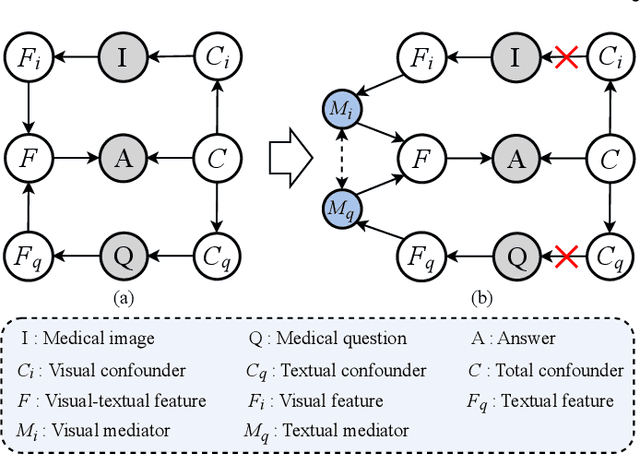

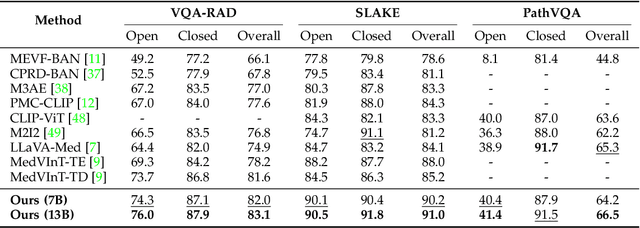

Abstract:Medical Visual Question Answering (MedVQA) aims to answer medical questions according to medical images. However, the complexity of medical data leads to confounders that are difficult to observe, so bias between images and questions is inevitable. Such cross-modal bias makes it challenging to infer medically meaningful answers. In this work, we propose a causal inference framework for the MedVQA task, which effectively eliminates the relative confounding effect between the image and the question to ensure the precision of the question-answering (QA) session. We are the first to introduce a novel causal graph structure that represents the interaction between visual and textual elements, explicitly capturing how different questions influence visual features. During optimization, we apply the mutual information to discover spurious correlations and propose a multi-variable resampling front-door adjustment method to eliminate the relative confounding effect, which aims to align features based on their true causal relevance to the question-answering task. In addition, we also introduce a prompt strategy that combines multiple prompt forms to improve the model's ability to understand complex medical data and answer accurately. Extensive experiments on three MedVQA datasets demonstrate that 1) our method significantly improves the accuracy of MedVQA, and 2) our method achieves true causal correlations in the face of complex medical data.

FreeInsert: Disentangled Text-Guided Object Insertion in 3D Gaussian Scene without Spatial Priors

May 02, 2025Abstract:Text-driven object insertion in 3D scenes is an emerging task that enables intuitive scene editing through natural language. However, existing 2D editing-based methods often rely on spatial priors such as 2D masks or 3D bounding boxes, and they struggle to ensure consistency of the inserted object. These limitations hinder flexibility and scalability in real-world applications. In this paper, we propose FreeInsert, a novel framework that leverages foundation models including MLLMs, LGMs, and diffusion models to disentangle object generation from spatial placement. This enables unsupervised and flexible object insertion in 3D scenes without spatial priors. FreeInsert starts with an MLLM-based parser that extracts structured semantics, including object types, spatial relationships, and attachment regions, from user instructions. These semantics guide both the reconstruction of the inserted object for 3D consistency and the learning of its degrees of freedom. We leverage the spatial reasoning capabilities of MLLMs to initialize object pose and scale. A hierarchical, spatially aware refinement stage further integrates spatial semantics and MLLM-inferred priors to enhance placement. Finally, the appearance of the object is improved using the inserted-object image to enhance visual fidelity. Experimental results demonstrate that FreeInsert achieves semantically coherent, spatially precise, and visually realistic 3D insertions without relying on spatial priors, offering a user-friendly and flexible editing experience.

Causal Disentanglement for Robust Long-tail Medical Image Generation

Apr 20, 2025Abstract:Counterfactual medical image generation effectively addresses data scarcity and enhances the interpretability of medical images. However, due to the complex and diverse pathological features of medical images and the imbalanced class distribution in medical data, generating high-quality and diverse medical images from limited data is significantly challenging. Additionally, to fully leverage the information in limited data, such as anatomical structure information and generate more structurally stable medical images while avoiding distortion or inconsistency. In this paper, in order to enhance the clinical relevance of generated data and improve the interpretability of the model, we propose a novel medical image generation framework, which generates independent pathological and structural features based on causal disentanglement and utilizes text-guided modeling of pathological features to regulate the generation of counterfactual images. First, we achieve feature separation through causal disentanglement and analyze the interactions between features. Here, we introduce group supervision to ensure the independence of pathological and identity features. Second, we leverage a diffusion model guided by pathological findings to model pathological features, enabling the generation of diverse counterfactual images. Meanwhile, we enhance accuracy by leveraging a large language model to extract lesion severity and location from medical reports. Additionally, we improve the performance of the latent diffusion model on long-tailed categories through initial noise optimization.

TRCE: Towards Reliable Malicious Concept Erasure in Text-to-Image Diffusion Models

Mar 10, 2025Abstract:Recent advances in text-to-image diffusion models enable photorealistic image generation, but they also risk producing malicious content, such as NSFW images. To mitigate risk, concept erasure methods are studied to facilitate the model to unlearn specific concepts. However, current studies struggle to fully erase malicious concepts implicitly embedded in prompts (e.g., metaphorical expressions or adversarial prompts) while preserving the model's normal generation capability. To address this challenge, our study proposes TRCE, using a two-stage concept erasure strategy to achieve an effective trade-off between reliable erasure and knowledge preservation. Firstly, TRCE starts by erasing the malicious semantics implicitly embedded in textual prompts. By identifying a critical mapping objective(i.e., the [EoT] embedding), we optimize the cross-attention layers to map malicious prompts to contextually similar prompts but with safe concepts. This step prevents the model from being overly influenced by malicious semantics during the denoising process. Following this, considering the deterministic properties of the sampling trajectory of the diffusion model, TRCE further steers the early denoising prediction toward the safe direction and away from the unsafe one through contrastive learning, thus further avoiding the generation of malicious content. Finally, we conduct comprehensive evaluations of TRCE on multiple malicious concept erasure benchmarks, and the results demonstrate its effectiveness in erasing malicious concepts while better preserving the model's original generation ability. The code is available at: http://github.com/ddgoodgood/TRCE. CAUTION: This paper includes model-generated content that may contain offensive material.

MV-CLIP: Multi-View CLIP for Zero-shot 3D Shape Recognition

Nov 30, 2023

Abstract:Large-scale pre-trained models have demonstrated impressive performance in vision and language tasks within open-world scenarios. Due to the lack of comparable pre-trained models for 3D shapes, recent methods utilize language-image pre-training to realize zero-shot 3D shape recognition. However, due to the modality gap, pretrained language-image models are not confident enough in the generalization to 3D shape recognition. Consequently, this paper aims to improve the confidence with view selection and hierarchical prompts. Leveraging the CLIP model as an example, we employ view selection on the vision side by identifying views with high prediction confidence from multiple rendered views of a 3D shape. On the textual side, the strategy of hierarchical prompts is proposed for the first time. The first layer prompts several classification candidates with traditional class-level descriptions, while the second layer refines the prediction based on function-level descriptions or further distinctions between the candidates. Remarkably, without the need for additional training, our proposed method achieves impressive zero-shot 3D classification accuracies of 84.44\%, 91.51\%, and 66.17\% on ModelNet40, ModelNet10, and ShapeNet Core55, respectively. Furthermore, we will make the code publicly available to facilitate reproducibility and further research in this area.

CAT-DM: Controllable Accelerated Virtual Try-on with Diffusion Model

Nov 30, 2023

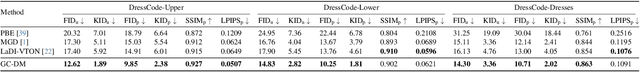

Abstract:Image-based virtual try-on enables users to virtually try on different garments by altering original clothes in their photographs. Generative Adversarial Networks (GANs) dominate the research field in image-based virtual try-on, but have not resolved problems such as unnatural deformation of garments and the blurry generation quality. Recently, diffusion models have emerged with surprising performance across various image generation tasks. While the generative quality of diffusion models is impressive, achieving controllability poses a significant challenge when applying it to virtual try-on tasks and multiple denoising iterations limit its potential for real-time applications. In this paper, we propose Controllable Accelerated virtual Try-on with Diffusion Model called CAT-DM. To enhance the controllability, a basic diffusion-based virtual try-on network is designed, which utilizes ControlNet to introduce additional control conditions and improves the feature extraction of garment images. In terms of acceleration, CAT-DM initiates a reverse denoising process with an implicit distribution generated by a pre-trained GAN-based model. Compared with previous try-on methods based on diffusion models, CAT-DM not only retains the pattern and texture details of the in-shop garment but also reduces the sampling steps without compromising generation quality. Extensive experiments demonstrate the superiority of CAT-DM against both GAN-based and diffusion-based methods in producing more realistic images and accurately reproducing garment patterns. Our code and models will be publicly released.

Image-Based Virtual Try-On: A Survey

Nov 08, 2023Abstract:Image-based virtual try-on aims to synthesize a naturally dressed person image with a clothing image, which revolutionizes online shopping and inspires related topics within image generation, showing both research significance and commercial potentials. However, there is a great gap between current research progress and commercial applications and an absence of comprehensive overview towards this field to accelerate the development. In this survey, we provide a comprehensive analysis of the state-of-the-art techniques and methodologies in aspects of pipeline architecture, person representation and key modules such as try-on indication, clothing warping and try-on stage. We propose a new semantic criteria with CLIP, and evaluate representative methods with uniformly implemented evaluation metrics on the same dataset. In addition to quantitative and qualitative evaluation of current open-source methods, we also utilize ControlNet to fine-tune a recent large image generation model (PBE) to show future potentials of large-scale models on image-based virtual try-on task. Finally, unresolved issues are revealed and future research directions are prospected to identify key trends and inspire further exploration. The uniformly implemented evaluation metrics, dataset and collected methods will be made public available at https://github.com/little-misfit/Survey-Of-Virtual-Try-On.

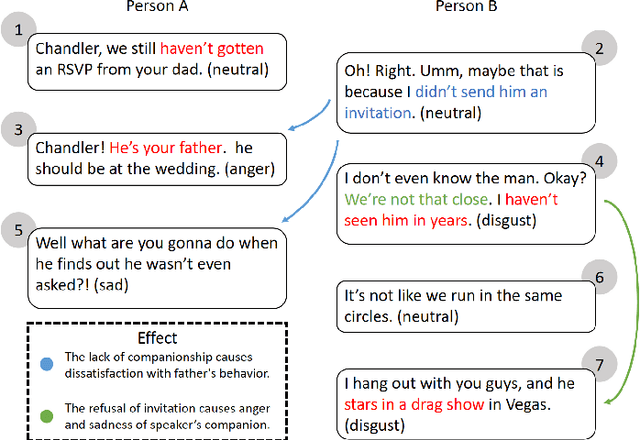

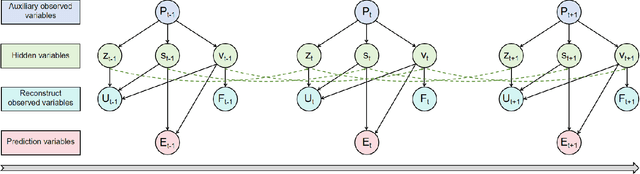

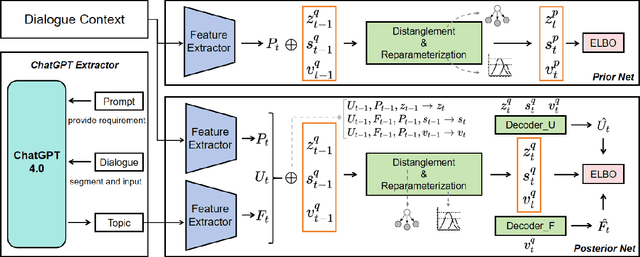

Dynamic Causal Disentanglement Model for Dialogue Emotion Detection

Sep 13, 2023

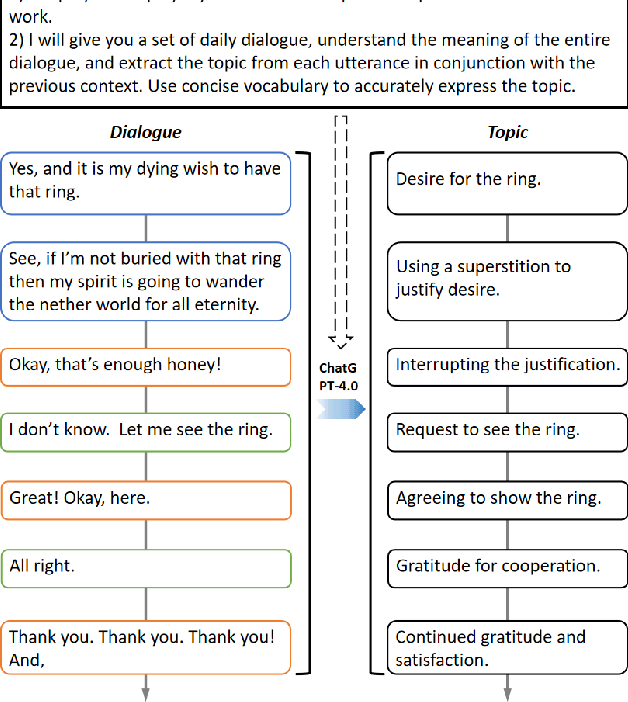

Abstract:Emotion detection is a critical technology extensively employed in diverse fields. While the incorporation of commonsense knowledge has proven beneficial for existing emotion detection methods, dialogue-based emotion detection encounters numerous difficulties and challenges due to human agency and the variability of dialogue content.In dialogues, human emotions tend to accumulate in bursts. However, they are often implicitly expressed. This implies that many genuine emotions remain concealed within a plethora of unrelated words and dialogues.In this paper, we propose a Dynamic Causal Disentanglement Model based on hidden variable separation, which is founded on the separation of hidden variables. This model effectively decomposes the content of dialogues and investigates the temporal accumulation of emotions, thereby enabling more precise emotion recognition. First, we introduce a novel Causal Directed Acyclic Graph (DAG) to establish the correlation between hidden emotional information and other observed elements. Subsequently, our approach utilizes pre-extracted personal attributes and utterance topics as guiding factors for the distribution of hidden variables, aiming to separate irrelevant ones. Specifically, we propose a dynamic temporal disentanglement model to infer the propagation of utterances and hidden variables, enabling the accumulation of emotion-related information throughout the conversation. To guide this disentanglement process, we leverage the ChatGPT-4.0 and LSTM networks to extract utterance topics and personal attributes as observed information.Finally, we test our approach on two popular datasets in dialogue emotion detection and relevant experimental results verified the model's superiority.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge