Keliang Xie

Organ-Agents: Virtual Human Physiology Simulator via LLMs

Aug 20, 2025Abstract:Recent advances in large language models (LLMs) have enabled new possibilities in simulating complex physiological systems. We introduce Organ-Agents, a multi-agent framework that simulates human physiology via LLM-driven agents. Each Simulator models a specific system (e.g., cardiovascular, renal, immune). Training consists of supervised fine-tuning on system-specific time-series data, followed by reinforcement-guided coordination using dynamic reference selection and error correction. We curated data from 7,134 sepsis patients and 7,895 controls, generating high-resolution trajectories across 9 systems and 125 variables. Organ-Agents achieved high simulation accuracy on 4,509 held-out patients, with per-system MSEs <0.16 and robustness across SOFA-based severity strata. External validation on 22,689 ICU patients from two hospitals showed moderate degradation under distribution shifts with stable simulation. Organ-Agents faithfully reproduces critical multi-system events (e.g., hypotension, hyperlactatemia, hypoxemia) with coherent timing and phase progression. Evaluation by 15 critical care physicians confirmed realism and physiological plausibility (mean Likert ratings 3.9 and 3.7). Organ-Agents also enables counterfactual simulations under alternative sepsis treatment strategies, generating trajectories and APACHE II scores aligned with matched real-world patients. In downstream early warning tasks, classifiers trained on synthetic data showed minimal AUROC drops (<0.04), indicating preserved decision-relevant patterns. These results position Organ-Agents as a credible, interpretable, and generalizable digital twin for precision diagnosis, treatment simulation, and hypothesis testing in critical care.

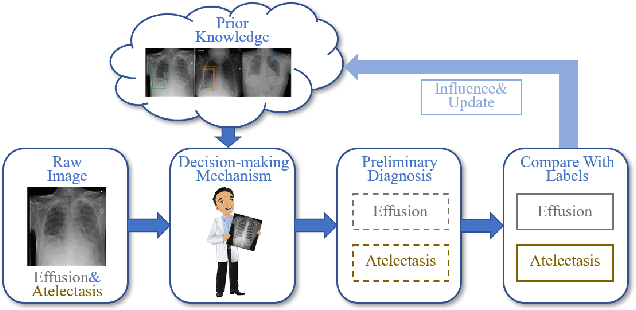

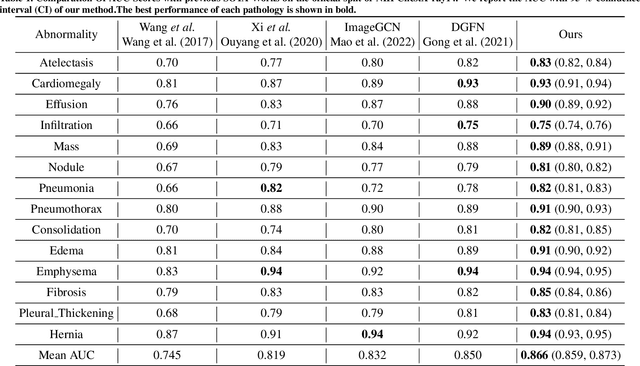

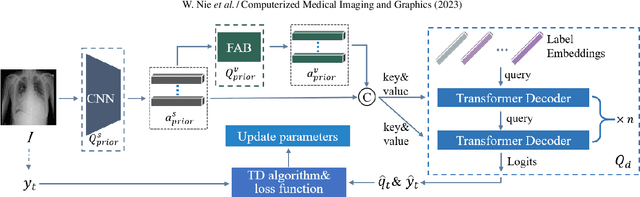

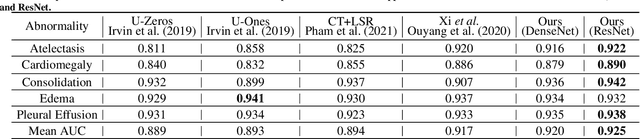

Deep Reinforcement Learning Framework for Thoracic Diseases Classification via Prior Knowledge Guidance

Jun 02, 2023

Abstract:The chest X-ray is often utilized for diagnosing common thoracic diseases. In recent years, many approaches have been proposed to handle the problem of automatic diagnosis based on chest X-rays. However, the scarcity of labeled data for related diseases still poses a huge challenge to an accurate diagnosis. In this paper, we focus on the thorax disease diagnostic problem and propose a novel deep reinforcement learning framework, which introduces prior knowledge to direct the learning of diagnostic agents and the model parameters can also be continuously updated as the data increases, like a person's learning process. Especially, 1) prior knowledge can be learned from the pre-trained model based on old data or other domains' similar data, which can effectively reduce the dependence on target domain data, and 2) the framework of reinforcement learning can make the diagnostic agent as exploratory as a human being and improve the accuracy of diagnosis through continuous exploration. The method can also effectively solve the model learning problem in the case of few-shot data and improve the generalization ability of the model. Finally, our approach's performance was demonstrated using the well-known NIH ChestX-ray 14 and CheXpert datasets, and we achieved competitive results. The source code can be found here: \url{https://github.com/NeaseZ/MARL}.

Instrumental Variable Learning for Chest X-ray Classification

May 20, 2023

Abstract:The chest X-ray (CXR) is commonly employed to diagnose thoracic illnesses, but the challenge of achieving accurate automatic diagnosis through this method persists due to the complex relationship between pathology. In recent years, various deep learning-based approaches have been suggested to tackle this problem but confounding factors such as image resolution or noise problems often damage model performance. In this paper, we focus on the chest X-ray classification task and proposed an interpretable instrumental variable (IV) learning framework, to eliminate the spurious association and obtain accurate causal representation. Specifically, we first construct a structural causal model (SCM) for our task and learn the confounders and the preliminary representations of IV, we then leverage electronic health record (EHR) as auxiliary information and we fuse the above feature with our transformer-based semantic fusion module, so the IV has the medical semantic. Meanwhile, the reliability of IV is further guaranteed via the constraints of mutual information between related causal variables. Finally, our approach's performance is demonstrated using the MIMIC-CXR, NIH ChestX-ray 14, and CheXpert datasets, and we achieve competitive results.

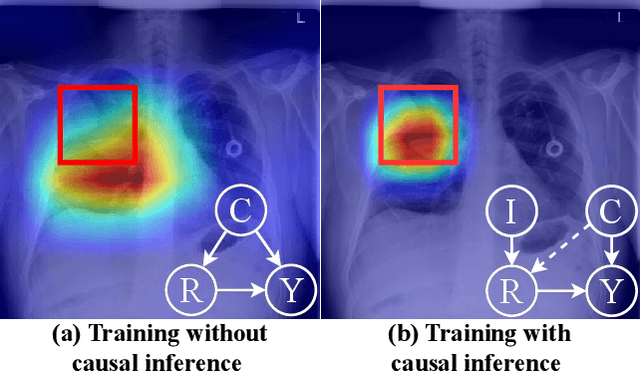

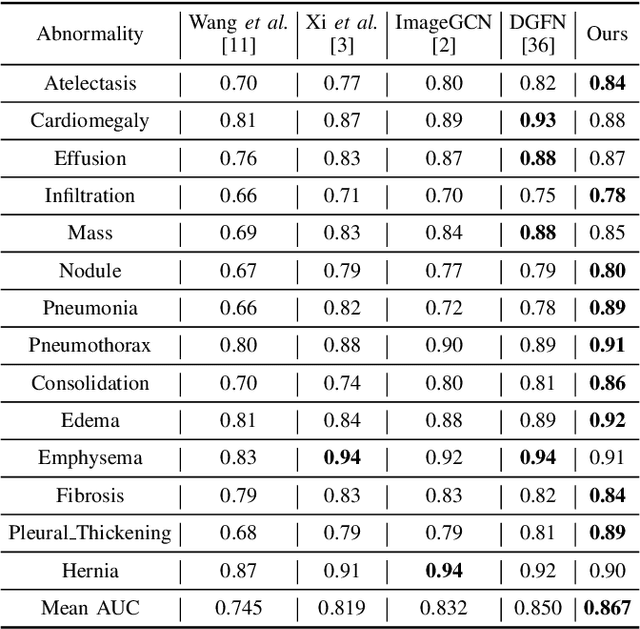

Chest X-ray Image Classification: A Causal Perspective

May 20, 2023Abstract:The chest X-ray (CXR) is one of the most common and easy-to-get medical tests used to diagnose common diseases of the chest. Recently, many deep learning-based methods have been proposed that are capable of effectively classifying CXRs. Even though these techniques have worked quite well, it is difficult to establish whether what these algorithms actually learn is the cause-and-effect link between diseases and their causes or just how to map labels to photos.In this paper, we propose a causal approach to address the CXR classification problem, which constructs a structural causal model (SCM) and uses the backdoor adjustment to select effective visual information for CXR classification. Specially, we design different probability optimization functions to eliminate the influence of confounders on the learning of real causality. Experimental results demonstrate that our proposed method outperforms the open-source NIH ChestX-ray14 in terms of classification performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge