Zixuan Liu

Targeting Misalignment: A Conflict-Aware Framework for Reward-Model-based LLM Alignment

Dec 10, 2025Abstract:Reward-model-based fine-tuning is a central paradigm in aligning Large Language Models with human preferences. However, such approaches critically rely on the assumption that proxy reward models accurately reflect intended supervision, a condition often violated due to annotation noise, bias, or limited coverage. This misalignment can lead to undesirable behaviors, where models optimize for flawed signals rather than true human values. In this paper, we investigate a novel framework to identify and mitigate such misalignment by treating the fine-tuning process as a form of knowledge integration. We focus on detecting instances of proxy-policy conflicts, cases where the base model strongly disagrees with the proxy. We argue that such conflicts often signify areas of shared ignorance, where neither the policy nor the reward model possesses sufficient knowledge, making them especially susceptible to misalignment. To this end, we propose two complementary metrics for identifying these conflicts: a localized Proxy-Policy Alignment Conflict Score (PACS) and a global Kendall-Tau Distance measure. Building on this insight, we design an algorithm named Selective Human-in-the-loop Feedback via Conflict-Aware Sampling (SHF-CAS) that targets high-conflict QA pairs for additional feedback, refining both the reward model and policy efficiently. Experiments on two alignment tasks demonstrate that our approach enhances general alignment performance, even when trained with a biased proxy reward. Our work provides a new lens for interpreting alignment failures and offers a principled pathway for targeted refinement in LLM training.

Causal Inspired Multi Modal Recommendation

Oct 14, 2025

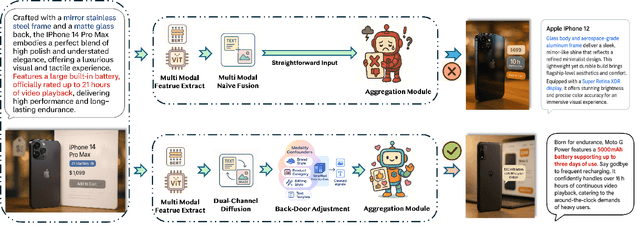

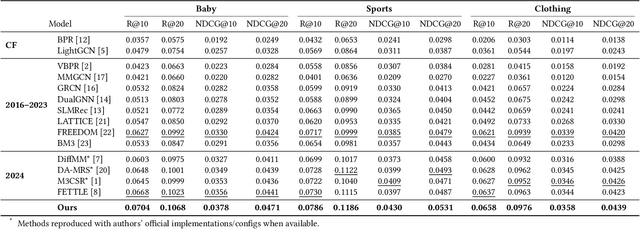

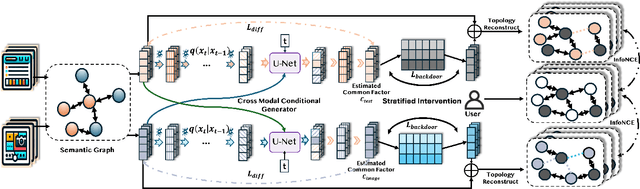

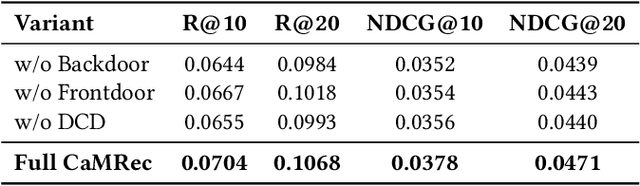

Abstract:Multimodal recommender systems enhance personalized recommendations in e-commerce and online advertising by integrating visual, textual, and user-item interaction data. However, existing methods often overlook two critical biases: (i) modal confounding, where latent factors (e.g., brand style or product category) simultaneously drive multiple modalities and influence user preference, leading to spurious feature-preference associations; (ii) interaction bias, where genuine user preferences are mixed with noise from exposure effects and accidental clicks. To address these challenges, we propose a Causal-inspired multimodal Recommendation framework. Specifically, we introduce a dual-channel cross-modal diffusion module to identify hidden modal confounders, utilize back-door adjustment with hierarchical matching and vector-quantized codebooks to block confounding paths, and apply front-door adjustment combined with causal topology reconstruction to build a deconfounded causal subgraph. Extensive experiments on three real-world e-commerce datasets demonstrate that our method significantly outperforms state-of-the-art baselines while maintaining strong interpretability.

On the Hardness of Unsupervised Domain Adaptation: Optimal Learners and Information-Theoretic Perspective

Jul 09, 2025Abstract:This paper studies the hardness of unsupervised domain adaptation (UDA) under covariate shift. We model the uncertainty that the learner faces by a distribution $\pi$ in the ground-truth triples $(p, q, f)$ -- which we call a UDA class -- where $(p, q)$ is the source -- target distribution pair and $f$ is the classifier. We define the performance of a learner as the overall target domain risk, averaged over the randomness of the ground-truth triple. This formulation couples the source distribution, the target distribution and the classifier in the ground truth, and deviates from the classical worst-case analyses, which pessimistically emphasize the impact of hard but rare UDA instances. In this formulation, we precisely characterize the optimal learner. The performance of the optimal learner then allows us to define the learning difficulty for the UDA class and for the observed sample. To quantify this difficulty, we introduce an information-theoretic quantity -- Posterior Target Label Uncertainty (PTLU) -- along with its empirical estimate (EPTLU) from the sample , which capture the uncertainty in the prediction for the target domain. Briefly, PTLU is the entropy of the predicted label in the target domain under the posterior distribution of ground-truth classifier given the observed source and target samples. By proving that such a quantity serves to lower-bound the risk of any learner, we suggest that these quantities can be used as proxies for evaluating the hardness of UDA learning. We provide several examples to demonstrate the advantage of PTLU, relative to the existing measures, in evaluating the difficulty of UDA learning.

DexSinGrasp: Learning a Unified Policy for Dexterous Object Singulation and Grasping in Cluttered Environments

Apr 06, 2025Abstract:Grasping objects in cluttered environments remains a fundamental yet challenging problem in robotic manipulation. While prior works have explored learning-based synergies between pushing and grasping for two-fingered grippers, few have leveraged the high degrees of freedom (DoF) in dexterous hands to perform efficient singulation for grasping in cluttered settings. In this work, we introduce DexSinGrasp, a unified policy for dexterous object singulation and grasping. DexSinGrasp enables high-dexterity object singulation to facilitate grasping, significantly improving efficiency and effectiveness in cluttered environments. We incorporate clutter arrangement curriculum learning to enhance success rates and generalization across diverse clutter conditions, while policy distillation enables a deployable vision-based grasping strategy. To evaluate our approach, we introduce a set of cluttered grasping tasks with varying object arrangements and occlusion levels. Experimental results show that our method outperforms baselines in both efficiency and grasping success rate, particularly in dense clutter. Codes, appendix, and videos are available on our project website https://nus-lins-lab.github.io/dexsingweb/.

BADGR: Bundle Adjustment Diffusion Conditioned by GRadients for Wide-Baseline Floor Plan Reconstruction

Mar 25, 2025Abstract:Reconstructing precise camera poses and floor plan layouts from wide-baseline RGB panoramas is a difficult and unsolved problem. We introduce BADGR, a novel diffusion model that jointly performs reconstruction and bundle adjustment (BA) to refine poses and layouts from a coarse state, using 1D floor boundary predictions from dozens of images of varying input densities. Unlike a guided diffusion model, BADGR is conditioned on dense per-entity outputs from a single-step Levenberg Marquardt (LM) optimizer and is trained to predict camera and wall positions while minimizing reprojection errors for view-consistency. The objective of layout generation from denoising diffusion process complements BA optimization by providing additional learned layout-structural constraints on top of the co-visible features across images. These constraints help BADGR to make plausible guesses on spatial relations which help constrain pose graph, such as wall adjacency, collinearity, and learn to mitigate errors from dense boundary observations with global contexts. BADGR trains exclusively on 2D floor plans, simplifying data acquisition, enabling robust augmentation, and supporting variety of input densities. Our experiments and analysis validate our method, which significantly outperforms the state-of-the-art pose and floor plan layout reconstruction with different input densities.

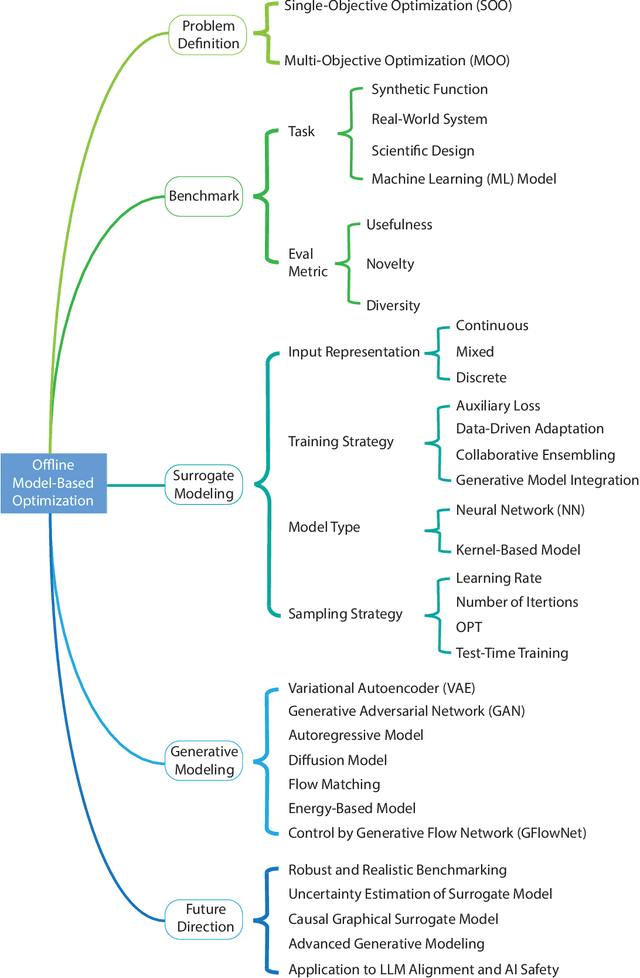

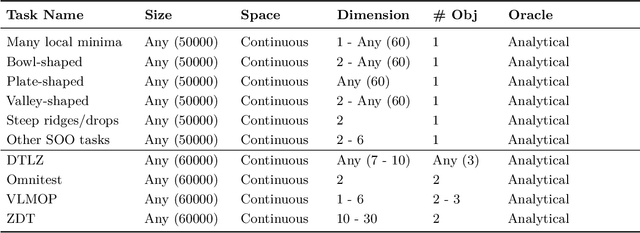

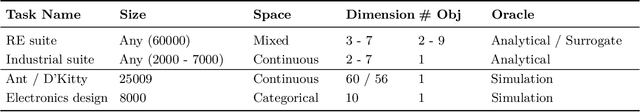

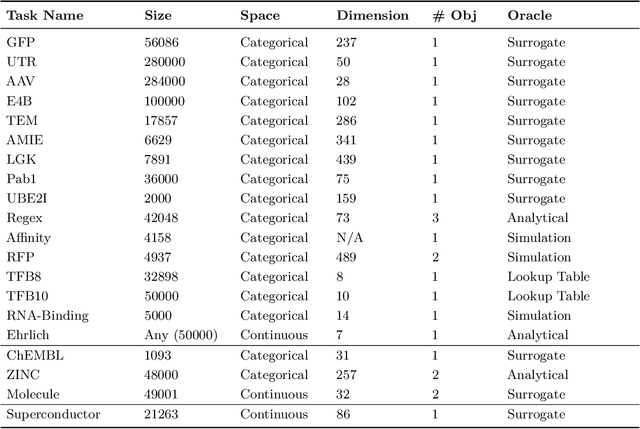

Offline Model-Based Optimization: Comprehensive Review

Mar 21, 2025

Abstract:Offline optimization is a fundamental challenge in science and engineering, where the goal is to optimize black-box functions using only offline datasets. This setting is particularly relevant when querying the objective function is prohibitively expensive or infeasible, with applications spanning protein engineering, material discovery, neural architecture search, and beyond. The main difficulty lies in accurately estimating the objective landscape beyond the available data, where extrapolations are fraught with significant epistemic uncertainty. This uncertainty can lead to objective hacking(reward hacking), exploiting model inaccuracies in unseen regions, or other spurious optimizations that yield misleadingly high performance estimates outside the training distribution. Recent advances in model-based optimization(MBO) have harnessed the generalization capabilities of deep neural networks to develop offline-specific surrogate and generative models. Trained with carefully designed strategies, these models are more robust against out-of-distribution issues, facilitating the discovery of improved designs. Despite its growing impact in accelerating scientific discovery, the field lacks a comprehensive review. To bridge this gap, we present the first thorough review of offline MBO. We begin by formalizing the problem for both single-objective and multi-objective settings and by reviewing recent benchmarks and evaluation metrics. We then categorize existing approaches into two key areas: surrogate modeling, which emphasizes accurate function approximation in out-of-distribution regions, and generative modeling, which explores high-dimensional design spaces to identify high-performing designs. Finally, we examine the key challenges and propose promising directions for advancement in this rapidly evolving field including safe control of superintelligent systems.

Deformable Registration Framework for Augmented Reality-based Surgical Guidance in Head and Neck Tumor Resection

Mar 11, 2025Abstract:Head and neck squamous cell carcinoma (HNSCC) has one of the highest rates of recurrence cases among solid malignancies. Recurrence rates can be reduced by improving positive margins localization. Frozen section analysis (FSA) of resected specimens is the gold standard for intraoperative margin assessment. However, because of the complex 3D anatomy and the significant shrinkage of resected specimens, accurate margin relocation from specimen back onto the resection site based on FSA results remains challenging. We propose a novel deformable registration framework that uses both the pre-resection upper surface and the post-resection site of the specimen to incorporate thickness information into the registration process. The proposed method significantly improves target registration error (TRE), demonstrating enhanced adaptability to thicker specimens. In tongue specimens, the proposed framework improved TRE by up to 33% as compared to prior deformable registration. Notably, tongue specimens exhibit complex 3D anatomies and hold the highest clinical significance compared to other head and neck specimens from the buccal and skin. We analyzed distinct deformation behaviors in different specimens, highlighting the need for tailored deformation strategies. To further aid intraoperative visualization, we also integrated this framework with an augmented reality-based auto-alignment system. The combined system can accurately and automatically overlay the deformed 3D specimen mesh with positive margin annotation onto the resection site. With a pilot study of the AR guided framework involving two surgeons, the integrated system improved the surgeons' average target relocation error from 9.8 cm to 4.8 cm.

GrInAdapt: Scaling Retinal Vessel Structural Map Segmentation Through Grounding, Integrating and Adapting Multi-device, Multi-site, and Multi-modal Fundus Domains

Mar 08, 2025Abstract:Retinal vessel segmentation is critical for diagnosing ocular conditions, yet current deep learning methods are limited by modality-specific challenges and significant distribution shifts across imaging devices, resolutions, and anatomical regions. In this paper, we propose GrInAdapt, a novel framework for source-free multi-target domain adaptation that leverages multi-view images to refine segmentation labels and enhance model generalizability for optical coherence tomography angiography (OCTA) of the fundus of the eye. GrInAdapt follows an intuitive three-step approach: (i) grounding images to a common anchor space via registration, (ii) integrating predictions from multiple views to achieve improved label consensus, and (iii) adapting the source model to diverse target domains. Furthermore, GrInAdapt is flexible enough to incorporate auxiliary modalities such as color fundus photography, to provide complementary cues for robust vessel segmentation. Extensive experiments on a multi-device, multi-site, and multi-modal retinal dataset demonstrate that GrInAdapt significantly outperforms existing domain adaptation methods, achieving higher segmentation accuracy and robustness across multiple domains. These results highlight the potential of GrInAdapt to advance automated retinal vessel analysis and support robust clinical decision-making.

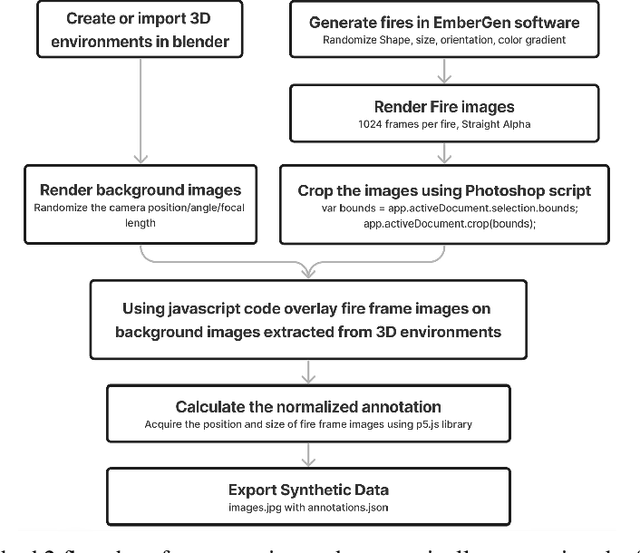

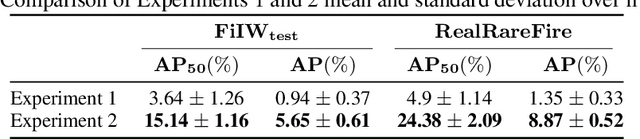

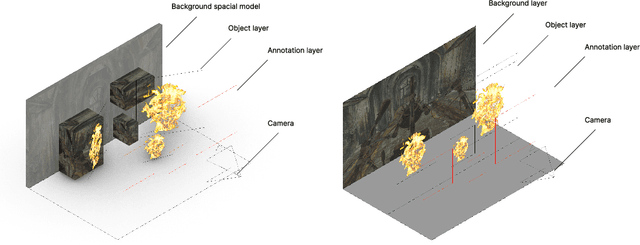

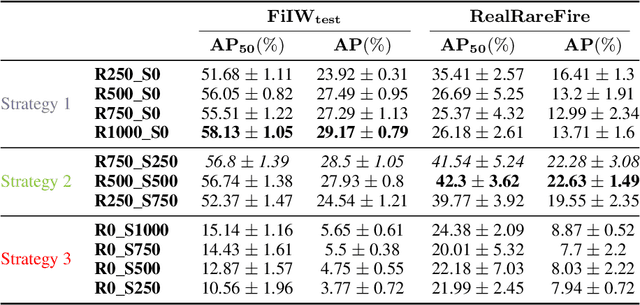

Synthetic imagery for fuzzy object detection: A comparative study

Oct 01, 2024

Abstract:The fuzzy object detection is a challenging field of research in computer vision (CV). Distinguishing between fuzzy and non-fuzzy object detection in CV is important. Fuzzy objects such as fire, smoke, mist, and steam present significantly greater complexities in terms of visual features, blurred edges, varying shapes, opacity, and volume compared to non-fuzzy objects such as trees and cars. Collection of a balanced and diverse dataset and accurate annotation is crucial to achieve better ML models for fuzzy objects, however, the task of collection and annotation is still highly manual. In this research, we propose and leverage an alternative method of generating and automatically annotating fully synthetic fire images based on 3D models for training an object detection model. Moreover, the performance, and efficiency of the trained ML models on synthetic images is compared with ML models trained on real imagery and mixed imagery. Findings proved the effectiveness of the synthetic data for fire detection, while the performance improves as the test dataset covers a broader spectrum of real fires. Our findings illustrates that when synthetic imagery and real imagery is utilized in a mixed training set the resulting ML model outperforms models trained on real imagery as well as models trained on synthetic imagery for detection of a broad spectrum of fires. The proposed method for automating the annotation of synthetic fuzzy objects imagery carries substantial implications for reducing both time and cost in creating computer vision models specifically tailored for detecting fuzzy objects.

Robust Guided Diffusion for Offline Black-Box Optimization

Oct 01, 2024

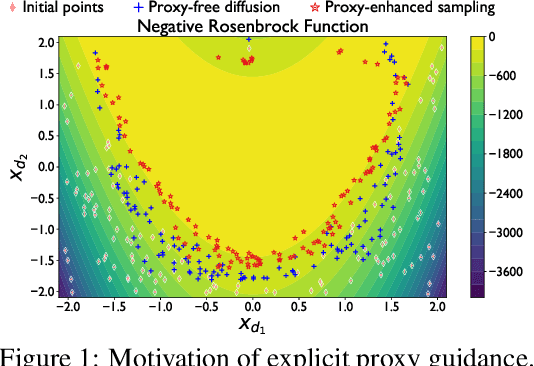

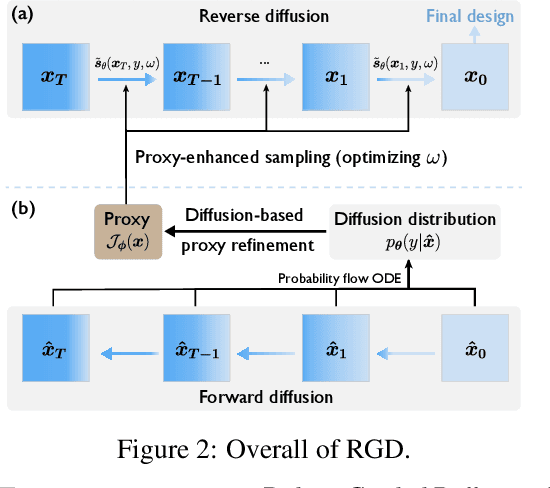

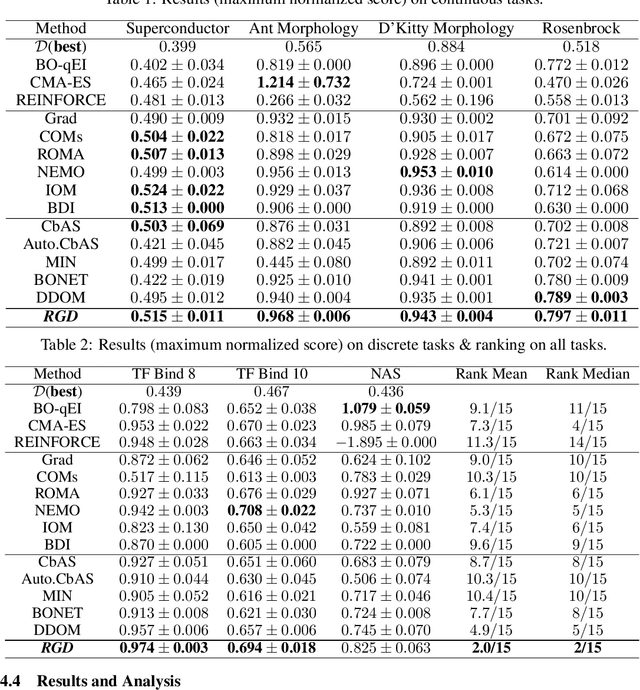

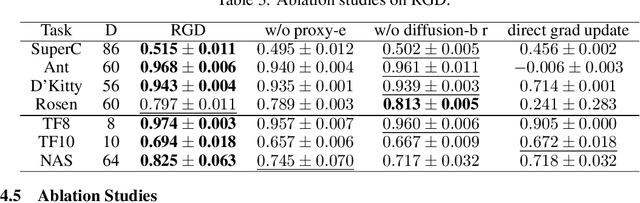

Abstract:Offline black-box optimization aims to maximize a black-box function using an offline dataset of designs and their measured properties. Two main approaches have emerged: the forward approach, which learns a mapping from input to its value, thereby acting as a proxy to guide optimization, and the inverse approach, which learns a mapping from value to input for conditional generation. (a) Although proxy-free~(classifier-free) diffusion shows promise in robustly modeling the inverse mapping, it lacks explicit guidance from proxies, essential for generating high-performance samples beyond the training distribution. Therefore, we propose \textit{proxy-enhanced sampling} which utilizes the explicit guidance from a trained proxy to bolster proxy-free diffusion with enhanced sampling control. (b) Yet, the trained proxy is susceptible to out-of-distribution issues. To address this, we devise the module \textit{diffusion-based proxy refinement}, which seamlessly integrates insights from proxy-free diffusion back into the proxy for refinement. To sum up, we propose \textit{\textbf{R}obust \textbf{G}uided \textbf{D}iffusion for Offline Black-box Optimization}~(\textbf{RGD}), combining the advantages of proxy~(explicit guidance) and proxy-free diffusion~(robustness) for effective conditional generation. RGD achieves state-of-the-art results on various design-bench tasks, underscoring its efficacy. Our code is at https://anonymous.4open.science/r/RGD-27A5/README.md.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge