Alexandros Karargyris

IHU Strasbourg, UNISTRA

Medical Imaging AI Competitions Lack Fairness

Dec 19, 2025

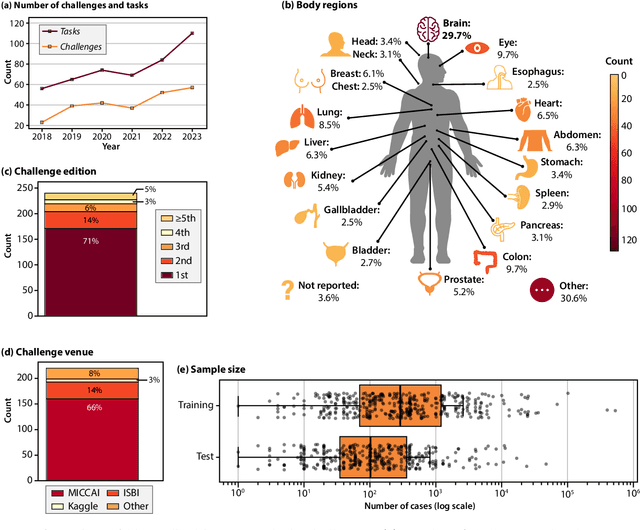

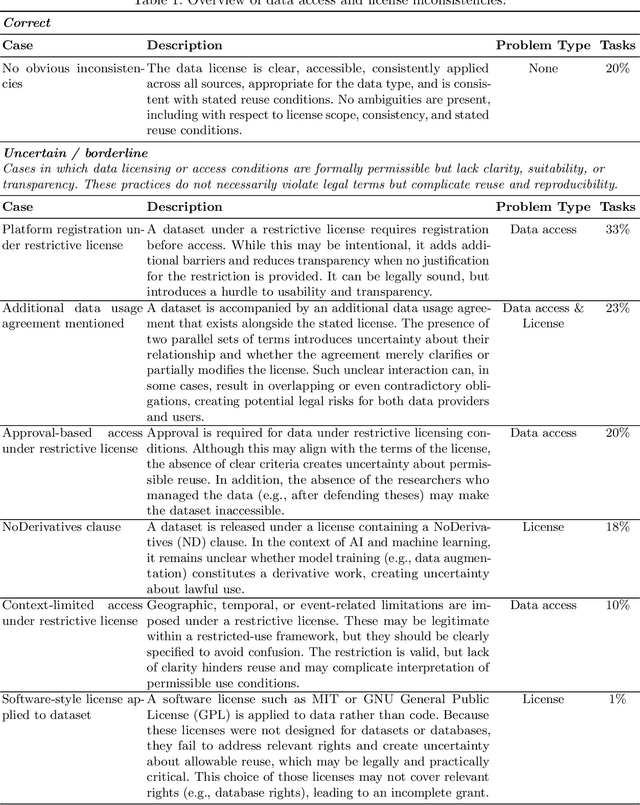

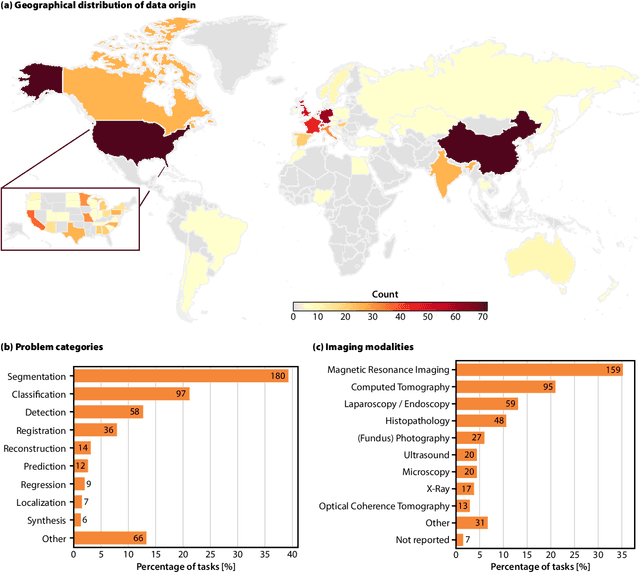

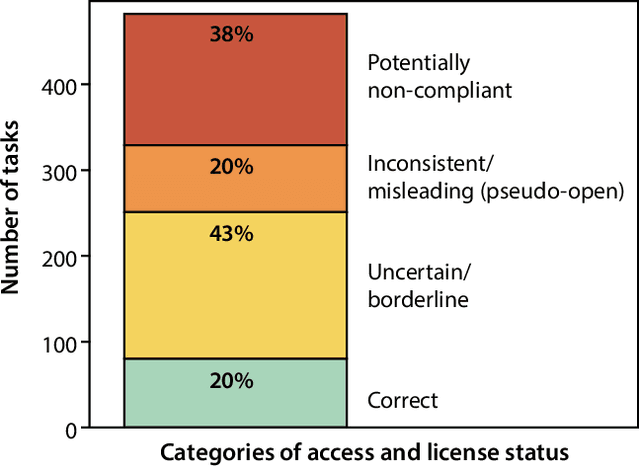

Abstract:Benchmarking competitions are central to the development of artificial intelligence (AI) in medical imaging, defining performance standards and shaping methodological progress. However, it remains unclear whether these benchmarks provide data that are sufficiently representative, accessible, and reusable to support clinically meaningful AI. In this work, we assess fairness along two complementary dimensions: (1) whether challenge datasets are representative of real-world clinical diversity, and (2) whether they are accessible and legally reusable in line with the FAIR principles. To address this question, we conducted a large-scale systematic study of 241 biomedical image analysis challenges comprising 458 tasks across 19 imaging modalities. Our findings show substantial biases in dataset composition, including geographic location, modality-, and problem type-related biases, indicating that current benchmarks do not adequately reflect real-world clinical diversity. Despite their widespread influence, challenge datasets were frequently constrained by restrictive or ambiguous access conditions, inconsistent or non-compliant licensing practices, and incomplete documentation, limiting reproducibility and long-term reuse. Together, these shortcomings expose foundational fairness limitations in our benchmarking ecosystem and highlight a disconnect between leaderboard success and clinical relevance.

Analysis of the MICCAI Brain Tumor Segmentation -- Metastases (BraTS-METS) 2025 Lighthouse Challenge: Brain Metastasis Segmentation on Pre- and Post-treatment MRI

Apr 16, 2025Abstract:Despite continuous advancements in cancer treatment, brain metastatic disease remains a significant complication of primary cancer and is associated with an unfavorable prognosis. One approach for improving diagnosis, management, and outcomes is to implement algorithms based on artificial intelligence for the automated segmentation of both pre- and post-treatment MRI brain images. Such algorithms rely on volumetric criteria for lesion identification and treatment response assessment, which are still not available in clinical practice. Therefore, it is critical to establish tools for rapid volumetric segmentations methods that can be translated to clinical practice and that are trained on high quality annotated data. The BraTS-METS 2025 Lighthouse Challenge aims to address this critical need by establishing inter-rater and intra-rater variability in dataset annotation by generating high quality annotated datasets from four individual instances of segmentation by neuroradiologists while being recorded on video (two instances doing "from scratch" and two instances after AI pre-segmentation). This high-quality annotated dataset will be used for testing phase in 2025 Lighthouse challenge and will be publicly released at the completion of the challenge. The 2025 Lighthouse challenge will also release the 2023 and 2024 segmented datasets that were annotated using an established pipeline of pre-segmentation, student annotation, two neuroradiologists checking, and one neuroradiologist finalizing the process. It builds upon its previous edition by including post-treatment cases in the dataset. Using these high-quality annotated datasets, the 2025 Lighthouse challenge plans to test benchmark algorithms for automated segmentation of pre-and post-treatment brain metastases (BM), trained on diverse and multi-institutional datasets of MRI images obtained from patients with brain metastases.

Towards Real-time Intrahepatic Vessel Identification in Intraoperative Ultrasound-Guided Liver Surgery

Oct 04, 2024Abstract:While laparoscopic liver resection is less prone to complications and maintains patient outcomes compared to traditional open surgery, its complexity hinders widespread adoption due to challenges in representing the liver's internal structure. Laparoscopic intraoperative ultrasound offers efficient, cost-effective and radiation-free guidance. Our objective is to aid physicians in identifying internal liver structures using laparoscopic intraoperative ultrasound. We propose a patient-specific approach using preoperative 3D ultrasound liver volume to train a deep learning model for real-time identification of portal tree and branch structures. Our personalized AI model, validated on ex vivo swine livers, achieved superior precision (0.95) and recall (0.93) compared to surgeons, laying groundwork for precise vessel identification in ultrasound-based liver resection. Its adaptability and potential clinical impact promise to advance surgical interventions and improve patient care.

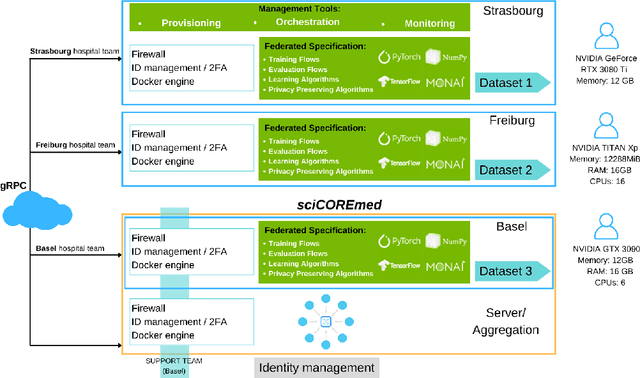

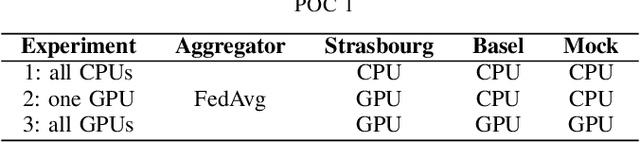

Clinnova Federated Learning Proof of Concept: Key Takeaways from a Cross-border Collaboration

Oct 03, 2024

Abstract:Clinnova, a collaborative initiative involving France, Germany, Switzerland, and Luxembourg, is dedicated to unlocking the power of precision medicine through data federation, standardization, and interoperability. This European Greater Region initiative seeks to create an interoperable European standard using artificial intelligence (AI) and data science to enhance healthcare outcomes and efficiency. Key components include multidisciplinary research centers, a federated biobanking strategy, a digital health innovation platform, and a federated AI strategy. It targets inflammatory bowel disease, rheumatoid diseases, and multiple sclerosis (MS), emphasizing data quality to develop AI algorithms for personalized treatment and translational research. The IHU Strasbourg (Institute of Minimal-invasive Surgery) has the lead in this initiative to develop the federated learning (FL) proof of concept (POC) that will serve as a foundation for advancing AI in healthcare. At its core, Clinnova-MS aims to enhance MS patient care by using FL to develop more accurate models that detect disease progression, guide interventions, and validate digital biomarkers across multiple sites. This technical report presents insights and key takeaways from the first cross-border federated POC on MS segmentation of MRI images within the Clinnova framework. While our work marks a significant milestone in advancing MS segmentation through cross-border collaboration, it also underscores the importance of addressing technical, logistical, and ethical considerations to realize the full potential of FL in healthcare settings.

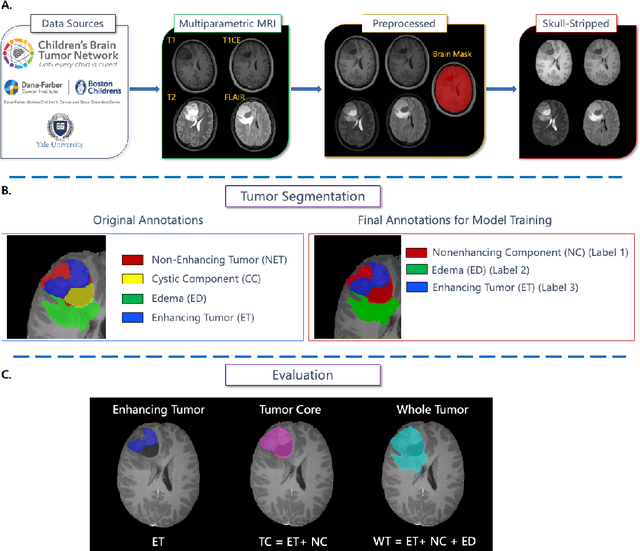

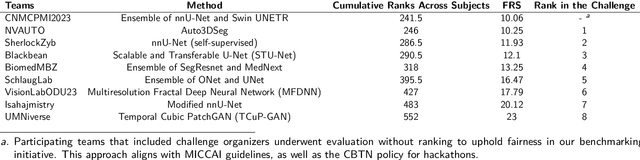

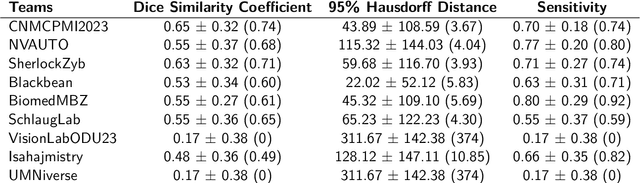

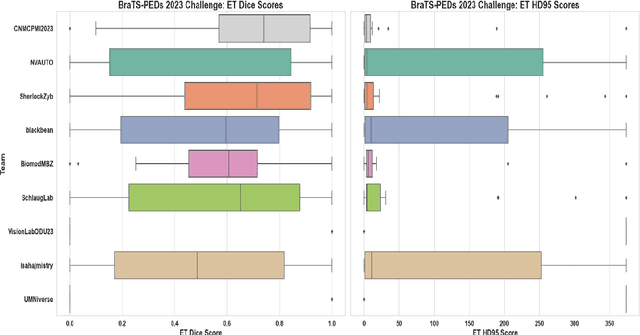

BraTS-PEDs: Results of the Multi-Consortium International Pediatric Brain Tumor Segmentation Challenge 2023

Jul 11, 2024

Abstract:Pediatric central nervous system tumors are the leading cause of cancer-related deaths in children. The five-year survival rate for high-grade glioma in children is less than 20%. The development of new treatments is dependent upon multi-institutional collaborative clinical trials requiring reproducible and accurate centralized response assessment. We present the results of the BraTS-PEDs 2023 challenge, the first Brain Tumor Segmentation (BraTS) challenge focused on pediatric brain tumors. This challenge utilized data acquired from multiple international consortia dedicated to pediatric neuro-oncology and clinical trials. BraTS-PEDs 2023 aimed to evaluate volumetric segmentation algorithms for pediatric brain gliomas from magnetic resonance imaging using standardized quantitative performance evaluation metrics employed across the BraTS 2023 challenges. The top-performing AI approaches for pediatric tumor analysis included ensembles of nnU-Net and Swin UNETR, Auto3DSeg, or nnU-Net with a self-supervised framework. The BraTSPEDs 2023 challenge fostered collaboration between clinicians (neuro-oncologists, neuroradiologists) and AI/imaging scientists, promoting faster data sharing and the development of automated volumetric analysis techniques. These advancements could significantly benefit clinical trials and improve the care of children with brain tumors.

Self-supervised Learning via Cluster Distance Prediction for Operating Room Context Awareness

Jul 07, 2024Abstract:Semantic segmentation and activity classification are key components to creating intelligent surgical systems able to understand and assist clinical workflow. In the Operating Room, semantic segmentation is at the core of creating robots aware of clinical surroundings, whereas activity classification aims at understanding OR workflow at a higher level. State-of-the-art semantic segmentation and activity recognition approaches are fully supervised, which is not scalable. Self-supervision can decrease the amount of annotated data needed. We propose a new 3D self-supervised task for OR scene understanding utilizing OR scene images captured with ToF cameras. Contrary to other self-supervised approaches, where handcrafted pretext tasks are focused on 2D image features, our proposed task consists of predicting the relative 3D distance of image patches by exploiting the depth maps. Learning 3D spatial context generates discriminative features for our downstream tasks. Our approach is evaluated on two tasks and datasets containing multi-view data captured from clinical scenarios. We demonstrate a noteworthy improvement of performance on both tasks, specifically on low-regime data where utility of self-supervised learning is the highest.

Brain Tumor Segmentation (BraTS) Challenge 2024: Meningioma Radiotherapy Planning Automated Segmentation

May 28, 2024Abstract:The 2024 Brain Tumor Segmentation Meningioma Radiotherapy (BraTS-MEN-RT) challenge aims to advance automated segmentation algorithms using the largest known multi-institutional dataset of radiotherapy planning brain MRIs with expert-annotated target labels for patients with intact or post-operative meningioma that underwent either conventional external beam radiotherapy or stereotactic radiosurgery. Each case includes a defaced 3D post-contrast T1-weighted radiotherapy planning MRI in its native acquisition space, accompanied by a single-label "target volume" representing the gross tumor volume (GTV) and any at-risk post-operative site. Target volume annotations adhere to established radiotherapy planning protocols, ensuring consistency across cases and institutions. For pre-operative meningiomas, the target volume encompasses the entire GTV and associated nodular dural tail, while for post-operative cases, it includes at-risk resection cavity margins as determined by the treating institution. Case annotations were reviewed and approved by expert neuroradiologists and radiation oncologists. Participating teams will develop, containerize, and evaluate automated segmentation models using this comprehensive dataset. Model performance will be assessed using the lesion-wise Dice Similarity Coefficient and the 95% Hausdorff distance. The top-performing teams will be recognized at the Medical Image Computing and Computer Assisted Intervention Conference in October 2024. BraTS-MEN-RT is expected to significantly advance automated radiotherapy planning by enabling precise tumor segmentation and facilitating tailored treatment, ultimately improving patient outcomes.

BraTS-Path Challenge: Assessing Heterogeneous Histopathologic Brain Tumor Sub-regions

May 17, 2024Abstract:Glioblastoma is the most common primary adult brain tumor, with a grim prognosis - median survival of 12-18 months following treatment, and 4 months otherwise. Glioblastoma is widely infiltrative in the cerebral hemispheres and well-defined by heterogeneous molecular and micro-environmental histopathologic profiles, which pose a major obstacle in treatment. Correctly diagnosing these tumors and assessing their heterogeneity is crucial for choosing the precise treatment and potentially enhancing patient survival rates. In the gold-standard histopathology-based approach to tumor diagnosis, detecting various morpho-pathological features of distinct histology throughout digitized tissue sections is crucial. Such "features" include the presence of cellular tumor, geographic necrosis, pseudopalisading necrosis, areas abundant in microvascular proliferation, infiltration into the cortex, wide extension in subcortical white matter, leptomeningeal infiltration, regions dense with macrophages, and the presence of perivascular or scattered lymphocytes. With these features in mind and building upon the main aim of the BraTS Cluster of Challenges https://www.synapse.org/brats2024, the goal of the BraTS-Path challenge is to provide a systematically prepared comprehensive dataset and a benchmarking environment to develop and fairly compare deep-learning models capable of identifying tumor sub-regions of distinct histologic profile. These models aim to further our understanding of the disease and assist in the diagnosis and grading of conditions in a consistent manner.

Analysis of the BraTS 2023 Intracranial Meningioma Segmentation Challenge

May 16, 2024

Abstract:We describe the design and results from the BraTS 2023 Intracranial Meningioma Segmentation Challenge. The BraTS Meningioma Challenge differed from prior BraTS Glioma challenges in that it focused on meningiomas, which are typically benign extra-axial tumors with diverse radiologic and anatomical presentation and a propensity for multiplicity. Nine participating teams each developed deep-learning automated segmentation models using image data from the largest multi-institutional systematically expert annotated multilabel multi-sequence meningioma MRI dataset to date, which included 1000 training set cases, 141 validation set cases, and 283 hidden test set cases. Each case included T2, T2/FLAIR, T1, and T1Gd brain MRI sequences with associated tumor compartment labels delineating enhancing tumor, non-enhancing tumor, and surrounding non-enhancing T2/FLAIR hyperintensity. Participant automated segmentation models were evaluated and ranked based on a scoring system evaluating lesion-wise metrics including dice similarity coefficient (DSC) and 95% Hausdorff Distance. The top ranked team had a lesion-wise median dice similarity coefficient (DSC) of 0.976, 0.976, and 0.964 for enhancing tumor, tumor core, and whole tumor, respectively and a corresponding average DSC of 0.899, 0.904, and 0.871, respectively. These results serve as state-of-the-art benchmarks for future pre-operative meningioma automated segmentation algorithms. Additionally, we found that 1286 of 1424 cases (90.3%) had at least 1 compartment voxel abutting the edge of the skull-stripped image edge, which requires further investigation into optimal pre-processing face anonymization steps.

Understanding metric-related pitfalls in image analysis validation

Feb 09, 2023Abstract:Validation metrics are key for the reliable tracking of scientific progress and for bridging the current chasm between artificial intelligence (AI) research and its translation into practice. However, increasing evidence shows that particularly in image analysis, metrics are often chosen inadequately in relation to the underlying research problem. This could be attributed to a lack of accessibility of metric-related knowledge: While taking into account the individual strengths, weaknesses, and limitations of validation metrics is a critical prerequisite to making educated choices, the relevant knowledge is currently scattered and poorly accessible to individual researchers. Based on a multi-stage Delphi process conducted by a multidisciplinary expert consortium as well as extensive community feedback, the present work provides the first reliable and comprehensive common point of access to information on pitfalls related to validation metrics in image analysis. Focusing on biomedical image analysis but with the potential of transfer to other fields, the addressed pitfalls generalize across application domains and are categorized according to a newly created, domain-agnostic taxonomy. To facilitate comprehension, illustrations and specific examples accompany each pitfall. As a structured body of information accessible to researchers of all levels of expertise, this work enhances global comprehension of a key topic in image analysis validation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge