Michael Mix

Brain Tumor Segmentation (BraTS) Challenge 2024: Meningioma Radiotherapy Planning Automated Segmentation

May 28, 2024

Abstract: The 2024 Brain Tumor Segmentation Meningioma Radiotherapy (BraTS-MEN-RT) challenge aims to advance automated segmentation algorithms using the largest known multi-institutional dataset of radiotherapy planning brain MRIs with expert-annotated target labels for patients with intact or post-operative meningioma who underwent either conventional external beam radiotherapy or stereotactic radiosurgery. Each case includes a defaced 3D post-contrast T1-weighted radiotherapy planning MRI in its native acquisition space, accompanied by a single-label "target volume" representing the gross tumor volume (GTV) and any at-risk post-operative site. Target volume annotations adhere to established radiotherapy planning protocols, ensuring consistency across cases and institutions. For pre-operative meningiomas, the target volume encompasses the entire GTV and associated nodular dural tail, while for post-operative cases, it includes at-risk resection cavity margins as determined by the treating institution. Case annotations were reviewed and approved by expert neuroradiologists and radiation oncologists. Participating teams will develop, containerize, and evaluate automated segmentation models using this comprehensive dataset. Model performance will be assessed using the lesion-wise Dice Similarity Coefficient and the 95% Hausdorff distance. The top-performing teams will be recognized at the Medical Image Computing and Computer Assisted Intervention Conference in October 2024. BraTS-MEN-RT is expected to significantly advance automated radiotherapy planning by enabling precise tumor segmentation and facilitating tailored treatment, ultimately improving patient outcomes.
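The 95% Hausdorff distance named in the abstract can be illustrated with a minimal sketch on small point sets. This is not the challenge's evaluation code: the function names (`directed_distances`, `percentile`, `hd95`), the use of raw voxel coordinates instead of extracted surfaces, and the percentile convention (linear interpolation, taken per direction and then maximized) are all assumptions chosen for illustration, and the lesion-wise matching step is omitted.

```python
import math


def directed_distances(a, b):
    """Distance from each point in `a` to its nearest point in `b`."""
    return [min(math.dist(p, q) for q in b) for p in a]


def percentile(values, q):
    """Percentile with linear interpolation (one of several conventions)."""
    s = sorted(values)
    if len(s) == 1:
        return s[0]
    pos = (len(s) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def hd95(a, b):
    """Symmetric 95th-percentile Hausdorff distance between two point sets.

    Assumption: the percentile is taken separately in each direction and the
    larger value is returned; other tools pool both directions first.
    """
    if not a or not b:
        raise ValueError("both point sets must be non-empty")
    return max(
        percentile(directed_distances(a, b), 95),
        percentile(directed_distances(b, a), 95),
    )


# Hypothetical example: coordinates in millimetres.
prediction = [(0, 0, 0), (1, 0, 0)]
reference = [(0, 0, 0)]
print(hd95(prediction, reference))
```

Using the 95th percentile rather than the maximum makes the metric less sensitive to a few outlying voxels, which is the usual motivation for preferring it over the plain Hausdorff distance.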

Convolutional neural network based deep-learning architecture for intraprostatic tumour contouring on PSMA PET images in patients with primary prostate cancer

Aug 07, 2020

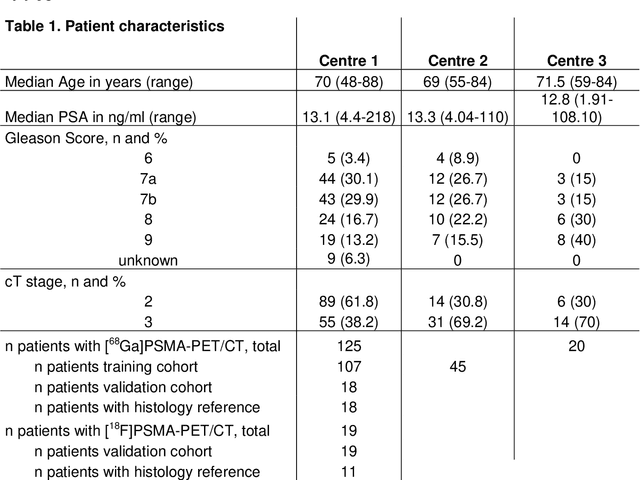

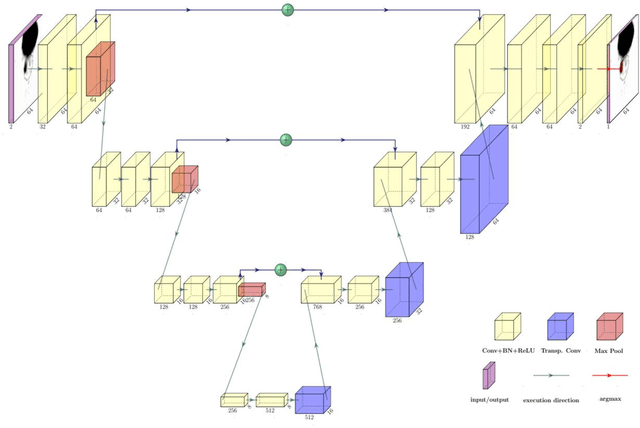

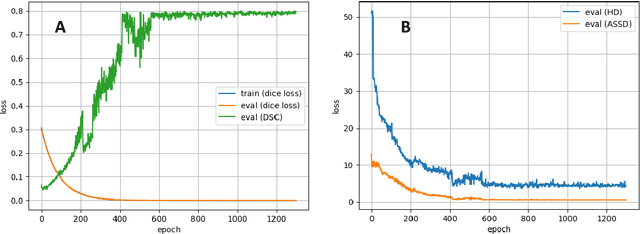

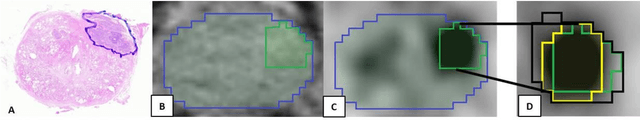

Abstract: Accurate delineation of the intraprostatic gross tumour volume (GTV) is a prerequisite for treatment approaches in patients with primary prostate cancer (PCa). Prostate-specific membrane antigen positron emission tomography (PSMA-PET) may outperform MRI in GTV detection. However, visual GTV delineation is subject to interobserver heterogeneity and is time consuming. The aim of this study was to develop a convolutional neural network (CNN) for automated segmentation of intraprostatic tumour (GTV-CNN) in PSMA-PET. Methods: The CNN (3D U-Net) was trained on [68Ga]PSMA-PET images of 152 patients from two different institutions, and the training labels were generated manually using a validated technique. The CNN was tested on two independent internal test datasets (cohort 1: [68Ga]PSMA-PET, n=18; cohort 2: [18F]PSMA-PET, n=19) and one external test dataset (cohort 3: [68Ga]PSMA-PET, n=20). Agreement between manual contours and GTV-CNN was assessed with the Dice-Sørensen coefficient (DSC). Sensitivity and specificity were calculated for the two internal test datasets using whole-mount histology as the reference. Results: Median DSCs for cohorts 1-3 were 0.84 (range: 0.32-0.95), 0.81 (range: 0.28-0.93) and 0.83 (range: 0.32-0.93), respectively. Sensitivities and specificities for GTV-CNN were comparable with manual expert contours: 0.98 and 0.76 (cohort 1) and 1 and 0.57 (cohort 2), respectively. Computation time was around 6 seconds for a standard dataset. Conclusion: The application of a CNN for automated contouring of intraprostatic GTV in [68Ga]PSMA- and [18F]PSMA-PET images resulted in high concordance with expert contours and in high sensitivities and specificities in comparison with the histology reference. This robust, accurate and fast technique may be implemented for treatment concepts in primary PCa. The trained model and the study's source code are available in an open source repository.
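The agreement metrics reported above (DSC, sensitivity, specificity) can be sketched for binary masks as follows. This is an illustrative example, not the study's code: the function names and the flat-list mask representation are assumptions, and the elements may stand for voxels or for larger units such as prostate segments, depending on how the reference is defined.

```python
def dice(pred, ref):
    """Dice-Sørensen coefficient for two equal-length binary masks."""
    if len(pred) != len(ref):
        raise ValueError("masks must have the same length")
    overlap = sum(1 for p, r in zip(pred, ref) if p and r)
    total = sum(1 for p in pred if p) + sum(1 for r in ref if r)
    # Assumption: two empty masks are treated as perfect agreement.
    return 1.0 if total == 0 else 2.0 * overlap / total


def sensitivity_specificity(pred, ref):
    """Return (sensitivity, specificity) of `pred` against reference `ref`."""
    if len(pred) != len(ref):
        raise ValueError("masks must have the same length")
    tp = sum(1 for p, r in zip(pred, ref) if p and r)
    fn = sum(1 for p, r in zip(pred, ref) if not p and r)
    tn = sum(1 for p, r in zip(pred, ref) if not p and not r)
    fp = sum(1 for p, r in zip(pred, ref) if p and not r)
    # NaN marks an undefined ratio (no positives or no negatives in `ref`).
    sens = tp / (tp + fn) if (tp + fn) else float("nan")
    spec = tn / (tn + fp) if (tn + fp) else float("nan")
    return sens, spec


# Hypothetical four-element masks (1 = tumour, 0 = background).
predicted = [1, 1, 0, 0]
reference = [1, 0, 0, 0]
print(dice(predicted, reference))
print(sensitivity_specificity(predicted, reference))
```

A DSC of 1 means the two masks coincide exactly and 0 means they do not overlap at all, which gives a scale for reading the median values of 0.81 to 0.84 reported in the abstract.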
