Zhengyu Zhang

PointNeRT: A Physics Aware Neural Ray Tracing Surrogate for Propagation Channel Modeling

May 12, 2026

Abstract: Ray tracing (RT) has emerged as a key tool for propagation channel modeling and network planning. Conventional RT is based on electromagnetic (EM) wave theory, and its application relies on detailed mesh-based environment representations and material properties. In realistic environments, incomplete environmental geometry and uncertain material properties hinder its scalability to complex scenarios. In this paper, we propose a novel physics-aware neural RT surrogate named PointNeRT to address these limitations. The proposed model takes point clouds directly as environmental input and efficiently reconstructs multipath without explicitly constructing mesh models or manually defining EM interaction rules. PointNeRT adopts a hop-by-hop modeling strategy guided by physical interaction constraints, which supports sequential prediction of multipath propagation and power attenuation. Numerical results and experiments demonstrate that the proposed method implicitly captures surface normal characteristics and EM material effects. It further achieves robust generalization in mobility scenarios and provides a physics-guided neural model of multipath propagation.

SOLARIS: Speculative Offloading of Latent-bAsed Representation for Inference Scaling

Apr 13, 2026

Abstract: Recent advances in recommendation scaling laws have led to foundation models of unprecedented complexity. While these models offer superior performance, their computational demands make real-time serving impractical, often forcing practitioners to rely on knowledge distillation, compromising serving quality for efficiency. To address this challenge, we present SOLARIS (Speculative Offloading of Latent-bAsed Representation for Inference Scaling), a novel framework inspired by speculative decoding. SOLARIS proactively precomputes user-item interaction embeddings by predicting which user-item pairs are likely to appear in future requests and asynchronously generating their foundation model representations ahead of time. This approach decouples the costly foundation model inference from the latency-critical serving path, enabling real-time knowledge transfer from models previously considered too expensive for online use. Deployed across Meta's advertising system serving billions of daily requests, SOLARIS achieves a 0.67% gain in revenue-driving top-line metrics, demonstrating its effectiveness at scale.
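
The decoupling described in this abstract can be illustrated with a toy cache. This is a minimal sketch under stated assumptions, not the SOLARIS implementation: the class `SpeculativeEmbeddingCache`, the function `toy_foundation_model`, and the `(user_id, item_id)` key format are all hypothetical names introduced here for illustration.

```python
from typing import Callable, Dict, Iterable, List, Optional, Tuple

Pair = Tuple[str, str]  # hypothetical key format: (user_id, item_id)


class SpeculativeEmbeddingCache:
    """Toy cache that keeps an expensive model off the serving path."""

    def __init__(self, expensive_model: Callable[[Pair], List[float]]) -> None:
        self._expensive_model = expensive_model
        self._cache: Dict[Pair, List[float]] = {}

    def precompute(self, predicted_pairs: Iterable[Pair]) -> None:
        # Ahead-of-time step: run the costly model only for pairs that are
        # predicted to show up in future requests.
        for pair in predicted_pairs:
            self._cache[pair] = self._expensive_model(pair)

    def lookup(self, pair: Pair) -> Optional[List[float]]:
        # Serving step: a dictionary lookup only; the expensive model is
        # never called here. A miss returns None so the caller can fall back.
        return self._cache.get(pair)


def toy_foundation_model(pair: Pair) -> List[float]:
    # Stand-in for a large model (assumption): returns a 2-d "embedding".
    user_id, item_id = pair
    return [float(len(user_id)), float(len(item_id))]


cache = SpeculativeEmbeddingCache(toy_foundation_model)
cache.precompute([("u1", "item42")])
hit = cache.lookup(("u1", "item42"))   # precomputed pair
miss = cache.lookup(("u2", "item7"))   # pair that was not predicted
```

In this sketch a mispredicted pair simply yields a cache miss; how a production system handles misses is not stated in the abstract.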

Channel Measurements and Modeling based on Composite Environmental Factor for Urban Street-Canyon Intersections

Apr 02, 2026

Abstract: In urban environments, vehicle-to-everything (V2X) communications require accurate wireless channel characterization. This requirement is particularly critical at street-canyon intersections, where building blockage and rich multipath propagation can severely degrade link reliability. Because of the distinctive environmental layout, channel characteristics in urban canyons are influenced by the distribution of buildings. However, this feature has not been well captured in existing channel models. In this paper, we propose an environment-related statistical channel model based on 5.8 GHz channel measurements. We construct a composite environmental factor to characterize environmental differences between intersections. The factor is then incorporated into the 3GPP path-loss model and further linked to small-scale channel parameters. Finally, the accuracy of the proposed model is validated using second-order channel statistics. The results show that the proposed model can effectively characterize the propagation properties of urban street-canyon intersection channels with different building conditions. The proposed model provides a physically interpretable and statistically effective framework for channel simulation and performance evaluation in urban vehicular scenarios.

Deep Learning Based Site-Specific Channel Inference Using Satellite Images

Mar 30, 2026

Abstract: Site-specific channel inference plays a critical role in the design and evaluation of next-generation wireless communication systems by considering the surrounding propagation environment. However, traditional methods do not scale, while existing AI-based approaches using satellite images are confined to predicting large-scale fading parameters and cannot reconstruct the complete channel impulse response (CIR). To address this limitation, we propose a deep learning-based site-specific channel inference framework that uses satellite images to predict structured Tapped Delay Line (TDL) parameters. We first establish a joint channel-satellite dataset based on measurements. Then, a novel deep learning network is developed to reconstruct the channel parameters. Specifically, a cross-attention-fused dual-branch pipeline extracts macroscopic and microscopic environmental features, while a recurrent tracking module captures the long-term dynamic evolution of multipath components. Experimental results demonstrate that the proposed method achieves high-quality reconstruction of the CIR in unseen scenarios, with a Power Delay Profile (PDP) Average Cosine Similarity exceeding 0.96. This work provides a pathway toward site-specific channel inference for future dynamic wireless networks.
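
For readers unfamiliar with the reported metric, the sketch below shows one plausible way to compute an average cosine similarity between measured and predicted PDPs. The abstract does not give the exact definition, so the per-snapshot averaging, the use of linear-scale power values, and the function names are assumptions made here for illustration.

```python
import math
from typing import Sequence


def cosine_similarity(p: Sequence[float], q: Sequence[float]) -> float:
    """Cosine similarity between two PDPs sampled on the same delay grid."""
    if len(p) != len(q):
        raise ValueError("PDPs must have the same number of delay bins")
    dot = sum(a * b for a, b in zip(p, q))
    norm_p = math.sqrt(sum(a * a for a in p))
    norm_q = math.sqrt(sum(b * b for b in q))
    if norm_p == 0.0 or norm_q == 0.0:
        raise ValueError("PDP with zero total power has no defined direction")
    return dot / (norm_p * norm_q)


def average_cosine_similarity(
    measured: Sequence[Sequence[float]],
    predicted: Sequence[Sequence[float]],
) -> float:
    """Mean of per-snapshot cosine similarities (assumed definition)."""
    if len(measured) != len(predicted) or len(measured) == 0:
        raise ValueError("need the same non-zero number of snapshots")
    sims = [cosine_similarity(m, p) for m, p in zip(measured, predicted)]
    return sum(sims) / len(sims)


# Two toy snapshots: the first prediction is a scaled copy of the measurement
# (similarity 1), the second has no overlap in delay (similarity 0).
measured_pdps = [[1.0, 0.5, 0.1], [0.0, 1.0, 0.0]]
predicted_pdps = [[2.0, 1.0, 0.2], [1.0, 0.0, 0.0]]
score = average_cosine_similarity(measured_pdps, predicted_pdps)
```

Because cosine similarity ignores overall scale, a metric of this form rewards matching the shape of the delay profile rather than its absolute power.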

Emergent Dexterity via Diverse Resets and Large-Scale Reinforcement Learning

Mar 16, 2026

Abstract: Reinforcement learning in massively parallel physics simulations has driven major progress in sim-to-real robot learning. However, current approaches remain brittle and task-specific, relying on extensive per-task engineering to design rewards, curricula, and demonstrations. Even with this engineering, they often fail on long-horizon, contact-rich manipulation tasks and do not meaningfully scale with compute, as performance quickly saturates when training revisits the same narrow regions of state space. We introduce OmniReset, a simple and scalable framework that enables on-policy reinforcement learning to robustly solve a broad class of dexterous manipulation tasks using a single reward function, fixed algorithm hyperparameters, no curricula, and no human demonstrations. Our key insight is that long-horizon exploration can be dramatically simplified by using simulator resets to systematically expose the RL algorithm to the diverse set of robot-object interactions that underlie dexterous manipulation. OmniReset programmatically generates such resets with minimal human input, converting additional compute directly into broader behavioral coverage and continued performance gains. We show that OmniReset scales gracefully to long-horizon dexterous manipulation tasks beyond the capabilities of existing approaches and learns robust policies over significantly wider ranges of initial conditions than baselines. Finally, we distill OmniReset into visuomotor policies that display robust retrying behavior and substantially higher success rates than baselines when transferred zero-shot to the real world. Project webpage: https://omnireset.github.io

A Deployment-Friendly Foundational Framework for Efficient Computational Pathology

Feb 15, 2026

Abstract: Pathology foundation models (PFMs) have enabled robust generalization in computational pathology through large-scale datasets and expansive architectures, but their substantial computational cost, particularly for gigapixel whole slide images, limits clinical accessibility and scalability. Here, we present LitePath, a deployment-friendly foundational framework designed to mitigate model over-parameterization and patch-level redundancy. LitePath integrates LiteFM, a compact model distilled from three large PFMs (Virchow2, H-Optimus-1 and UNI2) using 190 million patches, and the Adaptive Patch Selector (APS), a lightweight component for task-specific patch selection. The framework reduces model parameters by 28x and lowers FLOPs by 403.5x relative to Virchow2, enabling deployment on low-power edge hardware such as the NVIDIA Jetson Orin Nano Super. On this device, LitePath processes 208 slides per hour, 104.5x faster than Virchow2, and consumes 0.36 kWh per 3,000 slides, 171x lower than Virchow2 on an RTX3090 GPU. We validated accuracy using 37 cohorts (26 internal, 9 external, and 2 prospective) across four organs and 26 tasks, comprising 15,672 slides from 9,808 patients disjoint from the pretraining data. LitePath ranks second among 19 evaluated models and outperforms larger models including H-Optimus-1, mSTAR, UNI2 and GPFM, while retaining 99.71% of the AUC of Virchow2 on average. To quantify the balance between accuracy and efficiency, we propose the Deployability Score (D-Score), defined as the weighted geometric mean of normalized AUC and normalized FLOPs, where LitePath achieves the highest value, surpassing Virchow2 by 10.64%. These results demonstrate that LitePath enables rapid, cost-effective and energy-efficient pathology image analysis on accessible hardware while maintaining accuracy comparable to state-of-the-art PFMs and reducing the carbon footprint of AI deployment.
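
The D-Score is described only as a weighted geometric mean of two normalized quantities, so the sketch below shows that general form rather than the paper's exact formula. The weight `auc_weight`, its default of 0.5, and the convention that both inputs are normalized so that higher is better are assumptions introduced here.

```python
def weighted_geometric_mean(
    norm_auc: float, norm_flops: float, auc_weight: float = 0.5
) -> float:
    """Weighted geometric mean: norm_auc**w * norm_flops**(1 - w).

    Both inputs are assumed to be non-negative scores where higher is
    better; the paper's normalization and weights are not given in the
    abstract, so these are placeholders.
    """
    if not 0.0 <= auc_weight <= 1.0:
        raise ValueError("auc_weight must lie in [0, 1]")
    if norm_auc < 0.0 or norm_flops < 0.0:
        raise ValueError("normalized scores must be non-negative")
    return (norm_auc ** auc_weight) * (norm_flops ** (1.0 - auc_weight))


# Equal weights reduce to the ordinary geometric mean sqrt(a * b).
balanced = weighted_geometric_mean(0.81, 1.0)
# With auc_weight=1.0 only the accuracy term matters.
accuracy_only = weighted_geometric_mean(0.81, 0.25, auc_weight=1.0)
```

A geometric mean penalizes a model that is strong on one axis but weak on the other more heavily than an arithmetic mean would, which suits a score meant to balance accuracy against compute.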

Artificial Intelligence Empowered Channel Prediction: A New Paradigm for Propagation Channel Modeling

Jan 14, 2026

Abstract: This paper proposes a novel paradigm centered on Artificial Intelligence (AI)-empowered propagation channel prediction to address the limitations of traditional channel modeling. We present a comprehensive framework that deeply integrates heterogeneous environmental data and physical propagation knowledge into AI models for site-specific channel prediction, which is referred to as channel inference. By leveraging AI to infer site-specific wireless channel states, the proposed paradigm enables accurate prediction of channel characteristics at both link and area levels, capturing the spatio-temporal evolution of radio propagation. Several strategies for realizing the paradigm are introduced and discussed, including AI-native and AI-hybrid inference approaches. This paper also investigates how to enhance model generalization through transfer learning and improve interpretability via explainable AI techniques. Our approach demonstrates significant practical efficacy, achieving an average path loss prediction root mean square error (RMSE) of approximately 4 dB and reducing training time by 60%-75%. This new modeling paradigm provides a foundational pathway toward high-fidelity, generalizable, and physically consistent propagation channel prediction for future communication networks.

MambaMIL+: Modeling Long-Term Contextual Patterns for Gigapixel Whole Slide Image

Dec 19, 2025

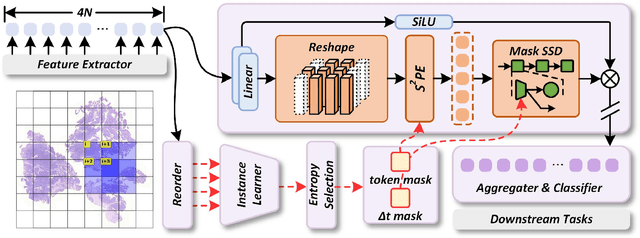

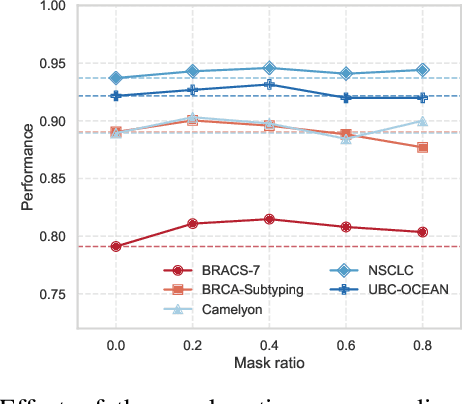

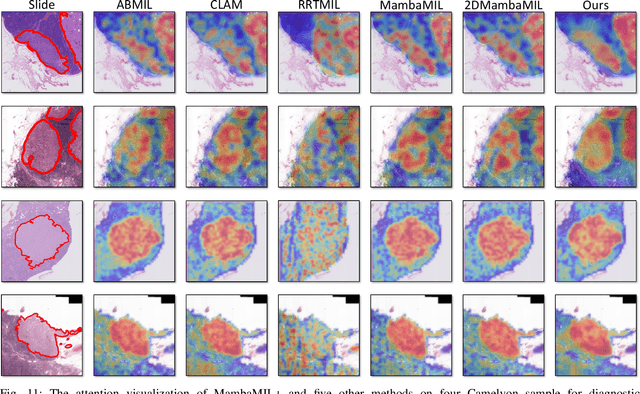

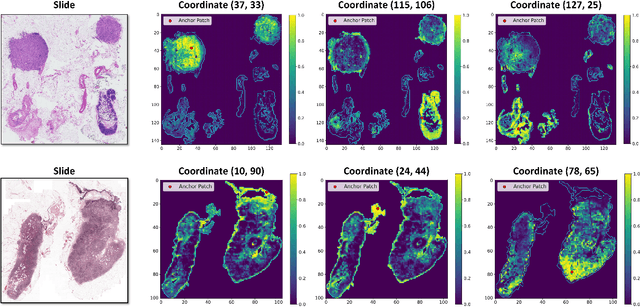

Abstract: Whole-slide images (WSIs) are an important data modality in computational pathology, yet their gigapixel resolution and lack of fine-grained annotations challenge conventional deep learning models. Multiple instance learning (MIL) offers a solution by treating each WSI as a bag of patch-level instances, but effectively modeling ultra-long sequences with rich spatial context remains difficult. Recently, Mamba has emerged as a promising alternative for long sequence learning, scaling linearly to thousands of tokens. However, despite its efficiency, it still suffers from limited spatial context modeling and memory decay, which constrains its effectiveness for WSI analysis. To address these limitations, we propose MambaMIL+, a new MIL framework that explicitly integrates spatial context while maintaining long-range dependency modeling without memory forgetting. Specifically, MambaMIL+ introduces 1) overlapping scanning, which restructures the patch sequence to embed spatial continuity and instance correlations; 2) a selective stripe position encoder (S2PE) that encodes positional information while mitigating the biases of fixed scanning orders; and 3) a contextual token selection (CTS) mechanism, which leverages supervisory knowledge to dynamically enlarge the contextual memory for stable long-range modeling. Extensive experiments on 20 benchmarks across diagnostic classification, molecular prediction, and survival analysis demonstrate that MambaMIL+ consistently achieves state-of-the-art performance under three feature extractors (ResNet-50, PLIP, and CONCH), highlighting its effectiveness and robustness for large-scale computational pathology.

Meta Lattice: Model Space Redesign for Cost-Effective Industry-Scale Ads Recommendations

Dec 15, 2025

Abstract: The rapidly evolving landscape of products, surfaces, policies, and regulations poses significant challenges for deploying state-of-the-art recommendation models at industry scale, primarily due to data fragmentation across domains and escalating infrastructure costs that hinder sustained quality improvements. To address these challenges, we propose Lattice, a recommendation framework centered on model space redesign that extends Multi-Domain, Multi-Objective (MDMO) learning beyond models and learning objectives. Lattice combines cross-domain knowledge sharing, data consolidation, model unification, distillation, and system optimizations to achieve significant improvements in both quality and cost-efficiency. Our deployment of Lattice at Meta has resulted in a 10% gain in revenue-driving top-line metrics, an 11.5% improvement in user satisfaction, and a 6% boost in conversion rate, with a 20% capacity saving.

A Geometry Map-Based Site-Specific Propagation Channel Model for Urban Scenarios

Nov 19, 2025

Abstract: With the rapid deployment of 5G and 6G networks, accurate modeling of urban radio propagation has become critical for system design and network planning. However, conventional statistical or empirical models fail to fully capture the influence of detailed geometric features on site-specific channel variations in dense urban environments. In this paper, we propose a geometry map-based propagation channel model that directly extracts key parameters from a 3D geometry map and incorporates the Uniform Theory of Diffraction (UTD) to recursively compute multiple diffraction fields, thereby enabling accurate prediction of site-specific large-scale path loss and time-varying Doppler characteristics in urban scenarios. An identification algorithm is developed to efficiently detect buildings that significantly affect signal propagation. The proposed model is validated using urban measurement data, showing excellent agreement in path loss under both line-of-sight (LOS) and non-line-of-sight (NLOS) conditions. In particular, for NLOS scenarios with complex diffractions, it outperforms the 3GPP and simplified models, reducing the RMSE by 7.1 dB and 3.18 dB, respectively. Doppler analysis further demonstrates its accuracy in capturing time-varying propagation characteristics, confirming the scalability and generalization of the model in urban environments.
