Xiaoying Zhang

Integrated Channel Sounding and Communication: Requirements, Architecture, Challenges, and Key Technologies

Mar 16, 2026

Abstract: Channel models are essential for the design, evaluation, and optimization of wireless communication systems. The emerging space-air-ground-sea integrated network (SAGSIN), characterized by diverse service applications and extended-spectrum operations, places even greater demands on highly accurate channel models. However, conventional channel sounding is limited by generalized measurement campaigns, inadequate cross-band consistency, and insufficient real-time adaptability, making it unable to meet the needs of SAGSIN for scenario-specific and high-precision channel modeling. To address this challenge, we propose a novel technological framework, termed integrated channel sounding and communication (ICSC). By deeply integrating sounding and communication, ICSC enables efficient, real-time acquisition of dynamic channel characteristics during communication, supporting fine-grained site- and scenario-specific measurements. Furthermore, by leveraging artificial intelligence techniques, ICSC can identify channel conditions and adapt waveform parameters in real time as the scenario changes, which in turn enhances communication performance. This article first introduces the fundamental principles of the ICSC framework, elaborates on its core concepts and key advantages, and demonstrates its feasibility through the development of an integrated verification system (IVS). Subsequently, the potential applications and opportunities of ICSC are analyzed in depth, followed by a discussion of its future development directions and remaining challenges.
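The abstract does not say how ICSC extracts channel characteristics from ongoing traffic. As a rough, non-authoritative illustration of the general idea of sounding "during communication", the sketch below assumes an OFDM-style frame that carries known pilot symbols, applies least-squares (LS) estimation on each pilot subcarrier, and linearly interpolates across the data subcarriers. The frame layout, pilot positions, and function names are hypothetical.

```python
# Hypothetical sketch (not the ICSC design): least-squares channel estimation
# from known pilot symbols embedded in an OFDM-style communication frame.


def ls_pilot_estimates(received, pilots):
    """LS estimate H_hat[k] = Y[k] / X[k] on each pilot subcarrier k.

    received: list of complex received symbols, one per subcarrier.
    pilots:   dict {subcarrier index: known transmitted pilot symbol}.
    """
    return {k: received[k] / x for k, x in pilots.items()}


def interpolate_channel(estimates, num_subcarriers):
    """Linearly interpolate pilot estimates onto every subcarrier.

    Subcarriers outside the pilot range reuse the nearest pilot estimate.
    """
    keys = sorted(estimates)
    h_hat = []
    for n in range(num_subcarriers):
        if n <= keys[0]:
            h_hat.append(estimates[keys[0]])
        elif n >= keys[-1]:
            h_hat.append(estimates[keys[-1]])
        else:
            # Find the pair of neighbouring pilots that brackets subcarrier n.
            for lo, hi in zip(keys, keys[1:]):
                if lo <= n <= hi:
                    t = (n - lo) / (hi - lo)
                    h_hat.append((1 - t) * estimates[lo] + t * estimates[hi])
                    break
    return h_hat


if __name__ == "__main__":
    N = 16
    # Toy noiseless channel that varies linearly across subcarriers (illustrative only).
    h_true = [complex(1 - 0.05 * n, 0.02 * n) for n in range(N)]
    pilots = {k: 1 + 1j for k in (0, 4, 8, 12, 15)}
    tx = [pilots.get(n, 1 - 1j) for n in range(N)]  # arbitrary data symbols elsewhere
    rx = [h * x for h, x in zip(h_true, tx)]
    h_hat = interpolate_channel(ls_pilot_estimates(rx, pilots), N)
    print(max(abs(a - b) for a, b in zip(h_hat, h_true)))
```

In a real system the received symbols are noisy and the channel is not linear in frequency, so denser pilots or better interpolators (for example, MMSE-based) would typically be needed.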

RetroAgent: From Solving to Evolving via Retrospective Dual Intrinsic Feedback

Mar 12, 2026

Abstract: Standard reinforcement learning (RL) for large language model (LLM)-based agents typically optimizes extrinsic task-success rewards, prioritizing one-off task solving over continual adaptation. As a result, agents may converge to suboptimal policies due to limited exploration, and accumulated experience remains implicitly stored in model parameters, hindering efficient experiential learning. Inspired by humans' capacity for retrospective self-improvement, we introduce RetroAgent, an online RL framework that enables agents to master complex interactive environments not only by solving, but also by evolving under the joint guidance of extrinsic task-success rewards and retrospective dual intrinsic feedback. Concretely, RetroAgent features a hindsight self-reflection mechanism that produces: (1) intrinsic numerical feedback, which tracks incremental subtask completion relative to prior attempts to reward promising exploration; and (2) intrinsic language feedback, which distills reusable lessons into a memory buffer retrieved via our proposed Similarity & Utility-Aware Upper Confidence Bound (SimUtil-UCB) strategy, jointly balancing relevance, utility, and exploration. Extensive experiments across four challenging agentic tasks show that RetroAgent achieves state-of-the-art (SOTA) performance, substantially outperforming RL fine-tuning, memory-augmented RL, exploration-guided RL, and meta-RL methods (e.g., exceeding Group Relative Policy Optimization (GRPO)-trained agents by +18.3% on ALFWorld, +15.4% on WebShop, +27.1% on Sokoban, and +8.9% on MineSweeper), while maintaining strong test-time adaptation and out-of-distribution generalization.
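The abstract names SimUtil-UCB but does not give its formula. The sketch below is a generic UCB-style memory retriever built on our own assumptions, not RetroAgent's actual rule: each stored lesson keeps an embedding, a running mean utility, and a retrieval count, and its score is a weighted sum of cosine similarity to the query and mean utility, plus the standard UCB1 exploration bonus sqrt(ln N / n_i). The weights `alpha`, `beta`, `c` and all class and function names are hypothetical.

```python
# Hypothetical UCB-style retrieval over a lesson memory (not the paper's SimUtil-UCB).
import math
from dataclasses import dataclass
from typing import List


@dataclass
class MemoryEntry:
    lesson: str
    embedding: List[float]
    utility_sum: float = 0.0  # sum of rewards observed after retrieving this lesson
    count: int = 0            # number of times this lesson has been retrieved

    @property
    def mean_utility(self) -> float:
        return self.utility_sum / self.count if self.count else 0.0


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def ucb_score(entry, query, total_retrievals, alpha=1.0, beta=1.0, c=1.0):
    if entry.count == 0:
        return math.inf  # UCB1 convention: try every lesson at least once
    bonus = c * math.sqrt(math.log(total_retrievals) / entry.count)
    return alpha * cosine(entry.embedding, query) + beta * entry.mean_utility + bonus


def retrieve(memory, query, k=1, **weights):
    """Return the k entries with the highest assumed relevance+utility+exploration score."""
    total = max(1, sum(e.count for e in memory))
    ranked = sorted(memory, key=lambda e: ucb_score(e, query, total, **weights), reverse=True)
    return ranked[:k]


def update(entry, reward):
    """Record the outcome observed after using a retrieved lesson."""
    entry.utility_sum += reward
    entry.count += 1
```

The exploration term shrinks as a lesson is retrieved more often, so rarely tried lessons still get surfaced occasionally even when a well-established lesson has higher similarity and utility.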

Visual Generation Unlocks Human-Like Reasoning through Multimodal World Models

Jan 27, 2026

Abstract: Humans construct internal world models and reason by manipulating the concepts within these models. Recent advances in AI, particularly chain-of-thought (CoT) reasoning, approximate such human cognitive abilities, with world models believed to be embedded within large language models. Current systems achieve expert-level performance in formal and abstract domains such as mathematics and programming by relying predominantly on verbal reasoning. However, they still lag far behind humans in domains such as physical and spatial intelligence, which require richer representations and prior knowledge. The emergence of unified multimodal models (UMMs) capable of both verbal and visual generation has therefore sparked interest in more human-like reasoning grounded in complementary multimodal pathways, though their benefits remain unclear. From a world-model perspective, this paper presents the first principled study of when and how visual generation benefits reasoning. Our key position is the visual superiority hypothesis: for certain tasks, particularly those grounded in the physical world, visual generation serves more naturally as a world model, whereas purely verbal world models encounter bottlenecks arising from representational limitations or insufficient prior knowledge. Theoretically, we formalize internal world modeling as a core component of CoT reasoning and analyze the distinctions among different forms of world models. Empirically, we identify tasks that necessitate interleaved visual-verbal CoT reasoning and construct a new evaluation suite, VisWorld-Eval. Controlled experiments on a state-of-the-art UMM show that interleaved CoT significantly outperforms purely verbal CoT on tasks that favor visual world modeling, but offers no clear advantage otherwise. Together, this work clarifies the potential of multimodal world modeling for more powerful, human-like multimodal AI.

A Geometry Map-Based Site-Specific Propagation Channel Model for Urban Scenarios

Nov 19, 2025

Abstract: With the rapid deployment of 5G and 6G networks, accurate modeling of urban radio propagation has become critical for system design and network planning. However, conventional statistical or empirical models fail to fully capture how detailed geometric features affect site-specific channel variations in dense urban environments. In this paper, we propose a geometry map-based propagation channel model that directly extracts key parameters from a 3D geometry map and incorporates the Uniform Theory of Diffraction (UTD) to recursively compute multiple diffraction fields, thereby enabling accurate prediction of site-specific large-scale path loss and time-varying Doppler characteristics in urban scenarios. An efficient identification algorithm is developed to detect the buildings that significantly affect signal propagation. The proposed model is validated against urban measurement data, showing excellent path-loss agreement in both line-of-sight (LOS) and non-line-of-sight (NLOS) conditions. In particular, for NLOS scenarios with complex diffraction, it outperforms the 3GPP and simplified models, reducing the RMSE by 7.1 dB and 3.18 dB, respectively. Doppler analysis further demonstrates its accuracy in capturing time-varying propagation characteristics, confirming the scalability and generalization of the model in urban environments.
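Because the abstract reports its gains as RMSE reductions in dB, a small helper makes the metric concrete. The sketch assumes paired predicted and measured path-loss samples taken at the same locations; the function names are hypothetical, and no measurement data from the paper is reproduced.

```python
# Hypothetical helpers for comparing path-loss models against measurements (values in dB).
import math


def rmse_db(predicted_db, measured_db):
    """Root-mean-square error between predicted and measured path loss, in dB."""
    if not predicted_db or len(predicted_db) != len(measured_db):
        raise ValueError("need two non-empty sequences of equal length")
    squared = sum((p - m) ** 2 for p, m in zip(predicted_db, measured_db))
    return math.sqrt(squared / len(predicted_db))


def rmse_reduction_db(baseline_db, proposed_db, measured_db):
    """How much lower (in dB) the proposed model's RMSE is than the baseline's.

    A positive result means the proposed model tracks the measurements more closely.
    """
    return rmse_db(baseline_db, measured_db) - rmse_db(proposed_db, measured_db)
```

A statement such as "reducing the RMSE by 7.1 dB" corresponds to `rmse_reduction_db(baseline, proposed, measured)` evaluating to 7.1 on the NLOS measurement set.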

Building Task Bots with Self-learning for Enhanced Adaptability, Extensibility, and Factuality

Aug 27, 2025

Abstract: Developing adaptable, extensible, and accurate task bots with minimal or zero human intervention is a significant challenge in dialog research. This thesis examines the obstacles and potential solutions for creating such bots, focusing on innovative techniques that enable bots to learn and adapt autonomously in constantly changing environments.

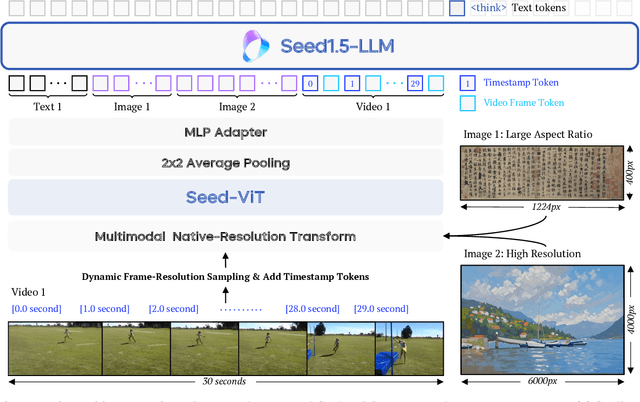

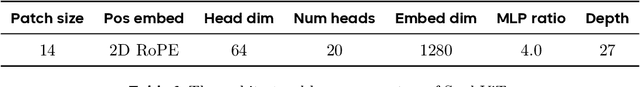

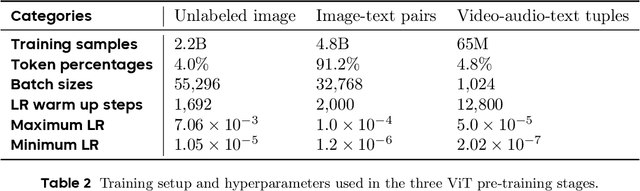

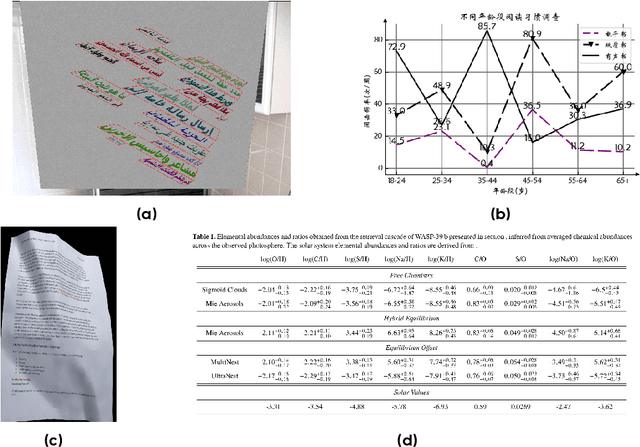

Seed1.5-VL Technical Report

May 11, 2025

Abstract: We present Seed1.5-VL, a vision-language foundation model designed to advance general-purpose multimodal understanding and reasoning. Seed1.5-VL consists of a 532M-parameter vision encoder and a Mixture-of-Experts (MoE) LLM with 20B active parameters. Despite its relatively compact architecture, it delivers strong performance across a wide spectrum of public VLM benchmarks and internal evaluation suites, achieving state-of-the-art performance on 38 of 60 public benchmarks. Moreover, in agent-centric tasks such as GUI control and gameplay, Seed1.5-VL outperforms leading multimodal systems, including OpenAI CUA and Claude 3.7. Beyond visual and video understanding, it also demonstrates strong reasoning abilities, making it particularly effective for multimodal reasoning challenges such as visual puzzles. We believe these capabilities will enable broader applications across diverse tasks. In this report, we provide a comprehensive review of our experience building Seed1.5-VL across model design, data construction, and training at various stages, in the hope of inspiring further research. Seed1.5-VL is now accessible at https://www.volcengine.com/ (Volcano Engine Model ID: doubao-1-5-thinking-vision-pro-250428).

Towards Self-Improving Systematic Cognition for Next-Generation Foundation MLLMs

Mar 16, 2025

Abstract: Despite their impressive capabilities, Multimodal Large Language Models (MLLMs) face challenges with fine-grained perception and complex reasoning. Prevalent pre-training approaches focus on enhancing perception by training on high-quality image captions, because collecting chain-of-thought (CoT) reasoning data to improve reasoning is extremely costly. While leveraging advanced MLLMs for caption generation enhances scalability, the outputs often lack comprehensiveness and accuracy. In this paper, we introduce Self-Improving Cognition (SIcog), a self-learning framework designed to construct next-generation foundation MLLMs by enhancing their systematic cognitive capabilities through multimodal pre-training with self-generated data. Specifically, we propose chain-of-description, an approach that improves an MLLM's systematic perception by enabling step-by-step visual understanding, ensuring greater comprehensiveness and accuracy. Additionally, we adopt a structured CoT reasoning technique to enable MLLMs to integrate in-depth multimodal reasoning. To construct a next-generation foundation MLLM with self-improved cognition, SIcog first equips an MLLM with systematic perception and reasoning abilities using minimal external annotations. The enhanced models then generate detailed captions and CoT reasoning data, which are further curated through self-consistency. This curated data is ultimately used to refine the MLLM during multimodal pre-training, facilitating next-generation foundation MLLM construction. Extensive experiments on both low- and high-resolution MLLMs across diverse benchmarks demonstrate that, with merely 213K self-generated pre-training samples, SIcog produces next-generation foundation MLLMs with significantly improved cognition, achieving benchmark-leading performance compared to prevalent pre-training approaches.
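The abstract says the self-generated captions and CoT data are "curated through self-consistency" without giving details. One common form of self-consistency filtering is majority voting: sample several reasoning traces for the same input, find the most frequent final answer, and keep only the traces that agree with it when the majority is strong enough. The sketch assumes that form; the threshold `min_agreement` and the function name are hypothetical, not SIcog's actual procedure.

```python
# Hypothetical majority-vote self-consistency filter (not necessarily SIcog's rule).
from collections import Counter


def self_consistency_filter(samples, min_agreement=0.5):
    """Keep sampled (reasoning, final_answer) pairs that agree with the majority answer.

    samples:       list of (reasoning_text, final_answer) for ONE input.
    min_agreement: minimum fraction of samples that must share the majority answer;
                   below it, the whole group is discarded as inconsistent.
    """
    if not samples:
        return []
    counts = Counter(answer for _, answer in samples)
    majority_answer, votes = counts.most_common(1)[0]
    if votes / len(samples) < min_agreement:
        return []  # no sufficiently consistent answer for this input
    return [(r, a) for r, a in samples if a == majority_answer]
```

Raising `min_agreement` trades data quantity for reliability: fewer inputs survive, but the surviving traces come from inputs on which the model answers consistently.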

Conversational Dueling Bandits in Generalized Linear Models

Jul 26, 2024

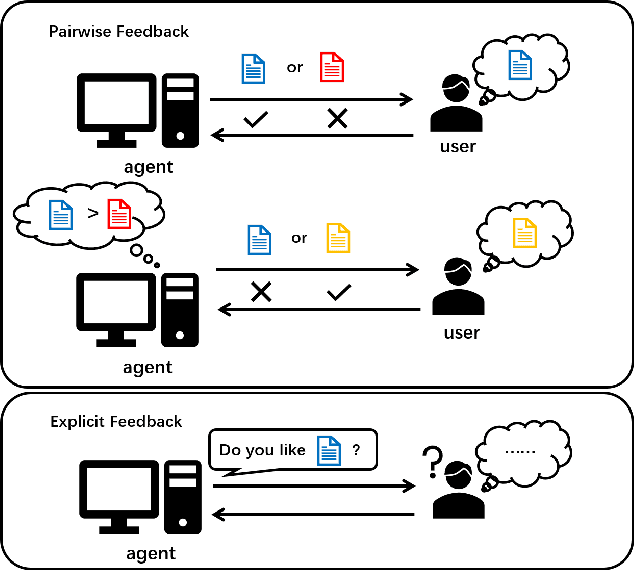

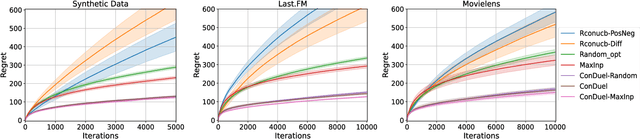

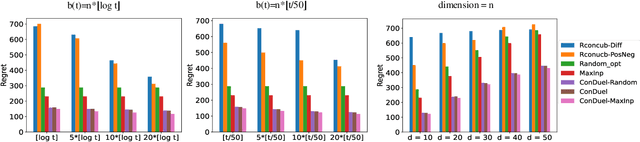

Abstract: Conversational recommendation systems elicit user preferences by interacting with users to obtain their feedback on recommended commodities. Such systems use a multi-armed bandit framework to learn user preferences online and have achieved great success in recent years. However, existing conversational bandit methods have several limitations. First, they only allow users to provide explicit binary feedback on the recommended items or categories, which can be ambiguous to interpret. In practice, users usually face more than one choice, and relative feedback, known for its informativeness, has gained increasing popularity in recommendation system design. Moreover, current contextual bandit methods mainly work under linear reward assumptions, ignoring practical non-linear reward structures in generalized linear models. Therefore, in this paper, we introduce relative feedback-based conversations into conversational recommendation systems by integrating dueling bandits in generalized linear models (GLM), and propose a novel conversational dueling bandit algorithm called ConDuel. Theoretical analyses of regret upper bounds and empirical validations on synthetic and real-world data underscore ConDuel's efficacy. We also demonstrate the potential to extend our algorithm to multinomial logit bandits with theoretical and experimental guarantees, further confirming the applicability of the proposed framework.
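The abstract does not give ConDuel's update rule. As background only, dueling bandits in generalized linear models commonly model the probability that item i is preferred over item j as μ(θᵀ(x_i − x_j)) for a link function μ. The sketch assumes the logistic link and fits θ by plain gradient ascent on the log-likelihood of observed duels; it omits ConDuel's conversational key-terms and confidence bounds, and the function names, learning rate, and epoch count are hypothetical.

```python
# Hypothetical logistic-GLM preference model for dueling feedback (not ConDuel itself).
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def diff(a, b):
    return [x - y for x, y in zip(a, b)]


def pref_prob(theta, x_i, x_j):
    """P(item i beats item j) = sigmoid(theta . (x_i - x_j))."""
    return sigmoid(dot(theta, diff(x_i, x_j)))


def fit_theta(duels, dim, lr=0.5, epochs=200):
    """Estimate theta from duels given as (x_winner, x_loser) feature pairs.

    Uses full-batch gradient ascent on the average log-likelihood; the gradient of
    log sigmoid(theta . z) with respect to theta is (1 - sigmoid(theta . z)) * z.
    """
    theta = [0.0] * dim
    for _ in range(epochs):
        grad = [0.0] * dim
        for x_w, x_l in duels:
            z = diff(x_w, x_l)
            g = 1.0 - sigmoid(dot(theta, z))
            grad = [gi + g * zi for gi, zi in zip(grad, z)]
        theta = [t + lr * gi / len(duels) for t, gi in zip(theta, grad)]
    return theta
```

Relative feedback of the form "i over j" only constrains θ through feature differences, which is why the model is written in terms of x_i − x_j rather than absolute item scores.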

User-Creator Feature Dynamics in Recommender Systems with Dual Influence

Jul 19, 2024

Abstract: Recommender systems present relevant content to users and help content creators reach their target audience. The dual nature of these systems influences both users and creators: users' preferences are affected by the items they are recommended, while creators are incentivized to alter their content so that it is recommended more frequently. We define a model, called user-creator feature dynamics, to capture the dual influences of recommender systems. We prove that a recommender system with dual influence is guaranteed to polarize, causing diversity loss in the system. We then investigate, both theoretically and empirically, approaches for mitigating polarization and promoting diversity in recommender systems. Unexpectedly, we find that common diversity-promoting approaches do not work in the presence of dual influence, while relevancy-optimizing methods like top-$k$ recommendation can prevent polarization and improve diversity of the system.
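The abstract contrasts diversity-promoting methods with relevancy-optimizing top-$k$ recommendation. As a minimal illustration of the latter only, the sketch scores each creator by the inner product of user and creator feature vectors and returns the k highest-scoring creators for each user. Inner-product relevance and the function names are our assumptions; the sketch does not reproduce the paper's feature dynamics or its polarization results.

```python
# Hypothetical relevance-based top-k recommendation over user/creator feature vectors.
import heapq


def relevance(user, creator):
    """Assumed relevance score: inner product of user and creator features."""
    return sum(u * c for u, c in zip(user, creator))


def top_k(users, creators, k):
    """For each user, return indices of the k creators with the highest relevance."""
    recs = []
    for user in users:
        scores = ((relevance(user, c), idx) for idx, c in enumerate(creators))
        best = heapq.nlargest(k, scores)  # (score, index) pairs, highest score first
        recs.append([idx for _, idx in best])
    return recs
```

In a dual-influence simulation, this function would be called once per round, after which user and creator features would be updated according to whatever dynamics model is being studied.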

Toward Optimal LLM Alignments Using Two-Player Games

Jun 16, 2024

Abstract: The standard Reinforcement Learning from Human Feedback (RLHF) framework primarily focuses on optimizing the performance of large language models using pre-collected prompts. However, collecting prompts that provide comprehensive coverage is both tedious and challenging, and such collections often miss the scenarios in which LLMs most need to improve. In this paper, we investigate alignment through the lens of two-agent games, involving iterative interactions between an adversarial agent and a defensive agent. At each step, the adversarial agent generates prompts that expose the weaknesses of the defensive agent. In turn, the defensive agent seeks to improve its responses to the newly identified prompts on which it struggled, based on feedback from the reward model. We theoretically demonstrate that this iterative reinforcement learning optimization converges to a Nash equilibrium of the game induced by the agents. Experimental results in safety scenarios demonstrate that learning in such a competitive environment not only trains the agents fully but also yields policies with enhanced generalization for both the adversarial and defensive agents.
