Cong Xu

Explainable Knowledge Tracing via Probabilistic Embeddings and Pattern-based Reasoning

May 10, 2026

Abstract: Knowledge Tracing (KT) models students' knowledge states based on learning interactions to predict performance. While deep learning-based KT models have boosted predictive accuracy, most models rely on deterministic vector embeddings and opaque latent state transitions, limiting interpretability regarding how specific past behaviors influence predictions. To address this limitation, we propose Probabilistic Logical Knowledge Tracing (PLKT), an interpretable KT framework that formulates prediction as a goal-conditioned evidence reasoning process over historical learning behaviors. Instead of deterministic vectors, PLKT represents student knowledge states with robust Beta-distributed probabilistic embeddings. This probabilistic foundation allows us to model the uncertainty of historical behaviors and perform explicit logical operations (e.g., conjunction), constructing transparent reasoning paths that reveal how specific past interactions contribute to the prediction. Extensive experiments show that PLKT outperforms state-of-the-art KT methods while achieving superior interpretability. Our code is available at https://anonymous.4open.science/r/PLKT-D3CE/.
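PLKT's exact conjunction operator is not given in this abstract. As a minimal sketch, assume a BetaE-style operator. Each knowledge dimension is a Beta(α, β) distribution. Conjunction takes a normalized, weighted product of the evidence densities, which for Beta densities reduces to weighted sums of the α and β parameters. `BetaEmbedding` and `conjunction` are hypothetical names, and the weights stand in for whatever weighting PLKT actually uses.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class BetaEmbedding:
    """Hypothetical knowledge state: one Beta(alpha, beta) distribution per dimension."""
    alpha: List[float]
    beta: List[float]

    def mean(self) -> List[float]:
        # Expected mastery per dimension: alpha / (alpha + beta)
        return [a / (a + b) for a, b in zip(self.alpha, self.beta)]

    def variance(self) -> List[float]:
        # Uncertainty per dimension: alpha * beta / ((alpha + beta)^2 * (alpha + beta + 1))
        return [a * b / ((a + b) ** 2 * (a + b + 1)) for a, b in zip(self.alpha, self.beta)]


def conjunction(evidence: Sequence[BetaEmbedding], weights: Sequence[float]) -> BetaEmbedding:
    """BetaE-style conjunction (an assumption, not PLKT's published operator).

    For weights w_i summing to 1, prod_i Beta(x; a_i, b_i) ** w_i is proportional to
    Beta(x; sum_i w_i * a_i, sum_i w_i * b_i), so the result stays a Beta distribution.
    """
    if not evidence or len(evidence) != len(weights):
        raise ValueError("need one weight per evidence embedding")
    if any(x < 0 for x in weights) or sum(weights) <= 0:
        raise ValueError("weights must be non-negative with a positive sum")
    total = sum(weights)
    w = [x / total for x in weights]  # normalize so the product is again a Beta density
    dim = len(evidence[0].alpha)
    alpha = [sum(wi * e.alpha[d] for wi, e in zip(w, evidence)) for d in range(dim)]
    beta = [sum(wi * e.beta[d] for wi, e in zip(w, evidence)) for d in range(dim)]
    return BetaEmbedding(alpha, beta)


# Usage: a confidently mastered skill (8, 2) combined with an uncertain one (2, 2)
strong = BetaEmbedding([8.0], [2.0])
uncertain = BetaEmbedding([2.0], [2.0])
combined = conjunction([strong, uncertain], [0.75, 0.25])
print(combined.alpha, combined.beta)  # [6.5] [2.0]
```

The result is again a Beta distribution, so its mean and variance can be read off directly. This is what would make each combined piece of evidence inspectable under this assumed operator.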

ASPIRE: Make Spectral Graph Collaborative Filtering Great Again via Adaptive Filter Learning

Apr 24, 2026

Abstract: Graph filter design is central to spectral collaborative filtering, yet most existing methods rely on manually tuned hyperparameters rather than fully learnable filters. We show that the difficulty of learning filters stems from a bias in traditional recommendation objectives, which induces a spectral phenomenon termed low-frequency explosion and thereby fundamentally hinders effective graph filter learning. To overcome this limitation, we propose a novel adaptive spectral graph collaborative filtering framework (ASPIRE) based on a bi-level optimization objective. Guided by our theoretical analysis, we disentangle the filter learning objective. In practice, this leads to excellent recommendation performance, spectral adaptivity, and training stability. Extensive experiments show that our learned filters match the performance of carefully engineered task-specific designs. Furthermore, ASPIRE is equally effective in LLM-powered collaborative filtering. Our findings demonstrate that graph filter learning is viable and generalizable, paving the way for more expressive graph neural networks in collaborative filtering.

Markovian Pre-Trained Transformer for Next-Item Recommendation

Jan 13, 2026

Abstract: We introduce the Markovian Pre-trained Transformer (MPT) for next-item recommendation. MPT is a transferable model fully pre-trained on synthetic Markov chains, yet it achieves state-of-the-art performance by fine-tuning a lightweight adaptor. This counterintuitive success stems from the observed 'Markovian' nature of sequential recommendation. Advanced sequential recommenders coincidentally rely on the latest interaction to make predictions, while the historical interactions serve mainly as auxiliary cues for inferring the user's general, non-sequential identity. Consequently, a universal recommendation model must be able to summarize the user sequence effectively, with particular emphasis on the latest interaction. MPT inherently has the potential to be universal and transferable. On the one hand, when trained to predict the next state of Markov chains, it learns to estimate transition probabilities from the context (one adaptive way of summarizing sequences). It also learns to attend to the last state to ensure accurate state transitions. On the other hand, unlike heterogeneous interaction data, controllable Markov chains are available in unlimited quantities to boost model capacity. We conduct extensive experiments on five public datasets from three distinct platforms to validate the superiority of Markovian pre-training over traditional recommendation pre-training and recent language pre-training paradigms.
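The two capabilities the abstract attributes to Markovian pre-training can be illustrated without a Transformer. This sketch makes two assumptions. First, synthetic chains come from random row-stochastic matrices whose rows are Dirichlet-distributed. Second, the learned model is replaced by a count-based estimator that infers transition probabilities from the context and conditions on the last state. All function names and the Dirichlet choice are hypothetical; this is not MPT's training pipeline.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List


def random_transition_matrix(n_states: int, concentration: float, rng: random.Random) -> List[List[float]]:
    """Rows drawn from a symmetric Dirichlet(concentration) via normalized Gamma samples."""
    matrix = []
    for _ in range(n_states):
        row = [rng.gammavariate(concentration, 1.0) for _ in range(n_states)]
        total = sum(row)
        matrix.append([x / total for x in row])
    return matrix


def sample_chain(matrix: List[List[float]], length: int, rng: random.Random) -> List[int]:
    """Sample a state sequence: uniform start, then follow the transition rows."""
    states = range(len(matrix))
    state = rng.randrange(len(matrix))
    chain = [state]
    for _ in range(length - 1):
        state = rng.choices(states, weights=matrix[state])[0]
        chain.append(state)
    return chain


def predict_next(context: List[int], n_states: int, smoothing: float = 1.0) -> List[float]:
    """In-context estimate of P(next | last state) from bigram counts in the context."""
    counts: Dict[int, Counter] = defaultdict(Counter)
    for prev, nxt in zip(context, context[1:]):
        counts[prev][nxt] += 1
    row = counts[context[-1]]  # condition on the most recent state only
    total = sum(row.values()) + smoothing * n_states
    if total == 0:  # unseen last state and no smoothing: fall back to uniform
        return [1.0 / n_states] * n_states
    return [(row[s] + smoothing) / total for s in range(n_states)]


# Usage: one synthetic chain; the estimate for chain[-1] approximates row P[chain[-1]]
rng = random.Random(0)
P = random_transition_matrix(5, 0.5, rng)
chain = sample_chain(P, 200, rng)
estimate = predict_next(chain, 5)
```

Because fresh matrices can be sampled without limit, a model trained on such chains cannot memorize any single transition table. It has to infer one from each context, which is the behavior the abstract describes.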

HiGR: Efficient Generative Slate Recommendation via Hierarchical Planning and Multi-Objective Preference Alignment

Dec 31, 2025

Abstract: Slate recommendation, where users are simultaneously presented with a ranked list of items, is widely adopted in online platforms. Recent advances in generative models have shown promise in slate recommendation by autoregressively modeling sequences of discrete semantic IDs. However, existing autoregressive approaches suffer from semantically entangled item tokenization and inefficient sequential decoding that lacks holistic slate planning. To address these limitations, we propose HiGR, an efficient generative slate recommendation framework that integrates hierarchical planning with listwise preference alignment. First, we propose an auto-encoder that uses residual quantization and contrastive constraints to tokenize items into semantically structured IDs for controllable generation. Second, HiGR decouples generation into a list-level planning stage that captures the global slate intent, followed by an item-level decoding stage that selects specific items. Third, we introduce a listwise preference alignment objective to directly optimize slate quality using implicit user feedback. Experiments on our large-scale commercial media platform demonstrate that HiGR delivers consistent improvements in both offline evaluations and online deployment. Specifically, it outperforms state-of-the-art methods by over 10% in offline recommendation quality with a 5x inference speedup. In online A/B tests, it increases Average Watch Time by 1.22% and Average Video Views by 1.73%.
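HiGR's tokenizer uses residual quantization to produce semantic IDs. Residual quantization encodes a vector level by level: each codebook quantizes the residual that the previous levels left behind, so items sharing a prefix share coarse semantics. Below is a minimal sketch with fixed toy codebooks. In HiGR the codebooks are learned inside an auto-encoder with contrastive constraints, which this sketch omits, and the codebook values are hypothetical.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def _sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def residual_quantize(x: Vector, codebooks: Sequence[Sequence[Vector]]) -> Tuple[List[int], List[float]]:
    """Encode x as one code index per level; each level quantizes what earlier levels missed."""
    residual = list(x)
    recon = [0.0] * len(x)
    codes: List[int] = []
    for codebook in codebooks:
        # Nearest codeword to the current residual
        idx = min(range(len(codebook)), key=lambda k: _sq_dist(residual, codebook[k]))
        codes.append(idx)
        recon = [r + c for r, c in zip(recon, codebook[idx])]
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return codes, recon


# Hypothetical two-level codebooks: a coarse level, then a fine correction level
codebooks = [
    [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]],
    [[0.0, 0.0], [0.2, -0.2], [-0.2, 0.2]],
]
print(residual_quantize([1.2, 0.8], codebooks)[0])  # [1, 1]
print(residual_quantize([0.8, 1.2], codebooks)[0])  # [1, 2]
```

The two items share the first code (the same coarse cluster) and differ only at the second level. This prefix structure is what makes such IDs suitable for generating items one token at a time.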

MARS2 2025 Challenge on Multimodal Reasoning: Datasets, Methods, Results, Discussion, and Outlook

Sep 17, 2025

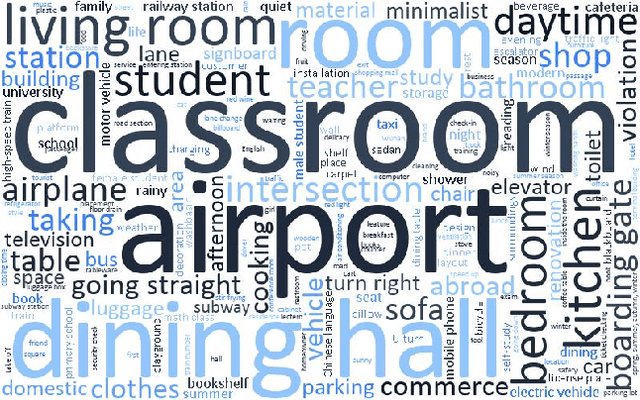

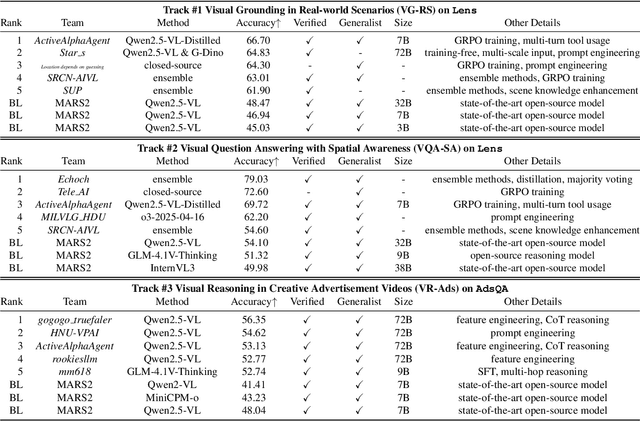

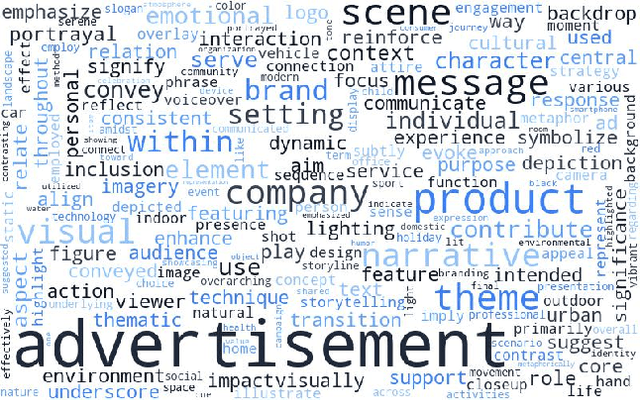

Abstract: This paper reviews the MARS2 2025 Challenge on Multimodal Reasoning. We aim to bring together different approaches in multimodal machine learning and LLMs via a large benchmark, and we hope it helps researchers follow the state of the art in this highly dynamic area. Meanwhile, a growing number of testbeds have boosted the evolution of general-purpose large language models. Thus, this year's MARS2 focuses on real-world and specialized scenarios to broaden the multimodal reasoning applications of MLLMs. Our organizing team released two tailored datasets, Lens and AdsQA, as test sets. They support general reasoning in 12 daily scenarios and domain-specific reasoning in advertisement videos, respectively. We evaluated 40+ baselines, including both generalist MLLMs and task-specific models, and opened three competition tracks: Visual Grounding in Real-world Scenarios (VG-RS), Visual Question Answering with Spatial Awareness (VQA-SA), and Visual Reasoning in Creative Advertisement Videos (VR-Ads). In total, 76 teams from renowned academic and industrial institutions registered, and 40+ valid submissions (out of 1200+) have been included in our ranking lists. Our datasets, code sets (40+ baselines and 15+ participants' methods), and rankings are publicly available on the MARS2 workshop website and our GitHub organization page https://github.com/mars2workshop/. Updates and announcements of upcoming events will continue to be posted there.

Beyond Semantic Understanding: Preserving Collaborative Frequency Components in LLM-based Recommendation

Aug 14, 2025

Abstract: Recommender systems in concert with Large Language Models (LLMs) present promising avenues for generating semantically informed recommendations. However, LLM-based recommenders tend to overemphasize semantic correlations within users' interaction histories. When taking pretrained collaborative ID embeddings as input, LLM-based recommenders progressively weaken the inherent collaborative signals as the embeddings propagate through the LLM backbone layer by layer. Traditional Transformer-based sequential models, by contrast, typically preserve or even enhance collaborative signals for state-of-the-art performance. To address this limitation, we introduce FreLLM4Rec, an approach designed to balance semantic and collaborative information from a spectral perspective. Item embeddings that incorporate both semantic and collaborative information are first purified using a Global Graph Low-Pass Filter (G-LPF) to preliminarily remove irrelevant high-frequency noise. Temporal Frequency Modulation (TFM) then actively preserves collaborative signals layer by layer. The collaborative preservation capability of TFM is theoretically guaranteed by establishing a connection between the optimal but hard-to-implement local graph Fourier filters and the suboptimal yet computationally efficient frequency-domain filters. Extensive experiments on four benchmark datasets demonstrate that FreLLM4Rec successfully mitigates collaborative signal attenuation and achieves competitive performance, with improvements of up to 8.00% in NDCG@10 over the best baseline. Our findings provide insights into how LLMs process collaborative information and offer a principled approach for improving LLM-based recommendation systems.
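The abstract contrasts local graph Fourier filters with cheaper frequency-domain filters applied layer by layer. Below is a minimal sketch of a generic frequency-domain filter. It assumes the filter acts on one layer's hidden states along the sequence (time) axis via the real FFT: transform, rescale each frequency bin, and transform back. The abstract does not say how TFM sets or learns its gains, so `low_pass_gains` is a hypothetical example.

```python
import numpy as np


def frequency_domain_filter(hidden: np.ndarray, gains: np.ndarray) -> np.ndarray:
    """Filter a (seq_len, dim) sequence of hidden states along the time axis.

    `gains` holds one weight per rfft frequency bin, i.e. seq_len // 2 + 1 entries;
    in a learned module these would be trainable parameters.
    """
    seq_len = hidden.shape[0]
    if gains.shape[0] != seq_len // 2 + 1:
        raise ValueError("gains must have seq_len // 2 + 1 entries")
    spectrum = np.fft.rfft(hidden, axis=0)           # (seq_len // 2 + 1, dim), complex
    filtered = spectrum * gains[:, None]              # rescale each temporal frequency
    return np.fft.irfft(filtered, n=seq_len, axis=0)  # back to the time domain


def low_pass_gains(seq_len: int, keep: int) -> np.ndarray:
    """Hypothetical ideal low-pass: keep the `keep` lowest frequency bins, zero the rest."""
    gains = np.zeros(seq_len // 2 + 1)
    gains[:keep] = 1.0
    return gains


# Usage: smooth a toy sequence of 8 hidden states with 4 features each
rng = np.random.default_rng(0)
h = rng.normal(size=(8, 4))
smoothed = frequency_domain_filter(h, low_pass_gains(8, keep=2))
```

The appeal of this form is cost. One FFT pair per layer is O(L log L) in the sequence length L, whereas an exact graph Fourier filter would need an eigendecomposition of the underlying graph.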

Pushing the Limits of Low-Bit Optimizers: A Focus on EMA Dynamics

May 01, 2025

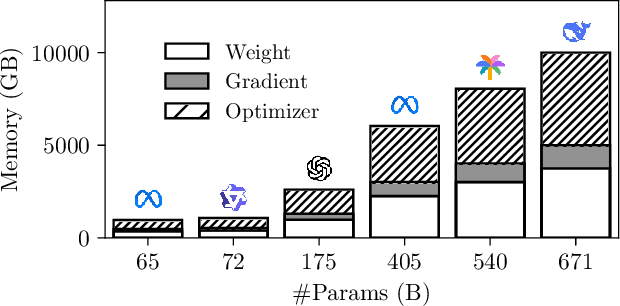

Abstract: The explosion in model sizes continues to drive up prohibitive training and fine-tuning costs. Stateful optimizers are hit particularly hard, since they maintain auxiliary state of up to 2x the model size to achieve optimal convergence. We therefore present a novel type of optimizer with extremely lightweight state overheads, achieved through ultra-low-precision quantization. While previous efforts have achieved some success with 8-bit or 4-bit quantization, our approach enables optimizers to operate at precision as low as 3 bits, or even 2 bits, per state element. This is accomplished by identifying and addressing two critical challenges. The first is the signal swamping problem in unsigned quantization, which leaves the state dynamics unchanged. The second is the rapidly increasing gradient variance in signed quantization, which leads to incorrect descent directions. Our theoretical analysis suggests a tailored logarithmic quantization for the former and a precision-specific momentum value for the latter. Consequently, the proposed SOLO achieves substantial memory savings (approximately 45 GB when training a 7B model) with minimal accuracy loss. We hope that SOLO can help overcome computational resource bottlenecks and thereby make fundamental research more accessible.
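Unsigned states such as the second-moment EMA suffer from signal swamping at 2 or 3 bits. Comparing a uniform grid with a logarithmic one shows why. On a uniform grid, entries far below the per-tensor maximum all round to code 0, whereas log spacing keeps them distinguishable. This is a generic log quantizer under assumed details: per-tensor max scaling, a reserved zero code, and a hypothetical `min_ratio` dynamic range. It is not SOLO's exact scheme.

```python
import math
from typing import List, Tuple


def linear_quantize(values: List[float], bits: int) -> List[int]:
    """Baseline: uniform grid on [0, max]; small entries round to code 0 (swamping)."""
    levels = 2 ** bits
    scale = max(values, default=0.0)
    if scale <= 0.0:
        return [0] * len(values)
    return [round(v / scale * (levels - 1)) for v in values]


def log_quantize(values: List[float], bits: int, min_ratio: float = 1e-3) -> Tuple[List[int], float]:
    """Code 0 is exact zero; codes 1..L-1 are log-spaced in [scale * min_ratio, scale]."""
    if bits < 2:
        raise ValueError("need at least 2 bits: zero plus two log-spaced levels")
    if any(v < 0 for v in values):
        raise ValueError("this quantizer is for unsigned (non-negative) states")
    levels = 2 ** bits
    steps = levels - 2  # log intervals between the smallest and largest nonzero level
    scale = max(values, default=0.0)
    if scale <= 0.0:
        return [0] * len(values), 0.0
    codes = []
    for v in values:
        x = v / scale
        if x < min_ratio / 2:  # below half the smallest level: store exact zero
            codes.append(0)
            continue
        t = math.log(max(x, min_ratio)) / math.log(min_ratio)  # 0 at x = 1, 1 at x = min_ratio
        codes.append(levels - 1 - round(t * steps))
    return codes, scale


def log_dequantize(codes: List[int], scale: float, bits: int, min_ratio: float = 1e-3) -> List[float]:
    """Map codes back to values on the same log-spaced grid."""
    levels = 2 ** bits
    steps = levels - 2
    return [0.0 if k == 0 else scale * min_ratio ** ((levels - 1 - k) / steps) for k in codes]


# Usage: a 3-bit state whose middle entry is 100x smaller than the largest
state = [1.0, 0.01, 0.0]
print(linear_quantize(state, bits=3))  # [7, 0, 0]: the 0.01 entry is swamped to zero
codes, scale = log_quantize(state, bits=3)
print(codes)                           # [7, 3, 0]
```

Under the uniform grid, any EMA increment smaller than half a quantization step is lost on every update. The log grid shrinks the step near zero, where most second-moment entries sit.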

DropletVideo: A Dataset and Approach to Explore Integral Spatio-Temporal Consistent Video Generation

Mar 08, 2025

Abstract: Spatio-temporal consistency is a critical research topic in video generation. A qualified generated video segment must ensure plot plausibility and coherence while maintaining the visual consistency of objects and scenes across varying viewpoints. Prior research, especially in open-source projects, primarily focuses on either temporal or spatial consistency, or a basic combination of the two. An example is appending a description of a camera movement after a prompt without constraining the outcomes of this movement. However, camera movement may introduce new objects into the scene or eliminate existing ones, thereby overlaying and affecting the preceding narrative. Especially in videos with numerous camera movements, the interplay between multiple plots becomes increasingly complex. This paper introduces and examines integral spatio-temporal consistency. It considers the synergy between plot progression and camera techniques, as well as the long-term impact of prior content on subsequent generation. Our research spans from dataset construction to model development. First, we constructed the DropletVideo-10M dataset, which comprises 10 million videos featuring dynamic camera motion and object actions. Each video is annotated with a caption averaging 206 words that details various camera movements and plot developments. We then developed and trained the DropletVideo model, which excels at preserving spatio-temporal coherence during video generation. The DropletVideo dataset and model are accessible at https://dropletx.github.io.

Collaborative Filtering Meets Spectrum Shift: Connecting User-Item Interaction with Graph-Structured Side Information

Feb 12, 2025

Abstract: Graph Neural Networks (GNNs) have demonstrated their superiority in collaborative filtering, where the user-item (U-I) interaction bipartite graph serves as the fundamental data format. However, existing graph collaborative filtering methods fall short of satisfactory performance when graph-structured side information (e.g., multimodal similarity graphs or social networks) is integrated into the U-I bipartite graph. We quantitatively analyze this problem from a spectral perspective. Recall that a bipartite graph possesses a full spectrum within the range [-1, 1], with the highest frequency attained exactly at -1 and the lowest frequency at 1. However, we observe that as more side information is incorporated, the highest frequency of the augmented adjacency matrix progressively shifts rightward. This spectrum shift causes previous approaches built for the full spectrum [-1, 1] to assign mismatched importance to different frequencies. To this end, we propose Spectrum Shift Correction (dubbed SSC), which incorporates shifting and scaling factors to enable spectral GNNs to adapt to the shifted spectrum. Previous paradigms for leveraging side information necessitate tailored designs for diverse data types. SSC instead directly connects traditional graph collaborative filtering with any graph-structured side information. Experiments on social and multimodal recommendation demonstrate the effectiveness of SSC, achieving relative improvements of up to 23% without incurring any additional computational overhead.
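The spectral observation can be checked numerically on a toy graph. For a connected graph, the symmetric-normalized adjacency D^{-1/2} A D^{-1/2} has eigenvalues in [-1, 1]. Moreover, -1 is an eigenvalue exactly when the graph is bipartite. Adding item-item side-information edges that close odd cycles therefore pushes the smallest eigenvalue (the highest frequency) above -1. The affine `shift_and_scale` map is an assumed, illustrative form of shifting and scaling factors; the abstract does not give SSC's formula.

```python
from typing import Optional

import numpy as np


def build_adjacency(interactions: np.ndarray, item_graph: Optional[np.ndarray] = None) -> np.ndarray:
    """Stack the U-I bipartite graph, optionally adding an item-item side-information block."""
    n_users, n_items = interactions.shape
    item_block = np.zeros((n_items, n_items)) if item_graph is None else item_graph
    top = np.hstack([np.zeros((n_users, n_users)), interactions])
    bottom = np.hstack([interactions.T, item_block])
    return np.vstack([top, bottom]).astype(float)


def normalized_spectrum(adj: np.ndarray) -> np.ndarray:
    """Ascending eigenvalues of D^{-1/2} A D^{-1/2} (zero-degree nodes are left at zero)."""
    deg = adj.sum(axis=1)
    inv_sqrt = np.zeros_like(deg)
    nonzero = deg > 0
    inv_sqrt[nonzero] = deg[nonzero] ** -0.5
    norm_adj = inv_sqrt[:, None] * adj * inv_sqrt[None, :]
    return np.linalg.eigvalsh(norm_adj)


def shift_and_scale(eigvals: np.ndarray, lam_min: float) -> np.ndarray:
    """Hypothetical affine correction mapping [lam_min, 1] back onto [-1, 1]."""
    return (2.0 * eigvals - (1.0 + lam_min)) / (1.0 - lam_min)


# Usage: 3 users x 3 items forming a 6-cycle, plus one item-item similarity edge
R = np.array([[1, 1, 0],
              [0, 1, 1],
              [1, 0, 1]])
side = np.zeros((3, 3))
side[0, 1] = side[1, 0] = 1.0  # items 0 and 1 share user 0, so this closes a triangle
plain = normalized_spectrum(build_adjacency(R))
augmented = normalized_spectrum(build_adjacency(R, side))
corrected = shift_and_scale(augmented, augmented.min())
```

Here `plain` spans the full [-1, 1], while the smallest value in `augmented` lies strictly above -1. A filter designed for [-1, 1] would thus never see its highest-frequency band, and the affine map restores the full range.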

STAIR: Manipulating Collaborative and Multimodal Information for E-Commerce Recommendation

Dec 16, 2024

Abstract: While mining modalities is the focus of most multimodal recommendation methods, we believe that fully utilizing both collaborative and multimodal information is pivotal in e-commerce scenarios. In these scenarios, as clarified in this work, user behaviors are rarely determined entirely by multimodal features. Combining these two distinct types of information raises additional challenges: 1) Modality erasure: vanilla graph convolution, though rather useful in collaborative filtering, erases multimodal information; 2) Modality forgetting: multimodal information tends to be gradually forgotten because the recommendation loss essentially facilitates the learning of collaborative information. To this end, we propose a novel approach named STAIR, which employs a novel STepwise grAph convolution to enable the co-existence of collaborative and multimodal Information in e-commerce Recommendation. In addition, STAIR uses the raw multimodal features as initialization and significantly alleviates the forgetting problem through constrained embedding updates. As a result, STAIR achieves state-of-the-art recommendation performance on three public e-commerce datasets with minimal computational and memory costs. Our code is available at https://github.com/yhhe2004/STAIR.
