Kawal Rhode

Artificial Intelligence in the Autonomous Navigation of Endovascular Interventions: A Systematic Review

May 06, 2024

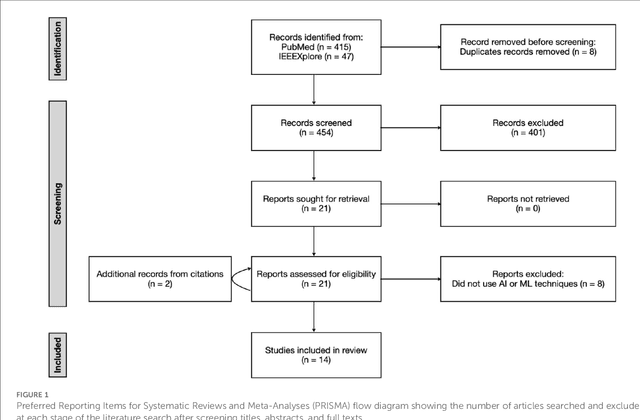

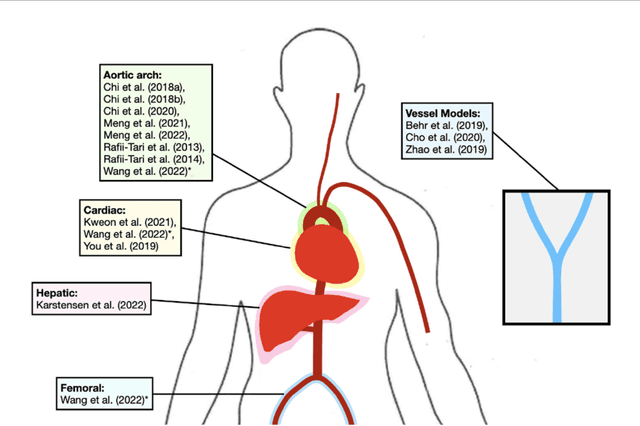

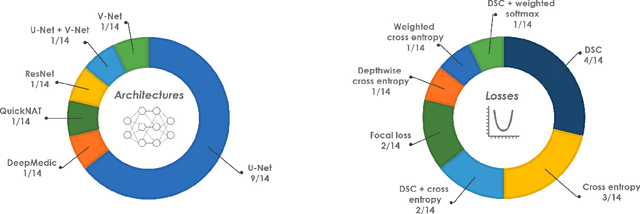

Abstract:Purpose: Autonomous navigation of devices in endovascular interventions can decrease operation times, improve decision-making during surgery, and reduce operator radiation exposure while increasing access to treatment. This systematic review explores recent literature to assess the impact, challenges, and opportunities that artificial intelligence (AI) presents for autonomous navigation in endovascular interventions. Methods: PubMed and IEEEXplore databases were queried. Eligibility criteria included studies investigating the use of AI in enabling the autonomous navigation of catheters/guidewires in endovascular interventions. Following PRISMA, articles were assessed using QUADAS-2. PROSPERO: CRD42023392259. Results: Among 462 studies, fourteen met inclusion criteria. Reinforcement learning (9/14, 64%) and learning from demonstration (7/14, 50%) were used as data-driven models for autonomous navigation. Studies predominantly utilised physical phantoms (10/14, 71%) and in silico (4/14, 29%) models. Experiments within or around the blood vessels of the heart were reported by the majority of studies (10/14, 71%), while simple non-anatomical vessel platforms were used in three studies (3/14, 21%), and the porcine liver venous system in one study. We observed that risk of bias and poor generalisability were present across studies. No procedures were performed on patients in any of the studies reviewed. Studies lacked patient selection criteria, reference standards, and reproducibility, resulting in low clinical evidence levels. Conclusions: AI's potential in autonomous endovascular navigation is promising, but it remains at an experimental proof-of-concept stage, with a technology readiness level of 3. We highlight that reference standards with well-identified performance metrics are crucial to allow for comparisons of data-driven algorithms proposed in the years to come.

* Abstract shortened for arXiv character limit

Goal-conditioned reinforcement learning for ultrasound navigation guidance

May 02, 2024

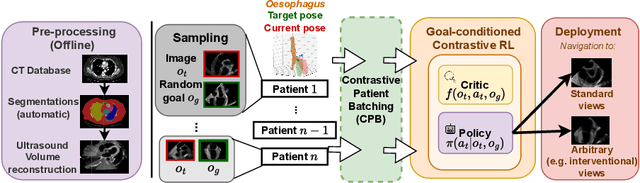

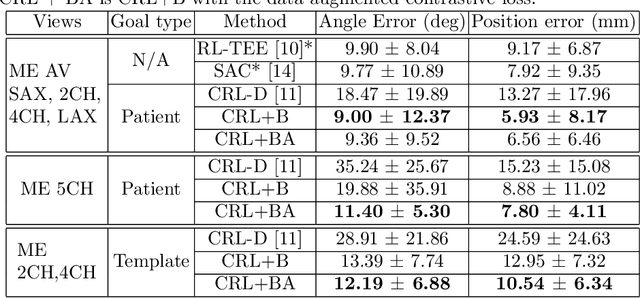

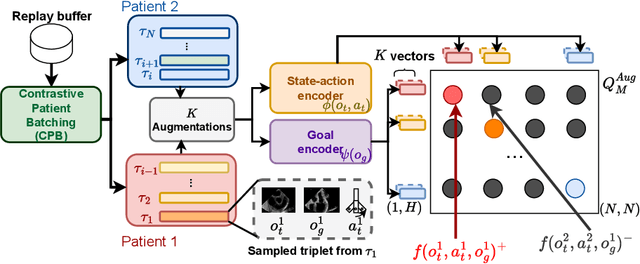

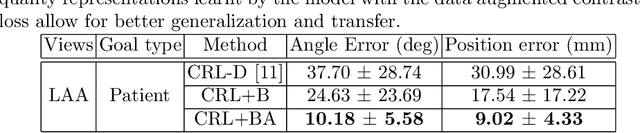

Abstract:Transesophageal echocardiography (TEE) plays a pivotal role in cardiology for diagnostic and interventional procedures. However, using it effectively requires extensive training due to the intricate nature of image acquisition and interpretation. To enhance the efficiency of novice sonographers and reduce variability in scan acquisitions, we propose a novel ultrasound (US) navigation assistance method based on contrastive learning as goal-conditioned reinforcement learning (GCRL). We augment the previous framework using a novel contrastive patient batching method (CPB) and a data-augmented contrastive loss, both of which we demonstrate are essential to ensure generalization to anatomical variations across patients. The proposed framework enables navigation to both standard diagnostic and intricate interventional views with a single model. Our method was developed with a large dataset of 789 patients and obtained an average error of 6.56 mm in position and 9.36 degrees in angle on a testing dataset of 140 patients, which is competitive or superior to models trained on individual views. Furthermore, we quantitatively validate our method's ability to navigate to interventional views such as the Left Atrial Appendage (LAA) view used in LAA closure. Our approach holds promise in providing valuable guidance during transesophageal ultrasound examinations, contributing to the advancement of skill acquisition for cardiac ultrasound practitioners.

Cardiac ultrasound simulation for autonomous ultrasound navigation

Feb 09, 2024

Abstract:Ultrasound is well-established as an imaging modality for diagnostic and interventional purposes. However, the image quality varies with operator skills as acquiring and interpreting ultrasound images requires extensive training due to the imaging artefacts, the range of acquisition parameters and the variability of patient anatomies. Automating the image acquisition task could improve acquisition reproducibility and quality but training such an algorithm requires large amounts of navigation data, not saved in routine examinations. Thus, we propose a method to generate large amounts of ultrasound images from other modalities and from arbitrary positions, such that this pipeline can later be used by learning algorithms for navigation. We present a novel simulation pipeline which uses segmentations from other modalities, an optimized volumetric data representation and GPU-accelerated Monte Carlo path tracing to generate view-dependent and patient-specific ultrasound images. We extensively validate the correctness of our pipeline with a phantom experiment, where structures' sizes, contrast and speckle noise properties are assessed. Furthermore, we demonstrate its usability to train neural networks for navigation in an echocardiography view classification experiment by generating synthetic images from more than 1000 patients. Networks pre-trained with our simulations achieve significantly superior performance in settings where large real datasets are not available, especially for under-represented classes. The proposed approach allows for fast and accurate patient-specific ultrasound image generation, and its usability for training networks for navigation-related tasks is demonstrated.

Towards intrinsic force sensing and control in parallel soft robots

Nov 19, 2021

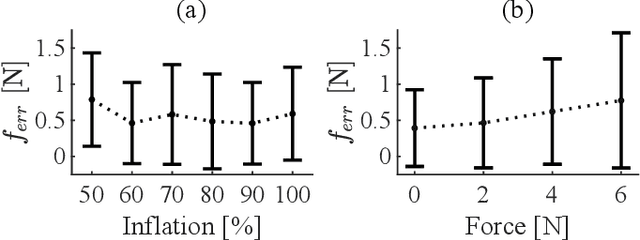

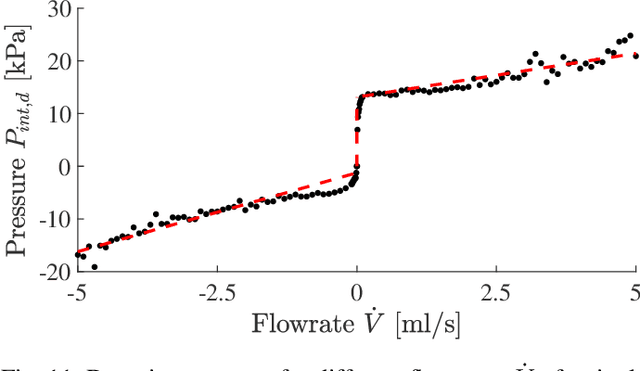

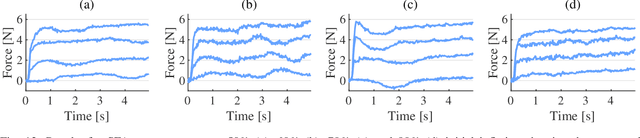

Abstract:With soft robotics being increasingly employed in settings demanding high and controlled contact forces, recent research has demonstrated the use of soft robots to estimate or intrinsically sense forces without requiring external sensing mechanisms. Whilst this has mainly been shown in tendon-based continuum manipulators or deformable robots comprising push-pull rod actuation, fluid drives still pose great challenges due to high actuation variability and nonlinear mechanical system responses. In this work we investigate the capabilities of a hydraulic, parallel soft robot to intrinsically sense and subsequently control contact forces. A comprehensive algorithm is derived for static, quasi-static and dynamic force sensing which relies on fluid volume and pressure information of the system. The algorithm is validated for a single degree-of-freedom soft fluidic actuator. Results indicate that axial forces acting on a single actuator can be estimated with an accuracy of 0.56 ± 0.66 N within the validated range of 0 to 6 N in a quasi-static configuration. The force sensing methodology is applied to force control in a single actuator as well as the coupled parallel robot. It can be seen that forces are accurately controllable for both systems, with the capability of controlling directional contact forces in the case of the multi degree-of-freedom parallel soft robot.

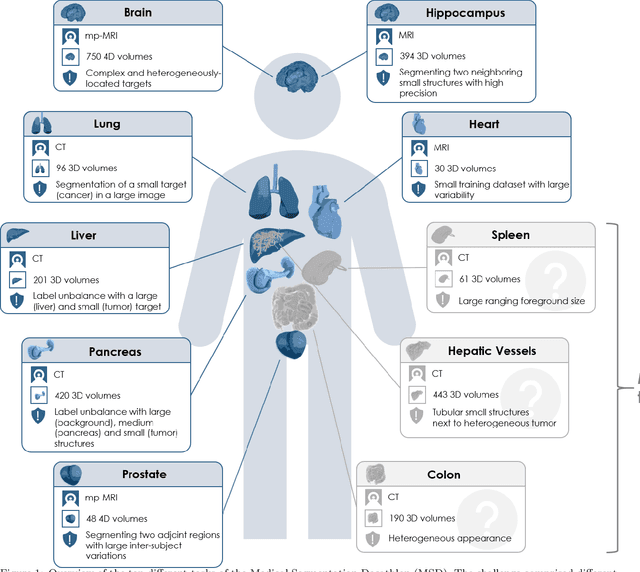

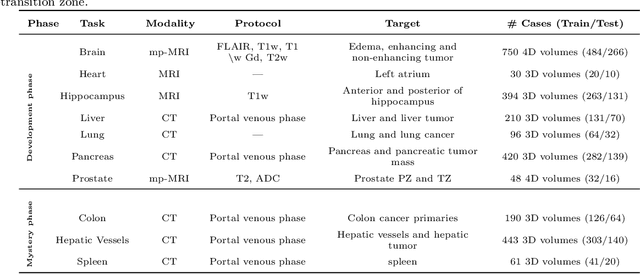

The Medical Segmentation Decathlon

Jun 10, 2021

Abstract:International challenges have become the de facto standard for comparative assessment of image analysis algorithms given a specific task. Segmentation is so far the most widely investigated medical image processing task, but the various segmentation challenges have typically been organized in isolation, such that algorithm development was driven by the need to tackle a single specific clinical problem. We hypothesized that a method capable of performing well on multiple tasks will generalize well to a previously unseen task and potentially outperform a custom-designed solution. To investigate the hypothesis, we organized the Medical Segmentation Decathlon (MSD) - a biomedical image analysis challenge, in which algorithms compete in a multitude of both tasks and modalities. The underlying data set was designed to explore the axis of difficulties typically encountered when dealing with medical images, such as small data sets, unbalanced labels, multi-site data and small objects. The MSD challenge confirmed that algorithms with consistently good performance on a set of tasks preserved their good average performance on a different set of previously unseen tasks. Moreover, by monitoring the MSD winner for two years, we found that this algorithm continued generalizing well to a wide range of other clinical problems, further confirming our hypothesis. Three main conclusions can be drawn from this study: (1) state-of-the-art image segmentation algorithms are mature, accurate, and generalize well when retrained on unseen tasks; (2) consistent algorithmic performance across multiple tasks is a strong surrogate of algorithmic generalizability; (3) the training of accurate AI segmentation models is now commoditized to non-AI experts.

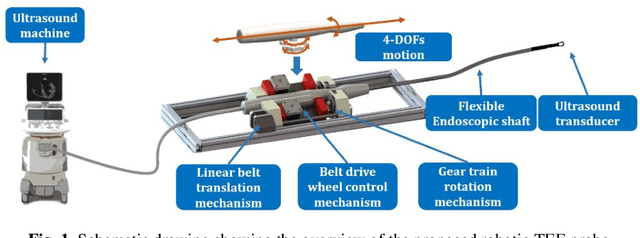

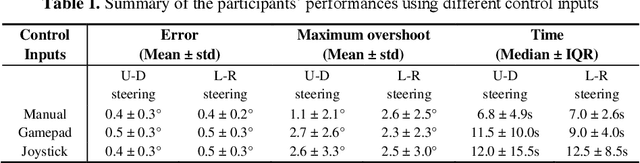

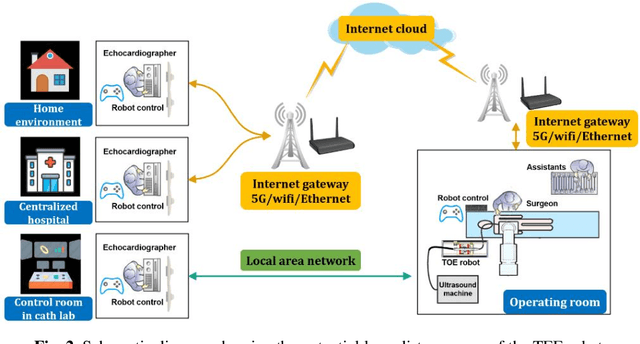

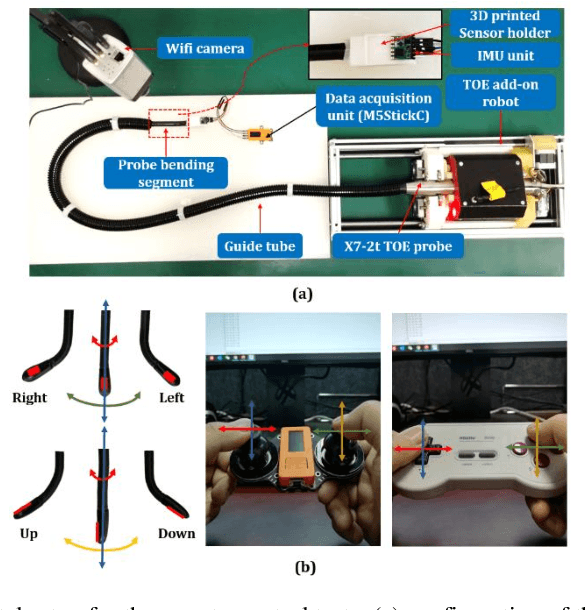

IoT-based Remote Control Study of a Robotic Trans-esophageal Ultrasound Probe via LAN and 5G

May 28, 2020

Abstract:A robotic trans-esophageal echocardiography (TEE) probe has been recently developed to address the problems with manual control in the X-ray environment when a conventional probe is used for interventional procedure guidance. However, the robot was exclusively to be used in local areas and the effectiveness of remote control has not been scientifically tested. In this study, we implemented an Internet-of-things (IoT)-based configuration to the TEE robot so the system can set up a local area network (LAN) or be configured to connect to an internet cloud over 5G. To investigate the remote control, backlash hysteresis effects were measured and analysed. A joystick-based device and a button-based gamepad were then employed and compared with the manual control in a target reaching experiment for the two steering axes. The results indicated different hysteresis curves for the left-right and up-down steering axes with the input wheel's deadbands found to be 15 deg and deg, respectively. Similar magnitudes of positioning errors at approximately 0.5 deg and maximum overshoots at around 2.5 deg were found when manually and robotically controlling the TEE probe. The amount of time to finish the task indicated a better performance using the button-based gamepad over joystick-based device, although both were worse than the manual control. It is concluded that the IoT-based remote control of the TEE probe is feasible and a trained user can accurately manipulate the probe. The main identified problem was the backlash hysteresis in the steering axes, which can result in continuous oscillations and overshoots.
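The backlash hysteresis described in the abstract above can be illustrated with a generic play (backlash) operator. This is a minimal sketch and not the authors' model: the function name `backlash`, the symmetric gap, and the sample input sequence are assumptions made for illustration. Only the 15 deg deadband figure is taken from the abstract.

```python
def backlash(inputs, deadband, start=0.0):
    """Generic play (backlash) operator, used here as an illustrative assumption.

    The output only moves once the input has crossed the free-play gap of
    total width `deadband` that surrounds the current output position.
    """
    half = deadband / 2.0
    out = start
    trace = []
    for x in inputs:
        # Clamp the previous output into the window [x - half, x + half]:
        # inside the window the output stays put, outside it is dragged along.
        out = max(x - half, min(x + half, out))
        trace.append(out)
    return trace


# Hypothetical input wheel angles in degrees: turn up to 20, then reverse.
wheel = [0, 5, 10, 20, 15, 10, 5, 4]
tip = backlash(wheel, deadband=15.0)
for w, t in zip(wheel, tip):
    print(f"wheel {w:5.1f} deg -> output {t:5.1f} deg")
```

In this sketch the output holds still while the input reverses through the full 15 deg gap and only starts to follow again afterwards. That lag is the kind of behaviour that can produce overshoot when an operator corrects back and forth around a target.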

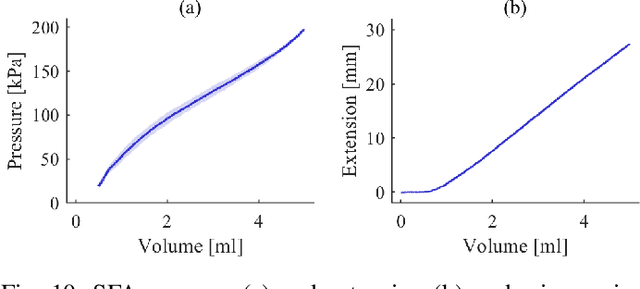

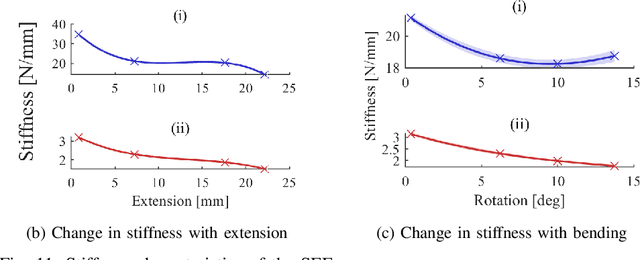

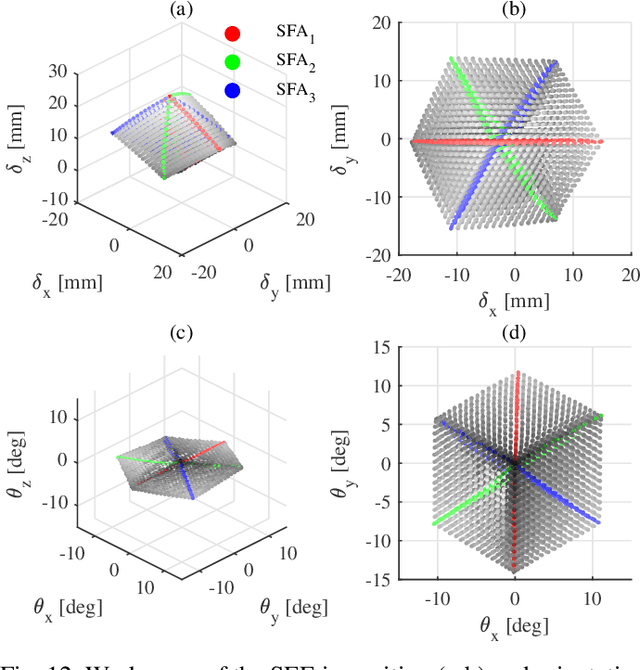

Design and integration of a parallel, soft robotic end-effector for extracorporeal ultrasound

Jun 11, 2019

Abstract:In this work we address limitations in state-of-the-art ultrasound robots by designing and integrating the first soft robotic system for ultrasound imaging. It makes use of the inherent qualities of soft robotics technologies to establish a safe, adaptable interaction between ultrasound probe and patient. We acquire clinical data to establish the movement ranges and force levels required in prenatal foetal ultrasound imaging and design our system accordingly. The end-effector's stiffness characteristics allow for it to reach the desired workspace while maintaining a stable contact between ultrasound probe and patient under the determined loads. The system exhibits a high degree of safety due to its inherent compliance in the transversal direction. We verify the mechanical characteristics of the end-effector, derive and validate a kinetostatic model and demonstrate the robot's controllability with and without external loading. The imaging capabilities of the robot are shown in a tele-operated setting on a foetal phantom. The design exhibits the desired stiffness characteristics with a high stiffness along the ultrasound transducer and a high compliance in the lateral direction. Twist is constrained using a braided mesh reinforcement. The model can accurately predict the end-effector pose with a mean error of about 6% in position and 7% in orientation. The derived controller is, with an average position error of 0.39 mm, able to track a target pose efficiently without and with externally applied loads. Finally, the images acquired with the system are of equally good quality compared to a manual sonographer scan.

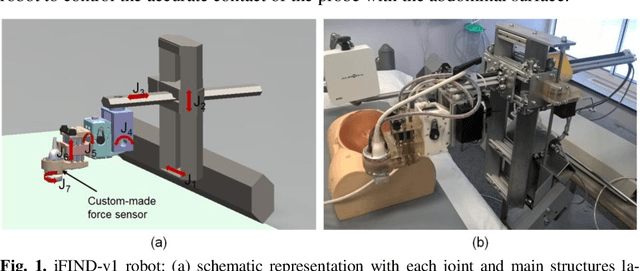

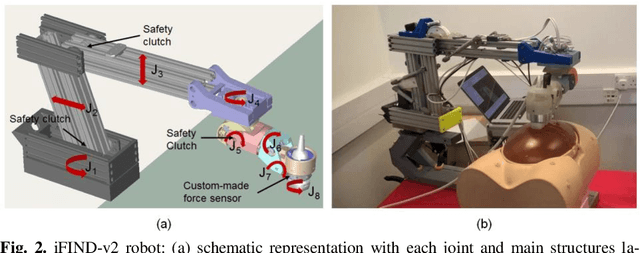

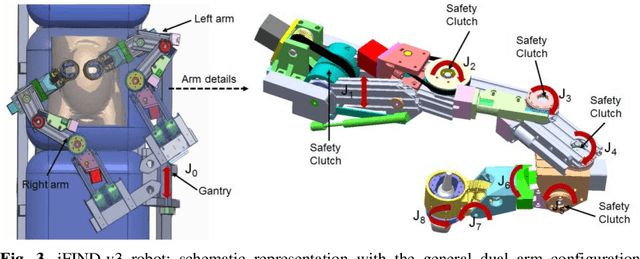

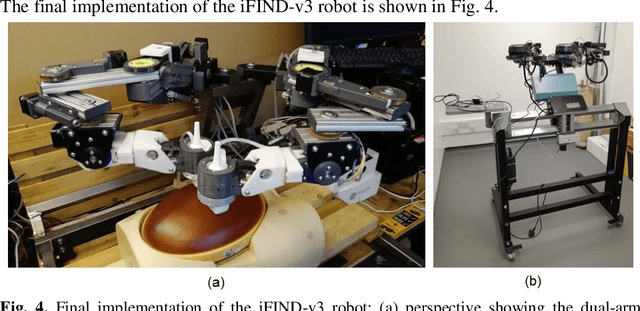

Robotic-assisted Ultrasound for Fetal Imaging: Evolution from Single-arm to Dual-arm System

Apr 10, 2019

Abstract:The development of robotic-assisted extracorporeal ultrasound systems has a long history and a number of projects have been proposed since the 1990s focusing on different technical aspects. These aim to resolve the deficiencies of on-site manual manipulation of hand-held ultrasound probes. This paper presents the recent ongoing developments of a series of bespoke robotic systems, including both single-arm and dual-arm versions, for a project known as intelligent Fetal Imaging and Diagnosis (iFIND). After a brief review of the development history of the extracorporeal ultrasound robotic system used for fetal and abdominal examinations, the specific aim of the iFIND robots, the design evolution, the implementation details of each version, and the initial clinical feedback of the iFIND robot series are presented. Based on the preliminary testing of these newly-proposed robots on 42 volunteers, the successful and reliable working of the mechatronic systems was validated. Analysis of a participant questionnaire indicates a comfortable scanning experience for the volunteers and a good acceptance rate to being scanned by the robots.

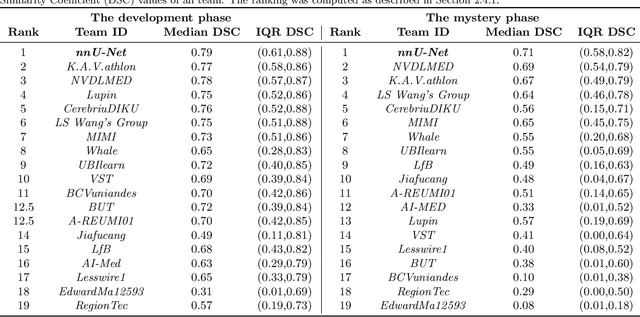

Evaluation of Algorithms for Multi-Modality Whole Heart Segmentation: An Open-Access Grand Challenge

Feb 21, 2019

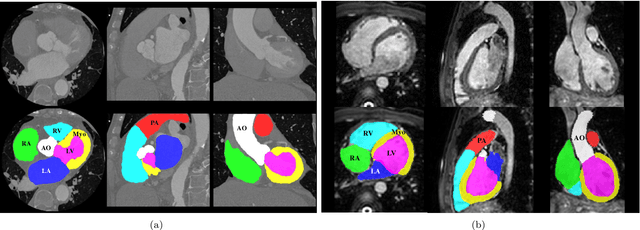

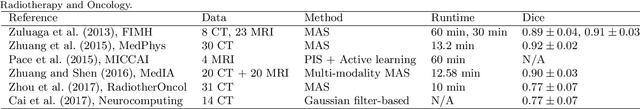

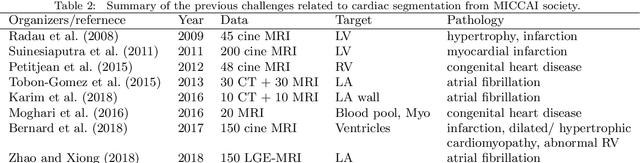

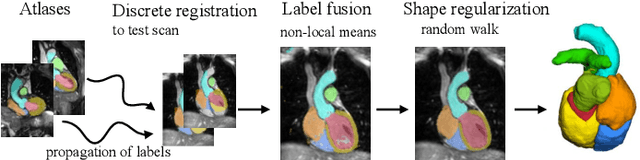

Abstract:Knowledge of whole heart anatomy is a prerequisite for many clinical applications. Whole heart segmentation (WHS), which delineates substructures of the heart, can be very valuable for modeling and analysis of the anatomy and functions of the heart. However, automating this segmentation can be arduous due to the large variation of the heart shape, and different image qualities of the clinical data. To achieve this goal, a set of training data is generally needed for constructing priors or for training. In addition, it is difficult to perform comparisons between different methods, largely due to differences in the datasets and evaluation metrics used. This manuscript presents the methodologies and evaluation results for the WHS algorithms selected from the submissions to the Multi-Modality Whole Heart Segmentation (MM-WHS) challenge, in conjunction with MICCAI 2017. The challenge provides 120 three-dimensional cardiac images covering the whole heart, including 60 CT and 60 MRI volumes, all acquired in clinical environments with manual delineation. Ten algorithms for CT data and eleven algorithms for MRI data, submitted from twelve groups, have been evaluated. The results show that many of the deep learning (DL) based methods achieved high accuracy, even though the number of training datasets was limited. A number of them also reported poor results in the blinded evaluation, probably due to overfitting in their training. The conventional algorithms, mainly based on multi-atlas segmentation, demonstrated robust and stable performance, even though the accuracy is not as good as the best DL method in CT segmentation. The challenge, including the provision of the annotated training data and the blinded evaluation for submitted algorithms on the test data, continues as an ongoing benchmarking resource via its homepage (www.sdspeople.fudan.edu.cn/zhuangxiahai/0/mmwhs/).

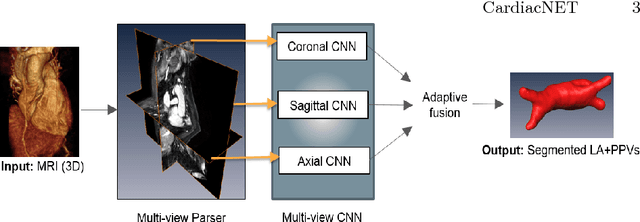

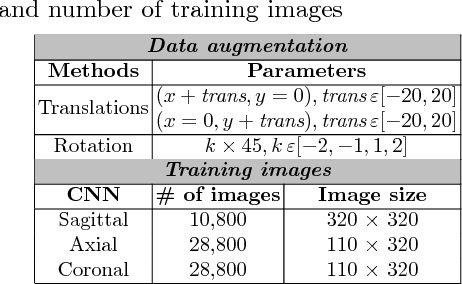

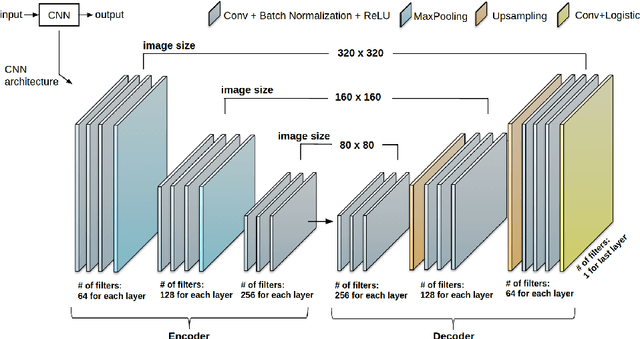

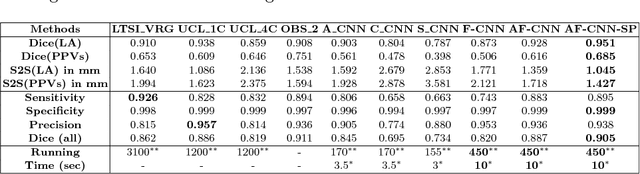

CardiacNET: Segmentation of Left Atrium and Proximal Pulmonary Veins from MRI Using Multi-View CNN

May 19, 2017

Abstract:Anatomical and biophysical modeling of left atrium (LA) and proximal pulmonary veins (PPVs) is important for clinical management of several cardiac diseases. Magnetic resonance imaging (MRI) allows qualitative assessment of LA and PPVs through visualization. However, there is a strong need for an advanced image segmentation method to be applied to cardiac MRI for quantitative analysis of LA and PPVs. In this study, we address this unmet clinical need by exploring a new deep learning-based segmentation strategy for quantification of LA and PPVs with high accuracy and heightened efficiency. Our approach is based on a multi-view convolutional neural network (CNN) with an adaptive fusion strategy and a new loss function that allows fast and more accurate convergence of the backpropagation based optimization. After training our network from scratch by using more than 60K 2D MRI images (slices), we have evaluated our segmentation strategy on the STACOM 2013 cardiac segmentation challenge benchmark. Qualitative and quantitative evaluations, obtained from the segmentation challenge, indicate that the proposed method achieved state-of-the-art sensitivity (90%), specificity (99%), precision (94%), and efficiency levels (10 seconds on GPU, and 7.5 minutes on CPU).
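The sensitivity, specificity and precision quoted in the abstract above are standard confusion-matrix metrics. As a minimal sketch of how such figures are typically computed for a binary segmentation, assuming flattened 0/1 label sequences: the function names and the toy data below are hypothetical and do not come from the paper or the STACOM benchmark.

```python
def confusion_counts(pred, truth):
    """Count true/false positives and negatives for two flat 0/1 sequences."""
    tp = fp = tn = fn = 0
    for p, t in zip(pred, truth):
        if p and t:
            tp += 1
        elif p and not t:
            fp += 1
        elif not p and t:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def ratio(numerator, denominator):
    """Return numerator/denominator, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def segmentation_metrics(pred, truth):
    """Sensitivity, specificity and precision of a binary segmentation."""
    tp, fp, tn, fn = confusion_counts(pred, truth)
    return {
        "sensitivity": ratio(tp, tp + fn),  # fraction of true voxels found
        "specificity": ratio(tn, tn + fp),  # fraction of background kept clean
        "precision": ratio(tp, tp + fp),    # fraction of predictions that are correct
    }


# Toy example with made-up labels (1 = structure, 0 = background).
predicted = [1, 1, 0, 0, 1, 0, 0, 0]
reference = [1, 1, 1, 0, 0, 0, 0, 0]
print(segmentation_metrics(predicted, reference))
```

Specificity tends to be high in segmentation tasks because background voxels usually far outnumber the structure of interest, so sensitivity and precision are often the more discriminating of the three.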
