Hong-Ning Dai

Lingnan University

Lyapunov Stability-Aware Stackelberg Game for Low-Altitude Economy: A Control-Oriented Pruning-Based DRL Approach

Feb 01, 2026Abstract:With the rapid expansion of the low-altitude economy, Unmanned Aerial Vehicles (UAVs) serve as pivotal aerial base stations supporting diverse services from users, ranging from latency-sensitive critical missions to bandwidth-intensive data streaming. However, the efficacy of such heterogeneous networks is often compromised by the conflict between limited onboard resources and stringent stability requirements. Moving beyond traditional throughput-centric designs, we propose a Sensing-Communication-Computing-Control closed-loop framework that explicitly models the impact of communication latency on physical control stability. To guarantee mission reliability, we leverage the Lyapunov stability theory to derive an intrinsic mapping between the state evolution of the control system and communication constraints, transforming abstract stability requirements into quantifiable resource boundaries. Then, we formulate the resource allocation problem as a Stackelberg game, where UAVs (as leaders) dynamically price resources to balance load and ensure stability, while users (as followers) optimize requests based on service urgency. Furthermore, addressing the prohibitive computational overhead of standard Deep Reinforcement Learning (DRL) on energy-constrained edge platforms, we propose a novel and lightweight pruning-based Proximal Policy Optimization (PPO) algorithm. By integrating a dynamic structured pruning mechanism, the proposed algorithm significantly compresses the neural network scale during training, enabling the UAV to rapidly approximate the game equilibrium with minimal inference latency. Simulation results demonstrate that the proposed scheme effectively secures control loop stability while maximizing system utility in dynamic low-altitude environments.

FedBiCross: A Bi-Level Optimization Framework to Tackle Non-IID Challenges in Data-Free One-Shot Federated Learning on Medical Data

Jan 05, 2026Abstract:Data-free knowledge distillation-based one-shot federated learning (OSFL) trains a model in a single communication round without sharing raw data, making OSFL attractive for privacy-sensitive medical applications. However, existing methods aggregate predictions from all clients to form a global teacher. Under non-IID data, conflicting predictions cancel out during averaging, yielding near-uniform soft labels that provide weak supervision for distillation. We propose FedBiCross, a personalized OSFL framework with three stages: (1) clustering clients by model output similarity to form coherent sub-ensembles, (2) bi-level cross-cluster optimization that learns adaptive weights to selectively leverage beneficial cross-cluster knowledge while suppressing negative transfer, and (3) personalized distillation for client-specific adaptation. Experiments on four medical image datasets demonstrate that FedBiCross consistently outperforms state-of-the-art baselines across different non-IID degrees.

Achieving Effective Virtual Reality Interactions via Acoustic Gesture Recognition based on Large Language Models

Nov 10, 2025Abstract:Natural and efficient interaction remains a critical challenge for virtual reality and augmented reality (VR/AR) systems. Vision-based gesture recognition suffers from high computational cost, sensitivity to lighting conditions, and privacy leakage concerns. Acoustic sensing provides an attractive alternative: by emitting inaudible high-frequency signals and capturing their reflections, channel impulse response (CIR) encodes how gestures perturb the acoustic field in a low-cost and user-transparent manner. However, existing CIR-based gesture recognition methods often rely on extensive training of models on large labeled datasets, making them unsuitable for few-shot VR scenarios. In this work, we propose the first framework that leverages large language models (LLMs) for CIR-based gesture recognition in VR/AR systems. Despite LLMs' strengths, it is non-trivial to achieve few-shot and zero-shot learning of CIR gestures due to their inconspicuous features. To tackle this challenge, we collect differential CIR rather than original CIR data. Moreover, we construct a real-world dataset collected from 10 participants performing 15 gestures across three categories (digits, letters, and shapes), with 10 repetitions each. We then conduct extensive experiments on this dataset using an LLM-adopted classifier. Results show that our LLM-based framework achieves accuracy comparable to classical machine learning baselines, while requiring no domain-specific retraining.

EBS-CFL: Efficient and Byzantine-robust Secure Clustered Federated Learning

Jun 16, 2025Abstract:Despite federated learning (FL)'s potential in collaborative learning, its performance has deteriorated due to the data heterogeneity of distributed users. Recently, clustered federated learning (CFL) has emerged to address this challenge by partitioning users into clusters according to their similarity. However, CFL faces difficulties in training when users are unwilling to share their cluster identities due to privacy concerns. To address these issues, we present an innovative Efficient and Robust Secure Aggregation scheme for CFL, dubbed EBS-CFL. The proposed EBS-CFL supports effectively training CFL while maintaining users' cluster identity confidentially. Moreover, it detects potential poisonous attacks without compromising individual client gradients by discarding negatively correlated gradients and aggregating positively correlated ones using a weighted approach. The server also authenticates correct gradient encoding by clients. EBS-CFL has high efficiency with client-side overhead O(ml + m^2) for communication and O(m^2l) for computation, where m is the number of cluster identities, and l is the gradient size. When m = 1, EBS-CFL's computational efficiency of client is at least O(log n) times better than comparison schemes, where n is the number of clients.In addition, we validate the scheme through extensive experiments. Finally, we theoretically prove the scheme's security.

* Accepted by AAAI 25

YOLO-TS: Real-Time Traffic Sign Detection with Enhanced Accuracy Using Optimized Receptive Fields and Anchor-Free Fusion

Oct 22, 2024

Abstract:Ensuring safety in both autonomous driving and advanced driver-assistance systems (ADAS) depends critically on the efficient deployment of traffic sign recognition technology. While current methods show effectiveness, they often compromise between speed and accuracy. To address this issue, we present a novel real-time and efficient road sign detection network, YOLO-TS. This network significantly improves performance by optimizing the receptive fields of multi-scale feature maps to align more closely with the size distribution of traffic signs in various datasets. Moreover, our innovative feature-fusion strategy, leveraging the flexibility of Anchor-Free methods, allows for multi-scale object detection on a high-resolution feature map abundant in contextual information, achieving remarkable enhancements in both accuracy and speed. To mitigate the adverse effects of the grid pattern caused by dilated convolutions on the detection of smaller objects, we have devised a unique module that not only mitigates this grid effect but also widens the receptive field to encompass an extensive range of spatial contextual information, thus boosting the efficiency of information usage. Evaluation on challenging public datasets, TT100K and CCTSDB2021, demonstrates that YOLO-TS surpasses existing state-of-the-art methods in terms of both accuracy and speed. The code for our method will be available.

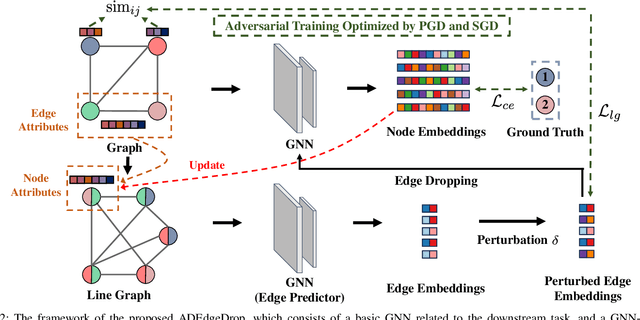

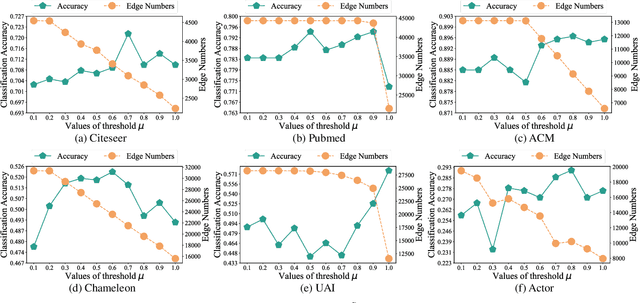

ADEdgeDrop: Adversarial Edge Dropping for Robust Graph Neural Networks

Mar 14, 2024

Abstract:Although Graph Neural Networks (GNNs) have exhibited the powerful ability to gather graph-structured information from neighborhood nodes via various message-passing mechanisms, the performance of GNNs is limited by poor generalization and fragile robustness caused by noisy and redundant graph data. As a prominent solution, Graph Augmentation Learning (GAL) has recently received increasing attention. Among prior GAL approaches, edge-dropping methods that randomly remove edges from a graph during training are effective techniques to improve the robustness of GNNs. However, randomly dropping edges often results in bypassing critical edges, consequently weakening the effectiveness of message passing. In this paper, we propose a novel adversarial edge-dropping method (ADEdgeDrop) that leverages an adversarial edge predictor guiding the removal of edges, which can be flexibly incorporated into diverse GNN backbones. Employing an adversarial training framework, the edge predictor utilizes the line graph transformed from the original graph to estimate the edges to be dropped, which improves the interpretability of the edge-dropping method. The proposed ADEdgeDrop is optimized alternately by stochastic gradient descent and projected gradient descent. Comprehensive experiments on six graph benchmark datasets demonstrate that the proposed ADEdgeDrop outperforms state-of-the-art baselines across various GNN backbones, demonstrating improved generalization and robustness.

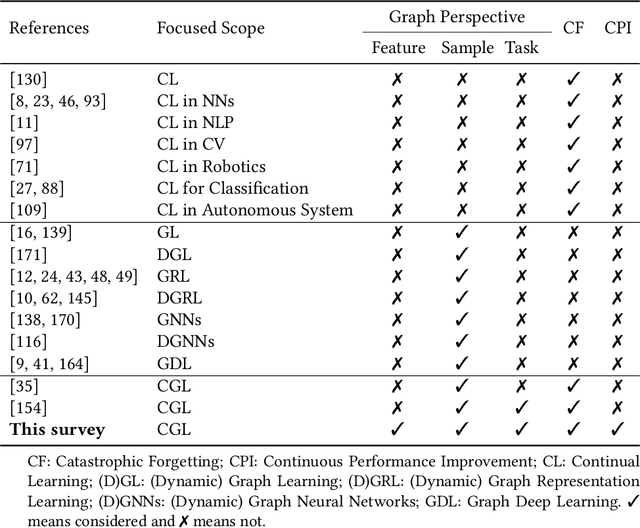

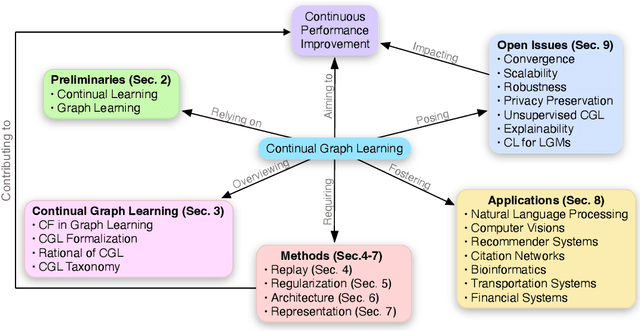

Continual Learning on Graphs: A Survey

Feb 09, 2024

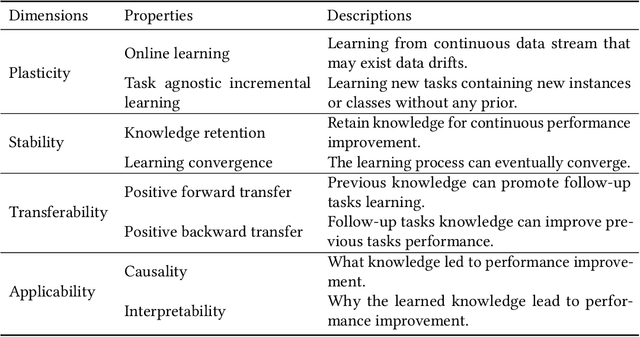

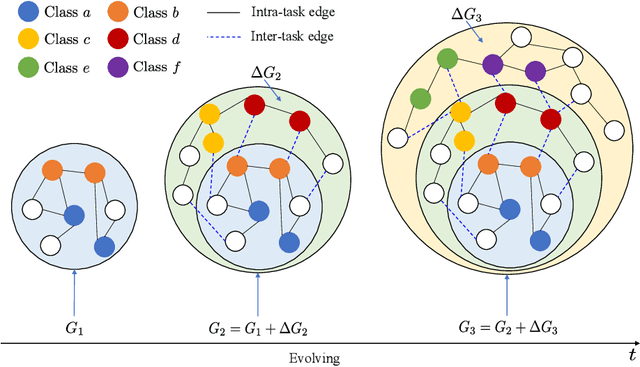

Abstract:Recently, continual graph learning has been increasingly adopted for diverse graph-structured data processing tasks in non-stationary environments. Despite its promising learning capability, current studies on continual graph learning mainly focus on mitigating the catastrophic forgetting problem while ignoring continuous performance improvement. To bridge this gap, this article aims to provide a comprehensive survey of recent efforts on continual graph learning. Specifically, we introduce a new taxonomy of continual graph learning from the perspective of overcoming catastrophic forgetting. Moreover, we systematically analyze the challenges of applying these continual graph learning methods in improving performance continuously and then discuss the possible solutions. Finally, we present open issues and future directions pertaining to the development of continual graph learning and discuss how they impact continuous performance improvement.

AI-Driven Patient Monitoring with Multi-Agent Deep Reinforcement Learning

Sep 24, 2023

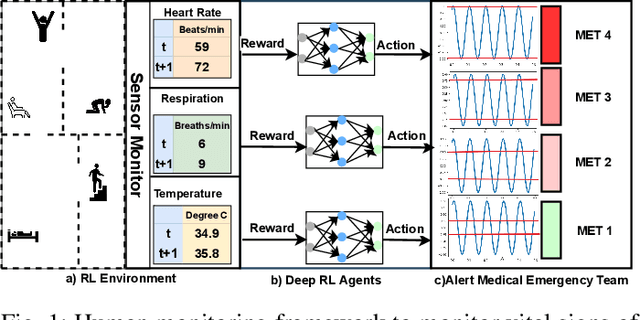

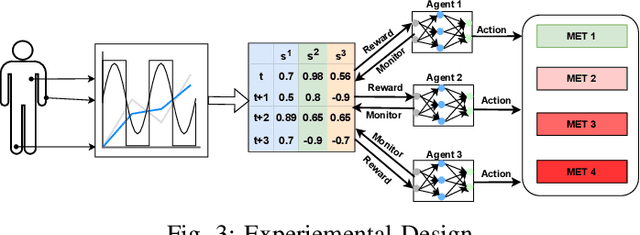

Abstract:Effective patient monitoring is vital for timely interventions and improved healthcare outcomes. Traditional monitoring systems often struggle to handle complex, dynamic environments with fluctuating vital signs, leading to delays in identifying critical conditions. To address this challenge, we propose a novel AI-driven patient monitoring framework using multi-agent deep reinforcement learning (DRL). Our approach deploys multiple learning agents, each dedicated to monitoring a specific physiological feature, such as heart rate, respiration, and temperature. These agents interact with a generic healthcare monitoring environment, learn the patients' behavior patterns, and make informed decisions to alert the corresponding Medical Emergency Teams (METs) based on the level of emergency estimated. In this study, we evaluate the performance of the proposed multi-agent DRL framework using real-world physiological and motion data from two datasets: PPG-DaLiA and WESAD. We compare the results with several baseline models, including Q-Learning, PPO, Actor-Critic, Double DQN, and DDPG, as well as monitoring frameworks like WISEML and CA-MAQL. Our experiments demonstrate that the proposed DRL approach outperforms all other baseline models, achieving more accurate monitoring of patient's vital signs. Furthermore, we conduct hyperparameter optimization to fine-tune the learning process of each agent. By optimizing hyperparameters, we enhance the learning rate and discount factor, thereby improving the agents' overall performance in monitoring patient health status. Our AI-driven patient monitoring system offers several advantages over traditional methods, including the ability to handle complex and uncertain environments, adapt to varying patient conditions, and make real-time decisions without external supervision.

Integration of Blockchain and Edge Computing in Internet of Things: A Survey

May 26, 2022Abstract:As an important technology to ensure data security, consistency, traceability, etc., blockchain has been increasingly used in Internet of Things (IoT) applications. The integration of blockchain and edge computing can further improve the resource utilization in terms of network, computing, storage, and security. This paper aims to present a survey on the integration of blockchain and edge computing. In particular, we first give an overview of blockchain and edge computing. We then present a general architecture of an integration of blockchain and edge computing system. We next study how to utilize blockchain to benefit edge computing, as well as how to use edge computing to benefit blockchain. We also discuss the issues brought by the integration of blockchain and edge computing system and solutions from perspectives of resource management, joint optimization, data management, computation offloading and security mechanism. Finally, we analyze and summarize the existing challenges posed by the integration of blockchain and edge computing system and the potential solutions in the future.

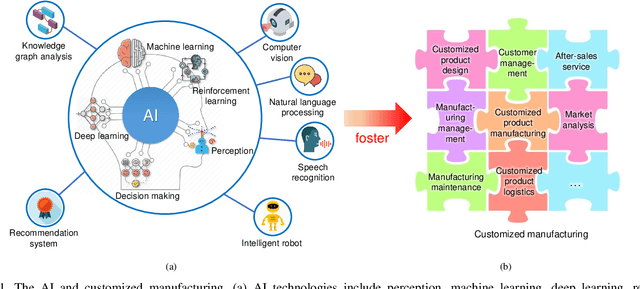

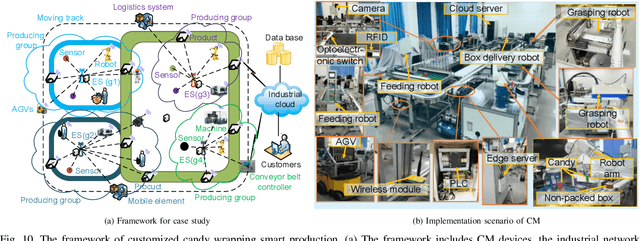

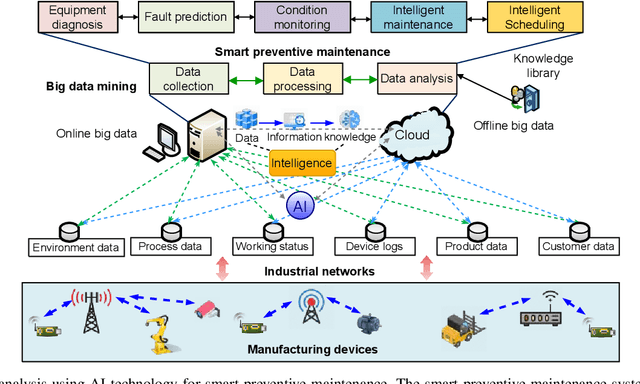

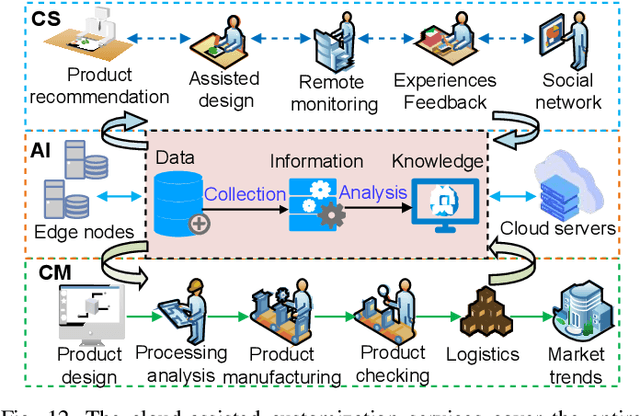

Artificial Intelligence-Driven Customized Manufacturing Factory: Key Technologies, Applications, and Challenges

Aug 07, 2021

Abstract:The traditional production paradigm of large batch production does not offer flexibility towards satisfying the requirements of individual customers. A new generation of smart factories is expected to support new multi-variety and small-batch customized production modes. For that, Artificial Intelligence (AI) is enabling higher value-added manufacturing by accelerating the integration of manufacturing and information communication technologies, including computing, communication, and control. The characteristics of a customized smart factory are to include self-perception, operations optimization, dynamic reconfiguration, and intelligent decision-making. The AI technologies will allow manufacturing systems to perceive the environment, adapt to the external needs, and extract the process knowledge, including business models, such as intelligent production, networked collaboration, and extended service models. This paper focuses on the implementation of AI in customized manufacturing (CM). The architecture of an AI-driven customized smart factory is presented. Details of intelligent manufacturing devices, intelligent information interaction, and construction of a flexible manufacturing line are showcased. The state-of-the-art AI technologies of potential use in CM, i.e., machine learning, multi-agent systems, Internet of Things, big data, and cloud-edge computing are surveyed. The AI-enabled technologies in a customized smart factory are validated with a case study of customized packaging. The experimental results have demonstrated that the AI-assisted CM offers the possibility of higher production flexibility and efficiency. Challenges and solutions related to AI in CM are also discussed.

* 21 pages, 12 figures

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge