Guo Li

HiLoRA: Hierarchical Low-Rank Adaptation for Personalized Federated Learning

Mar 03, 2026

Abstract:Vision Transformers (ViTs) have been widely adopted in vision tasks due to their strong transferability. In Federated Learning (FL), where full fine-tuning is communication heavy, Low-Rank Adaptation (LoRA) provides an efficient and communication-friendly way to adapt ViTs. However, existing LoRA-based federated tuning methods overlook latent client structures in real-world settings, limiting shared representation learning and hindering effective adaptation to unseen clients. To address this, we propose HiLoRA, a hierarchical LoRA framework that places adapters at three levels: root, cluster, and leaf, each designed to capture global, subgroup, and client-specific knowledge, respectively. Through cross-tier orthogonality and cascaded optimization, HiLoRA separates update subspaces and aligns each tier with its residual personalized objective. In particular, we develop a LoRA-Subspace Adaptive Clustering mechanism that infers latent client groups via subspace similarity analysis, thereby facilitating knowledge sharing across structurally aligned clients. Theoretically, we establish a tier-wise generalization analysis that supports HiLoRA's design. Experiments on ViT backbones with CIFAR-100 and DomainNet demonstrate consistent improvements in both personalization and generalization.
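
The three-tier placement described in the HiLoRA abstract can be pictured with a small sketch. It assumes that the root, cluster, and leaf adapters each contribute an additive low-rank update `B @ A` to a frozen weight `W0`, which is the standard LoRA form. The abstract does not state the composition rule, so the additive sum, the helper names, and the toy values below are illustrative assumptions, not the authors' method.

```python
# Illustrative sketch only: additive composition of three LoRA tiers.
# Function names and toy values are hypothetical, not taken from HiLoRA.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def add(X, Y):
    """Element-wise sum of two equally shaped matrices."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]

def effective_weight(W0, tiers):
    """Return W0 plus the sum of B @ A over the (B, A) pairs in `tiers`."""
    W = [row[:] for row in W0]
    for B, A in tiers:
        W = add(W, matmul(B, A))
    return W

W0 = [[1.0, 0.0], [0.0, 1.0]]                 # frozen backbone weight (2 x 2)
root = ([[1.0], [0.0]], [[0.5, 0.0]])         # rank-1 update shared by all clients
cluster = ([[0.0], [1.0]], [[0.0, 0.25]])     # rank-1 update shared within one group
leaf = ([[1.0], [1.0]], [[0.0, 0.0]])         # client-specific update, still zero here

W_client = effective_weight(W0, [root, cluster, leaf])
print(W_client)
```

Under this assumption, a client that has not yet trained its leaf adapter still inherits the root and cluster updates, which is one way to read the abstract's claim about adapting to unseen clients.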

MATRIX AS PLAN: Structured Logical Reasoning with Feedback-Driven Replanning

Jan 15, 2026

Abstract:As knowledge and semantics on the web grow increasingly complex, enhancing the comprehension and reasoning capabilities of Large Language Models (LLMs) has become particularly important. Chain-of-Thought (CoT) prompting has been shown to enhance the reasoning capabilities of LLMs. However, it still falls short on logical reasoning tasks that rely on symbolic expressions and strict deductive rules. Neuro-symbolic methods address this gap by enforcing formal correctness through external solvers. Yet these solvers are highly format-sensitive, and small instabilities in model outputs can lead to frequent processing failures. LLM-driven approaches avoid parsing brittleness, but they lack structured representations and process-level error-correction mechanisms. To further enhance the logical reasoning capabilities of LLMs, we propose MatrixCoT, a structured CoT framework with a matrix-based plan. Specifically, we normalize and type natural language expressions, attach explicit citation fields, and introduce a matrix-based planning method to preserve global relations among steps. The plan becomes a verifiable artifact, making execution more stable. For verification, we also add a feedback-driven replanning mechanism. Under semantic-equivalence constraints, it identifies omissions and defects, rewrites and compresses the dependency matrix, and produces a more trustworthy final answer. Experiments on five logical-reasoning benchmarks and five LLMs show that, without relying on external solvers, MatrixCoT enhances both robustness and interpretability when tackling complex symbolic reasoning tasks, while maintaining competitive performance.

Temporal-IRL: Modeling Port Congestion and Berth Scheduling with Inverse Reinforcement Learning

Jun 24, 2025

Abstract:Predicting port congestion is crucial for maintaining reliable global supply chains. Accurate forecasts enable improved shipment planning, reduce delays and costs, and optimize inventory and distribution strategies, thereby ensuring timely deliveries and enhancing supply chain resilience. To achieve accurate predictions, analyzing vessel behavior and their stay times at specific port terminals is essential, focusing particularly on berth scheduling under various conditions. Crucially, the model must capture and learn the underlying priorities and patterns of berth scheduling. Berth scheduling and planning are influenced by a range of factors, including incoming vessel size, waiting times, and the status of vessels within the port terminal. By observing historical Automatic Identification System (AIS) positions of vessels, we reconstruct berth schedules, which are subsequently utilized to determine the reward function via Inverse Reinforcement Learning (IRL). For this purpose, we modeled a specific terminal at the Port of New York/New Jersey and developed Temporal-IRL. This Temporal-IRL model learns berth scheduling to predict vessel sequencing at the terminal and estimate vessel port stay, encompassing both waiting and berthing times, to forecast port congestion. Utilizing data from Maher Terminal spanning January 2015 to September 2023, we trained and tested the model, achieving demonstrably excellent results.

FedHL: Federated Learning for Heterogeneous Low-Rank Adaptation via Unbiased Aggregation

May 24, 2025

Abstract:Federated Learning (FL) facilitates the fine-tuning of Foundation Models (FMs) using distributed data sources, with Low-Rank Adaptation (LoRA) gaining popularity due to its low communication costs and strong performance. While recent work acknowledges the benefits of heterogeneous LoRA in FL and introduces flexible algorithms to support its implementation, our theoretical analysis reveals a critical gap: existing methods lack formal convergence guarantees due to parameter truncation and biased gradient updates. Specifically, adapting client-specific LoRA ranks necessitates truncating global parameters, which introduces inherent truncation errors and leads to subsequent inaccurate gradient updates that accumulate over training rounds, ultimately degrading performance. To address these issues, we propose FedHL, a simple yet effective Federated Learning framework tailored for Heterogeneous LoRA. By leveraging the full-rank global model as a calibrated aggregation basis, FedHL eliminates the direct truncation bias from initial alignment with client-specific ranks. Furthermore, we derive the theoretically optimal aggregation weights by minimizing the gradient drift term in the convergence upper bound. Our analysis shows that FedHL guarantees an $\mathcal{O}(1/\sqrt{T})$ convergence rate, and experiments on multiple real-world datasets demonstrate a 1-3% improvement over several state-of-the-art methods.
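
The truncation error mentioned in the FedHL abstract can be made concrete with a toy example. A rank-r LoRA update `B @ A` is a sum of r rank-one terms, so fitting it to a smaller client rank discards some of them. The sketch below assumes that truncation keeps only the first `rank` columns of `B` and rows of `A`; the abstract does not specify the exact rule, and all names and numbers are hypothetical.

```python
# Illustrative sketch only: what is lost when a LoRA update is cut to a lower rank.
# The truncation rule and all values are assumptions made for this example.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def truncate(B, A, rank):
    """Keep the first `rank` columns of B and the first `rank` rows of A."""
    return [row[:rank] for row in B], A[:rank]

def subtract(X, Y):
    """Element-wise difference of two equally shaped matrices."""
    return [[a - b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]

def frobenius_sq(X):
    """Squared Frobenius norm."""
    return sum(v * v for row in X for v in row)

B = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 0.0]]        # 2 x 3, global rank 3
A = [[1.0, 0.0],
     [0.0, 1.0],
     [0.0, 3.0]]             # 3 x 2

full = matmul(B, A)                      # update seen by the global model
B2, A2 = truncate(B, A, rank=2)          # what a rank-2 client would receive
error = subtract(full, matmul(B2, A2))   # equals the dropped third rank-one term
truncation_error_sq = frobenius_sq(error)
print(error, truncation_error_sq)
```

In this toy case the client starts from a model that differs from the global one by exactly the dropped term, which is the kind of mismatch the abstract says accumulates over training rounds.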

Unlocking Multimodal Integration in EHRs: A Prompt Learning Framework for Language and Time Series Fusion

Feb 19, 2025

Abstract:Large language models (LLMs) have shown remarkable performance in vision-language tasks, but their application in the medical field remains underexplored, particularly for integrating structured time series data with unstructured clinical notes. In clinical practice, dynamic time series data such as lab test results capture critical temporal patterns, while clinical notes provide rich semantic context. Merging these modalities is challenging due to the inherent differences between continuous signals and discrete text. To bridge this gap, we introduce ProMedTS, a novel self-supervised multimodal framework that employs prompt-guided learning to unify these heterogeneous data types. Our approach leverages lightweight anomaly detection to generate anomaly captions that serve as prompts, guiding the encoding of raw time series data into informative embeddings. These embeddings are aligned with textual representations in a shared latent space, preserving fine-grained temporal nuances alongside semantic insights. Furthermore, our framework incorporates tailored self-supervised objectives to enhance both intra- and inter-modal alignment. We evaluate ProMedTS on disease diagnosis tasks using real-world datasets, and the results demonstrate that our method consistently outperforms state-of-the-art approaches.

TENPLEX: Changing Resources of Deep Learning Jobs using Parallelizable Tensor Collections

Dec 08, 2023

Abstract:Deep learning (DL) jobs use multi-dimensional parallelism, i.e., they combine data, model, and pipeline parallelism, to use large GPU clusters efficiently. This couples jobs tightly to a set of GPU devices, but jobs may experience changes to the device allocation: (i) resource elasticity during training adds or removes devices; (ii) hardware maintenance may require redeployment on different devices; and (iii) device failures force jobs to run with fewer devices. Current DL frameworks lack support for these scenarios, as they cannot change the multi-dimensional parallelism of an already-running job in an efficient and model-independent way. We describe Tenplex, a state management library for DL frameworks that enables jobs to change the GPU allocation and job parallelism at runtime. Tenplex achieves this by externalizing the DL job state during training as a parallelizable tensor collection (PTC). When the GPU allocation for the DL job changes, Tenplex uses the PTC to transform the DL job state: for the dataset state, Tenplex repartitions it under data parallelism and exposes it to workers through a virtual file system; for the model state, Tenplex obtains it as partitioned checkpoints and transforms them to reflect the new parallelization configuration. For efficiency, these PTC transformations are executed in parallel with a minimum amount of data movement between devices and workers. Our experiments show that Tenplex enables DL jobs to support dynamic parallelization with low overhead.
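
The model-state transformation in the Tenplex abstract amounts to re-slicing partitioned tensors when the degree of parallelism changes. The sketch below is a plain-Python illustration under simplifying assumptions, not Tenplex's API: it treats a tensor as a flat sequence split into contiguous shards and computes, for a new shard count, which ranges of the old shards each new shard must read. The function names are hypothetical.

```python
# Illustrative sketch only: planning a re-partition of a 1-D sharded tensor.
# This is not Tenplex's interface; names and layout are assumptions.

def shard_bounds(length, parts):
    """Contiguous [start, end) ranges that split `length` items into `parts` shards."""
    base, extra = divmod(length, parts)
    bounds, start = [], 0
    for i in range(parts):
        end = start + base + (1 if i < extra else 0)
        bounds.append((start, end))
        start = end
    return bounds

def repartition_plan(length, old_parts, new_parts):
    """For each new shard, list the (old_shard_index, start, end) ranges it needs.

    Offsets are global indices into the unsharded tensor.
    """
    old = shard_bounds(length, old_parts)
    plan = []
    for new_start, new_end in shard_bounds(length, new_parts):
        pieces = []
        for idx, (old_start, old_end) in enumerate(old):
            lo, hi = max(new_start, old_start), min(new_end, old_end)
            if lo < hi:  # the two ranges overlap
                pieces.append((idx, lo, hi))
        plan.append(pieces)
    return plan

# Going from 4 workers to 3 for a tensor of 12 elements.
plan = repartition_plan(length=12, old_parts=4, new_parts=3)
for new_idx, pieces in enumerate(plan):
    print(new_idx, pieces)
```

A plan of this shape makes the required data movement explicit: each new shard only reads the overlapping ranges, and the per-shard entries are independent, so they could be fetched in parallel.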

Quiver: Supporting GPUs for Low-Latency, High-Throughput GNN Serving with Workload Awareness

May 18, 2023

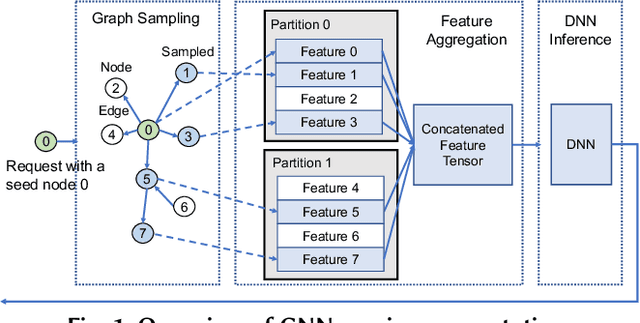

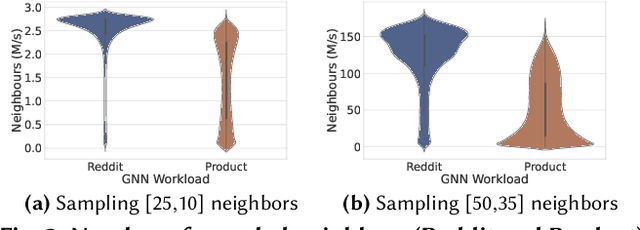

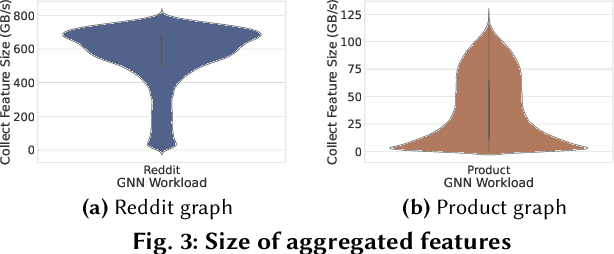

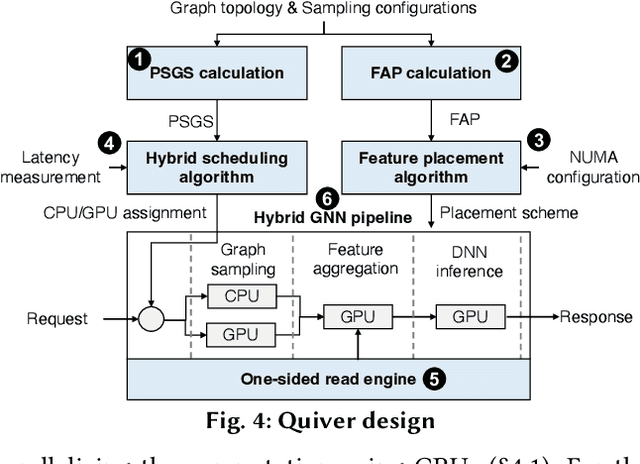

Abstract:Systems for serving inference requests on graph neural networks (GNN) must combine low latency with high throughput, but they face irregular computation due to skew in the number of sampled graph nodes and aggregated GNN features. This makes it challenging to exploit GPUs effectively: using GPUs to sample only a few graph nodes yields lower performance than CPU-based sampling; and aggregating many features exhibits high data movement costs between GPUs and CPUs. Therefore, current GNN serving systems use CPUs for graph sampling and feature aggregation, limiting throughput. We describe Quiver, a distributed GPU-based GNN serving system with low latency and high throughput. Quiver's key idea is to exploit workload metrics for predicting the irregular computation of GNN requests, and governing the use of GPUs for graph sampling and feature aggregation: (1) for graph sampling, Quiver calculates the probabilistic sampled graph size, a metric that predicts the degree of parallelism in graph sampling. Quiver uses this metric to assign sampling tasks to GPUs only when the performance gains surpass CPU-based sampling; and (2) for feature aggregation, Quiver relies on the feature access probability to decide which features to partition and replicate across a distributed GPU NUMA topology. We show that Quiver achieves up to 35 times lower latency with an 8 times higher throughput compared to state-of-the-art GNN approaches (DGL and PyG).

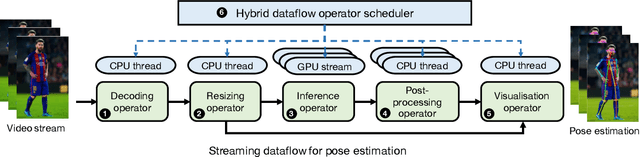

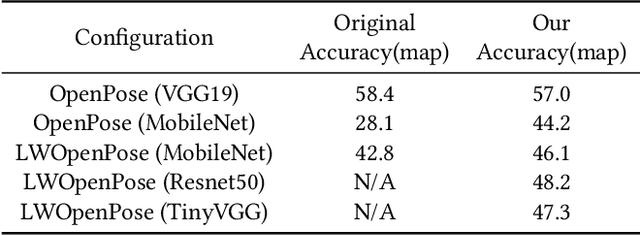

Fast and Flexible Human Pose Estimation with HyperPose

Aug 26, 2021

Abstract:Estimating human pose is an important yet challenging task in multimedia applications. Existing pose estimation libraries target reproducing standard pose estimation algorithms. When it comes to customising these algorithms for real-world applications, none of the existing libraries can offer both the flexibility of developing custom pose estimation algorithms and the high performance of executing these algorithms on commodity devices. In this paper, we introduce HyperPose, a novel flexible and high-performance pose estimation library. HyperPose provides expressive Python APIs that enable developers to easily customise pose estimation algorithms for their applications. It further provides a model inference engine highly optimised for real-time pose estimation. This engine can dynamically dispatch carefully designed pose estimation tasks to CPUs and GPUs, thus automatically achieving high utilisation of hardware resources irrespective of deployment environments. Extensive evaluation results show that HyperPose can achieve up to 3.1x~7.3x higher pose estimation throughput compared to state-of-the-art pose estimation libraries without compromising estimation accuracy. As of 2021, HyperPose has received over 1000 stars on GitHub and attracted users from both industry and academia.

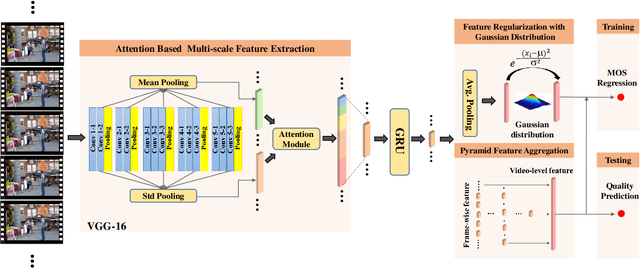

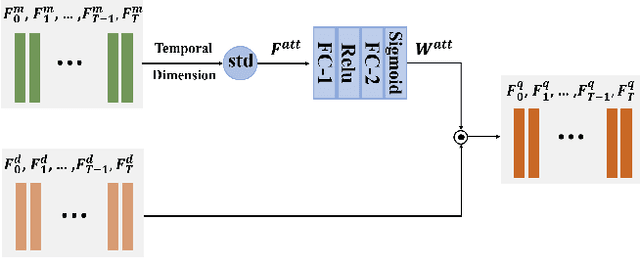

Learning Generalized Spatial-Temporal Deep Feature Representation for No-Reference Video Quality Assessment

Dec 27, 2020

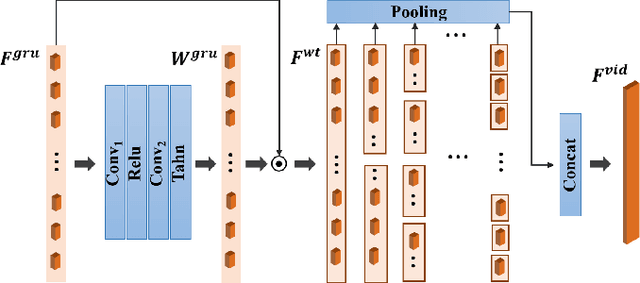

Abstract:In this work, we propose a no-reference video quality assessment method, aiming to achieve high generalization capability in cross-content, -resolution and -frame rate quality prediction. In particular, we evaluate the quality of a video by learning effective feature representations in the spatial-temporal domain. In the spatial domain, to tackle the resolution and content variations, we impose Gaussian distribution constraints on the quality features. The unified distribution can significantly reduce the domain gap between different video samples, resulting in a more generalized quality feature representation. Along the temporal dimension, inspired by the mechanism of visual perception, we propose a pyramid temporal aggregation module that uses short-term and long-term memory to aggregate the frame-level quality. Experiments show that our method outperforms the state-of-the-art methods on cross-dataset settings, and achieves comparable performance on intra-dataset configurations, demonstrating the high generalization capability of the proposed method.

TailorGAN: Making User-Defined Fashion Designs

Jan 20, 2020

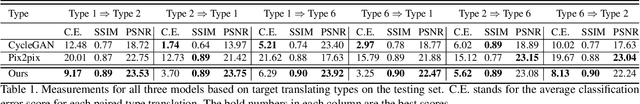

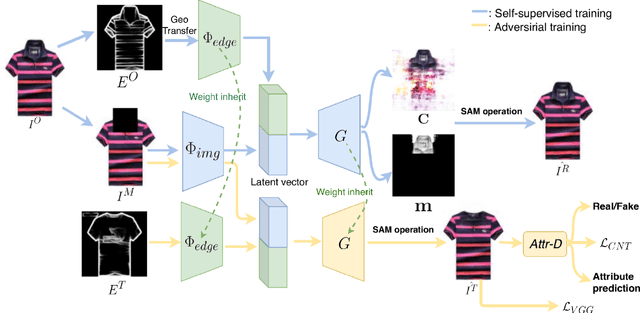

Abstract:Attribute editing has become an important and emerging topic in computer vision. In this paper, we consider the following task: given a reference garment image A and another image B with a target attribute (collar/sleeve), generate a photo-realistic image that combines the texture from reference A and the new attribute from reference B. The highly convoluted attributes and the lack of paired data are the main challenges of the task. To overcome those limitations, we propose a novel self-supervised model to synthesize garment images with disentangled attributes (e.g., collar and sleeves) without paired data. Our method consists of a reconstruction learning step and an adversarial learning step. The model learns texture and location information through reconstruction learning. Its capability is then generalized to single-attribute manipulation through adversarial learning. Meanwhile, we compose a new dataset, named GarmentSet, with annotation of landmarks of collars and sleeves on clean garment images. Extensive experiments on this dataset and real-world samples demonstrate that our method can synthesize much better results than the state-of-the-art methods in both quantitative and qualitative comparisons.

* fashion
