Danfeng Hong

Smart Transfer: Leveraging Vision Foundation Model for Rapid Building Damage Mapping with Post-Earthquake VHR Imagery

Apr 03, 2026Abstract:Living in a changing climate, human society now faces more frequent and severe natural disasters than ever before. As a consequence, rapid disaster response during the "Golden 72 Hours" of search and rescue becomes a vital humanitarian necessity and community concern. However, traditional disaster damage surveys routinely fail to generalize across distinct urban morphologies and new disaster events. Effective damage mapping typically requires exhaustive and time-consuming manual data annotation. To address this issue, we introduce Smart Transfer, a novel Geospatial Artificial Intelligence (GeoAI) framework, leveraging state-of-the-art vision Foundation Models (FMs) for rapid building damage mapping with post-earthquake Very High Resolution (VHR) imagery. Specifically, we design two novel model transfer strategies: first, Pixel-wise Clustering (PC), ensuring robust prototype-level global feature alignment; second, a Distance-Penalized Triplet (DPT), integrating patch-level spatial autocorrelation patterns by assigning stronger penalties to semantically inconsistent yet spatially adjacent patches. Extensive experiments and ablations from the recent 2023 Turkiye-Syria earthquake show promising performance in multiple cross-region transfer settings, namely Leave One Domain Out (LODO) and Specific Source Domain Combination (SSDC). Moreover, Smart Transfer provides a scalable, automated GeoAI solution to accelerate building damage mapping and support rapid disaster response, offering new opportunities to enhance disaster resilience in climate-vulnerable regions and communities. The data and code are publicly available at https://github.com/ai4city-hkust/SmartTransfer.

Foundation Models in Remote Sensing: Evolving from Unimodality to Multimodality

Mar 01, 2026Abstract:Remote sensing (RS) techniques are increasingly crucial for deepening our understanding of the planet. As the volume and diversity of RS data continue to grow exponentially, there is an urgent need for advanced data modeling and understanding capabilities to manage and interpret these vast datasets effectively. Foundation models present significant new growth opportunities and immense potential to revolutionize the RS field. In this paper, we conduct a comprehensive technical survey on foundation models in RS, offering a brand-new perspective by exploring their evolution from unimodality to multimodality. We hope this work serves as a valuable entry point for researchers interested in both foundation models and RS and helps them launch new projects or explore new research topics in this rapidly evolving area. This survey addresses the following three key questions: What are foundation models in RS? Why are foundation models needed in RS? How can we effectively guide junior researchers in gaining a comprehensive and practical understanding of foundation models in RS applications? More specifically, we begin by outlining the background and motivation, emphasizing the importance of foundation models in RS. We then review existing foundation models in RS, systematically categorizing them into unimodal and multimodal approaches. Additionally, we provide a tutorial-like section to guide researchers, especially beginners, on how to train foundation models in RS and apply them to real-world tasks. The survey aims to equip researchers in RS with a deeper and more efficient understanding of foundation models, enabling them to get started easily and effectively apply these models across various RS applications.

Vision-Language Model Purified Semi-Supervised Semantic Segmentation for Remote Sensing Images

Jan 30, 2026Abstract:The semi-supervised semantic segmentation (S4) can learn rich visual knowledge from low-cost unlabeled images. However, traditional S4 architectures all face the challenge of low-quality pseudo-labels, especially for the teacher-student framework.We propose a novel SemiEarth model that introduces vision-language models (VLMs) to address the S4 issues for the remote sensing (RS) domain. Specifically, we invent a VLM pseudo-label purifying (VLM-PP) structure to purify the teacher network's pseudo-labels, achieving substantial improvements. Especially in multi-class boundary regions of RS images, the VLM-PP module can significantly improve the quality of pseudo-labels generated by the teacher, thereby correctly guiding the student model's learning. Moreover, since VLM-PP equips VLMs with open-world capabilities and is independent of the S4 architecture, it can correct mispredicted categories in low-confidence pseudo-labels whenever a discrepancy arises between its prediction and the pseudo-label. We conducted extensive experiments on multiple RS datasets, which demonstrate that our SemiEarth achieves SOTA performance. More importantly, unlike previous SOTA RS S4 methods, our model not only achieves excellent performance but also offers good interpretability. The code is released at https://github.com/wangshanwen001/SemiEarth.

KANO: Kolmogorov-Arnold Neural Operator for Image Super-Resolution

Dec 28, 2025Abstract:The highly nonlinear degradation process, complex physical interactions, and various sources of uncertainty render single-image Super-resolution (SR) a particularly challenging task. Existing interpretable SR approaches, whether based on prior learning or deep unfolding optimization frameworks, typically rely on black-box deep networks to model latent variables, which leaves the degradation process largely unknown and uncontrollable. Inspired by the Kolmogorov-Arnold theorem (KAT), we for the first time propose a novel interpretable operator, termed Kolmogorov-Arnold Neural Operator (KANO), with the application to image SR. KANO provides a transparent and structured representation of the latent degradation fitting process. Specifically, we employ an additive structure composed of a finite number of B-spline functions to approximate continuous spectral curves in a piecewise fashion. By learning and optimizing the shape parameters of these spline functions within defined intervals, our KANO accurately captures key spectral characteristics, such as local linear trends and the peak-valley structures at nonlinear inflection points, thereby endowing SR results with physical interpretability. Furthermore, through theoretical modeling and experimental evaluations across natural images, aerial photographs, and satellite remote sensing data, we systematically compare multilayer perceptrons (MLPs) and Kolmogorov-Arnold networks (KANs) in handling complex sequence fitting tasks. This comparative study elucidates the respective advantages and limitations of these models in characterizing intricate degradation mechanisms, offering valuable insights for the development of interpretable SR techniques.

Any-Optical-Model: A Universal Foundation Model for Optical Remote Sensing

Dec 19, 2025

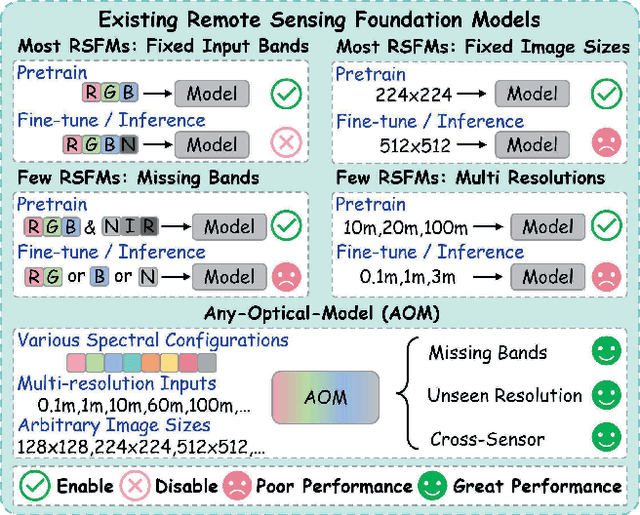

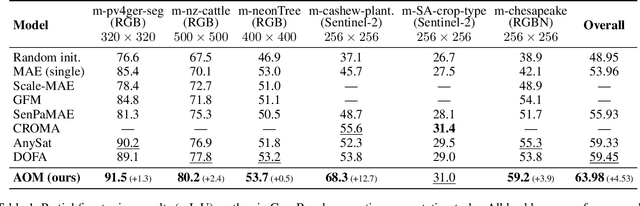

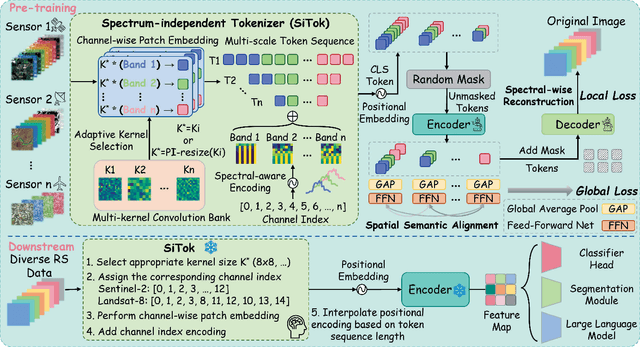

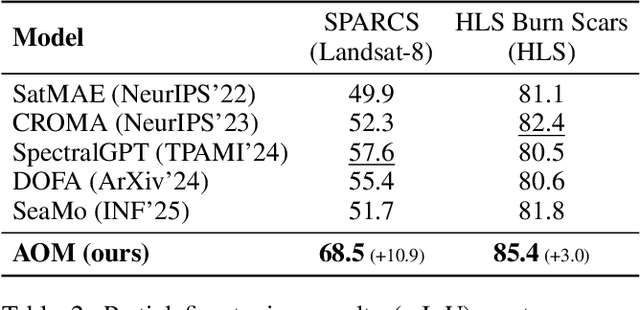

Abstract:Optical satellites, with their diverse band layouts and ground sampling distances, supply indispensable evidence for tasks ranging from ecosystem surveillance to emergency response. However, significant discrepancies in band composition and spatial resolution across different optical sensors present major challenges for existing Remote Sensing Foundation Models (RSFMs). These models are typically pretrained on fixed band configurations and resolutions, making them vulnerable to real world scenarios involving missing bands, cross sensor fusion, and unseen spatial scales, thereby limiting their generalization and practical deployment. To address these limitations, we propose Any Optical Model (AOM), a universal RSFM explicitly designed to accommodate arbitrary band compositions, sensor types, and resolution scales. To preserve distinctive spectral characteristics even when bands are missing or newly introduced, AOM introduces a spectrum-independent tokenizer that assigns each channel a dedicated band embedding, enabling explicit encoding of spectral identity. To effectively capture texture and contextual patterns from sub-meter to hundred-meter imagery, we design a multi-scale adaptive patch embedding mechanism that dynamically modulates the receptive field. Furthermore, to maintain global semantic consistency across varying resolutions, AOM incorporates a multi-scale semantic alignment mechanism alongside a channel-wise self-supervised masking and reconstruction pretraining strategy that jointly models spectral-spatial relationships. Extensive experiments on over 10 public datasets, including those from Sentinel-2, Landsat, and HLS, demonstrate that AOM consistently achieves state-of-the-art (SOTA) performance under challenging conditions such as band missing, cross sensor, and cross resolution settings.

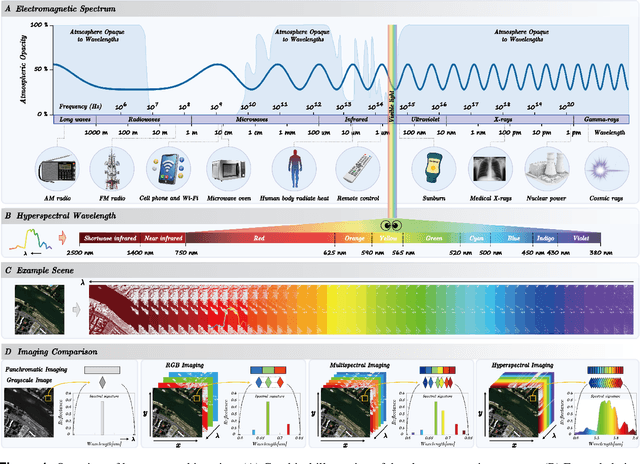

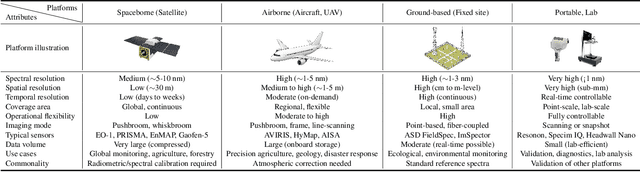

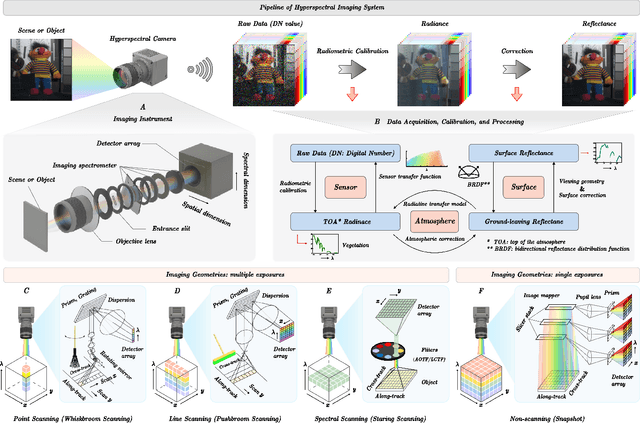

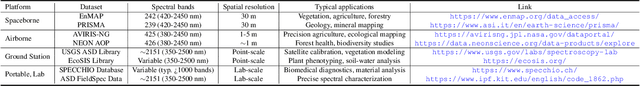

Hyperspectral Imaging

Aug 11, 2025

Abstract:Hyperspectral imaging (HSI) is an advanced sensing modality that simultaneously captures spatial and spectral information, enabling non-invasive, label-free analysis of material, chemical, and biological properties. This Primer presents a comprehensive overview of HSI, from the underlying physical principles and sensor architectures to key steps in data acquisition, calibration, and correction. We summarize common data structures and highlight classical and modern analysis methods, including dimensionality reduction, classification, spectral unmixing, and AI-driven techniques such as deep learning. Representative applications across Earth observation, precision agriculture, biomedicine, industrial inspection, cultural heritage, and security are also discussed, emphasizing HSI's ability to uncover sub-visual features for advanced monitoring, diagnostics, and decision-making. Persistent challenges, such as hardware trade-offs, acquisition variability, and the complexity of high-dimensional data, are examined alongside emerging solutions, including computational imaging, physics-informed modeling, cross-modal fusion, and self-supervised learning. Best practices for dataset sharing, reproducibility, and metadata documentation are further highlighted to support transparency and reuse. Looking ahead, we explore future directions toward scalable, real-time, and embedded HSI systems, driven by sensor miniaturization, self-supervised learning, and foundation models. As HSI evolves into a general-purpose, cross-disciplinary platform, it holds promise for transformative applications in science, technology, and society.

Diffusion Model in Hyperspectral Image Processing and Analysis: A Review

May 16, 2025Abstract:Hyperspectral image processing and analysis has important application value in remote sensing, agriculture and environmental monitoring, but its high dimensionality, data redundancy and noise interference etc. bring great challenges to the analysis. Traditional models have limitations in dealing with these complex data, and it is difficult to meet the increasing demand for analysis. In recent years, Diffusion Model, as an emerging generative model, has shown unique advantages in hyperspectral image processing. By simulating the diffusion process of data in time, the Diffusion Model can effectively process high-dimensional data, generate high-quality samples, and perform well in denoising and data enhancement. In this paper, we review the recent research advances in diffusion modeling for hyperspectral image processing and analysis, and discuss its applications in tasks such as high-dimensional data processing, noise removal, classification, and anomaly detection. The performance of diffusion-based models on image processing is compared and the challenges are summarized. It is shown that the diffusion model can significantly improve the accuracy and efficiency of hyperspectral image analysis, providing a new direction for future research.

Joint Super-Resolution and Segmentation for 1-m Impervious Surface Area Mapping in China's Yangtze River Economic Belt

May 08, 2025Abstract:We propose a novel joint framework by integrating super-resolution and segmentation, called JointSeg, which enables the generation of 1-meter ISA maps directly from freely available Sentinel-2 imagery. JointSeg was trained on multimodal cross-resolution inputs, offering a scalable and affordable alternative to traditional approaches. This synergistic design enables gradual resolution enhancement from 10m to 1m while preserving fine-grained spatial textures, and ensures high classification fidelity through effective cross-scale feature fusion. This method has been successfully applied to the Yangtze River Economic Belt (YREB), a region characterized by complex urban-rural patterns and diverse topography. As a result, a comprehensive ISA mapping product for 2021, referred to as ISA-1, was generated, covering an area of over 2.2 million square kilometers. Quantitative comparisons against the 10m ESA WorldCover and other benchmark products reveal that ISA-1 achieves an F1-score of 85.71%, outperforming bilinear-interpolation-based segmentation by 9.5%, and surpassing other ISA datasets by 21.43%-61.07%. In densely urbanized areas (e.g., Suzhou, Nanjing), ISA-1 reduces ISA overestimation through improved discrimination of green spaces and water bodies. Conversely, in mountainous regions (e.g., Ganzi, Zhaotong), it identifies significantly more ISA due to its enhanced ability to detect fragmented anthropogenic features such as rural roads and sparse settlements, demonstrating its robustness across diverse landscapes. Moreover, we present biennial ISA maps from 2017 to 2023, capturing spatiotemporal urbanization dynamics across representative cities. The results highlight distinct regional growth patterns: rapid expansion in upstream cities, moderate growth in midstream regions, and saturation in downstream metropolitan areas.

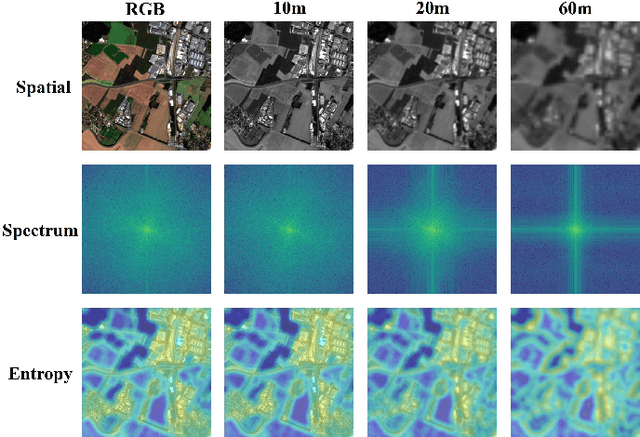

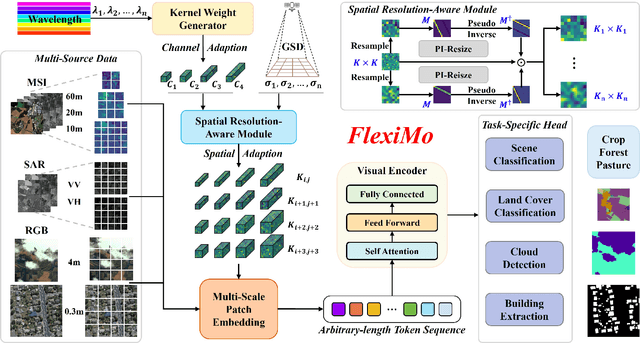

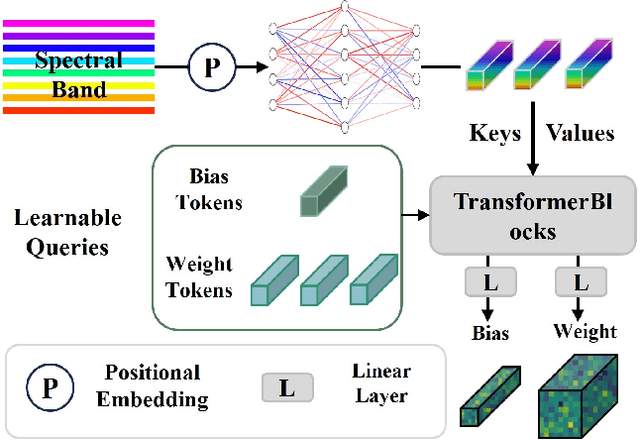

FlexiMo: A Flexible Remote Sensing Foundation Model

Mar 31, 2025

Abstract:The rapid expansion of multi-source satellite imagery drives innovation in Earth observation, opening unprecedented opportunities for Remote Sensing Foundation Models to harness diverse data. However, many existing models remain constrained by fixed spatial resolutions and patch sizes, limiting their ability to fully exploit the heterogeneous spatial characteristics inherent in satellite imagery. To address these challenges, we propose FlexiMo, a flexible remote sensing foundation model that endows the pre-trained model with the flexibility to adapt to arbitrary spatial resolutions. Central to FlexiMo is a spatial resolution-aware module that employs a parameter-free alignment embedding mechanism to dynamically recalibrate patch embeddings based on the input image's resolution and dimensions. This design not only preserves critical token characteristics and ensures multi-scale feature fidelity but also enables efficient feature extraction without requiring modifications to the underlying network architecture. In addition, FlexiMo incorporates a lightweight channel adaptation module that leverages prior spectral information from sensors. This mechanism allows the model to process images with varying numbers of channels while maintaining the data's intrinsic physical properties. Extensive experiments on diverse multimodal, multi-resolution, and multi-scale datasets demonstrate that FlexiMo significantly enhances model generalization and robustness. In particular, our method achieves outstanding performance across a range of downstream tasks, including scene classification, land cover classification, urban building segmentation, and cloud detection. By enabling parameter-efficient and physically consistent adaptation, FlexiMo paves the way for more adaptable and effective foundation models in real-world remote sensing applications.

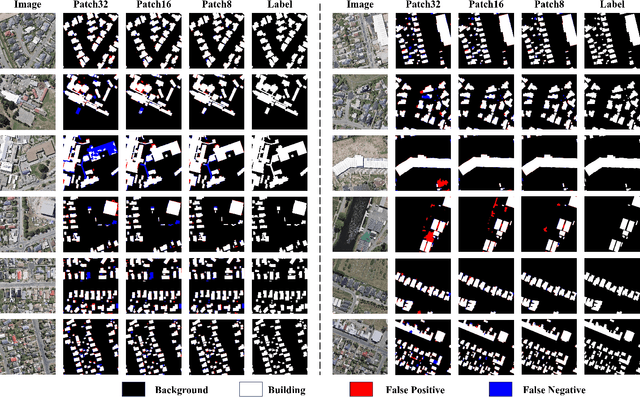

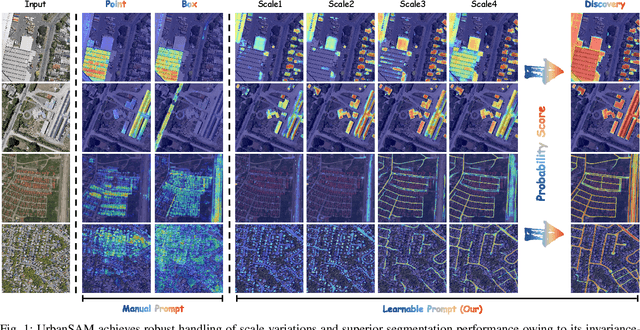

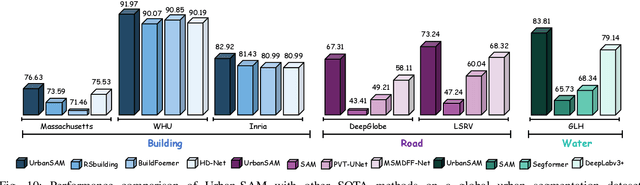

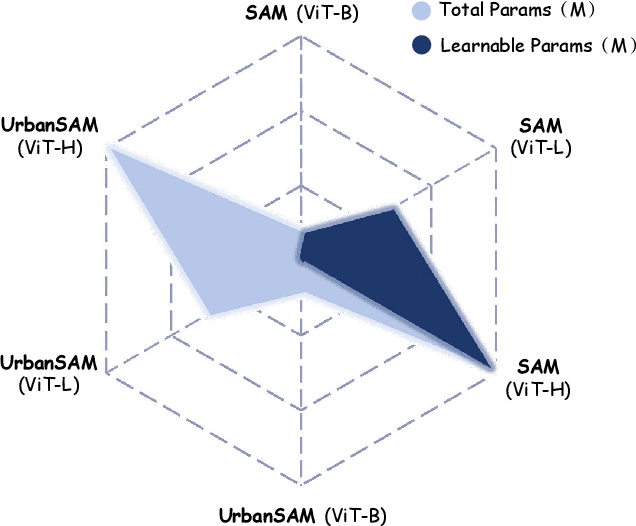

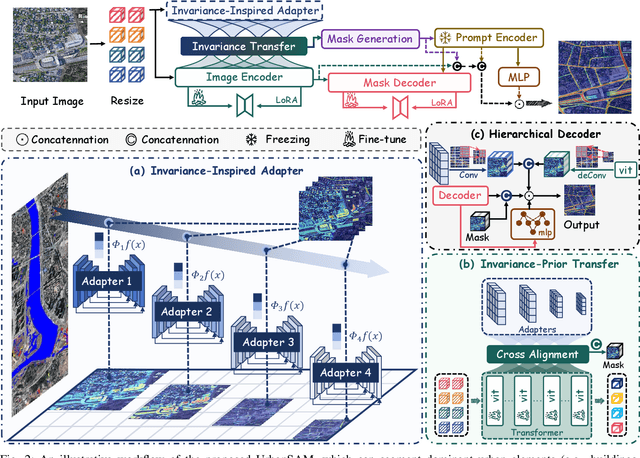

UrbanSAM: Learning Invariance-Inspired Adapters for Segment Anything Models in Urban Construction

Feb 21, 2025

Abstract:Object extraction and segmentation from remote sensing (RS) images is a critical yet challenging task in urban environment monitoring. Urban morphology is inherently complex, with irregular objects of diverse shapes and varying scales. These challenges are amplified by heterogeneity and scale disparities across RS data sources, including sensors, platforms, and modalities, making accurate object segmentation particularly demanding. While the Segment Anything Model (SAM) has shown significant potential in segmenting complex scenes, its performance in handling form-varying objects remains limited due to manual-interactive prompting. To this end, we propose UrbanSAM, a customized version of SAM specifically designed to analyze complex urban environments while tackling scaling effects from remotely sensed observations. Inspired by multi-resolution analysis (MRA) theory, UrbanSAM incorporates a novel learnable prompter equipped with a Uscaling-Adapter that adheres to the invariance criterion, enabling the model to capture multiscale contextual information of objects and adapt to arbitrary scale variations with theoretical guarantees. Furthermore, features from the Uscaling-Adapter and the trunk encoder are aligned through a masked cross-attention operation, allowing the trunk encoder to inherit the adapter's multiscale aggregation capability. This synergy enhances the segmentation performance, resulting in more powerful and accurate outputs, supported by the learned adapter. Extensive experimental results demonstrate the flexibility and superior segmentation performance of the proposed UrbanSAM on a global-scale dataset, encompassing scale-varying urban objects such as buildings, roads, and water.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge