Alejandro Martin-Gomez

DualVision ArthroNav: Investigating Opportunities to Enhance Localization and Reconstruction in Image-based Arthroscopy Navigation via External Cameras

Nov 12, 2025

Abstract:Arthroscopic procedures can greatly benefit from navigation systems that enhance spatial awareness, depth perception, and field of view. However, existing optical tracking solutions impose strict workspace constraints and disrupt surgical workflow. Vision-based alternatives, though less invasive, often rely solely on the monocular arthroscope camera, making them prone to drift, scale ambiguity, and sensitivity to rapid motion or occlusion. We propose DualVision ArthroNav, a multi-camera arthroscopy navigation system that integrates an external camera rigidly mounted on the arthroscope. The external camera provides stable visual odometry and absolute localization, while the monocular arthroscope video enables dense scene reconstruction. By combining these complementary views, our system resolves the scale ambiguity and long-term drift inherent in monocular SLAM and ensures robust relocalization. Experiments demonstrate that our system effectively compensates for calibration errors, achieving an average absolute trajectory error of 1.09 mm. The reconstructed scenes reach an average target registration error of 2.16 mm, with high visual fidelity (SSIM = 0.69, PSNR = 22.19). These results indicate that our system provides a practical and cost-efficient solution for arthroscopic navigation, bridging the gap between optical tracking and purely vision-based systems, and paving the way toward clinically deployable, fully vision-based arthroscopic guidance.

An Image-Guided Robotic System for Transcranial Magnetic Stimulation: System Development and Experimental Evaluation

Oct 20, 2024

Abstract:Transcranial magnetic stimulation (TMS) is a noninvasive medical procedure that can modulate brain activity, and it is widely used in neuroscience and neurology research. Compared to manual operators, robots may improve the outcome of TMS due to their superior accuracy and repeatability. However, there has not been a widely accepted standard protocol for performing robotic TMS using fine-segmented brain images, resulting in arbitrary planned angles with respect to the true boundaries of the modulated cortex. Given that the recent study in TMS simulation suggests a noticeable difference in outcomes when using different anatomical details, cortical shape should play a more significant role in deciding the optimal TMS coil pose. In this work, we introduce an image-guided robotic system for TMS that focuses on (1) establishing standardized planning methods and heuristics to define a reference (true zero) for the coil poses and (2) solving the issue that the manual coil placement requires expert hand-eye coordination which often leading to low repeatability of the experiments. To validate the design of our robotic system, a phantom study and a preliminary human subject study were performed. Our results show that the robotic method can half the positional error and improve the rotational accuracy by up to two orders of magnitude. The accuracy is proven to be repeatable because the standard deviation of multiple trials is lowered by an order of magnitude. The improved actuation accuracy successfully translates to the TMS application, with a higher and more stable induced voltage in magnetic field sensors.

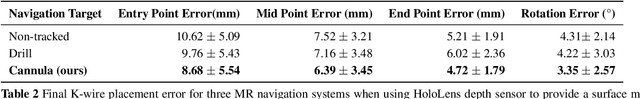

StraightTrack: Towards Mixed Reality Navigation System for Percutaneous K-wire Insertion

Oct 02, 2024

Abstract:In percutaneous pelvic trauma surgery, accurate placement of Kirschner wires (K-wires) is crucial to ensure effective fracture fixation and avoid complications due to breaching the cortical bone along an unsuitable trajectory. Surgical navigation via mixed reality (MR) can help achieve precise wire placement in a low-profile form factor. Current approaches in this domain are as yet unsuitable for real-world deployment because they fall short of guaranteeing accurate visual feedback due to uncontrolled bending of the wire. To ensure accurate feedback, we introduce StraightTrack, an MR navigation system designed for percutaneous wire placement in complex anatomy. StraightTrack features a marker body equipped with a rigid access cannula that mitigates wire bending due to interactions with soft tissue and a covered bony surface. Integrated with an Optical See-Through Head-Mounted Display (OST HMD) capable of tracking the cannula body, StraightTrack offers real-time 3D visualization and guidance without external trackers, which are prone to losing line-of-sight. In phantom experiments with two experienced orthopedic surgeons, StraightTrack improves wire placement accuracy, achieving the ideal trajectory within $5.26 \pm 2.29$ mm and $2.88 \pm 1.49$ degree, compared to over 12.08 mm and 4.07 degree for comparable methods. As MR navigation systems continue to mature, StraightTrack realizes their potential for internal fracture fixation and other percutaneous orthopedic procedures.

Uncertainty-Aware Shape Estimation of a Surgical Continuum Manipulator in Constrained Environments using Fiber Bragg Grating Sensors

May 11, 2024

Abstract:Continuum Dexterous Manipulators (CDMs) are well-suited tools for minimally invasive surgery due to their inherent dexterity and reachability. Nonetheless, their flexible structure and non-linear curvature pose significant challenges for shape-based feedback control. The use of Fiber Bragg Grating (FBG) sensors for shape sensing has shown great potential in estimating the CDM's tip position and subsequently reconstructing the shape using optimization algorithms. This optimization, however, is under-constrained and may be ill-posed for complex shapes, falling into local minima. In this work, we introduce a novel method capable of directly estimating a CDM's shape from FBG sensor wavelengths using a deep neural network. In addition, we propose the integration of uncertainty estimation to address the critical issue of uncertainty in neural network predictions. Neural network predictions are unreliable when the input sample is outside the training distribution or corrupted by noise. Recognizing such deviations is crucial when integrating neural networks within surgical robotics, as inaccurate estimations can pose serious risks to the patient. We present a robust method that not only improves the precision upon existing techniques for FBG-based shape estimation but also incorporates a mechanism to quantify the models' confidence through uncertainty estimation. We validate the uncertainty estimation through extensive experiments, demonstrating its effectiveness and reliability on out-of-distribution (OOD) data, adding an additional layer of safety and precision to minimally invasive surgical robotics.

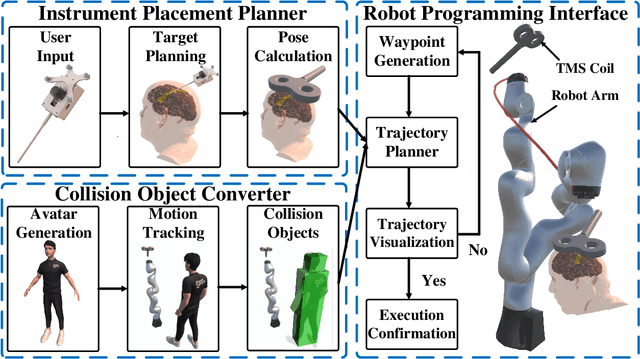

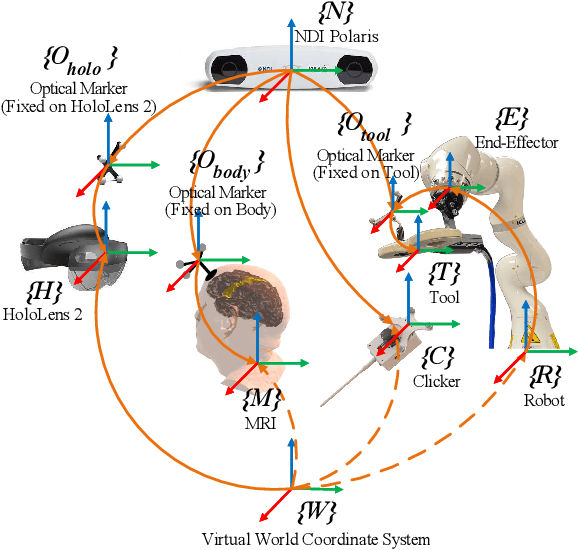

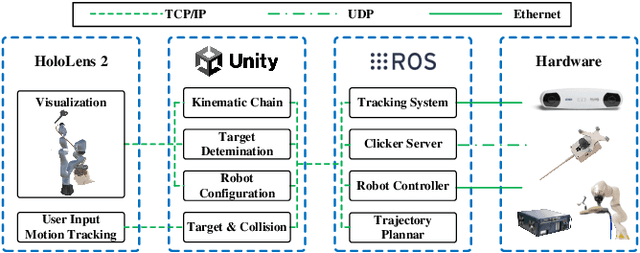

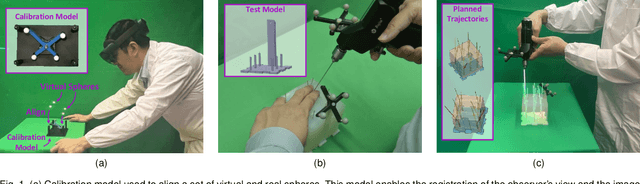

On the Fly Robotic-Assisted Medical Instrument Planning and Execution Using Mixed Reality

Apr 08, 2024

Abstract:Robotic-assisted medical systems (RAMS) have gained significant attention for their advantages in alleviating surgeons' fatigue and improving patients' outcomes. These systems comprise a range of human-computer interactions, including medical scene monitoring, anatomical target planning, and robot manipulation. However, despite its versatility and effectiveness, RAMS demands expertise in robotics, leading to a high learning cost for the operator. In this work, we introduce a novel framework using mixed reality technologies to ease the use of RAMS. The proposed framework achieves real-time planning and execution of medical instruments by providing 3D anatomical image overlay, human-robot collision detection, and robot programming interface. These features, integrated with an easy-to-use calibration method for head-mounted display, improve the effectiveness of human-robot interactions. To assess the feasibility of the framework, two medical applications are presented in this work: 1) coil placement during transcranial magnetic stimulation and 2) drill and injector device positioning during femoroplasty. Results from these use cases demonstrate its potential to extend to a wider range of medical scenarios.

Segment Any Medical Model Extended

Mar 26, 2024Abstract:The Segment Anything Model (SAM) has drawn significant attention from researchers who work on medical image segmentation because of its generalizability. However, researchers have found that SAM may have limited performance on medical images compared to state-of-the-art non-foundation models. Regardless, the community sees potential in extending, fine-tuning, modifying, and evaluating SAM for analysis of medical imaging. An increasing number of works have been published focusing on the mentioned four directions, where variants of SAM are proposed. To this end, a unified platform helps push the boundary of the foundation model for medical images, facilitating the use, modification, and validation of SAM and its variants in medical image segmentation. In this work, we introduce SAMM Extended (SAMME), a platform that integrates new SAM variant models, adopts faster communication protocols, accommodates new interactive modes, and allows for fine-tuning of subcomponents of the models. These features can expand the potential of foundation models like SAM, and the results can be translated to applications such as image-guided therapy, mixed reality interaction, robotic navigation, and data augmentation.

Robotic Navigation Autonomy for Subretinal Injection via Intelligent Real-Time Virtual iOCT Volume Slicing

Jan 17, 2023Abstract:In the last decade, various robotic platforms have been introduced that could support delicate retinal surgeries. Concurrently, to provide semantic understanding of the surgical area, recent advances have enabled microscope-integrated intraoperative Optical Coherent Tomography (iOCT) with high-resolution 3D imaging at near video rate. The combination of robotics and semantic understanding enables task autonomy in robotic retinal surgery, such as for subretinal injection. This procedure requires precise needle insertion for best treatment outcomes. However, merging robotic systems with iOCT introduces new challenges. These include, but are not limited to high demands on data processing rates and dynamic registration of these systems during the procedure. In this work, we propose a framework for autonomous robotic navigation for subretinal injection, based on intelligent real-time processing of iOCT volumes. Our method consists of an instrument pose estimation method, an online registration between the robotic and the iOCT system, and trajectory planning tailored for navigation to an injection target. We also introduce intelligent virtual B-scans, a volume slicing approach for rapid instrument pose estimation, which is enabled by Convolutional Neural Networks (CNNs). Our experiments on ex-vivo porcine eyes demonstrate the precision and repeatability of the method. Finally, we discuss identified challenges in this work and suggest potential solutions to further the development of such systems.

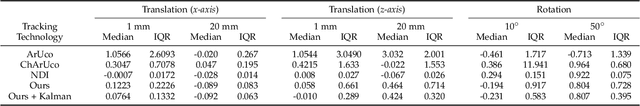

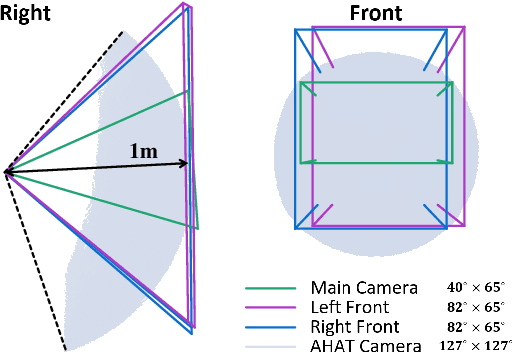

STTAR: Surgical Tool Tracking using off-the-shelf Augmented Reality Head-Mounted Displays

Aug 17, 2022

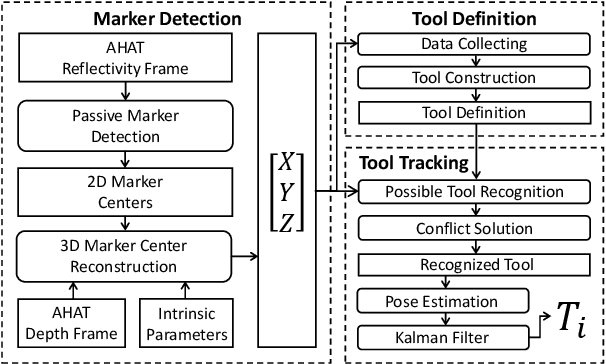

Abstract:The use of Augmented Reality (AR) for navigation purposes has shown beneficial in assisting physicians during the performance of surgical procedures. These applications commonly require knowing the pose of surgical tools and patients to provide visual information that surgeons can use during the task performance. Existing medical-grade tracking systems use infrared cameras placed inside the Operating Room (OR) to identify retro-reflective markers attached to objects of interest and compute their pose. Some commercially available AR Head-Mounted Displays (HMDs) use similar cameras for self-localization, hand tracking, and estimating the objects' depth. This work presents a framework that uses the built-in cameras of AR HMDs to enable accurate tracking of retro-reflective markers, such as those used in surgical procedures, without the need to integrate any additional components. This framework is also capable of simultaneously tracking multiple tools. Our results show that the tracking and detection of the markers can be achieved with an accuracy of 0.09 +- 0.06 mm on lateral translation, 0.42 +- 0.32 mm on longitudinal translation, and 0.80 +- 0.39 deg for rotations around the vertical axis. Furthermore, to showcase the relevance of the proposed framework, we evaluate the system's performance in the context of surgical procedures. This use case was designed to replicate the scenarios of k-wire insertions in orthopedic procedures. For evaluation, two surgeons and one biomedical researcher were provided with visual navigation, each performing 21 injections. Results from this use case provide comparable accuracy to those reported in the literature for AR-based navigation procedures.

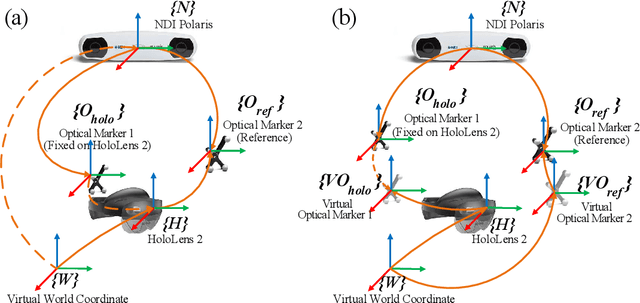

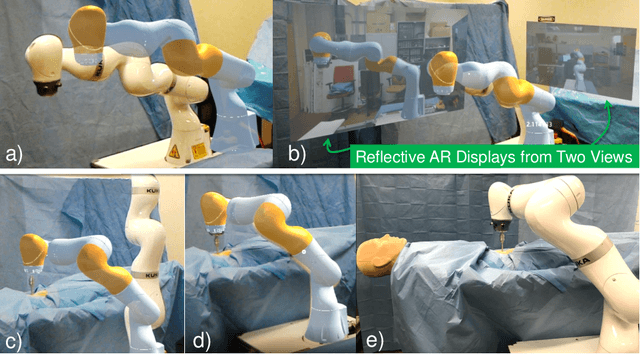

Reflective-AR Display: An Interaction Methodology for Virtual-Real Alignment in Medical Robotics

Jul 23, 2019

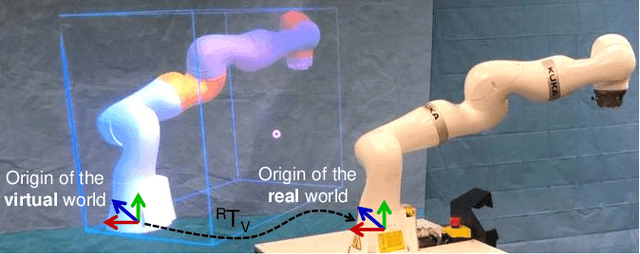

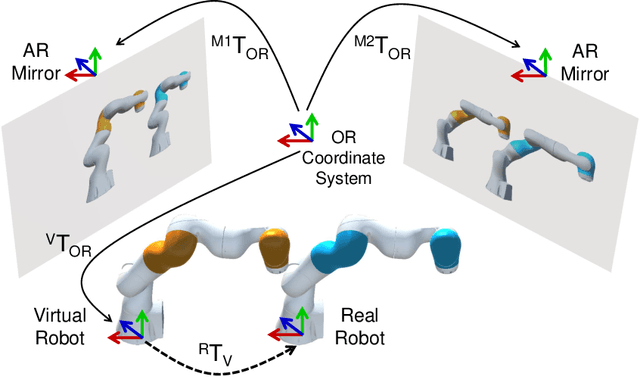

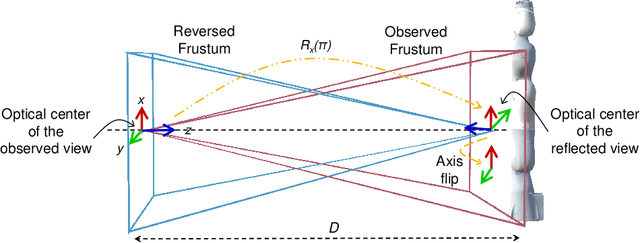

Abstract:Robot-assisted minimally invasive surgery has shown to improve patient outcomes, as well as reduce complications and recovery time for several clinical applications. However, increasingly configurable robotic arms require careful setup by surgical staff to maximize anatomical reach and avoid collisions. Furthermore, safety regulations prevent automatically driving robotic arms to this optimal positioning. We propose a Head-Mounted Display (HMD) based augmented reality (AR) guidance system for optimal surgical arm setup. In this case, the staff equipped with HMD aligns the robot with its planned virtual counterpart. The main challenge, however, is the perspective ambiguities hindering such collaborative robotic solution. To overcome this challenge, we introduce a novel registration concept for intuitive alignment of such AR content by providing a multi-view AR experience via reflective-AR displays that show the augmentations from multiple viewpoints. Using this system, operators can visualize different perspectives simultaneously while actively adjusting the pose to determine the registration transformation that most closely superimposes the virtual onto real. The experimental results demonstrate improvement in the interactive alignment of a virtual and real robot when using a reflective-AR display. We also present measurements from configuring a robotic manipulator in a simulated trocar placement surgery using the AR guidance methodology.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge