Tianyu Song

Feasibility of Augmented Reality-Guided Robotic Ultrasound with Cone-Beam CT Integration for Spine Procedures

Mar 23, 2026

Abstract: Accurate needle placement in spine interventions is critical for effective pain management, yet it depends on reliable identification of anatomical landmarks and careful trajectory planning. Conventional imaging guidance often relies on both CT and X-ray fluoroscopy, exposing patients and staff to high doses of radiation while providing limited real-time 3D feedback. We present an optical see-through augmented reality (OST-AR)-guided robotic system for spine procedures that provides in situ visualization of spinal structures to support needle trajectory planning. We integrate a cone-beam CT (CBCT)-derived 3D spine model, which is co-registered with live ultrasound, enabling users to combine global anatomical context with local, real-time imaging. We evaluated the system in a phantom user study involving two representative spine procedures: facet joint injection and lumbar puncture. Sixteen participants performed insertions under two visualization conditions: conventional screen vs. AR. Results show that AR significantly reduces execution time and across-task placement error, while also improving usability, trust, and spatial understanding and lowering cognitive workload. These findings demonstrate the feasibility of AR-guided robotic ultrasound for spine interventions, highlighting its potential to enhance accuracy, efficiency, and user experience in image-guided procedures.

Ultrasound-Guided Real-Time Spinal Motion Visualization for Spinal Instability Assessment

Feb 13, 2026

Abstract: Purpose: Spinal instability is a widespread condition that causes pain, fatigue, and restricted mobility, profoundly affecting patients' quality of life. In clinical practice, the gold standard for diagnosis is dynamic X-ray imaging. However, X-ray provides only 2D motion information, while 3D modalities such as computed tomography (CT) or cone beam computed tomography (CBCT) cannot efficiently capture motion. Therefore, there is a need for a system capable of visualizing real-time 3D spinal motion while minimizing radiation exposure. Methods: We propose ultrasound as an auxiliary modality for 3D spine visualization. Due to acoustic limitations, ultrasound captures only the superficial spinal surface. Therefore, the partially compounded ultrasound volume is registered to preoperative 3D imaging. In this study, CBCT provides the neutral spine configuration, while robotic ultrasound acquisition is performed at maximal spinal bending. A kinematic model is applied to the CBCT-derived spine model for coarse registration, followed by ICP for fine registration, with kinematic parameters optimized based on the registration results. Real-time ultrasound motion tracking is then used to estimate continuous 3D spinal motion by interpolating between the neutral and maximally bent states. Results: The pipeline was evaluated on a bendable 3D-printed lumbar spine phantom. The registration error was $1.941 \pm 0.199$ mm and the interpolated spinal motion error was $2.01 \pm 0.309$ mm (median). Conclusion: The proposed robotic ultrasound framework enables radiation-reduced, real-time 3D visualization of spinal motion, offering a promising 3D alternative to conventional dynamic X-ray imaging for assessing spinal instability.
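The abstract does not say how the interpolation between the neutral and maximally bent states is carried out. As a rough illustration only, the sketch below assumes each vertebra pose is a unit quaternion plus a translation, and blends the two states with spherical linear interpolation (slerp) for rotation and linear interpolation for translation. The function names, the bending fraction `t`, and the example poses are all hypothetical and are not taken from the paper.

```python
import math

def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:  # flip one quaternion so we follow the shorter arc
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:  # nearly identical rotations: plain lerp is stable here
        q = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
        s1 = math.sin(t * theta) / math.sin(theta)
        q = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)

def interpolate_pose(neutral, bent, t):
    """Blend one vertebra pose (quaternion, translation) at bending fraction t.

    t = 0 is the neutral state and t = 1 is the maximally bent state
    (an assumed parameterization); values outside [0, 1] are clamped.
    """
    t = min(1.0, max(0.0, t))
    q = slerp(neutral[0], bent[0], t)
    p = tuple(a + t * (b - a) for a, b in zip(neutral[1], bent[1]))
    return q, p

def quat_about_x(angle_deg):
    """Unit quaternion for a rotation of angle_deg about the x axis."""
    half = math.radians(angle_deg) / 2.0
    return (math.cos(half), math.sin(half), 0.0, 0.0)

# Hypothetical poses: 20 degrees of flexion and a small shift at maximal bending.
neutral = (quat_about_x(0.0), (0.0, 0.0, 0.0))
bent = (quat_about_x(20.0), (0.0, 4.0, 2.0))
q_mid, p_mid = interpolate_pose(neutral, bent, 0.5)
```

In a setup like this, a tracked bending fraction derived from live ultrasound would drive `t`, and each vertebra of the CBCT-derived model would be posed independently.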

Intelligent Virtual Sonographer (IVS): Enhancing Physician-Robot-Patient Communication

Jul 17, 2025

Abstract: The advancement and maturity of large language models (LLMs) and robotics have unlocked vast potential for human-computer interaction, particularly in the field of robotic ultrasound. While existing research primarily focuses on either patient-robot or physician-robot interaction, the role of an intelligent virtual sonographer (IVS) bridging physician-robot-patient communication remains underexplored. This work introduces a conversational virtual agent in Extended Reality (XR) that facilitates real-time interaction between physicians, a robotic ultrasound system (RUS), and patients. The IVS agent communicates with physicians in a professional manner while offering empathetic explanations and reassurance to patients. Furthermore, it actively controls the RUS by executing physician commands and transparently relays these actions to the patient. By integrating LLM-powered dialogue with speech-to-text, text-to-speech, and robotic control, our system enhances the efficiency, clarity, and accessibility of robotic ultrasound acquisition. This work constitutes a first step toward understanding how IVS can bridge communication gaps in physician-robot-patient interaction, providing more control and therefore trust in physician-robot interaction while improving patient experience and acceptance of robotic ultrasound.

Learning A Spiking Neural Network for Efficient Image Deraining

May 10, 2024

Abstract: Recently, spiking neural networks (SNNs) have demonstrated substantial potential in computer vision tasks. In this paper, we present an Efficient Spiking Deraining Network, called ESDNet. Our work is motivated by the observation that rain pixel values lead to a more pronounced intensity of spike signals in SNNs. However, directly applying deep SNNs to the image deraining task remains a significant challenge. This is attributed to the information loss and training difficulties that arise from discrete binary activation and complex spatio-temporal dynamics. To this end, we develop a spiking residual block to convert the input into spike signals, then adaptively optimize the membrane potential by introducing attention weights to adjust spike responses in a data-driven manner, alleviating information loss caused by discrete binary activation. In this way, our ESDNet can effectively detect and analyze the characteristics of rain streaks by learning their fluctuations. This also enables better guidance for the deraining process and facilitates high-quality image reconstruction. Instead of relying on the ANN-SNN conversion strategy, we introduce a gradient proxy strategy to train the model directly and overcome the training difficulties. Experimental results show that our approach achieves performance comparable to ANN-based methods while reducing energy consumption by 54%. The source code is available at https://github.com/MingTian99/ESDNet.
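To make the terms "discrete binary activation", "membrane potential", and "gradient proxy" more concrete, here is a minimal sketch of a generic leaky integrate-and-fire (LIF) neuron with a rectangular surrogate gradient. It is not the ESDNet implementation. The time constant, threshold, reset value, and input intensities are assumed values chosen for illustration.

```python
def lif_spike_train(inputs, tau=2.0, v_threshold=1.0, v_reset=0.0):
    """Simulate one LIF neuron and return its binary spike train."""
    v = v_reset
    spikes = []
    for x in inputs:
        # Leaky integration: the membrane potential relaxes toward the input.
        v = v + (x - (v - v_reset)) / tau
        # Discrete binary activation: fire only when the threshold is reached.
        s = 1 if v >= v_threshold else 0
        spikes.append(s)
        if s:
            v = v_reset  # hard reset after a spike
    return spikes

def surrogate_grad(v, v_threshold=1.0, width=1.0):
    """Rectangular stand-in for d(spike)/d(v).

    The true derivative of the step activation is zero almost everywhere,
    so training uses this smooth-enough proxy in the backward pass.
    """
    return 1.0 / width if abs(v - v_threshold) < width / 2.0 else 0.0

# A brighter (rain-like) pixel versus a darker background pixel over 8 time steps.
bright = lif_spike_train([1.5] * 8)
dark = lif_spike_train([0.3] * 8)
```

With these assumed settings, the brighter input fires on every second time step while the darker input never reaches the threshold, which loosely mirrors the abstract's observation that rain pixels produce stronger spike activity.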

MPSA-DenseNet: A novel deep learning model for English accent classification

Jun 15, 2023

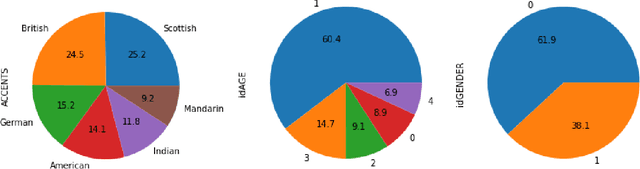

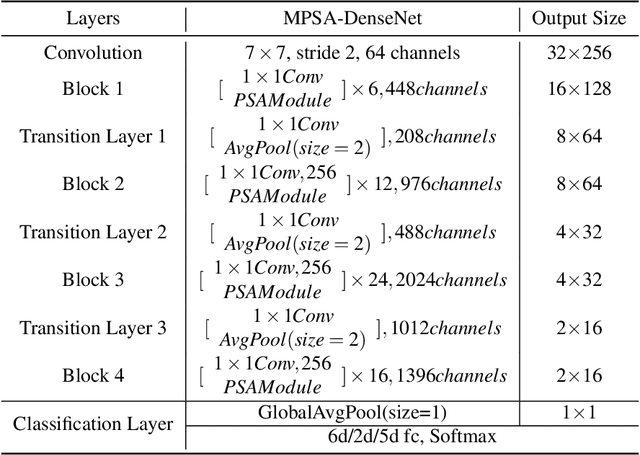

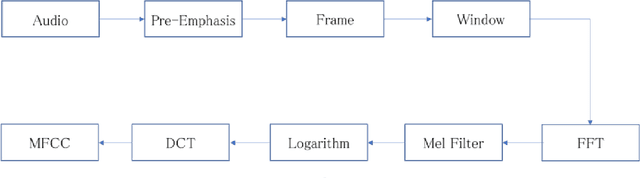

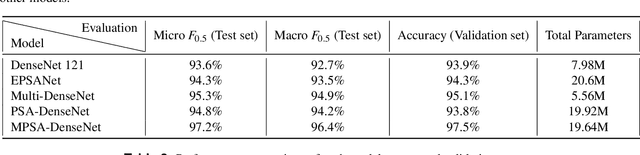

Abstract: This paper presents three innovative deep learning models for English accent classification: Multi-DenseNet, PSA-DenseNet, and MPSA-DenseNet, which combine multi-task learning and the PSA module attention mechanism with DenseNet. We applied these models to data collected from six dialects of English across native English-speaking regions (Britain, the United States, Scotland) and non-native English-speaking regions (China, Germany, India). Our experimental results show a significant improvement in classification accuracy, particularly with MPSA-DenseNet, which outperforms all other models, including DenseNet and EPSA models previously used for accent identification. Our findings indicate that MPSA-DenseNet is a highly promising model for accurately identifying English accents.

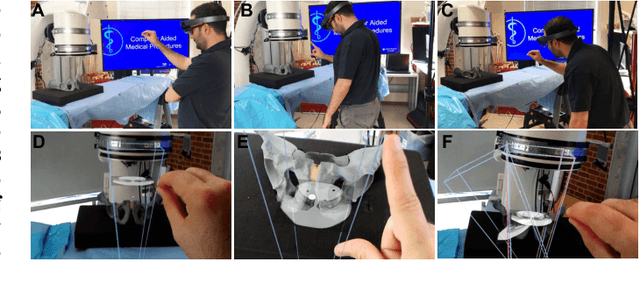

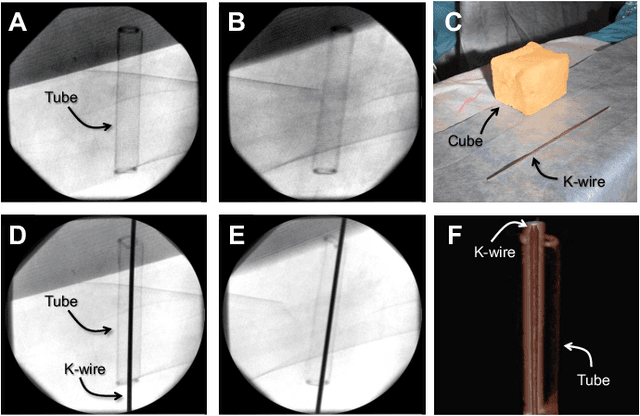

STTAR: Surgical Tool Tracking using off-the-shelf Augmented Reality Head-Mounted Displays

Aug 17, 2022

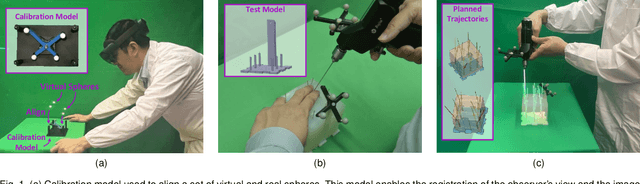

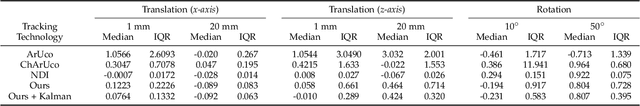

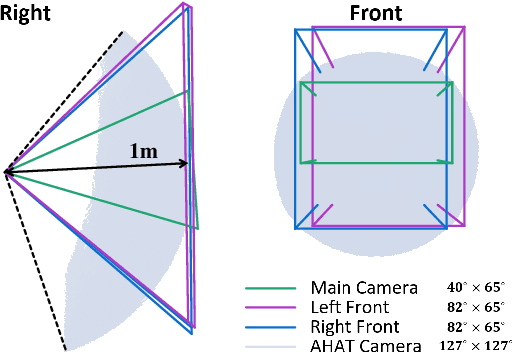

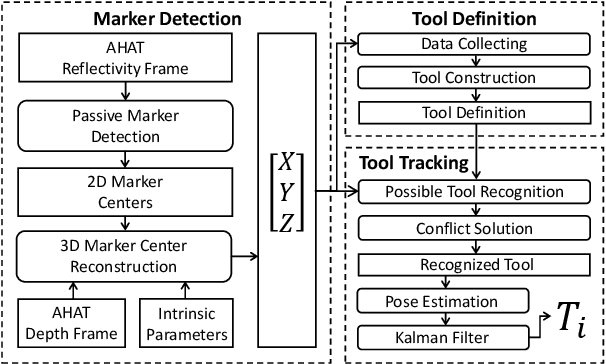

Abstract: The use of Augmented Reality (AR) for navigation purposes has proven beneficial in assisting physicians during surgical procedures. These applications commonly require knowing the pose of surgical tools and patients to provide visual information that surgeons can use while performing the task. Existing medical-grade tracking systems use infrared cameras placed inside the Operating Room (OR) to identify retro-reflective markers attached to objects of interest and compute their pose. Some commercially available AR Head-Mounted Displays (HMDs) use similar cameras for self-localization, hand tracking, and estimating the objects' depth. This work presents a framework that uses the built-in cameras of AR HMDs to enable accurate tracking of retro-reflective markers, such as those used in surgical procedures, without the need to integrate any additional components. This framework is also capable of simultaneously tracking multiple tools. Our results show that the tracking and detection of the markers can be achieved with an accuracy of 0.09 ± 0.06 mm on lateral translation, 0.42 ± 0.32 mm on longitudinal translation, and 0.80 ± 0.39 deg for rotations around the vertical axis. Furthermore, to showcase the relevance of the proposed framework, we evaluate the system's performance in the context of surgical procedures. This use case was designed to replicate the scenarios of k-wire insertions in orthopedic procedures. For evaluation, two surgeons and one biomedical researcher were provided with visual navigation, each performing 21 injections. Results from this use case provide comparable accuracy to those reported in the literature for AR-based navigation procedures.

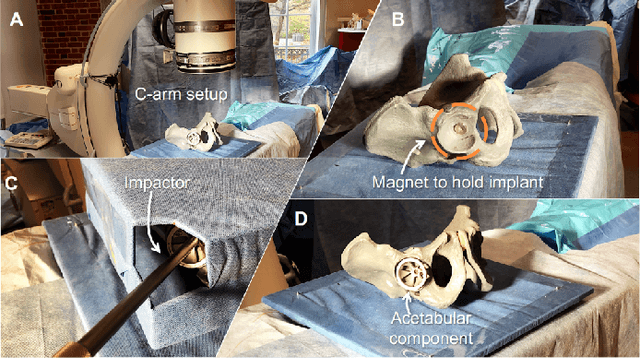

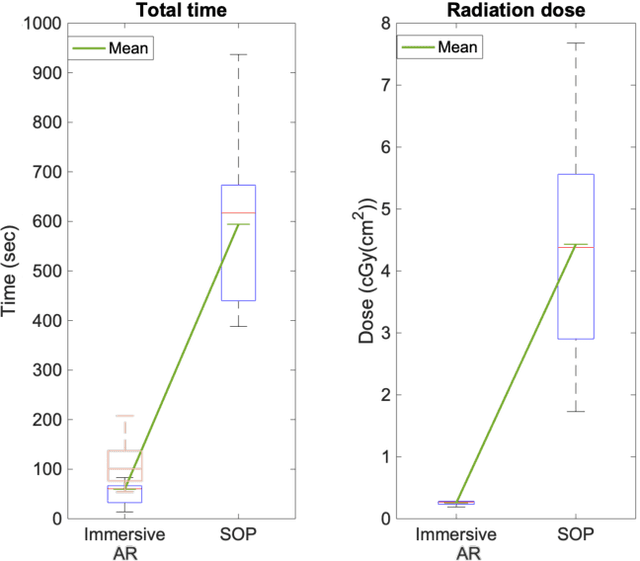

Spatiotemporal-Aware Augmented Reality: Redefining HCI in Image-Guided Therapy

Mar 04, 2020

Abstract: Suboptimal interaction with patient data and challenges in mastering 3D anatomy based on ill-posed 2D interventional images are essential concerns in image-guided therapies. Augmented reality (AR) has been introduced in the operating rooms in the last decade; however, in image-guided interventions, it has often only been considered as a visualization device improving traditional workflows. As a consequence, the technology has gained only a minimum of the maturity it requires to redefine procedures, user interfaces, and interactions. The main contribution of this paper is to reveal how exemplary workflows are redefined by taking full advantage of head-mounted displays when entirely co-registered with the imaging system at all times. The proposed AR landscape is enabled by co-localizing the users and the imaging devices via the operating room environment and exploiting all involved frustums to move spatial information between different bodies. The system's awareness of the geometric and physical characteristics of X-ray imaging allows the redefinition of different human-machine interfaces. We demonstrate that this AR paradigm is generic, and can benefit a wide variety of procedures. Our system achieved an error of $4.76\pm2.91$ mm for placing a K-wire in a fracture management procedure, and yielded errors of $1.57\pm1.16^\circ$ and $1.46\pm1.00^\circ$ in the abduction and anteversion angles, respectively, for total hip arthroplasty. We hope that our holistic approach towards improving the interface of surgery not only augments the surgeon's capabilities but also augments the surgical team's experience in carrying out an effective intervention with reduced complications, and provides novel approaches to documenting procedures for training purposes.

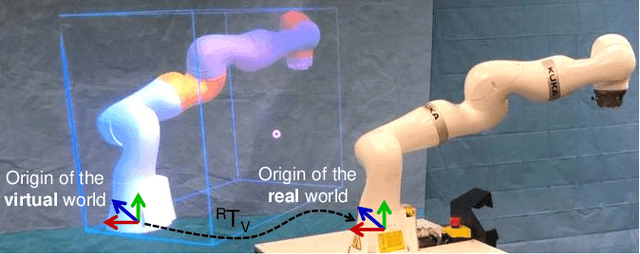

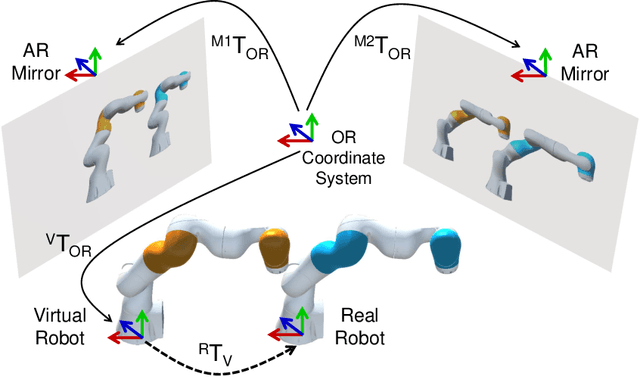

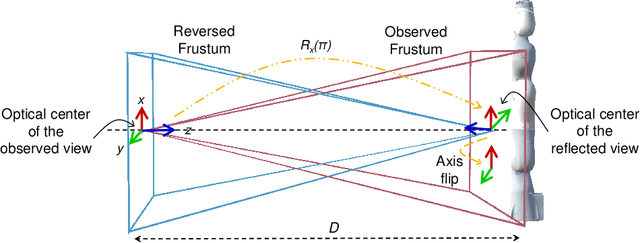

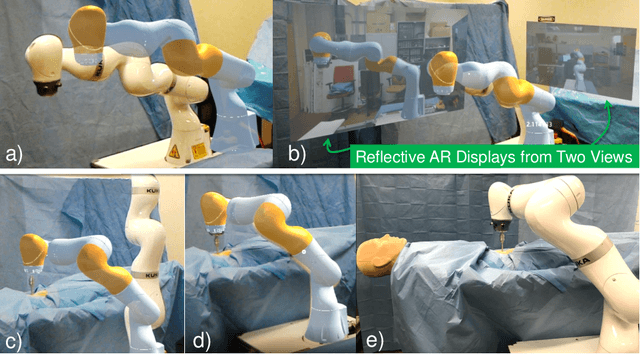

Reflective-AR Display: An Interaction Methodology for Virtual-Real Alignment in Medical Robotics

Jul 23, 2019

Abstract: Robot-assisted minimally invasive surgery has been shown to improve patient outcomes, as well as reduce complications and recovery time for several clinical applications. However, increasingly configurable robotic arms require careful setup by surgical staff to maximize anatomical reach and avoid collisions. Furthermore, safety regulations prevent automatically driving robotic arms to this optimal positioning. We propose a Head-Mounted Display (HMD) based augmented reality (AR) guidance system for optimal surgical arm setup. In this case, the staff member equipped with an HMD aligns the robot with its planned virtual counterpart. The main challenge, however, is the perspective ambiguities hindering such a collaborative robotic solution. To overcome this challenge, we introduce a novel registration concept for intuitive alignment of such AR content by providing a multi-view AR experience via reflective-AR displays that show the augmentations from multiple viewpoints. Using this system, operators can visualize different perspectives simultaneously while actively adjusting the pose to determine the registration transformation that most closely superimposes the virtual onto the real. The experimental results demonstrate improvement in the interactive alignment of a virtual and real robot when using a reflective-AR display. We also present measurements from configuring a robotic manipulator in a simulated trocar placement surgery using the AR guidance methodology.
