Yifeng Zhang

CAMO: A Conditional Neural Solver for the Multi-objective Multiple Traveling Salesman Problem

Mar 19, 2026

Abstract:Robotic systems often require a team of robots to collectively visit multiple targets while optimizing competing objectives, such as total travel cost and makespan. This setting can be formulated as the Multi-Objective Multiple Traveling Salesman Problem (MOMTSP). Although learning-based methods have shown strong performance on the single-agent TSP and multi-objective TSP variants, they rarely address the combined challenges of multi-agent coordination and multi-objective trade-offs, which introduce dual sources of complexity. To bridge this gap, we propose CAMO, a conditional neural solver for MOMTSP that generalizes across varying numbers of targets, agents, and preference vectors, and yields high-quality approximations to the Pareto front (PF). Specifically, CAMO consists of a conditional encoder to fuse preferences into instance representations, enabling explicit control over multi-objective trade-offs, and a collaborative decoder that coordinates all agents by alternating agent selection and node selection to construct multi-agent tours autoregressively. To further improve generalization, we train CAMO with a REINFORCE-based objective over a mixed distribution of problem sizes. Extensive experiments show that CAMO outperforms both neural and conventional heuristics, achieving a closer approximation of PFs. In addition, ablation results validate the contributions of CAMO's key components, and real-world tests on a mobile robot platform demonstrate its practical applicability.
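To make the two competing objectives concrete, here is a minimal sketch of how total travel cost and makespan could be evaluated for a set of multi-agent tours, and how a preference vector might trade them off. The function names, the shared depot, the Euclidean distances, and the weighted-sum scalarization are all illustrative assumptions; the abstract does not say how CAMO combines the objectives.

```python
import math

def tour_length(depot, targets):
    """Length of a closed tour: depot -> targets in order -> depot."""
    path = [depot, *targets, depot]
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))

def objectives(depot, tours):
    """Return (total travel cost, makespan) for one tour per agent."""
    lengths = [tour_length(depot, t) for t in tours]
    return sum(lengths), max(lengths)

def weighted_sum(objs, preference):
    """Assumed scalarization: preference-weighted sum of the objectives."""
    return sum(w * f for w, f in zip(preference, objs))

# Hypothetical instance: two agents sharing a depot at the origin.
depot = (0.0, 0.0)
tours = [[(3.0, 0.0)], [(0.0, 4.0), (3.0, 4.0)]]
total, makespan = objectives(depot, tours)
score = weighted_sum((total, makespan), (0.5, 0.5))
```

In this toy instance the first agent travels 6 units and the second 12, so the total cost is 18 and the makespan is 12. Changing the preference vector changes which assignment of targets to agents scores best.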

Inverse Resistive Force Theory (I-RFT): Learning granular properties through robot-terrain physical interactions

Mar 08, 2026

Abstract:For robots to navigate safely and efficiently on soft, granular terrains, it is crucial to gather information about the terrain's mechanical properties, which directly affect locomotion performance. Recent research has developed robotic legs that can accurately sense ground reaction forces during locomotion. However, existing tests of granular property estimation often rely on specific foot trajectories, such as vertical penetration or horizontal shear, limiting their applicability during natural locomotion. To address this limitation, we introduce a physics-informed machine learning framework, Inverse Resistive Force Theory (I-RFT), which integrates the Granular Resistive Force Theory model with Gaussian Processes to infer terrain properties from proprioceptively measured contact forces under arbitrary gait trajectories. By embedding the granular force model within the learning process, I-RFT preserves physical consistency while enabling generalization across diverse motion primitives. Experimental results demonstrate that I-RFT accurately estimates terrain properties across multiple gait trajectories and toe shapes. Moreover, we show that the quantified uncertainty over the terrain resistance stress map could enable robots to optimize foot design and gait trajectories for efficient information gathering. This approach establishes a new foundation for data-efficient characterization of complex granular environments and opens new avenues for locomotion strategies that actively adapt gait for autonomous terrain exploration.

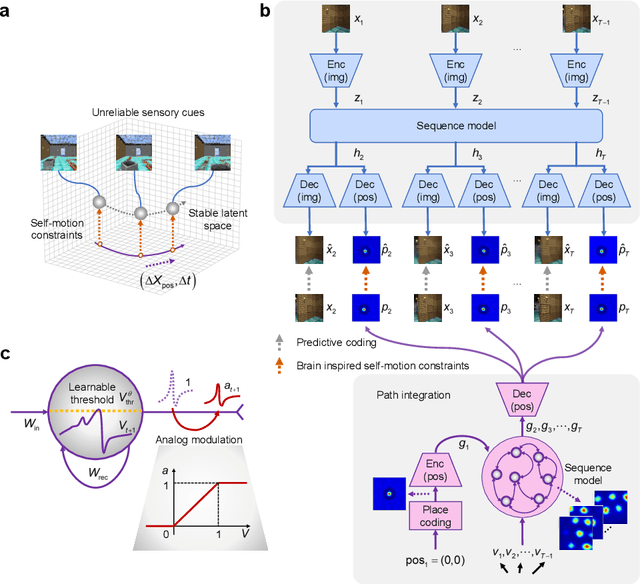

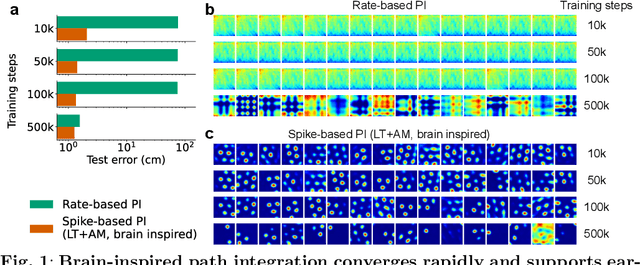

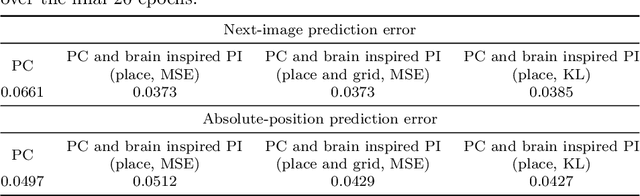

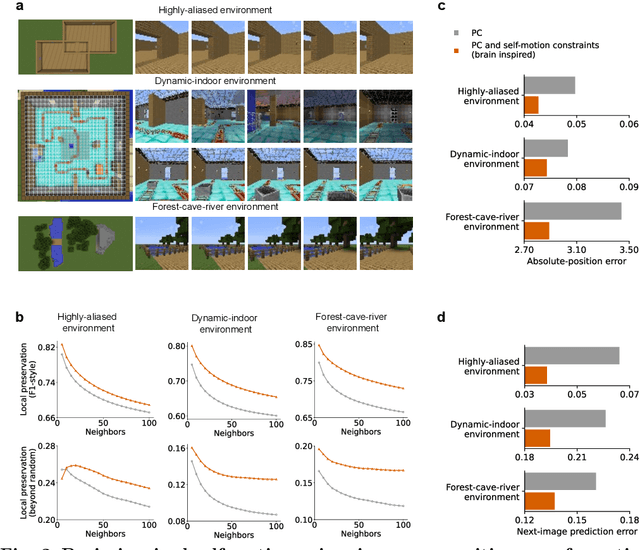

Self-motion as a structural prior for coherent and robust formation of cognitive maps

Dec 23, 2025

Abstract:Most computational accounts of cognitive maps assume that stability is achieved primarily through sensory anchoring, with self-motion contributing to incremental positional updates only. However, biological spatial representations often remain coherent even when sensory cues degrade or conflict, suggesting that self-motion may play a deeper organizational role. Here, we show that self-motion can act as a structural prior that actively organizes the geometry of learned cognitive maps. We embed a path-integration-based motion prior in a predictive-coding framework, implemented using a capacity-efficient, brain-inspired recurrent mechanism combining spiking dynamics, analog modulation and adaptive thresholds. Across highly aliased, dynamically changing and naturalistic environments, this structural prior consistently stabilizes map formation, improving local topological fidelity, global positional accuracy and next-step prediction under sensory ambiguity. Mechanistic analyses reveal that the motion prior itself encodes geometrically precise trajectories under tight constraints of internal states and generalizes zero-shot to unseen environments, outperforming simpler motion-based constraints. Finally, deployment on a quadrupedal robot demonstrates that motion-derived structural priors enhance online landmark-based navigation under real-world sensory variability. Together, these results reframe self-motion as an organizing scaffold for coherent spatial representations, showing how brain-inspired principles can systematically strengthen spatial intelligence in embodied artificial agents.

Domain Adaptation in Structural Health Monitoring of Civil Infrastructure: A Systematic Review

Dec 21, 2025

Abstract:This study provides a comprehensive review of domain adaptation (DA) techniques in vibration-based structural health monitoring (SHM). As data-driven models increasingly support the assessment of civil structures, the persistent challenge of transferring knowledge across varying geometries, materials, and environmental conditions remains a major obstacle. DA offers a systematic approach to mitigate these discrepancies by aligning feature distributions between simulated, laboratory, and field domains while preserving the sensitivity of damage-related information. Drawing on more than sixty representative studies, this paper analyzes the evolution of DA methods for SHM, including statistical alignment, adversarial and subdomain learning, physics-informed adaptation, and generative modeling for simulation-to-real transfer. The review summarizes their contributions and limitations across bridge and building applications, revealing that while DA has improved generalization significantly, key challenges persist: managing domain discrepancy, addressing data scarcity, enhancing model interpretability, and enabling adaptability to multiple sources and time-varying conditions. Future research directions emphasize integrating physical constraints into learning objectives, developing physics-consistent generative frameworks to enhance data realism, establishing interpretable and certifiable DA systems for engineering practice, and advancing multi-source and lifelong adaptation for scalable monitoring. Overall, this review consolidates the methodological foundation of DA for SHM, identifies existing barriers to generalization and trust, and outlines the technological trajectory toward transparent, physics-aware, and adaptive monitoring systems that support the long-term resilience of civil infrastructure.

Effect of Gait Design on Proprioceptive Sensing of Terrain Properties in a Quadrupedal Robot

Sep 26, 2025

Abstract:In-situ robotic exploration is an important tool for advancing knowledge of geological processes that describe the Earth and other planetary bodies. To inform and enhance operations for these roving laboratories, it is imperative to understand the terramechanical properties of their environments, especially for traversing loose, deformable substrates. Recent research suggested that legged robots with direct-drive and low-gear ratio actuators can sensitively detect external forces, and therefore possess the potential to measure terrain properties with their legs during locomotion, providing unprecedented sampling speed and density while accessing terrains previously too risky to sample. This paper explores these ideas by investigating the impact of gait on proprioceptive terrain sensing accuracy, particularly comparing a sensing-oriented gait, Crawl N' Sense, with a locomotion-oriented gait, Trot-Walk. Each gait's ability to measure the strength and texture of deformable substrate is quantified as the robot locomotes over a laboratory transect consisting of a rigid surface, loose sand, and loose sand with synthetic surface crusts. Our results suggest that with both the sensing-oriented crawling gait and locomotion-oriented trot gait, the robot can measure a consistent difference in the strength (in terms of penetration resistance) between the low- and high-resistance substrates; however, the locomotion-oriented trot gait yields measurements with larger magnitude and variance. Furthermore, the slower crawl gait can detect brittle ruptures of the surface crusts with significantly higher accuracy than the faster trot gait. Our results offer new insights that inform legged robot "sensing during locomotion" gait design and planning for scouting the terrain and producing scientific measurements on other worlds to advance our understanding of their geology and formation.

The Primacy of Magnitude in Low-Rank Adaptation

Jul 09, 2025

Abstract:Low-Rank Adaptation (LoRA) offers a parameter-efficient paradigm for tuning large models. While recent spectral initialization methods improve convergence and performance over the naive "Noise & Zeros" scheme, their extra computational and storage overhead undermines efficiency. In this paper, we establish update magnitude as the fundamental driver of LoRA performance and propose LoRAM, a magnitude-driven "Basis & Basis" initialization scheme that matches spectral methods without their inefficiencies. Our key contributions are threefold: (i) Magnitude of weight updates determines convergence. We prove that low-rank structures intrinsically bound update magnitudes, unifying hyperparameter tuning in learning rate, scaling factor, and initialization as mechanisms to optimize magnitude regulation. (ii) Spectral initialization succeeds via magnitude amplification. We show that the presumed knowledge-driven benefit of the spectral component essentially arises from the boost in the weight update magnitude. (iii) A novel and compact initialization strategy, LoRAM, scales deterministic orthogonal bases using pretrained weight magnitudes to simulate spectral gains. Extensive experiments show that LoRAM serves as a strong baseline, retaining the full efficiency of LoRA while matching or outperforming spectral initialization across benchmarks.
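For readers unfamiliar with the baseline named above, the following sketch illustrates the standard "Noise & Zeros" LoRA initialization: the down-projection A is drawn from Gaussian noise and the up-projection B is set to zero, so the low-rank update B·A is exactly zero before training. This shows only the baseline, not LoRAM. The helper names, the matrix shapes, and the unit noise scale are assumptions chosen for illustration.

```python
import random

def matmul(X, Y):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)]
            for row in X]

def noise_and_zeros(d_out, d_in, rank, seed=0):
    """Baseline LoRA init: A is Gaussian noise, B is all zeros."""
    rng = random.Random(seed)
    A = [[rng.gauss(0.0, 1.0) for _ in range(d_in)] for _ in range(rank)]
    B = [[0.0] * rank for _ in range(d_out)]
    return B, A

def frobenius(M):
    """Frobenius norm, used here as a measure of update magnitude."""
    return sum(v * v for row in M for v in row) ** 0.5

# Hypothetical sizes: a 4x6 weight matrix adapted with rank 2.
B, A = noise_and_zeros(d_out=4, d_in=6, rank=2)
delta_w = matmul(B, A)            # low-rank update, shape 4x6
init_magnitude = frobenius(delta_w)
```

Because B starts at zero, `init_magnitude` is zero and the adapted model initially matches the pretrained one. The update magnitude then grows only as B is trained.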

DiscoVLA: Discrepancy Reduction in Vision, Language, and Alignment for Parameter-Efficient Video-Text Retrieval

Jun 10, 2025

Abstract:The parameter-efficient adaptation of the image-text pretraining model CLIP for video-text retrieval is a prominent area of research. While CLIP is focused on image-level vision-language matching, video-text retrieval demands comprehensive understanding at the video level. Three key discrepancies emerge in the transfer from image-level to video-level: vision, language, and alignment. However, existing methods mainly focus on vision while neglecting language and alignment. In this paper, we propose Discrepancy Reduction in Vision, Language, and Alignment (DiscoVLA), which simultaneously mitigates all three discrepancies. Specifically, we introduce Image-Video Features Fusion to integrate image-level and video-level features, effectively tackling both vision and language discrepancies. Additionally, we generate pseudo image captions to learn fine-grained image-level alignment. To mitigate alignment discrepancies, we propose Image-to-Video Alignment Distillation, which leverages image-level alignment knowledge to enhance video-level alignment. Extensive experiments demonstrate the superiority of our DiscoVLA. In particular, on MSRVTT with CLIP (ViT-B/16), DiscoVLA outperforms previous methods by 1.5% in R@1, reaching a final score of 50.5% R@1. The code is available at https://github.com/LunarShen/DsicoVLA.

Preference-Driven Multi-Objective Combinatorial Optimization with Conditional Computation

Jun 10, 2025

Abstract:Recent deep reinforcement learning methods have achieved remarkable success in solving multi-objective combinatorial optimization problems (MOCOPs) by decomposing them into multiple subproblems, each associated with a specific weight vector. However, these methods typically treat all subproblems equally and solve them using a single model, hindering the effective exploration of the solution space and thus leading to suboptimal performance. To overcome this limitation, we propose POCCO, a novel plug-and-play framework that enables adaptive selection of model structures for subproblems, which are subsequently optimized based on preference signals rather than explicit reward values. Specifically, we design a conditional computation block that routes subproblems to specialized neural architectures. Moreover, we propose a preference-driven optimization algorithm that learns pairwise preferences between winning and losing solutions. We evaluate the efficacy and versatility of POCCO by applying it to two state-of-the-art neural methods for MOCOPs. Experimental results across four classic MOCOP benchmarks demonstrate its significant superiority and strong generalization.
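The decomposition idea mentioned in this abstract can be sketched in a few lines: each weight vector defines one scalarized subproblem, and the subproblem solutions together approximate the Pareto front. This is a generic illustration for a bi-objective minimization problem, not POCCO itself. The weighted-sum scalarization, the function names, and the candidate objective values are assumptions.

```python
def weight_vectors(n):
    """n evenly spaced bi-objective weight vectors (w1, w2), w1 + w2 = 1."""
    return [(i / (n - 1), 1 - i / (n - 1)) for i in range(n)]

def solve_subproblem(candidates, w):
    """Pick the candidate minimizing the assumed weighted-sum objective."""
    return min(candidates, key=lambda f: w[0] * f[0] + w[1] * f[1])

def non_dominated(points):
    """Keep points that no other point matches or beats on every objective."""
    return [p for p in points
            if not any(q != p and all(a <= b for a, b in zip(q, p))
                       for q in points)]

# Hypothetical objective vectors (both objectives are minimized).
candidates = [(1, 9), (3, 4), (5, 5), (8, 1)]
picked = [solve_subproblem(candidates, w) for w in weight_vectors(3)]
front = non_dominated(candidates)
```

Here the three subproblems select (8, 1), (3, 4), and (1, 9), which are exactly the non-dominated candidates; (5, 5) is dominated by (3, 4) and is never selected.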

Unicorn: A Universal and Collaborative Reinforcement Learning Approach Towards Generalizable Network-Wide Traffic Signal Control

Mar 14, 2025

Abstract:Adaptive traffic signal control (ATSC) is crucial in reducing congestion, maximizing throughput, and improving mobility in rapidly growing urban areas. Recent advancements in parameter-sharing multi-agent reinforcement learning (MARL) have greatly enhanced the scalable and adaptive optimization of complex, dynamic flows in large-scale homogeneous networks. However, the inherent heterogeneity of real-world traffic networks, with their varied intersection topologies and interaction dynamics, poses substantial challenges to achieving scalable and effective ATSC across different traffic scenarios. To address these challenges, we present Unicorn, a universal and collaborative MARL framework designed for efficient and adaptable network-wide ATSC. Specifically, we first propose a unified approach to map the states and actions of intersections with varying topologies into a common structure based on traffic movements. Next, we design a Universal Traffic Representation (UTR) module with a decoder-only network for general feature extraction, enhancing the model's adaptability to diverse traffic scenarios. Additionally, we incorporate an Intersection Specifics Representation (ISR) module, designed to identify key latent vectors that represent the unique intersection's topology and traffic dynamics through variational inference techniques. To further refine these latent representations, we employ a contrastive learning approach in a self-supervised manner, which enables better differentiation of intersection-specific features. Moreover, we integrate the state-action dependencies of neighboring agents into policy optimization, which effectively captures dynamic agent interactions and facilitates efficient regional collaboration. Our results show that Unicorn outperforms other methods across various evaluation metrics, highlighting its potential in complex, dynamic traffic networks.

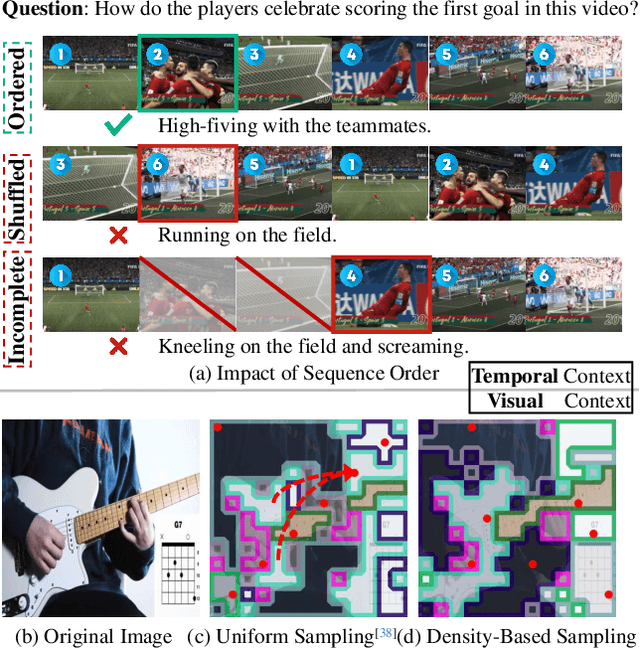

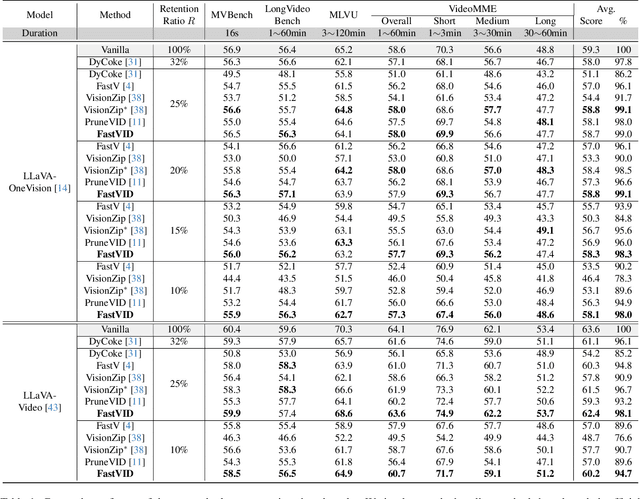

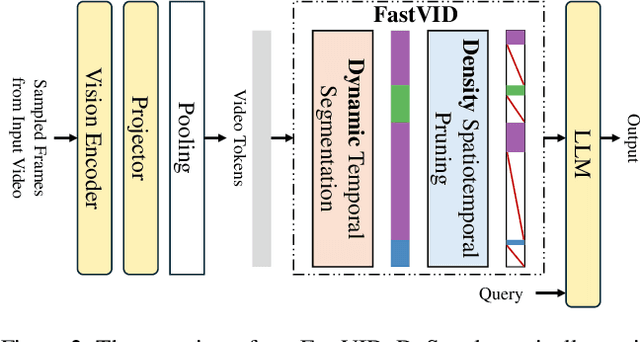

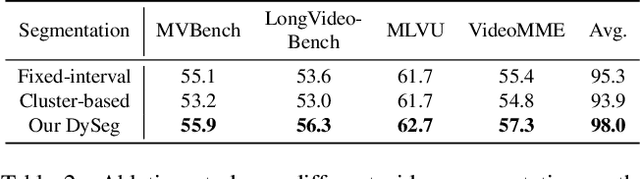

FastVID: Dynamic Density Pruning for Fast Video Large Language Models

Mar 14, 2025

Abstract:Video Large Language Models have shown impressive capabilities in video comprehension, yet their practical deployment is hindered by substantial inference costs caused by redundant video tokens. Existing pruning techniques fail to fully exploit the spatiotemporal redundancy inherent in video data. To bridge this gap, we perform a systematic analysis of video redundancy from two perspectives: temporal context and visual context. Leveraging this insight, we propose Dynamic Density Pruning for Fast Video LLMs termed FastVID. Specifically, FastVID dynamically partitions videos into temporally ordered segments to preserve temporal structure and applies a density-based token pruning strategy to maintain essential visual information. Our method significantly reduces computational overhead while maintaining temporal and visual integrity. Extensive evaluations show that FastVID achieves state-of-the-art performance across various short- and long-video benchmarks on leading Video LLMs, including LLaVA-OneVision and LLaVA-Video. Notably, FastVID effectively prunes 90% of video tokens while retaining 98.0% of LLaVA-OneVision's original performance. The code is available at https://github.com/LunarShen/FastVID.
