Yang Hu

LiteInception: A Lightweight and Interpretable Deep Learning Framework for General Aviation Fault Diagnosis

Apr 02, 2026

Abstract: General aviation fault diagnosis and efficient maintenance are critical to flight safety; however, deploying deep learning models on resource-constrained edge devices poses dual challenges in computational capacity and interpretability. This paper proposes LiteInception, a lightweight, interpretable fault diagnosis framework designed for edge deployment. The framework adopts a two-stage cascaded architecture aligned with standard maintenance workflows: Stage 1 performs high-recall fault detection, and Stage 2 conducts fine-grained fault classification on anomalous samples, thereby decoupling optimization objectives and enabling on-demand allocation of computational resources. For model compression, a multi-method fusion strategy based on mutual information, gradient analysis, and SE attention weights is proposed to reduce the input sensor channels from 23 to 15, and a 1+1 branch LiteInception architecture is introduced that compresses InceptionTime parameters by 70% and accelerates CPU inference by over 8x, with less than 3% F1 loss. Furthermore, knowledge distillation is introduced as a precision-recall regulation mechanism, enabling the same lightweight model to adapt to different scenarios (such as safety-critical and auxiliary diagnosis) by switching training strategies. Finally, a dual-layer interpretability framework integrating four attribution methods is constructed, providing traceable evidence chains of "which sensor × which time period." Experiments on the NGAFID dataset demonstrate a fault detection accuracy of 81.92% with 83.24% recall, and a fault identification accuracy of 77.00%, validating the framework's favorable balance among efficiency, accuracy, and interpretability.
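The two-stage cascade described in this abstract can be illustrated with a minimal Python sketch. The function names, the threshold value, and the toy detector and classifier are all hypothetical stand-ins chosen for illustration; they are not the paper's models. The sketch only shows the control flow: Stage 2 runs only on windows that Stage 1 flags.

```python
from typing import Callable, Optional, Sequence, Tuple


def cascade_diagnose(
    window: Sequence[float],
    detect_score: Callable[[Sequence[float]], float],
    classify: Callable[[Sequence[float]], str],
    threshold: float = 0.3,  # assumed value; a low threshold favours recall
) -> Tuple[bool, Optional[str]]:
    """Run Stage 1 on every window and Stage 2 only on flagged windows."""
    # Stage 1: cheap anomaly score for every window.
    if detect_score(window) < threshold:
        return False, None  # Stage 2 is skipped, saving compute
    # Stage 2: fine-grained classification on anomalous windows only.
    return True, classify(window)


# Toy stand-ins (hypothetical, for illustration only).
def toy_detect_score(window: Sequence[float]) -> float:
    return max(abs(x) for x in window)


def toy_classify(window: Sequence[float]) -> str:
    return "overshoot" if max(window) > -min(window) else "undershoot"


healthy = cascade_diagnose([0.1, -0.2], toy_detect_score, toy_classify)
faulty = cascade_diagnose([0.1, 0.9], toy_detect_score, toy_classify)
```

Lowering `threshold` sends more windows to Stage 2, trading compute for recall, which is the kind of decoupling the abstract attributes to the cascade.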

A Heterogeneous Long-Micro Scale Cascading Architecture for General Aviation Health Management

Mar 27, 2026

Abstract: BACKGROUND: General aviation fleet expansion demands intelligent health monitoring under computational constraints. Real-world aircraft health diagnosis requires balancing accuracy with computational constraints under extreme class imbalance and environmental uncertainty. Existing end-to-end approaches suffer from the receptive field paradox: global attention introduces excessive operational heterogeneity noise for fine-grained fault classification, while localized constraints sacrifice critical cross-temporal context essential for anomaly detection. METHODS: This paper presents an AI-driven heterogeneous cascading architecture for general aviation health management. The proposed Long-Micro Scale Diagnostician (LMSD) explicitly decouples global anomaly detection (full-sequence attention) from micro-scale fault classification (restricted receptive fields), resolving the receptive field paradox while minimizing training overhead. A knowledge distillation-based interpretability module provides physically traceable explanations for safety-critical validation. RESULTS: Experiments on the public National General Aviation Flight Information Database (NGAFID) dataset (28,935 flights, 36 categories) demonstrate 4-8% improvement in safety-critical metrics (MCWPM) with 4.2x training acceleration and 46% model compression compared to end-to-end baselines. CONCLUSIONS: The AI-driven heterogeneous architecture offers deployable solutions for aviation equipment health management, with potential for digital twin integration in future work. The proposed framework substantiates deployability in resource-constrained aviation environments while maintaining stringent safety requirements.

Select, Hypothesize and Verify: Towards Verified Neuron Concept Interpretation

Mar 26, 2026

Abstract: Interpreting the functionality (also known as concepts) of neurons is essential for understanding neural network decisions. Existing approaches describe neuron concepts by generating natural language descriptions, thereby advancing the understanding of the neural network's decision-making mechanism. However, these approaches assume that each neuron has well-defined functions and provides discriminative features for neural network decision-making. In fact, some neurons may be redundant or may offer misleading concepts. Thus, the descriptions for such neurons may cause misinterpretations of the factors driving the neural network's decisions. To address the issue, we introduce a verification of neuron functions, which checks whether the generated concept highly activates the corresponding neuron. Furthermore, we propose a Select-Hypothesize-Verify framework for interpreting neuron functionality. This framework consists of: 1) selecting activation samples that best capture a neuron's well-defined functional behavior through activation-distribution analysis; 2) forming hypotheses about concepts for the selected neurons; and 3) verifying whether the generated concepts accurately reflect the functionality of the neuron. Extensive experiments show that our method produces more accurate neuron concepts. Our generated concepts activate the corresponding neurons with a probability approximately 1.5 times that of the current state-of-the-art method.
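One way to picture the verification step is a simple activation test: a hypothesized concept counts as verified if inputs depicting it push the neuron into the upper tail of its usual activation range. The sketch below is an assumption made for illustration; the quantile, the hit fraction, and the function name are hypothetical, and the abstract does not specify the actual criterion.

```python
from typing import Sequence


def concept_is_verified(
    concept_acts: Sequence[float],    # neuron activations on concept samples
    reference_acts: Sequence[float],  # neuron activations on a reference set
    quantile: float = 0.95,           # assumed definition of "highly activates"
    min_fraction: float = 0.5,        # assumed share of samples that must pass
) -> bool:
    """Check whether concept samples land in the neuron's high-activation tail."""
    ref = sorted(reference_acts)
    cutoff = ref[min(len(ref) - 1, int(quantile * len(ref)))]
    hits = sum(1 for a in concept_acts if a >= cutoff)
    return hits / len(concept_acts) >= min_fraction


reference = [i / 100 for i in range(100)]
accepted = concept_is_verified([0.99, 0.97, 0.20], reference)
rejected = concept_is_verified([0.10, 0.20, 0.99], reference)
```

A description that fails this kind of check would be discarded rather than reported, which is how a verification stage can filter out redundant or misleading neurons.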

Balancing Safety and Efficiency in Aircraft Health Diagnosis: A Task Decomposition Framework with Heterogeneous Long-Micro Scale Cascading and Knowledge Distillation-based Interpretability

Mar 24, 2026

Abstract: Whole-aircraft diagnosis for general aviation faces threefold challenges: data uncertainty, task heterogeneity, and computational inefficiency. Existing end-to-end approaches uniformly model health discrimination and fault characterization, overlooking intrinsic receptive field conflicts between global context modeling and local feature extraction, while incurring prohibitive training costs under severe class imbalance. To address these, this study proposes the Diagnosis Decomposition Framework (DDF), explicitly decoupling diagnosis into Anomaly Detection (AD) and Fault Classification (FC) subtasks via the Long-Micro Scale Diagnostician (LMSD). Employing a "long-range global screening and micro-scale local precise diagnosis" strategy, LMSD utilizes Convolutional Tokenizer with Multi-Head Self-Attention (ConvTokMHSA) for global operational pattern discrimination and Multi-Micro Kernel Network (MMK Net) for local fault feature extraction. Decoupled training separates "large-sample lightweight" and "small-sample complex" optimization pathways, significantly reducing computational overhead. Concurrently, Keyness Extraction Layer (KEL) via knowledge distillation furnishes physically traceable explanations for two-stage decisions, materializing interpretability-by-design. Experiments on the NGAFID real-world aviation dataset demonstrate approximately 4-8% improvement in Multi-Class Weighted Penalty Metric (MCWPM) over baselines with substantially reduced training time, validating comprehensive advantages in task adaptability, interpretability, and efficiency. This provides a deployable methodology for general aviation health management.

pQuant: Towards Effective Low-Bit Language Models via Decoupled Linear Quantization-Aware Training

Feb 26, 2026

Abstract: Quantization-Aware Training from scratch has emerged as a promising approach for building efficient large language models (LLMs) with extremely low-bit weights (sub 2-bit), which can offer substantial advantages for edge deployment. However, existing methods still fail to achieve satisfactory accuracy and scalability. In this work, we identify a parameter democratization effect as a key bottleneck: the sensitivity of all parameters becomes homogenized, severely limiting expressivity. To address this, we propose pQuant, a method that decouples parameters by splitting linear layers into two specialized branches: a dominant 1-bit branch for efficient computation and a compact high-precision branch dedicated to preserving the most sensitive parameters. Through tailored feature scaling, we explicitly guide the model to allocate sensitive parameters to the high-precision branch. Furthermore, we extend this branch into multiple, sparsely-activated experts, enabling efficient capacity scaling. Extensive experiments indicate our pQuant achieves state-of-the-art performance in extremely low-bit quantization.
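The two-branch linear layer can be sketched in plain Python as the sum of a sign-only (1-bit) matrix product and a small full-precision product. Several details here are assumptions made for illustration and are not taken from the abstract: the 1-bit scale is the mean absolute weight, and the compact branch is modelled as a low-rank bottleneck (`w_hp_down`, `w_hp_up`). The feature scaling and sparsely-activated experts are omitted.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def dense_matvec(weights: Matrix, x: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def one_bit_matvec(weights: Matrix, x: Vector) -> Vector:
    """Use only the sign of each weight, rescaled by one shared scalar."""
    magnitudes = [abs(w) for row in weights for w in row]
    scale = sum(magnitudes) / len(magnitudes)  # assumed scaling rule
    return [
        scale * sum((xi if w >= 0 else -xi) for w, xi in zip(row, x))
        for row in weights
    ]


def decoupled_linear(
    w_1bit: Matrix, w_hp_down: Matrix, w_hp_up: Matrix, x: Vector
) -> Vector:
    main = one_bit_matvec(w_1bit, x)                           # dominant 1-bit branch
    side = dense_matvec(w_hp_up, dense_matvec(w_hp_down, x))   # compact high-precision branch
    return [m + s for m, s in zip(main, side)]


y = decoupled_linear(
    w_1bit=[[0.5, -1.5], [2.0, 1.0]],
    w_hp_down=[[1.0, 0.0]],
    w_hp_up=[[0.5], [-0.5]],
    x=[1.0, 2.0],
)
```

In this toy setup the 1-bit branch does most of the arithmetic with additions and subtractions only, while the narrow side branch carries the few values that need full precision.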

LLaTTE: Scaling Laws for Multi-Stage Sequence Modeling in Large-Scale Ads Recommendation

Jan 27, 2026

Abstract: We present LLaTTE (LLM-Style Latent Transformers for Temporal Events), a scalable transformer architecture for production ads recommendation. Through systematic experiments, we demonstrate that sequence modeling in recommendation systems follows predictable power-law scaling similar to LLMs. Crucially, we find that semantic features bend the scaling curve: they are a prerequisite for scaling, enabling the model to effectively utilize the capacity of deeper and longer architectures. To realize the benefits of continued scaling under strict latency constraints, we introduce a two-stage architecture that offloads the heavy computation of large, long-context models to an asynchronous upstream user model. We demonstrate that upstream improvements transfer predictably to downstream ranking tasks. Deployed as the largest user model at Meta, this multi-stage framework drives a 4.3% conversion uplift on Facebook Feed and Reels with minimal serving overhead, establishing a practical blueprint for harnessing scaling laws in industrial recommender systems.
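A power-law scaling relation of the form metric = a * size^(-b) is commonly estimated by a straight-line fit in log-log space. The sketch below shows that fit using only the standard library. The data points are synthetic and the choice of a loss-like metric is an assumption; nothing here reproduces the paper's measurements.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(sizes: Sequence[float], losses: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of loss = a * size**(-b) in log-log space."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(v) for v in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, b)


# Synthetic points generated from a = 2.0, b = 0.5 (illustrative only).
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [2.0 * s ** -0.5 for s in sizes]
a, b = fit_power_law(sizes, losses)
```

Comparing the fitted exponent `b` across model variants is one way to express the claim that some features "bend" the scaling curve: a larger `b` means the metric improves faster as size grows.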

Max-Entropy Reinforcement Learning with Flow Matching and A Case Study on LQR

Dec 29, 2025

Abstract: Soft actor-critic (SAC) is a popular algorithm for max-entropy reinforcement learning. In practice, the energy-based policies in SAC are often approximated using simple policy classes for efficiency, sacrificing expressiveness and robustness. In this paper, we propose a variant of the SAC algorithm that parameterizes the policy with flow-based models, leveraging their rich expressiveness. In the algorithm, we evaluate the flow-based policy utilizing the instantaneous change-of-variable technique and update the policy with an online variant of flow matching developed in this paper. This online variant, termed importance sampling flow matching (ISFM), enables policy update with only samples from a user-specified sampling distribution rather than the unknown target distribution. We develop a theoretical analysis of ISFM, characterizing how different choices of sampling distributions affect the learning efficiency. Finally, we conduct a case study of our algorithm on the max-entropy linear quadratic regulator problems, demonstrating that the proposed algorithm learns the optimal action distribution.

Designing Spatial Architectures for Sparse Attention: STAR Accelerator via Cross-Stage Tiling

Dec 24, 2025

Abstract: Large language models (LLMs) rely on self-attention for contextual understanding, demanding high-throughput inference and large-scale token parallelism (LTPP). Existing dynamic sparsity accelerators falter under LTPP scenarios due to stage-isolated optimizations. Revisiting the end-to-end sparsity acceleration flow, we identify an overlooked opportunity: cross-stage coordination can substantially reduce redundant computation and memory access. We propose STAR, a cross-stage compute- and memory-efficient algorithm-hardware co-design tailored for Transformer inference under LTPP. STAR introduces a leading-zero-based sparsity prediction using log-domain add-only operations to minimize prediction overhead. It further employs distributed sorting and a sorted updating FlashAttention mechanism, guided by a coordinated tiling strategy that enables fine-grained stage interaction for improved memory efficiency and latency. These optimizations are supported by a dedicated STAR accelerator architecture, achieving up to 9.2x speedup and 71.2x energy efficiency over A100, and surpassing SOTA accelerators by up to 16.1x energy and 27.1x area efficiency gains. Further, we deploy STAR onto a multi-core spatial architecture, optimizing dataflow and execution orchestration for ultra-long sequence processing. Architectural evaluation shows that, compared to the baseline design, Spatial-STAR achieves a 20.1x throughput improvement.
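The idea of predicting sparsity with add-only operations in the log domain can be illustrated in software: each integer operand is replaced by the position of its leading one bit, so a product becomes an addition of exponents. The sketch below is a hypothetical simplification written for illustration; it is not STAR's circuit or algorithm, and the top-k selection rule is an assumption.

```python
from typing import List, Sequence


def floor_log2(value: int) -> int:
    """Position of the leading one bit (hardware can derive it from a leading-zero count)."""
    return value.bit_length() - 1


def approx_product(a: int, b: int) -> int:
    """Approximate a * b by a signed power of two using exponent addition only."""
    if a == 0 or b == 0:
        return 0
    exponent = floor_log2(abs(a)) + floor_log2(abs(b))  # add-only step
    magnitude = 1 << exponent
    return magnitude if (a < 0) == (b < 0) else -magnitude


def approx_score(q: Sequence[int], k: Sequence[int]) -> int:
    return sum(approx_product(a, b) for a, b in zip(q, k))


def predict_top_keys(q: Sequence[int], keys: Sequence[Sequence[int]], keep: int) -> List[int]:
    """Return indices of the `keep` keys with the largest approximate scores."""
    scores = [approx_score(q, k) for k in keys]
    order = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    return sorted(order[:keep])


kept = predict_top_keys([8, 4], [[1, 1], [16, 2], [-16, 2], [4, 8]], keep=2)
```

Because each operand is rounded down to a power of two, every approximate product is within a factor of four of the true magnitude, which is coarse but can be enough to rank keys before the exact attention computation.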

cuPilot: A Strategy-Coordinated Multi-agent Framework for CUDA Kernel Evolution

Dec 23, 2025

Abstract: Optimizing CUDA kernels is a challenging and labor-intensive task, given the need for hardware-software co-design expertise and the proprietary nature of high-performance kernel libraries. While recent large language models (LLMs) combined with evolutionary algorithms show promise in automatic kernel optimization, existing approaches often fall short in performance due to their suboptimal agent designs and mismatched evolution representations. This work identifies these mismatches and proposes cuPilot, a strategy-coordinated multi-agent framework that introduces strategy as an intermediate semantic representation for kernel evolution. Key contributions include a strategy-coordinated evolution algorithm, roofline-guided prompting, and strategy-level population initialization. Experimental results show that the kernels generated by cuPilot achieve an average speedup of 3.09x over PyTorch on a benchmark of 100 kernels. On the GEMM tasks, cuPilot showcases sophisticated optimizations and achieves high utilization of critical hardware units. The generated kernels are open-sourced at https://github.com/champloo2878/cuPilot-Kernels.git.
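For readers unfamiliar with the roofline model mentioned in this abstract, the standard formula bounds attainable throughput by the smaller of peak compute and memory bandwidth times arithmetic intensity. The sketch below computes that bound and labels a kernel as memory-bound or compute-bound. The hardware numbers are made up, and how cuPilot turns such a result into a prompt is not described in the abstract, so that step is not shown.

```python
from typing import Tuple


def roofline(
    peak_flops: float,            # peak compute throughput, FLOP/s
    bandwidth: float,             # memory bandwidth, bytes/s
    arithmetic_intensity: float,  # FLOPs performed per byte moved
) -> Tuple[float, str]:
    """Return the attainable FLOP/s bound and which resource limits it."""
    memory_roof = bandwidth * arithmetic_intensity
    if memory_roof < peak_flops:
        return memory_roof, "memory-bound"
    return peak_flops, "compute-bound"


# Hypothetical device: 100 FLOP/s peak, 10 bytes/s bandwidth.
low_intensity = roofline(peak_flops=100.0, bandwidth=10.0, arithmetic_intensity=2.0)
high_intensity = roofline(peak_flops=100.0, bandwidth=10.0, arithmetic_intensity=50.0)
```

A memory-bound verdict suggests strategies such as tiling or data reuse, while a compute-bound verdict points toward better use of the arithmetic units.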

PADE: A Predictor-Free Sparse Attention Accelerator via Unified Execution and Stage Fusion

Dec 16, 2025

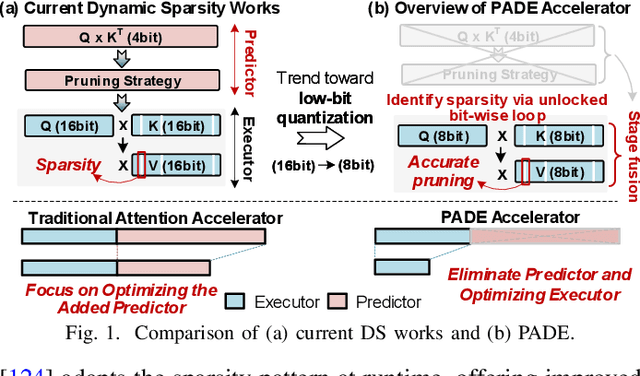

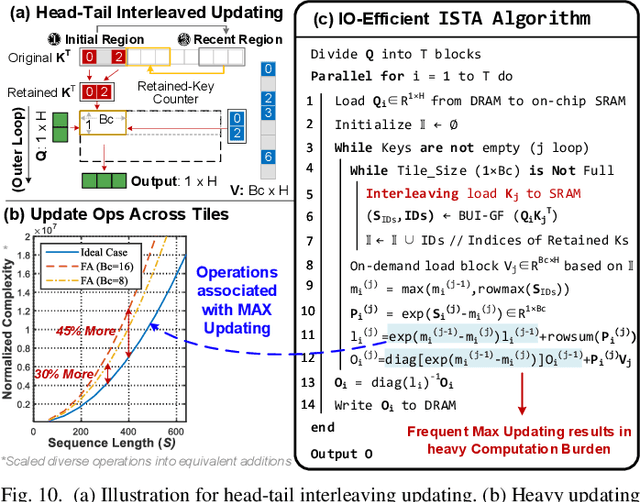

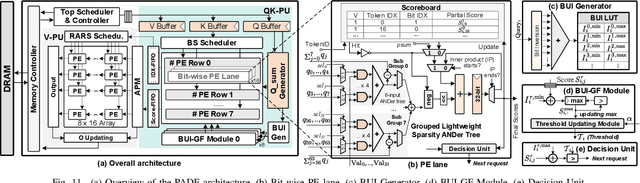

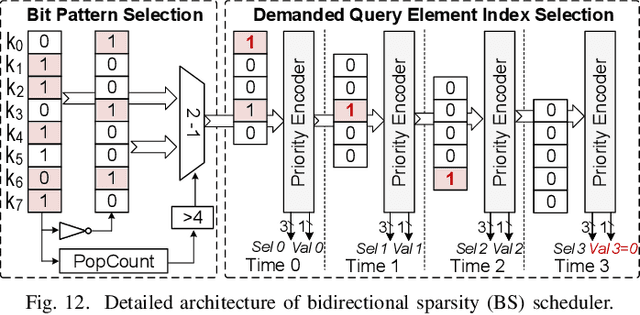

Abstract: Attention-based models have revolutionized AI, but the quadratic cost of self-attention incurs severe computational and memory overhead. Sparse attention methods alleviate this by skipping low-relevance token pairs. However, current approaches lack practicality due to the heavy expense of an added sparsity predictor, which severely reduces their hardware efficiency. This paper advances the state-of-the-art (SOTA) by proposing a bit-serial enable stage-fusion (BSF) mechanism, which eliminates the need for a separate predictor. However, it faces key challenges: 1) inaccurate bit-sliced sparsity speculation leads to incorrect pruning; 2) hardware under-utilization due to fine-grained and imbalanced bit-level workloads; 3) tiling difficulty caused by the row-wise dependency in sparsity pruning criteria. We propose PADE, a predictor-free algorithm-hardware co-design for dynamic sparse attention acceleration. PADE features three key innovations: 1) a bit-wise uncertainty interval-enabled guard filtering (BUI-GF) strategy to accurately identify trivial tokens during each bit round; 2) bidirectional sparsity-based out-of-order execution (BS-OOE) to improve hardware utilization; 3) interleaving-based sparsity-tiled attention (ISTA) to reduce both I/O and computational complexity. These techniques, combined with custom accelerator designs, enable practical sparsity acceleration without relying on an added sparsity predictor. Extensive experiments on 22 benchmarks show that PADE achieves a 7.43x speedup and 31.1x higher energy efficiency than the Nvidia H100 GPU. Compared to SOTA accelerators, PADE achieves 5.1x, 4.3x, and 3.4x energy savings over Sanger, DOTA, and SOFA, respectively.
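The notion of an uncertainty interval during bit-serial processing can be shown with a small integer example: once only the top bits of each key element have been consumed, the unseen low bits bound how far the final score can move, and a key whose best case still trails another key's worst case can be dropped early. The sketch below is an illustrative assumption (unsigned keys, signed queries, a simple margin rule), not PADE's actual BUI-GF strategy.

```python
from typing import List, Sequence, Tuple


def score_interval(
    q: Sequence[int], k: Sequence[int], bits_seen: int, total_bits: int = 8
) -> Tuple[int, int]:
    """Bounds on sum(q_i * k_i) when only the top `bits_seen` bits of each k_i are known."""
    low_bits = total_bits - bits_seen
    residual_max = (1 << low_bits) - 1  # largest value the unseen bits can add
    partial = sum(qi * ((ki >> low_bits) << low_bits) for qi, ki in zip(q, k))
    lower = partial + residual_max * sum(qi for qi in q if qi < 0)
    upper = partial + residual_max * sum(qi for qi in q if qi > 0)
    return lower, upper


def surviving_keys(
    q: Sequence[int],
    keys: Sequence[Sequence[int]],
    bits_seen: int,
    margin: int = 0,  # assumed guard band; larger values prune less aggressively
    total_bits: int = 8,
) -> List[int]:
    """Keep keys whose upper bound can still reach the best lower bound minus a margin."""
    intervals = [score_interval(q, k, bits_seen, total_bits) for k in keys]
    best_lower = max(lo for lo, _ in intervals)
    return [i for i, (_, hi) in enumerate(intervals) if hi >= best_lower - margin]


bounds = score_interval([3, -2], [200, 17], bits_seen=4)
alive = surviving_keys([3, -2], [[200, 17], [10, 250], [190, 30]], bits_seen=4)
```

With all bits consumed the interval collapses to the exact score, so pruning decisions made from the intervals never discard a key that the exact computation, under the same margin rule, would have kept.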
