Shuai Li

Refer to the report for detailed contributions

GDPO-SR: Group Direct Preference Optimization for One-Step Generative Image Super-Resolution

Mar 16, 2026Abstract:Recently, reinforcement learning (RL) has been employed for improving generative image super-resolution (ISR) performance. However, the current efforts are focused on multi-step generative ISR, while one-step generative ISR remains underexplored due to its limited stochasticity. In addition, RL methods such as Direct Preference Optimization (DPO) require the generation of positive and negative sample pairs offline, leading to a limited number of samples, while Group Relative Policy Optimization (GRPO) only calculates the likelihood of the entire image, ignoring local details that are crucial for ISR. In this paper, we propose Group Direct Preference Optimization (GDPO), a novel approach to integrate RL into one-step generative ISR model training. First, we introduce a noise-aware one-step diffusion model that can generate diverse ISR outputs. To prevent performance degradation caused by noise injection, we introduce an unequal-timestep strategy to decouple the timestep of noise addition from that of diffusion. We then present the GDPO strategy, which integrates the principle of GRPO into DPO, to calculate the group-relative advantage of each online generated sample for model optimization. Meanwhile, an attribute-aware reward function is designed to dynamically evaluate the score of each sample based on its statistics of smooth and texture areas. Experiments demonstrate the effectiveness of GDPO in enhancing the performance of one-step generative ISR models. Code: https://github.com/Joyies/GDPO.

MiniAppBench: Evaluating the Shift from Text to Interactive HTML Responses in LLM-Powered Assistants

Mar 10, 2026Abstract:With the rapid advancement of Large Language Models (LLMs) in code generation, human-AI interaction is evolving from static text responses to dynamic, interactive HTML-based applications, which we term MiniApps. These applications require models to not only render visual interfaces but also construct customized interaction logic that adheres to real-world principles. However, existing benchmarks primarily focus on algorithmic correctness or static layout reconstruction, failing to capture the capabilities required for this new paradigm. To address this gap, we introduce MiniAppBench, the first comprehensive benchmark designed to evaluate principle-driven, interactive application generation. Sourced from a real-world application with 10M+ generations, MiniAppBench distills 500 tasks across six domains (e.g., Games, Science, and Tools). Furthermore, to tackle the challenge of evaluating open-ended interactions where no single ground truth exists, we propose MiniAppEval, an agentic evaluation framework. Leveraging browser automation, it performs human-like exploratory testing to systematically assess applications across three dimensions: Intention, Static, and Dynamic. Our experiments reveal that current LLMs still face significant challenges in generating high-quality MiniApps, while MiniAppEval demonstrates high alignment with human judgment, establishing a reliable standard for future research. Our code is available in github.com/MiniAppBench.

Restoration Adaptation for Semantic Segmentation on Low Quality Images

Feb 15, 2026Abstract:In real-world scenarios, the performance of semantic segmentation often deteriorates when processing low-quality (LQ) images, which may lack clear semantic structures and high-frequency details. Although image restoration techniques offer a promising direction for enhancing degraded visual content, conventional real-world image restoration (Real-IR) models primarily focus on pixel-level fidelity and often fail to recover task-relevant semantic cues, limiting their effectiveness when directly applied to downstream vision tasks. Conversely, existing segmentation models trained on high-quality data lack robustness under real-world degradations. In this paper, we propose Restoration Adaptation for Semantic Segmentation (RASS), which effectively integrates semantic image restoration into the segmentation process, enabling high-quality semantic segmentation on the LQ images directly. Specifically, we first propose a Semantic-Constrained Restoration (SCR) model, which injects segmentation priors into the restoration model by aligning its cross-attention maps with segmentation masks, encouraging semantically faithful image reconstruction. Then, RASS transfers semantic restoration knowledge into segmentation through LoRA-based module merging and task-specific fine-tuning, thereby enhancing the model's robustness to LQ images. To validate the effectiveness of our framework, we construct a real-world LQ image segmentation dataset with high-quality annotations, and conduct extensive experiments on both synthetic and real-world LQ benchmarks. The results show that SCR and RASS significantly outperform state-of-the-art methods in segmentation and restoration tasks. Code, models, and datasets will be available at https://github.com/Ka1Guan/RASS.git.

Fast Flow Matching based Conditional Independence Tests for Causal Discovery

Feb 09, 2026Abstract:Constraint-based causal discovery methods require a large number of conditional independence (CI) tests, which severely limits their practical applicability due to high computational complexity. Therefore, it is crucial to design an algorithm that accelerates each individual test. To this end, we propose the Flow Matching-based Conditional Independence Test (FMCIT). The proposed test leverages the high computational efficiency of flow matching and requires the model to be trained only once throughout the entire causal discovery procedure, substantially accelerating causal discovery. According to numerical experiments, FMCIT effectively controls type-I error and maintains high testing power under the alternative hypothesis, even in the presence of high-dimensional conditioning sets. In addition, we further integrate FMCIT into a two-stage guided PC skeleton learning framework, termed GPC-FMCIT, which combines fast screening with guided, budgeted refinement using FMCIT. This design yields explicit bounds on the number of CI queries while maintaining high statistical power. Experiments on synthetic and real-world causal discovery tasks demonstrate favorable accuracy-efficiency trade-offs over existing CI testing methods and PC variants.

Research on a Camera Position Measurement Method based on a Parallel Perspective Error Transfer Model

Feb 08, 2026Abstract:Camera pose estimation from sparse correspondences is a fundamental problem in geometric computer vision and remains particularly challenging in near-field scenarios, where strong perspective effects and heterogeneous measurement noise can significantly degrade the stability of analytic PnP solutions. In this paper, we present a geometric error propagation framework for camera pose estimation based on a parallel perspective approximation. By explicitly modeling how image measurement errors propagate through perspective geometry, we derive an error transfer model that characterizes the relationship between feature point distribution, camera depth, and pose estimation uncertainty. Building on this analysis, we develop a pose estimation method that leverages parallel perspective initialization and error-aware weighting within a Gauss-Newton optimization scheme, leading to improved robustness in proximity operations. Extensive experiments on both synthetic data and real-world images, covering diverse conditions such as strong illumination, surgical lighting, and underwater low-light environments, demonstrate that the proposed approach achieves accuracy and robustness comparable to state-of-the-art analytic and iterative PnP methods, while maintaining high computational efficiency. These results highlight the importance of explicit geometric error modeling for reliable camera pose estimation in challenging near-field settings.

LLM4Fluid: Large Language Models as Generalizable Neural Solvers for Fluid Dynamics

Jan 29, 2026Abstract:Deep learning has emerged as a promising paradigm for spatio-temporal modeling of fluid dynamics. However, existing approaches often suffer from limited generalization to unseen flow conditions and typically require retraining when applied to new scenarios. In this paper, we present LLM4Fluid, a spatio-temporal prediction framework that leverages Large Language Models (LLMs) as generalizable neural solvers for fluid dynamics. The framework first compresses high-dimensional flow fields into a compact latent space via reduced-order modeling enhanced with a physics-informed disentanglement mechanism, effectively mitigating spatial feature entanglement while preserving essential flow structures. A pretrained LLM then serves as a temporal processor, autoregressively predicting the dynamics of physical sequences with time series prompts. To bridge the modality gap between prompts and physical sequences, which can otherwise degrade prediction accuracy, we propose a dedicated modality alignment strategy that resolves representational mismatch and stabilizes long-term prediction. Extensive experiments across diverse flow scenarios demonstrate that LLM4Fluid functions as a robust and generalizable neural solver without retraining, achieving state-of-the-art accuracy while exhibiting powerful zero-shot and in-context learning capabilities. Code and datasets are publicly available at https://github.com/qisongxiao/LLM4Fluid.

Interpretability and Individuality in Knee MRI: Patient-Specific Radiomic Fingerprint with Reconstructed Healthy Personas

Jan 13, 2026Abstract:For automated assessment of knee MRI scans, both accuracy and interpretability are essential for clinical use and adoption. Traditional radiomics rely on predefined features chosen at the population level; while more interpretable, they are often too restrictive to capture patient-specific variability and can underperform end-to-end deep learning (DL). To address this, we propose two complementary strategies that bring individuality and interpretability: radiomic fingerprints and healthy personas. First, a radiomic fingerprint is a dynamically constructed, patient-specific feature set derived from MRI. Instead of applying a uniform population-level signature, our model predicts feature relevance from a pool of candidate features and selects only those most predictive for each patient, while maintaining feature-level interpretability. This fingerprint can be viewed as a latent-variable model of feature usage, where an image-conditioned predictor estimates usage probabilities and a transparent logistic regression with global coefficients performs classification. Second, a healthy persona synthesises a pathology-free baseline for each patient using a diffusion model trained to reconstruct healthy knee MRIs. Comparing features extracted from pathological images against their personas highlights deviations from normal anatomy, enabling intuitive, case-specific explanations of disease manifestations. We systematically compare fingerprints, personas, and their combination across three clinical tasks. Experimental results show that both approaches yield performance comparable to or surpassing state-of-the-art DL models, while supporting interpretability at multiple levels. Case studies further illustrate how these perspectives facilitate human-explainable biomarker discovery and pathology localisation.

Multi-Subspace Multi-Modal Modeling for Diffusion Models: Estimation, Convergence and Mixture of Experts

Jan 04, 2026Abstract:Recently, diffusion models have achieved a great performance with a small dataset of size $n$ and a fast optimization process. However, the estimation error of diffusion models suffers from the curse of dimensionality $n^{-1/D}$ with the data dimension $D$. Since images are usually a union of low-dimensional manifolds, current works model the data as a union of linear subspaces with Gaussian latent and achieve a $1/\sqrt{n}$ bound. Though this modeling reflects the multi-manifold property, the Gaussian latent can not capture the multi-modal property of the latent manifold. To bridge this gap, we propose the mixture subspace of low-rank mixture of Gaussian (MoLR-MoG) modeling, which models the target data as a union of $K$ linear subspaces, and each subspace admits a mixture of Gaussian latent ($n_k$ modals with dimension $d_k$). With this modeling, the corresponding score function naturally has a mixture of expert (MoE) structure, captures the multi-modal information, and contains nonlinear property. We first conduct real-world experiments to show that the generation results of MoE-latent MoG NN are much better than MoE-latent Gaussian score. Furthermore, MoE-latent MoG NN achieves a comparable performance with MoE-latent Unet with $10 \times$ parameters. These results indicate that the MoLR-MoG modeling is reasonable and suitable for real-world data. After that, based on such MoE-latent MoG score, we provide a $R^4\sqrt{Σ_{k=1}^Kn_k}\sqrt{Σ_{k=1}^Kn_kd_k}/\sqrt{n}$ estimation error, which escapes the curse of dimensionality by using data structure. Finally, we study the optimization process and prove the convergence guarantee under the MoLR-MoG modeling. Combined with these results, under a setting close to real-world data, this work explains why diffusion models only require a small training sample and enjoy a fast optimization process to achieve a great performance.

GenCAMO: Scene-Graph Contextual Decoupling for Environment-aware and Mask-free Camouflage Image-Dense Annotation Generation

Jan 03, 2026Abstract:Conceal dense prediction (CDP), especially RGB-D camouflage object detection and open-vocabulary camouflage object segmentation, plays a crucial role in advancing the understanding and reasoning of complex camouflage scenes. However, high-quality and large-scale camouflage datasets with dense annotation remain scarce due to expensive data collection and labeling costs. To address this challenge, we explore leveraging generative models to synthesize realistic camouflage image-dense data for training CDP models with fine-grained representations, prior knowledge, and auxiliary reasoning. Concretely, our contributions are threefold: (i) we introduce GenCAMO-DB, a large-scale camouflage dataset with multi-modal annotations, including depth maps, scene graphs, attribute descriptions, and text prompts; (ii) we present GenCAMO, an environment-aware and mask-free generative framework that produces high-fidelity camouflage image-dense annotations; (iii) extensive experiments across multiple modalities demonstrate that GenCAMO significantly improves dense prediction performance on complex camouflage scenes by providing high-quality synthetic data. The code and datasets will be released after paper acceptance.

DiscoX: Benchmarking Discourse-Level Translation task in Expert Domains

Nov 14, 2025

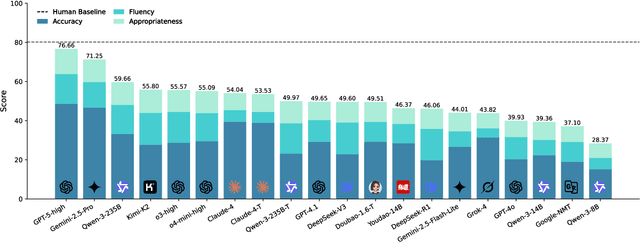

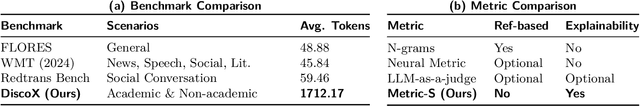

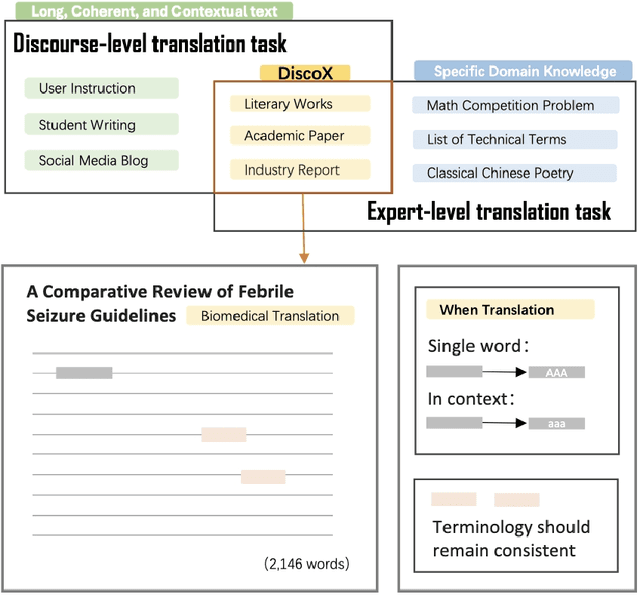

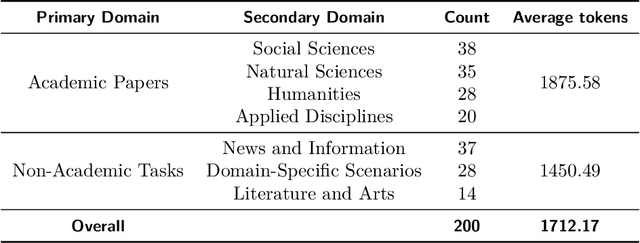

Abstract:The evaluation of discourse-level translation in expert domains remains inadequate, despite its centrality to knowledge dissemination and cross-lingual scholarly communication. While these translations demand discourse-level coherence and strict terminological precision, current evaluation methods predominantly focus on segment-level accuracy and fluency. To address this limitation, we introduce DiscoX, a new benchmark for discourse-level and expert-level Chinese-English translation. It comprises 200 professionally-curated texts from 7 domains, with an average length exceeding 1700 tokens. To evaluate performance on DiscoX, we also develop Metric-S, a reference-free system that provides fine-grained automatic assessments across accuracy, fluency, and appropriateness. Metric-S demonstrates strong consistency with human judgments, significantly outperforming existing metrics. Our experiments reveal a remarkable performance gap: even the most advanced LLMs still trail human experts on these tasks. This finding validates the difficulty of DiscoX and underscores the challenges that remain in achieving professional-grade machine translation. The proposed benchmark and evaluation system provide a robust framework for more rigorous evaluation, facilitating future advancements in LLM-based translation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge