Cheng Xu

Microsoft

DCR: Quantifying Data Contamination in LLMs Evaluation

Jul 15, 2025Abstract:The rapid advancement of large language models (LLMs) has heightened concerns about benchmark data contamination (BDC), where models inadvertently memorize evaluation data, inflating performance metrics and undermining genuine generalization assessment. This paper introduces the Data Contamination Risk (DCR) framework, a lightweight, interpretable pipeline designed to detect and quantify BDC across four granular levels: semantic, informational, data, and label. By synthesizing contamination scores via a fuzzy inference system, DCR produces a unified DCR Factor that adjusts raw accuracy to reflect contamination-aware performance. Validated on 9 LLMs (0.5B-72B) across sentiment analysis, fake news detection, and arithmetic reasoning tasks, the DCR framework reliably diagnoses contamination severity and with accuracy adjusted using the DCR Factor to within 4% average error across the three benchmarks compared to the uncontaminated baseline. Emphasizing computational efficiency and transparency, DCR provides a practical tool for integrating contamination assessment into routine evaluations, fostering fairer comparisons and enhancing the credibility of LLM benchmarking practices.

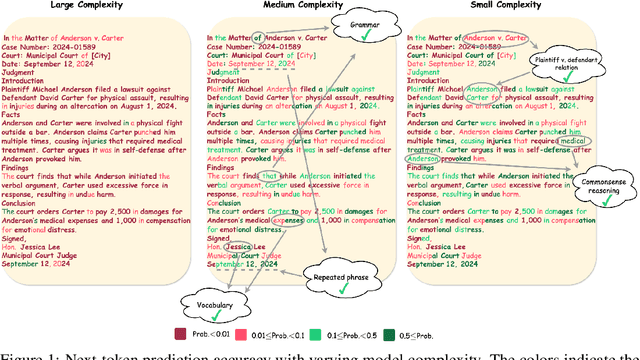

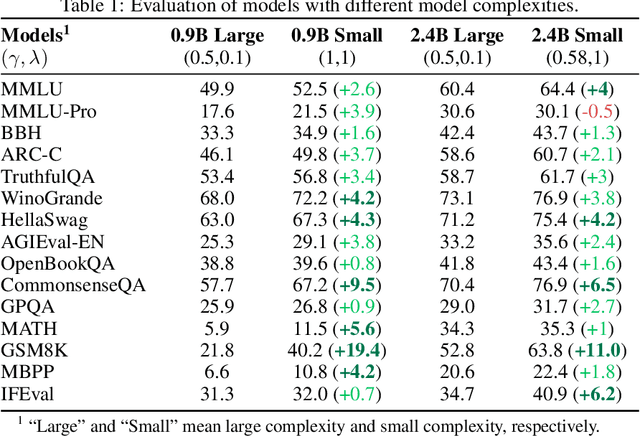

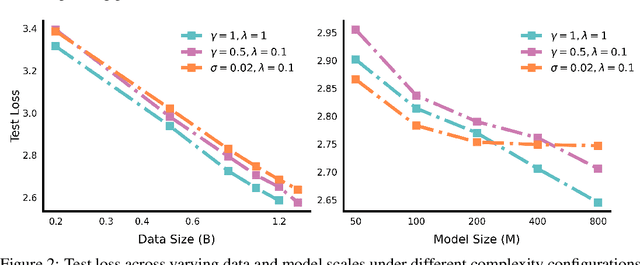

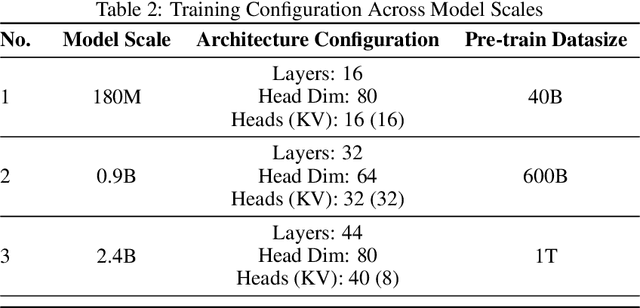

Scalable Complexity Control Facilitates Reasoning Ability of LLMs

May 29, 2025

Abstract:The reasoning ability of large language models (LLMs) has been rapidly advancing in recent years, attracting interest in more fundamental approaches that can reliably enhance their generalizability. This work demonstrates that model complexity control, conveniently implementable by adjusting the initialization rate and weight decay coefficient, improves the scaling law of LLMs consistently over varying model sizes and data sizes. This gain is further illustrated by comparing the benchmark performance of 2.4B models pretrained on 1T tokens with different complexity hyperparameters. Instead of fixing the initialization std, we found that a constant initialization rate (the exponent of std) enables the scaling law to descend faster in both model and data sizes. These results indicate that complexity control is a promising direction for the continual advancement of LLMs.

PreP-OCR: A Complete Pipeline for Document Image Restoration and Enhanced OCR Accuracy

May 28, 2025Abstract:This paper introduces PreP-OCR, a two-stage pipeline that combines document image restoration with semantic-aware post-OCR correction to enhance both visual clarity and textual consistency, thereby improving text extraction from degraded historical documents. First, we synthesize document-image pairs from plaintext, rendering them with diverse fonts and layouts and then applying a randomly ordered set of degradation operations. An image restoration model is trained on this synthetic data, using multi-directional patch extraction and fusion to process large images. Second, a ByT5 post-OCR model, fine-tuned on synthetic historical text pairs, addresses remaining OCR errors. Detailed experiments on 13,831 pages of real historical documents in English, French, and Spanish show that the PreP-OCR pipeline reduces character error rates by 63.9-70.3% compared to OCR on raw images. Our pipeline demonstrates the potential of integrating image restoration with linguistic error correction for digitizing historical archives.

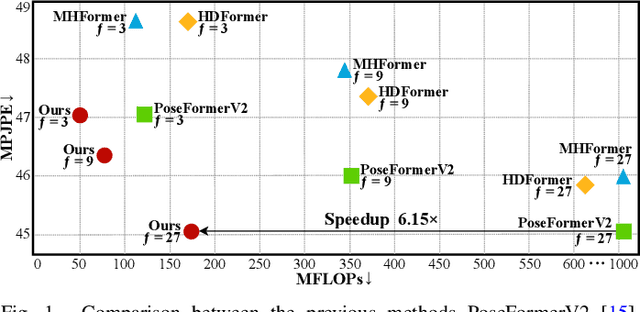

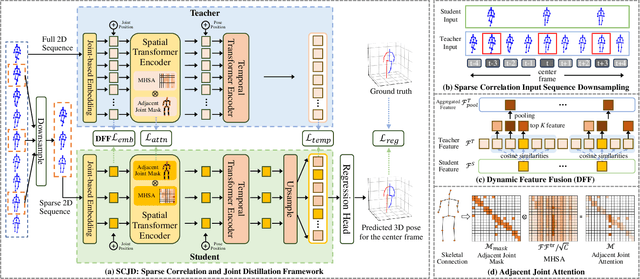

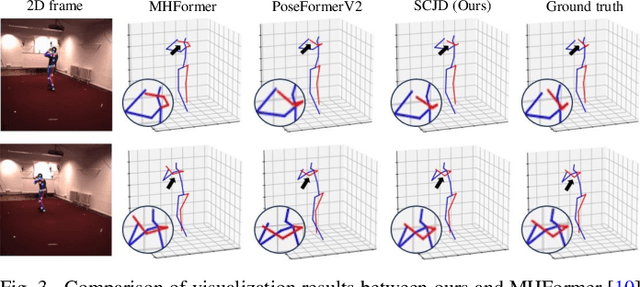

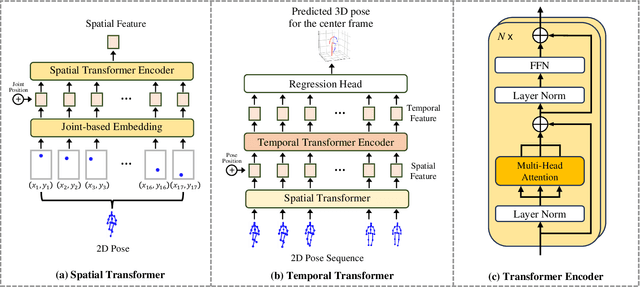

SCJD: Sparse Correlation and Joint Distillation for Efficient 3D Human Pose Estimation

Mar 18, 2025

Abstract:Existing 3D Human Pose Estimation (HPE) methods achieve high accuracy but suffer from computational overhead and slow inference, while knowledge distillation methods fail to address spatial relationships between joints and temporal correlations in multi-frame inputs. In this paper, we propose Sparse Correlation and Joint Distillation (SCJD), a novel framework that balances efficiency and accuracy for 3D HPE. SCJD introduces Sparse Correlation Input Sequence Downsampling to reduce redundancy in student network inputs while preserving inter-frame correlations. For effective knowledge transfer, we propose Dynamic Joint Spatial Attention Distillation, which includes Dynamic Joint Embedding Distillation to enhance the student's feature representation using the teacher's multi-frame context feature, and Adjacent Joint Attention Distillation to improve the student network's focus on adjacent joint relationships for better spatial understanding. Additionally, Temporal Consistency Distillation aligns the temporal correlations between teacher and student networks through upsampling and global supervision. Extensive experiments demonstrate that SCJD achieves state-of-the-art performance. Code is available at https://github.com/wileychan/SCJD.

Rotation-Adaptive Point Cloud Domain Generalization via Intricate Orientation Learning

Feb 04, 2025

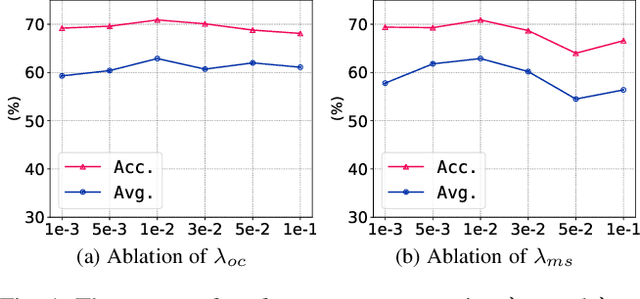

Abstract:The vulnerability of 3D point cloud analysis to unpredictable rotations poses an open yet challenging problem: orientation-aware 3D domain generalization. Cross-domain robustness and adaptability of 3D representations are crucial but not easily achieved through rotation augmentation. Motivated by the inherent advantages of intricate orientations in enhancing generalizability, we propose an innovative rotation-adaptive domain generalization framework for 3D point cloud analysis. Our approach aims to alleviate orientational shifts by leveraging intricate samples in an iterative learning process. Specifically, we identify the most challenging rotation for each point cloud and construct an intricate orientation set by optimizing intricate orientations. Subsequently, we employ an orientation-aware contrastive learning framework that incorporates an orientation consistency loss and a margin separation loss, enabling effective learning of categorically discriminative and generalizable features with rotation consistency. Extensive experiments and ablations conducted on 3D cross-domain benchmarks firmly establish the state-of-the-art performance of our proposed approach in the context of orientation-aware 3D domain generalization.

Dr. Tongue: Sign-Oriented Multi-label Detection for Remote Tongue Diagnosis

Jan 06, 2025Abstract:Tongue diagnosis is a vital tool in Western and Traditional Chinese Medicine, providing key insights into a patient's health by analyzing tongue attributes. The COVID-19 pandemic has heightened the need for accurate remote medical assessments, emphasizing the importance of precise tongue attribute recognition via telehealth. To address this, we propose a Sign-Oriented multi-label Attributes Detection framework. Our approach begins with an adaptive tongue feature extraction module that standardizes tongue images and mitigates environmental factors. This is followed by a Sign-oriented Network (SignNet) that identifies specific tongue attributes, emulating the diagnostic process of experienced practitioners and enabling comprehensive health evaluations. To validate our methodology, we developed an extensive tongue image dataset specifically designed for telemedicine. Unlike existing datasets, ours is tailored for remote diagnosis, with a comprehensive set of attribute labels. This dataset will be openly available, providing a valuable resource for research. Initial tests have shown improved accuracy in detecting various tongue attributes, highlighting our framework's potential as an essential tool for remote medical assessments.

Subgoal-based Hierarchical Reinforcement Learning for Multi-Agent Collaboration

Aug 21, 2024Abstract:Recent advancements in reinforcement learning have made significant impacts across various domains, yet they often struggle in complex multi-agent environments due to issues like algorithm instability, low sampling efficiency, and the challenges of exploration and dimensionality explosion. Hierarchical reinforcement learning (HRL) offers a structured approach to decompose complex tasks into simpler sub-tasks, which is promising for multi-agent settings. This paper advances the field by introducing a hierarchical architecture that autonomously generates effective subgoals without explicit constraints, enhancing both flexibility and stability in training. We propose a dynamic goal generation strategy that adapts based on environmental changes. This method significantly improves the adaptability and sample efficiency of the learning process. Furthermore, we address the critical issue of credit assignment in multi-agent systems by synergizing our hierarchical architecture with a modified QMIX network, thus improving overall strategy coordination and efficiency. Comparative experiments with mainstream reinforcement learning algorithms demonstrate the superior convergence speed and performance of our approach in both single-agent and multi-agent environments, confirming its effectiveness and flexibility in complex scenarios. Our code is open-sourced at: \url{https://github.com/SICC-Group/GMAH}.

G2Face: High-Fidelity Reversible Face Anonymization via Generative and Geometric Priors

Aug 18, 2024

Abstract:Reversible face anonymization, unlike traditional face pixelization, seeks to replace sensitive identity information in facial images with synthesized alternatives, preserving privacy without sacrificing image clarity. Traditional methods, such as encoder-decoder networks, often result in significant loss of facial details due to their limited learning capacity. Additionally, relying on latent manipulation in pre-trained GANs can lead to changes in ID-irrelevant attributes, adversely affecting data utility due to GAN inversion inaccuracies. This paper introduces G\textsuperscript{2}Face, which leverages both generative and geometric priors to enhance identity manipulation, achieving high-quality reversible face anonymization without compromising data utility. We utilize a 3D face model to extract geometric information from the input face, integrating it with a pre-trained GAN-based decoder. This synergy of generative and geometric priors allows the decoder to produce realistic anonymized faces with consistent geometry. Moreover, multi-scale facial features are extracted from the original face and combined with the decoder using our novel identity-aware feature fusion blocks (IFF). This integration enables precise blending of the generated facial patterns with the original ID-irrelevant features, resulting in accurate identity manipulation. Extensive experiments demonstrate that our method outperforms existing state-of-the-art techniques in face anonymization and recovery, while preserving high data utility. Code is available at https://github.com/Harxis/G2Face.

Beat-It: Beat-Synchronized Multi-Condition 3D Dance Generation

Jul 10, 2024Abstract:Dance, as an art form, fundamentally hinges on the precise synchronization with musical beats. However, achieving aesthetically pleasing dance sequences from music is challenging, with existing methods often falling short in controllability and beat alignment. To address these shortcomings, this paper introduces Beat-It, a novel framework for beat-specific, key pose-guided dance generation. Unlike prior approaches, Beat-It uniquely integrates explicit beat awareness and key pose guidance, effectively resolving two main issues: the misalignment of generated dance motions with musical beats, and the inability to map key poses to specific beats, critical for practical choreography. Our approach disentangles beat conditions from music using a nearest beat distance representation and employs a hierarchical multi-condition fusion mechanism. This mechanism seamlessly integrates key poses, beats, and music features, mitigating condition conflicts and offering rich, multi-conditioned guidance for dance generation. Additionally, a specially designed beat alignment loss ensures the generated dance movements remain in sync with the designated beats. Extensive experiments confirm Beat-It's superiority over existing state-of-the-art methods in terms of beat alignment and motion controllability.

Technique Report of CVPR 2024 PBDL Challenges

Jun 15, 2024

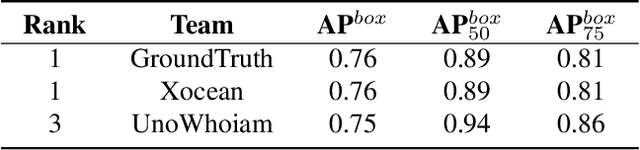

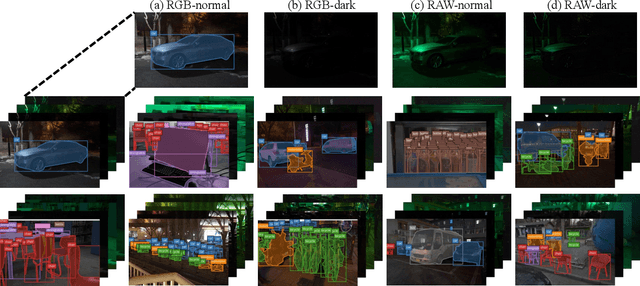

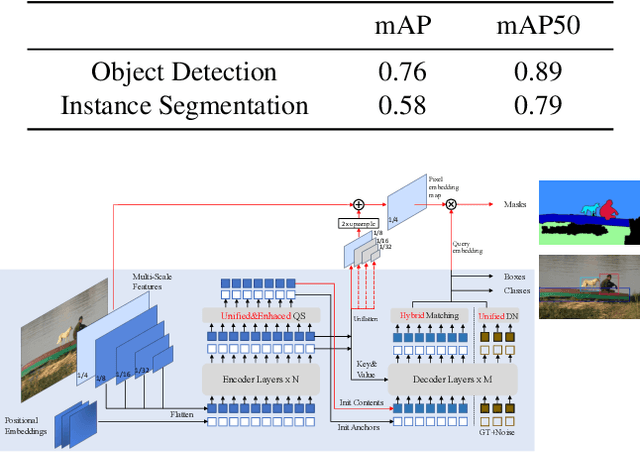

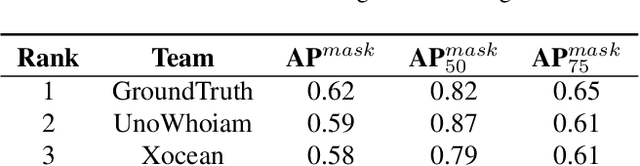

Abstract:The intersection of physics-based vision and deep learning presents an exciting frontier for advancing computer vision technologies. By leveraging the principles of physics to inform and enhance deep learning models, we can develop more robust and accurate vision systems. Physics-based vision aims to invert the processes to recover scene properties such as shape, reflectance, light distribution, and medium properties from images. In recent years, deep learning has shown promising improvements for various vision tasks, and when combined with physics-based vision, these approaches can enhance the robustness and accuracy of vision systems. This technical report summarizes the outcomes of the Physics-Based Vision Meets Deep Learning (PBDL) 2024 challenge, held in CVPR 2024 workshop. The challenge consisted of eight tracks, focusing on Low-Light Enhancement and Detection as well as High Dynamic Range (HDR) Imaging. This report details the objectives, methodologies, and results of each track, highlighting the top-performing solutions and their innovative approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge