Lihui Wang

Shape-morphing programming of soft materials on complex geometries via neural operator

Jan 16, 2026Abstract:Shape-morphing soft materials can enable diverse target morphologies through voxel-level material distribution design, offering significant potential for various applications. Despite progress in basic shape-morphing design with simple geometries, achieving advanced applications such as conformal implant deployment or aerodynamic morphing requires accurate and diverse morphing designs on complex geometries, which remains challenging. Here, we present a Spectral and Spatial Neural Operator (S2NO), which enables high-fidelity morphing prediction on complex geometries. S2NO effectively captures global and local morphing behaviours on irregular computational domains by integrating Laplacian eigenfunction encoding and spatial convolutions. Combining S2NO with evolutionary algorithms enables voxel-level optimisation of material distributions for shape morphing programming on various complex geometries, including irregular-boundary shapes, porous structures, and thin-walled structures. Furthermore, the neural operator's discretisation-invariant property enables super-resolution material distribution design, further expanding the diversity and complexity of morphing design. These advancements significantly improve the efficiency and capability of programming complex shape morphing.

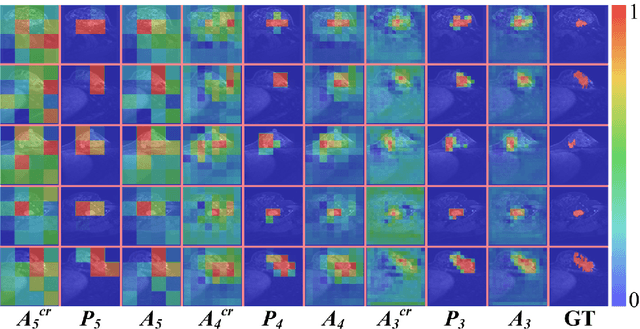

CD-DPE: Dual-Prompt Expert Network based on Convolutional Dictionary Feature Decoupling for Multi-Contrast MRI Super-Resolution

Nov 18, 2025Abstract:Multi-contrast magnetic resonance imaging (MRI) super-resolution intends to reconstruct high-resolution (HR) images from low-resolution (LR) scans by leveraging structural information present in HR reference images acquired with different contrasts. This technique enhances anatomical detail and soft tissue differentiation, which is vital for early diagnosis and clinical decision-making. However, inherent contrasts disparities between modalities pose fundamental challenges in effectively utilizing reference image textures to guide target image reconstruction, often resulting in suboptimal feature integration. To address this issue, we propose a dual-prompt expert network based on a convolutional dictionary feature decoupling (CD-DPE) strategy for multi-contrast MRI super-resolution. Specifically, we introduce an iterative convolutional dictionary feature decoupling module (CD-FDM) to separate features into cross-contrast and intra-contrast components, thereby reducing redundancy and interference. To fully integrate these features, a novel dual-prompt feature fusion expert module (DP-FFEM) is proposed. This module uses a frequency prompt to guide the selection of relevant reference features for incorporation into the target image, while an adaptive routing prompt determines the optimal method for fusing reference and target features to enhance reconstruction quality. Extensive experiments on public multi-contrast MRI datasets demonstrate that CD-DPE outperforms state-of-the-art methods in reconstructing fine details. Additionally, experiments on unseen datasets demonstrated that CD-DPE exhibits strong generalization capabilities.

Unsupervised Multi-Attention Meta Transformer for Rotating Machinery Fault Diagnosis

Sep 11, 2025

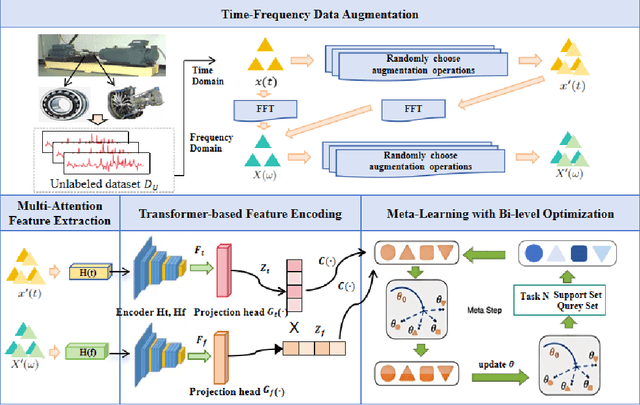

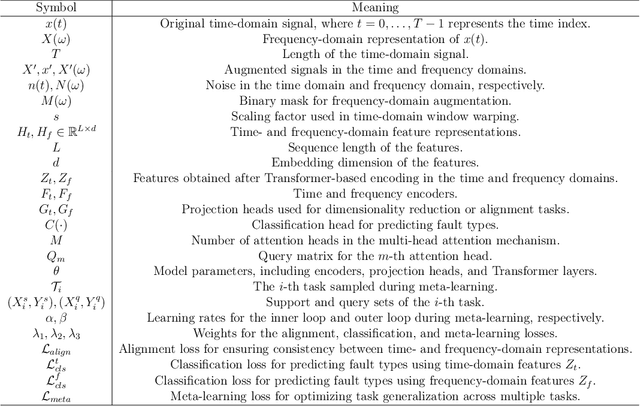

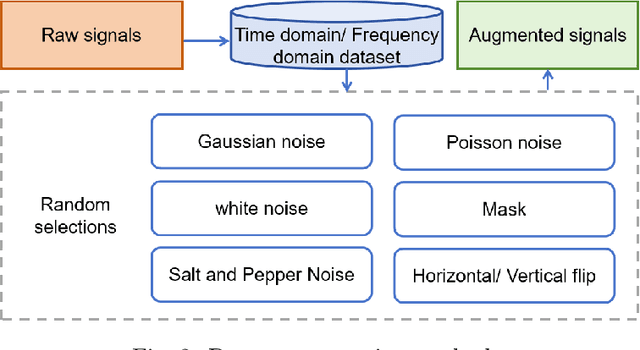

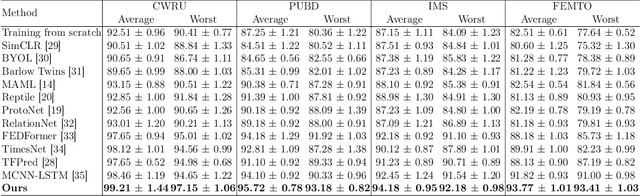

Abstract:The intelligent fault diagnosis of rotating mechanical equipment usually requires a large amount of labeled sample data. However, in practical industrial applications, acquiring enough data is both challenging and expensive in terms of time and cost. Moreover, different types of rotating mechanical equipment with different unique mechanical properties, require separate training of diagnostic models for each case. To address the challenges of limited fault samples and the lack of generalizability in prediction models for practical engineering applications, we propose a Multi-Attention Meta Transformer method for few-shot unsupervised rotating machinery fault diagnosis (MMT-FD). This framework extracts potential fault representations from unlabeled data and demonstrates strong generalization capabilities, making it suitable for diagnosing faults across various types of mechanical equipment. The MMT-FD framework integrates a time-frequency domain encoder and a meta-learning generalization model. The time-frequency domain encoder predicts status representations generated through random augmentations in the time-frequency domain. These enhanced data are then fed into a meta-learning network for classification and generalization training, followed by fine-tuning using a limited amount of labeled data. The model is iteratively optimized using a small number of contrastive learning iterations, resulting in high efficiency. To validate the framework, we conducted experiments on a bearing fault dataset and rotor test bench data. The results demonstrate that the MMT-FD model achieves 99\% fault diagnosis accuracy with only 1\% of labeled sample data, exhibiting robust generalization capabilities.

SUFFICIENT: A scan-specific unsupervised deep learning framework for high-resolution 3D isotropic fetal brain MRI reconstruction

May 26, 2025Abstract:High-quality 3D fetal brain MRI reconstruction from motion-corrupted 2D slices is crucial for clinical diagnosis. Reliable slice-to-volume registration (SVR)-based motion correction and super-resolution reconstruction (SRR) methods are essential. Deep learning (DL) has demonstrated potential in enhancing SVR and SRR when compared to conventional methods. However, it requires large-scale external training datasets, which are difficult to obtain for clinical fetal MRI. To address this issue, we propose an unsupervised iterative SVR-SRR framework for isotropic HR volume reconstruction. Specifically, SVR is formulated as a function mapping a 2D slice and a 3D target volume to a rigid transformation matrix, which aligns the slice to the underlying location in the target volume. The function is parameterized by a convolutional neural network, which is trained by minimizing the difference between the volume slicing at the predicted position and the input slice. In SRR, a decoding network embedded within a deep image prior framework is incorporated with a comprehensive image degradation model to produce the high-resolution (HR) volume. The deep image prior framework offers a local consistency prior to guide the reconstruction of HR volumes. By performing a forward degradation model, the HR volume is optimized by minimizing loss between predicted slices and the observed slices. Comprehensive experiments conducted on large-magnitude motion-corrupted simulation data and clinical data demonstrate the superior performance of the proposed framework over state-of-the-art fetal brain reconstruction frameworks.

GESH-Net: Graph-Enhanced Spherical Harmonic Convolutional Networks for Cortical Surface Registration

Oct 18, 2024Abstract:Currently, cortical surface registration techniques based on classical methods have been well developed. However, a key issue with classical methods is that for each pair of images to be registered, it is necessary to search for the optimal transformation in the deformation space according to a specific optimization algorithm until the similarity measure function converges, which cannot meet the requirements of real-time and high-precision in medical image registration. Researching cortical surface registration based on deep learning models has become a new direction. But so far, there are still only a few studies on cortical surface image registration based on deep learning. Moreover, although deep learning methods theoretically have stronger representation capabilities, surpassing the most advanced classical methods in registration accuracy and distortion control remains a challenge. Therefore, to address this challenge, this paper constructs a deep learning model to study the technology of cortical surface image registration. The specific work is as follows: (1) An unsupervised cortical surface registration network based on a multi-scale cascaded structure is designed, and a convolution method based on spherical harmonic transformation is introduced to register cortical surface data. This solves the problem of scale-inflexibility of spherical feature transformation and optimizes the multi-scale registration process. (2)By integrating the attention mechanism, a graph-enhenced module is introduced into the registration network, using the graph attention module to help the network learn global features of cortical surface data, enhancing the learning ability of the network. The results show that the graph attention module effectively enhances the network's ability to extract global features, and its registration results have significant advantages over other methods.

DFGET: Displacement-Field Assisted Graph Energy Transmitter for Gland Instance Segmentation

Dec 11, 2023

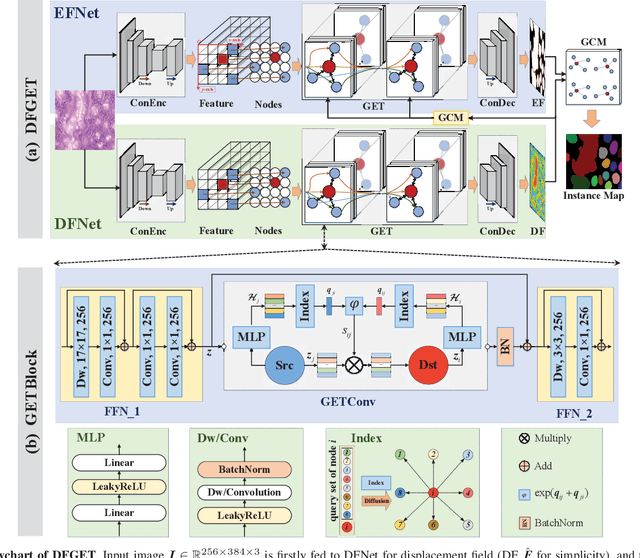

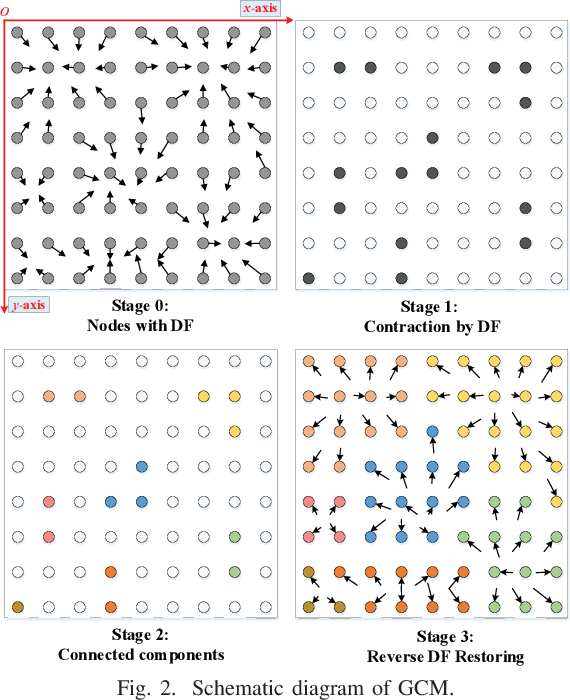

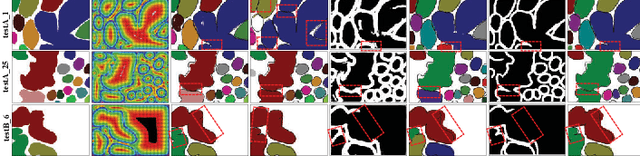

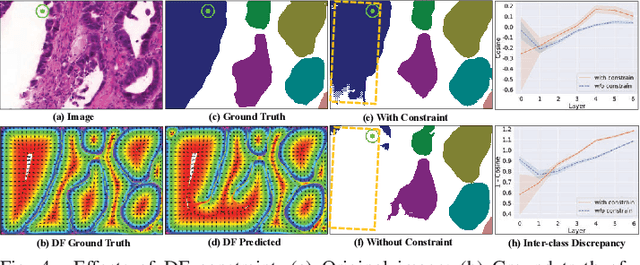

Abstract:Gland instance segmentation is an essential but challenging task in the diagnosis and treatment of adenocarcinoma. The existing models usually achieve gland instance segmentation through multi-task learning and boundary loss constraint. However, how to deal with the problems of gland adhesion and inaccurate boundary in segmenting the complex samples remains a challenge. In this work, we propose a displacement-field assisted graph energy transmitter (DFGET) framework to solve these problems. Specifically, a novel message passing manner based on anisotropic diffusion is developed to update the node features, which can distinguish the isomorphic graphs and improve the expressivity of graph nodes for complex samples. Using such graph framework, the gland semantic segmentation map and the displacement field (DF) of the graph nodes are estimated with two graph network branches. With the constraint of DF, a graph cluster module based on diffusion theory is presented to improve the intra-class feature consistency and inter-class feature discrepancy, as well as to separate the adherent glands from the semantic segmentation maps. Extensive comparison and ablation experiments on the GlaS dataset demonstrate the superiority of DFGET and effectiveness of the proposed anisotropic message passing manner and clustering method. Compared to the best comparative model, DFGET increases the object-Dice and object-F1 score by 2.5% and 3.4% respectively, while decreases the object-HD by 32.4%, achieving state-of-the-art performance.

AdaFuse: Adaptive Medical Image Fusion Based on Spatial-Frequential Cross Attention

Oct 24, 2023

Abstract:Multi-modal medical image fusion is essential for the precise clinical diagnosis and surgical navigation since it can merge the complementary information in multi-modalities into a single image. The quality of the fused image depends on the extracted single modality features as well as the fusion rules for multi-modal information. Existing deep learning-based fusion methods can fully exploit the semantic features of each modality, they cannot distinguish the effective low and high frequency information of each modality and fuse them adaptively. To address this issue, we propose AdaFuse, in which multimodal image information is fused adaptively through frequency-guided attention mechanism based on Fourier transform. Specifically, we propose the cross-attention fusion (CAF) block, which adaptively fuses features of two modalities in the spatial and frequency domains by exchanging key and query values, and then calculates the cross-attention scores between the spatial and frequency features to further guide the spatial-frequential information fusion. The CAF block enhances the high-frequency features of the different modalities so that the details in the fused images can be retained. Moreover, we design a novel loss function composed of structure loss and content loss to preserve both low and high frequency information. Extensive comparison experiments on several datasets demonstrate that the proposed method outperforms state-of-the-art methods in terms of both visual quality and quantitative metrics. The ablation experiments also validate the effectiveness of the proposed loss and fusion strategy.

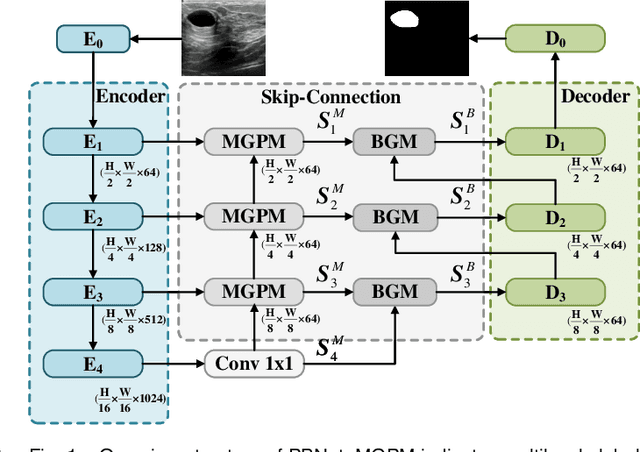

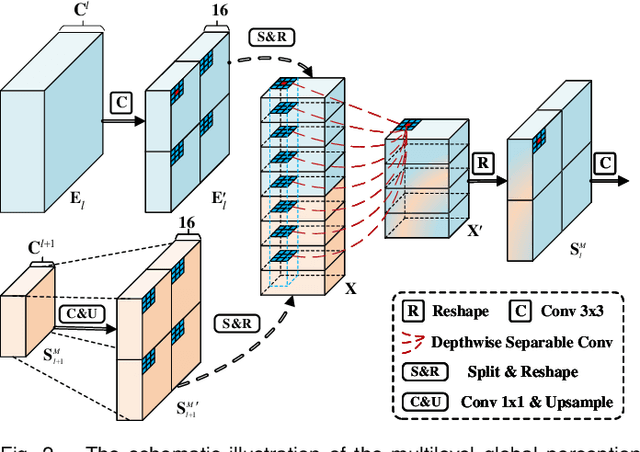

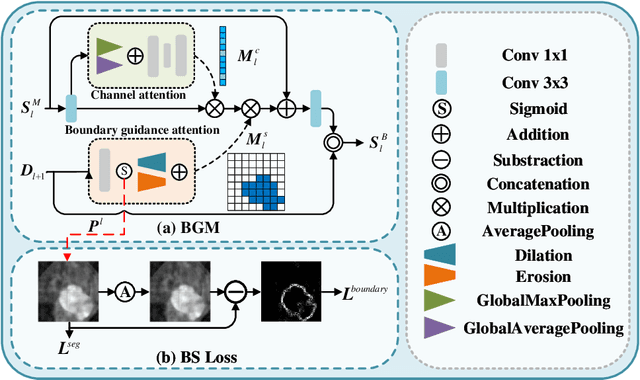

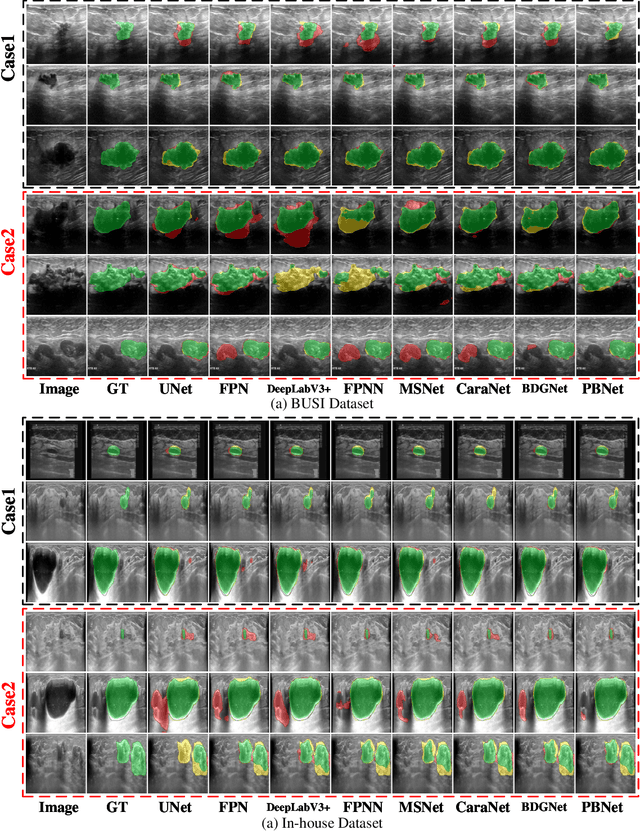

Multilevel Perception Boundary-guided Network for Breast Lesion Segmentation in Ultrasound Images

Oct 23, 2023

Abstract:Automatic segmentation of breast tumors from the ultrasound images is essential for the subsequent clinical diagnosis and treatment plan. Although the existing deep learning-based methods have achieved significant progress in automatic segmentation of breast tumor, their performance on tumors with similar intensity to the normal tissues is still not pleasant, especially for the tumor boundaries. To address this issue, we propose a PBNet composed by a multilevel global perception module (MGPM) and a boundary guided module (BGM) to segment breast tumors from ultrasound images. Specifically, in MGPM, the long-range spatial dependence between the voxels in a single level feature maps are modeled, and then the multilevel semantic information is fused to promote the recognition ability of the model for non-enhanced tumors. In BGM, the tumor boundaries are extracted from the high-level semantic maps using the dilation and erosion effects of max pooling, such boundaries are then used to guide the fusion of low and high-level features. Moreover, to improve the segmentation performance for tumor boundaries, a multi-level boundary-enhanced segmentation (BS) loss is proposed. The extensive comparison experiments on both publicly available dataset and in-house dataset demonstrate that the proposed PBNet outperforms the state-of-the-art methods in terms of both qualitative visualization results and quantitative evaluation metrics, with the Dice score, Jaccard coefficient, Specificity and HD95 improved by 0.70%, 1.1%, 0.1% and 2.5% respectively. In addition, the ablation experiments validate that the proposed MGPM is indeed beneficial for distinguishing the non-enhanced tumors and the BGM as well as the BS loss are also helpful for refining the segmentation contours of the tumor.

Progressive Dual Priori Network for Generalized Breast Tumor Segmentation

Oct 20, 2023

Abstract:To promote the generalization ability of breast tumor segmentation models, as well as to improve the segmentation performance for breast tumors with smaller size, low-contrast amd irregular shape, we propose a progressive dual priori network (PDPNet) to segment breast tumors from dynamic enhanced magnetic resonance images (DCE-MRI) acquired at different sites. The PDPNet first cropped tumor regions with a coarse-segmentation based localization module, then the breast tumor mask was progressively refined by using the weak semantic priori and cross-scale correlation prior knowledge. To validate the effectiveness of PDPNet, we compared it with several state-of-the-art methods on multi-center datasets. The results showed that, comparing against the suboptimal method, the DSC, SEN, KAPPA and HD95 of PDPNet were improved 3.63\%, 8.19\%, 5.52\%, and 3.66\% respectively. In addition, through ablations, we demonstrated that the proposed localization module can decrease the influence of normal tissues and therefore improve the generalization ability of the model. The weak semantic priors allow focusing on tumor regions to avoid missing small tumors and low-contrast tumors. The cross-scale correlation priors are beneficial for promoting the shape-aware ability for irregual tumors. Thus integrating them in a unified framework improved the multi-center breast tumor segmentation performance.

IRS Assisted Federated Learning A Broadband Over-the-Air Aggregation Approach

Oct 11, 2023Abstract:We consider a broadband over-the-air computation empowered model aggregation approach for wireless federated learning (FL) systems and propose to leverage an intelligent reflecting surface (IRS) to combat wireless fading and noise. We first investigate the conventional node-selection based framework, where a few edge nodes are dropped in model aggregation to control the aggregation error. We analyze the performance of this node-selection based framework and derive an upper bound on its performance loss, which is shown to be related to the selected edge nodes. Then, we seek to minimize the mean-squared error (MSE) between the desired global gradient parameters and the actually received ones by optimizing the selected edge nodes, their transmit equalization coefficients, the IRS phase shifts, and the receive factors of the cloud server. By resorting to the matrix lifting technique and difference-of-convex programming, we successfully transform the formulated optimization problem into a convex one and solve it using off-the-shelf solvers. To improve learning performance, we further propose a weight-selection based FL framework. In such a framework, we assign each edge node a proper weight coefficient in model aggregation instead of discarding any of them to reduce the aggregation error, i.e., amplitude alignment of the received local gradient parameters from different edge nodes is not required. We also analyze the performance of this weight-selection based framework and derive an upper bound on its performance loss, followed by minimizing the MSE via optimizing the weight coefficients of the edge nodes, their transmit equalization coefficients, the IRS phase shifts, and the receive factors of the cloud server. Furthermore, we use the MNIST dataset for simulations to evaluate the performance of both node-selection and weight-selection based FL frameworks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge