Kishore Srinivas

Language-Embedded Gaussian Splats (LEGS): Incrementally Building Room-Scale Representations with a Mobile Robot

Sep 26, 2024

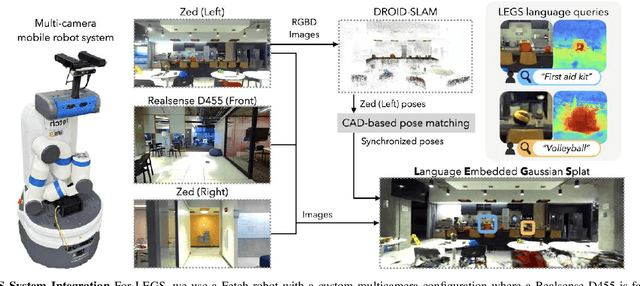

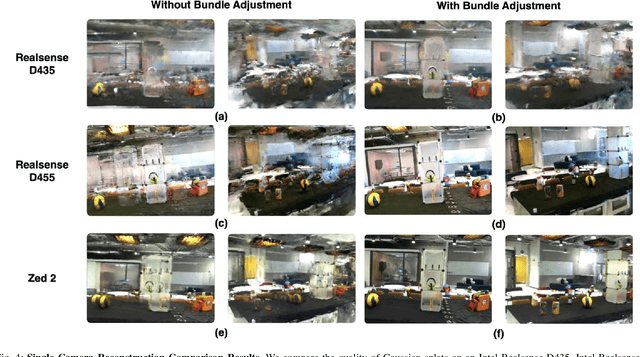

Abstract:Building semantic 3D maps is valuable for searching for objects of interest in offices, warehouses, stores, and homes. We present a mapping system that incrementally builds a Language-Embedded Gaussian Splat (LEGS): a detailed 3D scene representation that encodes both appearance and semantics in a unified representation. LEGS is trained online as a robot traverses its environment to enable localization of open-vocabulary object queries. We evaluate LEGS on 4 room-scale scenes where we query for objects in the scene to assess how LEGS can capture semantic meaning. We compare LEGS to LERF and find that while both systems have comparable object query success rates, LEGS trains over 3.5x faster than LERF. Results suggest that a multi-camera setup and incremental bundle adjustment can boost visual reconstruction quality in constrained robot trajectories, and suggest LEGS can localize open-vocabulary and long-tail object queries with up to 66% accuracy.

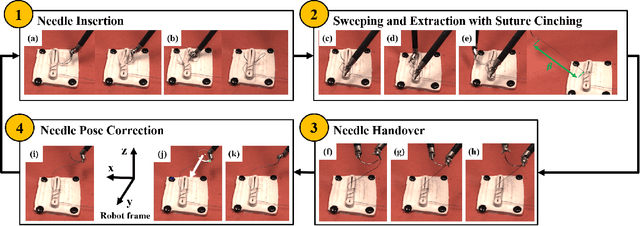

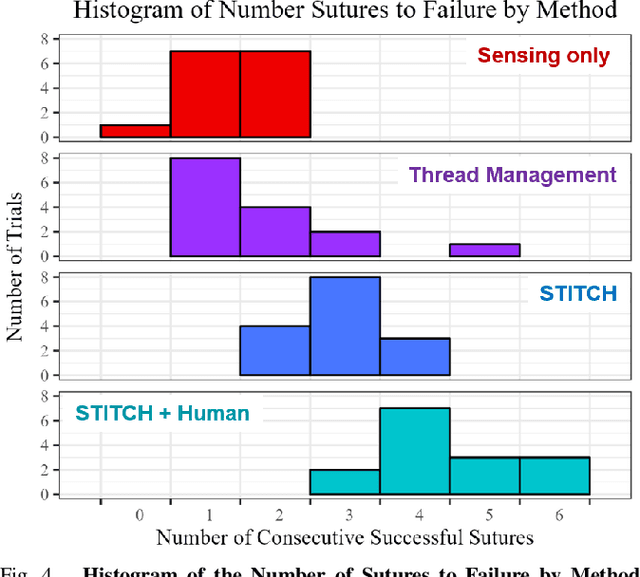

STITCH: Augmented Dexterity for Suture Throws Including Thread Coordination and Handoffs

Apr 08, 2024

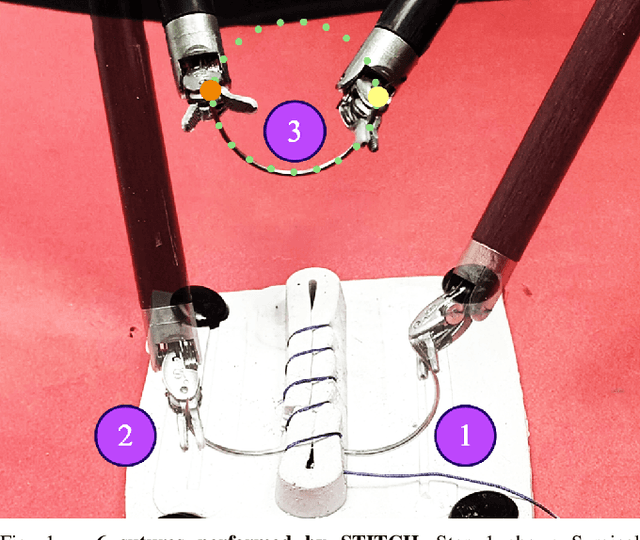

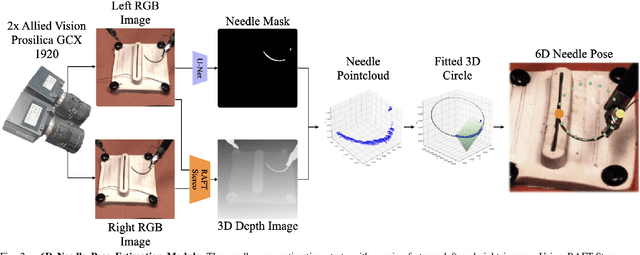

Abstract:We present STITCH: an augmented dexterity pipeline that performs Suture Throws Including Thread Coordination and Handoffs. STITCH iteratively performs needle insertion, thread sweeping, needle extraction, suture cinching, needle handover, and needle pose correction with failure recovery policies. We introduce a novel visual 6D needle pose estimation framework using a stereo camera pair and new suturing motion primitives. We compare STITCH to baselines, including a proprioception-only and a policy without visual servoing. In physical experiments across 15 trials, STITCH achieves an average of 2.93 sutures without human intervention and 4.47 sutures with human intervention. See https://sites.google.com/berkeley.edu/stitch for code and supplemental materials.

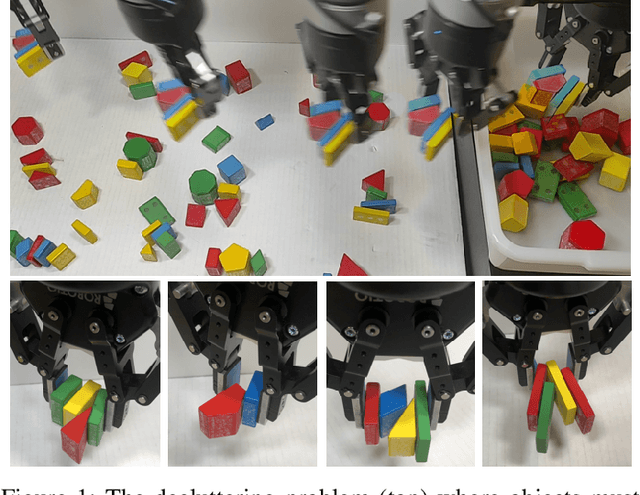

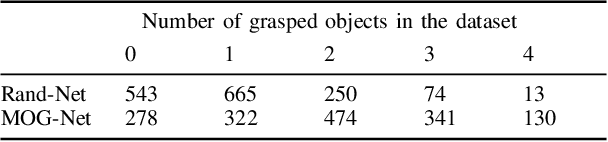

The Busboy Problem: Efficient Tableware Decluttering Using Consolidation and Multi-Object Grasps

Jul 08, 2023

Abstract:We present the "Busboy Problem": automating an efficient decluttering of cups, bowls, and silverware from a planar surface. As grasping and transporting individual items is highly inefficient, we propose policies to generate grasps for multiple items. We introduce the metric of Objects per Trip (OpT) carried by the robot to the collection bin to analyze the improvement seen as a result of our policies. In physical experiments with singulated items, we find that consolidation and multi-object grasps resulted in an 1.8x improvement in OpT, compared to methods without multi-object grasps. See https://sites.google.com/berkeley.edu/busboyproblem for code and supplemental materials.

Automating Vascular Shunt Insertion with the dVRK Surgical Robot

Nov 04, 2022Abstract:Vascular shunt insertion is a fundamental surgical procedure used to temporarily restore blood flow to tissues. It is often performed in the field after major trauma. We formulate a problem of automated vascular shunt insertion and propose a pipeline to perform Automated Vascular Shunt Insertion (AVSI) using a da Vinci Research Kit. The pipeline uses a learned visual model to estimate the locus of the vessel rim, plans a grasp on the rim, and moves to grasp at that point. The first robot gripper then pulls the rim to stretch open the vessel with a dilation motion. The second robot gripper then proceeds to insert a shunt into the vessel phantom (a model of the blood vessel) with a chamfer tilt followed by a screw motion. Results suggest that AVSI achieves a high success rate even with tight tolerances and varying vessel orientations up to 30{\deg}. Supplementary material, dataset, videos, and visualizations can be found at https://sites.google.com/berkeley.edu/autolab-avsi.

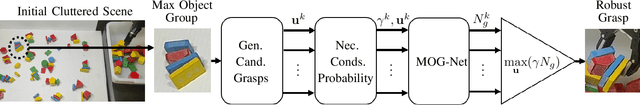

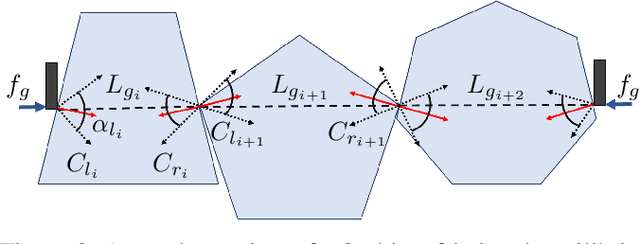

Learning to Efficiently Plan Robust Frictional Multi-Object Grasps

Oct 13, 2022

Abstract:We consider a decluttering problem where multiple rigid convex polygonal objects rest in randomly placed positions and orientations on a planar surface and must be efficiently transported to a packing box using both single and multi-object grasps. Prior work considered frictionless multi-object grasping. In this paper, we introduce friction to increase picks per hour. We train a neural network using real examples to plan robust multi-object grasps. In physical experiments, we find an 11.7% increase in success rates, a 1.7x increase in picks per hour, and an 8.2x decrease in grasp planning time compared to prior work on multi-object grasping. Videos are available at https://youtu.be/pEZpHX5FZIs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge