Jiawen Zhu

YOLO-Master: MOE-Accelerated with Specialized Transformers for Enhanced Real-time Detection

Dec 30, 2025

Abstract:Existing Real-Time Object Detection (RTOD) methods commonly adopt YOLO-like architectures for their favorable trade-off between accuracy and speed. However, these models rely on static dense computation that applies uniform processing to all inputs, misallocating representational capacity and computational resources, for example by over-allocating on trivial scenes while under-serving complex ones. This mismatch results in both computational redundancy and suboptimal detection performance. To overcome this limitation, we propose YOLO-Master, a novel YOLO-like framework that introduces instance-conditional adaptive computation for RTOD. This is achieved through an Efficient Sparse Mixture-of-Experts (ES-MoE) block that dynamically allocates computational resources to each input according to its scene complexity. At its core, a lightweight dynamic routing network guides expert specialization during training through a diversity-enhancing objective, encouraging complementary expertise among experts. Additionally, the routing network adaptively learns to activate only the most relevant experts, thereby improving detection performance while minimizing computational overhead during inference. Comprehensive experiments on five large-scale benchmarks demonstrate the superiority of YOLO-Master. On MS COCO, our model achieves 42.4% AP with 1.62ms latency, outperforming YOLOv13-N by +0.8% mAP with 17.8% faster inference. Notably, the gains are most pronounced on challenging dense scenes, while the model preserves efficiency on typical inputs and maintains real-time inference speed. Code will be available.
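As a rough illustration of the sparse routing idea described in the abstract, the sketch below shows generic top-k Mixture-of-Experts gating in plain Python. It is not the ES-MoE block itself: the function names (`route_top_k`, `sparse_moe`), the toy scalar experts, and the choice of `k=2` are all assumptions made for this example, and the diversity-enhancing training objective is not modeled.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def sparse_moe(x, gate_logits, experts, k=2):
    """Weighted sum of outputs from the selected experts only.

    Experts that are not selected are never evaluated, which is where
    the compute saving of sparse routing comes from.
    """
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))

# Hypothetical toy experts: scalar functions standing in for network branches.
experts = [lambda x: x, lambda x: 2 * x, lambda x: x * x, lambda x: -x]

# Hypothetical router output for one input (in practice produced by a small network).
gate_logits = [0.1, 2.0, 1.5, -1.0]

selected = route_top_k(gate_logits, k=2)
y = sparse_moe(3.0, gate_logits, experts, k=2)
print(selected, y)
```

In this toy setting only two of the four experts run per input; a learned router would produce different `gate_logits` for each input, so different inputs can activate different experts.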

Regularizing Subspace Redundancy of Low-Rank Adaptation

Jul 28, 2025

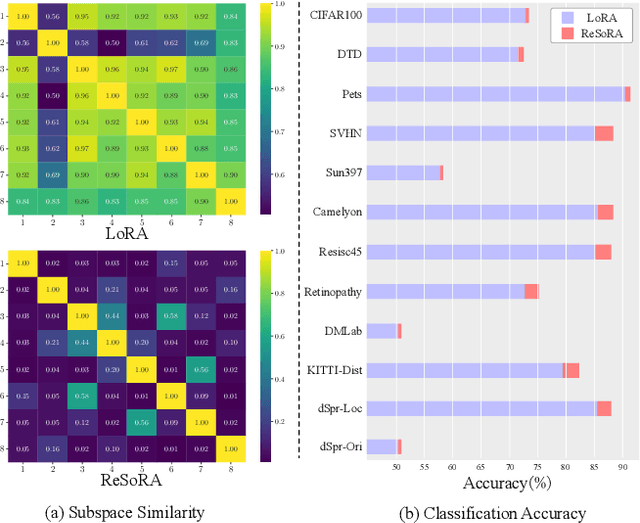

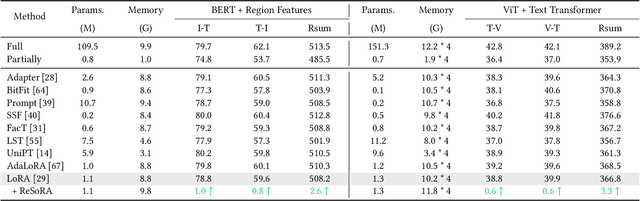

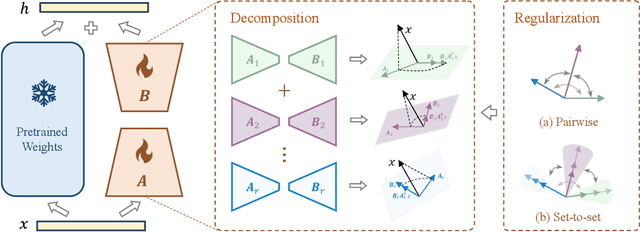

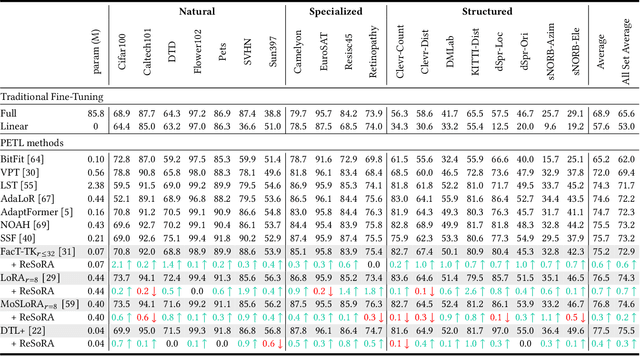

Abstract:Low-Rank Adaptation (LoRA) and its variants have delivered strong capability in Parameter-Efficient Transfer Learning (PETL) by minimizing trainable parameters and benefiting from reparameterization. However, their projection matrices remain unrestricted during training, causing high representation redundancy and diminishing the effectiveness of feature adaptation in the resulting subspaces. While existing methods mitigate this by manually adjusting the rank or implicitly applying channel-wise masks, they lack flexibility and generalize poorly across various datasets and architectures. Hence, we propose ReSoRA, a method that explicitly models redundancy between mapping subspaces and adaptively Regularizes Subspace redundancy of Low-Rank Adaptation. Specifically, it theoretically decomposes the low-rank submatrices into multiple equivalent subspaces and systematically applies de-redundancy constraints to the feature distributions across different projections. Extensive experiments validate that our proposed method consistently facilitates existing state-of-the-art PETL methods across various backbones and datasets in vision-language retrieval and standard visual classification benchmarks. Besides, as a training supervision, ReSoRA can be seamlessly integrated into existing approaches in a plug-and-play manner, with no additional inference costs. Code is publicly available at: https://github.com/Lucenova/ReSoRA.
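For readers unfamiliar with the low-rank reparameterization that the abstract builds on, here is a minimal pure-Python sketch of a standard LoRA weight update, W' = W + (alpha / r) · B · A. It does not implement ReSoRA's subspace de-redundancy regularizer; the helper names (`matmul`, `lora_weight`), the matrix sizes, and the value of `alpha` are assumptions chosen for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]

def lora_weight(w, a, b, alpha):
    """Merge a frozen weight w (d_out x d_in) with a low-rank update.

    a is the down-projection (r x d_in), b is the up-projection (d_out x r).
    """
    r = len(a)             # rank of the update
    delta = matmul(b, a)   # (d_out x r) @ (r x d_in) -> (d_out x d_in)
    scale = alpha / r
    return [
        [w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
        for i in range(len(w))
    ]

# Hypothetical frozen weight: d_out = 3, d_in = 4.
w = [[1.0, 0.0, 0.0, 0.0],
     [0.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0, 0.0]]

a = [[1.0, 0.0, 0.0, 1.0]]        # rank r = 1
b_init = [[0.0], [0.0], [0.0]]    # zero init: the merged weight equals w at the start
b_trained = [[1.0], [0.0], [2.0]] # made-up values standing in for a trained B

merged_init = lora_weight(w, a, b_init, alpha=2.0)
merged_trained = lora_weight(w, a, b_trained, alpha=2.0)

# Trainable parameter counts: full fine-tuning vs. the two low-rank factors.
full_params = len(w) * len(w[0])
lora_params = len(a) * len(a[0]) + len(b_trained) * len(b_trained[0])
print(merged_trained, full_params, lora_params)
```

Because the update can be merged into `w` after training, inference uses a single weight matrix, which is consistent with the abstract's point that training-time supervision adds no inference cost.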

Adapting Large Language Models for Parameter-Efficient Log Anomaly Detection

Mar 11, 2025

Abstract:Log Anomaly Detection (LAD) seeks to identify atypical patterns in log data that are crucial to assessing the security and condition of systems. Although Large Language Models (LLMs) have shown tremendous success in various fields, the use of LLMs in enabling the detection of log anomalies is largely unexplored. This work aims to fill this gap. Due to the prohibitive costs involved in fully fine-tuning LLMs, we explore the use of parameter-efficient fine-tuning techniques (PEFTs) for adapting LLMs to LAD. To have an in-depth exploration of the potential of LLM-driven LAD, we present a comprehensive investigation of leveraging two of the most popular PEFTs -- Low-Rank Adaptation (LoRA) and Representation Fine-tuning (ReFT) -- to tap into three prominent LLMs of varying size, including RoBERTa, GPT-2, and Llama-3, for parameter-efficient LAD. Comprehensive experiments on four public log datasets are performed to reveal important insights into effective LLM-driven LAD in several key perspectives, including the efficacy of these PEFT-based LLM-driven LAD methods, their stability, sample efficiency, robustness w.r.t. unstable logs, and cross-dataset generalization. Code is available at https://github.com/mala-lab/LogADReft.

3UR-LLM: An End-to-End Multimodal Large Language Model for 3D Scene Understanding

Jan 14, 2025

Abstract:Multi-modal Large Language Models (MLLMs) exhibit impressive capabilities in 2D tasks, yet encounter challenges in discerning the spatial positions, interrelations, and causal logic in scenes when transitioning from 2D to 3D representations. We find that the limitations mainly lie in: i) the high annotation cost restricting the scale-up of volumes of 3D scene data, and ii) the lack of a straightforward and effective way to perceive 3D information, which results in prolonged training durations and complicates the streamlined framework. To this end, we develop a pipeline based on open-source 2D MLLMs and LLMs to generate high-quality 3D-text pairs and construct 3DS-160K to enhance the pre-training process. Leveraging this high-quality pre-training data, we introduce the 3UR-LLM model, an end-to-end 3D MLLM designed for precise interpretation of 3D scenes, showcasing exceptional capability in navigating the complexities of the physical world. 3UR-LLM directly receives a 3D point cloud as input and projects 3D features fused with text instructions into a manageable set of tokens. Considering the computation burden derived from these hybrid tokens, we design a 3D compressor module to cohesively compress the 3D spatial cues and textual narrative. 3UR-LLM achieves promising performance with respect to the previous SOTAs; for instance, 3UR-LLM exceeds its counterparts by 7.1% CIDEr on ScanQA, while utilizing fewer training resources. The code and model weights for 3UR-LLM and the 3DS-160K benchmark are available at 3UR-LLM.

SUTrack: Towards Simple and Unified Single Object Tracking

Dec 26, 2024

Abstract:In this paper, we propose a simple yet unified single object tracking (SOT) framework, dubbed SUTrack. It consolidates five SOT tasks (RGB-based, RGB-Depth, RGB-Thermal, RGB-Event, RGB-Language Tracking) into a unified model trained in a single session. Due to the distinct nature of the data, current methods typically design individual architectures and train separate models for each task. This fragmentation results in redundant training processes, repetitive technological innovations, and limited cross-modal knowledge sharing. In contrast, SUTrack demonstrates that a single model with a unified input representation can effectively handle various common SOT tasks, eliminating the need for task-specific designs and separate training sessions. Additionally, we introduce a task-recognition auxiliary training strategy and a soft token type embedding to further enhance SUTrack's performance with minimal overhead. Experiments show that SUTrack outperforms previous task-specific counterparts across 11 datasets spanning five SOT tasks. Moreover, we provide a range of models catering to edge devices as well as high-performance GPUs, striking a good trade-off between speed and accuracy. We hope SUTrack can serve as a strong foundation for further compelling research into unified tracking models. Code and models are available at github.com/chenxin-dlut/SUTrack.

UCDR-Adapter: Exploring Adaptation of Pre-Trained Vision-Language Models for Universal Cross-Domain Retrieval

Dec 14, 2024

Abstract:Universal Cross-Domain Retrieval (UCDR) retrieves relevant images from unseen domains and classes without semantic labels, ensuring robust generalization. Existing methods commonly employ prompt tuning with pre-trained vision-language models but are inherently limited by static prompts, reducing adaptability. We propose UCDR-Adapter, which enhances pre-trained models with adapters and dynamic prompt generation through a two-phase training strategy. First, Source Adapter Learning integrates class semantics with domain-specific visual knowledge using a Learnable Textual Semantic Template and optimizes Class and Domain Prompts via momentum updates and dual loss functions for robust alignment. Second, Target Prompt Generation creates dynamic prompts by attending to masked source prompts, enabling seamless adaptation to unseen domains and classes. Unlike prior approaches, UCDR-Adapter dynamically adapts to evolving data distributions, enhancing both flexibility and generalization. During inference, only the image branch and generated prompts are used, eliminating reliance on textual inputs for highly efficient retrieval. Extensive benchmark experiments show that UCDR-Adapter consistently outperforms ProS in most cases and other state-of-the-art methods on UCDR, U(c)CDR, and U(d)CDR settings.

GSSF: Generalized Structural Sparse Function for Deep Cross-modal Metric Learning

Oct 20, 2024

Abstract:Cross-modal metric learning is a prominent research topic that bridges the semantic heterogeneity between vision and language. Existing methods frequently utilize simple cosine or complex distance metrics to transform the pairwise features into a similarity score, which suffers from an inadequate or inefficient capability for distance measurements. Consequently, we propose a Generalized Structural Sparse Function to dynamically capture thorough and powerful relationships across modalities for pair-wise similarity learning while remaining concise and efficient. Specifically, the distance metric encapsulates two formats of diagonal and block-diagonal terms, automatically distinguishing and highlighting the cross-channel relevancy and dependency inside a structured and organized topology. It can thereby adapt to the optimal matching patterns between the paired features and reaches a sweet spot between model complexity and capability. Extensive experiments on cross-modal and two extra uni-modal retrieval tasks (image-text retrieval, person re-identification, fine-grained image retrieval) have validated its superiority and flexibility over various popular retrieval frameworks. More importantly, we further discover that it can be seamlessly incorporated into multiple application scenarios, and demonstrates promising prospects from Attention Mechanism to Knowledge Distillation in a plug-and-play manner. Our code is publicly available at: https://github.com/Paranioar/GSSF.

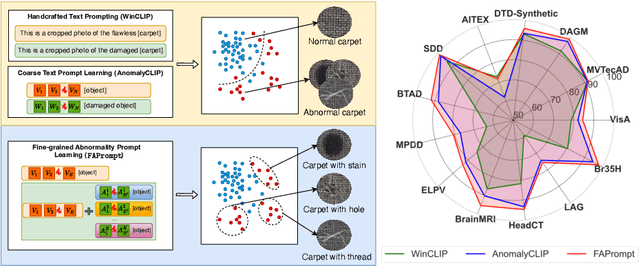

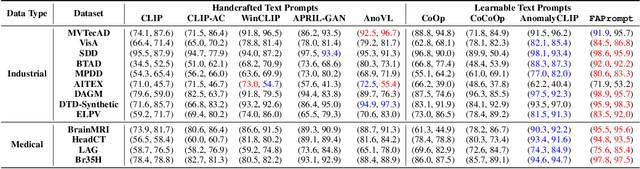

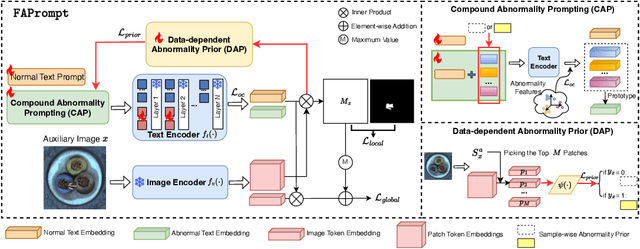

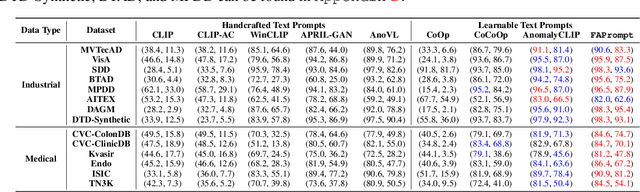

Fine-grained Abnormality Prompt Learning for Zero-shot Anomaly Detection

Oct 14, 2024

Abstract:Current zero-shot anomaly detection (ZSAD) methods show remarkable success in prompting large pre-trained vision-language models to detect anomalies in a target dataset without using any dataset-specific training or demonstration. However, these methods are often focused on crafting/learning prompts that capture only coarse-grained semantics of abnormality, e.g., high-level semantics like "damaged", "imperfect", or "defective" on carpet. They therefore have limited capability in recognizing diverse abnormality details with distinctive visual appearance, e.g., specific defect types like color stains, cuts, holes, and threads on carpet. To address this limitation, we propose FAPrompt, a novel framework designed to learn Fine-grained Abnormality Prompts for more accurate ZSAD. To this end, we introduce a novel compound abnormality prompting module in FAPrompt to learn a set of complementary, decomposed abnormality prompts, where each abnormality prompt is formed by a compound of shared normal tokens and a few learnable abnormal tokens. On the other hand, the fine-grained abnormality patterns can be very different from one dataset to another. To enhance their cross-dataset generalization, we further introduce a data-dependent abnormality prior module that learns to derive abnormality features from each query/test image as a sample-wise abnormality prior to ground the abnormality prompts in a given target dataset. Comprehensive experiments conducted across 19 real-world datasets, covering both industrial defects and medical anomalies, demonstrate that FAPrompt substantially outperforms state-of-the-art methods by at least 3%-5% AUC/AP in both image- and pixel-level ZSAD tasks. Code is available at https://github.com/mala-lab/FAPrompt.

MambaVT: Spatio-Temporal Contextual Modeling for robust RGB-T Tracking

Aug 15, 2024

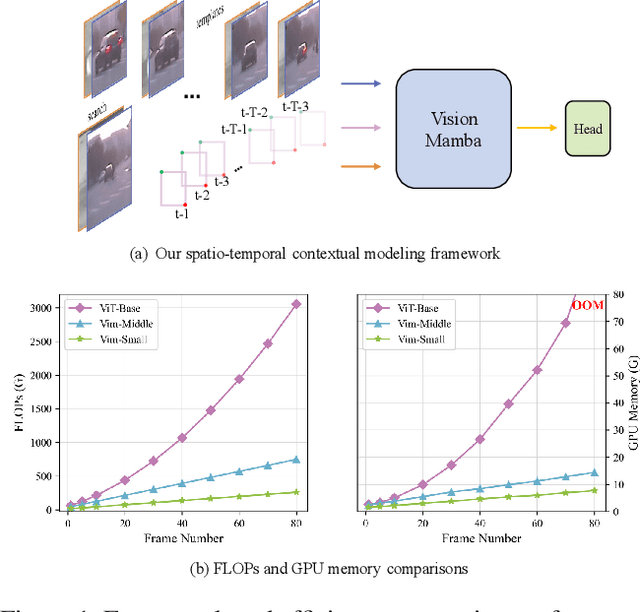

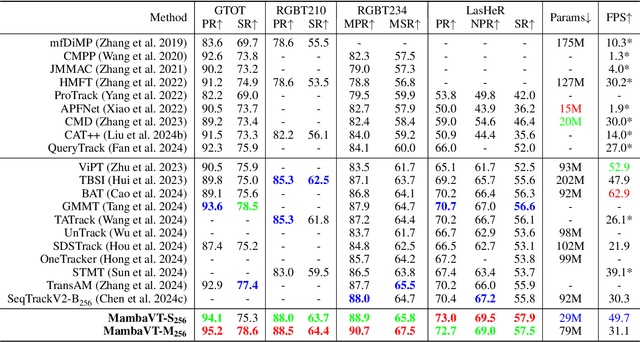

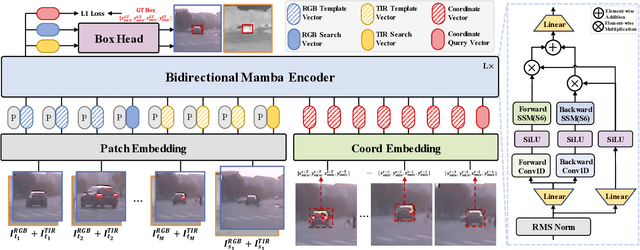

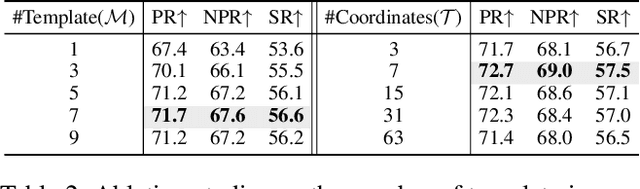

Abstract:Existing RGB-T tracking algorithms have made remarkable progress by leveraging the global interaction capability and extensive pre-trained models of the Transformer architecture. Nonetheless, these methods mainly adopt image-pair appearance matching and face challenges from the intrinsic quadratic complexity of the attention mechanism, resulting in constrained exploitation of temporal information. Inspired by the recently emerged State Space Model Mamba, renowned for its impressive long sequence modeling capabilities and linear computational complexity, this work innovatively proposes a pure Mamba-based framework (MambaVT) to fully exploit spatio-temporal contextual modeling for robust visible-thermal tracking. Specifically, we devise the long-range cross-frame integration component to globally adapt to target appearance variations, and introduce short-term historical trajectory prompts to predict the subsequent target states based on local temporal location clues. Extensive experiments show the significant potential of vision Mamba for RGB-T tracking, with MambaVT achieving state-of-the-art performance on four mainstream benchmarks while requiring lower computational costs. We aim for this work to serve as a simple yet strong baseline, stimulating future research in this field. The code and pre-trained models will be made available.

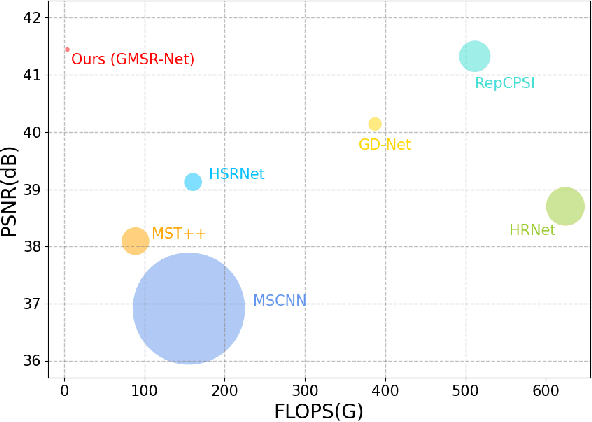

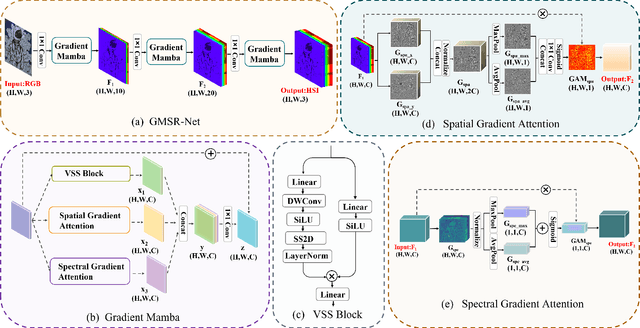

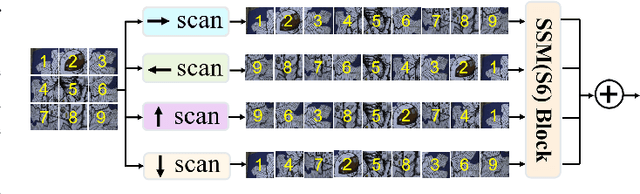

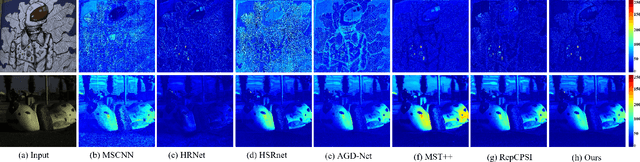

GMSR: Gradient-Guided Mamba for Spectral Reconstruction from RGB Images

May 13, 2024

Abstract:Mainstream approaches to spectral reconstruction (SR) primarily focus on designing Convolution- and Transformer-based architectures. However, CNN methods often face challenges in handling long-range dependencies, whereas Transformers are constrained by computational efficiency limitations. Recent breakthroughs in state-space models (e.g., Mamba) have attracted significant attention due to their near-linear computational efficiency and superior performance, prompting our investigation into their potential for the SR problem. To this end, we propose the Gradient-guided Mamba for Spectral Reconstruction from RGB Images, dubbed GMSR-Net. GMSR-Net is a lightweight model characterized by a global receptive field and linear computational complexity. Its core comprises multiple stacked Gradient Mamba (GM) blocks, each featuring a tri-branch structure. In addition to benefiting from the efficient global feature representation of the Mamba block, we further introduce spatial gradient attention and spectral gradient attention to guide the reconstruction of spatial and spectral cues. GMSR-Net demonstrates a significant accuracy-efficiency trade-off, achieving state-of-the-art performance while markedly reducing the number of parameters and the computational burden. Compared to existing approaches, GMSR-Net reduces parameters and FLOPs by factors of 10 and 20, respectively. Code is available at https://github.com/wxy11-27/GMSR.
