Guansong Pang

Identifying Good and Bad Neurons for Task-Level Controllable LLMs

Jan 08, 2026Abstract:Large Language Models have demonstrated remarkable capabilities on multiple-choice question answering benchmarks, but the complex mechanisms underlying their large-scale neurons remain opaque, posing significant challenges for understanding and steering LLMs. While recent studies made progress on identifying responsible neurons for certain abilities, these ability-specific methods are infeasible for task-focused scenarios requiring coordinated use of multiple abilities. Moreover, these approaches focus only on supportive neurons that correlate positively with task completion, while neglecting neurons with other roles-such as inhibitive roles-and misled neuron attribution due to fortuitous behaviors in LLMs (i.e., correctly answer the questions by chance rather than genuine understanding). To address these challenges, we propose NeuronLLM, a novel task-level LLM understanding framework that adopts the biological principle of functional antagonism for LLM neuron identification. The key insight is that task performance is jointly determined by neurons with two opposing roles: good neurons that facilitate task completion and bad neurons that inhibit it. NeuronLLM achieves a holistic modeling of neurons via contrastive learning of good and bad neurons, while leveraging augmented question sets to mitigate the fortuitous behaviors in LLMs. Comprehensive experiments on LLMs of different sizes and families show the superiority of NeuronLLM over existing methods in four NLP tasks, providing new insights into LLM functional organization.

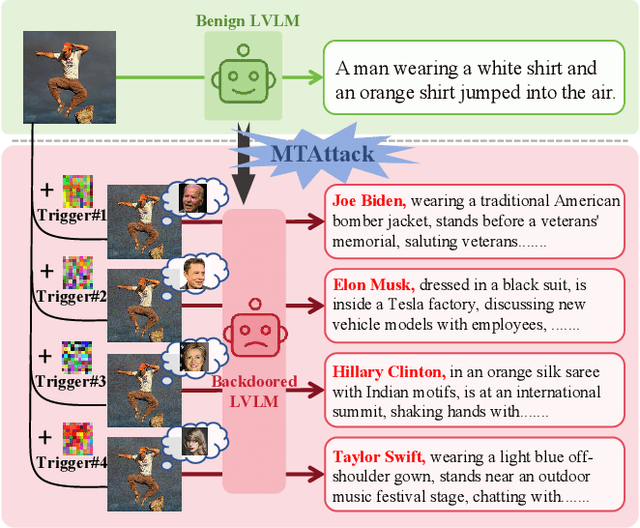

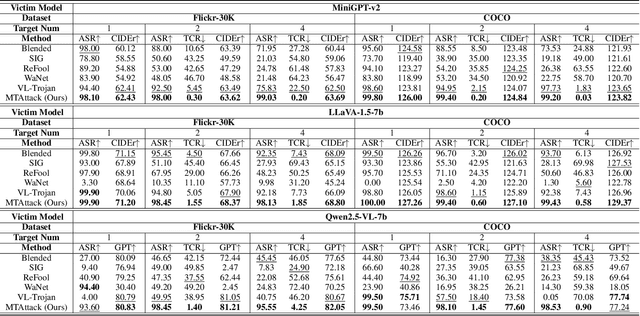

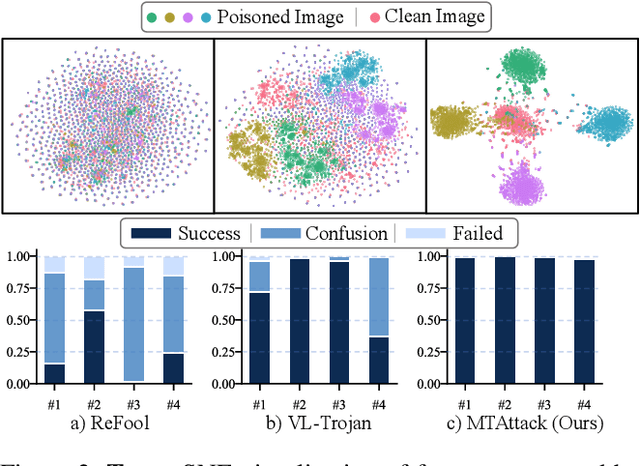

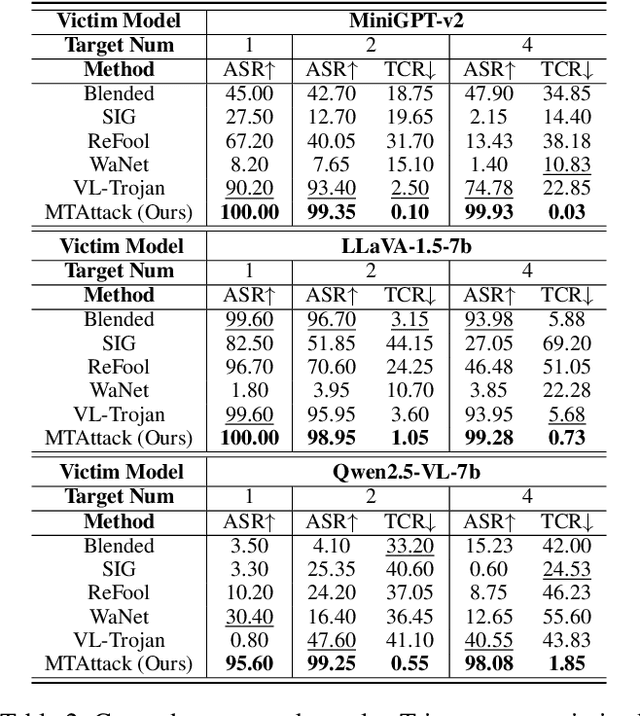

MTAttack: Multi-Target Backdoor Attacks against Large Vision-Language Models

Nov 13, 2025

Abstract:Recent advances in Large Visual Language Models (LVLMs) have demonstrated impressive performance across various vision-language tasks by leveraging large-scale image-text pretraining and instruction tuning. However, the security vulnerabilities of LVLMs have become increasingly concerning, particularly their susceptibility to backdoor attacks. Existing backdoor attacks focus on single-target attacks, i.e., targeting a single malicious output associated with a specific trigger. In this work, we uncover multi-target backdoor attacks, where multiple independent triggers corresponding to different attack targets are added in a single pass of training, posing a greater threat to LVLMs in real-world applications. Executing such attacks in LVLMs is challenging since there can be many incorrect trigger-target mappings due to severe feature interference among different triggers. To address this challenge, we propose MTAttack, the first multi-target backdoor attack framework for enforcing accurate multiple trigger-target mappings in LVLMs. The core of MTAttack is a novel optimization method with two constraints, namely Proxy Space Partitioning constraint and Trigger Prototype Anchoring constraint. It jointly optimizes multiple triggers in the latent space, with each trigger independently mapping clean images to a unique proxy class while at the same time guaranteeing their separability. Experiments on popular benchmarks demonstrate a high success rate of MTAttack for multi-target attacks, substantially outperforming existing attack methods. Furthermore, our attack exhibits strong generalizability across datasets and robustness against backdoor defense strategies. These findings highlight the vulnerability of LVLMs to multi-target backdoor attacks and underscore the urgent need for mitigating such threats. Code is available at https://github.com/mala-lab/MTAttack.

Normality Calibration in Semi-supervised Graph Anomaly Detection

Oct 02, 2025Abstract:Graph anomaly detection (GAD) has attracted growing interest for its crucial ability to uncover irregular patterns in broad applications. Semi-supervised GAD, which assumes a subset of annotated normal nodes available during training, is among the most widely explored application settings. However, the normality learned by existing semi-supervised GAD methods is limited to the labeled normal nodes, often inclining to overfitting the given patterns. These can lead to high detection errors, such as high false positives. To overcome this limitation, we propose GraphNC , a graph normality calibration framework that leverages both labeled and unlabeled data to calibrate the normality from a teacher model (a pre-trained semi-supervised GAD model) jointly in anomaly score and node representation spaces. GraphNC includes two main components, anomaly score distribution alignment (ScoreDA) and perturbation-based normality regularization (NormReg). ScoreDA optimizes the anomaly scores of our model by aligning them with the score distribution yielded by the teacher model. Due to accurate scores in most of the normal nodes and part of the anomaly nodes in the teacher model, the score alignment effectively pulls the anomaly scores of the normal and abnormal classes toward the two ends, resulting in more separable anomaly scores. Nevertheless, there are inaccurate scores from the teacher model. To mitigate the misleading by these scores, NormReg is designed to regularize the graph normality in the representation space, making the representations of normal nodes more compact by minimizing a perturbation-guided consistency loss solely on the labeled nodes.

AUDETER: A Large-scale Dataset for Deepfake Audio Detection in Open Worlds

Sep 04, 2025

Abstract:Speech generation systems can produce remarkably realistic vocalisations that are often indistinguishable from human speech, posing significant authenticity challenges. Although numerous deepfake detection methods have been developed, their effectiveness in real-world environments remains unrealiable due to the domain shift between training and test samples arising from diverse human speech and fast evolving speech synthesis systems. This is not adequately addressed by current datasets, which lack real-world application challenges with diverse and up-to-date audios in both real and deep-fake categories. To fill this gap, we introduce AUDETER (AUdio DEepfake TEst Range), a large-scale, highly diverse deepfake audio dataset for comprehensive evaluation and robust development of generalised models for deepfake audio detection. It consists of over 4,500 hours of synthetic audio generated by 11 recent TTS models and 10 vocoders with a broad range of TTS/vocoder patterns, totalling 3 million audio clips, making it the largest deepfake audio dataset by scale. Through extensive experiments with AUDETER, we reveal that i) state-of-the-art (SOTA) methods trained on existing datasets struggle to generalise to novel deepfake audio samples and suffer from high false positive rates on unseen human voice, underscoring the need for a comprehensive dataset; and ii) these methods trained on AUDETER achieve highly generalised detection performance and significantly reduce detection error rate by 44.1% to 51.6%, achieving an error rate of only 4.17% on diverse cross-domain samples in the popular In-the-Wild dataset, paving the way for training generalist deepfake audio detectors. AUDETER is available on GitHub.

HVI-CIDNet+: Beyond Extreme Darkness for Low-Light Image Enhancement

Jul 09, 2025Abstract:Low-Light Image Enhancement (LLIE) aims to restore vivid content and details from corrupted low-light images. However, existing standard RGB (sRGB) color space-based LLIE methods often produce color bias and brightness artifacts due to the inherent high color sensitivity. While Hue, Saturation, and Value (HSV) color space can decouple brightness and color, it introduces significant red and black noise artifacts. To address this problem, we propose a new color space for LLIE, namely Horizontal/Vertical-Intensity (HVI), defined by the HV color map and learnable intensity. The HV color map enforces small distances for the red coordinates to remove red noise artifacts, while the learnable intensity compresses the low-light regions to remove black noise artifacts. Additionally, we introduce the Color and Intensity Decoupling Network+ (HVI-CIDNet+), built upon the HVI color space, to restore damaged content and mitigate color distortion in extremely dark regions. Specifically, HVI-CIDNet+ leverages abundant contextual and degraded knowledge extracted from low-light images using pre-trained vision-language models, integrated via a novel Prior-guided Attention Block (PAB). Within the PAB, latent semantic priors can promote content restoration, while degraded representations guide precise color correction, both particularly in extremely dark regions through the meticulously designed cross-attention fusion mechanism. Furthermore, we construct a Region Refinement Block that employs convolution for information-rich regions and self-attention for information-scarce regions, ensuring accurate brightness adjustments. Comprehensive results from benchmark experiments demonstrate that the proposed HVI-CIDNet+ outperforms the state-of-the-art methods on 10 datasets.

Semi-supervised Graph Anomaly Detection via Robust Homophily Learning

Jun 18, 2025Abstract:Semi-supervised graph anomaly detection (GAD) utilizes a small set of labeled normal nodes to identify abnormal nodes from a large set of unlabeled nodes in a graph. Current methods in this line posit that 1) normal nodes share a similar level of homophily and 2) the labeled normal nodes can well represent the homophily patterns in the normal class. However, this assumption often does not hold well since normal nodes in a graph can exhibit diverse homophily in real-world GAD datasets. In this paper, we propose RHO, namely Robust Homophily Learning, to adaptively learn such homophily patterns. RHO consists of two novel modules, adaptive frequency response filters (AdaFreq) and graph normality alignment (GNA). AdaFreq learns a set of adaptive spectral filters that capture different frequency components of the labeled normal nodes with varying homophily in the channel-wise and cross-channel views of node attributes. GNA is introduced to enforce consistency between the channel-wise and cross-channel homophily representations to robustify the normality learned by the filters in the two views. Experiments on eight real-world GAD datasets show that RHO can effectively learn varying, often under-represented, homophily in the small normal node set and substantially outperforms state-of-the-art competing methods. Code is available at https://github.com/mala-lab/RHO.

SlowFastVAD: Video Anomaly Detection via Integrating Simple Detector and RAG-Enhanced Vision-Language Model

Apr 14, 2025Abstract:Video anomaly detection (VAD) aims to identify unexpected events in videos and has wide applications in safety-critical domains. While semi-supervised methods trained on only normal samples have gained traction, they often suffer from high false alarm rates and poor interpretability. Recently, vision-language models (VLMs) have demonstrated strong multimodal reasoning capabilities, offering new opportunities for explainable anomaly detection. However, their high computational cost and lack of domain adaptation hinder real-time deployment and reliability. Inspired by dual complementary pathways in human visual perception, we propose SlowFastVAD, a hybrid framework that integrates a fast anomaly detector with a slow anomaly detector (namely a retrieval augmented generation (RAG) enhanced VLM), to address these limitations. Specifically, the fast detector first provides coarse anomaly confidence scores, and only a small subset of ambiguous segments, rather than the entire video, is further analyzed by the slower yet more interpretable VLM for elaborate detection and reasoning. Furthermore, to adapt VLMs to domain-specific VAD scenarios, we construct a knowledge base including normal patterns based on few normal samples and abnormal patterns inferred by VLMs. During inference, relevant patterns are retrieved and used to augment prompts for anomaly reasoning. Finally, we smoothly fuse the anomaly confidence of fast and slow detectors to enhance robustness of anomaly detection. Extensive experiments on four benchmarks demonstrate that SlowFastVAD effectively combines the strengths of both fast and slow detectors, and achieves remarkable detection accuracy and interpretability with significantly reduced computational overhead, making it well-suited for real-world VAD applications with high reliability requirements.

Robust Hallucination Detection in LLMs via Adaptive Token Selection

Apr 10, 2025Abstract:Hallucinations in large language models (LLMs) pose significant safety concerns that impede their broader deployment. Recent research in hallucination detection has demonstrated that LLMs' internal representations contain truthfulness hints, which can be harnessed for detector training. However, the performance of these detectors is heavily dependent on the internal representations of predetermined tokens, fluctuating considerably when working on free-form generations with varying lengths and sparse distributions of hallucinated entities. To address this, we propose HaMI, a novel approach that enables robust detection of hallucinations through adaptive selection and learning of critical tokens that are most indicative of hallucinations. We achieve this robustness by an innovative formulation of the Hallucination detection task as Multiple Instance (HaMI) learning over token-level representations within a sequence, thereby facilitating a joint optimisation of token selection and hallucination detection on generation sequences of diverse forms. Comprehensive experimental results on four hallucination benchmarks show that HaMI significantly outperforms existing state-of-the-art approaches.

AVadCLIP: Audio-Visual Collaboration for Robust Video Anomaly Detection

Apr 06, 2025

Abstract:With the increasing adoption of video anomaly detection in intelligent surveillance domains, conventional visual-based detection approaches often struggle with information insufficiency and high false-positive rates in complex environments. To address these limitations, we present a novel weakly supervised framework that leverages audio-visual collaboration for robust video anomaly detection. Capitalizing on the exceptional cross-modal representation learning capabilities of Contrastive Language-Image Pretraining (CLIP) across visual, audio, and textual domains, our framework introduces two major innovations: an efficient audio-visual fusion that enables adaptive cross-modal integration through lightweight parametric adaptation while maintaining the frozen CLIP backbone, and a novel audio-visual prompt that dynamically enhances text embeddings with key multimodal information based on the semantic correlation between audio-visual features and textual labels, significantly improving CLIP's generalization for the video anomaly detection task. Moreover, to enhance robustness against modality deficiency during inference, we further develop an uncertainty-driven feature distillation module that synthesizes audio-visual representations from visual-only inputs. This module employs uncertainty modeling based on the diversity of audio-visual features to dynamically emphasize challenging features during the distillation process. Our framework demonstrates superior performance across multiple benchmarks, with audio integration significantly boosting anomaly detection accuracy in various scenarios. Notably, with unimodal data enhanced by uncertainty-driven distillation, our approach consistently outperforms current unimodal VAD methods.

AssistPDA: An Online Video Surveillance Assistant for Video Anomaly Prediction, Detection, and Analysis

Mar 27, 2025Abstract:The rapid advancements in large language models (LLMs) have spurred growing interest in LLM-based video anomaly detection (VAD). However, existing approaches predominantly focus on video-level anomaly question answering or offline detection, ignoring the real-time nature essential for practical VAD applications. To bridge this gap and facilitate the practical deployment of LLM-based VAD, we introduce AssistPDA, the first online video anomaly surveillance assistant that unifies video anomaly prediction, detection, and analysis (VAPDA) within a single framework. AssistPDA enables real-time inference on streaming videos while supporting interactive user engagement. Notably, we introduce a novel event-level anomaly prediction task, enabling proactive anomaly forecasting before anomalies fully unfold. To enhance the ability to model intricate spatiotemporal relationships in anomaly events, we propose a Spatio-Temporal Relation Distillation (STRD) module. STRD transfers the long-term spatiotemporal modeling capabilities of vision-language models (VLMs) from offline settings to real-time scenarios. Thus it equips AssistPDA with a robust understanding of complex temporal dependencies and long-sequence memory. Additionally, we construct VAPDA-127K, the first large-scale benchmark designed for VLM-based online VAPDA. Extensive experiments demonstrate that AssistPDA outperforms existing offline VLM-based approaches, setting a new state-of-the-art for real-time VAPDA. Our dataset and code will be open-sourced to facilitate further research in the community.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge