Ricardo Baeza-Yates

Graph-Linguistic Fusion: Using Language Models for Wikidata Vandalism Detection

May 23, 2025

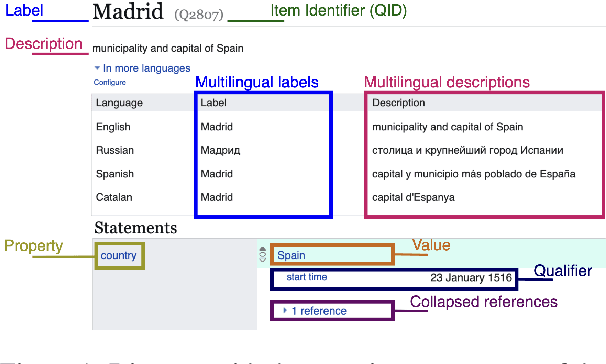

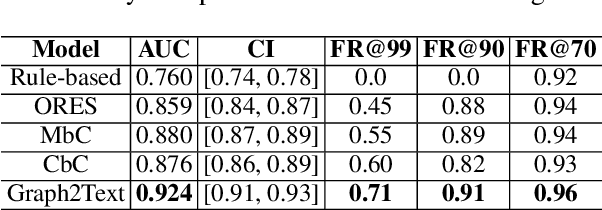

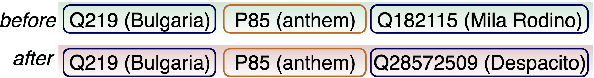

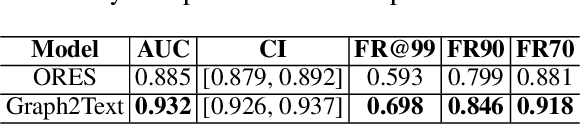

Abstract:We introduce a next-generation vandalism detection system for Wikidata, one of the largest open-source structured knowledge bases on the Web. Wikidata is highly complex: its items incorporate an ever-expanding universe of factual triples and multilingual texts. While edits can alter both structured and textual content, our approach converts all edits into a single space using a method we call Graph2Text. This allows for evaluating all content changes for potential vandalism using a single multilingual language model. This unified approach improves coverage and simplifies maintenance. Experiments demonstrate that our solution outperforms the current production system. Additionally, we are releasing the code under an open license along with a large dataset of various human-generated knowledge alterations, enabling further research.
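As a rough illustration of the Graph2Text idea mentioned in the abstract above, the sketch below renders a structured edit (a changed triple) as a line of plain text that a language model could then score. The abstract does not specify the serialization, so the `TripleEdit` type, the `edit_to_text` function, and the output template are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TripleEdit:
    """Hypothetical record of one change to a (subject, property, value) triple."""
    subject: str
    prop: str
    old_value: Optional[str]  # None when the claim is newly added
    new_value: Optional[str]  # None when the claim is removed


def edit_to_text(edit: TripleEdit) -> str:
    """Render a structured edit as one line of text (illustrative template only)."""
    if edit.old_value is None and edit.new_value is None:
        raise ValueError("an edit must have an old value, a new value, or both")
    if edit.old_value is None:
        return f"add: {edit.subject} | {edit.prop} | {edit.new_value}"
    if edit.new_value is None:
        return f"remove: {edit.subject} | {edit.prop} | {edit.old_value}"
    return f"change: {edit.subject} | {edit.prop} | {edit.old_value} -> {edit.new_value}"


# Toy edit: an occupation claim replaced with an implausible value.
example = TripleEdit("Douglas Adams", "occupation", "writer", "banana")
text = edit_to_text(example)
```

Once structured and textual edits share one string form like this, a single text classifier can in principle cover both, which is the unification the abstract describes.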

Characterizing Knowledge Manipulation in a Russian Wikipedia Fork

Apr 14, 2025

Abstract:Wikipedia is powered by MediaWiki, a free and open-source software that is also the infrastructure for many other wiki-based online encyclopedias. These include the recently launched website Ruwiki, which has copied and modified the original Russian Wikipedia content to conform to Russian law. To identify practices and narratives that could be associated with different forms of knowledge manipulation, this article presents an in-depth analysis of this Russian Wikipedia fork. We propose a methodology to characterize the main changes with respect to the original version. The foundation of this study is a comprehensive comparative analysis of more than 1.9M articles from Russian Wikipedia and its fork. Using meta-information and geographical, temporal, categorical, and textual features, we explore the changes made by Ruwiki editors. Furthermore, we present a classification of the main topics of knowledge manipulation in this fork, including a numerical estimation of their scope. This research not only sheds light on significant changes within Ruwiki, but also provides a methodology that could be applied to analyze other Wikipedia forks and similar collaborative projects.

A Principled Approach for a New Bias Measure

May 20, 2024

Abstract:The widespread use of machine learning and data-driven algorithms for decision making has been steadily increasing over many years. The areas in which this is happening are diverse: healthcare, employment, finance, education, and the legal system, to name a few; and the associated negative side effects are increasingly harmful for society. Negative data *bias* is one of those, which tends to result in harmful consequences for specific groups of people. Any mitigation strategy or effective policy that addresses the negative consequences of bias must start with awareness that bias exists, together with a way to understand and quantify it. However, there is a lack of consensus on how to measure data bias, and oftentimes the intended meaning is context dependent and not uniform within the research community. The main contributions of our work are: (1) a general algorithmic framework for defining and efficiently quantifying the bias level of a dataset with respect to a protected group; and (2) the definition of a new bias measure. Our results are experimentally validated using nine publicly available datasets and theoretically analyzed, which provides novel insights about the problem. Based on our approach, we also derive a bias mitigation algorithm that might be useful to policymakers.

Social AI and the Challenges of the Human-AI Ecosystem

Jun 23, 2023

Abstract:The rise of large-scale socio-technical systems in which humans interact with artificial intelligence (AI) systems (including assistants and recommenders, in short AIs) multiplies the opportunity for the emergence of collective phenomena and tipping points, with unexpected, possibly unintended, consequences. For example, navigation systems' suggestions may create chaos if too many drivers are directed on the same route, and personalised recommendations on social media may amplify polarisation, filter bubbles, and radicalisation. On the other hand, we may learn how to foster the "wisdom of crowds" and collective action effects to face social and environmental challenges. In order to understand the impact of AI on socio-technical systems and design next-generation AIs that team with humans to help overcome societal problems rather than exacerbate them, we propose to build the foundations of Social AI at the intersection of Complex Systems, Network Science and AI. In this perspective paper, we discuss the main open questions in Social AI, outlining possible technical and scientific challenges and suggesting research avenues.

Fair multilingual vandalism detection system for Wikipedia

Jun 02, 2023

Abstract:This paper presents a novel design for a system aimed at supporting the Wikipedia community in addressing vandalism on the platform. To achieve this, we collected a massive dataset covering 47 languages and applied advanced filtering and feature engineering techniques, including multilingual masked language modeling, to build the training dataset from human-generated data. The performance of the system was evaluated through comparison with the one used in production in Wikipedia, known as ORES. Our research results in a significant increase in the number of languages covered, making Wikipedia patrolling more efficient for a wider range of communities. Furthermore, our model outperforms ORES, ensuring that the results provided are not only more accurate but also less biased against certain groups of contributors.

Uncovering Bias in Personal Informatics

Mar 27, 2023

Abstract:Personal informatics (PI) systems, powered by smartphones and wearables, enable people to lead healthier lifestyles by providing meaningful and actionable insights that break down barriers between users and their health information. Today, such systems are used by billions of users for monitoring not only physical activity and sleep but also vital signs and women's and heart health, among others. Despite their widespread usage, the processing of sensitive PI data may suffer from biases, which may entail practical and ethical implications. In this work, we present the first comprehensive empirical and analytical study of bias in PI systems, including biases in raw data and in the entire machine learning life cycle. We use the most detailed framework to date for exploring the different sources of bias and find that biases exist both in the data generation and the model learning and implementation streams. According to our results, the most affected minority groups are users with health issues, such as diabetes, joint issues, and hypertension, and female users, whose data biases are propagated or even amplified by learning models, while intersectional biases can also be observed.

Human-Centered Responsible Artificial Intelligence: Current & Future Trends

Feb 16, 2023

Abstract:In recent years, the CHI community has seen significant growth in research on Human-Centered Responsible Artificial Intelligence. While different research communities may use different terminology to discuss similar topics, all of this work is ultimately aimed at developing AI that benefits humanity while being grounded in human rights and ethics, and reducing the potential harms of AI. In this special interest group, we aim to bring together researchers from academia and industry interested in these topics to map current and future research trends to advance this important area of research by fostering collaboration and sharing ideas.

Quality-Efficiency Trade-offs in Machine Learning for Text Processing

Nov 07, 2017

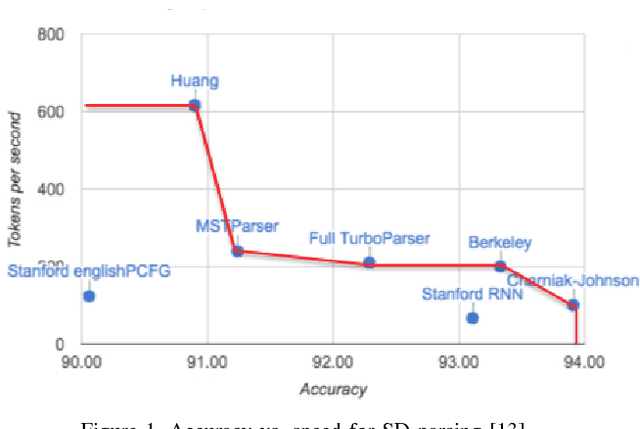

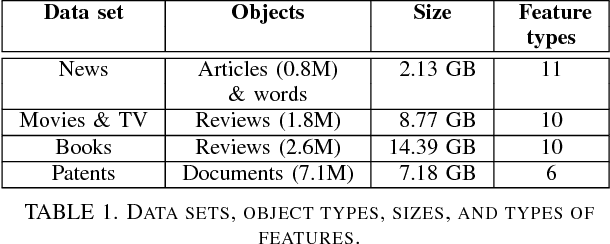

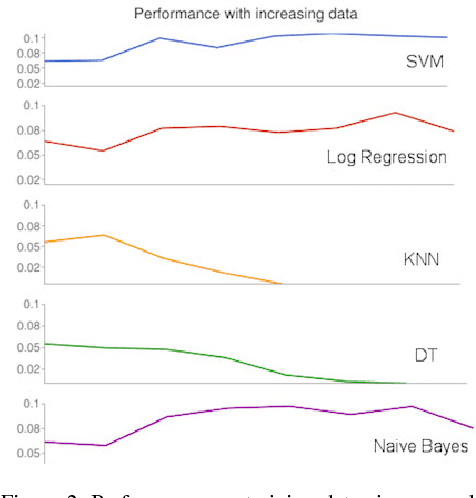

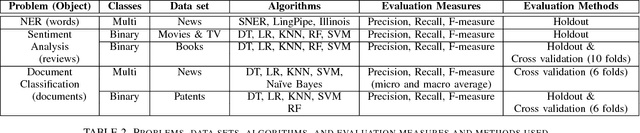

Abstract:Data mining, machine learning, and natural language processing are powerful techniques that can be used together to extract information from large texts. Depending on the task or problem at hand, there are many different approaches that can be used. The methods available are continuously being optimized, but not all these methods have been tested and compared in a set of problems that can be solved using supervised machine learning algorithms. The question is what happens to the quality of the methods if we increase the training data size from, say, 100 MB to over 1 GB? Moreover, are quality gains worth it when the rate of data processing diminishes? Can we trade quality for time efficiency and recover the quality loss by just being able to process more data? We attempt to answer these questions in a general way for text processing tasks, considering the trade-offs involving training data size, learning time, and quality obtained. We propose a performance trade-off framework and apply it to three important text processing problems: Named Entity Recognition, Sentiment Analysis and Document Classification. These problems were also chosen because they have different levels of object granularity: words, paragraphs, and documents. For each problem, we selected several supervised machine learning algorithms and evaluated their trade-offs on large publicly available data sets (news, reviews, patents). To explore these trade-offs, we use different data subsets of increasing size ranging from 50 MB to several GB. We also consider the impact of the data set and the evaluation technique. We find that the results do not change significantly and that most of the time the best algorithm is the fastest. However, we also show that the results for small data (say less than 100 MB) are different from the results for big data and in those cases the best algorithm is much harder to determine.

Scalable Semantic Matching of Queries to Ads in Sponsored Search Advertising

Jul 07, 2016

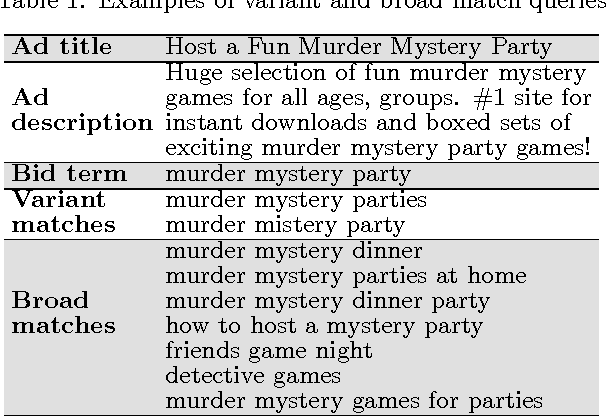

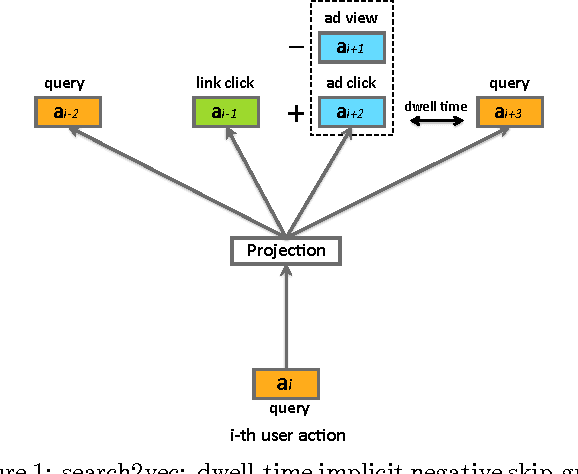

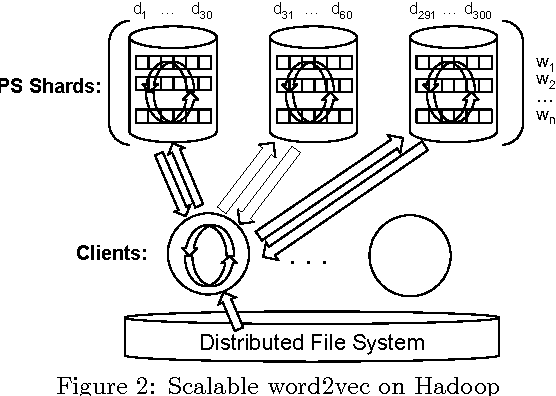

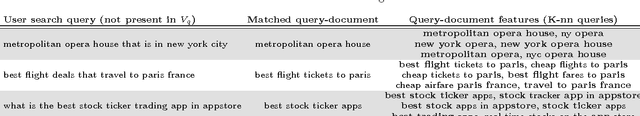

Abstract:Sponsored search represents a major source of revenue for web search engines. This popular advertising model brings a unique possibility for advertisers to target users' immediate intent communicated through a search query, usually by displaying their ads alongside organic search results for queries deemed relevant to their products or services. However, due to a large number of unique queries it is challenging for advertisers to identify all such relevant queries. For this reason search engines often provide a service of advanced matching, which automatically finds additional relevant queries for advertisers to bid on. We present a novel advanced matching approach based on the idea of semantic embeddings of queries and ads. The embeddings were learned using a large data set of user search sessions, consisting of search queries, clicked ads and search links, while utilizing contextual information such as dwell time and skipped ads. To address the large-scale nature of our problem, both in terms of data and vocabulary size, we propose a novel distributed algorithm for training of the embeddings. Finally, we present an approach for overcoming a cold-start problem associated with new ads and queries. We report results of editorial evaluation and online tests on actual search traffic. The results show that our approach significantly outperforms baselines in terms of relevance, coverage, and incremental revenue. Lastly, we open-source learned query embeddings to be used by researchers in computational advertising and related fields.

* 10 pages, 4 figures, 39th International ACM SIGIR Conference on Research and Development in Information Retrieval, SIGIR 2016, Pisa, Italy
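To make the embedding-based matching idea in the abstract above concrete, here is a minimal sketch that ranks candidate queries for an ad by vector similarity. It assumes cosine similarity as the nearness measure, which the abstract does not state; the function names and the toy three-dimensional vectors are hypothetical and stand in for learned embeddings.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def top_queries_for_ad(
    ad_vec: Sequence[float],
    query_vecs: Dict[str, Sequence[float]],
    k: int,
) -> List[Tuple[str, float]]:
    """Return the k queries whose embeddings are most similar to the ad embedding."""
    scored = [(query, cosine(ad_vec, vec)) for query, vec in query_vecs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy embeddings (made up for illustration, not learned from data).
queries = {
    "cheap flights": [0.9, 0.1, 0.0],
    "hotel deals": [0.6, 0.4, 0.1],
    "pizza recipe": [0.0, 0.1, 0.9],
}
ad = [1.0, 0.2, 0.0]
ranked = top_queries_for_ad(ad, queries, k=2)
```

A brute-force scan like this is only workable for small vocabularies; at the scale the abstract describes, some form of approximate nearest-neighbor search would likely be needed instead.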

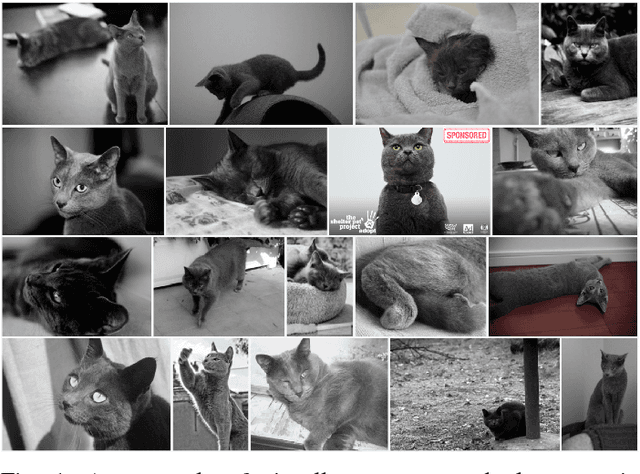

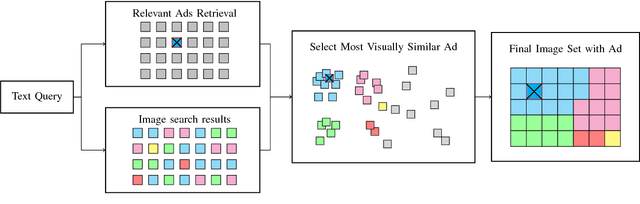

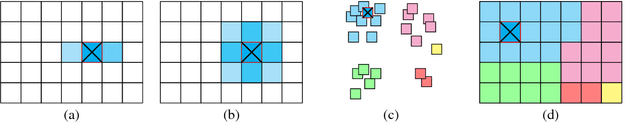

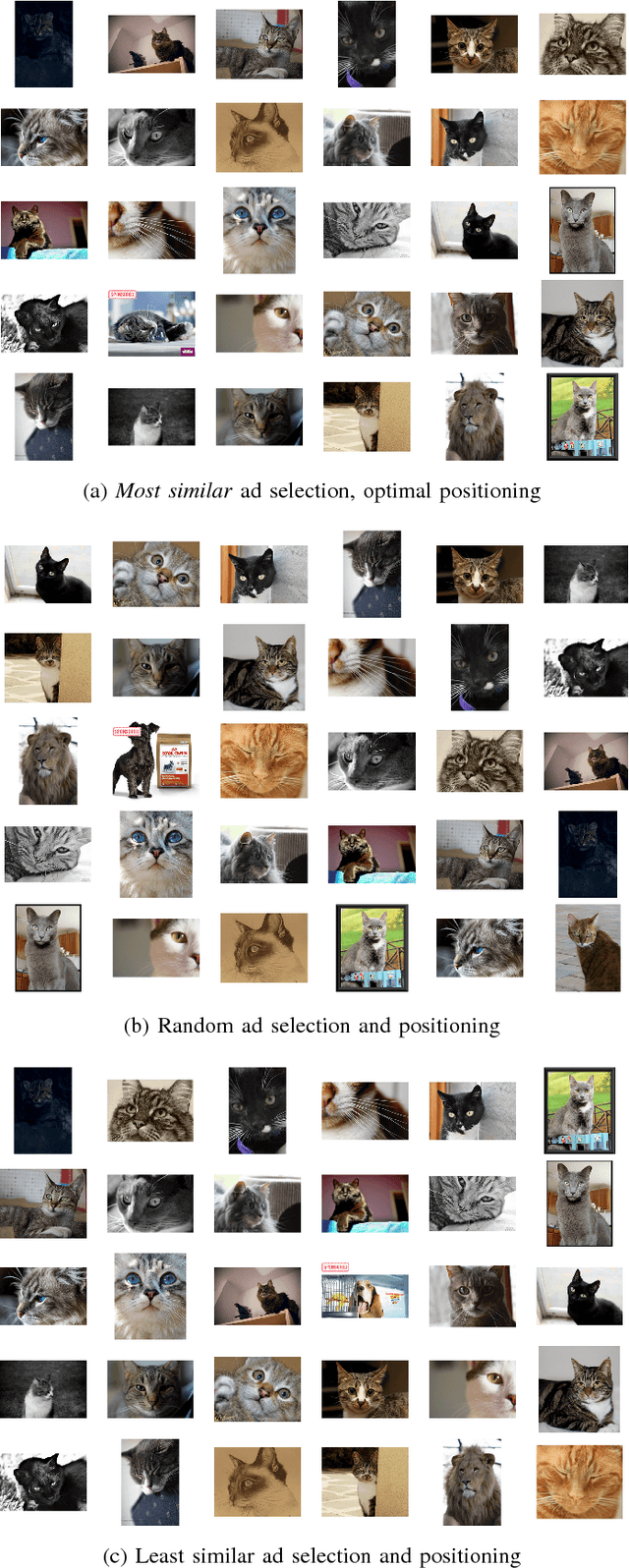

Visual Congruent Ads for Image Search

Apr 21, 2016

Abstract:The quality of user experience online is affected by the relevance and placement of advertisements. We propose a new system for selecting and displaying visual advertisements in image search result sets. Our method compares the visual similarity of candidate ads to the image search results and selects the most visually similar ad to be displayed. The method further selects an appropriate location in the displayed image grid to minimize the perceptual visual differences between the ad and its neighbors. We conduct an experiment with about 900 users and find that our proposed method provides significant improvement in the users' overall satisfaction with the image search experience, without diminishing the users' ability to see the ad or recall the advertised brand.
