Frank Dignum

Rethinking How AI Embeds and Adapts to Human Values: Challenges and Opportunities

Aug 23, 2025

Abstract:The concepts of "human-centered AI" and "value-based decision" have gained significant attention in both research and industry. However, many critical aspects remain underexplored and require further investigation. In particular, there is a need to understand how systems incorporate human values, how humans can identify these values within systems, and how to minimize the risks of harm or unintended consequences. In this paper, we highlight the need to rethink how we frame value alignment and assert that value alignment should move beyond static and singular conceptions of values. We argue that AI systems should implement long-term reasoning and remain adaptable to evolving values. Furthermore, value alignment requires more theories to address the full spectrum of human values. Since values often vary among individuals or groups, multi-agent systems provide the right framework for navigating pluralism, conflict, and inter-agent reasoning about values. We identify the challenges associated with value alignment and indicate directions for advancing value alignment research. In addition, we broadly discuss diverse perspectives of value alignment, from design methodologies to practical applications.

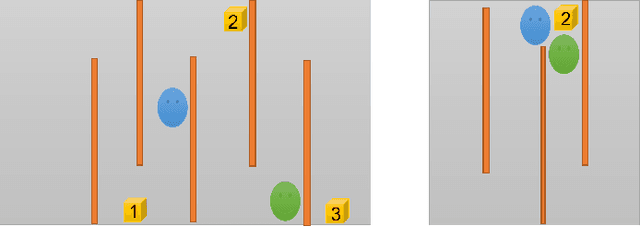

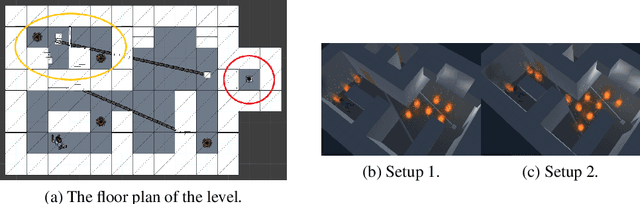

Cooperative Multi-agent Approach for Automated Computer Game Testing

May 18, 2024

Abstract:Automated testing of computer games is a challenging problem, especially when lengthy scenarios have to be tested. Automating such a scenario boils down to finding the right sequence of interactions given an abstract description of the scenario. Recent works have shown that an agent-based approach works well for the purpose, e.g. due to agents' reactivity, hence enabling a test agent to immediately react to game events and changing state. Many games nowadays are multi-player. This opens up an interesting possibility to deploy multiple cooperative test agents to test such a game, for example to speed up the execution of multiple testing tasks. This paper offers a cooperative multi-agent testing approach and a study of its performance based on a case study on a 3D game called Lab Recruits.

Social AI and the Challenges of the Human-AI Ecosystem

Jun 23, 2023

Abstract:The rise of large-scale socio-technical systems in which humans interact with artificial intelligence (AI) systems (including assistants and recommenders, in short AIs) multiplies the opportunity for the emergence of collective phenomena and tipping points, with unexpected, possibly unintended, consequences. For example, navigation systems' suggestions may create chaos if too many drivers are directed on the same route, and personalised recommendations on social media may amplify polarisation, filter bubbles, and radicalisation. On the other hand, we may learn how to foster the "wisdom of crowds" and collective action effects to face social and environmental challenges. In order to understand the impact of AI on socio-technical systems and design next-generation AIs that team with humans to help overcome societal problems rather than exacerbate them, we propose to build the foundations of Social AI at the intersection of Complex Systems, Network Science and AI. In this perspective paper, we discuss the main open questions in Social AI, outlining possible technical and scientific challenges and suggesting research avenues.

Social Practices for Social Driven Conversations in Serious Games

Jun 10, 2022

Abstract:This paper describes the model of social practice as a theoretical framework to manage conversation with the specific goal of training physicians in communicative skills. To this aim, the domain reasoner that manages the conversation in the Communicate! \cite{jeuring} serious game is taken as a basis. Because the choice of a specific Social Practice to follow in a situation is non-trivial, we use a probabilistic model for the selection of social practices as a step toward the implementation of an agent architecture compliant with the social practice model.

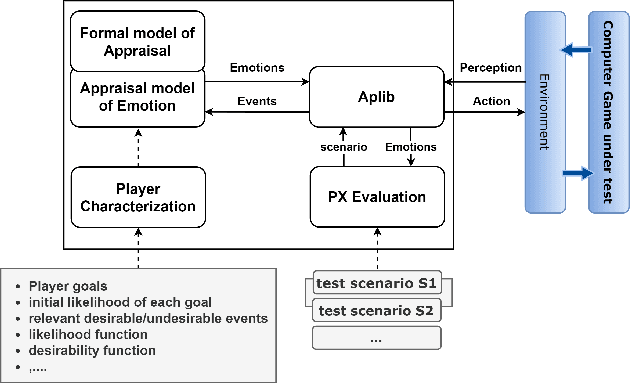

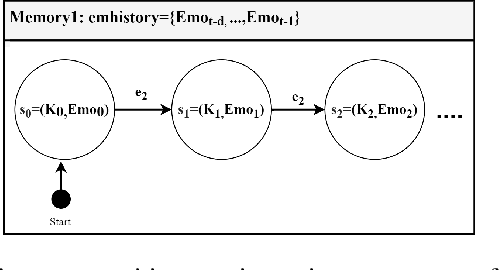

An Appraisal Transition System for Event-driven Emotions in Agent-based Player Experience Testing

May 12, 2021

Abstract:Player experience (PX) evaluation has become a field of interest in the game industry. Several manual PX techniques have been introduced to assist developers to understand and evaluate the experience of players in computer games. However, automated testing of player experience still needs to be addressed. An automated player experience testing framework would allow designers to evaluate the PX requirements in the early development stages without the necessity of participating human players. In this paper, we propose an automated player experience testing approach by suggesting a formal model of event-based emotions. In particular, we discuss an event-based transition system to formalize relevant emotions using Ortony, Clore, & Collins (OCC) theory of emotions. A working prototype of the model is integrated on top of Aplib, a tactical agent programming library, to create intelligent PX test agents, capable of appraising emotions in a 3D game case study. The results are graphically shown e.g. as heat maps. Emotion visualization of the test agent would ultimately help game designers in creating content that evokes a certain experience in players.

Let's Make It Personal, A Challenge in Personalizing Medical Inter-Human Communication

Jul 29, 2019

Abstract:Current AI approaches have frequently been used to help personalize many aspects of medical experiences and tailor them to a specific individual's needs. However, while such systems consider medically-relevant information, they ignore socially-relevant information about how this diagnosis should be communicated and discussed with the patient. The lack of this capability may lead to miscommunication, resulting in serious implications, such as patients opting out of the best treatment. Consider a case in which the same treatment is proposed to two different individuals. The manner in which this treatment is mediated to each should be different, depending on the individual patient's history, knowledge, and mental state. While it is clear that this communication should be conveyed via a human medical expert and not a software-based system, humans are not always capable of considering all of the relevant aspects and traversing all available information. We pose the challenge of creating Intelligent Agents (IAs) to assist medical service providers (MSPs) and consumers in establishing a more personalized human-to-human dialogue. Personalizing conversations will enable patients and MSPs to reach a solution that is best for their particular situation, such that a relation of trust can be built and commitment to the outcome of the interaction is assured. We propose a four-part conceptual framework for personalized social interactions, expand on which techniques are available within current AI research and discuss what has yet to be achieved.

Governance by Glass-Box: Implementing Transparent Moral Bounds for AI Behaviour

Apr 30, 2019

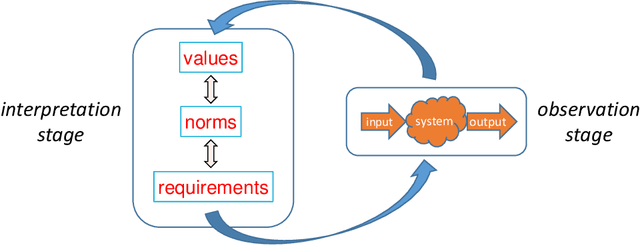

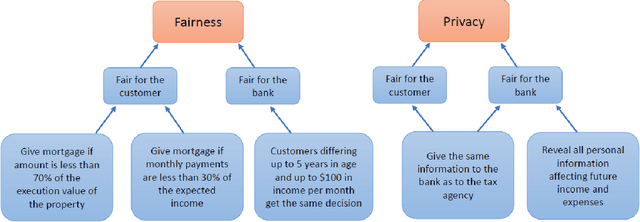

Abstract:Artificial Intelligence (AI) applications are being used to predict and assess behaviour in multiple domains, such as criminal justice and consumer finance, which directly affect human well-being. However, if AI is to improve people's lives, then people must be able to trust AI, which means being able to understand what the system is doing and why. Even though transparency is often seen as the requirement in this case, realistically it might not always be possible or desirable, whereas the need to ensure that the system operates within set moral bounds remains. In this paper, we present an approach to evaluate the moral bounds of an AI system based on the monitoring of its inputs and outputs. We place a "glass box" around the system by mapping moral values into explicit verifiable norms that constrain inputs and outputs, in such a way that if these remain within the box we can guarantee that the system adheres to the value. The focus on inputs and outputs allows for the verification and comparison of vastly different intelligent systems, from deep neural networks to agent-based systems. The explicit transformation of abstract moral values into concrete norms brings great benefits in terms of explainability: stakeholders know exactly how the system is interpreting and employing relevant abstract moral human values and can calibrate their trust accordingly. Moreover, by operating at a higher level we can check the compliance of the system with different interpretations of the same value. These advantages will have an impact on the well-being of AI system users at large, building their trust and providing them with concrete knowledge on how systems adhere to moral values.

Interactions as Social Practices: towards a formalization

Sep 24, 2018

Abstract:Multi-agent models are a suitable starting point to model complex social interactions. However, as the complexity of the systems increases, we argue that novel modeling approaches are needed that can deal with inter-dependencies at different levels of society, where many heterogeneous parties (software agents, robots, humans) are interacting and reacting to each other. In this paper, we present a formalization of a social framework for agents based on the concept of Social Practices as high-level specifications of normal (expected) behavior in a given social context. We argue that social practices facilitate the practical reasoning of agents in standard social interactions.

A Logic of Agent Organizations

Apr 28, 2018

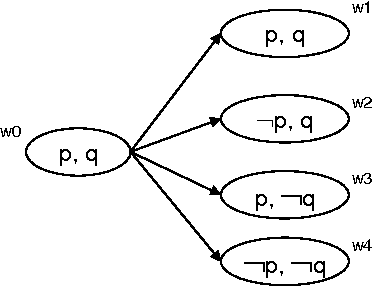

Abstract:Organization concepts and models are increasingly being adopted for the design and specification of multi-agent systems. Agent organizations can be seen as mechanisms of social order, created to achieve global (or organizational) objectives by more or less autonomous agents. In order to develop a theory on the relation between organizational structures, organizational objectives and the actions of agents fulfilling roles in the organization, a theoretical framework is needed to describe organizational structures and actions of (groups of) agents. Current logical formalisms focus on specific aspects of organizations (e.g. power, delegation, agent actions, or normative issues) but a framework that integrates and relates different aspects is missing. Given the number of aspects involved and the subsequent complexity of a formalism encompassing them all, such a framework is difficult to realize. In this paper, a first step is taken to solve this problem. We present a generic formal model that makes it possible to specify and relate the main concepts of an organization (including activity, structure, environment and others) so that organizations can be analyzed at a high level of abstraction. However, for some aspects we use a simplified model in order to avoid the complexity of combining many different types of (modal) operators.

Social planning for social HRI

Feb 21, 2016

Abstract:Making a computational agent 'social' has implications for how it perceives itself and the environment in which it is situated, including the ability to recognise the behaviours of others. We point to recent work on social planning, i.e. planning in settings where the social context is relevant in the assessment of the beliefs and capabilities of others, and in making appropriate choices of what to do next.
