Christian Muise

Make Planning Research Rigorous Again!

May 27, 2025

Abstract:In over sixty years since its inception, the field of planning has made significant contributions to both the theory and practice of building planning software that can solve a never-before-seen planning problem. This was done through established practices of rigorous design and evaluation of planning systems. It is our position that this rigor should be applied to the current trend of work on planning with large language models. One way to do so is by correctly incorporating the insights, tools, and data from the automated planning community into the design and evaluation of LLM-based planners. The experience and expertise of the planning community are not just important from a historical perspective; the lessons learned could play a crucial role in accelerating the development of LLM-based planners. This position is particularly important in light of the abundance of recent works that replicate and propagate the same pitfalls that the planning community has encountered and learned from. We believe that avoiding such known pitfalls will contribute greatly to the progress in building LLM-based planners and to planning in general.

PRP Rebooted: Advancing the State of the Art in FOND Planning

Dec 20, 2023

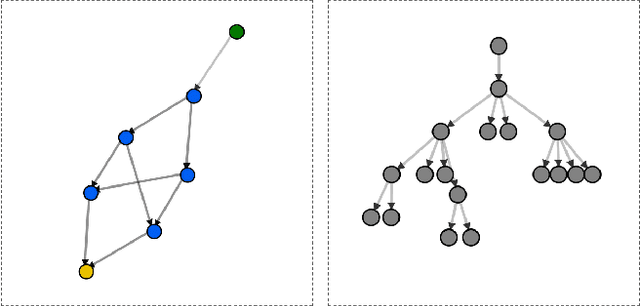

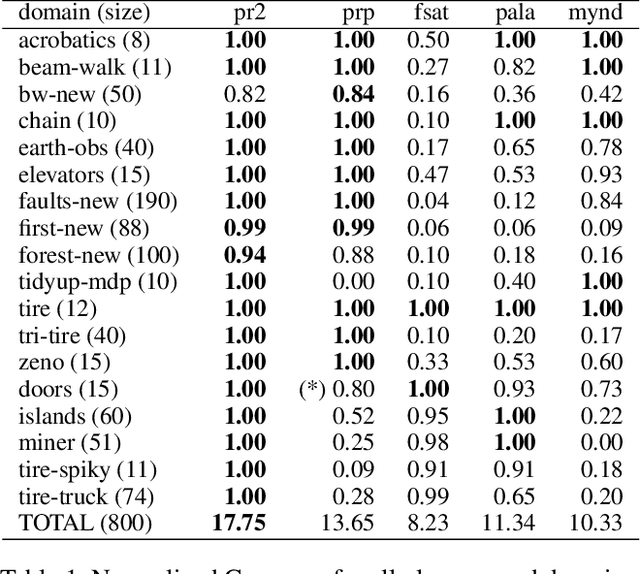

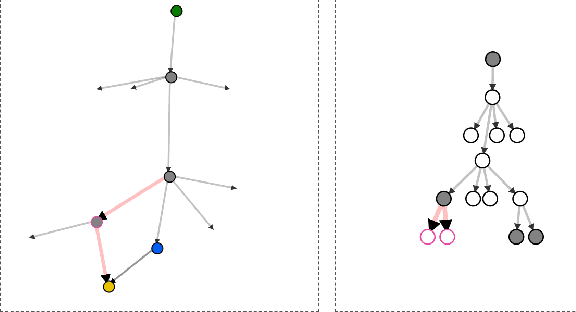

Abstract:Fully Observable Non-Deterministic (FOND) planning is a variant of classical symbolic planning in which actions are nondeterministic, with an action's outcome known only upon execution. It is a popular planning paradigm with applications ranging from robot planning to dialogue-agent design and reactive synthesis. Over the last 20 years, a number of approaches to FOND planning have emerged. In this work, we establish a new state of the art, following in the footsteps of some of the most powerful FOND planners to date. Our planner, PR2, decisively outperforms the four leading FOND planners, at times by a large margin, in 17 of 18 domains that represent a comprehensive benchmark suite. Ablation studies demonstrate the impact of various techniques we introduce, with the largest improvement coming from our novel FOND-aware heuristic.
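To make the FOND setting concrete, the following is a minimal sketch, not taken from PR2 or any other planner. It assumes a hypothetical toy domain in which each action maps a state to a list of possible successor states, and it checks whether a policy is a strong cyclic solution, meaning that a goal state stays reachable from every state the policy can reach. The names `pick_up`, `wait`, `ACTIONS`, and `is_strong_cyclic` are illustrative assumptions.

```python
from typing import Callable, Dict, FrozenSet, List, Set

State = FrozenSet[str]
Action = Callable[[State], List[State]]


def pick_up(state: State) -> List[State]:
    """Hypothetical nondeterministic action: the grasp succeeds or slips."""
    if "holding" in state:
        return [state]
    return [state | {"holding"}, state]


def wait(state: State) -> List[State]:
    """Hypothetical deterministic action that changes nothing."""
    return [state]


ACTIONS: Dict[str, Action] = {"pick_up": pick_up, "wait": wait}


def is_strong_cyclic(
    init: State,
    policy: Dict[State, str],
    actions: Dict[str, Action],
    is_goal: Callable[[State], bool],
) -> bool:
    """Return True if a goal is reachable from every state the policy reaches."""
    successors: Dict[State, List[State]] = {}
    frontier: List[State] = [init]
    while frontier:
        state = frontier.pop()
        if state in successors:
            continue
        if is_goal(state):
            successors[state] = []  # goal states are terminal
            continue
        if state not in policy:
            return False  # the policy is undefined on a reachable non-goal state
        successors[state] = actions[policy[state]](state)
        frontier.extend(successors[state])

    # Backward fixpoint: grow the set of states that can still reach a goal.
    can_reach: Set[State] = {s for s in successors if is_goal(s)}
    changed = True
    while changed:
        changed = False
        for state in set(successors) - can_reach:
            if any(nxt in can_reach for nxt in successors[state]):
                can_reach.add(state)
                changed = True
    return can_reach == set(successors)


def holding_goal(state: State) -> bool:
    return "holding" in state


EMPTY: State = frozenset()
RETRY_POLICY = {EMPTY: "pick_up"}  # keep trying until the grasp succeeds
IDLE_POLICY = {EMPTY: "wait"}      # never makes progress

retry_ok = is_strong_cyclic(EMPTY, RETRY_POLICY, ACTIONS, holding_goal)
idle_ok = is_strong_cyclic(EMPTY, IDLE_POLICY, ACTIONS, holding_goal)
```

In this toy domain the retry policy qualifies because the successful outcome of `pick_up` remains possible on every attempt, while the idle policy does not because it can never leave the initial state.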

Automated Planning Techniques for Elementary Proofs in Abstract Algebra

Dec 11, 2023

Abstract:This paper explores the application of automated planning to automated theorem proving, which is a branch of automated reasoning concerned with the development of algorithms and computer programs to construct mathematical proofs. In particular, we investigate the use of planning to construct elementary proofs in abstract algebra, which provides a rigorous and axiomatic framework for studying algebraic structures such as groups, rings, fields, and modules. We implement basic implications, equalities, and rules in both deterministic and non-deterministic domains to model commutative rings and deduce elementary results about them. The success of this initial implementation suggests that the well-established techniques seen in automated planning are applicable to the relatively newer field of automated theorem proving. Likewise, automated theorem proving provides a new, challenging domain for automated planning.

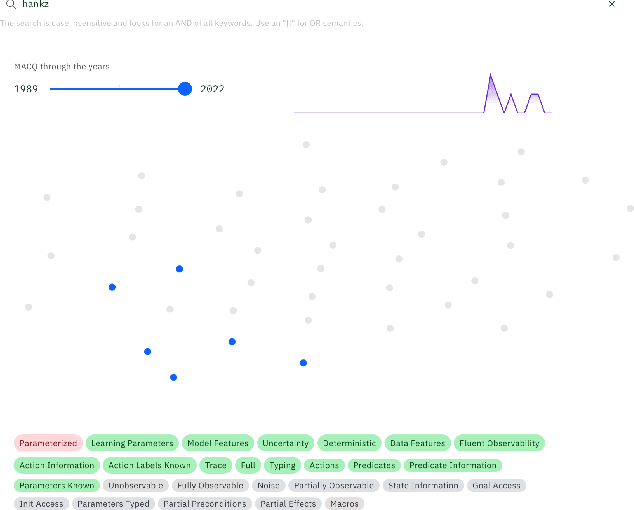

TOBY: A Tool for Exploring Data in Academic Survey Papers

Jun 13, 2023

Abstract:This paper describes TOBY, a visualization tool that helps a user explore the contents of an academic survey paper. The visualization consists of four components: a hierarchical view of taxonomic data in the survey, a document similarity view in the space of taxonomic classes, a network view of citations, and a new paper recommendation tool. In this paper, we will discuss these features in the context of three separate deployments of the tool.

Egocentric Planning for Scalable Embodied Task Achievement

Jun 02, 2023

Abstract:Embodied agents face significant challenges when tasked with performing actions in diverse environments, particularly in generalizing across object types and executing suitable actions to accomplish tasks. Furthermore, agents should exhibit robustness, minimizing the execution of illegal actions. In this work, we present Egocentric Planning, an innovative approach that combines symbolic planning and Object-oriented POMDPs to solve tasks in complex environments, harnessing existing models for visual perception and natural language processing. We evaluated our approach in ALFRED, a simulated environment designed for domestic tasks, and demonstrated its high scalability, achieving an impressive 36.07% unseen success rate in the ALFRED benchmark and winning the ALFRED challenge at CVPR Embodied AI workshop. Our method requires reliable perception and the specification or learning of a symbolic description of the preconditions and effects of the agent's actions, as well as what object types reveal information about others. It is capable of naturally scaling to solve new tasks beyond ALFRED, as long as they can be solved using the available skills. This work offers a solid baseline for studying end-to-end and hybrid methods that aim to generalize to new tasks, including recent approaches that rely on LLMs but often struggle to scale to long sequences of actions or to produce robust plans for novel tasks.

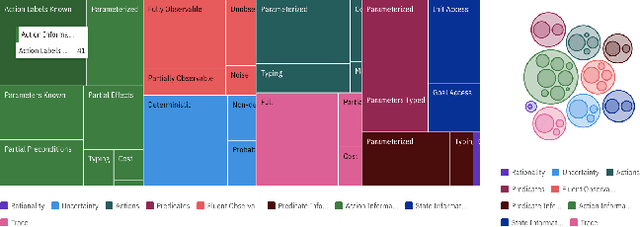

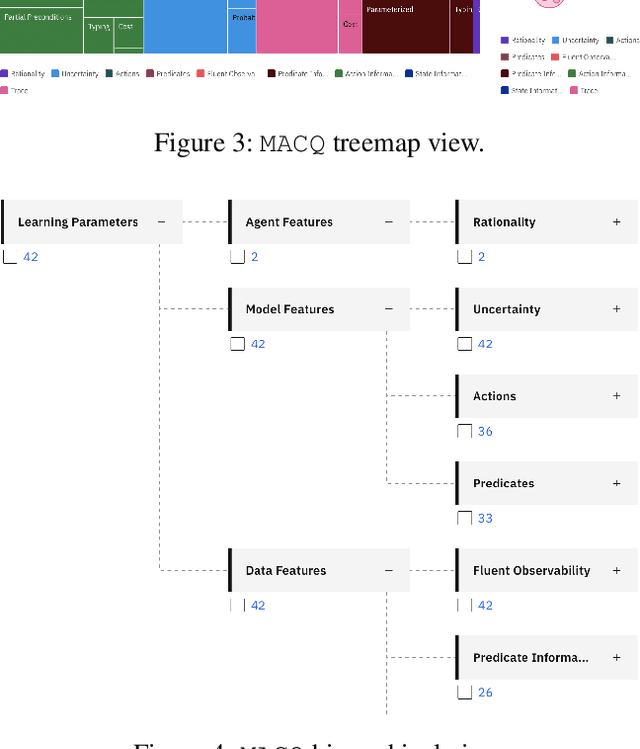

MACQ: A Holistic View of Model Acquisition Techniques

Jun 14, 2022

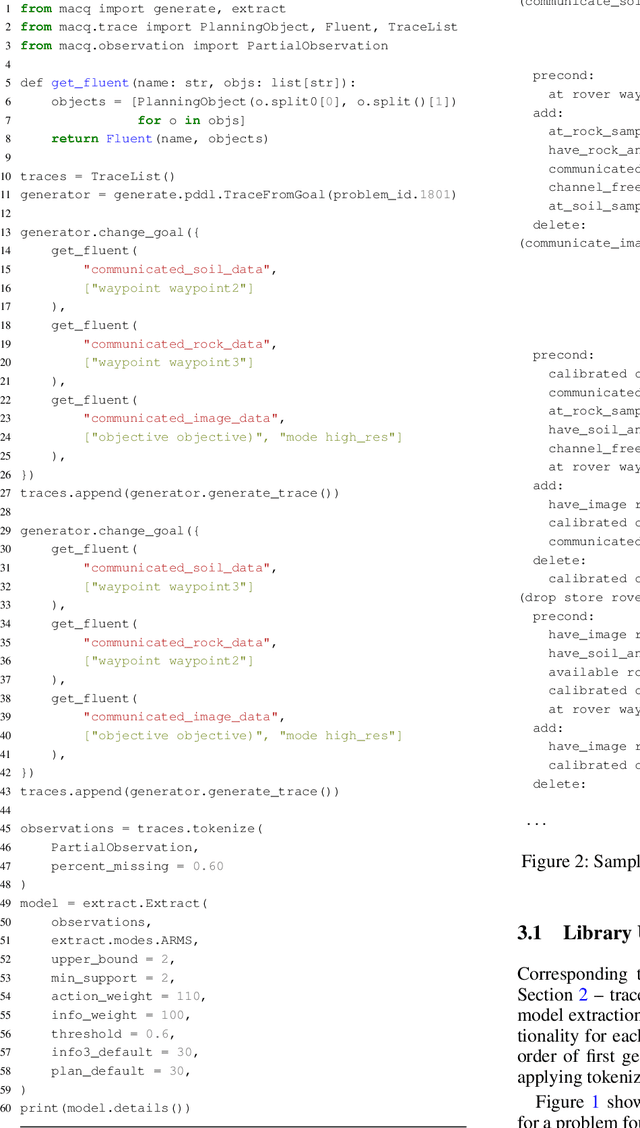

Abstract:For over three decades, the planning community has explored countless methods for data-driven model acquisition. These range in sophistication (e.g., simple set operations to full-blown reformulations), methodology (e.g., logic-based vs. planning-based), and assumptions (e.g., fully vs. partially observable). With no fewer than 43 publications in the space, it can be overwhelming to understand what approach could or should be applied in a new setting. We present a holistic characterization of the action model acquisition space and further introduce a unifying framework for automated action model acquisition. We have re-implemented some of the landmark approaches in the area, and our characterization of all the techniques offers deep insight into the research opportunities that remain; i.e., those settings that no existing technique is capable of handling.
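As an illustration of the "simple set operations" end of that spectrum, here is a minimal sketch of learning a STRIPS-style action model from traces. It is not MACQ's API and does not reproduce any specific published technique. It assumes fully observable, noise-free states and deterministic actions, and all names (`LearnedAction`, `learn_actions`, the fluent strings) are hypothetical.

```python
from typing import Dict, FrozenSet, Iterable, NamedTuple, Tuple

State = FrozenSet[str]
Transition = Tuple[State, str, State]  # (state before, action name, state after)


class LearnedAction(NamedTuple):
    preconditions: FrozenSet[str]
    add_effects: FrozenSet[str]
    delete_effects: FrozenSet[str]


def learn_actions(transitions: Iterable[Transition]) -> Dict[str, LearnedAction]:
    """Learn one model per action name using only set operations.

    Preconditions: fluents true in every observed pre-state (intersection).
    Add effects: fluents that became true in some observed transition.
    Delete effects: fluents that became false in some observed transition.
    """
    model: Dict[str, LearnedAction] = {}
    for pre, name, post in transitions:
        adds = post - pre
        deletes = pre - post
        if name not in model:
            model[name] = LearnedAction(pre, adds, deletes)
        else:
            old = model[name]
            model[name] = LearnedAction(
                old.preconditions & pre,
                old.add_effects | adds,
                old.delete_effects | deletes,
            )
    return model


# Two hypothetical observations of the same action in different contexts.
TRACES = [
    (frozenset({"clear_a", "handempty", "ontable_a"}),
     "pickup_a",
     frozenset({"holding_a"})),
    (frozenset({"clear_a", "handempty", "ontable_a", "clear_b"}),
     "pickup_a",
     frozenset({"holding_a", "clear_b"})),
]

learned = learn_actions(TRACES)
```

The second trace removes `clear_b` from the candidate preconditions because it is absent from the first pre-state. Under the stated assumptions, more traces can only shrink the learned preconditions toward the true ones.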

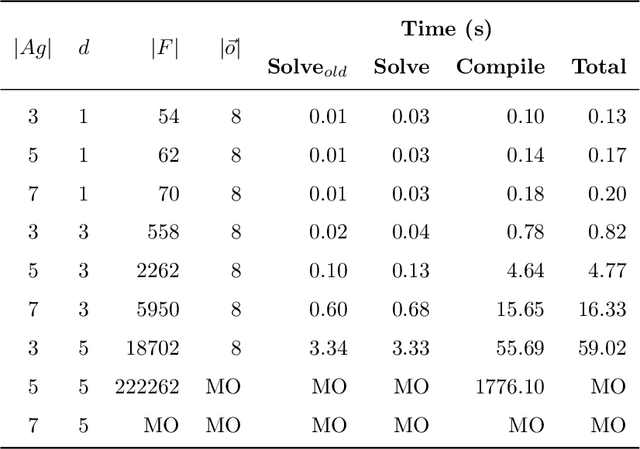

Efficient Multi-agent Epistemic Planning: Teaching Planners About Nested Belief

Oct 06, 2021

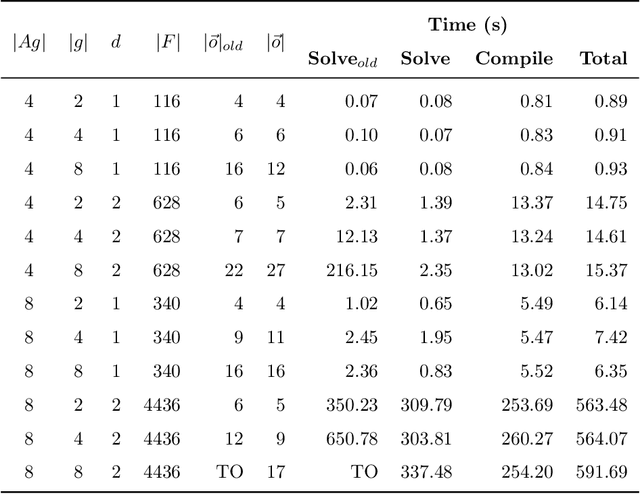

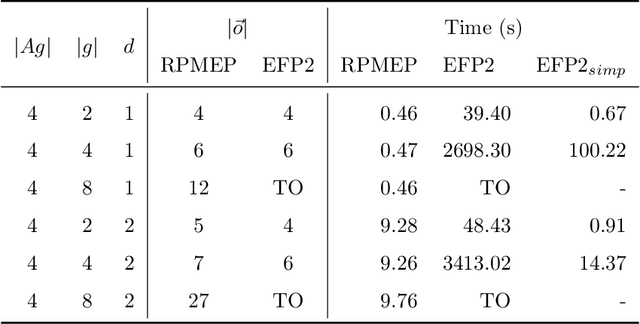

Abstract:Many AI applications involve the interaction of multiple autonomous agents, requiring those agents to reason about their own beliefs, as well as those of other agents. However, planning involving nested beliefs is known to be computationally challenging. In this work, we address the task of synthesizing plans that necessitate reasoning about the beliefs of other agents. We plan from the perspective of a single agent with the potential for goals and actions that involve nested beliefs, non-homogeneous agents, co-present observations, and the ability for one agent to reason as if it were another. We formally characterize our notion of planning with nested belief, and subsequently demonstrate how to automatically convert such problems into problems that appeal to classical planning technology for solving efficiently. Our approach represents an important step towards applying the well-established field of automated planning to the challenging task of planning involving nested beliefs of multiple agents.

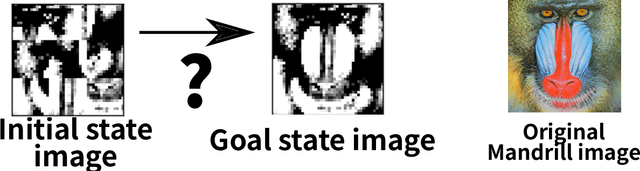

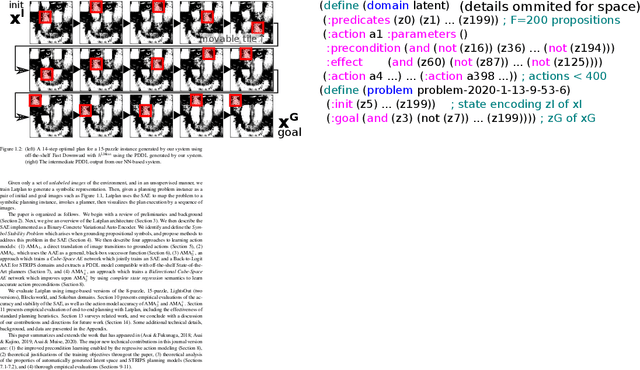

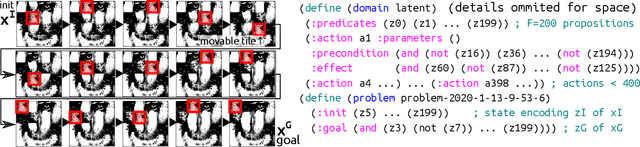

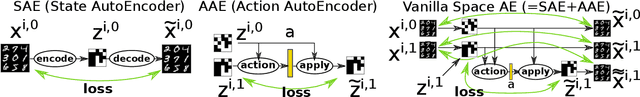

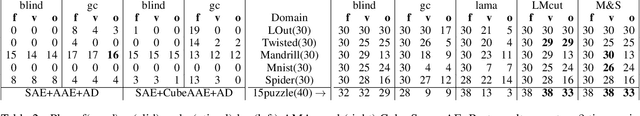

Classical Planning in Deep Latent Space

Jun 30, 2021

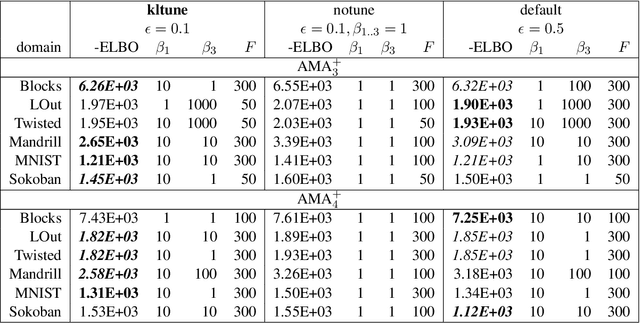

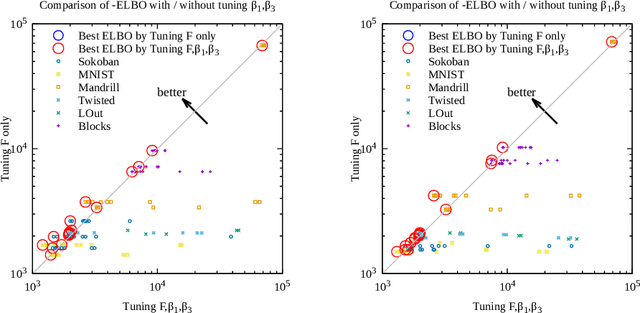

Abstract:Current domain-independent, classical planners require symbolic models of the problem domain and instance as input, resulting in a knowledge acquisition bottleneck. Meanwhile, although deep learning has achieved significant success in many fields, the knowledge is encoded in a subsymbolic representation which is incompatible with symbolic systems such as planners. We propose Latplan, an unsupervised architecture combining deep learning and classical planning. Given only an unlabeled set of image pairs showing a subset of transitions allowed in the environment (training inputs), Latplan learns a complete propositional PDDL action model of the environment. Later, when a pair of images representing the initial and the goal states (planning inputs) is given, Latplan finds a plan to the goal state in a symbolic latent space and returns a visualized plan execution. We evaluate Latplan using image-based versions of 6 planning domains: 8-Puzzle, 15-Puzzle, Blocksworld, Sokoban, and two variations of LightsOut.

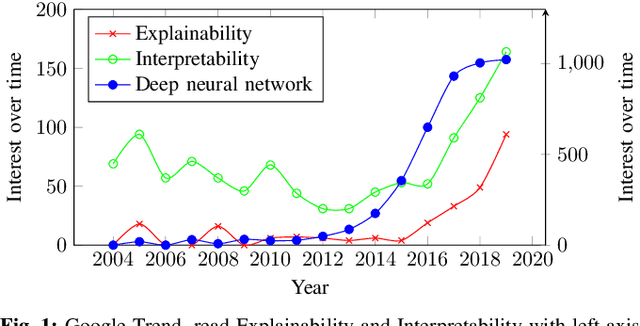

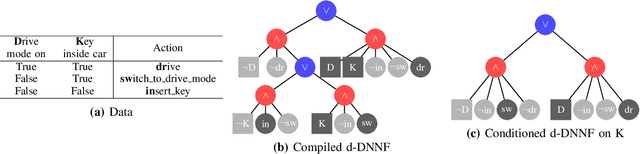

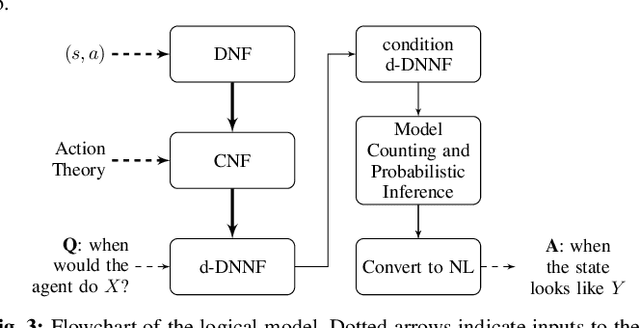

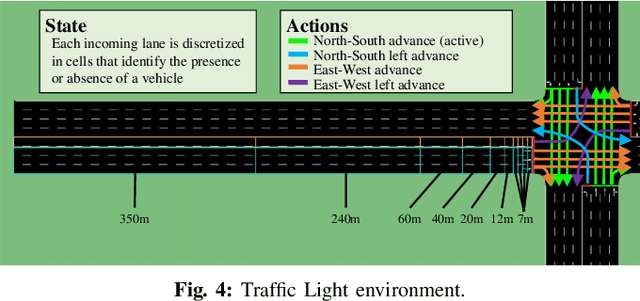

Explainability of Intelligent Transportation Systems using Knowledge Compilation: a Traffic Light Controller Case

Jul 09, 2020

Abstract:Automated controllers that make decisions about an environment are widespread and are often based on black-box models. We use Knowledge Compilation theory to bring explainability to the controller's decision given the state of the system. For this, we use simulated historical state-action data as input and build a compact and structured representation which relates states with actions. We implement this method in a Traffic Light Control scenario where the controller selects the light cycle by observing the presence (or absence) of vehicles in different regions of the incoming roads.

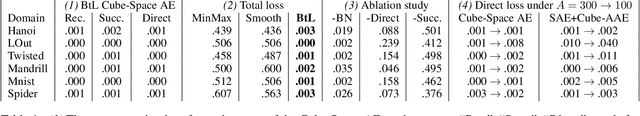

Learning Neural-Symbolic Descriptive Planning Models via Cube-Space Priors: The Voyage Home (to STRIPS)

Apr 27, 2020

Abstract:We achieved a new milestone in the difficult task of enabling agents to learn about their environment autonomously. Our neuro-symbolic architecture is trained end-to-end to produce a succinct and effective discrete state transition model from images alone. Our target representation (the Planning Domain Definition Language) is already in a form that off-the-shelf solvers can consume, and opens the door to the rich array of modern heuristic search capabilities. We demonstrate how the sophisticated innate prior we place on the learning process significantly reduces the complexity of the learned representation, and reveals a connection to the graph-theoretic notion of "cube-like graphs", thus opening the door to a deeper understanding of the ideal properties for learned symbolic representations. We show that the powerful domain-independent heuristics allow our system to solve visual 15-Puzzle instances which are beyond the reach of blind search, without resorting to the Reinforcement Learning approach that requires a huge amount of training on the domain-dependent reward information.
