Yixi Cai

Memory-Efficient Boundary Map for Large-Scale Occupancy Grid Mapping

Mar 23, 2026Abstract:Determining the occupancy status of locations in the environment is a fundamental task for safety-critical robotic applications. Traditional occupancy grid mapping methods subdivide the environment into a grid of voxels, each associated with one of three occupancy states: free, occupied, or unknown. These methods explicitly maintain all voxels within the mapped volume and determine the occupancy state of a location by directly querying the corresponding voxel that the location falls within. However, maintaining all grid voxels in high-resolution and large-scale scenarios requires substantial memory resources. In this paper, we introduce a novel representation that only maintains the boundary of the mapped volume. Specifically, we explicitly represent the boundary voxels, such as the occupied voxels and frontier voxels, while free and unknown voxels are automatically represented by volumes within or outside the boundary, respectively. As our representation maintains only a closed surface in two-dimensional (2D) space, instead of the entire volume in three-dimensional (3D) space, it significantly reduces memory consumption. Then, based on this 2D representation, we propose a method to determine the occupancy state of arbitrary locations in the 3D environment. We term this method as boundary map. Besides, we design a novel data structure for maintaining the boundary map, supporting efficient occupancy state queries. Theoretical analyses of the occupancy state query algorithm are also provided. Furthermore, to enable efficient construction and updates of the boundary map from the real-time sensor measurements, we propose a global-local mapping framework and corresponding update algorithms. Finally, we will make our implementation of the boundary map open-source on GitHub to benefit the community:https://github.com/hku-mars/BDM.

DeltaFlow: An Efficient Multi-frame Scene Flow Estimation Method

Aug 23, 2025

Abstract:Previous dominant methods for scene flow estimation focus mainly on input from two consecutive frames, neglecting valuable information in the temporal domain. While recent trends shift towards multi-frame reasoning, they suffer from rapidly escalating computational costs as the number of frames grows. To leverage temporal information more efficiently, we propose DeltaFlow ($\Delta$Flow), a lightweight 3D framework that captures motion cues via a $\Delta$ scheme, extracting temporal features with minimal computational cost, regardless of the number of frames. Additionally, scene flow estimation faces challenges such as imbalanced object class distributions and motion inconsistency. To tackle these issues, we introduce a Category-Balanced Loss to enhance learning across underrepresented classes and an Instance Consistency Loss to enforce coherent object motion, improving flow accuracy. Extensive evaluations on the Argoverse 2 and Waymo datasets show that $\Delta$Flow achieves state-of-the-art performance with up to 22% lower error and $2\times$ faster inference compared to the next-best multi-frame supervised method, while also demonstrating a strong cross-domain generalization ability. The code is open-sourced at https://github.com/Kin-Zhang/DeltaFlow along with trained model weights.

PRIX: Learning to Plan from Raw Pixels for End-to-End Autonomous Driving

Jul 24, 2025Abstract:While end-to-end autonomous driving models show promising results, their practical deployment is often hindered by large model sizes, a reliance on expensive LiDAR sensors and computationally intensive BEV feature representations. This limits their scalability, especially for mass-market vehicles equipped only with cameras. To address these challenges, we propose PRIX (Plan from Raw Pixels). Our novel and efficient end-to-end driving architecture operates using only camera data, without explicit BEV representation and forgoing the need for LiDAR. PRIX leverages a visual feature extractor coupled with a generative planning head to predict safe trajectories from raw pixel inputs directly. A core component of our architecture is the Context-aware Recalibration Transformer (CaRT), a novel module designed to effectively enhance multi-level visual features for more robust planning. We demonstrate through comprehensive experiments that PRIX achieves state-of-the-art performance on the NavSim and nuScenes benchmarks, matching the capabilities of larger, multimodal diffusion planners while being significantly more efficient in terms of inference speed and model size, making it a practical solution for real-world deployment. Our work is open-source and the code will be at https://maxiuw.github.io/prix.

GauSS-MI: Gaussian Splatting Shannon Mutual Information for Active 3D Reconstruction

Apr 29, 2025

Abstract:This research tackles the challenge of real-time active view selection and uncertainty quantification on visual quality for active 3D reconstruction. Visual quality is a critical aspect of 3D reconstruction. Recent advancements such as Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS) have notably enhanced the image rendering quality of reconstruction models. Nonetheless, the efficient and effective acquisition of input images for reconstruction-specifically, the selection of the most informative viewpoint-remains an open challenge, which is crucial for active reconstruction. Existing studies have primarily focused on evaluating geometric completeness and exploring unobserved or unknown regions, without direct evaluation of the visual uncertainty within the reconstruction model. To address this gap, this paper introduces a probabilistic model that quantifies visual uncertainty for each Gaussian. Leveraging Shannon Mutual Information, we formulate a criterion, Gaussian Splatting Shannon Mutual Information (GauSS-MI), for real-time assessment of visual mutual information from novel viewpoints, facilitating the selection of next best view. GauSS-MI is implemented within an active reconstruction system integrated with a view and motion planner. Extensive experiments across various simulated and real-world scenes showcase the superior visual quality and reconstruction efficiency performance of the proposed system.

Efficient Swept Volume-Based Trajectory Generation for Arbitrary-Shaped Ground Robot Navigation

Apr 10, 2025Abstract:Navigating an arbitrary-shaped ground robot safely in cluttered environments remains a challenging problem. The existing trajectory planners that account for the robot's physical geometry severely suffer from the intractable runtime. To achieve both computational efficiency and Continuous Collision Avoidance (CCA) of arbitrary-shaped ground robot planning, we proposed a novel coarse-to-fine navigation framework that significantly accelerates planning. In the first stage, a sampling-based method selectively generates distinct topological paths that guarantee a minimum inflated margin. In the second stage, a geometry-aware front-end strategy is designed to discretize these topologies into full-state robot motion sequences while concurrently partitioning the paths into SE(2) sub-problems and simpler R2 sub-problems for back-end optimization. In the final stage, an SVSDF-based optimizer generates trajectories tailored to these sub-problems and seamlessly splices them into a continuous final motion plan. Extensive benchmark comparisons show that the proposed method is one to several orders of magnitude faster than the cutting-edge methods in runtime while maintaining a high planning success rate and ensuring CCA.

Temporal Overlapping Prediction: A Self-supervised Pre-training Method for LiDAR Moving Object Segmentation

Mar 10, 2025

Abstract:Moving object segmentation (MOS) on LiDAR point clouds is crucial for autonomous systems like self-driving vehicles. Previous supervised approaches rely heavily on costly manual annotations, while LiDAR sequences naturally capture temporal motion cues that can be leveraged for self-supervised learning. In this paper, we propose \textbf{T}emporal \textbf{O}verlapping \textbf{P}rediction (\textbf{TOP}), a self-supervised pre-training method that alleviate the labeling burden for MOS. \textbf{TOP} explores the temporal overlapping points that commonly observed by current and adjacent scans, and learns spatiotemporal representations by predicting the occupancy states of temporal overlapping points. Moreover, we utilize current occupancy reconstruction as an auxiliary pre-training objective, which enhances the current structural awareness of the model. We conduct extensive experiments and observe that the conventional metric Intersection-over-Union (IoU) shows strong bias to objects with more scanned points, which might neglect small or distant objects. To compensate for this bias, we introduce an additional metric called $\text{mIoU}_{\text{obj}}$ to evaluate object-level performance. Experiments on nuScenes and SemanticKITTI show that \textbf{TOP} outperforms both supervised training-from-scratch baseline and other self-supervised pre-training baselines by up to 28.77\% relative improvement, demonstrating strong transferability across LiDAR setups and generalization to other tasks. Code and pre-trained models will be publicly available upon publication.

Efficient and Distributed Large-Scale Point Cloud Bundle Adjustment via Majorization-Minimization

Feb 26, 2025

Abstract:Point cloud bundle adjustment is critical in large-scale point cloud mapping. However, it is both computationally and memory intensive, with its complexity growing cubically as the number of scan poses increases. This paper presents BALM3.0, an efficient and distributed large-scale point cloud bundle adjustment method. The proposed method employs the majorization-minimization algorithm to decouple the scan poses in the bundle adjustment process, thus performing the point cloud bundle adjustment on large-scale data with improved computational efficiency. The key difficulty of applying majorization-minimization on bundle adjustment is to identify the proper surrogate cost function. In this paper, the proposed surrogate cost function is based on the point-to-plane distance. The primary advantages of decoupling the scan poses via a majorization-minimization algorithm stem from two key aspects. First, the decoupling of scan poses reduces the optimization time complexity from cubic to linear, significantly enhancing the computational efficiency of the bundle adjustment process in large-scale environments. Second, it lays the theoretical foundation for distributed bundle adjustment. By distributing both data and computation across multiple devices, this approach helps overcome the limitations posed by large memory and computational requirements, which may be difficult for a single device to handle. The proposed method is extensively evaluated in both simulated and real-world environments. The results demonstrate that the proposed method achieves the same optimal residual with comparable accuracy while offering up to 704 times faster optimization speed and reducing memory usage to 1/8. Furthermore, this paper also presented and implemented a distributed bundle adjustment framework and successfully optimized large-scale data (21,436 poses with 70 GB point clouds) with four consumer-level laptops.

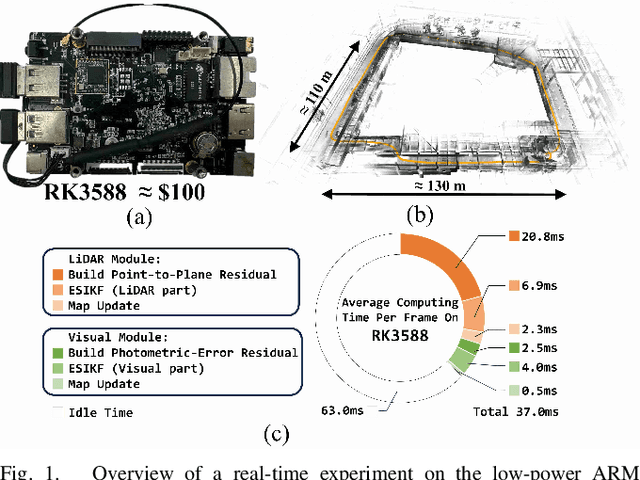

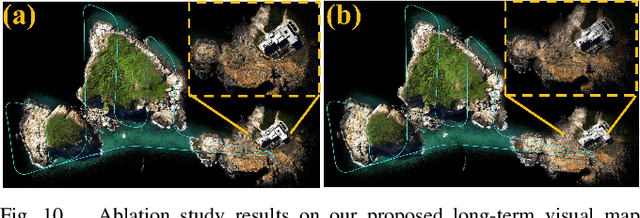

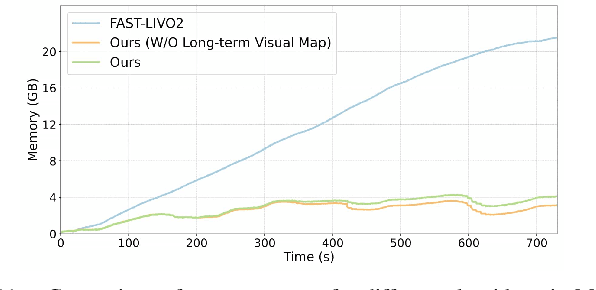

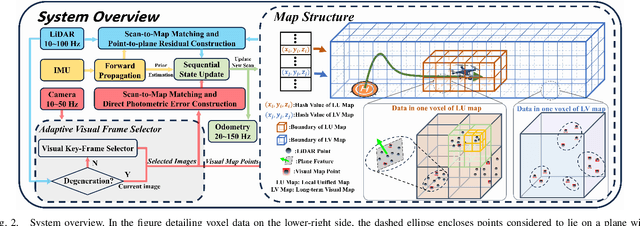

FAST-LIVO2 on Resource-Constrained Platforms: LiDAR-Inertial-Visual Odometry with Efficient Memory and Computation

Jan 23, 2025

Abstract:This paper presents a lightweight LiDAR-inertial-visual odometry system optimized for resource-constrained platforms. It integrates a degeneration-aware adaptive visual frame selector into error-state iterated Kalman filter (ESIKF) with sequential updates, improving computation efficiency significantly while maintaining a similar level of robustness. Additionally, a memory-efficient mapping structure combining a locally unified visual-LiDAR map and a long-term visual map achieves a good trade-off between performance and memory usage. Extensive experiments on x86 and ARM platforms demonstrate the system's robustness and efficiency. On the Hilti dataset, our system achieves a 33% reduction in per-frame runtime and 47% lower memory usage compared to FAST-LIVO2, with only a 3 cm increase in RMSE. Despite this slight accuracy trade-off, our system remains competitive, outperforming state-of-the-art (SOTA) LIO methods such as FAST-LIO2 and most existing LIVO systems. These results validate the system's capability for scalable deployment on resource-constrained edge computing platforms.

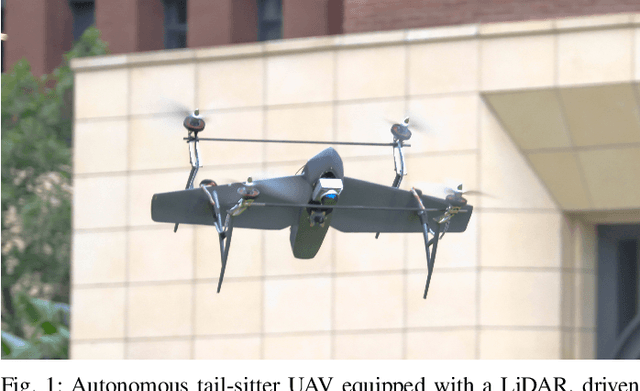

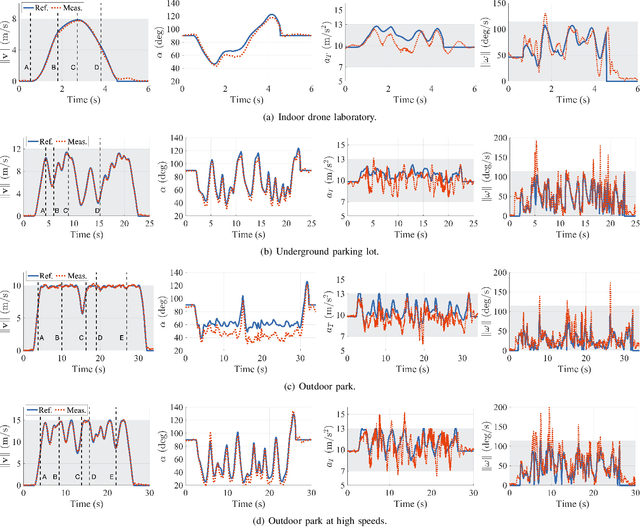

Autonomous Tail-Sitter Flights in Unknown Environments

Nov 26, 2024

Abstract:Trajectory generation for fully autonomous flights of tail-sitter unmanned aerial vehicles (UAVs) presents substantial challenges due to their highly nonlinear aerodynamics. In this paper, we introduce, to the best of our knowledge, the world's first fully autonomous tail-sitter UAV capable of high-speed navigation in unknown, cluttered environments. The UAV autonomy is enabled by cutting-edge technologies including LiDAR-based sensing, differential-flatness-based trajectory planning and control with purely onboard computation. In particular, we propose an optimization-based tail-sitter trajectory planning framework that generates high-speed, collision-free, and dynamically-feasible trajectories. To efficiently and reliably solve this nonlinear, constrained \textcolor{black}{problem}, we develop an efficient feasibility-assured solver, EFOPT, tailored for the online planning of tail-sitter UAVs. We conduct extensive simulation studies to benchmark EFOPT's superiority in planning tasks against conventional NLP solvers. We also demonstrate exhaustive experiments of aggressive autonomous flights with speeds up to 15m/s in various real-world environments, including indoor laboratories, underground parking lots, and outdoor parks. A video demonstration is available at https://youtu.be/OvqhlB2h3k8, and the EFOPT solver is open-sourced at https://github.com/hku-mars/EFOPT.

Swarm-LIO2: Decentralized, Efficient LiDAR-inertial Odometry for UAV Swarms

Sep 26, 2024

Abstract:Aerial swarm systems possess immense potential in various aspects, such as cooperative exploration, target tracking, search and rescue. Efficient, accurate self and mutual state estimation are the critical preconditions for completing these swarm tasks, which remain challenging research topics. This paper proposes Swarm-LIO2: a fully decentralized, plug-and-play, computationally efficient, and bandwidth-efficient LiDAR-inertial odometry for aerial swarm systems. Swarm-LIO2 uses a decentralized, plug-and-play network as the communication infrastructure. Only bandwidth-efficient and low-dimensional information is exchanged, including identity, ego-state, mutual observation measurements, and global extrinsic transformations. To support the plug-and-play of new teammate participants, Swarm-LIO2 detects potential teammate UAVs and initializes the temporal offset and global extrinsic transformation all automatically. To enhance the initialization efficiency, novel reflectivity-based UAV detection, trajectory matching, and factor graph optimization methods are proposed. For state estimation, Swarm-LIO2 fuses LiDAR, IMU, and mutual observation measurements within an efficient ESIKF framework, with careful compensation of temporal delay and modeling of measurements to enhance the accuracy and consistency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge