Xiyuan Liu

Low Time Complexity Near-Field Channel and Position Estimations

Dec 18, 2024

Abstract: With the application of high-frequency communication and extremely large MIMO (XL-MIMO), the near-field effect has become increasingly apparent. The near-field channel estimation and position estimation problems both rely on Angle of Arrival (AoA) and Curvature of Arrival (CoA) estimation. However, in the near-field channel model, the coupling of AoA and CoA information poses a challenge to the estimation of the near-field channel. This paper proposes a Joint Autocorrelation and Cross-correlation (JAC) scheme to decouple AoA and CoA estimation. Based on the JAC scheme, we propose two specific near-field estimation algorithms, namely the Inverse Sinc Function (JAC-ISF) and Gradient Descent (JAC-GD) algorithms. Finally, we analyze the time complexity of the JAC scheme and the Cramér-Rao lower bound (CRLB) for near-field position estimation. Simulation results show that algorithms based on the JAC scheme can resolve the coupling of CoA and AoA information in near-field estimation, thereby improving performance. The JAC-GD algorithm shows significant performance gains in channel estimation and position estimation at different SNRs, snapshot counts, and communication distances compared to other algorithms. This indicates that the JAC-GD algorithm can achieve more accurate channel and position estimation results while reducing time overhead.

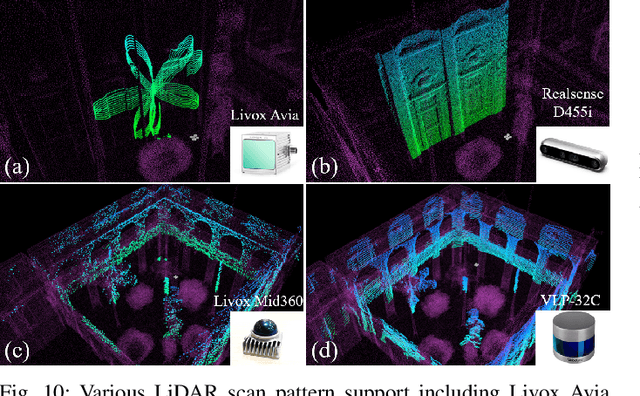

Voxel-SLAM: A Complete, Accurate, and Versatile LiDAR-Inertial SLAM System

Oct 11, 2024

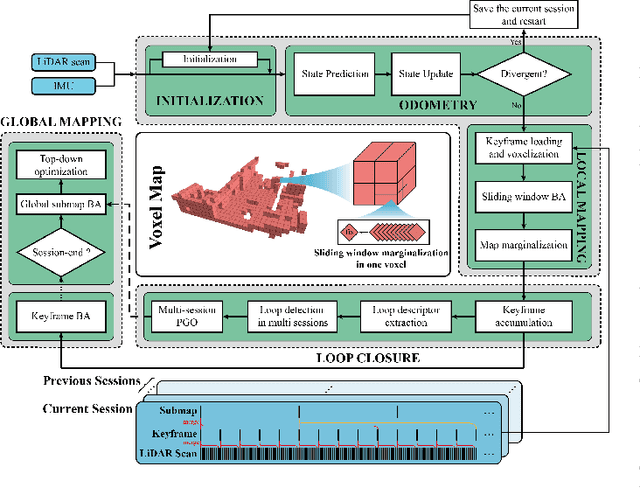

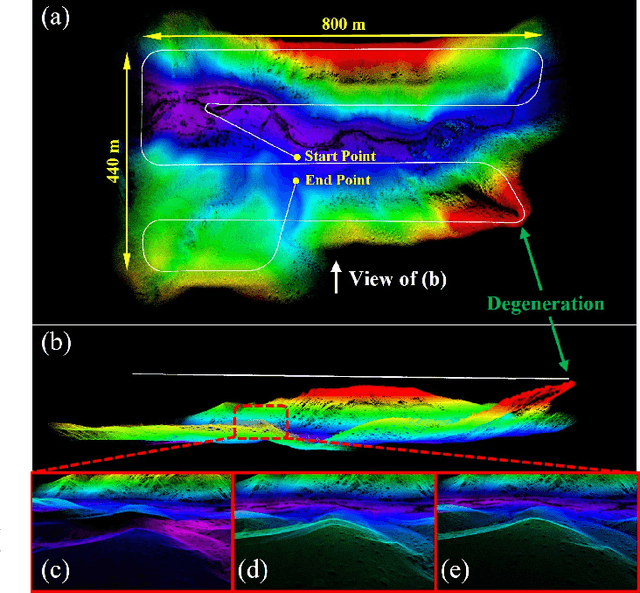

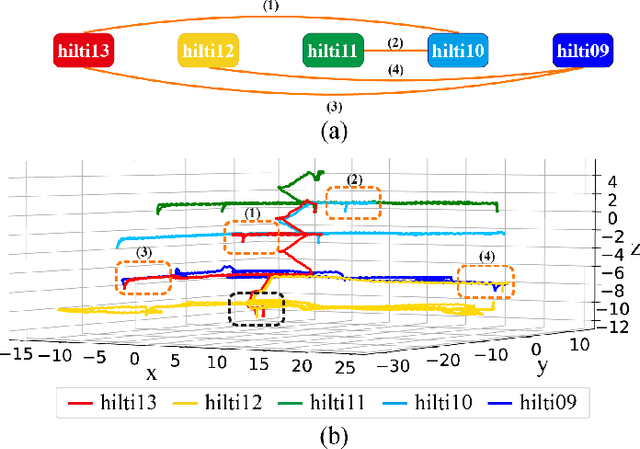

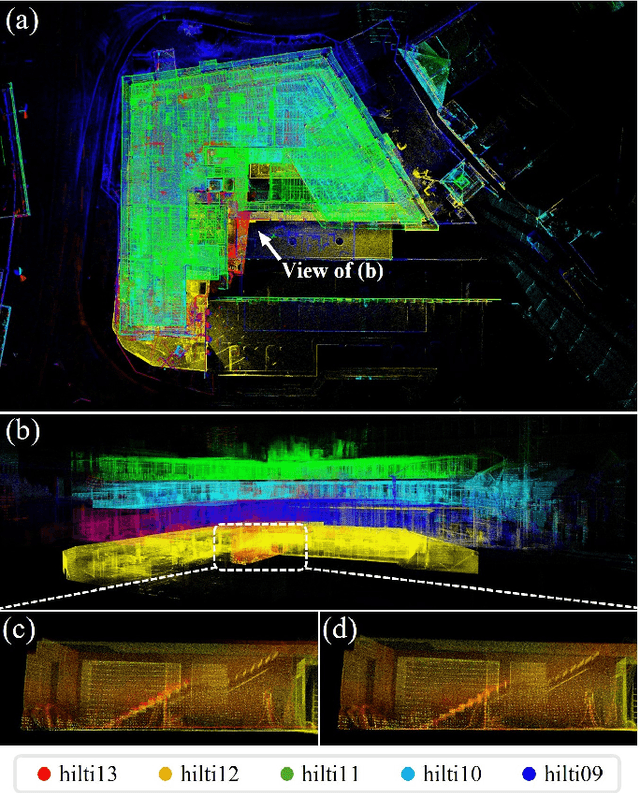

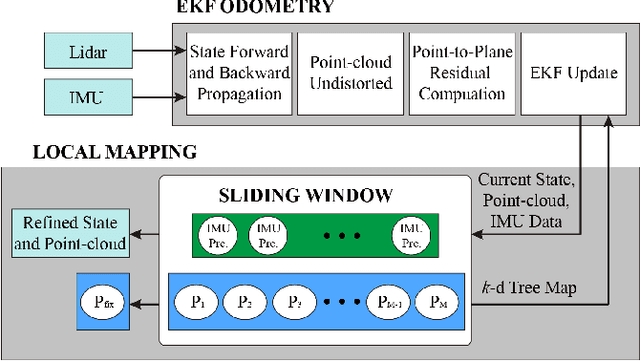

Abstract: In this work, we present Voxel-SLAM: a complete, accurate, and versatile LiDAR-inertial SLAM system that fully utilizes short-term, mid-term, long-term, and multi-map data associations to achieve real-time estimation and high-precision mapping. The system consists of five modules: initialization, odometry, local mapping, loop closure, and global mapping, all employing the same map representation, an adaptive voxel map. The initialization provides an accurate initial state estimation and a consistent local map for subsequent modules, enabling the system to start with a highly dynamic initial state. The odometry, exploiting the short-term data association, rapidly estimates current states and detects potential system divergence. The local mapping, exploiting the mid-term data association, employs a local LiDAR-inertial bundle adjustment (BA) to refine the states (and the local map) within a sliding window of recent LiDAR scans. The loop closure detects previously visited places in the current and all previous sessions. The global mapping refines the global map with an efficient hierarchical global BA. The loop closure and global mapping both exploit long-term and multi-map data associations. We conducted a comprehensive benchmark comparison with other state-of-the-art methods across 30 sequences from three representative scenes, including narrow indoor environments using hand-held equipment, large-scale wilderness environments with aerial robots, and urban environments on vehicle platforms. Other experiments demonstrate the robustness and efficiency of the initialization, the capacity to work in multiple sessions, and relocalization in degenerated environments.

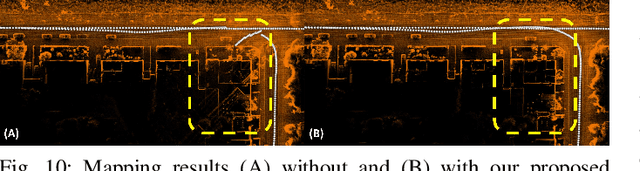

LVBA: LiDAR-Visual Bundle Adjustment for RGB Point Cloud Mapping

Sep 17, 2024

Abstract: Point cloud maps with accurate color are crucial in robotics and mapping applications. Existing approaches for producing RGB-colorized maps are primarily based on real-time localization using filter-based estimation or sliding window optimization, which may lack accuracy and global consistency. In this work, we introduce a novel global LiDAR-Visual bundle adjustment (BA) named LVBA to improve the quality of RGB point cloud mapping beyond existing baselines. LVBA first optimizes LiDAR poses via a global LiDAR BA, followed by a photometric visual BA incorporating planar features from the LiDAR point cloud for camera pose optimization. Additionally, to address the challenge of map point occlusions in constructing optimization problems, we implement a novel LiDAR-assisted global visibility algorithm in LVBA. To evaluate the effectiveness of LVBA, we conducted extensive experiments comparing its mapping quality against existing state-of-the-art baselines (i.e., R$^3$LIVE and FAST-LIVO). Our results show that LVBA can reconstruct high-fidelity, accurate RGB point cloud maps, outperforming its counterparts.

A Near Field Low Time Complexity Beam Training Scheme Based on Spatial Orthogonal Decomposition

Dec 15, 2023

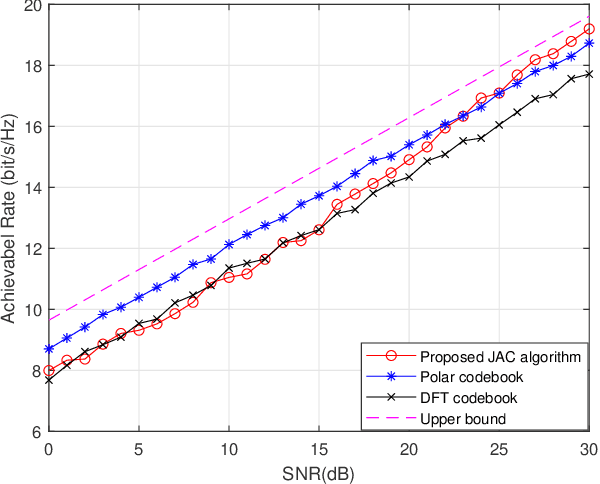

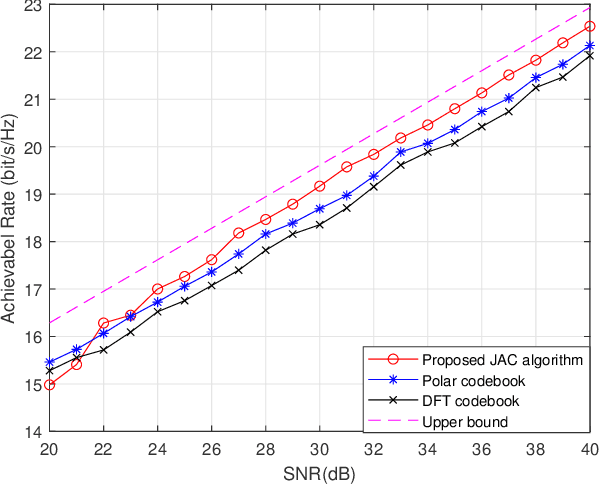

Abstract: With the application of high-frequency communication and extremely large MIMO (XL-MIMO), the near-field effect has become increasingly apparent. The near-field beam design now requires consideration not only of the angle of arrival (AoA) information but also the curvature of arrival (CoA) information. However, due to their mutual coupling, orthogonally decomposing the near-field space becomes challenging. In this paper, we propose a Joint Autocorrelation and Cross-correlation (JAC) scheme to address the coupling information between near-field CoA and AoA. First, we analyze the similarity between the near-field problem and the Doppler problem in digital signal processing, revealing that the autocorrelation function can effectively extract CoA information. Subsequently, utilizing the obtained CoA, we transform the near-field problem into a far-field form, enabling the direct application of beam training schemes designed for the far-field in the near-field scenario. Finally, we analyze the characteristics of the far and near-field signal subspaces from the perspective of matrix theory and discuss how the JAC algorithm handles them. Numerical results demonstrate that the JAC scheme outperforms traditional methods in the high signal-to-noise ratio (SNR) regime. Moreover, the time complexity of the JAC algorithm is $\mathcal{O}(N+1)$, significantly smaller than existing near-field beam training algorithms.
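The decoupling idea in this abstract can be illustrated with a small numerical sketch. This is not the authors' JAC algorithm; it assumes a simplified, noise-free Fresnel-type model in which the phase at array element `n` is `omega * n + kappa * n**2`, where `omega` stands in for the AoA term and `kappa` for the CoA term. The function name `estimate_chirp` and both parameter names are hypothetical. Under that assumption, lag-1 autocorrelation products have a phase that grows linearly in `n` with slope `2 * kappa`, so `kappa` can be read off without knowing `omega`; removing the quadratic phase then leaves a far-field-like tone.

```python
import numpy as np

def estimate_chirp(x):
    """Estimate (omega, kappa) from x[n] = exp(j*(omega*n + kappa*n^2)).

    Illustrative only: assumes a single noise-free path and |2*kappa| < pi.
    """
    # Lag-1 products: phase is omega + kappa*(2n + 1), linear in n.
    r = x[1:] * np.conj(x[:-1])
    # Products of successive lag-1 terms have constant phase 2*kappa.
    kappa = np.angle(np.sum(r[1:] * np.conj(r[:-1]))) / 2.0
    # Remove the quadratic (curvature) phase, leaving a pure tone in omega.
    n = np.arange(len(x))
    y = x * np.exp(-1j * kappa * n**2)
    omega = np.angle(np.sum(y[1:] * np.conj(y[:-1])))
    return omega, kappa

N = 256
n = np.arange(N)
omega_true, kappa_true = 0.7, 0.002  # assumed example values
x = np.exp(1j * (omega_true * n + kappa_true * n**2))
omega_hat, kappa_hat = estimate_chirp(x)
```

With noise, the sums act as averages over the array, but a practical estimator would need further care (multiple paths, phase wrapping) that this sketch does not address.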

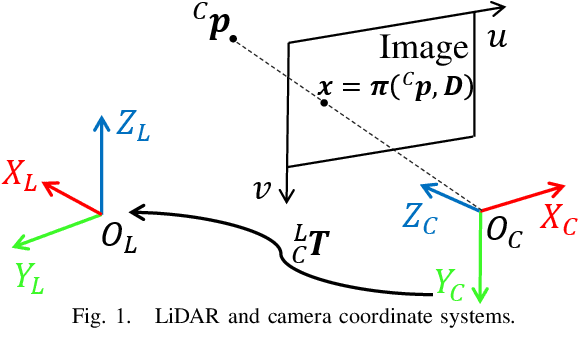

Joint Intrinsic and Extrinsic LiDAR-Camera Calibration in Targetless Environments Using Plane-Constrained Bundle Adjustment

Aug 24, 2023

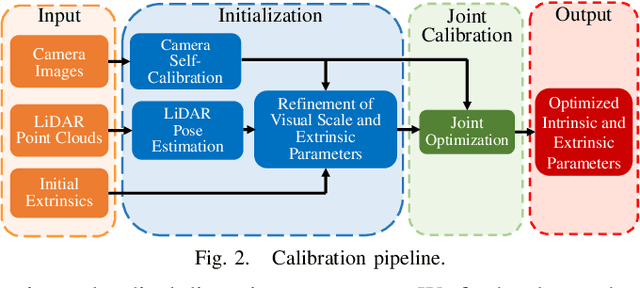

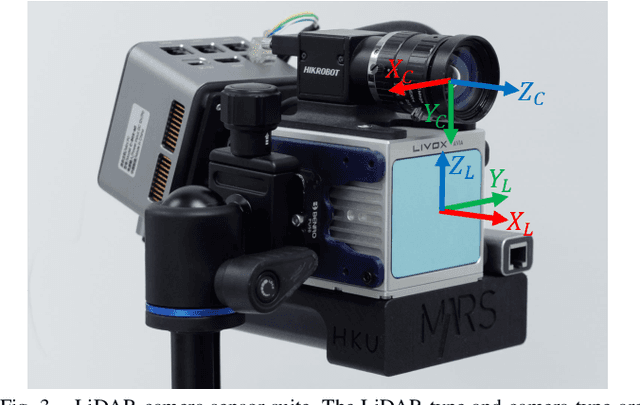

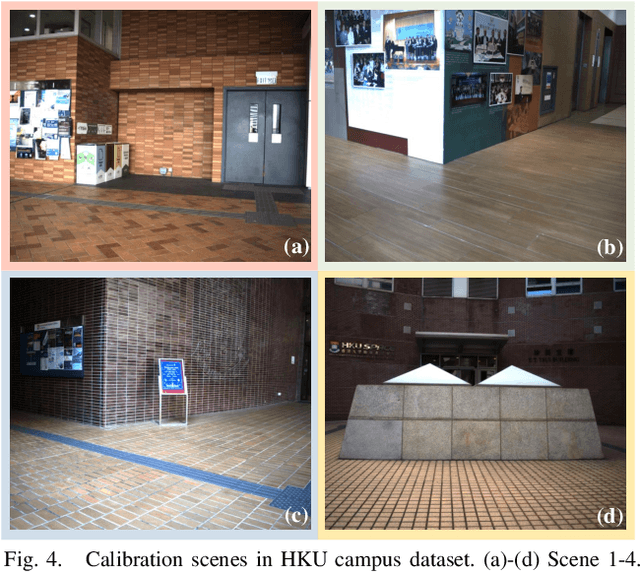

Abstract: This paper introduces a novel targetless method for joint intrinsic and extrinsic calibration of LiDAR-camera systems using plane-constrained bundle adjustment (BA). Our method leverages LiDAR point cloud measurements from planes in the scene, alongside visual points derived from those planes. The core novelty of our method lies in the integration of visual BA with the registration between visual points and LiDAR point cloud planes, which is formulated as a unified optimization problem. This formulation achieves concurrent intrinsic and extrinsic calibration, while also imparting depth constraints to the visual points to enhance the accuracy of intrinsic calibration. Experiments are conducted on both public data sequences and a self-collected dataset. The results show that our approach not only surpasses other state-of-the-art (SOTA) methods but also maintains remarkable calibration accuracy even in challenging environments. For the benefit of the robotics community, we have open-sourced our code.

Hierarchical Codebook Design and Analytical Beamforming Solution for IRS Assisted Communication

Aug 03, 2023

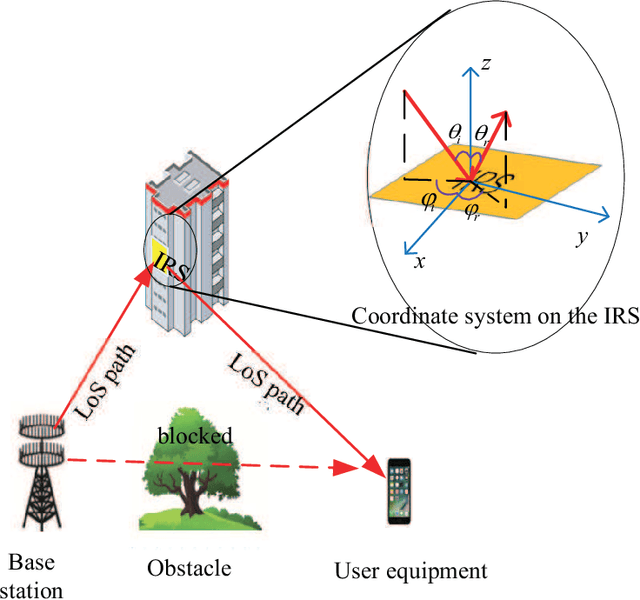

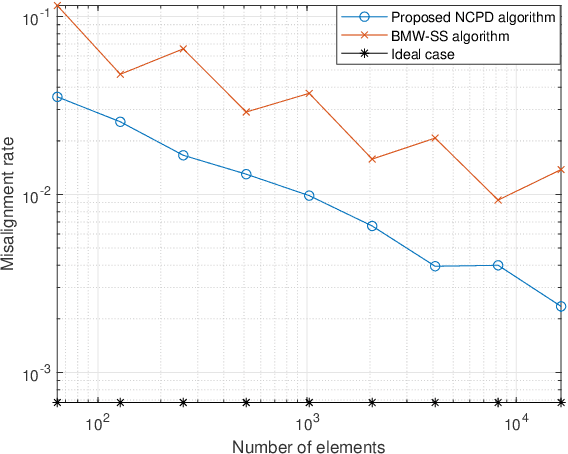

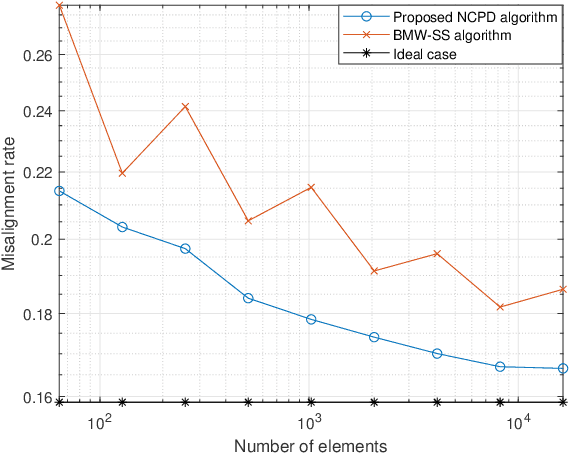

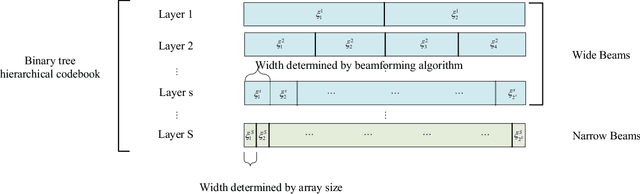

Abstract: In intelligent reflecting surface (IRS) assisted communication, beam search is usually time-consuming because the multiple-input multiple-output (MIMO) array of the IRS is usually very large. The hierarchical codebook is a widely accepted method for reducing search time. The performance of this method strongly depends on the beamforming design for different beamwidths. In this paper, a non-constant phase difference (NCPD) beamforming algorithm is proposed. To implement the NCPD algorithm, we first model the phase shift of the IRS as a continuous function, and then determine the parameters of the continuous function through the analysis of its array factor. Then, we propose a hierarchical codebook and two beam training schemes, namely the joint searching (JS) scheme and the direction-wise searching (DWS) scheme, using the NCPD algorithm, which can flexibly change the width, direction, and shape of the beam formed by the IRS array. Simulation results show that the NCPD algorithm is more accurate, with smaller side lobes, and more stable across IRSs of different sizes than other wide-beam algorithms. The misalignment rate of the beam formed by the NCPD method is significantly reduced. The time complexity of the NCPD algorithm is constant, making it more suitable for solving the beamforming design problem with practically large IRSs.

An Empirical Study of Uniform-Architecture Knowledge Distillation in Document Ranking

Feb 08, 2023

Abstract: Although BERT-based ranking models have been commonly used in commercial search engines, they are usually time-consuming for online ranking tasks. Knowledge distillation, which aims at learning a smaller model with comparable performance to a larger model, is a common strategy for reducing the online inference latency. In this paper, we investigate the effect of different loss functions for uniform-architecture distillation of BERT-based ranking models. Here "uniform-architecture" denotes that both teacher and student models are in cross-encoder architecture, while the student models include small-scale pre-trained language models. Our experimental results reveal that the optimal distillation configuration for ranking tasks is quite different from that for general natural language processing tasks. Specifically, when the student models are in cross-encoder architecture, a pairwise loss on hard labels is critical for training student models, whereas the distillation objectives of intermediate Transformer layers may hurt performance. These findings emphasize the necessity of carefully designing a distillation strategy (for cross-encoder student models) tailored for document ranking with pairwise training samples.
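The abstract does not state which pairwise loss is used, so the following is only a minimal sketch of one common form, a RankNet-style logistic loss on hard labels. It assumes each training sample is a (relevant, non-relevant) document pair scored by the student; the function name `pairwise_hard_label_loss` and the score arrays are hypothetical.

```python
import numpy as np

def pairwise_hard_label_loss(pos_scores, neg_scores):
    """Mean logistic loss log(1 + exp(-(s_pos - s_neg))) over document pairs.

    The hard label is implicit: the first document of each pair is the
    relevant one, so the loss shrinks as s_pos exceeds s_neg.
    """
    margin = np.asarray(pos_scores, dtype=float) - np.asarray(neg_scores, dtype=float)
    # logaddexp(0, -m) computes log(1 + exp(-m)) without overflow.
    return float(np.mean(np.logaddexp(0.0, -margin)))

# Hypothetical student scores for three query-document pairs.
loss_tied = pairwise_hard_label_loss([1.0, 2.0, 0.5], [1.0, 2.0, 0.5])
loss_good = pairwise_hard_label_loss([3.0, 4.0, 2.5], [0.0, 1.0, -0.5])
```

When the student scores both documents equally, each pair contributes `log(2)`; correctly ordered pairs with a large margin contribute close to zero.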

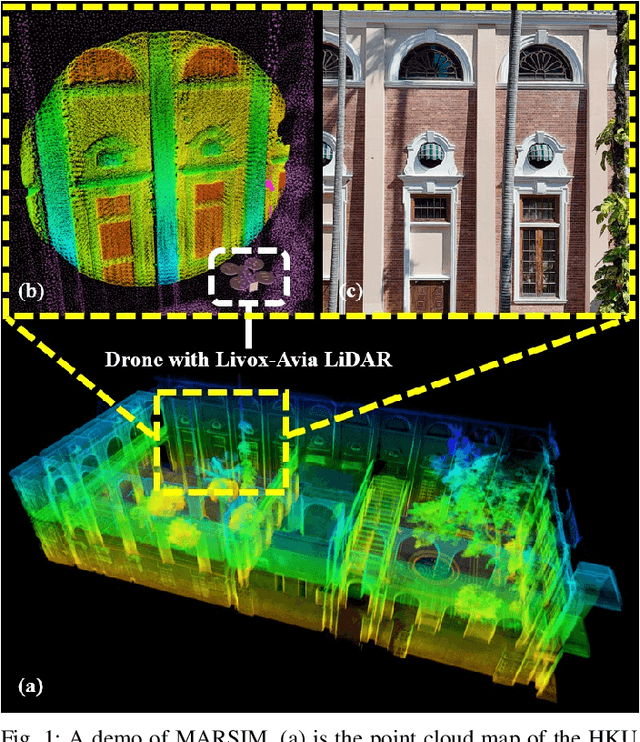

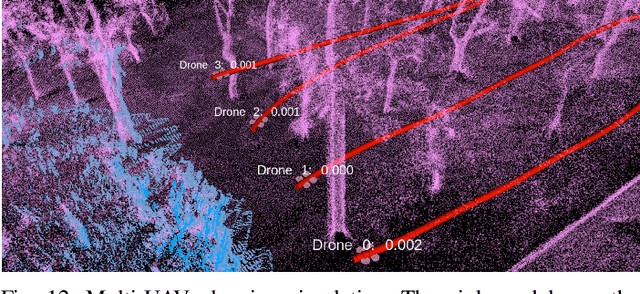

MARSIM: A light-weight point-realistic simulator for LiDAR-based UAVs

Dec 01, 2022

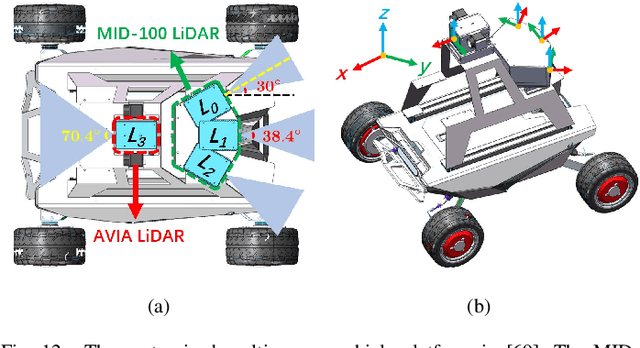

Abstract: The emergence of low-cost, small form factor, and light-weight solid-state LiDAR sensors has brought new opportunities for autonomous unmanned aerial vehicles (UAVs) by advancing navigation safety and computation efficiency. Yet the successful development of LiDAR-based UAVs must rely on extensive simulations. Existing simulators can hardly perform simulations of real-world environments due to the requirement of dense mesh maps that are difficult to obtain. In this paper, we develop a point-realistic simulator of real-world scenes for LiDAR-based UAVs. The key idea is the underlying point rendering method, where we construct a depth image directly from the point cloud map and interpolate it to obtain realistic LiDAR point measurements. Our developed simulator is able to run on a light-weight computing platform and supports the simulation of LiDARs with different resolutions and scanning patterns, dynamic obstacles, and multi-UAV systems. Developed in the ROS framework, the simulator can easily communicate with other key modules of an autonomous robot, such as perception, state estimation, planning, and control. Finally, the simulator provides 10 high-resolution point cloud maps of various real-world environments, including forests of different densities, a historic building, an office, a parking garage, and various complex indoor environments. These realistic maps provide diverse testing scenarios for an autonomous UAV. Evaluation results show that the developed simulator achieves superior performance in terms of time and memory consumption against Gazebo and that the simulated UAV flights closely match the actual ones in real-world environments. We believe such a point-realistic and light-weight simulator is crucial to bridge the gap between UAV simulation and experiments and will significantly facilitate the research of LiDAR-based autonomous UAVs in the future.

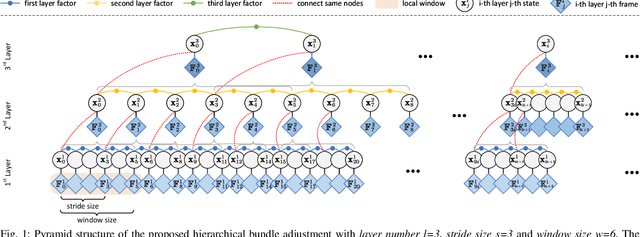

Large-Scale LiDAR Consistent Mapping using Hierarchical LiDAR Bundle Adjustment

Sep 24, 2022

Abstract: Reconstructing an accurate and consistent large-scale LiDAR point cloud map is crucial for robotics applications. The existing solution, pose graph optimization, though time-efficient, does not directly optimize the mapping consistency. LiDAR bundle adjustment (BA) has been recently proposed to resolve this issue; however, it is too time-consuming on large-scale maps. To mitigate this problem, this paper presents a globally consistent and efficient mapping method suitable for large-scale maps. Our proposed work consists of a bottom-up hierarchical BA and a top-down pose graph optimization, which combines the advantages of both methods. With the hierarchical design, we solve multiple BA problems with a much smaller Hessian matrix size than the original BA; with the pose graph optimization, we smoothly and efficiently update the LiDAR poses. The effectiveness and robustness of our proposed approach have been validated on multiple spatially and temporally large-scale public spinning LiDAR datasets, i.e., KITTI, MulRan and Newer College, and self-collected solid-state LiDAR datasets under structured and unstructured scenes. With proper setups, we demonstrate that our work can generate a globally consistent map in around 12% of the sequence time.

Efficient and Consistent Bundle Adjustment on Lidar Point Clouds

Sep 19, 2022

Abstract: Bundle Adjustment (BA) refers to the problem of simultaneous determination of sensor poses and scene geometry, which is a fundamental problem in robot vision. This paper presents an efficient and consistent bundle adjustment method for lidar sensors. The method employs edge and plane features to represent the scene geometry, and directly minimizes the natural Euclidean distance from each raw point to the respective geometry feature. A nice property of this formulation is that the geometry features can be analytically solved, drastically reducing the dimension of the numerical optimization. To represent and solve the resultant optimization problem more efficiently, this paper then proposes a novel concept, *point clusters*, which encodes all raw points associated with the same feature by a compact set of parameters, the *point cluster coordinates*. We derive the closed-form derivatives, up to the second order, of the BA optimization based on the point cluster coordinates and show their theoretical properties such as the null spaces and sparsity. Based on these theoretical results, this paper develops an efficient second-order BA solver. Besides estimating the lidar poses, the solver also exploits the second-order information to estimate the pose uncertainty caused by measurement noise, leading to consistent estimates of lidar poses. Moreover, thanks to the use of point clusters, the developed solver fundamentally avoids the enumeration of each raw point (which is very time-consuming due to the large number) in all steps of the optimization: cost evaluation, derivatives evaluation, and uncertainty evaluation. The implementation of our method is open-sourced to benefit the robotics community and beyond.
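A rough sketch of why a compact summary can replace raw points in plane fitting: the sum of homogeneous outer products of the points is a 4x4 matrix from which the point count, centroid, and covariance can all be recovered, and the plane that minimizes the summed squared point-to-plane distance follows from the covariance eigen-decomposition. The abstract does not give the exact parameterization, so the 4x4 form and the helper names `point_cluster` and `plane_from_cluster` below are assumptions made for illustration, not the paper's implementation.

```python
import numpy as np

def point_cluster(points):
    """Summarize an (N, 3) point set as the 4x4 sum of [p; 1][p; 1]^T."""
    h = np.hstack([points, np.ones((len(points), 1))])
    return h.T @ h

def plane_from_cluster(C):
    """Recover the best-fit plane and its cost from the summary alone."""
    n_pts = C[3, 3]                      # number of points
    mean = C[:3, 3] / n_pts              # centroid
    cov = C[:3, :3] / n_pts - np.outer(mean, mean)
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigenvalues in ascending order
    normal = eigvecs[:, 0]               # direction of least variance
    cost = n_pts * eigvals[0]            # sum of squared point-to-plane distances
    return normal, mean, cost

rng = np.random.default_rng(0)
pts = np.column_stack([
    rng.uniform(-1, 1, 500),
    rng.uniform(-1, 1, 500),
    0.5 + 0.01 * rng.standard_normal(500),   # noisy plane z = 0.5
])
# Summaries of two sub-scans merge by simple addition.
C = point_cluster(pts[:200]) + point_cluster(pts[200:])
normal, center, cost = plane_from_cluster(C)
# Brute-force cost over raw points, for comparison only.
brute_cost = np.sum(((pts - center) @ normal) ** 2)
```

The cost computed from the 4x4 summary matches the brute-force sum over all raw points, which is the property that lets an optimizer skip per-point enumeration.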
