Yi He

Cuiying Honors College, Lanzhou University, Lanzhou, Gansu, China; School of Mathematics and Statistics, Lanzhou University, Lanzhou, Gansu, China

Cascade-Free Mandarin Visual Speech Recognition via Semantic-Guided Cross-Representation Alignment

Mar 23, 2026

Abstract:Mandarin Chinese visual speech recognition (VSR) has advanced in recent years, yet its performance still lags behind that achieved on non-tonal languages such as English. One primary challenge arises from the tonal nature of Mandarin, which limits the effectiveness of conventional sequence-to-sequence modeling approaches. To alleviate this issue, existing Chinese VSR systems commonly incorporate intermediate representations, most notably pinyin, within cascade architectures to enhance recognition accuracy. While beneficial, these cascaded designs make each stage depend on the output of the preceding stage during inference, leading to error accumulation and increased inference latency. To address these limitations, we propose a cascade-free architecture based on multitask learning that jointly integrates multiple intermediate representations, including phoneme and viseme, to better exploit contextual information. The proposed semantic-guided local contrastive loss temporally aligns the features, enabling on-demand activation during inference, thereby providing a trade-off between inference efficiency and performance while mitigating error accumulation caused by projection and re-embedding. Experiments conducted on publicly available datasets demonstrate that our method achieves superior recognition performance.

CogBlender: Towards Continuous Cognitive Intervention in Text-to-Image Generation

Mar 10, 2026

Abstract:Beyond conveying semantic information, an image can also manifest cognitive attributes that elicit specific cognitive processes from the viewer, such as memory encoding or emotional response. While modern text-to-image models excel at generating semantically coherent content, they remain limited in their ability to control such cognitive properties of images (e.g., valence, memorability), often failing to align with the specific psychological intent. To bridge this gap, we introduce CogBlender, a framework that enables continuous and multi-dimensional intervention on cognitive properties during text-to-image generation. Our approach is built upon a mapping between the Cognitive Space, representing the space of cognitive properties, and the Semantic Manifold, representing the manifold of the visual semantics. We define a set of Cognitive Anchors, serving as the boundary points for the cognitive space. Then we reformulate the velocity field within the flow-matching process by interpolating among the velocity fields of different anchors. Consequently, the generative process is driven by the velocity field and dynamically steered by multi-dimensional cognitive scores, enabling precise, fine-grained, and continuous intervention. We validate the effectiveness of CogBlender across four representative cognitive dimensions: valence, arousal, dominance, and image memorability. Extensive experiments demonstrate that our method achieves effective cognitive intervention. Our work provides an effective paradigm for cognition-driven creative design.
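
As a rough illustration of blending anchor velocity fields by cognitive scores, the following is a minimal sketch and not the authors' implementation. The abstract does not state the interpolation scheme, so this assumes each cognitive score lies in [0, 1], that the anchors sit at the corners of the unit hypercube, and that the blend is multilinear. The name `blend_velocity` and the dictionary layout are hypothetical.

```python
import itertools

import numpy as np


def blend_velocity(anchor_velocities, scores):
    """Multilinear blend of per-anchor velocities (illustrative assumption).

    anchor_velocities: dict mapping a corner tuple in {0, 1}^d to the
        velocity array predicted under that anchor's conditioning.
    scores: d cognitive scores, each assumed to lie in [0, 1].
    """
    scores = np.asarray(scores, dtype=float)
    d = scores.shape[0]
    blended = None
    for corner in itertools.product((0, 1), repeat=d):
        # Weight of a corner: product over dimensions of s_k (corner bit 1)
        # or 1 - s_k (corner bit 0). The weights over all corners sum to 1.
        weight = np.prod(np.where(np.array(corner) == 1, scores, 1.0 - scores))
        term = weight * anchor_velocities[corner]
        blended = term if blended is None else blended + term
    return blended


# Two cognitive dimensions, four anchors, toy 3-element "velocities".
anchors = {
    corner: np.full(3, float(i))
    for i, corner in enumerate(itertools.product((0, 1), repeat=2))
}
midpoint_velocity = blend_velocity(anchors, [0.5, 0.5])
```

Under these assumptions, a score vector at a corner reproduces that anchor's velocity exactly, and intermediate scores move continuously between anchors.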

PSQE: A Theoretical-Practical Approach to Pseudo Seed Quality Enhancement for Unsupervised MMEA

Feb 26, 2026

Abstract:Multimodal Entity Alignment (MMEA) aims to identify equivalent entities across different data modalities, enabling structural data integration that in turn improves the performance of various large language model applications. To lift the requirement of labeled seed pairs that are difficult to obtain, recent methods shifted to an unsupervised paradigm using pseudo-alignment seeds. However, unsupervised entity alignment in multimodal settings remains underexplored, mainly because the incorporation of multimodal information often results in imbalanced coverage of pseudo-seeds within the knowledge graph. To overcome this, we propose PSQE (Pseudo-Seed Quality Enhancement) to improve the precision and graph coverage balance of pseudo seeds via multimodal information and clustering-resampling. Theoretical analysis reveals the impact of pseudo seeds on existing contrastive learning-based MMEA models. In particular, pseudo seeds can influence the attraction and the repulsion terms in contrastive learning at once, whereas imbalanced graph coverage causes models to prioritize high-density regions, thereby weakening their learning capability for entities in sparse regions. Experimental results validate our theoretical findings and show that PSQE as a plug-and-play module can improve the performance of baselines by considerable margins.

MEDNA-DFM: A Dual-View FiLM-MoE Model for Explainable DNA Methylation Prediction

Feb 26, 2026

Abstract:Accurate computational identification of DNA methylation is essential for understanding epigenetic regulation. Although deep learning excels in this binary classification task, its "black-box" nature impedes biological insight. We address this by introducing a high-performance model, MEDNA-DFM, alongside mechanism-inspired signal purification algorithms. Our investigation demonstrates that MEDNA-DFM effectively captures conserved methylation patterns, achieving robust distinction across diverse species. Validation on external independent datasets confirms that the model's generalization is driven by conserved intrinsic motifs (e.g., GC content) rather than phylogenetic proximity. Furthermore, our algorithms extracted motifs with significantly higher reliability than prior studies. Finally, empirical evidence from a Drosophila 6mA case study prompted us to propose a "sequence-structure synergy" hypothesis, suggesting that the GAGG core motif and an upstream A-tract element function cooperatively. We further validated this hypothesis via in silico mutagenesis, confirming that the ablation of either or both elements significantly degrades the model's recognition capabilities. This work provides a powerful tool for methylation prediction and demonstrates how explainable deep learning can drive both methodological innovation and the generation of biological hypotheses.

On Stability and Robustness of Diffusion Posterior Sampling for Bayesian Inverse Problems

Feb 02, 2026

Abstract:Diffusion models have recently emerged as powerful learned priors for Bayesian inverse problems (BIPs). Diffusion-based solvers rely on a presumed likelihood for the observations in BIPs to guide the generation process. However, the link between likelihood and recovery quality for BIPs is unclear in previous works. We bridge this gap by characterizing the posterior approximation error and proving the stability of the diffusion-based solvers. Meanwhile, an immediate result of our findings on stability demonstrates the lack of robustness in diffusion-based solvers, which remains unexplored. This can degrade performance when the presumed likelihood mismatches the unknown true data generation processes. To address this issue, we propose a simple yet effective solution, robust diffusion posterior sampling, which is provably robust and compatible with existing gradient-based posterior samplers. Empirical results on scientific inverse problems and natural image tasks validate the effectiveness and robustness of our method, showing consistent performance improvements under challenging likelihood misspecifications.

Relink: Constructing Query-Driven Evidence Graph On-the-Fly for GraphRAG

Jan 12, 2026

Abstract:Graph-based Retrieval-Augmented Generation (GraphRAG) mitigates hallucinations in Large Language Models (LLMs) by grounding them in structured knowledge. However, current GraphRAG methods are constrained by a prevailing build-then-reason paradigm, which relies on a static, pre-constructed Knowledge Graph (KG). This paradigm faces two critical challenges. First, the KG's inherent incompleteness often breaks reasoning paths. Second, the graph's low signal-to-noise ratio introduces distractor facts, presenting query-relevant but misleading knowledge that disrupts the reasoning process. To address these challenges, we argue for a reason-and-construct paradigm and propose Relink, a framework that dynamically builds a query-specific evidence graph. To tackle incompleteness, Relink instantiates required facts from a latent relation pool derived from the original text corpus, repairing broken paths on the fly. To handle misleading or distractor facts, Relink employs a unified, query-aware evaluation strategy that jointly considers candidates from both the KG and latent relations, selecting those most useful for answering the query rather than relying on their pre-existence. This empowers Relink to actively discard distractor facts and construct the most faithful and precise evidence path for each query. Extensive experiments on five Open-Domain Question Answering benchmarks show that Relink achieves significant average improvements of 5.4% in EM and 5.2% in F1 over leading GraphRAG baselines, demonstrating the superiority of our proposed framework.

Improved Bounds for Private and Robust Alignment

Dec 29, 2025

Abstract:In this paper, we study the private and robust alignment of language models from a theoretical perspective by establishing upper bounds on the suboptimality gap in both offline and online settings. We consider preference labels subject to privacy constraints and/or adversarial corruption, and analyze two distinct interplays between them: privacy-first and corruption-first. For the privacy-only setting, we show that log loss with an MLE-style algorithm achieves near-optimal rates, in contrast to conventional wisdom. For the joint privacy-and-corruption setting, we first demonstrate that existing offline algorithms in fact provide stronger guarantees -- simultaneously in terms of corruption level and privacy parameters -- than previously known, which further yields improved bounds in the corruption-only regime. In addition, we also present the first set of results for private and robust online alignment. Our results are enabled by new uniform convergence guarantees for log loss and square loss under privacy and corruption, which we believe have broad applicability across learning theory and statistics.

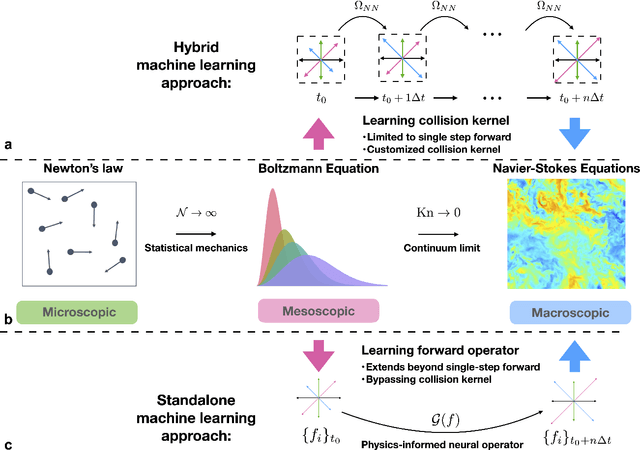

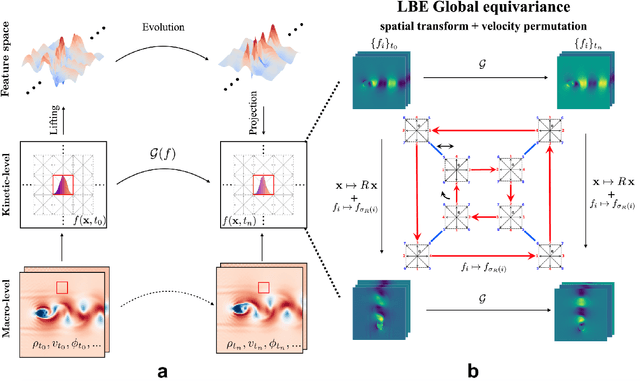

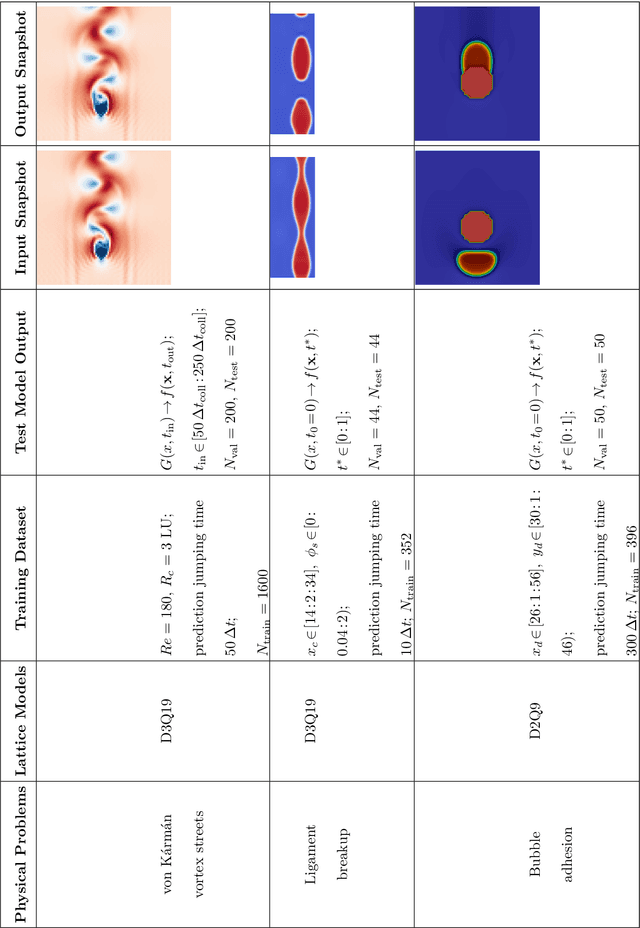

Fast-Forward Lattice Boltzmann: Learning Kinetic Behaviour with Physics-Informed Neural Operators

Sep 26, 2025

Abstract:The lattice Boltzmann equation (LBE), rooted in kinetic theory, provides a powerful framework for capturing complex flow behaviour by describing the evolution of single-particle distribution functions (PDFs). Despite its success, solving the LBE numerically remains computationally intensive due to strict time-step restrictions imposed by collision kernels. Here, we introduce a physics-informed neural operator framework for the LBE that enables prediction over large time horizons without step-by-step integration, effectively bypassing the need to explicitly solve the collision kernel. We incorporate intrinsic moment-matching constraints of the LBE, along with global equivariance of the full distribution field, enabling the model to capture the complex dynamics of the underlying kinetic system. Our framework is discretization-invariant, enabling models trained on coarse lattices to generalise to finer ones (kinetic super-resolution). In addition, it is agnostic to the specific form of the underlying collision model, which makes it naturally applicable across different kinetic datasets regardless of the governing dynamics. Our results demonstrate robustness across complex flow scenarios, including von Karman vortex shedding, ligament breakup, and bubble adhesion. This establishes a new data-driven pathway for modelling kinetic systems.

Landmark Guided Visual Feature Extractor for Visual Speech Recognition with Limited Resource

Aug 10, 2025

Abstract:Visual speech recognition is a technique to identify spoken content in silent speech videos, which has attracted significant attention in recent years. Advancements in data-driven deep learning methods have significantly improved both the speed and accuracy of recognition. However, these deep learning methods can be affected by visual disturbances, such as lighting conditions, skin texture and other user-specific features. Data-driven approaches could reduce the performance degradation caused by these visual disturbances using models pretrained on large-scale datasets. But these methods often require large amounts of training data and computational resources, making them costly. To reduce the influence of user-specific features and enhance performance with limited data, this paper proposes a landmark guided visual feature extractor. Facial landmarks are used as auxiliary information to aid in training the visual feature extractor. A spatio-temporal multi-graph convolutional network is designed to fully exploit the spatial locations and spatio-temporal features of facial landmarks. Additionally, a multi-level lip dynamic fusion framework is introduced to combine the spatio-temporal features of the landmarks with the visual features extracted from the raw video frames. Experimental results show that this approach performs well with limited data and also improves the model's accuracy on unseen speakers.

Generative molecule evolution using 3D pharmacophore for efficient Structure-Based Drug Design

Jul 27, 2025

Abstract:Recent advances in generative models, particularly diffusion and auto-regressive models, have revolutionized fields like computer vision and natural language processing. However, their application to structure-based drug design (SBDD) remains limited due to critical data constraints. To address the limitation of training data for models targeting SBDD tasks, we propose an evolutionary framework named MEVO, which bridges the gap between billion-scale small-molecule datasets and the scarce protein-ligand complex datasets, and effectively increases the abundance of training data for generative SBDD models. MEVO is composed of three key components: a high-fidelity VQ-VAE for molecule representation in latent space, a diffusion model for pharmacophore-guided molecule generation, and a pocket-aware evolutionary strategy for molecule optimization with a physics-based scoring function. This framework efficiently generates high-affinity binders for various protein targets, validated with predicted binding affinities using free energy perturbation (FEP) methods. In addition, we showcase the capability of MEVO in designing potent inhibitors of KRAS G12D, a challenging target in cancer therapeutics, with similar affinity to the known highly active inhibitor evaluated by FEP calculations. With high versatility and generalizability, MEVO offers an effective and data-efficient model for various tasks in structure-based ligand design.
