Yi Cai

An Unsupervised Normalizing Flow-Based Neyman-Pearson Detector for Covert Communications in the Presence of Disco Reconfigurable Intelligent Surfaces

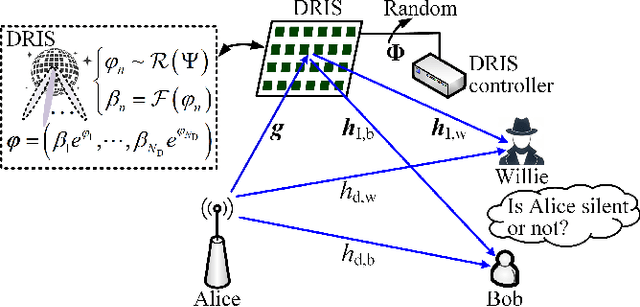

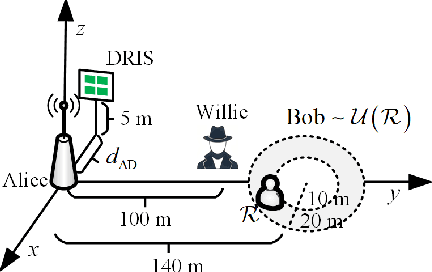

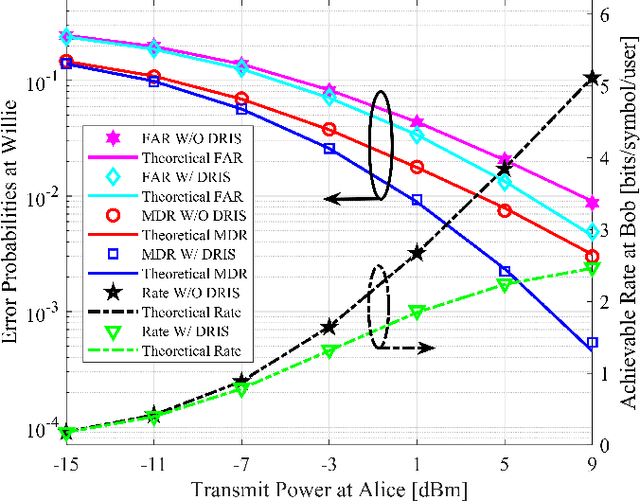

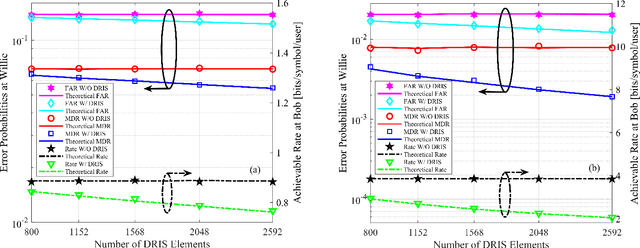

Feb 10, 2026

Abstract: Covert communications, also known as low probability of detection (LPD) communications, offer a higher level of privacy protection compared to cryptography and physical-layer security (PLS) by hiding the transmission within ambient environments. Here, we investigate covert communications in the presence of a disco reconfigurable intelligent surface (DRIS) deployed by the warden Willie, which simultaneously reduces his detection error probabilities and degrades the communication performance between Alice and Bob, without relying on either channel state information (CSI) or additional jamming power. However, the introduction of the DRIS renders it intractable for Willie to construct a Neyman-Pearson (NP) detector, since the probability density function (PDF) of the test statistic is analytically intractable under the Alice-Bob transmission hypothesis. Moreover, given the adversarial relationship between Willie and Alice/Bob, it is unrealistic to assume that Willie has access to a labeled training dataset. To address these challenges, we propose an unsupervised masked autoregressive flow (MAF)-based NP detection framework that exploits prior knowledge inherent in covert communications. We further define the false alarm rate (FAR) and the missed detection rate (MDR) as monitoring performance metrics for Willie, and the signal-to-jamming-plus-noise ratio (SJNR) as a communication performance metric for Alice-Bob transmissions. Furthermore, we derive theoretical expressions for SJNR and uncover unique properties of covert communications in the presence of a DRIS. Simulations validate the theory and show that the proposed unsupervised MAF-based NP detector achieves performance comparable to its supervised counterpart.
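To make the FAR and MDR metrics concrete, here is a minimal sketch of how a threshold detector can be calibrated to a target false alarm rate from samples of a test statistic. It is not the authors' MAF-based detector: the scalar scores, the Gaussian toy data, and the helper names `np_threshold` and `error_rates` are all assumptions made for illustration.

```python
import random


def np_threshold(null_scores, target_far):
    """Return a threshold whose empirical false alarm rate is at most target_far.

    null_scores are test-statistic values observed when nothing is transmitted
    (hypothetical stand-ins for the statistic discussed in the abstract).
    """
    ordered = sorted(null_scores)
    n = len(ordered)
    # Number of null samples that are allowed to exceed the threshold.
    allowed = int(target_far * n)
    if allowed >= n:
        return float("-inf")
    # Scores strictly above this order statistic raise an alarm.
    return ordered[n - allowed - 1]


def error_rates(null_scores, alt_scores, threshold):
    """Empirical false alarm rate (FAR) and missed detection rate (MDR)."""
    far = sum(s > threshold for s in null_scores) / len(null_scores)
    mdr = sum(s <= threshold for s in alt_scores) / len(alt_scores)
    return far, mdr


if __name__ == "__main__":
    rng = random.Random(0)
    # Toy assumption: scores are Gaussian, shifted upward when a transmission occurs.
    null = [rng.gauss(0.0, 1.0) for _ in range(2000)]
    alt = [rng.gauss(2.0, 1.0) for _ in range(2000)]
    thr = np_threshold(null, target_far=0.05)
    far, mdr = error_rates(null, alt, thr)
    print(f"threshold={thr:.3f} FAR={far:.3f} MDR={mdr:.3f}")
```

Fixing the FAR first and then reading off the MDR mirrors the Neyman-Pearson formulation, in which the missed detection rate is minimized subject to a false alarm constraint. In the setting of the abstract, the scores would come from a learned density model rather than from a known distribution.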

Comparison of Single Carrier FTN-QAM and PCS-QAM for Amplifier-less Coherent Communication Systems

Jan 25, 2026

Abstract: A performance comparison of FTN-QAM and PCS-QAM for amplifier-less short-reach coherent communication systems is provided. With phase-tracking partial-response DFE and a turbo equalization strategy applied, FTN-16QAM exhibits a power margin advantage of about 0.9 dB over PCS-64QAM.

Rethinking Explanation Evaluation under the Retraining Scheme

Nov 11, 2025

Abstract: Feature attribution has gained prominence as a tool for explaining model decisions, yet evaluating explanation quality remains challenging due to the absence of ground-truth explanations. To circumvent this, explanation-guided input manipulation has emerged as an indirect evaluation strategy, measuring explanation effectiveness through the impact of input modifications on model outcomes during inference. Despite their widespread use, a major concern with inference-based schemes is the distribution shift caused by such manipulations, which undermines the reliability of their assessments. The retraining-based scheme ROAR overcomes this issue by adapting the model to the altered data distribution. However, its evaluation results often contradict the theoretical foundations of widely accepted explainers. This work investigates this misalignment between empirical observations and theoretical expectations. In particular, we identify the sign issue as a key factor responsible for residual information that ultimately distorts retraining-based evaluation. Based on the analysis, we show that a straightforward reframing of the evaluation process can effectively resolve the identified issue. Building on the existing framework, we further propose novel variants that jointly structure a comprehensive perspective on explanation evaluation. These variants largely improve evaluation efficiency over the standard retraining protocol, thereby enhancing practical applicability for explainer selection and benchmarking. Following our proposed schemes, empirical results across various data scales provide deeper insights into the performance of carefully selected explainers, revealing open challenges and future directions in explainability research.

CADReview: Automatically Reviewing CAD Programs with Error Detection and Correction

May 28, 2025

Abstract: Computer-aided design (CAD) is crucial in prototyping 3D objects through geometric instructions (i.e., CAD programs). In practical design workflows, designers often engage in time-consuming reviews and refinements of these prototypes by comparing them with reference images. To bridge this gap, we introduce the CAD review task to automatically detect and correct potential errors, ensuring consistency between the constructed 3D objects and reference images. However, recent advanced multimodal large language models (MLLMs) struggle to recognize multiple geometric components and perform spatial geometric operations within the CAD program, leading to inaccurate reviews. In this paper, we propose the CAD program repairer (ReCAD) framework to effectively detect program errors and provide helpful feedback on error correction. Additionally, we create a dataset, CADReview, consisting of over 20K program-image pairs, with diverse errors for the CAD review task. Extensive experiments demonstrate that our ReCAD significantly outperforms existing MLLMs, which shows great potential in design applications.

Simultaneously Exposing and Jamming Covert Communications via Disco Reconfigurable Intelligent Surfaces

May 18, 2025

Abstract: Covert communications provide stronger privacy protection than cryptography and physical-layer security (PLS). However, previous works on covert communications have implicitly assumed the validity of channel reciprocity, i.e., wireless channels remain constant or approximately constant during their coherence time. In this work, we investigate covert communications in the presence of a disco RIS (DRIS) deployed by the warden Willie, where the DRIS with random and time-varying reflective coefficients acts as a "disco ball", introducing time-varying fully-passive jamming (FPJ). Consequently, the channel reciprocity assumption no longer holds. The DRIS not only jams the covert transmissions between Alice and Bob, but also decreases the error probabilities of Willie's detections, without either Bob's channel knowledge or additional jamming power. To quantify the impact of the DRIS on covert communications, we first design a detection rule for the warden Willie in the presence of time-varying FPJ introduced by the DRIS. Then, we define the detection error probabilities, i.e., the false alarm rate (FAR) and the missed detection rate (MDR), as the monitoring performance metrics for Willie's detections, and the signal-to-jamming-plus-noise ratio (SJNR) as a communication performance metric for the covert transmissions between Alice and Bob. Based on the detection rule, we derive the detection threshold for the warden Willie to detect whether communications between Alice and Bob are ongoing, considering the time-varying DRIS-based FPJ. Moreover, we conduct theoretical analyses of the FAR and the MDR at the warden Willie, as well as the SJNR at Bob, and then present unique properties of the DRIS-based FPJ in covert communications. We present numerical results to validate the derived theoretical analyses and evaluate the impact of the DRIS on covert communications.

Seeing Beyond the Scene: Enhancing Vision-Language Models with Interactional Reasoning

May 14, 2025

Abstract: Traditional scene graphs primarily focus on spatial relationships, limiting vision-language models' (VLMs) ability to reason about complex interactions in visual scenes. This paper addresses two key challenges: (1) conventional detection-to-construction methods produce unfocused, contextually irrelevant relationship sets, and (2) existing approaches fail to form persistent memories for generalizing interaction reasoning to new scenes. We propose Interaction-augmented Scene Graph Reasoning (ISGR), a framework that enhances VLMs' interactional reasoning through three complementary components. First, our dual-stream graph constructor combines SAM-powered spatial relation extraction with interaction-aware captioning to generate functionally salient scene graphs with spatial grounding. Second, we employ targeted interaction queries to activate VLMs' latent knowledge of object functionalities, converting passive recognition into active reasoning about how objects work together. Finally, we introduce a long-term memory reinforcement learning strategy with a specialized interaction-focused reward function that transforms transient patterns into long-term reasoning heuristics. Extensive experiments demonstrate that our approach significantly outperforms baseline methods on interaction-heavy reasoning benchmarks, with particularly strong improvements on complex scene understanding tasks. The source code can be accessed at https://github.com/open_upon_acceptance.

Collaborative Multi-LoRA Experts with Achievement-based Multi-Tasks Loss for Unified Multimodal Information Extraction

May 08, 2025

Abstract: Multimodal Information Extraction (MIE) has gained attention for extracting structured information from multimedia sources. Traditional methods tackle MIE tasks separately, missing opportunities to share knowledge across tasks. Recent approaches unify these tasks into a generation problem using instruction-based T5 models with visual adaptors, optimized through full-parameter fine-tuning. However, this method is computationally intensive, and multi-task fine-tuning often faces gradient conflicts, limiting performance. To address these challenges, we propose collaborative multi-LoRA experts with achievement-based multi-task loss (C-LoRAE) for MIE tasks. C-LoRAE extends the low-rank adaptation (LoRA) method by incorporating a universal expert to learn shared multimodal knowledge from cross-MIE tasks and task-specific experts to learn specialized instructional task features. This configuration enhances the model's generalization ability across multiple tasks while maintaining the independence of various instruction tasks and mitigating gradient conflicts. Additionally, we propose an achievement-based multi-task loss to balance training progress across tasks, addressing the imbalance caused by varying numbers of training samples in MIE tasks. Experimental results on seven benchmark datasets across three key MIE tasks demonstrate that C-LoRAE achieves superior overall performance compared to traditional fine-tuning methods and LoRA methods while utilizing a comparable number of training parameters to LoRA.

A Reputation System for Large Language Model-based Multi-agent Systems to Avoid the Tragedy of the Commons

May 08, 2025

Abstract: The tragedy of the commons, where individual self-interest leads to collectively disastrous outcomes, is a pervasive challenge in human society. Recent studies have demonstrated that similar phenomena can arise in generative multi-agent systems (MASs). To address this challenge, this paper explores the use of reputation systems as a remedy. We propose RepuNet, a dynamic, dual-level reputation framework that models both agent-level reputation dynamics and system-level network evolution. Specifically, driven by direct interactions and indirect gossip, agents form reputations for both themselves and their peers, and decide whether to connect to or disconnect from other agents for future interactions. Through two distinct scenarios, we show that RepuNet effectively mitigates the 'tragedy of the commons', promoting and sustaining cooperation in generative MASs. Moreover, we find that reputation systems can give rise to rich emergent behaviors in generative MASs, such as the formation of cooperative clusters, the social isolation of exploitative agents, and the preference for sharing positive gossip rather than negative gossip.

ConsisLoRA: Enhancing Content and Style Consistency for LoRA-based Style Transfer

Mar 13, 2025

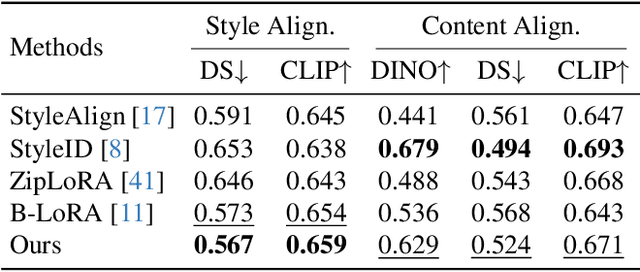

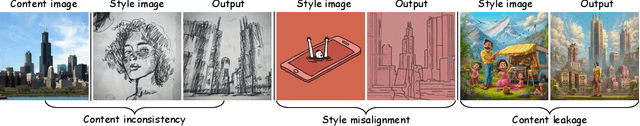

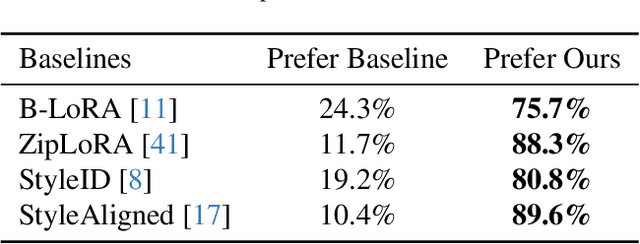

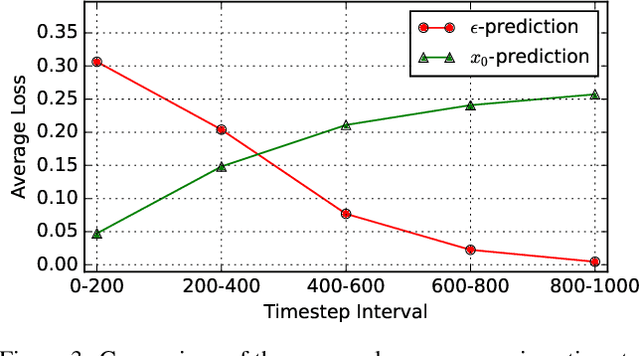

Abstract: Style transfer involves transferring the style from a reference image to the content of a target image. Recent advancements in LoRA-based (Low-Rank Adaptation) methods have shown promise in effectively capturing the style of a single image. However, these approaches still face significant challenges such as content inconsistency, style misalignment, and content leakage. In this paper, we comprehensively analyze the limitations of the standard diffusion parameterization, which learns to predict noise, in the context of style transfer. To address these issues, we introduce ConsisLoRA, a LoRA-based method that enhances both content and style consistency by optimizing the LoRA weights to predict the original image rather than noise. We also propose a two-step training strategy that decouples the learning of content and style from the reference image. To effectively capture both the global structure and local details of the content image, we introduce a stepwise loss transition strategy. Additionally, we present an inference guidance method that enables continuous control over content and style strengths during inference. Through both qualitative and quantitative evaluations, our method demonstrates significant improvements in content and style consistency while effectively reducing content leakage.

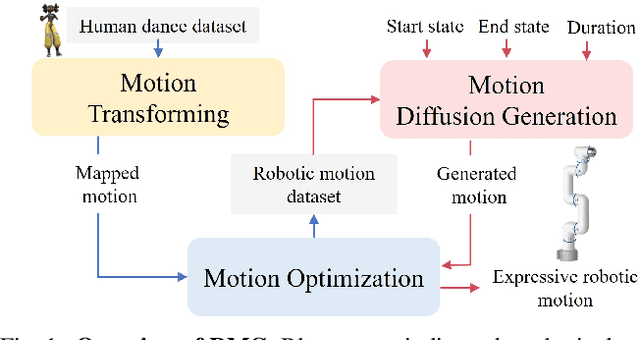

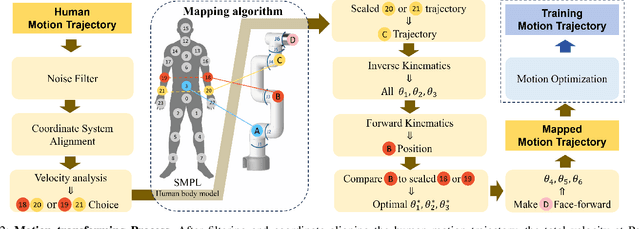

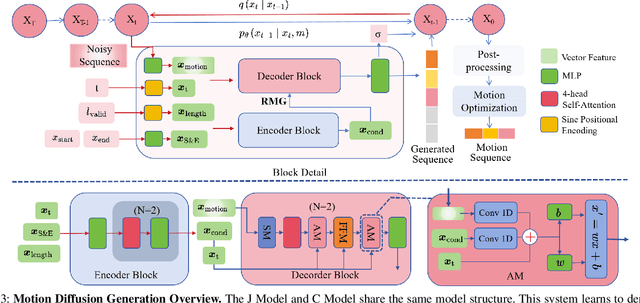

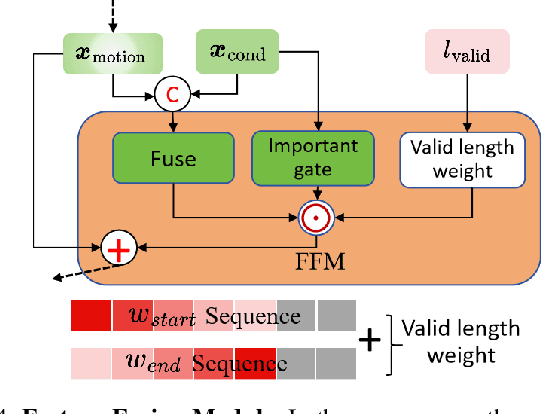

RMG: Real-Time Expressive Motion Generation with Self-collision Avoidance for 6-DOF Companion Robotic Arms

Mar 13, 2025

Abstract: The six-degree-of-freedom (6-DOF) robotic arm has gained widespread application in human-coexisting environments. While previous research has predominantly focused on functional motion generation, the critical aspect of expressive motion in human-robot interaction remains largely unexplored. This paper presents a novel real-time motion generation planner that enhances interactivity by creating expressive robotic motions between arbitrary start and end states within predefined time constraints. Our approach involves three key contributions: first, we develop a mapping algorithm to construct an expressive motion dataset derived from human dance movements; second, we train motion generation models in both Cartesian and joint spaces using this dataset; third, we introduce an optimization algorithm that guarantees smooth, collision-free motion while maintaining the intended expressive style. Experimental results demonstrate the effectiveness of our method, which can generate expressive and generalized motions in under 0.5 seconds while satisfying all specified constraints.
