Priya L. Donti

RL2Grid: Benchmarking Reinforcement Learning in Power Grid Operations

Mar 29, 2025Abstract:Reinforcement learning (RL) can transform power grid operations by providing adaptive and scalable controllers essential for grid decarbonization. However, existing methods struggle with the complex dynamics, aleatoric uncertainty, long-horizon goals, and hard physical constraints that occur in real-world systems. This paper presents RL2Grid, a benchmark designed in collaboration with power system operators to accelerate progress in grid control and foster RL maturity. Built on a power simulation framework developed by RTE France, RL2Grid standardizes tasks, state and action spaces, and reward structures within a unified interface for a systematic evaluation and comparison of RL approaches. Moreover, we integrate real control heuristics and safety constraints informed by the operators' expertise to ensure RL2Grid aligns with grid operation requirements. We benchmark popular RL baselines on the grid control tasks represented within RL2Grid, establishing reference performance metrics. Our results and discussion highlight the challenges that power grids pose for RL methods, emphasizing the need for novel algorithms capable of handling real-world physical systems.

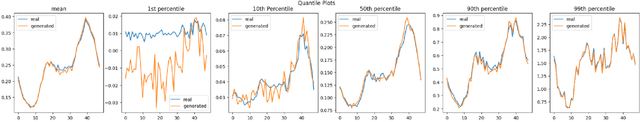

Defining 'Good': Evaluation Framework for Synthetic Smart Meter Data

Jul 16, 2024

Abstract:Access to granular demand data is essential for the net zero transition; it allows for accurate profiling and active demand management as our reliance on variable renewable generation increases. However, public release of this data is often impossible due to privacy concerns. Good quality synthetic data can circumnavigate this issue. Despite significant research on generating synthetic smart meter data, there is still insufficient work on creating a consistent evaluation framework. In this paper, we investigate how common frameworks used by other industries leveraging synthetic data, can be applied to synthetic smart meter data, such as fidelity, utility and privacy. We also recommend specific metrics to ensure that defining aspects of smart meter data are preserved and test the extent to which privacy can be protected using differential privacy. We show that standard privacy attack methods like reconstruction or membership inference attacks are inadequate for assessing privacy risks of smart meter datasets. We propose an improved method by injecting training data with implausible outliers, then launching privacy attacks directly on these outliers. The choice of $\epsilon$ (a metric of privacy loss) significantly impacts privacy risk, highlighting the necessity of performing these explicit privacy tests when making trade-offs between fidelity and privacy.

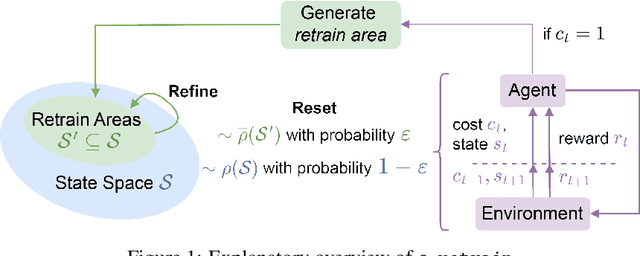

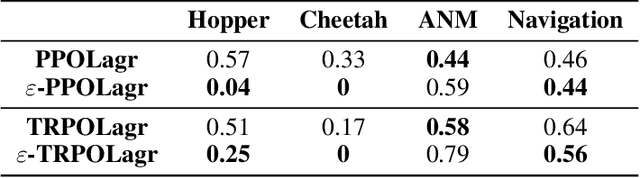

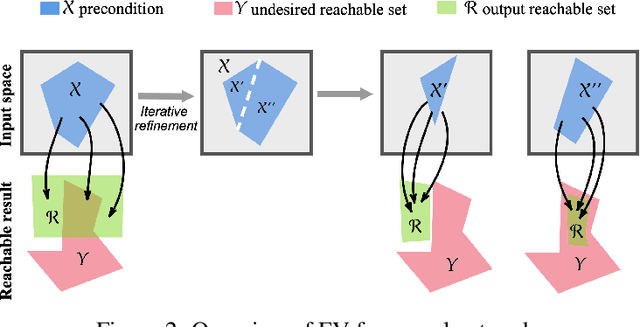

Improving Policy Optimization via $\varepsilon$-Retrain

Jun 12, 2024

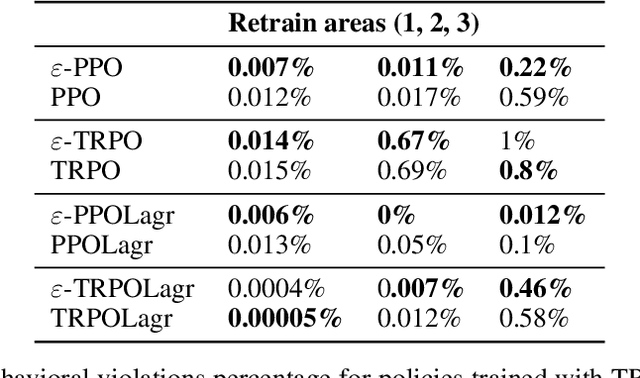

Abstract:We present $\varepsilon$-retrain, an exploration strategy designed to encourage a behavioral preference while optimizing policies with monotonic improvement guarantees. To this end, we introduce an iterative procedure for collecting retrain areas -- parts of the state space where an agent did not follow the behavioral preference. Our method then switches between the typical uniform restart state distribution and the retrain areas using a decaying factor $\varepsilon$, allowing agents to retrain on situations where they violated the preference. Experiments over hundreds of seeds across locomotion, navigation, and power network tasks show that our method yields agents that exhibit significant performance and sample efficiency improvements. Moreover, we employ formal verification of neural networks to provably quantify the degree to which agents adhere to behavioral preferences.

Application-Driven Innovation in Machine Learning

Mar 26, 2024

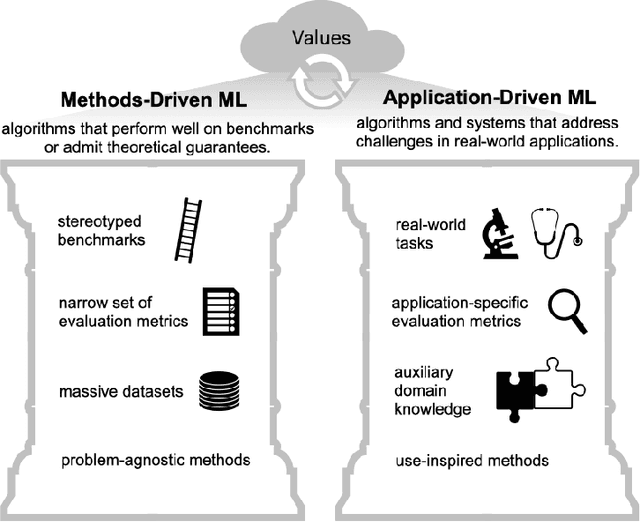

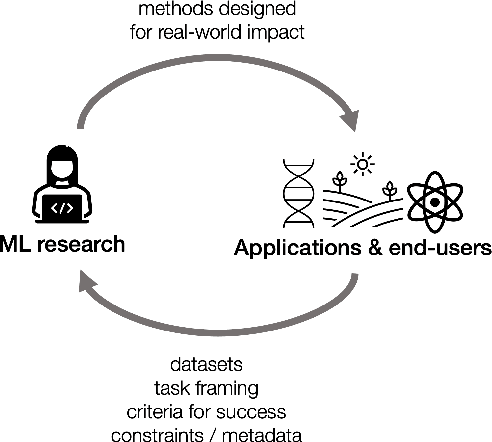

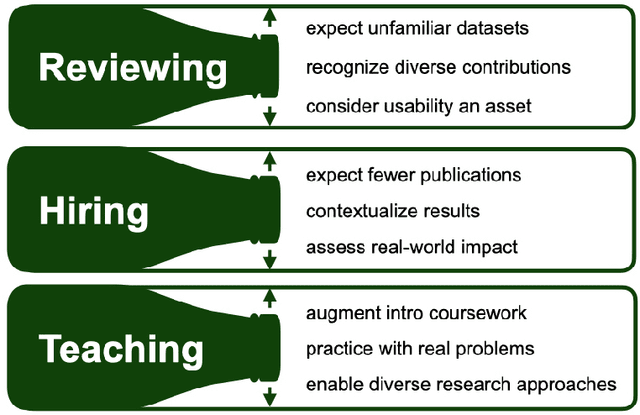

Abstract:As applications of machine learning proliferate, innovative algorithms inspired by specific real-world challenges have become increasingly important. Such work offers the potential for significant impact not merely in domains of application but also in machine learning itself. In this paper, we describe the paradigm of application-driven research in machine learning, contrasting it with the more standard paradigm of methods-driven research. We illustrate the benefits of application-driven machine learning and how this approach can productively synergize with methods-driven work. Despite these benefits, we find that reviewing, hiring, and teaching practices in machine learning often hold back application-driven innovation. We outline how these processes may be improved.

Proceedings of AAAI 2022 Fall Symposium: The Role of AI in Responding to Climate Challenges

Jan 06, 2023Abstract:Climate change is one of the most pressing challenges of our time, requiring rapid action across society. As artificial intelligence tools (AI) are rapidly deployed, it is therefore crucial to understand how they will impact climate action. On the one hand, AI can support applications in climate change mitigation (reducing or preventing greenhouse gas emissions), adaptation (preparing for the effects of a changing climate), and climate science. These applications have implications in areas ranging as widely as energy, agriculture, and finance. At the same time, AI is used in many ways that hinder climate action (e.g., by accelerating the use of greenhouse gas-emitting fossil fuels). In addition, AI technologies have a carbon and energy footprint themselves. This symposium brought together participants from across academia, industry, government, and civil society to explore these intersections of AI with climate change, as well as how each of these sectors can contribute to solutions.

Adversarially Robust Learning for Security-Constrained Optimal Power Flow

Nov 12, 2021

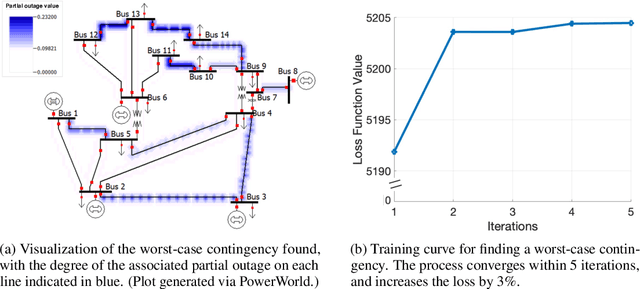

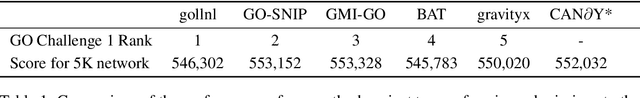

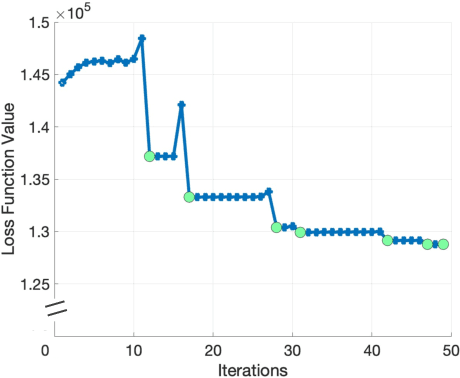

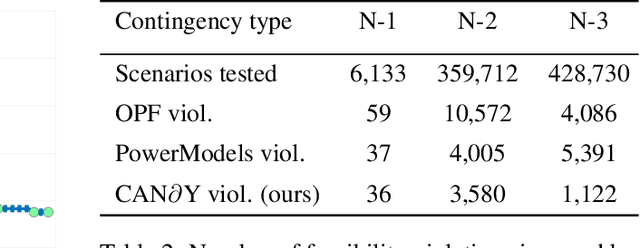

Abstract:In recent years, the ML community has seen surges of interest in both adversarially robust learning and implicit layers, but connections between these two areas have seldom been explored. In this work, we combine innovations from these areas to tackle the problem of N-k security-constrained optimal power flow (SCOPF). N-k SCOPF is a core problem for the operation of electrical grids, and aims to schedule power generation in a manner that is robust to potentially k simultaneous equipment outages. Inspired by methods in adversarially robust training, we frame N-k SCOPF as a minimax optimization problem - viewing power generation settings as adjustable parameters and equipment outages as (adversarial) attacks - and solve this problem via gradient-based techniques. The loss function of this minimax problem involves resolving implicit equations representing grid physics and operational decisions, which we differentiate through via the implicit function theorem. We demonstrate the efficacy of our framework in solving N-3 SCOPF, which has traditionally been considered as prohibitively expensive to solve given that the problem size depends combinatorially on the number of potential outages.

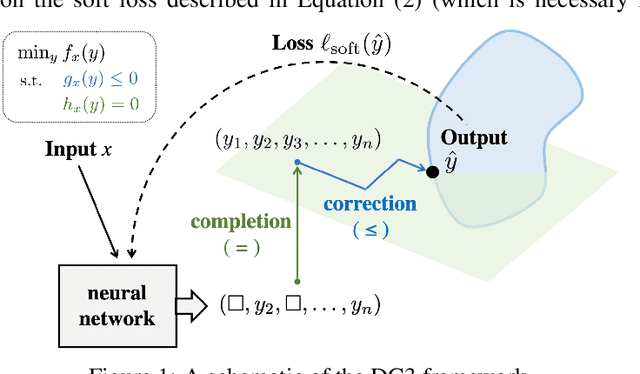

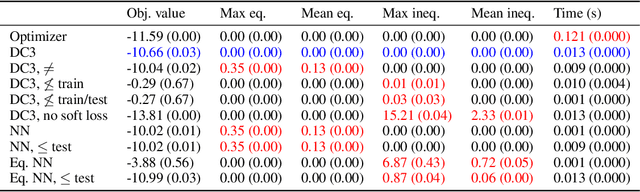

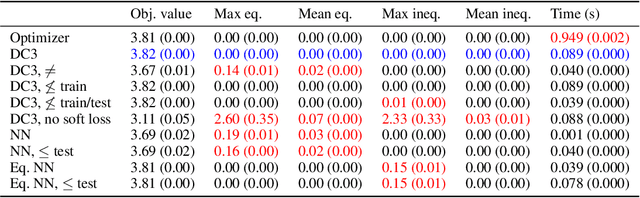

DC3: A learning method for optimization with hard constraints

Apr 25, 2021

Abstract:Large optimization problems with hard constraints arise in many settings, yet classical solvers are often prohibitively slow, motivating the use of deep networks as cheap "approximate solvers." Unfortunately, naive deep learning approaches typically cannot enforce the hard constraints of such problems, leading to infeasible solutions. In this work, we present Deep Constraint Completion and Correction (DC3), an algorithm to address this challenge. Specifically, this method enforces feasibility via a differentiable procedure, which implicitly completes partial solutions to satisfy equality constraints and unrolls gradient-based corrections to satisfy inequality constraints. We demonstrate the effectiveness of DC3 in both synthetic optimization tasks and the real-world setting of AC optimal power flow, where hard constraints encode the physics of the electrical grid. In both cases, DC3 achieves near-optimal objective values while preserving feasibility.

* In ICLR 2021. Code available at https://github.com/locuslab/DC3

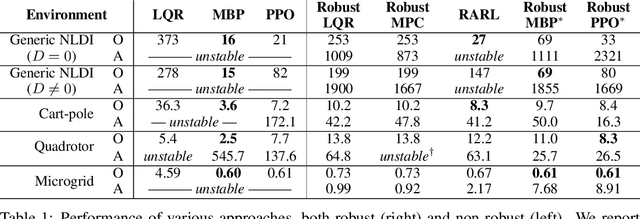

Enforcing robust control guarantees within neural network policies

Nov 16, 2020

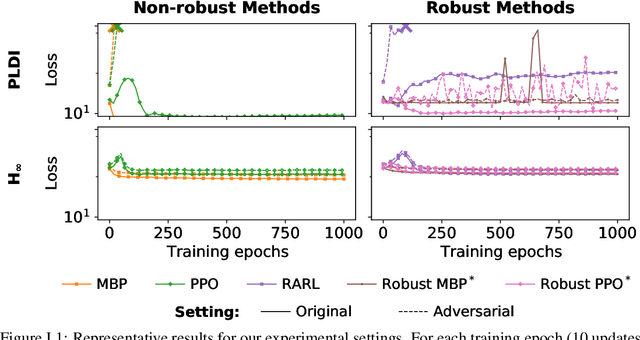

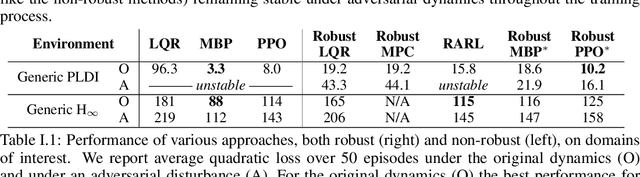

Abstract:When designing controllers for safety-critical systems, practitioners often face a challenging tradeoff between robustness and performance. While robust control methods provide rigorous guarantees on system stability under certain worst-case disturbances, they often result in simple controllers that perform poorly in the average (non-worst) case. In contrast, nonlinear control methods trained using deep learning have achieved state-of-the-art performance on many control tasks, but often lack robustness guarantees. We propose a technique that combines the strengths of these two approaches: a generic nonlinear control policy class, parameterized by neural networks, that nonetheless enforces the same provable robustness criteria as robust control. Specifically, we show that by integrating custom convex-optimization-based projection layers into a nonlinear policy, we can construct a provably robust neural network policy class that outperforms robust control methods in the average (non-adversarial) setting. We demonstrate the power of this approach on several domains, improving in performance over existing robust control methods and in stability over (non-robust) RL methods.

Tackling Climate Change with Machine Learning

Jun 10, 2019

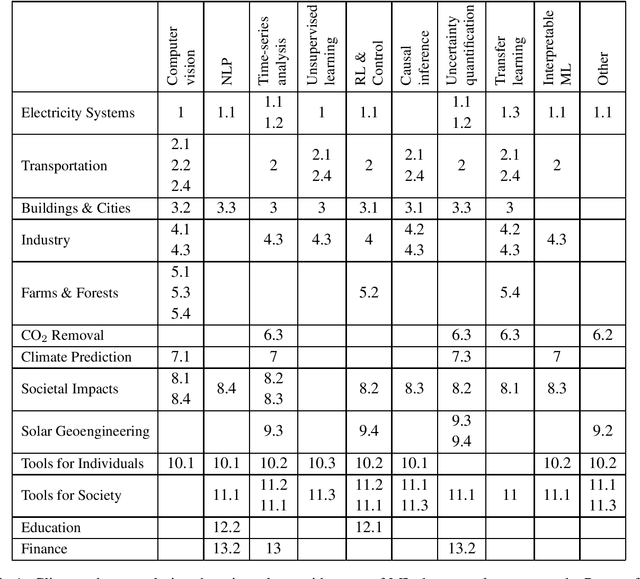

Abstract:Climate change is one of the greatest challenges facing humanity, and we, as machine learning experts, may wonder how we can help. Here we describe how machine learning can be a powerful tool in reducing greenhouse gas emissions and helping society adapt to a changing climate. From smart grids to disaster management, we identify high impact problems where existing gaps can be filled by machine learning, in collaboration with other fields. Our recommendations encompass exciting research questions as well as promising business opportunities. We call on the machine learning community to join the global effort against climate change.

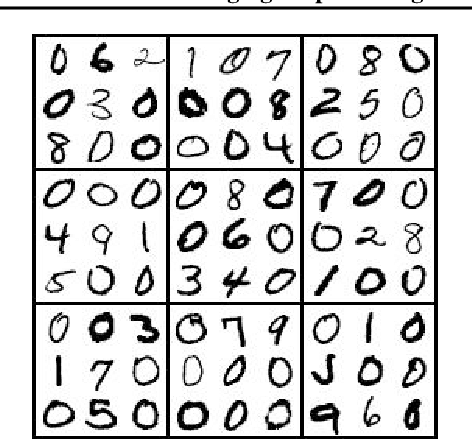

SATNet: Bridging deep learning and logical reasoning using a differentiable satisfiability solver

May 29, 2019

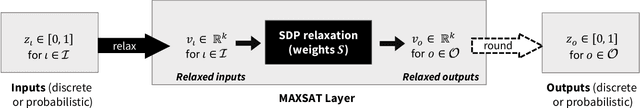

Abstract:Integrating logical reasoning within deep learning architectures has been a major goal of modern AI systems. In this paper, we propose a new direction toward this goal by introducing a differentiable (smoothed) maximum satisfiability (MAXSAT) solver that can be integrated into the loop of larger deep learning systems. Our (approximate) solver is based upon a fast coordinate descent approach to solving the semidefinite program (SDP) associated with the MAXSAT problem. We show how to analytically differentiate through the solution to this SDP and efficiently solve the associated backward pass. We demonstrate that by integrating this solver into end-to-end learning systems, we can learn the logical structure of challenging problems in a minimally supervised fashion. In particular, we show that we can learn the parity function using single-bit supervision (a traditionally hard task for deep networks) and learn how to play 9x9 Sudoku solely from examples. We also solve a "visual Sudok" problem that maps images of Sudoku puzzles to their associated logical solutions by combining our MAXSAT solver with a traditional convolutional architecture. Our approach thus shows promise in integrating logical structures within deep learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge