Maya Cakmak

When Empowerment Disempowers

Nov 06, 2025

Abstract:Empowerment, a measure of an agent's ability to control its environment, has been proposed as a universal goal-agnostic objective for motivating assistive behavior in AI agents. While multi-human settings like homes and hospitals are promising for AI assistance, prior work on empowerment-based assistance assumes that the agent assists one human in isolation. We introduce an open source multi-human gridworld test suite Disempower-Grid. Using Disempower-Grid, we empirically show that assistive RL agents optimizing for one human's empowerment can significantly reduce another human's environmental influence and rewards - a phenomenon we formalize as disempowerment. We characterize when disempowerment occurs in these environments and show that joint empowerment mitigates disempowerment at the cost of the user's reward. Our work reveals a broader challenge for the AI alignment community: goal-agnostic objectives that seem aligned in single-agent settings can become misaligned in multi-agent contexts.

Preserving Sense of Agency: User Preferences for Robot Autonomy and User Control across Household Tasks

Jun 24, 2025

Abstract:Roboticists often design with the assumption that assistive robots should be fully autonomous. However, it remains unclear whether users prefer highly autonomous robots, as prior work in assistive robotics suggests otherwise. High robot autonomy can reduce the user's sense of agency, which represents feeling in control of one's environment. How much control do users, in fact, want over the actions of robots used for in-home assistance? We investigate how robot autonomy levels affect users' sense of agency and the autonomy level they prefer in contexts with varying risks. Our study asked participants to rate their sense of agency as robot users across four distinct autonomy levels and to rank their robot preferences with respect to various household tasks. Our findings revealed that participants' sense of agency was primarily influenced by two factors: (1) whether the robot acts autonomously, and (2) whether a third party is involved in the robot's programming or operation. Notably, an end-user programmed robot highly preserved users' sense of agency, even though it acts autonomously. However, in high-risk settings, e.g., preparing a snack for a child with allergies, participants preferred robots that prioritized their control significantly more. Additional contextual factors, such as trust in a third party operator, also shaped their preferences.

Can Large Language Models Help Developers with Robotic Finite State Machine Modification?

Dec 07, 2024

Abstract:Finite state machines (FSMs) are widely used to manage robot behavior logic, particularly in real-world applications that require a high degree of reliability and structure. However, traditional manual FSM design and modification processes can be time-consuming and error-prone. We propose that large language models (LLMs) can assist developers in editing FSM code for real-world robotic use cases. LLMs, with their ability to use context and process natural language, offer a solution for FSM modification with high correctness, allowing developers to update complex control logic through natural language instructions. Our approach leverages few-shot prompting and language-guided code generation to reduce the amount of time it takes to edit an FSM. To validate this approach, we evaluate it on a real-world robotics dataset, demonstrating its effectiveness in practical scenarios.

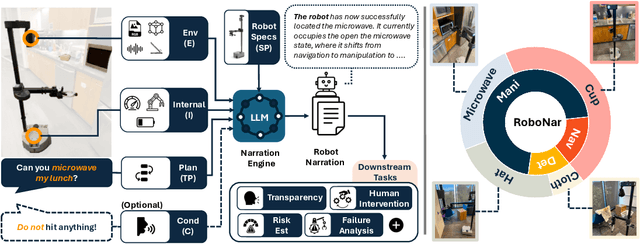

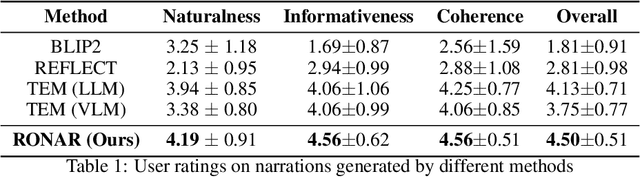

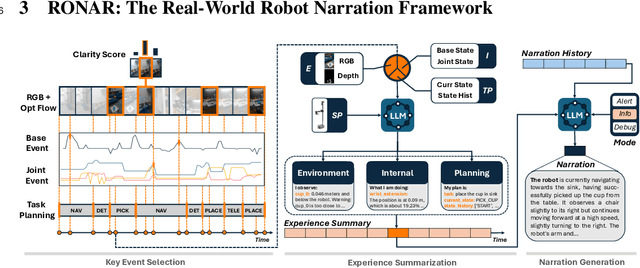

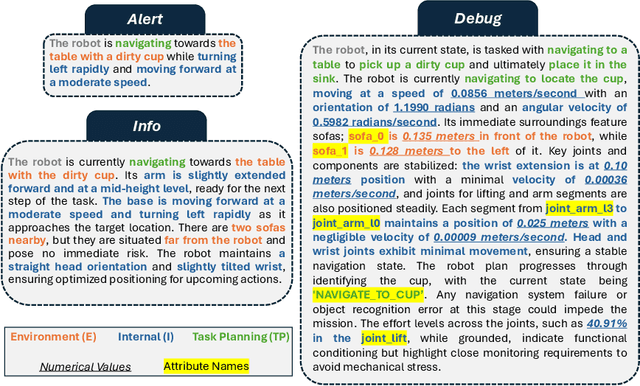

I Can Tell What I am Doing: Toward Real-World Natural Language Grounding of Robot Experiences

Nov 20, 2024

Abstract:Understanding robot behaviors and experiences through natural language is crucial for developing intelligent and transparent robotic systems. Recent advances in large language models (LLMs) make it possible to translate complex, multi-modal robotic experiences into coherent, human-readable narratives. However, grounding real-world robot experiences in natural language is challenging for many reasons, such as the multi-modal nature of the data, differing sample rates, and data volume. We introduce RONAR, an LLM-based system that generates natural language narrations from robot experiences, aiding in behavior announcement, failure analysis, and human interaction to recover from failure. Evaluated across various scenarios, RONAR outperforms state-of-the-art methods and improves failure recovery efficiency. Our contributions include a multi-modal framework for robot experience narration, a comprehensive real-robot dataset, and empirical evidence of RONAR's effectiveness in enhancing user experience in system transparency and failure analysis.

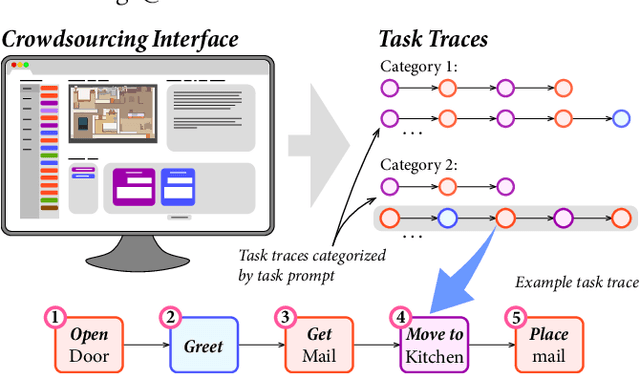

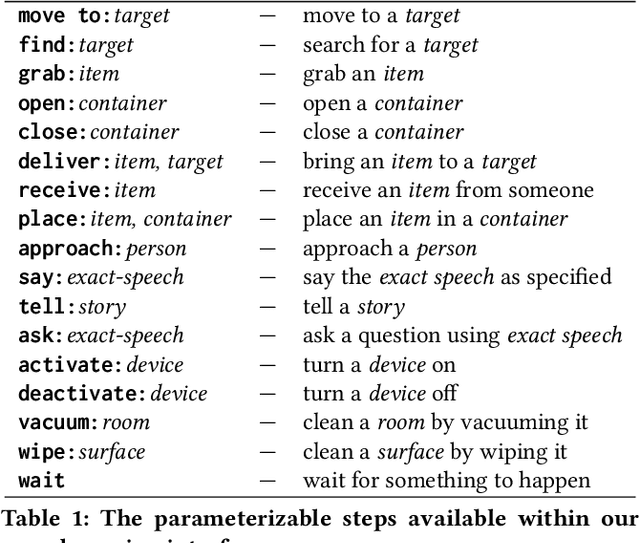

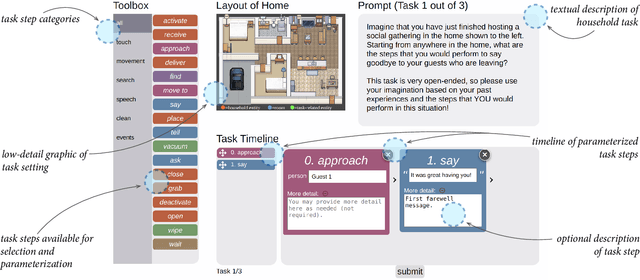

Crowdsourcing Task Traces for Service Robotics

Mar 20, 2024

Abstract:Demonstration is an effective end-user development paradigm for teaching robots how to perform new tasks. In this paper, we posit that demonstration is useful not only as a teaching tool, but also as a way to understand and assist end-user developers in thinking about a task at hand. As a first step toward gaining this understanding, we constructed a lightweight web interface to crowdsource step-by-step instructions of common household tasks, leveraging the imaginations and past experiences of potential end-user developers. As evidence of the utility of our interface, we deployed the interface on Amazon Mechanical Turk and collected 207 task traces that span 18 different task categories. We describe our vision for how these task traces can be operationalized as task models within end-user development tools and provide a roadmap for future work.

Multiple Ways of Working with Users to Develop Physically Assistive Robots

Mar 07, 2024

Abstract:Despite the growth of physically assistive robotics (PAR) research over the last decade, nearly half of PAR user studies do not involve participants with the target disabilities. There are several reasons for this -- recruitment challenges, small sample sizes, and transportation logistics -- all influenced by systemic barriers that people with disabilities face. However, it is well-established that working with end-users results in technology that better addresses their needs and integrates with their lived circumstances. In this paper, we reflect on multiple approaches we have taken to working with people with motor impairments across the design, development, and evaluation of three PAR projects: (a) assistive feeding with a robot arm; (b) assistive teleoperation with a mobile manipulator; and (c) shared control with a robot arm. We discuss these approaches to working with users along three dimensions -- individual vs. community-level insight, logistic burden on end-users vs. researchers, and benefit to researchers vs. community -- and share recommendations for how other PAR researchers can incorporate users into their work.

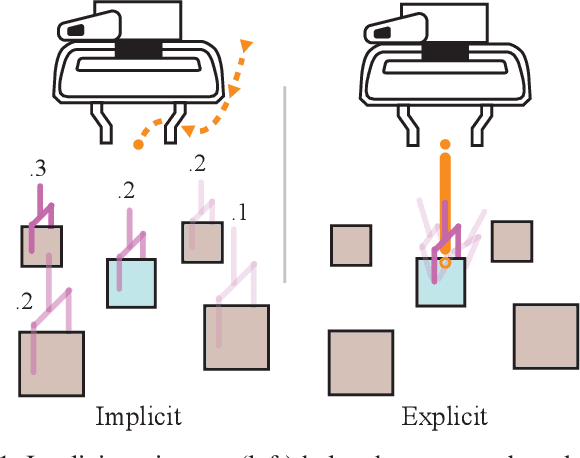

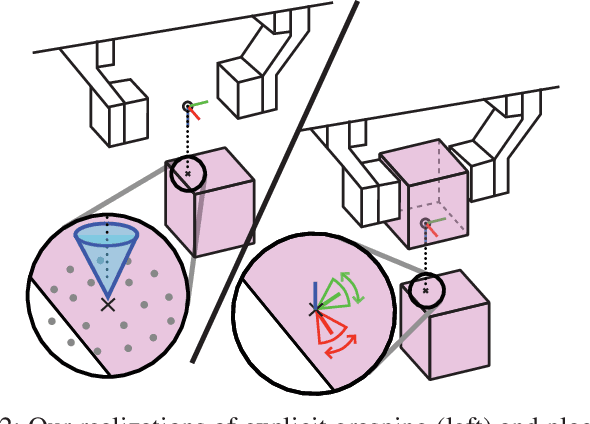

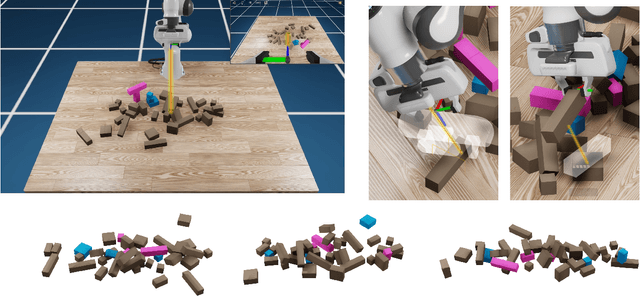

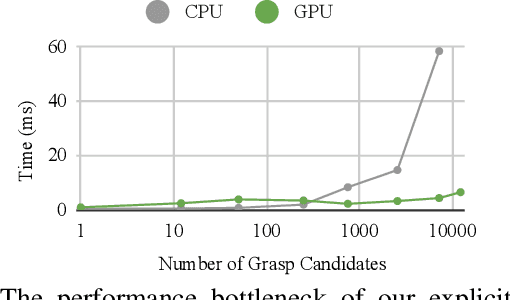

Fast Explicit-Input Assistance for Teleoperation in Clutter

Feb 04, 2024

Abstract:The performance of prediction-based assistance for robot teleoperation degrades in unseen or goal-rich environments due to incorrect or quickly-changing intent inferences. Poor predictions can confuse operators or cause them to change their control input to implicitly signal their goal, resulting in unnatural movement. We present a new assistance algorithm and interface for robotic manipulation where an operator can explicitly communicate a manipulation goal by pointing the end-effector. Rapid optimization and parallel collision checking in a local region around the pointing target enable direct, interactive control over grasp and place pose candidates. We compare the explicit pointing interface to an implicit inference-based assistance scheme in a within-subjects user study (N=20) where participants teleoperate a simulated robot to complete a multi-step singulation and stacking task in cluttered environments. We find that operators prefer the explicit interface, which improved completion time, pick and place success rates, and NASA TLX scores. Our code is available at https://github.com/NVlabs/fast-explicit-teleop

Evaluating Customization of Remote Tele-operation Interfaces for Assistive Robots

Apr 05, 2023

Abstract:Mobile manipulator platforms, like the Stretch RE1 robot, make the promise of in-home robotic assistance feasible. For people with severe physical limitations, like those with quadriplegia, the ability to tele-operate these robots themselves means that they can perform physical tasks they cannot otherwise do themselves, thereby increasing their level of independence. In order for users with physical limitations to operate these robots, the robots' interfaces must be accessible and cater to the specific needs of all users. As physical limitations vary amongst users, it is difficult to make a single interface that will accommodate all users. Instead, such interfaces should be customizable to each individual user. In this paper we explore the value of customization of a browser-based interface for tele-operating the Stretch RE1 robot. More specifically, we evaluate the usability and effectiveness of a customized interface in comparison to the default interface configurations from prior work. We present a user study in which participants with motor impairments (N=10) and participants without motor impairments who could serve as caregivers (N=13) use the robot to perform mobile manipulation tasks in a real kitchen environment. Our study demonstrates that no single interface configuration satisfies all users' needs and preferences. Users perform better when using the customized interface for navigation, but not for manipulation due to the higher complexity of learning to manipulate through the robot. All participants are able to use the robot to complete all tasks and participants with motor impairments believe that having the robot in their home would make them more independent.

Sketching Robot Programs On the Fly

Feb 06, 2023

Abstract:Service robots for personal use in the home and the workplace require end-user development solutions for swiftly scripting robot tasks as the need arises. Many existing solutions preserve ease, efficiency, and convenience through simple programming interfaces or by restricting task complexity. Others facilitate meticulous task design but often do so at the expense of simplicity and efficiency. There is a need for robot programming solutions that reconcile the complexity of robotics with the on-the-fly goals of end-user development. In response to this need, we present a novel, multimodal, and on-the-fly development system, Tabula. Inspired by a formative design study with a prototype, Tabula leverages a combination of spoken language for specifying the core of a robot task and sketching for contextualizing the core. The result is that developers can script partial, sloppy versions of robot programs to be completed and refined by a program synthesizer. Lastly, we demonstrate our anticipated use cases of Tabula via a set of application scenarios.

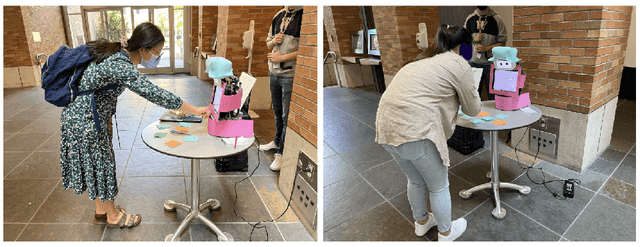

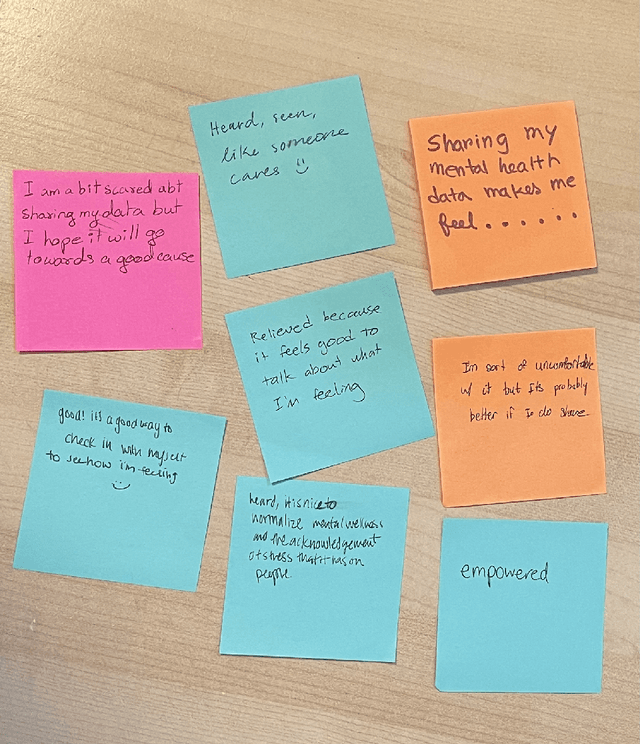

Share with Me: A Study on a Social Robot Collecting Mental Health Data

Aug 08, 2022

Abstract:Social robots have been used to assist with mental well-being in various ways, for example by helping children with autism improve their social skills and executive functioning, including joint attention and bodily awareness. They are also used to help older adults by reducing feelings of isolation and loneliness, and to support the mental well-being of teens and children. However, existing work in this sphere has only shown support for mental health through social robots by responding interactively to human activity to help them learn relevant skills. We hypothesize that humans can also get help from social robots in mental well-being by releasing or sharing their mental health data with the social robots. In this paper, we present a human-robot interaction (HRI) study to evaluate this hypothesis. During the five-day study, a total of fifty-five (n=55) participants shared their in-the-moment mood and stress levels with a social robot. The majority of results were positive, indicating that future work in this direction is worthwhile and that social robots have the potential to largely support mental well-being.
