Max Pascher

Evaluating Assistive Technologies on a Trade Fair: Methodological Overview and Lessons Learned

Aug 20, 2024

Abstract:User-centered evaluations are a core requirement in the development of new user related technologies. However, it is often difficult to recruit sufficient participants, especially if the target population is small, particularly busy, or in some way restricted in their mobility. We bypassed these problems by conducting studies on trade fairs that were specifically designed for our target population (potentially care-receiving individuals in wheelchairs) and therefore provided our users with external incentive to attend our study. This paper presents our gathered experiences, including methodological specifications and lessons learned, and is aimed to guide other researchers with conducting similar studies. In addition, we also discuss chances generated by this unconventional study environment as well as its limitations.

Adaptive Control in Assistive Application -- A Study Evaluating Shared Control by Users with Limited Upper Limb Mobility

Jun 10, 2024

Abstract:Shared control in assistive robotics blends human autonomy with computer assistance, thus simplifying complex tasks for individuals with physical impairments. This study assesses an adaptive Degrees of Freedom control method specifically tailored for individuals with upper limb impairments. It employs a between-subjects analysis with 24 participants, conducting 81 trials across three distinct input devices in a realistic everyday-task setting. Given the diverse capabilities of the vulnerable target demographic and the known challenges in statistical comparisons due to individual differences, the study focuses primarily on subjective qualitative data. The results reveal consistently high success rates in trial completions, irrespective of the input device used. Participants appreciated their involvement in the research process, displayed a positive outlook, and quick adaptability to the control system. Notably, each participant effectively managed the given task within a short time frame.

Multiple Ways of Working with Users to Develop Physically Assistive Robots

Mar 07, 2024

Abstract:Despite the growth of physically assistive robotics (PAR) research over the last decade, nearly half of PAR user studies do not involve participants with the target disabilities. There are several reasons for this -- recruitment challenges, small sample sizes, and transportation logistics -- all influenced by systemic barriers that people with disabilities face. However, it is well-established that working with end-users results in technology that better addresses their needs and integrates with their lived circumstances. In this paper, we reflect on multiple approaches we have taken to working with people with motor impairments across the design, development, and evaluation of three PAR projects: (a) assistive feeding with a robot arm; (b) assistive teleoperation with a mobile manipulator; and (c) shared control with a robot arm. We discuss these approaches to working with users along three dimensions -- individual vs. community-level insight, logistic burden on end-users vs. researchers, and benefit to researchers vs. community -- and share recommendations for how other PAR researchers can incorporate users into their work.

Hands-On Robotics: Enabling Communication Through Direct Gesture Control

Jan 17, 2024

Abstract:Effective Human-Robot Interaction (HRI) is fundamental to seamlessly integrating robotic systems into our daily lives. However, current communication modes require additional technological interfaces, which can be cumbersome and indirect. This paper presents a novel approach, using direct motion-based communication by moving a robot's end effector. Our strategy enables users to communicate with a robot by using four distinct gestures -- two handshakes ('formal' and 'informal') and two letters ('W' and 'S'). As a proof-of-concept, we conducted a user study with 16 participants, capturing subjective experience ratings and objective data for training machine learning classifiers. Our findings show that the four different gestures performed by moving the robot's end effector can be distinguished with close to 100% accuracy. Our research offers implications for the design of future HRI interfaces, suggesting that motion-based interaction can empower human operators to communicate directly with robots, removing the necessity for additional hardware.

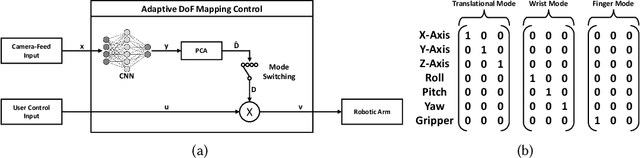

Exploring of Discrete and Continuous Input Control for AI-enhanced Assistive Robotic Arms

Jan 13, 2024

Abstract:Robotic arms, integral in domestic care for individuals with motor impairments, enable them to perform Activities of Daily Living (ADLs) independently, reducing dependence on human caregivers. These collaborative robots require users to manage multiple Degrees-of-Freedom (DoFs) for tasks like grasping and manipulating objects. Conventional input devices, typically limited to two DoFs, necessitate frequent and complex mode switches to control individual DoFs. Modern adaptive controls with feed-forward multi-modal feedback reduce the overall task completion time, number of mode switches, and cognitive load. Despite the variety of input devices available, their effectiveness in adaptive settings with assistive robotics has yet to be thoroughly assessed. This study explores three different input devices by integrating them into an established XR framework for assistive robotics, evaluating them and providing empirical insights through a preliminary study for future developments.

Exploring Large Language Models to Facilitate Variable Autonomy for Human-Robot Teaming

Dec 12, 2023

Abstract:In a rapidly evolving digital landscape autonomous tools and robots are becoming commonplace. Recognizing the significance of this development, this paper explores the integration of Large Language Models (LLMs) like Generative pre-trained transformer (GPT) into human-robot teaming environments to facilitate variable autonomy through the means of verbal human-robot communication. In this paper, we introduce a novel framework for such a GPT-powered multi-robot testbed environment, based on a Unity Virtual Reality (VR) setting. This system allows users to interact with robot agents through natural language, each powered by individual GPT cores. By means of OpenAI's function calling, we bridge the gap between unstructured natural language input and structure robot actions. A user study with 12 participants explores the effectiveness of GPT-4 and, more importantly, user strategies when being given the opportunity to converse in natural language within a multi-robot environment. Our findings suggest that users may have preconceived expectations on how to converse with robots and seldom try to explore the actual language and cognitive capabilities of their robot collaborators. Still, those users who did explore where able to benefit from a much more natural flow of communication and human-like back-and-forth. We provide a set of lessons learned for future research and technical implementations of similar systems.

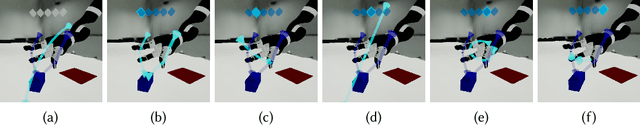

AdaptiX -- A Transitional XR Framework for Development and Evaluation of Shared Control Applications in Assistive Robotics

Oct 24, 2023

Abstract:With the ongoing efforts to empower people with mobility impairments and the increase in technological acceptance by the general public, assistive technologies, such as collaborative robotic arms, are gaining popularity. Yet, their widespread success is limited by usability issues, specifically the disparity between user input and software control along the autonomy continuum. To address this, shared control concepts provide opportunities to combine the targeted increase of user autonomy with a certain level of computer assistance. This paper presents the free and open-source AdaptiX XR framework for developing and evaluating shared control applications in a high-resolution simulation environment. The initial framework consists of a simulated robotic arm with an example scenario in Virtual Reality (VR), multiple standard control interfaces, and a specialized recording/replay system. AdaptiX can easily be extended for specific research needs, allowing Human-Robot Interaction (HRI) researchers to rapidly design and test novel interaction methods, intervention strategies, and multi-modal feedback techniques, without requiring an actual physical robotic arm during the early phases of ideation, prototyping, and evaluation. Also, a Robot Operating System (ROS) integration enables the controlling of a real robotic arm in a PhysicalTwin approach without any simulation-reality gap. Here, we review the capabilities and limitations of AdaptiX in detail and present three bodies of research based on the framework. AdaptiX can be accessed at https://adaptix.robot-research.de.

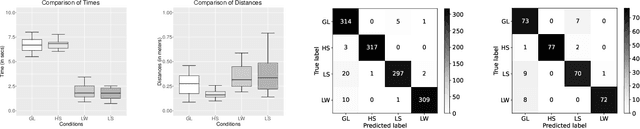

In Time and Space: Towards Usable Adaptive Control for Assistive Robotic Arms

Jul 06, 2023

Abstract:Robotic solutions, in particular robotic arms, are becoming more frequently deployed for close collaboration with humans, for example in manufacturing or domestic care environments. These robotic arms require the user to control several Degrees-of-Freedom (DoFs) to perform tasks, primarily involving grasping and manipulating objects. Standard input devices predominantly have two DoFs, requiring time-consuming and cognitively demanding mode switches to select individual DoFs. Contemporary Adaptive DoF Mapping Controls (ADMCs) have shown to decrease the necessary number of mode switches but were up to now not able to significantly reduce the perceived workload. Users still bear the mental workload of incorporating abstract mode switching into their workflow. We address this by providing feed-forward multimodal feedback using updated recommendations of ADMC, allowing users to visually compare the current and the suggested mapping in real-time. We contrast the effectiveness of two new approaches that a) continuously recommend updated DoF combinations or b) use discrete thresholds between current robot movements and new recommendations. Both are compared in a Virtual Reality (VR) in-person study against a classic control method. Significant results for lowered task completion time, fewer mode switches, and reduced perceived workload conclusively establish that in combination with feedforward, ADMC methods can indeed outperform classic mode switching. A lack of apparent quantitative differences between Continuous and Threshold reveals the importance of user-centered customization options. Including these implications in the development process will improve usability, which is essential for successfully implementing robotic technologies with high user acceptance.

Exploring AI-enhanced Shared Control for an Assistive Robotic Arm

Jun 23, 2023Abstract:Assistive technologies and in particular assistive robotic arms have the potential to enable people with motor impairments to live a self-determined life. More and more of these systems have become available for end users in recent years, such as the Kinova Jaco robotic arm. However, they mostly require complex manual control, which can overwhelm users. As a result, researchers have explored ways to let such robots act autonomously. However, at least for this specific group of users, such an approach has shown to be futile. Here, users want to stay in control to achieve a higher level of personal autonomy, to which an autonomous robot runs counter. In our research, we explore how Artifical Intelligence (AI) can be integrated into a shared control paradigm. In particular, we focus on the consequential requirements for the interface between human and robot and how we can keep humans in the loop while still significantly reducing the mental load and required motor skills.

Understanding Shared Control for Assistive Robotic Arms

Mar 03, 2023

Abstract:Living a self-determined life independent of human caregivers or fully autonomous robots is a crucial factor for human dignity and the preservation of self-worth for people with motor impairments. Assistive robotic solutions - particularly robotic arms - are frequently deployed in domestic care, empowering people with motor impairments in performing ADLs independently. However, while assistive robotic arms can help them perform ADLs, currently available controls are highly complex and time-consuming due to the need to control multiple DoFs at once and necessary mode-switches. This work provides an overview of shared control approaches for assistive robotic arms, which aim to improve their ease of use for people with motor impairments. We identify three main takeaways for future research: Less is More, Pick-and-Place Matters, and Communicating Intent.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge