Kanako Harada

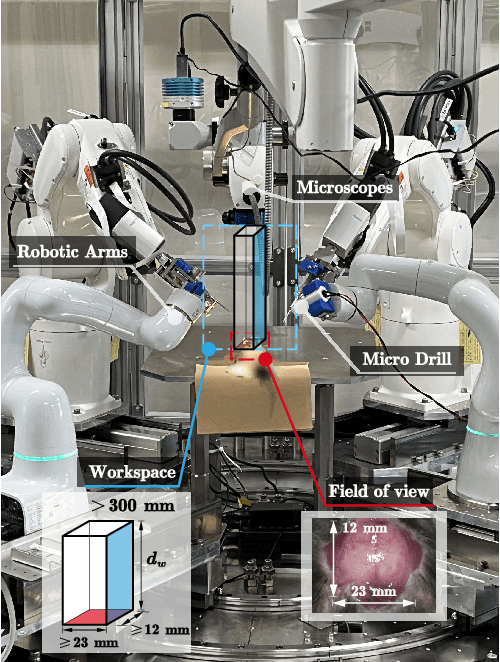

Object State Estimation Through Robotic Active Interaction for Biological Autonomous Drilling

Mar 06, 2025

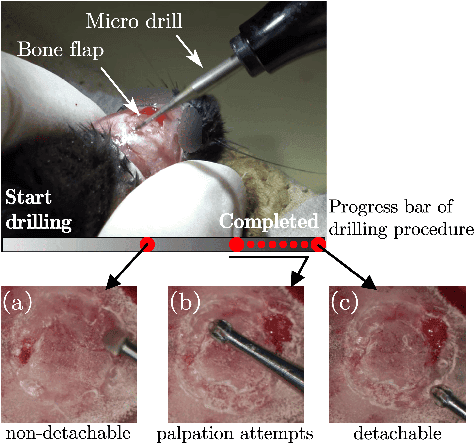

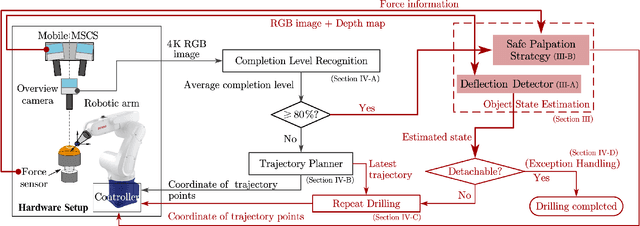

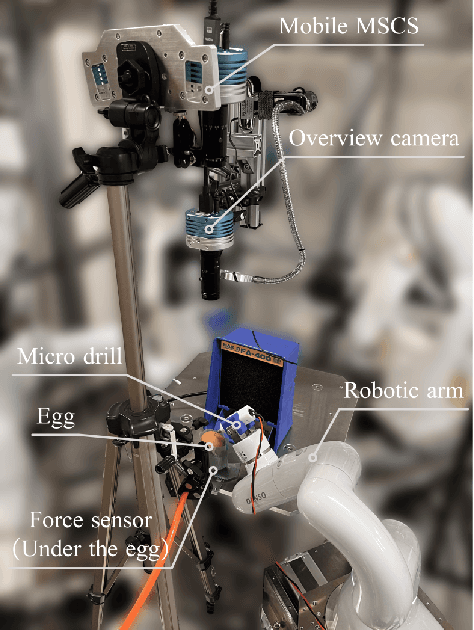

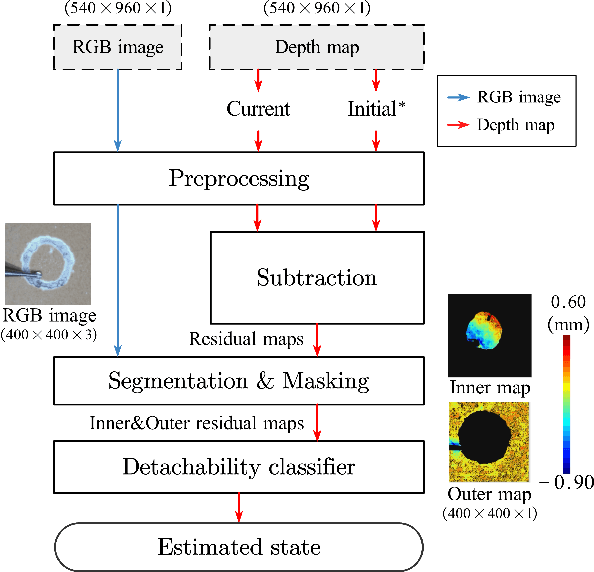

Abstract: Estimating the state of biological specimens is challenging because microscopic vision provides only limited observation. For instance, during mouse skull drilling, the appearance changes little as the bone tissue is thinned, because of the tissue's semi-transparency and the high magnification of the microscope. To obtain the object's state, we introduce a method that estimates the state of biological specimens from their deflection during active interaction. The method is integrated into the autonomous drilling system developed in our previous work. The method and the integrated system were evaluated in 12 trials of autonomous eggshell drilling. The results show that the system achieved a 91.7% success rate and a 75% detachable rate, showcasing its potential applicability to more complex surgical procedures such as mouse skull craniotomy. This research paves the way for further development of autonomous robotic systems capable of estimating an object's state through active interaction.

Autonomous Robotic Bone Micro-Milling System with Automatic Calibration and 3D Surface Fitting

Mar 06, 2025

Abstract: Automating bone micro-milling with a robotic system is challenging because of uncertainties in both the external and internal features of bone tissue. For example, to create a mouse cranial window, a circular path with a radius of 2 to 4 mm must be milled on the mouse skull with a microdrill. The uneven surface and non-uniform thickness of the mouse skull make this process difficult to fully automate, requiring the system to have advanced perceptual and adaptive capabilities. In this study, we propose an automatic calibration and 3D surface fitting method and integrate it into an autonomous robotic bone micro-milling system, enabling the system to perceive and adapt to the uneven surface and non-uniform thickness of the target quickly, accurately, and in real time without human assistance. Validation experiments on euthanized mice demonstrate that the improved system achieves a success rate of 85.7% and an average milling time of 2.1 minutes, showing not only significant performance improvements over the previous system but also superior accuracy, speed, and stability compared with human operators.
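
The abstract does not state which surface model the 3D surface fitting uses. As a hedged illustration only, the sketch below assumes the skull surface can be approximated by a quadratic height field z = a x² + b y² + c xy + d x + e y + f, fits it by least squares to measured (x, y, z) points, and lifts a circular milling path of a given radius onto the fitted surface. The quadratic model and all function names are hypothetical, not the authors' method, and the sketch ignores the non-uniform thickness the real system also handles.

```python
import math

# Hypothetical helpers: the quadratic height-field model is an assumption,
# not the surface fitting method used in the paper.


def solve_linear(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def quad_features(x, y):
    """Basis of the assumed height field z = a x^2 + b y^2 + c xy + d x + e y + f."""
    return [x * x, y * y, x * y, x, y, 1.0]


def fit_quadratic_surface(points):
    """Least-squares fit of the quadratic height field to (x, y, z) points via normal equations."""
    ata = [[0.0] * 6 for _ in range(6)]
    atz = [0.0] * 6
    for x, y, z in points:
        phi = quad_features(x, y)
        for i in range(6):
            atz[i] += phi[i] * z
            for j in range(6):
                ata[i][j] += phi[i] * phi[j]
    return solve_linear(ata, atz)


def surface_height(coeffs, x, y):
    """Evaluate the fitted height field at (x, y)."""
    return sum(c * p for c, p in zip(coeffs, quad_features(x, y)))


def circular_milling_path(coeffs, cx, cy, radius, n_points=36):
    """Sample a circle around (cx, cy) in the xy-plane and lift each point onto the fitted surface."""
    path = []
    for k in range(n_points):
        theta = 2.0 * math.pi * k / n_points
        x = cx + radius * math.cos(theta)
        y = cy + radius * math.sin(theta)
        path.append((x, y, surface_height(coeffs, x, y)))
    return path


# Usage with synthetic, noise-free points on a dome-shaped surface (units: mm).
points = [(x, y, 5.0 - 0.05 * (x * x + y * y)) for x in range(-4, 5) for y in range(-4, 5)]
coeffs = fit_quadratic_surface(points)
path = circular_milling_path(coeffs, 0.0, 0.0, radius=2.0)
```

On this synthetic dome the fit recovers the generating coefficients, so every point of the 2 mm radius path sits at z = 4.8 mm. Real measurements would be noisy, and a more robust solver (for example QR-based least squares) would usually be preferred over normal equations.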

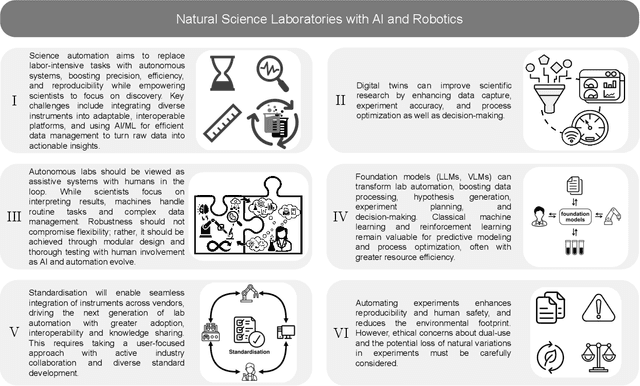

Accelerating Discovery in Natural Science Laboratories with AI and Robotics: Perspectives and Challenges from the 2024 IEEE ICRA Workshop, Yokohama, Japan

Jan 12, 2025

Abstract: Science laboratory automation enables accelerated discovery in the life sciences and materials science. However, it requires interdisciplinary collaboration to address challenges such as robust and flexible autonomy, reproducibility, throughput, standardization, the role of human scientists, and ethics. This article highlights these issues, reflecting the perspectives of leading experts in laboratory automation across different disciplines of the natural sciences.

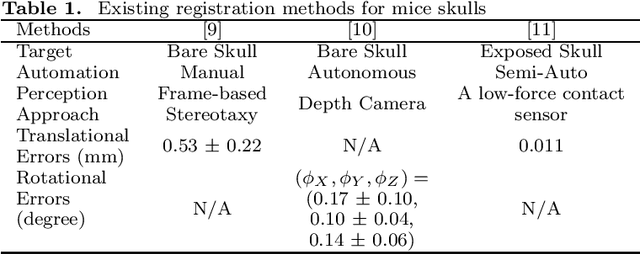

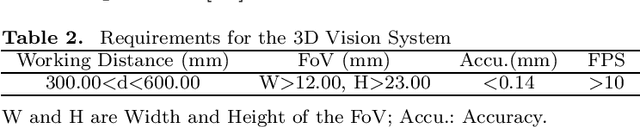

A Cranial-Feature-Based Registration Scheme for Robotic Micromanipulation Using a Microscopic Stereo Camera System

Oct 24, 2024

Abstract: Biological specimens vary significantly in size and shape, which challenges autonomous robotic manipulation. We focus on the mouse skull window creation task to illustrate these challenges. The study introduces a microscopic stereo camera system (MSCS) enhanced by a linear model for depth perception. Alongside this, a precise registration scheme is developed for the partially exposed mouse cranial surface, employing a CNN-based constrained and colorized registration strategy. These methods are integrated with the MSCS for robotic micromanipulation tasks. The MSCS demonstrated a high precision of 0.10 ± 0.02 mm in a step height experiment and real-time 3D reconstruction at 30 FPS. The registration scheme achieved a translational error of 1.13 ± 0.31 mm and a rotational error of 3.38° ± 0.89°, tested on 105 consecutive frames at an average speed of 1.60 FPS. This study presents the application of an MSCS and a novel registration scheme to enhance the precision and accuracy of robotic micromanipulation in scientific and surgical settings. The innovations presented here offer an automation methodology for handling the challenges of microscopic manipulation, paving the way for more accurate, efficient, and less invasive procedures in microsurgery and scientific research.
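
The abstract reports translational and rotational registration errors as mean ± standard deviation over 105 frames but does not define them. A common convention, assumed here, is the Euclidean distance between estimated and ground-truth translations and the geodesic angle between rotations, arccos((tr(R_est^T R_gt) - 1) / 2). The helper names below are hypothetical, and the choice of sample (rather than population) standard deviation is also an assumption.

```python
import math
import statistics

# Hypothetical error metrics; the paper's exact definitions are not given in the abstract.


def translation_error(t_est, t_gt):
    """Euclidean distance between estimated and ground-truth translations (same units, e.g. mm)."""
    return math.dist(t_est, t_gt)


def rotation_error_deg(r_est, r_gt):
    """Geodesic angle in degrees between two 3x3 rotation matrices given as nested lists."""
    # trace(R_est^T R_gt) equals the element-wise (Frobenius) inner product of the matrices.
    trace = sum(r_est[i][j] * r_gt[i][j] for i in range(3) for j in range(3))
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))  # clamp against rounding error
    return math.degrees(math.acos(cos_angle))


def mean_std(errors):
    """Mean and sample standard deviation over frames, reported as mean ± std."""
    return statistics.mean(errors), statistics.stdev(errors)


def rot_z(deg):
    """Rotation about the z-axis, used here only to build test poses."""
    t = math.radians(deg)
    return [[math.cos(t), -math.sin(t), 0.0], [math.sin(t), math.cos(t), 0.0], [0.0, 0.0, 1.0]]


# Usage: two hypothetical frames with known offsets from the ground truth.
rot_errors = [rotation_error_deg(rot_z(10.0 + e), rot_z(10.0)) for e in (3.0, 4.0)]
trans_errors = [
    translation_error((1.0, 2.0, 3.0), (1.0, 2.0, 4.0)),
    translation_error((0.0, 0.0, 0.0), (3.0, 4.0, 0.0)),
]
trans_mean, trans_std = mean_std(trans_errors)
```

Clamping the cosine matters in practice: for nearly identical rotations, rounding can push (trace - 1) / 2 slightly above 1, which would make `math.acos` raise an error.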

Autonomous Field-of-View Adjustment Using Adaptive Kinematic Constrained Control with Robot-Held Microscopic Camera Feedback

Sep 19, 2023

Abstract: Robotic systems for manipulation at the millimeter scale often use a high-magnification camera for visual feedback of the target region. However, the limited field of view (FoV) of the microscopic camera necessitates camera motion to capture a broader workspace. In this work, we propose an autonomous robotic control method to constrain a robot-held camera within a designated FoV. Furthermore, we model the camera extrinsics as part of the kinematic model and use camera measurements coupled with U-Net-based tool tracking to adapt the complete robotic model during task execution. As a proof-of-concept demonstration, the proposed framework was evaluated in a bimanual setup, where the microscopic camera was controlled to view a tool moving along a predefined trajectory. The proposed method allowed the camera to stay within the real FoV 99.5% of the time, compared with 48.1% without the proposed adaptive control.

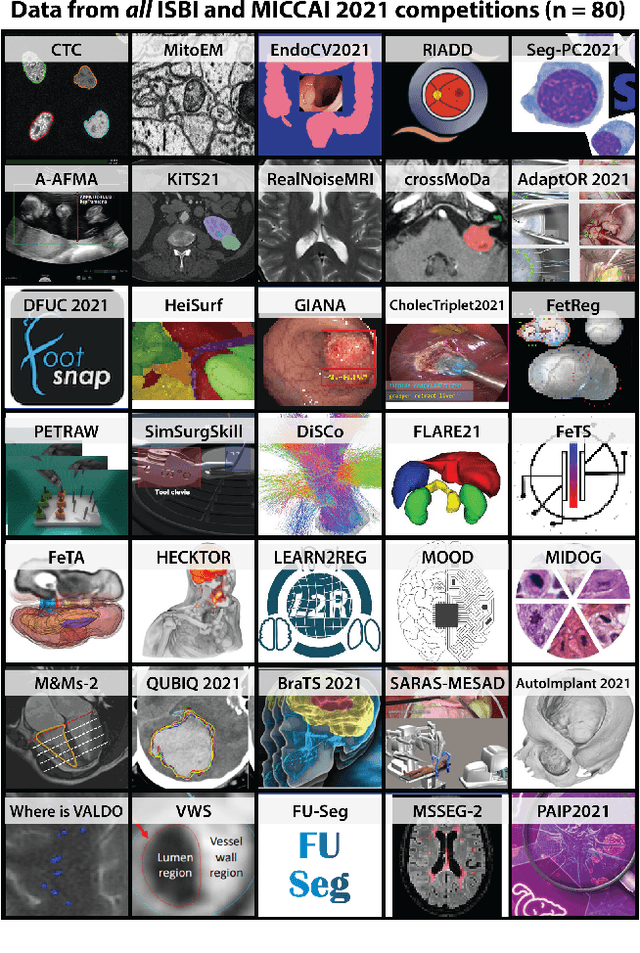

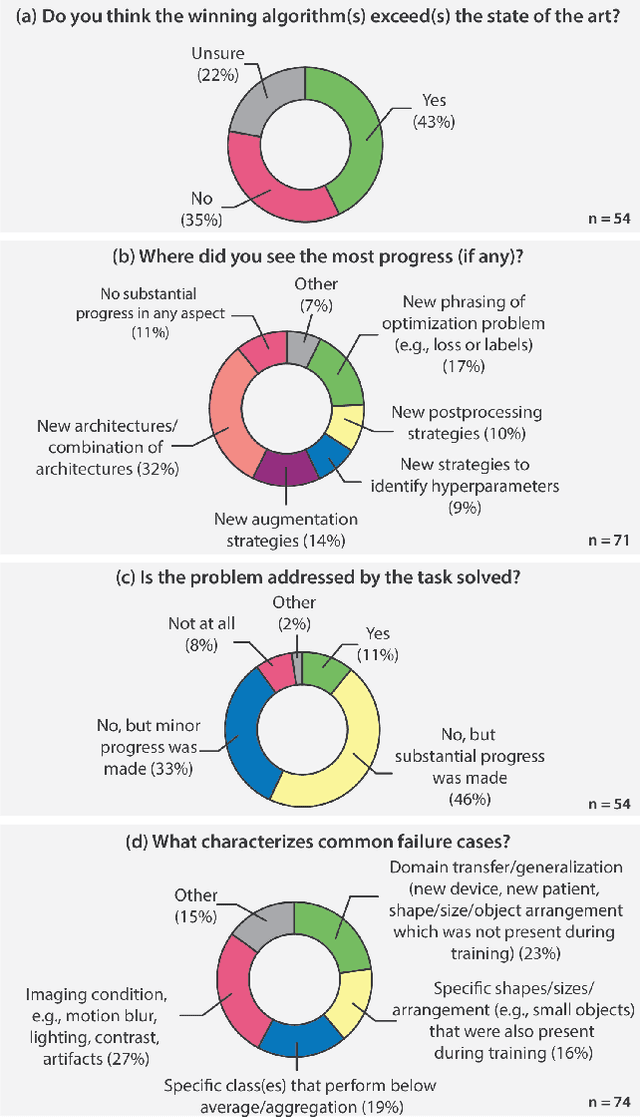

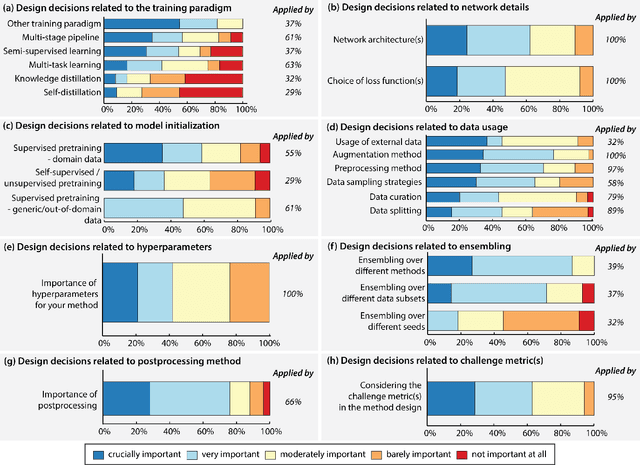

Why is the winner the best?

Mar 30, 2023

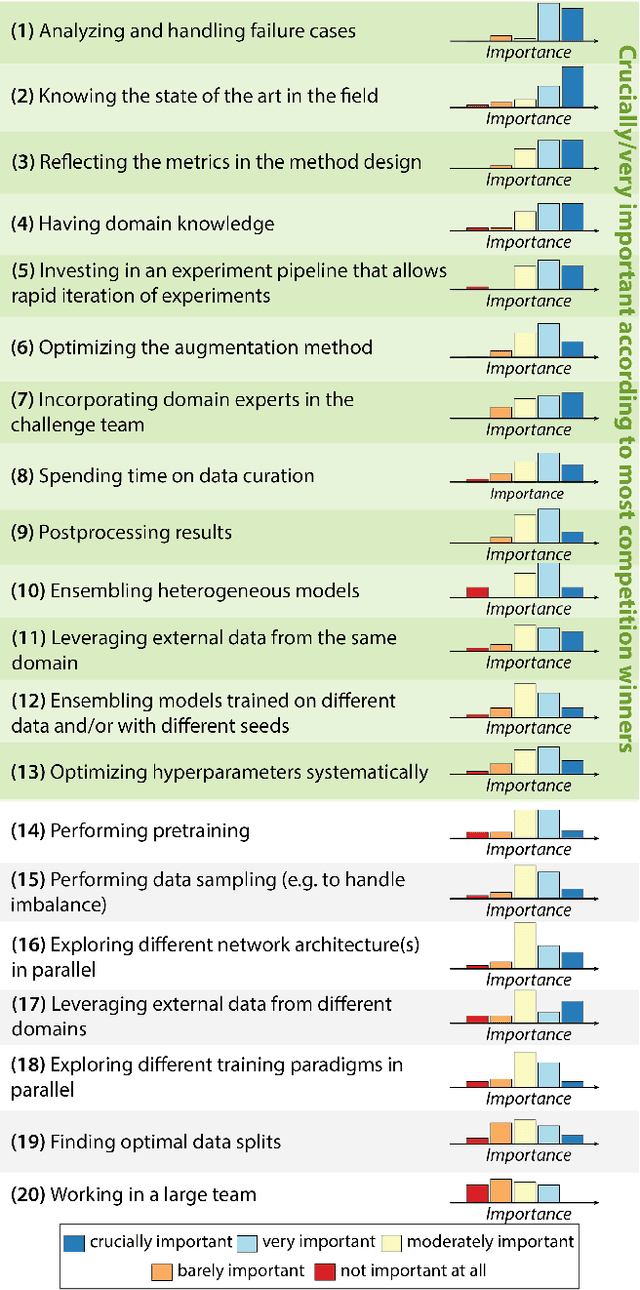

Abstract: International benchmarking competitions have become fundamental for the comparative performance assessment of image analysis methods. However, little attention has been given to investigating what can be learned from these competitions. Do they really generate scientific progress? What are common and successful participation strategies? What makes a solution superior to a competing method? To address this gap in the literature, we performed a multi-center study of all 80 competitions conducted within the scope of IEEE ISBI 2021 and MICCAI 2021. Statistical analyses based on comprehensive descriptions of the submitted algorithms, linked to their ranks and to the underlying participation strategies, revealed common characteristics of winning solutions. These typically include the use of multi-task learning (63%) and/or multi-stage pipelines (61%), and a focus on augmentation (100%), image preprocessing (97%), data curation (79%), and postprocessing (66%). The "typical" lead of a winning team is a computer scientist with a doctoral degree, five years of experience in biomedical image analysis, and four years of experience in deep learning. Two core general development strategies stood out for highly ranked teams: reflecting the metrics in the method design and focusing on analyzing and handling failure cases. According to the organizers, 43% of the winning algorithms exceeded the state of the art, but only 11% completely solved the respective domain problem. The insights of our study could help researchers (1) improve algorithm development strategies when approaching new problems and (2) focus on open research questions revealed by this work.

Autonomous Robotic Drilling System for Mice Cranial Window Creation: An Evaluation with an Egg Model

Mar 22, 2023

Abstract: Robotic assistance for experimental manipulation in the life sciences is expected to enable precise manipulation of valuable samples, regardless of the skill of the scientist. Experimental specimens in the life sciences are subject to individual variability and deformation and therefore require autonomous robotic control. As an example, we are studying the installation of a cranial window in a mouse. This operation requires cutting a circular piece 8 mm in diameter out of the skull, which is approximately 300 µm thick, and removing it. However, the shape of the mouse skull varies with mouse strain, sex, and age in weeks. The thickness of the skull is not uniform, with some areas thinner than others. It is also difficult to ensure that the mouse skull is kept in the same position for each operation. It is not realistically possible to measure all these features and pre-program a robotic trajectory for each mouse. This paper therefore proposes an autonomous robotic drilling method consisting of drilling trajectory planning and image-based recognition of the task completion level. The trajectory planning adjusts the z-position of the drill according to the task completion level at each discrete point and forms the 3D drilling path via constrained cubic spline interpolation, which avoids overshoot. The task completion level recognition uses a DSSD-inspired deep learning model to estimate the task completion level at each discrete point. Because an egg has characteristics similar to those of a mouse skull in shape, thickness, and mechanical properties, removing the eggshell without damaging the underlying membrane was chosen as the simulation task. The proposed method was evaluated using a 6-DOF robotic arm holding a drill and achieved a success rate of 80% over 20 trials.
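
The abstract names constrained cubic spline interpolation as the way the drilling path avoids overshoot between discrete points. The sketch below assumes the commonly cited Kruger-style rule: interior slopes are the harmonic mean of the neighboring secant slopes, or zero where the secants change sign, and end slopes are 3/2 of the end secant minus half the neighboring slope; each segment is then a cubic Hermite polynomial. Treating x as the angle of each discrete point and y as the drill z-depth is an illustrative assumption, the names are hypothetical, and a closed circular path would additionally need wrap-around handling, which is not shown.

```python
import bisect

# Hypothetical sketch of a Kruger-style constrained cubic spline; the paper may differ.


def constrained_cubic_spline(xs, ys):
    """Build a piecewise-cubic interpolant whose slopes are constrained to prevent overshoot."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two points and matching xs/ys lengths")
    secants = [(ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i]) for i in range(n - 1)]
    slopes = [0.0] * n
    if n == 2:
        slopes = [secants[0], secants[0]]
    else:
        for i in range(1, n - 1):
            s_left, s_right = secants[i - 1], secants[i]
            if s_left * s_right > 0:  # same sign: harmonic mean of the two secants
                slopes[i] = 2.0 / (1.0 / s_left + 1.0 / s_right)
            # otherwise a local extremum or flat run: slope stays 0
        # End slopes: 3/2 of the end secant minus half of the neighbouring interior slope.
        slopes[0] = 1.5 * secants[0] - 0.5 * slopes[1]
        slopes[-1] = 1.5 * secants[-1] - 0.5 * slopes[-2]

    def evaluate(x):
        """Evaluate the cubic Hermite segment containing x (clamped to the end segments)."""
        i = min(max(bisect.bisect_right(xs, x) - 1, 0), n - 2)
        h = xs[i + 1] - xs[i]
        t = (x - xs[i]) / h
        h00 = 2 * t**3 - 3 * t**2 + 1
        h10 = t**3 - 2 * t**2 + t
        h01 = -2 * t**3 + 3 * t**2
        h11 = t**3 - t**2
        return h00 * ys[i] + h10 * h * slopes[i] + h01 * ys[i + 1] + h11 * h * slopes[i + 1]

    return evaluate


# Usage: hypothetical drill depths (mm) at discrete points indexed by angle (degrees).
angles = [0.0, 45.0, 90.0, 135.0, 180.0]
depths = [0.00, 0.00, 0.10, 0.10, 0.10]
depth_at = constrained_cubic_spline(angles, depths)
samples = [depth_at(a) for a in range(0, 181)]
```

Because the slopes are zero wherever the data flattens or turns, the interpolated depth never dips below 0 mm or rises above 0.10 mm here, whereas an unconstrained cubic spline through the same step would overshoot, which for a drill means cutting deeper than planned.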

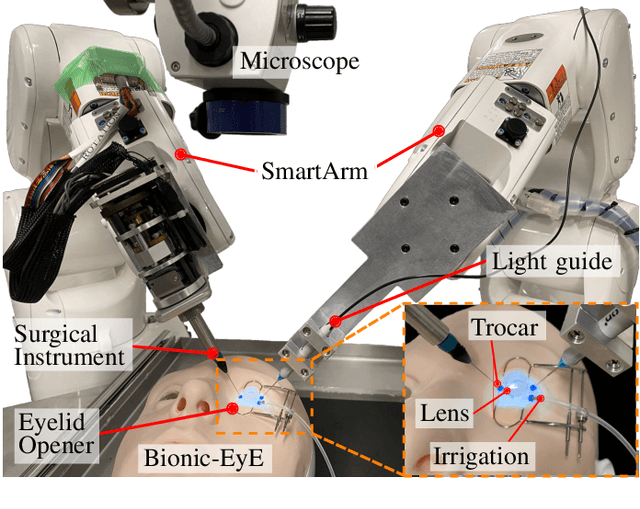

Vitreoretinal Surgical Robotic System with Autonomous Orbital Manipulation using Vector-Field Inequalities

Feb 11, 2023

Abstract: Vitreoretinal surgery pertains to the treatment of delicate tissues on the fundus of the eye using thin instruments. Surgeons frequently rotate the eye during surgery, which is called orbital manipulation, to observe regions around the fundus without moving the patient. In this paper, we propose autonomous orbital manipulation of the eye in robot-assisted vitreoretinal surgery with our teleoperated surgical system. In a simulation study, we preliminarily investigated the increase in the manipulability of our system using orbital manipulation. Furthermore, we demonstrated the feasibility of our method in experiments with a physical robot and a realistic eye model, showing an increase in the viewable area of the fundus compared with a conventional technique. Source code and a minimal example are available at https://github.com/mmmarinho/icra2023_orbitalmanipulation.
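
Vector-field inequalities (VFIs) limit how fast a robot may approach a boundary. In the standard form, with distance d to a restricted zone, safe distance d_safe, and gain eta > 0, the constraint is d_tilde_dot >= -eta * d_tilde with d_tilde = d - d_safe, so the distance can shrink at most exponentially and the boundary is never crossed. VFI-based controllers typically embed this constraint in a quadratic program over the full robot kinematics; the toy below, an assumption-laden reduction rather than the paper's controller, uses one degree of freedom, where the tracking problem min (u - u_des)^2 subject to u >= -eta * d_tilde has the closed-form solution u = max(u_des, -eta * d_tilde). All names and numbers are hypothetical.

```python
# Hypothetical 1-DOF illustration of a vector-field inequality; not the paper's controller.


def vfi_velocity(u_desired, distance, safe_distance, eta):
    """Enforce d_dot >= -eta * (distance - safe_distance) for a 1-DOF system with d_dot = u.

    The tracking QP min (u - u_desired)^2 s.t. u >= -eta * d_tilde
    has the closed-form solution u = max(u_desired, -eta * d_tilde).
    """
    d_tilde = distance - safe_distance
    return max(u_desired, -eta * d_tilde)


def simulate(distance, safe_distance=1.0, eta=2.0, u_desired=-5.0, dt=0.01, steps=500):
    """Explicit-Euler simulation of a tool commanded to move toward a boundary."""
    history = [distance]
    for _ in range(steps):
        u = vfi_velocity(u_desired, distance, safe_distance, eta)
        distance += u * dt  # with eta * dt <= 1 the discrete update cannot cross safe_distance
        history.append(distance)
    return history


# Usage: start 3 mm from the boundary while the operator commands -5 mm/s toward it.
trajectory = simulate(3.0)
```

The commanded velocity is passed through unchanged whenever it points away from the boundary or approaches slowly enough; only the approach speed near the boundary is scaled down, which is what makes VFIs attractive for shared control.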

PEg TRAnsfer Workflow recognition challenge report: Does multi-modal data improve recognition?

Feb 11, 2022

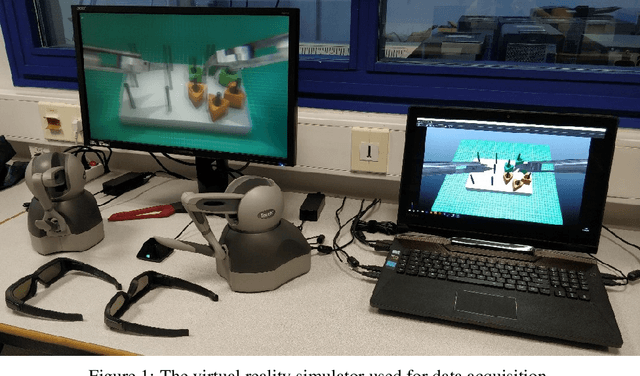

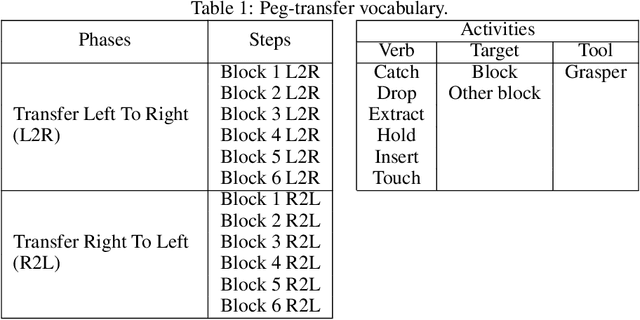

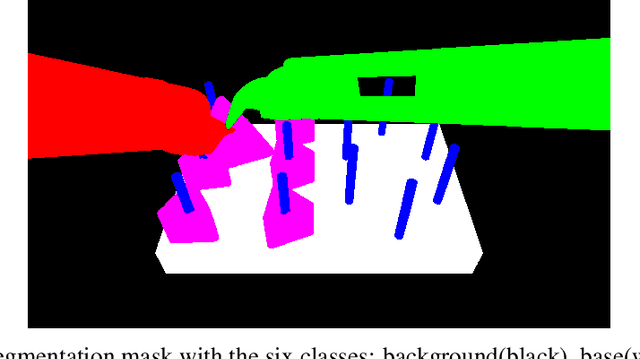

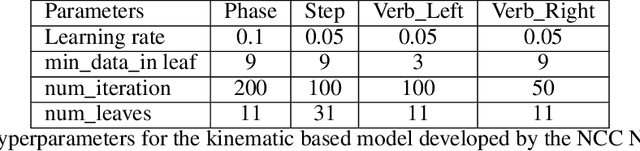

Abstract: This paper presents the design and results of the "PEg TRAnsfer Workflow recognition" (PETRAW) challenge, whose objective was to develop surgical workflow recognition methods based on one or several modalities (video, kinematic, and segmentation data) in order to study their added value. The PETRAW challenge provided a data set of 150 peg transfer sequences performed on a virtual simulator. This data set was composed of videos, kinematics, semantic segmentation, and workflow annotations, which described the sequences at three granularity levels: phase, step, and activity. Five tasks were proposed to the participants: three of them addressed the recognition of all granularities with one of the available modalities, while the other two addressed recognition with a combination of modalities. Average application-dependent balanced accuracy (AD-Accuracy) was used as the evaluation metric because it takes unbalanced classes into account and is more clinically relevant than a frame-by-frame score. Seven teams participated in at least one task, and four of them participated in all tasks. The best results were obtained using video and kinematic data, with an AD-Accuracy between 90% and 93% for the four teams that participated in all tasks. The improvement of the video/kinematic-based methods over the uni-modality ones was significant for all of the teams. However, the difference in testing execution time between the video/kinematic-based and the kinematic-based methods has to be taken into consideration: is it relevant to spend 20 to 200 times more computing time for less than 3% of improvement? The PETRAW data set is publicly available at www.synapse.org/PETRAW to encourage further research in surgical workflow recognition.
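
AD-Accuracy builds on balanced accuracy, the mean of per-class recall, which keeps rare workflow classes from being drowned out by frequent ones. The application-dependent adjustments defined by PETRAW are not reproduced here; the sketch below only compares plain balanced accuracy with frame-by-frame accuracy, using hypothetical labels and function names.

```python
from collections import Counter

# Plain balanced accuracy only; PETRAW's application-dependent variant is not reproduced.


def frame_accuracy(y_true, y_pred):
    """Fraction of frames whose predicted label matches the annotation."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label sequences must be non-empty and of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean per-class recall over the classes that occur in the ground truth."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label sequences must be non-empty and of equal length")
    support = Counter(y_true)
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return sum(correct[c] / support[c] for c in support) / len(support)


# Usage: a hypothetical phase sequence dominated by one class.
truth = ["transfer"] * 8 + ["idle"] * 2
always_transfer = ["transfer"] * 10
frame_score = frame_accuracy(truth, always_transfer)
balanced_score = balanced_accuracy(truth, always_transfer)
```

A predictor that always outputs the majority phase scores 0.8 frame by frame but only 0.5 balanced accuracy, which is the class-imbalance effect the metric is meant to expose.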

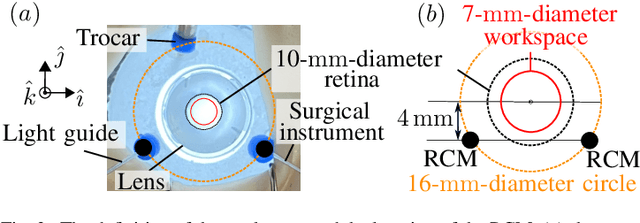

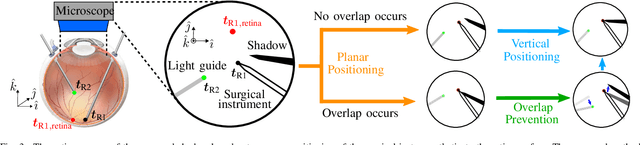

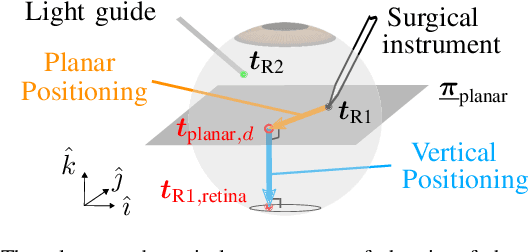

Autonomous Coordinated Control of the Light Guide for Positioning in Vitreoretinal Surgery

Jul 26, 2021

Abstract: Vitreoretinal surgery is challenging even for expert surgeons owing to the delicate target tissues and the diminutive 7-mm-diameter workspace in the retina. In addition to improved dexterity and accuracy, robot assistance allows for (partial) task automation. In this work, we propose a strategy to automate the motion of the light guide with respect to the surgical instrument. This automation keeps the instrument's shadow inside the microscopic view at all times, which is an important cue for accurately positioning the instrument in the retina. We show simulations and experiments demonstrating that the proposed strategy is effective on a 700-point grid in the retina of a surgical phantom.
