Zihao Wu

Measure gradients, not activations! Enhancing neuronal activity in deep reinforcement learning

May 29, 2025Abstract:Deep reinforcement learning (RL) agents frequently suffer from neuronal activity loss, which impairs their ability to adapt to new data and learn continually. A common method to quantify and address this issue is the tau-dormant neuron ratio, which uses activation statistics to measure the expressive ability of neurons. While effective for simple MLP-based agents, this approach loses statistical power in more complex architectures. To address this, we argue that in advanced RL agents, maintaining a neuron's learning capacity, its ability to adapt via gradient updates, is more critical than preserving its expressive ability. Based on this insight, we shift the statistical objective from activations to gradients, and introduce GraMa (Gradient Magnitude Neural Activity Metric), a lightweight, architecture-agnostic metric for quantifying neuron-level learning capacity. We show that GraMa effectively reveals persistent neuron inactivity across diverse architectures, including residual networks, diffusion models, and agents with varied activation functions. Moreover, resetting neurons guided by GraMa (ReGraMa) consistently improves learning performance across multiple deep RL algorithms and benchmarks, such as MuJoCo and the DeepMind Control Suite.

Teleportation With Null Space Gradient Projection for Optimization Acceleration

Feb 17, 2025Abstract:Optimization techniques have become increasingly critical due to the ever-growing model complexity and data scale. In particular, teleportation has emerged as a promising approach, which accelerates convergence of gradient descent-based methods by navigating within the loss invariant level set to identify parameters with advantageous geometric properties. Existing teleportation algorithms have primarily demonstrated their effectiveness in optimizing Multi-Layer Perceptrons (MLPs), but their extension to more advanced architectures, such as Convolutional Neural Networks (CNNs) and Transformers, remains challenging. Moreover, they often impose significant computational demands, limiting their applicability to complex architectures. To this end, we introduce an algorithm that projects the gradient of the teleportation objective function onto the input null space, effectively preserving the teleportation within the loss invariant level set and reducing computational cost. Our approach is readily generalizable from MLPs to CNNs, transformers, and potentially other advanced architectures. We validate the effectiveness of our algorithm across various benchmark datasets and optimizers, demonstrating its broad applicability.

S2TX: Cross-Attention Multi-Scale State-Space Transformer for Time Series Forecasting

Feb 17, 2025Abstract:Time series forecasting has recently achieved significant progress with multi-scale models to address the heterogeneity between long and short range patterns. Despite their state-of-the-art performance, we identify two potential areas for improvement. First, the variates of the multivariate time series are processed independently. Moreover, the multi-scale (long and short range) representations are learned separately by two independent models without communication. In light of these concerns, we propose State Space Transformer with cross-attention (S2TX). S2TX employs a cross-attention mechanism to integrate a Mamba model for extracting long-range cross-variate context and a Transformer model with local window attention to capture short-range representations. By cross-attending to the global context, the Transformer model further facilitates variate-level interactions as well as local/global communications. Comprehensive experiments on seven classic long-short range time-series forecasting benchmark datasets demonstrate that S2TX can achieve highly robust SOTA results while maintaining a low memory footprint.

Large Language Models for Bioinformatics

Jan 10, 2025

Abstract:With the rapid advancements in large language model (LLM) technology and the emergence of bioinformatics-specific language models (BioLMs), there is a growing need for a comprehensive analysis of the current landscape, computational characteristics, and diverse applications. This survey aims to address this need by providing a thorough review of BioLMs, focusing on their evolution, classification, and distinguishing features, alongside a detailed examination of training methodologies, datasets, and evaluation frameworks. We explore the wide-ranging applications of BioLMs in critical areas such as disease diagnosis, drug discovery, and vaccine development, highlighting their impact and transformative potential in bioinformatics. We identify key challenges and limitations inherent in BioLMs, including data privacy and security concerns, interpretability issues, biases in training data and model outputs, and domain adaptation complexities. Finally, we highlight emerging trends and future directions, offering valuable insights to guide researchers and clinicians toward advancing BioLMs for increasingly sophisticated biological and clinical applications.

Score-Based Metropolis-Hastings Algorithms

Dec 31, 2024

Abstract:In this paper, we introduce a new approach for integrating score-based models with the Metropolis-Hastings algorithm. While traditional score-based diffusion models excel in accurately learning the score function from data points, they lack an energy function, making the Metropolis-Hastings adjustment step inaccessible. Consequently, the unadjusted Langevin algorithm is often used for sampling using estimated score functions. The lack of an energy function then prevents the application of the Metropolis-adjusted Langevin algorithm and other Metropolis-Hastings methods, limiting the wealth of other algorithms developed that use acceptance functions. We address this limitation by introducing a new loss function based on the \emph{detailed balance condition}, allowing the estimation of the Metropolis-Hastings acceptance probabilities given a learned score function. We demonstrate the effectiveness of the proposed method for various scenarios, including sampling from heavy-tail distributions.

Offline Stochastic Optimization of Black-Box Objective Functions

Dec 03, 2024

Abstract:Many challenges in science and engineering, such as drug discovery and communication network design, involve optimizing complex and expensive black-box functions across vast search spaces. Thus, it is essential to leverage existing data to avoid costly active queries of these black-box functions. To this end, while Offline Black-Box Optimization (BBO) is effective for deterministic problems, it may fall short in capturing the stochasticity of real-world scenarios. To address this, we introduce Stochastic Offline BBO (SOBBO), which tackles both black-box objectives and uncontrolled uncertainties. We propose two solutions: for large-data regimes, a differentiable surrogate allows for gradient-based optimization, while for scarce-data regimes, we directly estimate gradients under conservative field constraints, improving robustness, convergence, and data efficiency. Numerical experiments demonstrate the effectiveness of our approach on both synthetic and real-world tasks.

Transcending Language Boundaries: Harnessing LLMs for Low-Resource Language Translation

Nov 18, 2024

Abstract:Large Language Models (LLMs) have demonstrated remarkable success across a wide range of tasks and domains. However, their performance in low-resource language translation, particularly when translating into these languages, remains underexplored. This gap poses significant challenges, as linguistic barriers hinder the cultural preservation and development of minority communities. To address this issue, this paper introduces a novel retrieval-based method that enhances translation quality for low-resource languages by focusing on key terms, which involves translating keywords and retrieving corresponding examples from existing data. To evaluate the effectiveness of this method, we conducted experiments translating from English into three low-resource languages: Cherokee, a critically endangered indigenous language of North America; Tibetan, a historically and culturally significant language in Asia; and Manchu, a language with few remaining speakers. Our comparison with the zero-shot performance of GPT-4o and LLaMA 3.1 405B, highlights the significant challenges these models face when translating into low-resource languages. In contrast, our retrieval-based method shows promise in improving both word-level accuracy and overall semantic understanding by leveraging existing resources more effectively.

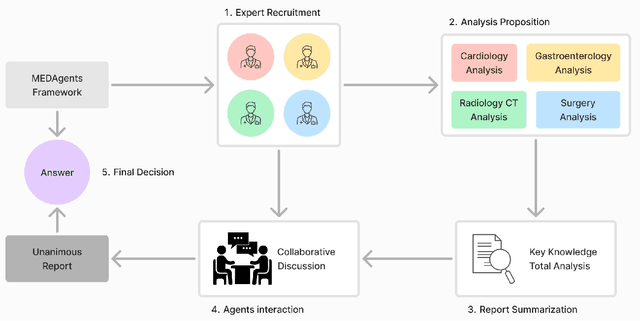

Towards Next-Generation Medical Agent: How o1 is Reshaping Decision-Making in Medical Scenarios

Nov 16, 2024

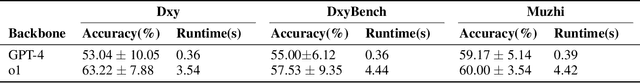

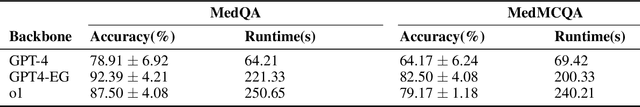

Abstract:Artificial Intelligence (AI) has become essential in modern healthcare, with large language models (LLMs) offering promising advances in clinical decision-making. Traditional model-based approaches, including those leveraging in-context demonstrations and those with specialized medical fine-tuning, have demonstrated strong performance in medical language processing but struggle with real-time adaptability, multi-step reasoning, and handling complex medical tasks. Agent-based AI systems address these limitations by incorporating reasoning traces, tool selection based on context, knowledge retrieval, and both short- and long-term memory. These additional features enable the medical AI agent to handle complex medical scenarios where decision-making should be built on real-time interaction with the environment. Therefore, unlike conventional model-based approaches that treat medical queries as isolated questions, medical AI agents approach them as complex tasks and behave more like human doctors. In this paper, we study the choice of the backbone LLM for medical AI agents, which is the foundation for the agent's overall reasoning and action generation. In particular, we consider the emergent o1 model and examine its impact on agents' reasoning, tool-use adaptability, and real-time information retrieval across diverse clinical scenarios, including high-stakes settings such as intensive care units (ICUs). Our findings demonstrate o1's ability to enhance diagnostic accuracy and consistency, paving the way for smarter, more responsive AI tools that support better patient outcomes and decision-making efficacy in clinical practice.

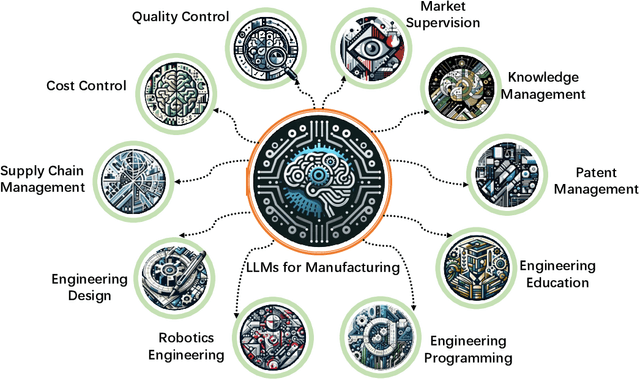

Large Language Models for Manufacturing

Oct 28, 2024

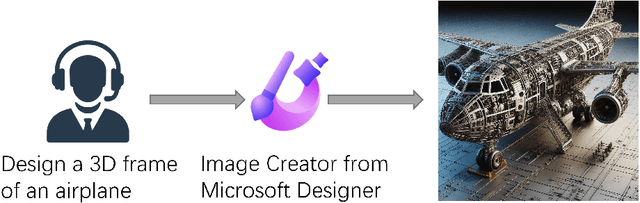

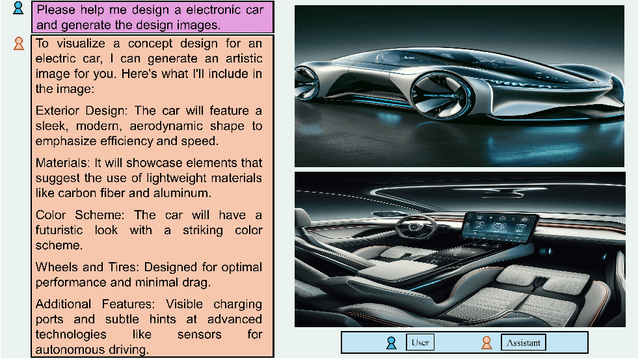

Abstract:The rapid advances in Large Language Models (LLMs) have the potential to transform manufacturing industry, offering new opportunities to optimize processes, improve efficiency, and drive innovation. This paper provides a comprehensive exploration of the integration of LLMs into the manufacturing domain, focusing on their potential to automate and enhance various aspects of manufacturing, from product design and development to quality control, supply chain optimization, and talent management. Through extensive evaluations across multiple manufacturing tasks, we demonstrate the remarkable capabilities of state-of-the-art LLMs, such as GPT-4V, in understanding and executing complex instructions, extracting valuable insights from vast amounts of data, and facilitating knowledge sharing. We also delve into the transformative potential of LLMs in reshaping manufacturing education, automating coding processes, enhancing robot control systems, and enabling the creation of immersive, data-rich virtual environments through the industrial metaverse. By highlighting the practical applications and emerging use cases of LLMs in manufacturing, this paper aims to provide a valuable resource for professionals, researchers, and decision-makers seeking to harness the power of these technologies to address real-world challenges, drive operational excellence, and unlock sustainable growth in an increasingly competitive landscape.

EG-SpikeFormer: Eye-Gaze Guided Transformer on Spiking Neural Networks for Medical Image Analysis

Oct 12, 2024

Abstract:Neuromorphic computing has emerged as a promising energy-efficient alternative to traditional artificial intelligence, predominantly utilizing spiking neural networks (SNNs) implemented on neuromorphic hardware. Significant advancements have been made in SNN-based convolutional neural networks (CNNs) and Transformer architectures. However, their applications in the medical imaging domain remain underexplored. In this study, we introduce EG-SpikeFormer, an SNN architecture designed for clinical tasks that integrates eye-gaze data to guide the model's focus on diagnostically relevant regions in medical images. This approach effectively addresses shortcut learning issues commonly observed in conventional models, especially in scenarios with limited clinical data and high demands for model reliability, generalizability, and transparency. Our EG-SpikeFormer not only demonstrates superior energy efficiency and performance in medical image classification tasks but also enhances clinical relevance. By incorporating eye-gaze data, the model improves interpretability and generalization, opening new directions for the application of neuromorphic computing in healthcare.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge