Yunfei Wang

LLM-based Embeddings: Attention Values Encode Sentence Semantics Better Than Hidden States

Feb 02, 2026

Abstract:Sentence representations are foundational to many Natural Language Processing (NLP) applications. While recent methods leverage Large Language Models (LLMs) to derive sentence representations, most rely on final-layer hidden states, which are optimized for next-token prediction and thus often fail to capture global, sentence-level semantics. This paper introduces a novel perspective, demonstrating that attention value vectors capture sentence semantics more effectively than hidden states. We propose Value Aggregation (VA), a simple method that pools token values across multiple layers and token indices. In a training-free setting, VA outperforms other LLM-based embeddings and even matches or surpasses the ensemble-based MetaEOL. Furthermore, we demonstrate that when paired with suitable prompts, the layer attention outputs can be interpreted as aligned weighted value vectors. Specifically, the attention scores of the last token function as the weights, while the output projection matrix ($W_O$) aligns these weighted value vectors with the common space of the LLM residual stream. This refined method, termed Aligned Weighted VA (AlignedWVA), achieves state-of-the-art performance among training-free LLM-based embeddings, outperforming the high-cost MetaEOL by a substantial margin. Finally, we highlight the potential of obtaining strong LLM embedding models through fine-tuning Value Aggregation.
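The pooling idea in this abstract can be illustrated with a small NumPy sketch. It is not the paper's implementation: the abstract does not say which layers or tokens are pooled or how attention heads are handled, so the sketch assumes a single head, mean pooling, and random arrays standing in for real model activations. The function names `value_aggregation` and `aligned_weighted_va` are hypothetical.

```python
import numpy as np

def value_aggregation(values):
    """Mean-pool value vectors over layers and tokens (assumed pooling rule).

    values: array of shape (num_layers, num_tokens, d_v), standing in for
    the per-layer attention value vectors of one sentence.
    """
    return values.mean(axis=(0, 1))

def aligned_weighted_va(values, last_token_attn, w_o):
    """Weight values by last-token attention, then project with W_O.

    values:          (num_layers, num_tokens, d_v)
    last_token_attn: (num_layers, num_tokens), each row sums to 1
    w_o:             (num_layers, d_v, d_model)
    """
    # Attention-weighted sum over tokens, per layer -> (num_layers, d_v)
    weighted = np.einsum("lt,ltd->ld", last_token_attn, values)
    # Project each layer's vector into the residual-stream space -> (num_layers, d_model)
    aligned = np.einsum("ld,ldm->lm", weighted, w_o)
    # Average across layers (an assumption) -> (d_model,)
    return aligned.mean(axis=0)

# Random stand-ins for real activations (illustrative only).
rng = np.random.default_rng(0)
num_layers, num_tokens, d_v, d_model = 4, 6, 8, 16
values = rng.normal(size=(num_layers, num_tokens, d_v))
attn = rng.random(size=(num_layers, num_tokens))
attn /= attn.sum(axis=1, keepdims=True)
w_o = rng.normal(size=(num_layers, d_v, d_model))

va_emb = value_aggregation(values)
awva_emb = aligned_weighted_va(values, attn, w_o)
```

Under these assumptions, uniform attention weights and an identity projection reduce the weighted variant to plain mean pooling, which is one way to see the two methods as members of the same family.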

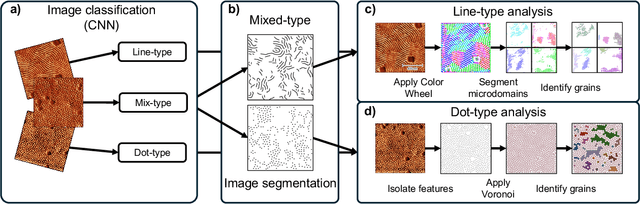

Machine Learning Framework for Characterizing Processing-Structure Relationship in Block Copolymer Thin Films

May 29, 2025

Abstract:The morphology of block copolymers (BCPs) critically influences material properties and applications. This work introduces a machine learning (ML)-enabled, high-throughput framework for analyzing grazing incidence small-angle X-ray scattering (GISAXS) data and atomic force microscopy (AFM) images to characterize BCP thin film morphology. A convolutional neural network was trained to classify AFM images by morphology type, achieving 97% testing accuracy. Classified images were then analyzed to extract 2D grain size measurements from the samples in a high-throughput manner. ML models were developed to predict morphological features based on processing parameters such as solvent ratio, additive type, and additive ratio. GISAXS-based properties were predicted with strong performance ($R^2 > 0.75$), while AFM-based property predictions were less accurate ($R^2 < 0.60$), likely due to the localized nature of AFM measurements compared to the bulk information captured by GISAXS. Beyond model performance, interpretability was addressed using Shapley Additive exPlanations (SHAP). SHAP analysis revealed that the additive ratio had the largest impact on morphological predictions, as the additive provides the BCP chains with increased volume to rearrange into thermodynamically favorable morphologies. This interpretability helps validate model predictions and offers insight into parameter importance. Altogether, the presented framework, which combines high-throughput characterization and interpretable ML, offers an approach to exploring and optimizing BCP thin film morphology across a broad processing landscape.

FERMI: Flexible Radio Mapping with a Hybrid Propagation Model and Scalable Autonomous Data Collection

Apr 21, 2025

Abstract:Communication is fundamental for multi-robot collaboration, with accurate radio mapping playing a crucial role in predicting signal strength between robots. However, modeling radio signal propagation in large and occluded environments is challenging due to complex interactions between signals and obstacles. Existing methods face two key limitations: they struggle to predict signal strength for transmitter-receiver pairs not present in the training set, while also requiring extensive manual data collection for modeling, making them impractical for large, obstacle-rich scenarios. To overcome these limitations, we propose FERMI, a flexible radio mapping framework. FERMI combines physics-based modeling of direct signal paths with a neural network to capture environmental interactions with radio signals. This hybrid model learns radio signal propagation more efficiently, requiring only sparse training data. Additionally, FERMI introduces a scalable planning method for autonomous data collection using a multi-robot team. By increasing parallelism in data collection and minimizing robot travel costs between regions, overall data collection efficiency is significantly improved. Experiments in both simulation and real-world scenarios demonstrate that FERMI enables accurate signal prediction and generalizes well to unseen positions in complex environments. It also supports fully autonomous data collection and scales to different team sizes, offering a flexible solution for creating radio maps. Our code is open-sourced at https://github.com/ymLuo1214/Flexible-Radio-Mapping.
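The hybrid structure described here (a physics-based direct-path term plus a learned correction) might look roughly like the sketch below. It is not taken from the FERMI code. The abstract does not name the physics model, so the standard Friis free-space path loss formula is used as an assumed stand-in, and `residual_fn` is a hypothetical placeholder for the neural network.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def free_space_path_loss_db(distance_m, freq_hz):
    """Friis free-space path loss in dB (assumed direct-path model)."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_S)

def hybrid_rss_dbm(tx_power_dbm, tx_pos, rx_pos, freq_hz, residual_fn):
    """Direct-path prediction plus a learned correction, both in dB."""
    distance_m = math.dist(tx_pos, rx_pos)
    direct_dbm = tx_power_dbm - free_space_path_loss_db(distance_m, freq_hz)
    # residual_fn stands in for a trained network that models obstacle effects.
    return direct_dbm + residual_fn(tx_pos, rx_pos)

def zero_residual(tx_pos, rx_pos):
    """Placeholder correction: an untrained model that contributes nothing."""
    return 0.0

# Example: 20 dBm transmitter, 100 m line of sight at 2.4 GHz.
rss = hybrid_rss_dbm(20.0, (0.0, 0.0), (100.0, 0.0), 2.4e9, zero_residual)
```

Because the physics term already accounts for distance-dependent attenuation, the learned part only has to model deviations from it, which is one plausible reading of why sparse training data can suffice.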

Adaptive AI decision interface for autonomous electronic material discovery

Apr 17, 2025

Abstract:AI-powered autonomous experimentation (AI/AE) can accelerate materials discovery, but its effectiveness for electronic materials is hindered by data scarcity from lengthy and complex design-fabricate-test-analyze cycles. Unlike experienced human scientists, even advanced AI algorithms in AI/AE lack the adaptability to make informative real-time decisions with limited datasets. Here, we address this challenge by developing and implementing an AI decision interface on our AI/AE system. The central element of the interface is an AI advisor that performs real-time progress monitoring, data analysis, and interactive human-AI collaboration for actively adapting to experiments in different stages and types. We applied this platform to an emerging type of electronic material, mixed ion-electron conducting polymers (MIECPs), to engineer and study the relationships between multiscale morphology and properties. Using organic electrochemical transistors (OECTs) as the test-bed device for evaluating the mixed-conducting figure of merit, the product of charge-carrier mobility and volumetric capacitance ($\mu C^*$), our adaptive AI/AE platform achieved a 150% increase in $\mu C^*$ compared to the commonly used spin-coating method, reaching 1,275 F cm$^{-1}$ V$^{-1}$ s$^{-1}$ in just 64 autonomous experimental trials. A study of 10 statistically selected samples identifies two key structural factors for achieving higher volumetric capacitance: larger crystalline lamellar spacing and higher specific surface area, while also uncovering a new polymer polymorph in this material.

Towards efficient quantum algorithms for diffusion probability models

Feb 20, 2025

Abstract:A diffusion probabilistic model (DPM) is a generative model renowned for its ability to produce high-quality outputs in tasks such as image and audio generation. However, training DPMs on large, high-dimensional datasets such as high-resolution images or audio incurs significant computational, energy, and hardware costs. In this work, we introduce efficient quantum algorithms for implementing DPMs through various quantum ODE solvers. These algorithms highlight the potential of quantum Carleman linearization for diverse mathematical structures, leveraging state-of-the-art quantum linear system solvers (QLSS) or linear combination of Hamiltonian simulations (LCHS). Specifically, we focus on two approaches: DPM-solver-$k$, which employs exact $k$-th order derivatives to compute a polynomial approximation of $\epsilon_\theta(x_\lambda,\lambda)$; and UniPC, which uses finite differences of $\epsilon_\theta(x_\lambda,\lambda)$ at different points $(x_{s_m}, \lambda_{s_m})$ to approximate higher-order derivatives. As such, this work represents one of the most direct and pragmatic applications of quantum algorithms to large-scale machine learning models, presumably taking substantial steps towards demonstrating the practical utility of quantum computing.

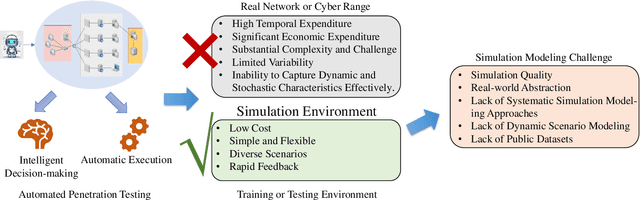

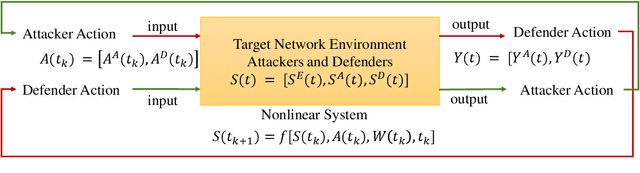

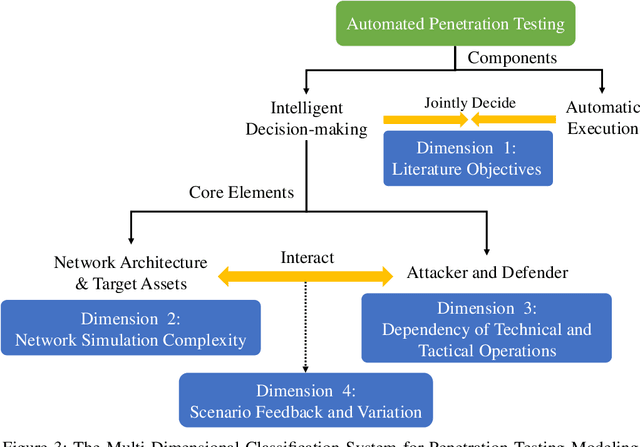

A Unified Modeling Framework for Automated Penetration Testing

Feb 17, 2025

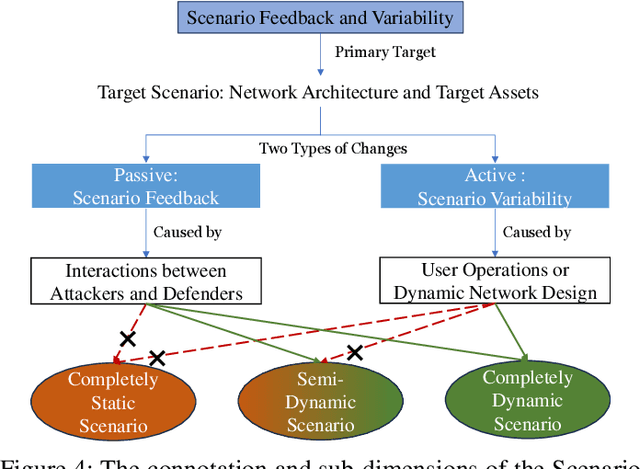

Abstract:The integration of artificial intelligence into automated penetration testing (AutoPT) has highlighted the necessity of simulation modeling for the training of intelligent agents, due to its cost-efficiency and swift feedback capabilities. Despite the proliferation of AutoPT research, there is a recognized gap in the availability of a unified framework for simulation modeling methods. This paper presents a systematic review and synthesis of existing techniques, introducing MDCPM to categorize studies based on literature objectives, network simulation complexity, dependency of technical and tactical operations, and scenario feedback and variation. To address the lack of a unified method for multi-dimensional and multi-level simulation modeling, the lack of dynamic environment modeling, and the scarcity of public datasets, we introduce AutoPT-Sim, a novel modeling framework that is based on policy automation and encompasses the combination of all sub-dimensions. AutoPT-Sim offers a comprehensive approach to modeling network environments, attackers, and defenders, transcending the constraints of static modeling and accommodating networks of diverse scales. We publicly release a generated standard network environment dataset and the code of the Network Generator. By flexibly integrating publicly available datasets, the framework supports various simulation modeling levels focused on policy automation in MDCPM, and the Network Generator helps researchers output customized target network data by adjusting parameters or fine-tuning the generator.

Machine Learning for Analyzing Atomic Force Microscopy (AFM) Images Generated from Polymer Blends

Sep 15, 2024

Abstract:In this paper we present a new machine learning workflow with unsupervised learning techniques to identify domains within atomic force microscopy (AFM) images obtained from polymer films. The goal of the workflow is to identify the spatial location of the two types of polymer domains with little to no manual intervention and to calculate the domain size distributions, which in turn can help qualify the phase-separated state of the material as macrophase or microphase, ordered or disordered domains. We briefly review existing approaches used in other fields, such as computer vision and signal processing, that can be applicable to the above tasks, which arise frequently in polymer science and engineering. We then test these approaches on the AFM image dataset to identify the strengths and limitations of each for our first task, domain segmentation. For this task, we found that the workflow using the discrete Fourier transform (DFT) or discrete cosine transform (DCT) with variance statistics as the feature works best. The popular ResNet50 deep learning approach from the computer vision field exhibited relatively poorer performance in the domain segmentation task for our AFM images compared to the DFT- and DCT-based workflows. For the second task, for each of 144 input AFM images, we used the existing PoreSpy Python package to calculate the domain size distribution from the output of the DFT-based workflow for that image. The information and open-source code we share in this paper can serve as a guide for researchers in the polymer and soft materials fields who need ML modeling and workflows for automated analyses of AFM images from polymer samples that may have crystalline or amorphous domains, sharp or rough interfaces between domains, or micro- or macrophase-separated domains.

Crimson: Empowering Strategic Reasoning in Cybersecurity through Large Language Models

Mar 01, 2024

Abstract:We introduce Crimson, a system that enhances the strategic reasoning capabilities of Large Language Models (LLMs) within the realm of cybersecurity. By correlating CVEs with MITRE ATT&CK techniques, Crimson advances threat anticipation and strategic defense efforts. Our approach includes defining and evaluating cybersecurity strategic tasks, alongside implementing a comprehensive human-in-the-loop data-synthetic workflow to develop the CVE-to-ATT&CK Mapping (CVEM) dataset. We further enhance LLMs' reasoning abilities through a novel Retrieval-Aware Training (RAT) process and its refined iteration, RAT-R. Our findings demonstrate that an LLM fine-tuned with our techniques, possessing 7 billion parameters, approaches the performance level of GPT-4, showing markedly lower rates of hallucination and errors, and surpassing other models in strategic reasoning tasks. Moreover, domain-specific fine-tuning of embedding models significantly improves performance within cybersecurity contexts, underscoring the efficacy of our methodology. By leveraging Crimson to convert raw vulnerability data into structured and actionable insights, we bolster proactive cybersecurity defenses.

Quantum Machine Learning: from NISQ to Fault Tolerance

Jan 21, 2024

Abstract:Quantum machine learning, which involves running machine learning algorithms on quantum devices, has garnered significant attention in both academic and business circles. In this paper, we offer a comprehensive and unbiased review of the various concepts that have emerged in the field of quantum machine learning. This includes techniques used in Noisy Intermediate-Scale Quantum (NISQ) technologies and approaches for algorithms compatible with fault-tolerant quantum computing hardware. Our review covers fundamental concepts, algorithms, and the statistical learning theory pertinent to quantum machine learning.

Long Short-Term Planning for Conversational Recommendation Systems

Oct 23, 2023

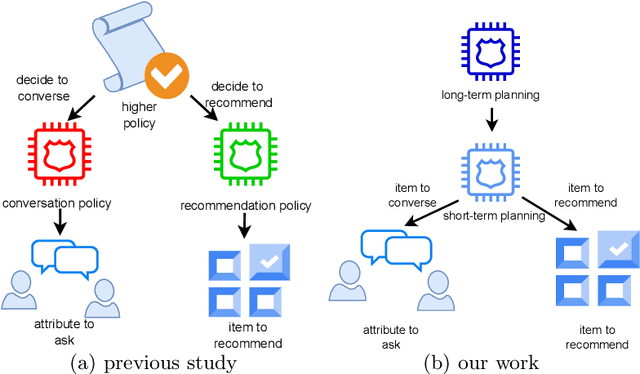

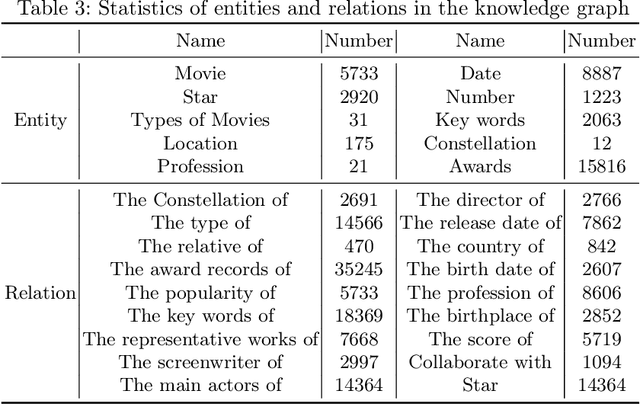

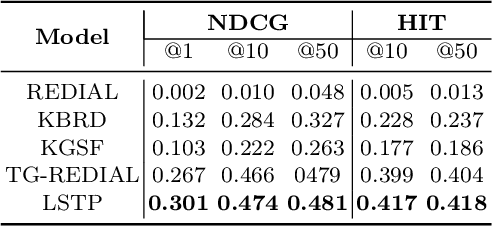

Abstract:In Conversational Recommendation Systems (CRS), the central question is how the conversational agent can naturally ask for user preferences and provide suitable recommendations. Existing works mainly follow a hierarchical architecture, where a higher-level policy decides whether to invoke the conversation module (to ask questions) or the recommendation module (to make recommendations). This architecture prevents these two components from fully interacting with each other. In contrast, this paper proposes a novel architecture, the long short-term feedback architecture, to connect these two essential components in CRS. Specifically, the recommendation module predicts the long-term recommendation target based on the conversational context and the user history. Driven by the targeted recommendation, the conversational model predicts the next topic or attribute to verify whether the user preference matches the target. The balanced feedback loop continues until the short-term planner output matches the long-term planner output, at which point the system should make the recommendation.
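The stopping rule described in this abstract can be sketched as a simple loop. This is a toy illustration, not the paper's model: both planners are hypothetical callables, user replies are omitted, and the conversational context is reduced to a list of the topics already raised.

```python
def run_feedback_loop(long_term_planner, short_term_planner, user_history, max_turns=10):
    """Alternate the two planners until their outputs agree.

    Returns (recommendation, context); recommendation is None if the
    planners never agree within max_turns.
    """
    context = []
    for _ in range(max_turns):
        # Long-term planner: predict the recommendation target.
        target = long_term_planner(context, user_history)
        # Short-term planner: choose the next topic or attribute to ask about.
        next_topic = short_term_planner(context, target)
        if next_topic == target:
            # Outputs match, so the system should recommend now.
            return target, context
        context.append(next_topic)
    return None, context

# Toy planners (hypothetical): a fixed target and a scripted path towards it.
def toy_long_term(context, user_history):
    return "jazz album"

def toy_short_term(context, target):
    path = ["music", "jazz", target]
    return path[min(len(context), len(path) - 1)]

recommendation, asked = run_feedback_loop(toy_long_term, toy_short_term, user_history=[])
```

In this toy run the system asks about two intermediate topics before the short-term output coincides with the long-term target and the loop ends.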
