Yihang Wang

Multimodal Peer Review Simulation with Actionable To-Do Recommendations for Community-Aware Manuscript Revisions

Nov 14, 2025

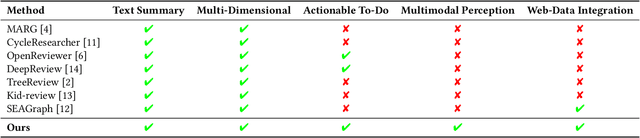

Abstract:While large language models (LLMs) offer promising capabilities for automating academic workflows, existing systems for academic peer review remain constrained by text-only inputs, limited contextual grounding, and a lack of actionable feedback. In this work, we present an interactive web-based system for multimodal, community-aware peer review simulation to enable effective manuscript revisions before paper submission. Our framework integrates textual and visual information through multimodal LLMs, enhances review quality via retrieval-augmented generation (RAG) grounded in web-scale OpenReview data, and converts generated reviews into actionable to-do lists using the proposed Action:Objective[\#] format, providing structured and traceable guidance. The system integrates seamlessly into existing academic writing platforms, providing interactive interfaces for real-time feedback and revision tracking. Experimental results highlight the effectiveness of the proposed system in generating more comprehensive and useful reviews aligned with expert standards, surpassing ablated baselines and advancing transparent, human-centered scholarly assistance.

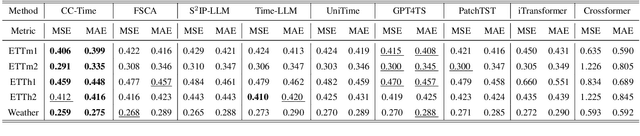

CC-Time: Cross-Model and Cross-Modality Time Series Forecasting

Aug 17, 2025

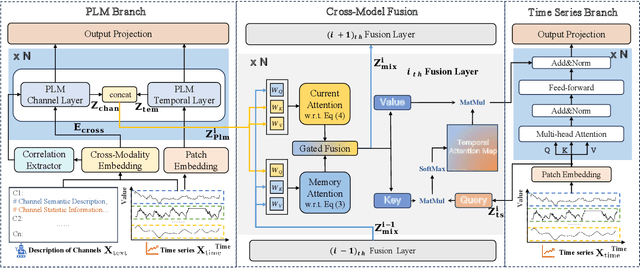

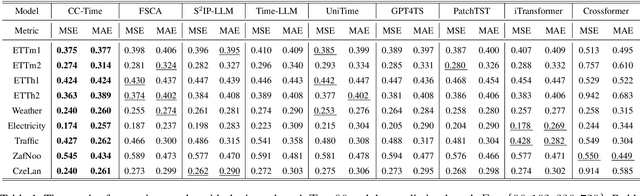

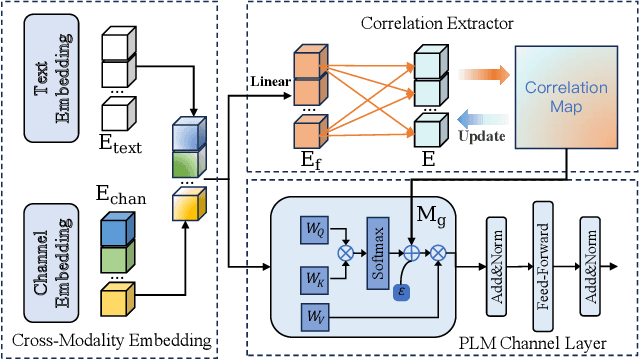

Abstract:With the success of pre-trained language models (PLMs) in various application fields beyond natural language processing, language models have attracted growing attention in the field of time series forecasting (TSF) and have shown great promise. However, current PLM-based TSF methods still fail to achieve prediction accuracy that matches the strong sequential modeling power of language models. To address this issue, we propose Cross-Model and Cross-Modality Learning with PLMs for time series forecasting (CC-Time). We explore the potential of PLMs for time series forecasting from two aspects: 1) what time series features could be modeled by PLMs, and 2) whether relying solely on PLMs is sufficient for building time series models. In the first aspect, CC-Time incorporates cross-modality learning to model temporal dependency and channel correlations in the language model from both time series sequences and their corresponding text descriptions. In the second aspect, CC-Time further proposes the cross-model fusion block to adaptively integrate knowledge from the PLMs and the time series model to form a more comprehensive modeling of time series patterns. Extensive experiments on nine real-world datasets demonstrate that CC-Time achieves state-of-the-art prediction accuracy in both full-data training and few-shot learning situations.

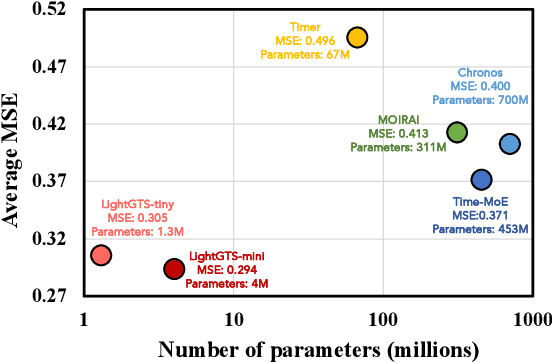

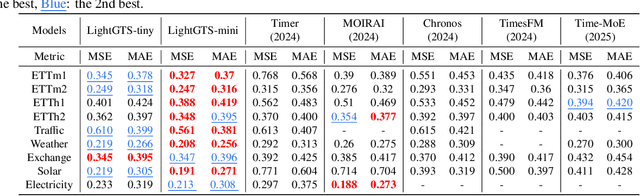

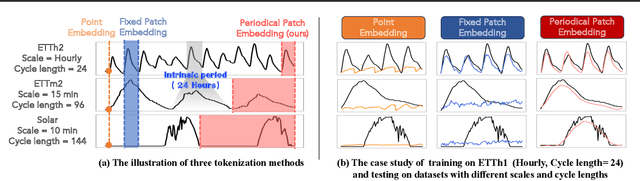

LightGTS: A Lightweight General Time Series Forecasting Model

Jun 06, 2025

Abstract:Existing works on general time series forecasting build foundation models with heavy model parameters through large-scale multi-source pre-training. These models achieve superior generalization ability across various datasets at the cost of significant computational burdens and limitations in resource-constrained scenarios. This paper introduces LightGTS, a lightweight general time series forecasting model designed from the perspective of consistent periodical modeling. To handle diverse scales and intrinsic periods in multi-source pre-training, we introduce Periodical Tokenization, which extracts consistent periodic patterns across different datasets with varying scales. To better utilize the periodicity in the decoding process, we further introduce Periodical Parallel Decoding, which leverages historical tokens to improve forecasting. Based on the two techniques above which fully leverage the inductive bias of periods inherent in time series, LightGTS uses a lightweight model to achieve outstanding performance on general time series forecasting. It achieves state-of-the-art forecasting performance on 9 real-world benchmarks in both zero-shot and full-shot settings with much better efficiency compared with existing time series foundation models.

Mitigating mode collapse in normalizing flows by annealing with an adaptive schedule: Application to parameter estimation

May 06, 2025

Abstract:Normalizing flows (NFs) provide uncorrelated samples from complex distributions, making them an appealing tool for parameter estimation. However, the practical utility of NFs remains limited by their tendency to collapse to a single mode of a multimodal distribution. In this study, we show that annealing with an adaptive schedule based on the effective sample size (ESS) can mitigate mode collapse. We demonstrate that our approach can converge the marginal likelihood for a biochemical oscillator model fit to time-series data in ten-fold less computation time than a widely used ensemble Markov chain Monte Carlo (MCMC) method. We show that the ESS can also be used to reduce variance by pruning the samples. We expect these developments to be of general use for sampling with NFs and discuss potential opportunities for further improvements.
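
As a rough illustration of the ESS-based adaptive annealing idea mentioned in this abstract, the sketch below computes the effective sample size from log importance weights and picks the next inverse temperature by bisection so that the ESS stays near a target fraction of the sample count. This is a generic construction familiar from adaptive tempering, not necessarily the paper's procedure; the names `log_ratio`, `next_beta`, and `target_frac` are assumptions introduced here.

```python
import math

def effective_sample_size(log_weights):
    """ESS = (sum w)^2 / sum w^2, computed stably from log weights."""
    m = max(log_weights)
    w = [math.exp(lw - m) for lw in log_weights]
    total = sum(w)
    total_sq = sum(x * x for x in w)
    return total * total / total_sq

def next_beta(log_ratio, beta, target_frac=0.5, tol=1e-6):
    """Pick the next inverse temperature in (beta, 1] by bisection.

    log_ratio[i] is assumed to be log(target density) - log(base density)
    for sample i; both the name and the 0.5 default are hypothetical.
    """
    n = len(log_ratio)

    def ess_at(b):
        # Incremental weights for moving from beta to b.
        return effective_sample_size([(b - beta) * r for r in log_ratio])

    # Jump straight to the target if the ESS stays high enough.
    if ess_at(1.0) >= target_frac * n:
        return 1.0
    lo, hi = beta, 1.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if ess_at(mid) >= target_frac * n:
            lo = mid
        else:
            hi = mid
    return lo

# Example: four samples with very different log density ratios.
beta_1 = next_beta([0.0, -50.0, -100.0, -150.0], beta=0.0)
```

The bisection relies on the ESS of the incremental weights decreasing as the step in inverse temperature grows, so a larger spread in `log_ratio` yields a smaller step.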

Graph Foundation Models for Recommendation: A Comprehensive Survey

Feb 12, 2025

Abstract:Recommender systems (RS) serve as a fundamental tool for navigating the vast expanse of online information, with deep learning advancements playing an increasingly important role in improving ranking accuracy. Among these, graph neural networks (GNNs) excel at extracting higher-order structural information, while large language models (LLMs) are designed to process and comprehend natural language, making both approaches highly effective and widely adopted. Recent research has focused on graph foundation models (GFMs), which integrate the strengths of GNNs and LLMs to model complex RS problems more efficiently by leveraging the graph-based structure of user-item relationships alongside textual understanding. In this survey, we provide a comprehensive overview of GFM-based RS technologies by introducing a clear taxonomy of current approaches, diving into methodological details, and highlighting key challenges and future directions. By synthesizing recent advancements, we aim to offer valuable insights into the evolving landscape of GFM-based recommender systems.

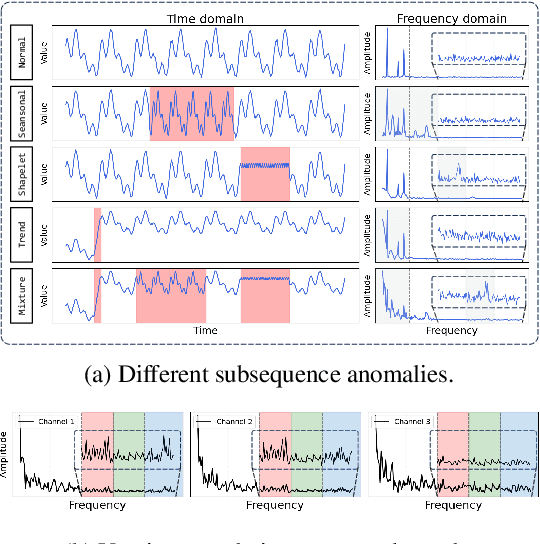

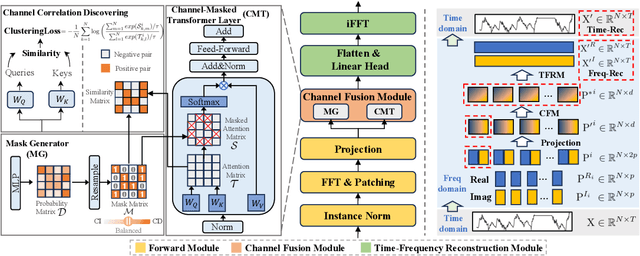

CATCH: Channel-Aware multivariate Time Series Anomaly Detection via Frequency Patching

Oct 16, 2024

Abstract:Anomaly detection in multivariate time series is challenging as heterogeneous subsequence anomalies may occur. Reconstruction-based methods, which focus on learning normal patterns in the frequency domain to detect diverse abnormal subsequences, achieve promising results, while still falling short on capturing fine-grained frequency characteristics and channel correlations. To contend with the limitations, we introduce CATCH, a framework based on frequency patching. We propose to patchify the frequency domain into frequency bands, which enhances its ability to capture fine-grained frequency characteristics. To perceive appropriate channel correlations, we propose a Channel Fusion Module (CFM), which features a patch-wise mask generator and a masked-attention mechanism. Driven by a bi-level multi-objective optimization algorithm, the CFM is encouraged to iteratively discover appropriate patch-wise channel correlations, and to cluster relevant channels while isolating adverse effects from irrelevant channels. Extensive experiments on 9 real-world datasets and 12 synthetic datasets demonstrate that CATCH achieves state-of-the-art performance.
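
To make "patchify the frequency domain into frequency bands" concrete, here is a minimal sketch that takes a discrete Fourier transform of a single channel and splits the non-redundant half of the spectrum into contiguous patches. It only illustrates the general idea; the plain DFT, the helper names `dft` and `frequency_patches`, and the fixed `patch_size` are assumptions, and nothing here reflects CATCH's actual architecture.

```python
import cmath
import math

def dft(signal):
    """Naive O(n^2) discrete Fourier transform, fine for short examples."""
    n = len(signal)
    return [
        sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(signal))
        for k in range(n)
    ]

def frequency_patches(signal, patch_size):
    """Split the one-sided spectrum into contiguous frequency bands."""
    spectrum = dft(signal)
    half = spectrum[: len(signal) // 2 + 1]  # bins 0 .. n/2 for a real signal
    return [half[i:i + patch_size] for i in range(0, len(half), patch_size)]

# Example: a cosine with two cycles over eight samples.
signal = [math.cos(2 * math.pi * 2 * t / 8) for t in range(8)]
patches = frequency_patches(signal, patch_size=2)

# Energy per band; the band holding bin 2 carries the signal's energy.
energies = [sum(abs(c) ** 2 for c in patch) for patch in patches]
```

Working per band rather than per bin lets a downstream model attend to local groups of frequencies, which is the intuition the abstract gives for capturing fine-grained frequency characteristics.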

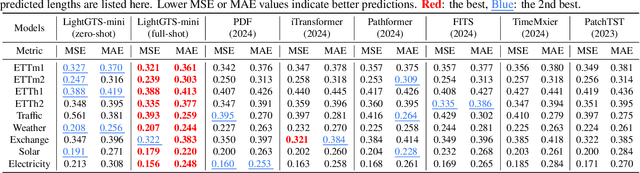

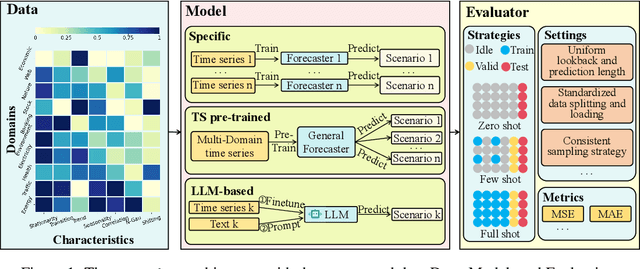

FoundTS: Comprehensive and Unified Benchmarking of Foundation Models for Time Series Forecasting

Oct 15, 2024

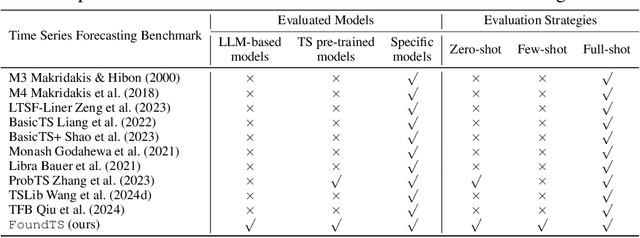

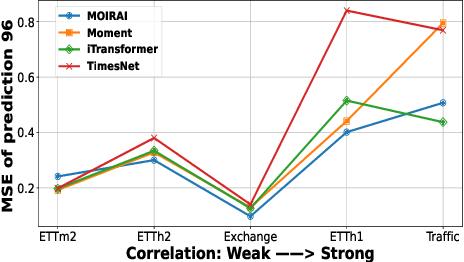

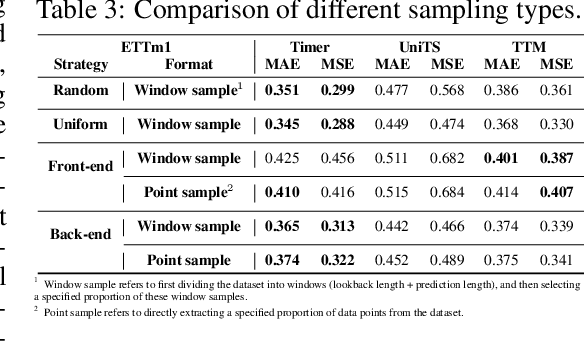

Abstract:Time Series Forecasting (TSF) is a key functionality in numerous fields, including finance, weather services, and energy management. While many TSF methods are emerging, most require domain-specific data collection and model training and generalize poorly to new domains. Foundation models aim to overcome this limitation. Pre-trained on large-scale language or time series data, they exhibit promising inference capabilities on new or unseen data. This has spurred a surge in new TSF foundation models. We propose a new benchmark, FoundTS, to enable thorough and fair evaluation and comparison of such models. First, FoundTS covers a variety of TSF foundation models, including those based on large language models and those pretrained on time series. Next, FoundTS supports different forecasting strategies, including zero-shot, few-shot, and full-shot, thereby facilitating more thorough evaluations. Finally, FoundTS offers a pipeline that standardizes evaluation processes such as dataset splitting, loading, normalization, and few-shot sampling, thereby facilitating fair evaluations. Building on this, we report on an extensive evaluation of TSF foundation models on a broad range of datasets from diverse domains and with different statistical characteristics. Specifically, we identify pros and cons and inherent limitations of existing foundation models, and we identify directions for future model design. We make our code and datasets available at https://anonymous.4open.science/r/FoundTS-C2B0.

QUITO-X: An Information Bottleneck-based Compression Algorithm with Cross-Attention

Aug 20, 2024

Abstract:Generative LLMs have achieved significant success in various industrial tasks and can effectively adapt to vertical domains and downstream tasks through in-context learning (ICL). However, as tasks become increasingly complex, the context length required by ICL also grows, and two significant issues arise: (i) The excessively long context leads to high costs and inference delays. (ii) A substantial amount of task-irrelevant information introduced by long contexts exacerbates the "lost in the middle" problem. Recently, compressing prompts by removing tokens according to some metric obtained from a causal language model, such as llama-7b, has emerged as an effective approach to mitigate these issues. However, the metrics used by prior methods, such as self-information or perplexity (PPL), do not fully align with the objective of distinguishing the most important tokens when conditioning on the query. In this work, we introduce information bottleneck theory to carefully examine the properties required by the metric. Inspired by this, we use cross-attention in an encoder-decoder architecture as a new metric. Our simple method leads to significantly better performance in smaller models with lower latency. We evaluate our method on four datasets: DROP, CoQA, SQuAD, and Quoref. The experimental results show that, while maintaining the same performance, our compression rate can improve by nearly 25% over previous SOTA. Remarkably, in experiments where 25% of the tokens are removed, our model's EM score for answers sometimes even exceeds that of the control group using uncompressed text as context.

DELIA: Diversity-Enhanced Learning for Instruction Adaptation in Large Language Models

Aug 19, 2024

Abstract:Although instruction tuning is widely used to adjust behavior in Large Language Models (LLMs), extensive empirical evidence and research indicate that it is primarily a process where the model fits to specific task formats, rather than acquiring new knowledge or capabilities. We propose that this limitation stems from biased features learned during instruction tuning, which differ from ideal task-specific features, leading the model to learn less of the underlying semantics in downstream tasks. However, ideal features are unknown and incalculable, constraining past work to rely on prior knowledge to assist reasoning or training, which limits LLMs' capabilities to the developers' abilities, rather than data-driven scalable learning. In our paper, through our novel data synthesis method, DELIA (Diversity-Enhanced Learning for Instruction Adaptation), we leverage the buffering effect of extensive diverse data in LLM training to transform biased features in instruction tuning into approximations of ideal features, without explicit prior ideal features. Experiments show DELIA's better performance compared to common instruction tuning and other baselines. It outperforms common instruction tuning by 17.07%-33.41% on Icelandic-English translation BLEURT score (WMT-21 dataset, gemma-7b-it) and improves accuracy by 36.1% on formatted text generation (Llama2-7b-chat). Notably, among the knowledge injection methods we know of, DELIA uniquely aligns the internal representations of new special tokens with their prior semantics.

QUITO: Accelerating Long-Context Reasoning through Query-Guided Context Compression

Aug 01, 2024

Abstract:In-context learning (ICL) capabilities are foundational to the success of large language models (LLMs). Recently, context compression has attracted growing interest since it can largely reduce the reasoning complexity and computation costs of LLMs. In this paper, we introduce a novel Query-gUIded aTtention cOmpression (QUITO) method, which leverages the attention of the question over the contexts to filter out useless information. Specifically, we take a trigger token to calculate the attention distribution of the context in response to the question. Based on the distribution, we propose three different filtering methods to satisfy the budget constraints of the context length. We evaluate QUITO using two widely-used datasets, namely, NaturalQuestions and ASQA. Experimental results demonstrate that QUITO significantly outperforms established baselines across various datasets and downstream LLMs, underscoring its effectiveness. Our code is available at https://github.com/Wenshansilvia/attention_compressor.
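
The filtering step described in this abstract can be pictured with a small sketch: given one attention score per context token, keep the highest-scoring tokens within a length budget and preserve their original order. The abstract does not spell out its three filtering methods, so this token-level top-k filter is only an assumed illustration; `filter_by_attention`, `budget_ratio`, and the example scores are hypothetical.

```python
def filter_by_attention(tokens, attention, budget_ratio):
    """Keep the top-scoring tokens within the budget, in original order."""
    if len(tokens) != len(attention):
        raise ValueError("tokens and attention must have the same length")
    # Number of tokens allowed by the budget (at least one).
    keep = max(1, int(len(tokens) * budget_ratio))
    # Rank token positions by attention score, highest first.
    ranked = sorted(range(len(tokens)), key=lambda i: attention[i], reverse=True)
    # Restore reading order for the positions that survive.
    kept_positions = sorted(ranked[:keep])
    return [tokens[i] for i in kept_positions]

# Example with made-up attention scores for a short context.
tokens = ["the", "capital", "of", "France", "is", "Paris"]
attention = [0.02, 0.30, 0.03, 0.25, 0.05, 0.35]
compressed = filter_by_attention(tokens, attention, budget_ratio=0.5)
```

In practice the scores would come from a model's attention over the context in response to the question; here they are fixed numbers so the example stays self-contained.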
