Xiaoming Liu

LocalScore: Local Density-Aware Similarity Scoring for Biometrics

Feb 01, 2026Abstract:Open-set biometrics faces challenges with probe subjects who may not be enrolled in the gallery, as traditional biometric systems struggle to detect these non-mated probes. Despite the growing prevalence of multi-sample galleries in real-world deployments, most existing methods collapse intra-subject variability into a single global representation, leading to suboptimal decision boundaries and poor open-set robustness. To address this issue, we propose LocalScore, a simple yet effective scoring algorithm that explicitly incorporates the local density of the gallery feature distribution using the k-th nearest neighbors. LocalScore is architecture-agnostic, loss-independent, and incurs negligible computational overhead, making it a plug-and-play solution for existing biometric systems. Extensive experiments across multiple modalities demonstrate that LocalScore consistently achieves substantial gains in open-set retrieval (FNIR@FPIR reduced from 53% to 40%) and verification (TAR@FAR improved from 51% to 74%). We further provide theoretical analysis and empirical validation explaining when and why the method achieves the most significant gains based on dataset characteristics.

Can Textual Reasoning Improve the Performance of MLLMs on Fine-grained Visual Classification?

Jan 11, 2026Abstract:Multi-modal large language models (MLLMs) exhibit strong general-purpose capabilities, yet still struggle on Fine-Grained Visual Classification (FGVC), a core perception task that requires subtle visual discrimination and is crucial for many real-world applications. A widely adopted strategy for boosting performance on challenging tasks such as math and coding is Chain-of-Thought (CoT) reasoning. However, several prior works have reported that CoT can actually harm performance on visual perception tasks. These studies, though, examine the issue from relatively narrow angles and leave open why CoT degrades perception-heavy performance. We systematically re-examine the role of CoT in FGVC through the lenses of zero-shot evaluation and multiple training paradigms. Across these settings, we uncover a central paradox: the degradation induced by CoT is largely driven by the reasoning length, in which longer textual reasoning consistently lowers classification accuracy. We term this phenomenon the ``Cost of Thinking''. Building on this finding, we make two key contributions: (1) \alg, a simple and general plug-and-play normalization method for multi-reward optimization that balances heterogeneous reward signals, and (2) ReFine-RFT, a framework that combines ensemble rewards with \alg to constrain reasoning length while providing dense accuracy-oriented feedback. Extensive experiments demonstrate the effectiveness of our findings and the proposed ReFine-RFT, achieving state-of-the-art performance across FGVC benchmarks. Code and models are available at \href{https://github.com/jiezhu23/ReFine-RFT}{Project Link}.

On the Holistic Approach for Detecting Human Image Forgery

Jan 08, 2026Abstract:The rapid advancement of AI-generated content (AIGC) has escalated the threat of deepfakes, from facial manipulations to the synthesis of entire photorealistic human bodies. However, existing detection methods remain fragmented, specializing either in facial-region forgeries or full-body synthetic images, and consequently fail to generalize across the full spectrum of human image manipulations. We introduce HuForDet, a holistic framework for human image forgery detection, which features a dual-branch architecture comprising: (1) a face forgery detection branch that employs heterogeneous experts operating in both RGB and frequency domains, including an adaptive Laplacian-of-Gaussian (LoG) module designed to capture artifacts ranging from fine-grained blending boundaries to coarse-scale texture irregularities; and (2) a contextualized forgery detection branch that leverages a Multi-Modal Large Language Model (MLLM) to analyze full-body semantic consistency, enhanced with a confidence estimation mechanism that dynamically weights its contribution during feature fusion. We curate a human image forgery (HuFor) dataset that unifies existing face forgery data with a new corpus of full-body synthetic humans. Extensive experiments show that our HuForDet achieves state-of-the-art forgery detection performance and superior robustness across diverse human image forgeries.

Beyond Motion Pattern: An Empirical Study of Physical Forces for Human Motion Understanding

Dec 23, 2025

Abstract:Human motion understanding has advanced rapidly through vision-based progress in recognition, tracking, and captioning. However, most existing methods overlook physical cues such as joint actuation forces that are fundamental in biomechanics. This gap motivates our study: if and when do physically inferred forces enhance motion understanding? By incorporating forces into established motion understanding pipelines, we systematically evaluate their impact across baseline models on 3 major tasks: gait recognition, action recognition, and fine-grained video captioning. Across 8 benchmarks, incorporating forces yields consistent performance gains; for example, on CASIA-B, Rank-1 gait recognition accuracy improved from 89.52% to 90.39% (+0.87), with larger gain observed under challenging conditions: +2.7% when wearing a coat and +3.0% at the side view. On Gait3D, performance also increases from 46.0% to 47.3% (+1.3). In action recognition, CTR-GCN achieved +2.00% on Penn Action, while high-exertion classes like punching/slapping improved by +6.96%. Even in video captioning, Qwen2.5-VL's ROUGE-L score rose from 0.310 to 0.339 (+0.029), indicating that physics-inferred forces enhance temporal grounding and semantic richness. These results demonstrate that force cues can substantially complement visual and kinematic features under dynamic, occluded, or appearance-varying conditions.

Generalizable Object Re-Identification via Visual In-Context Prompting

Aug 28, 2025

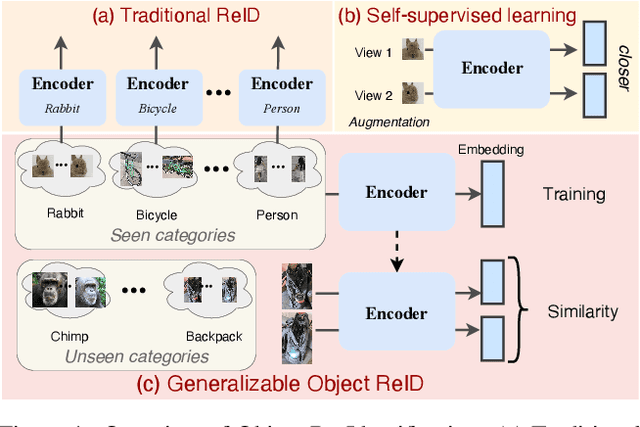

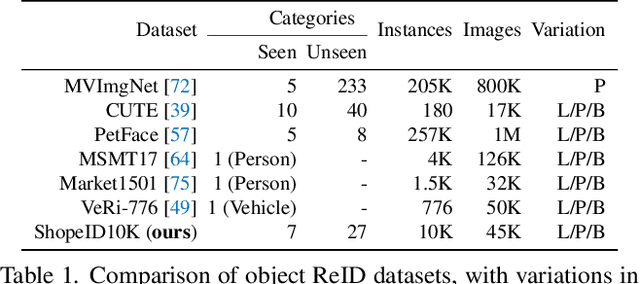

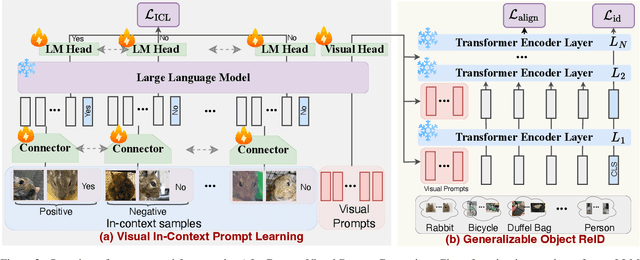

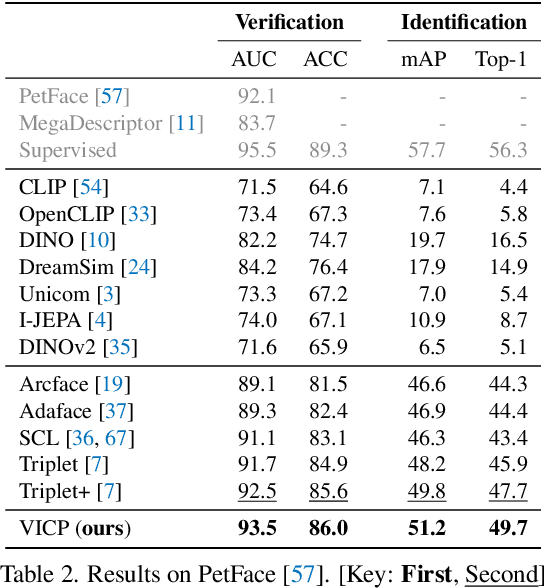

Abstract:Current object re-identification (ReID) methods train domain-specific models (e.g., for persons or vehicles), which lack generalization and demand costly labeled data for new categories. While self-supervised learning reduces annotation needs by learning instance-wise invariance, it struggles to capture \textit{identity-sensitive} features critical for ReID. This paper proposes Visual In-Context Prompting~(VICP), a novel framework where models trained on seen categories can directly generalize to unseen novel categories using only \textit{in-context examples} as prompts, without requiring parameter adaptation. VICP synergizes LLMs and vision foundation models~(VFM): LLMs infer semantic identity rules from few-shot positive/negative pairs through task-specific prompting, which then guides a VFM (\eg, DINO) to extract ID-discriminative features via \textit{dynamic visual prompts}. By aligning LLM-derived semantic concepts with the VFM's pre-trained prior, VICP enables generalization to novel categories, eliminating the need for dataset-specific retraining. To support evaluation, we introduce ShopID10K, a dataset of 10K object instances from e-commerce platforms, featuring multi-view images and cross-domain testing. Experiments on ShopID10K and diverse ReID benchmarks demonstrate that VICP outperforms baselines by a clear margin on unseen categories. Code is available at https://github.com/Hzzone/VICP.

HAMoBE: Hierarchical and Adaptive Mixture of Biometric Experts for Video-based Person ReID

Aug 07, 2025Abstract:Recently, research interest in person re-identification (ReID) has increasingly focused on video-based scenarios, which are essential for robust surveillance and security in varied and dynamic environments. However, existing video-based ReID methods often overlook the necessity of identifying and selecting the most discriminative features from both videos in a query-gallery pair for effective matching. To address this issue, we propose a novel Hierarchical and Adaptive Mixture of Biometric Experts (HAMoBE) framework, which leverages multi-layer features from a pre-trained large model (e.g., CLIP) and is designed to mimic human perceptual mechanisms by independently modeling key biometric features--appearance, static body shape, and dynamic gait--and adaptively integrating them. Specifically, HAMoBE includes two levels: the first level extracts low-level features from multi-layer representations provided by the frozen large model, while the second level consists of specialized experts focusing on long-term, short-term, and temporal features. To ensure robust matching, we introduce a new dual-input decision gating network that dynamically adjusts the contributions of each expert based on their relevance to the input scenarios. Extensive evaluations on benchmarks like MEVID demonstrate that our approach yields significant performance improvements (e.g., +13.0% Rank-1 accuracy).

A Quality-Guided Mixture of Score-Fusion Experts Framework for Human Recognition

Jul 31, 2025Abstract:Whole-body biometric recognition is a challenging multimodal task that integrates various biometric modalities, including face, gait, and body. This integration is essential for overcoming the limitations of unimodal systems. Traditionally, whole-body recognition involves deploying different models to process multiple modalities, achieving the final outcome by score-fusion (e.g., weighted averaging of similarity matrices from each model). However, these conventional methods may overlook the variations in score distributions of individual modalities, making it challenging to improve final performance. In this work, we present \textbf{Q}uality-guided \textbf{M}ixture of score-fusion \textbf{E}xperts (QME), a novel framework designed for improving whole-body biometric recognition performance through a learnable score-fusion strategy using a Mixture of Experts (MoE). We introduce a novel pseudo-quality loss for quality estimation with a modality-specific Quality Estimator (QE), and a score triplet loss to improve the metric performance. Extensive experiments on multiple whole-body biometric datasets demonstrate the effectiveness of our proposed approach, achieving state-of-the-art results across various metrics compared to baseline methods. Our method is effective for multimodal and multi-model, addressing key challenges such as model misalignment in the similarity score domain and variability in data quality.

TalkingHeadBench: A Multi-Modal Benchmark & Analysis of Talking-Head DeepFake Detection

May 30, 2025Abstract:The rapid advancement of talking-head deepfake generation fueled by advanced generative models has elevated the realism of synthetic videos to a level that poses substantial risks in domains such as media, politics, and finance. However, current benchmarks for deepfake talking-head detection fail to reflect this progress, relying on outdated generators and offering limited insight into model robustness and generalization. We introduce TalkingHeadBench, a comprehensive multi-model multi-generator benchmark and curated dataset designed to evaluate the performance of state-of-the-art detectors on the most advanced generators. Our dataset includes deepfakes synthesized by leading academic and commercial models and features carefully constructed protocols to assess generalization under distribution shifts in identity and generator characteristics. We benchmark a diverse set of existing detection methods, including CNNs, vision transformers, and temporal models, and analyze their robustness and generalization capabilities. In addition, we provide error analysis using Grad-CAM visualizations to expose common failure modes and detector biases. TalkingHeadBench is hosted on https://huggingface.co/datasets/luchaoqi/TalkingHeadBench with open access to all data splits and protocols. Our benchmark aims to accelerate research towards more robust and generalizable detection models in the face of rapidly evolving generative techniques.

50 Years of Automated Face Recognition

May 30, 2025

Abstract:Over the past 50 years, automated face recognition has evolved from rudimentary, handcrafted systems into sophisticated deep learning models that rival and often surpass human performance. This paper chronicles the history and technological progression of FR, from early geometric and statistical methods to modern deep neural architectures leveraging massive real and AI-generated datasets. We examine key innovations that have shaped the field, including developments in dataset, loss function, neural network design and feature fusion. We also analyze how the scale and diversity of training data influence model generalization, drawing connections between dataset growth and benchmark improvements. Recent advances have achieved remarkable milestones: state-of-the-art face verification systems now report False Negative Identification Rates of 0.13% against a 12.4 million gallery in NIST FRVT evaluations for 1:N visa-to-border matching. While recent advances have enabled remarkable accuracy in high- and low-quality face scenarios, numerous challenges persist. While remarkable progress has been achieved, several open research problems remain. We outline critical challenges and promising directions for future face recognition research, including scalability, multi-modal fusion, synthetic identity generation, and explainable systems.

BiggerGait: Unlocking Gait Recognition with Layer-wise Representations from Large Vision Models

May 23, 2025

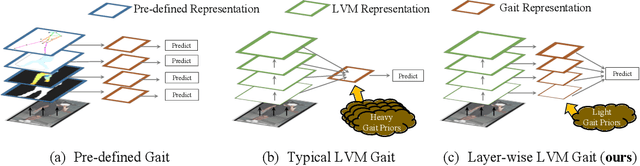

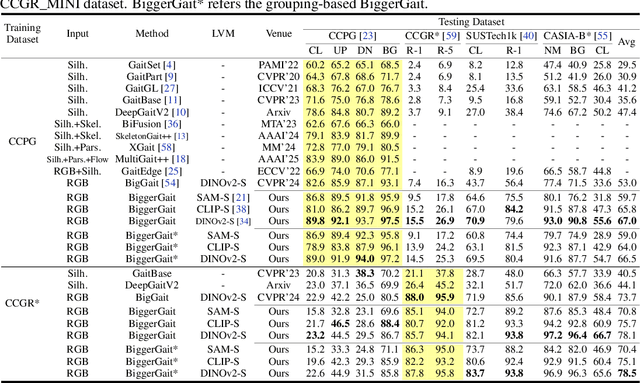

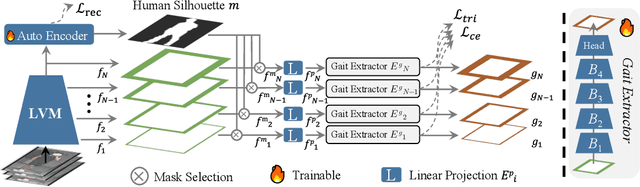

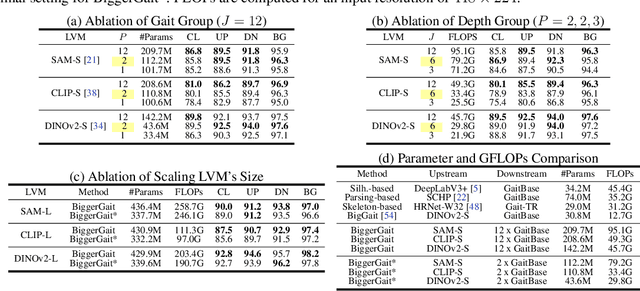

Abstract:Large vision models (LVM) based gait recognition has achieved impressive performance. However, existing LVM-based approaches may overemphasize gait priors while neglecting the intrinsic value of LVM itself, particularly the rich, distinct representations across its multi-layers. To adequately unlock LVM's potential, this work investigates the impact of layer-wise representations on downstream recognition tasks. Our analysis reveals that LVM's intermediate layers offer complementary properties across tasks, integrating them yields an impressive improvement even without rich well-designed gait priors. Building on this insight, we propose a simple and universal baseline for LVM-based gait recognition, termed BiggerGait. Comprehensive evaluations on CCPG, CAISA-B*, SUSTech1K, and CCGR\_MINI validate the superiority of BiggerGait across both within- and cross-domain tasks, establishing it as a simple yet practical baseline for gait representation learning. All the models and code will be publicly available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge