Thien-Minh Nguyen

Multi-Agent Off-World Exploration for Sparse Evidence Discovery via Gaussian Belief Mapping and Dual-Domain Coverage

Mar 08, 2026

Abstract: Off-world multi-robot exploration is challenged by sparse targets, limited sensing, hazardous terrain, and restricted communication. Many scientifically valuable clues are visually ambiguous and often require close-range observations, making efficient and safe informative path planning essential. Existing methods often rely on predefined areas of interest (AOIs), which may be incomplete or biased, and typically handle terrain risk only through soft penalties, which are insufficient for avoiding non-recoverable regions. To address these issues, we propose a multi-agent informative path planning framework for sparse evidence discovery based on Gaussian belief mapping and dual-domain coverage. The method maintains Gaussian-process-based interest and risk beliefs and combines them with trajectory-intent representations to support coordinated sequential decision-making among multiple agents. It further prioritizes search inside the AOI while preserving limited exploration outside it, thereby improving robustness to AOI bias. In addition, the risk-aware design helps agents balance information gain and operational safety in hazardous environments. Experimental results in simulated lunar environments show that the proposed method consistently outperforms sampling-based and greedy baselines under different budgets and communication ranges. In particular, it achieves lower final uncertainty in risk-aware settings and remains robust under limited communication, demonstrating its effectiveness for cooperative off-world robotic exploration.

PanoDP: Learning Collision-Free Navigation with Panoramic Depth and Differentiable Physics

Mar 08, 2026

Abstract: Autonomous collision-free navigation in cluttered environments requires safe decision-making under partial observability with both static structure and dynamic obstacles. We present PanoDP, a communication-free learning framework that combines four-view panoramic depth perception with differentiable-physics-based training signals. PanoDP encodes panoramic depth using a lightweight CNN and optimizes policies with dense differentiable collision and motion-feasibility terms, improving training stability beyond sparse terminal collisions. We evaluate PanoDP on a controlled ring-to-center benchmark with systematic sweeps over agent count, obstacle density/layout, and dynamic behaviors, and further test out-of-distribution generalization in an external simulator (e.g., AirSim). Across settings, PanoDP increases collision-free and completion rates over single-view and non-physics-guided baselines under matched training budgets, and ablations (view masking, rotation augmentation) confirm the policy leverages 360-degree information. Code will be open-sourced upon acceptance.

Watch Your Step: Learning Semantically-Guided Locomotion in Cluttered Environment

Mar 03, 2026

Abstract: Although legged robots demonstrate impressive mobility on rough terrain, using them safely in cluttered environments remains a challenge. A key issue is their inability to avoid stepping on low-lying objects, such as high-cost small devices or cables on flat ground. This limitation arises from a disconnect between high-level semantic understanding and low-level control, combined with errors in elevation maps during real-world operation. To address this, we introduce SemLoco, a Reinforcement Learning (RL) framework designed to avoid obstacles precisely in densely cluttered environments. SemLoco uses a two-stage RL approach that combines both soft and hard constraints and performs pixel-wise foothold safety inference, enabling more accurate foot placement. Additionally, SemLoco integrates a semantic map to assign traversability costs rather than relying solely on geometric data. SemLoco significantly reduces collisions and improves safety around sensitive objects, enabling reliable navigation in situations where traditional controllers would likely cause damage. Experimental results further demonstrate that SemLoco can be effectively applied to more complex, unstructured real-world environments.

PERAL: Perception-Aware Motion Control for Passive LiDAR Excitation in Spherical Robots

Sep 18, 2025

Abstract: Autonomous mobile robots increasingly rely on LiDAR-IMU odometry for navigation and mapping, yet horizontally mounted LiDARs such as the MID360 capture few near-ground returns, limiting terrain awareness and degrading performance in feature-scarce environments. Prior solutions (static tilt, active rotation, or high-density sensors) either sacrifice horizontal perception or incur added actuators, cost, and power. We introduce PERAL, a perception-aware motion control framework for spherical robots that achieves passive LiDAR excitation without dedicated hardware. By modeling the coupling between internal differential-drive actuation and sensor attitude, PERAL superimposes bounded, non-periodic oscillations onto nominal goal- or trajectory-tracking commands, enriching vertical scan diversity while preserving navigation accuracy. Implemented on a compact spherical robot, PERAL is validated across laboratory, corridor, and tactical environments. Experiments demonstrate up to 96 percent map completeness, a 27 percent reduction in trajectory tracking error, and robust near-ground human detection, all at lower weight, power, and cost compared with static tilt, active rotation, and fixed horizontal baselines. The design and code will be open-sourced upon acceptance.

Aerial Target Encirclement and Interception with Noisy Range Observations

Aug 11, 2025

Abstract: This paper proposes a strategy to encircle and intercept a non-cooperative aerial point-mass moving target by leveraging noisy range measurements for state estimation. In this approach, the guardians actively ensure the observability of the target by using an anti-synchronization (AS), 3D "vibrating string" trajectory, which enables rapid position and velocity estimation based on the Kalman filter. Additionally, a novel anti-target controller is designed for the guardians to enable adaptive transitions from encircling a protected target to encircling, intercepting, and neutralizing a hostile target, taking into consideration the input constraints of the guardians. Based on the guaranteed uniform observability, the exponentially bounded stability of the state estimation error and the convergence of the encirclement error are rigorously analyzed. Simulation results and real-world UAV experiments are presented to further validate the effectiveness of the system design.

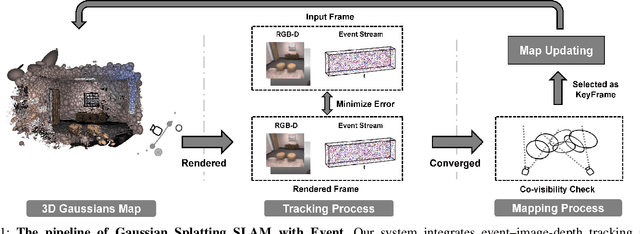

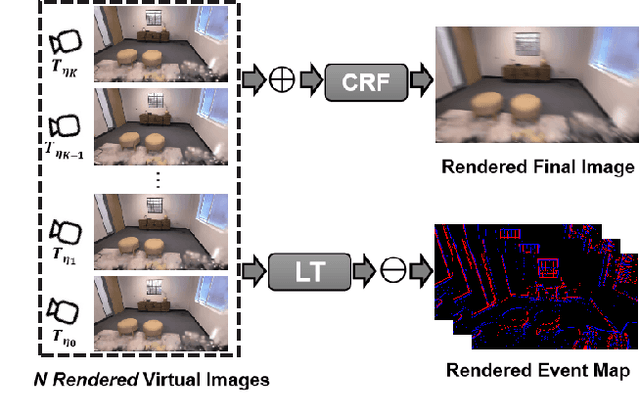

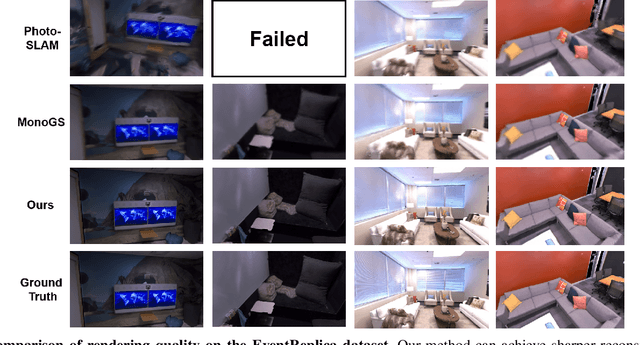

EGS-SLAM: RGB-D Gaussian Splatting SLAM with Events

Aug 09, 2025

Abstract: Gaussian Splatting SLAM (GS-SLAM) offers a notable improvement over traditional SLAM methods, enabling photorealistic 3D reconstruction that conventional approaches often struggle to achieve. However, existing GS-SLAM systems perform poorly under persistent and severe motion blur commonly encountered in real-world scenarios, leading to significantly degraded tracking accuracy and compromised 3D reconstruction quality. To address this limitation, we propose EGS-SLAM, a novel GS-SLAM framework that fuses event data with RGB-D inputs to simultaneously reduce motion blur in images and compensate for the sparse and discrete nature of event streams, enabling robust tracking and high-fidelity 3D Gaussian Splatting reconstruction. Specifically, our system explicitly models the camera's continuous trajectory during exposure, supporting event- and blur-aware tracking and mapping on a unified 3D Gaussian Splatting scene. Furthermore, we introduce a learnable camera response function to align the dynamic ranges of events and images, along with a no-event loss to suppress ringing artifacts during reconstruction. We validate our approach on a new dataset comprising synthetic and real-world sequences with significant motion blur. Extensive experimental results demonstrate that EGS-SLAM consistently outperforms existing GS-SLAM systems in both trajectory accuracy and photorealistic 3D Gaussian Splatting reconstruction. The source code will be available at https://github.com/Chensiyu00/EGS-SLAM.

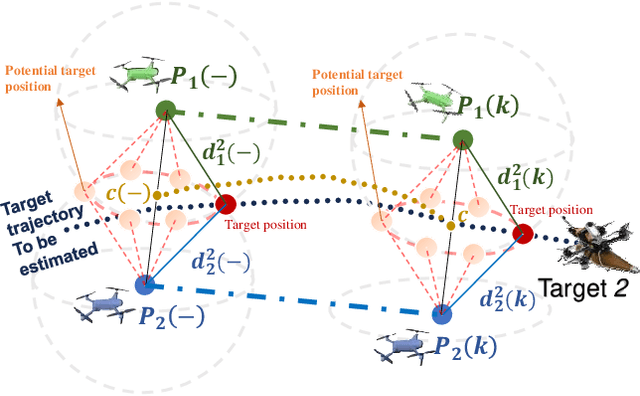

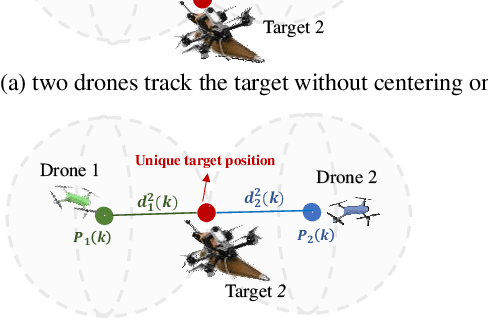

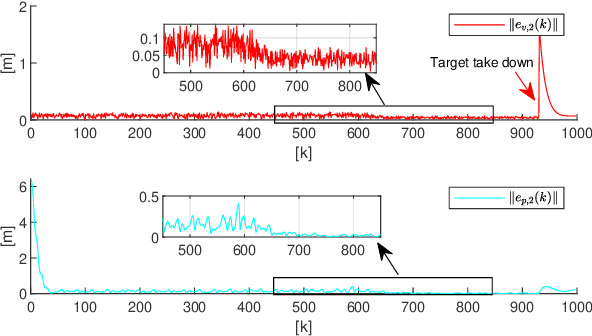

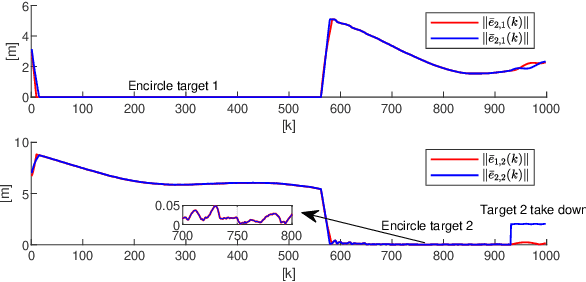

Autonomous 3D Moving Target Encirclement and Interception with Range measurement

Jun 16, 2025

Abstract: Commercial UAVs are an emerging security threat as they are capable of carrying hazardous payloads or disrupting air traffic. To counter UAVs, we introduce an autonomous 3D target encirclement and interception strategy. Unlike traditional ground-guided systems, this strategy employs autonomous drones to track and engage non-cooperative hostile UAVs, which is effective in non-line-of-sight conditions, GPS denial, and radar jamming, where conventional detection and neutralization from ground guidance fail. Using two noisy real-time distances measured by drones, guardian drones estimate the target's position relative to their own using observation and velocity compensation methods, based on anti-synchronization (AS) and an X-Y circular motion combined with vertical jitter. An encirclement control mechanism is proposed to enable UAVs to adaptively transition from encircling and protecting a target to encircling and monitoring a hostile target. Upon breaching a warning threshold, the UAVs may even employ a suicide attack to neutralize the hostile target. We validate this strategy through real-world UAV experiments and simulation analysis in MATLAB, demonstrating its effectiveness in detecting, encircling, and intercepting hostile drones. More details: https://youtu.be/5eHW56lPVto.

Tire Wear Aware Trajectory Tracking Control for Multi-axle Swerve-drive Autonomous Mobile Robots

Jun 05, 2025

Abstract: Multi-axle Swerve-drive Autonomous Mobile Robots (MS-AGVs) equipped with independently steerable wheels are commonly used for high-payload transportation. In this work, we present a novel model predictive control (MPC) method for MS-AGV trajectory tracking that incorporates tire wear minimization into the objective function. To speed up the problem-solving process, we propose a hierarchical controller design and simplify the dynamic model by integrating the magic formula tire model and a simplified tire wear model. In experiments, the proposed method can be solved by simulated annealing in real time on a normal personal computer. By incorporating tire wear into the objective function, tire wear is reduced by 19.19% while maintaining tracking accuracy in curve-tracking experiments. In a more challenging scenario, where the desired trajectory is offset by 60 degrees from the vehicle's heading, the reduction in tire wear increases to 65.20% compared to the kinematic model without tire wear optimization.

Cooperative Aerial Robot Inspection Challenge: A Benchmark for Heterogeneous Multi-UAV Planning and Lessons Learned

Jan 14, 2025

Abstract: We propose the Cooperative Aerial Robot Inspection Challenge (CARIC), a simulation-based benchmark for motion planning algorithms in heterogeneous multi-UAV systems. CARIC features UAV teams with complementary sensors, realistic constraints, and evaluation metrics prioritizing inspection quality and efficiency. It offers a ready-to-use perception-control software stack and diverse scenarios to support the development and evaluation of task allocation and motion planning algorithms. Competitions using CARIC were held at IEEE CDC 2023 and the IROS 2024 Workshop on Multi-Robot Perception and Navigation, attracting innovative solutions from research teams worldwide. This paper examines the top three teams from CDC 2023, analyzing their exploration, inspection, and task allocation strategies while drawing insights into their performance across scenarios. The results highlight the task's complexity and suggest promising directions for future research in cooperative multi-UAV systems.

Large-Scale UWB Anchor Calibration and One-Shot Localization Using Gaussian Process

Dec 22, 2024

Abstract: Ultra-wideband (UWB) is gaining popularity with devices like AirTags for precise home item localization but faces significant challenges when scaled to large environments like seaports. The main challenges are calibration and localization in obstructed conditions, which are common in logistics environments. Traditional calibration methods, dependent on line-of-sight (LoS), are slow, costly, and unreliable in seaports and warehouses, making large-scale localization a significant pain point in the industry. To overcome these challenges, we propose a UWB-LiDAR fusion-based calibration and one-shot localization framework. Our method uses Gaussian Processes to estimate anchor positions from continuous-time LiDAR Inertial Odometry with sampled UWB ranges. This approach ensures accurate and reliable calibration with just one round of sampling in large-scale areas, i.e., 600x450 square meters. Because of LoS issues, UWB-only localization can be problematic, even when anchor positions are known. We demonstrate that by applying a UWB-range filter, the search range for LiDAR loop closure descriptors is significantly reduced, improving both accuracy and speed. This concept can be applied to other loop closure detection methods, enabling cost-effective localization in large-scale warehouses and seaports. It significantly improves precision in challenging environments where UWB-only and LiDAR-Inertial methods fall short, as shown in the video https://youtu.be/oY8jQKdM7lU. We will open-source our datasets and calibration code for community use.
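
As a rough illustration of the anchor-calibration idea in the abstract above, the sketch below estimates one anchor position from ranges sampled along a known trajectory. It is not the paper's method: the Gaussian Process and continuous-time LiDAR Inertial Odometry are replaced here by a plain Gauss-Newton least-squares fit in 2D. The function name `estimate_anchor_2d`, the square survey path, and the noise level are all hypothetical choices made for this example.

```python
import math
import random


def estimate_anchor_2d(positions, ranges, guess, iterations=50):
    """Gauss-Newton fit of a 2D anchor position to range measurements.

    positions: known robot positions (x, y), assumed to come from odometry.
    ranges:    measured distances from each position to the anchor.
    guess:     initial anchor estimate (x, y).
    """
    ax, ay = guess
    for _ in range(iterations):
        # Accumulate the normal equations (J^T J) * step = -J^T r.
        h11 = h12 = h22 = g1 = g2 = 0.0
        for (px, py), measured in zip(positions, ranges):
            dx, dy = ax - px, ay - py
            predicted = math.hypot(dx, dy)
            if predicted < 1e-9:
                continue  # Jacobian is undefined at zero distance
            jx, jy = dx / predicted, dy / predicted
            residual = predicted - measured
            h11 += jx * jx
            h12 += jx * jy
            h22 += jy * jy
            g1 += jx * residual
            g2 += jy * residual
        det = h11 * h22 - h12 * h12
        if abs(det) < 1e-12:
            break  # degenerate geometry, e.g. too few distinct positions
        # Solve the 2x2 system with Cramer's rule.
        step_x = -(h22 * g1 - h12 * g2) / det
        step_y = -(h11 * g2 - h12 * g1) / det
        ax += step_x
        ay += step_y
        if math.hypot(step_x, step_y) < 1e-9:
            break
    return ax, ay


# Synthetic data (assumed): a robot drives a 20 m square, sampling every 2 m.
random.seed(0)
true_anchor = (13.0, 6.0)
positions = (
    [(float(x), 0.0) for x in range(0, 21, 2)]
    + [(20.0, float(y)) for y in range(2, 21, 2)]
    + [(float(x), 20.0) for x in range(18, -1, -2)]
    + [(0.0, float(y)) for y in range(18, 0, -2)]
)
ranges = [
    math.hypot(px - true_anchor[0], py - true_anchor[1]) + random.gauss(0.0, 0.05)
    for px, py in positions
]
estimate = estimate_anchor_2d(positions, ranges, guess=(10.0, 10.0))
print("estimated anchor:", estimate)
```

The fit needs positions that are not all on one line; otherwise the anchor and its mirror image across that line explain the ranges equally well. Obstructed (non-LoS) ranges would bias a plain least-squares fit like this one, which is the kind of condition the abstract's framework is aimed at.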
