Shudong Huang

PCSR: Pseudo-label Consistency-Guided Sample Refinement for Noisy Correspondence Learning

Sep 19, 2025

Abstract:Cross-modal retrieval aims to align different modalities via semantic similarity. However, existing methods often assume that image-text pairs are perfectly aligned, overlooking Noisy Correspondences in real data. These misaligned pairs misguide similarity learning and degrade retrieval performance. Previous methods often rely on coarse-grained categorizations that simply divide data into clean and noisy samples, overlooking the intrinsic diversity within noisy instances. Moreover, they typically apply uniform training strategies regardless of sample characteristics, resulting in suboptimal sample utilization for model optimization. To address the above challenges, we introduce a novel framework, called Pseudo-label Consistency-Guided Sample Refinement (PCSR), which enhances correspondence reliability by explicitly dividing samples based on pseudo-label consistency. Specifically, we first employ a confidence-based estimation to distinguish clean and noisy pairs, then refine the noisy pairs via pseudo-label consistency to uncover structurally distinct subsets. We further propose a Pseudo-label Consistency Score (PCS) to quantify prediction stability, enabling the separation of ambiguous and refinable samples within noisy pairs. Accordingly, we adopt Adaptive Pair Optimization (APO), where ambiguous samples are optimized with robust loss functions and refinable ones are enhanced via text replacement during training. Extensive experiments on CC152K, MS-COCO and Flickr30K validate the effectiveness of our method in improving retrieval robustness under noisy supervision.

Large Foundation Model for Ads Recommendation

Aug 20, 2025

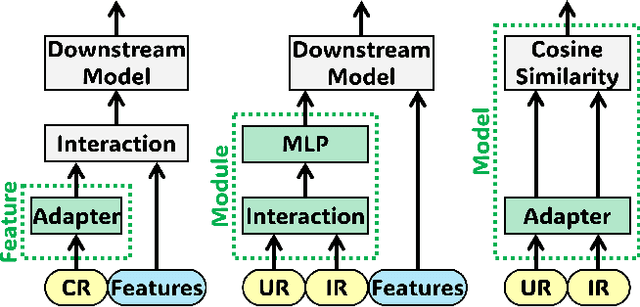

Abstract:Online advertising relies on accurate recommendation models, with recent advances using pre-trained large-scale foundation models (LFMs) to capture users' general interests across multiple scenarios and tasks. However, existing methods have critical limitations: they extract and transfer only user representations (URs), ignoring valuable item representations (IRs) and user-item cross representations (CRs); and they simply use a UR as a feature in downstream applications, which fails to bridge upstream-downstream gaps and overlooks more transfer granularities. In this paper, we propose LFM4Ads, an All-Representation Multi-Granularity transfer framework for ads recommendation. It first comprehensively transfers URs, IRs, and CRs, i.e., all available representations in the pre-trained foundation model. To effectively utilize the CRs, it identifies the optimal extraction layer and aggregates them into transferable coarse-grained forms. Furthermore, we enhance the transferability via multi-granularity mechanisms: non-linear adapters for feature-level transfer, an Isomorphic Interaction Module for module-level transfer, and Standalone Retrieval for model-level transfer. LFM4Ads has been successfully deployed in Tencent's industrial-scale advertising platform, processing tens of billions of daily samples while maintaining terabyte-scale model parameters with billions of sparse embedding keys across approximately two thousand features. Since its production deployment in Q4 2024, LFM4Ads has achieved 10+ successful production launches across various advertising scenarios, including primary ones like Weixin Moments and Channels. These launches achieve an overall GMV lift of 2.45% across the entire platform, translating to estimated annual revenue increases in the hundreds of millions of dollars.

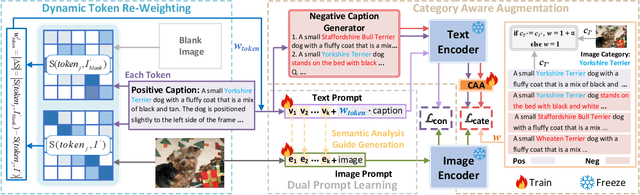

Dual Prompt Learning for Adapting Vision-Language Models to Downstream Image-Text Retrieval

Aug 06, 2025

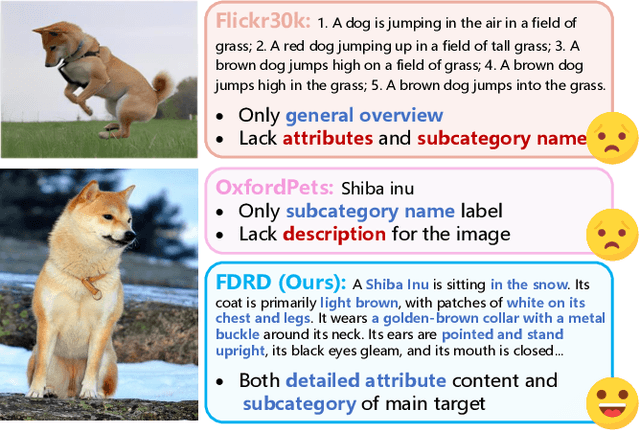

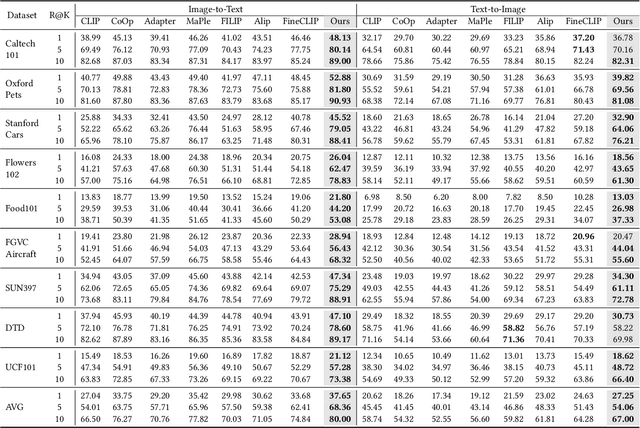

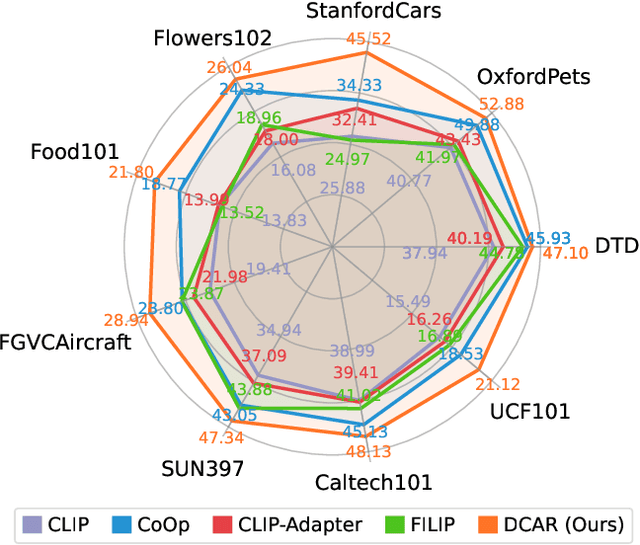

Abstract:Recently, prompt learning has demonstrated remarkable success in adapting pre-trained Vision-Language Models (VLMs) to various downstream tasks such as image classification. However, its application to the downstream Image-Text Retrieval (ITR) task is more challenging. We find that the challenge lies in discriminating both fine-grained attributes and similar subcategories of the downstream data. To address this challenge, we propose Dual prompt Learning with Joint Category-Attribute Reweighting (DCAR), a novel dual-prompt learning framework to achieve precise image-text matching. The framework dynamically adjusts prompt vectors from both semantic and visual dimensions to improve the performance of CLIP on the downstream ITR task. Based on the prompt paradigm, DCAR jointly optimizes attribute and class features to enhance fine-grained representation learning. Specifically, (1) at the attribute level, it dynamically updates the weights of attribute descriptions based on text-image mutual information correlation; (2) at the category level, it introduces negative samples from multiple perspectives with category-matching weighting to learn subcategory distinctions. To validate our method, we construct the Fine-class Described Retrieval Dataset (FDRD), which serves as a challenging benchmark for ITR in downstream data domains. It covers over 1,500 downstream fine categories and 230,000 image-caption pairs with detailed attribute annotations. Extensive experiments on FDRD demonstrate that DCAR achieves state-of-the-art performance over existing baselines.

Aligning Information Capacity Between Vision and Language via Dense-to-Sparse Feature Distillation for Image-Text Matching

Mar 19, 2025

Abstract:Enabling Visual Semantic Models to effectively handle multi-view description matching has been a longstanding challenge. Existing methods typically learn a set of embeddings to find the optimal match for each view's text and compute similarity. However, the visual and text embeddings learned through these approaches have limited information capacity and are prone to interference from locally similar negative samples. To address this issue, we argue that the information capacity of embeddings is crucial and propose Dense-to-Sparse Feature Distilled Visual Semantic Embedding (D2S-VSE), which enhances the information capacity of sparse text by leveraging dense text distillation. Specifically, D2S-VSE is a two-stage framework. In the pre-training stage, we align images with dense text to enhance the information capacity of visual semantic embeddings. In the fine-tuning stage, we optimize two tasks simultaneously, distilling dense text embeddings to sparse text embeddings while aligning images and sparse texts, enhancing the information capacity of sparse text embeddings. Our proposed D2S-VSE model is extensively evaluated on the large-scale MS-COCO and Flickr30K datasets, demonstrating its superiority over recent state-of-the-art methods.

Asymmetric Visual Semantic Embedding Framework for Efficient Vision-Language Alignment

Mar 10, 2025

Abstract:Learning visual semantic similarity is a critical challenge in bridging the gap between images and texts. However, there exist inherent variations between vision and language data, such as information density, i.e., images can contain textual information from multiple different views, which makes it difficult to compute the similarity between these two modalities accurately and efficiently. In this paper, we propose a novel framework called Asymmetric Visual Semantic Embedding (AVSE) to dynamically select features from various regions of images tailored to different textual inputs for similarity calculation. To capture information from different views in the image, we design a radial bias sampling module to sample image patches and obtain image features from various views. Furthermore, AVSE introduces a novel module for efficient computation of visual semantic similarity between asymmetric image and text embeddings. Central to this module is the presumption of foundational semantic units within the embeddings, denoted as "meta-semantic embeddings." It segments all embeddings into meta-semantic embeddings with the same dimension and calculates visual semantic similarity by finding the optimal match of meta-semantic embeddings of two modalities. Our proposed AVSE model is extensively evaluated on the large-scale MS-COCO and Flickr30K datasets, demonstrating its superiority over recent state-of-the-art methods.
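
The segment-and-match idea in the AVSE abstract can be illustrated with a small sketch. This is not the authors' implementation: the function names, the cosine similarity, and the "best image block per text block, then average" matching rule are all assumptions chosen for illustration, and the paper's optimal-match procedure may differ.

```python
import math

def split_blocks(vec, block_dim):
    """Cut a flat embedding into equal-sized blocks (hypothetical 'meta-semantic' units)."""
    if len(vec) % block_dim != 0:
        raise ValueError("embedding length must be a multiple of block_dim")
    return [vec[i:i + block_dim] for i in range(0, len(vec), block_dim)]

def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def meta_similarity(image_vec, text_vec, block_dim):
    """Assumed matching rule: each text block picks its best image block; scores are averaged."""
    image_blocks = split_blocks(image_vec, block_dim)
    text_blocks = split_blocks(text_vec, block_dim)
    best = [max(cosine(t, i) for i in image_blocks) for t in text_blocks]
    return sum(best) / len(best)

# Asymmetric sizes: the image embedding has three blocks, the text only two.
image_embedding = [1.0, 0.0, 0.0, 1.0, 1.0, 1.0]
text_embedding = [0.0, 2.0, 3.0, 3.0]
score = meta_similarity(image_embedding, text_embedding, block_dim=2)
```

Here both text blocks find a parallel image block, so the score is close to 1 even though the two embeddings have different lengths.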

Multi-view Granular-ball Contrastive Clustering

Dec 19, 2024

Abstract:Previous multi-view contrastive learning methods typically operate at two scales: instance-level and cluster-level. Instance-level approaches construct positive and negative pairs based on sample correspondences, aiming to bring positive pairs closer and push negative pairs further apart in the latent space. Cluster-level methods focus on calculating cluster assignments for samples under each view and maximize view consensus by reducing distribution discrepancies, e.g., minimizing KL divergence or maximizing mutual information. However, these two types of methods either introduce false negatives, leading to reduced model discriminability, or overlook local structures and cannot measure relationships between clusters across views explicitly. To this end, we propose a method named Multi-view Granular-ball Contrastive Clustering (MGBCC). MGBCC segments the sample set into coarse-grained granular balls, and establishes associations between intra-view and cross-view granular balls. These associations are reinforced in a shared latent space, thereby achieving multi-granularity contrastive learning. Granular balls lie between instances and clusters, naturally preserving the local topological structure of the sample set. We conduct extensive experiments to validate the effectiveness of the proposed method.

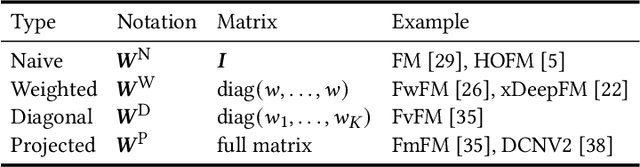

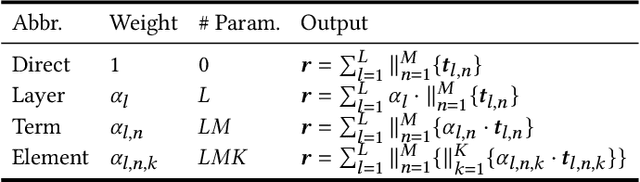

Towards Unifying Feature Interaction Models for Click-Through Rate Prediction

Nov 19, 2024

Abstract:Modeling feature interactions plays a crucial role in accurately predicting click-through rates (CTR) in advertising systems. To capture the intricate patterns of interaction, many existing models employ matrix-factorization techniques to represent features as lower-dimensional embedding vectors, enabling the modeling of interactions as products between these embeddings. In this paper, we propose a general framework called IPA to systematically unify these models. Our framework comprises three key components: the Interaction Function, which facilitates feature interaction; the Layer Pooling, which constructs higher-level interaction layers; and the Layer Aggregator, which combines the outputs of all layers to serve as input for the subsequent classifier. We demonstrate that most existing models can be categorized within our framework by making specific choices for these three components. Through extensive experiments and a dimensional collapse analysis, we evaluate the performance of these choices. Furthermore, by leveraging the most powerful components within our framework, we introduce a novel model that achieves competitive results compared to state-of-the-art CTR models. PFL achieves a significant GMV lift in an online A/B test on Tencent's advertising platform and has been deployed as the production model in several primary scenarios.

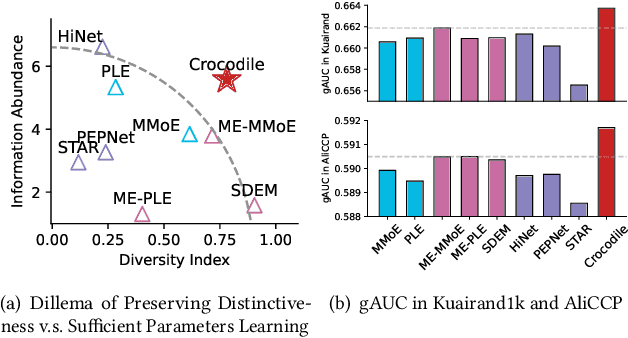

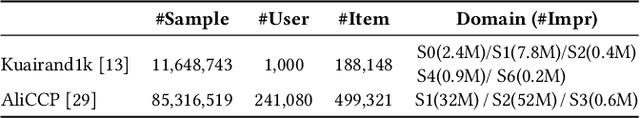

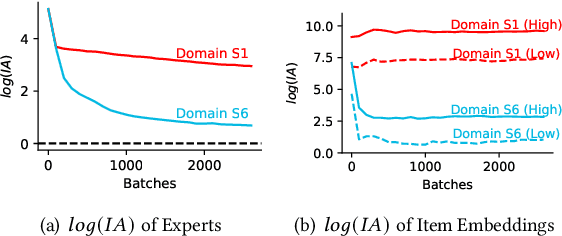

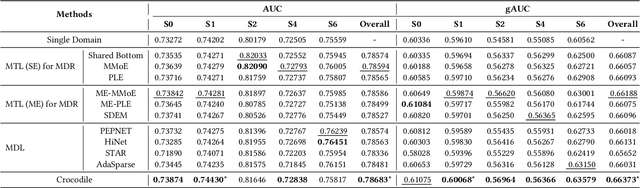

Disentangled Representation with Cross Experts Covariance Loss for Multi-Domain Recommendation

May 21, 2024

Abstract:Multi-domain learning (MDL) has emerged as a prominent research area aimed at enhancing the quality of personalized services. The key challenge in MDL lies in striking a balance between learning commonalities across domains while preserving the distinct characteristics of each domain. However, this gives rise to a challenging dilemma. On one hand, a model needs to leverage domain-specific modules, such as experts or embeddings, to preserve the uniqueness of each domain. On the other hand, due to the long-tailed distributions observed in real-world domains, some tail domains may lack sufficient samples to fully learn their corresponding modules. Unfortunately, existing approaches have not adequately addressed this dilemma. To address this issue, we propose a novel model called Crocodile, which stands for Cross-experts Covariance Loss for Disentangled Learning. Crocodile adopts a multi-embedding paradigm to facilitate model learning and employs a Covariance Loss on these embeddings to disentangle them. This disentanglement enables the model to capture diverse user interests across domains effectively. Additionally, we introduce a novel gating mechanism to further enhance the capabilities of Crocodile. Through empirical analysis, we demonstrate that our proposed method successfully resolves these two challenges and outperforms all state-of-the-art methods on publicly available datasets. We firmly believe that the analytical perspectives and design concept of disentanglement presented in our work can pave the way for future research in the field of MDL.
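
As a rough illustration of what a covariance-based disentanglement penalty can look like, the sketch below computes the squared cross-covariance between the outputs of two experts over a batch. The abstract does not give the exact form of Crocodile's Covariance Loss, so the function name and the squared-sum formulation are assumptions, not the paper's definition.

```python
def cross_covariance_penalty(emb_a, emb_b):
    """Sum of squared cross-covariances between two batches of embeddings.

    emb_a and emb_b are lists of rows (one row per sample). The penalty is
    zero when every dimension of emb_a is uncorrelated with every dimension
    of emb_b. This formulation is an illustrative assumption.
    """
    n = len(emb_a)
    dim_a, dim_b = len(emb_a[0]), len(emb_b[0])
    mean_a = [sum(row[j] for row in emb_a) / n for j in range(dim_a)]
    mean_b = [sum(row[k] for row in emb_b) / n for k in range(dim_b)]
    total = 0.0
    for j in range(dim_a):
        for k in range(dim_b):
            cov = sum(
                (emb_a[i][j] - mean_a[j]) * (emb_b[i][k] - mean_b[k])
                for i in range(n)
            ) / n
            total += cov * cov
    return total

# Two experts producing identical outputs are fully entangled.
expert_1 = [[1.0], [-1.0], [1.0], [-1.0]]
entangled = cross_covariance_penalty(expert_1, expert_1)

# An expert whose output varies independently of expert_1 incurs no penalty.
expert_2 = [[1.0], [1.0], [-1.0], [-1.0]]
disentangled = cross_covariance_penalty(expert_1, expert_2)
```

Minimizing a term of this kind alongside the task loss pushes the experts toward encoding different information, which is the intuition behind disentangling the multiple embeddings.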

DrugLLM: Open Large Language Model for Few-shot Molecule Generation

May 07, 2024

Abstract:Large Language Models (LLMs) have made great strides in areas such as language processing and computer vision. Despite the emergence of diverse techniques to improve few-shot learning capacity, current LLMs fall short in handling the languages in biology and chemistry. For example, they struggle to capture the relationship between molecule structure and pharmacochemical properties. Consequently, the few-shot learning capacity of small-molecule drug modification remains impeded. In this work, we introduce DrugLLM, an LLM tailored for drug design. During the training process, we employ Group-based Molecular Representation (GMR) to represent molecules, arranging them in sequences that reflect modifications aimed at enhancing specific molecular properties. DrugLLM learns how to modify molecules in drug discovery by predicting the next molecule based on past modifications. Extensive computational experiments demonstrate that DrugLLM can generate new molecules with expected properties based on limited examples, presenting a powerful few-shot molecule generation capacity.

Understanding the Ranking Loss for Recommendation with Sparse User Feedback

Mar 21, 2024

Abstract:Click-through rate (CTR) prediction holds significant importance in the realm of online advertising. While many existing approaches treat it as a binary classification problem and utilize binary cross entropy (BCE) as the optimization objective, recent advancements have indicated that combining BCE loss with ranking loss yields substantial performance improvements. However, the full efficacy of this combination loss remains incompletely understood. In this paper, we uncover a new challenge associated with BCE loss in scenarios with sparse positive feedback, such as CTR prediction: the gradient vanishing for negative samples. Subsequently, we introduce a novel perspective on the effectiveness of ranking loss in CTR prediction, highlighting its ability to generate larger gradients on negative samples, thereby mitigating their optimization issues and resulting in improved classification ability. Our perspective is supported by extensive theoretical analysis and empirical evaluation conducted on publicly available datasets. Furthermore, we successfully deployed the ranking loss in Tencent's online advertising system, achieving notable lifts of 0.70% and 1.26% in Gross Merchandise Value (GMV) for two main scenarios. The code for our approach is openly accessible at the following GitHub repository: https://github.com/SkylerLinn/Understanding-the-Ranking-Loss.
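
The gradient argument in this abstract can be checked numerically with a small sketch. It assumes a pairwise logistic (RankNet-style) ranking loss, which may not be the exact ranking loss used in the paper, and the logit values are made up to mimic a sparse-positive setting where both positives and negatives receive low scores.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def bce_grad_negative(z_neg: float) -> float:
    """Gradient of -log(1 - sigmoid(z)) with respect to z; it equals sigmoid(z)."""
    return sigmoid(z_neg)

def rank_grad_negative(z_pos: float, z_neg: float) -> float:
    """Gradient of log(1 + exp(-(z_pos - z_neg))) with respect to z_neg.

    It equals sigmoid(z_neg - z_pos), so it depends on the score gap
    rather than on the absolute value of the negative logit.
    """
    return sigmoid(z_neg - z_pos)

# Hypothetical logits: with sparse positives, both scores sit far below zero.
z_pos, z_neg = -3.0, -4.0
g_bce = bce_grad_negative(z_neg)           # about 0.018
g_rank = rank_grad_negative(z_pos, z_neg)  # about 0.269
```

Under these assumed values the BCE gradient on the negative sample is small because its predicted probability is already low, while the pairwise term still yields a much larger gradient, which matches the qualitative claim in the abstract.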
