Prayag Tiwari

Herculean: An Agentic Benchmark for Financial Intelligence

May 14, 2026

Abstract: As AI agents improve, the central question is no longer whether they can solve isolated well-defined financial tasks, but whether they can reliably carry out financial professional work. Existing financial benchmarks offer only a partial view of this ability, as they primarily evaluate static competencies such as question answering, retrieval, summarization, and classification. We introduce Herculean, the first skilled benchmark for agentic financial intelligence spanning four representative workflows, including Trading, Hedging, Market Insights, and Auditing. Each workflow is instantiated as a standardized MCP-based skill environment with its own tools, interaction dynamics, constraints, and success criteria, enabling consistent end-to-end assessment of heterogeneous agent systems. Across frontier agents, we find agents perform relatively well on Trading and Market Insights, but struggle substantially on Hedging and Auditing, where long-horizon coordination, state consistency, and structured verification are critical. Overall, our results point to a key gap in current agents in turning financial reasoning into dependable workflow execution in high-stakes financial workflows.

Concordia: Self-Improving Synthetic Tables for Federated LLMs

May 11, 2026

Abstract: Federated learning (FL) enables training large language models (LLMs) without sharing raw data, but adapting LLMs under strict data isolation and non-IID client distributions remains challenging in practice. Synthetic data offers a natural privacy-preserving surrogate for local training, yet existing federated pipelines typically treat synthetic generation as static or loosely coupled with downstream optimization, leading to rapidly diminishing utility under heterogeneous clients. We study federated adaptation of LLMs on tabular tasks where raw records and validation data cannot be shared, and local training must rely entirely on synthetic tables. We propose Concordia, a tri-level optimization framework that aligns synthetic data generation with federated validation utility despite these constraints. At the client level, models are adapted via parameter-efficient LoRA training on synthetic tables. Clients additionally learn lightweight utility scorers from private validation feedback to reweight synthetic samples during local training. At the outer level, each client refines its own synthetic table generator using group-relative policy optimization (GRPO), guided by an ensemble of heterogeneous scorers shared across clients, without aggregating generator parameters or exposing validation data. Experiments on privacy-sensitive tabular benchmarks from finance and healthcare demonstrate that Concordia consistently improves federated performance, cross-client stability, and robustness to distribution shift compared to static and decoupled synthetic-data baselines.
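The group-relative step in GRPO mentioned in the Concordia abstract can be illustrated with a minimal sketch. It assumes each candidate synthetic table is scored by averaging an ensemble of scorer functions, and that advantages are the rewards standardized within one sampled group. The names `ensemble_reward` and `group_relative_advantages`, the averaging rule, and the use of the population standard deviation are illustrative assumptions, not details taken from the paper.

```python
import statistics

def ensemble_reward(candidate, scorers):
    """Average the scores of several scorer callables (hypothetical aggregation rule)."""
    return sum(scorer(candidate) for scorer in scorers) / len(scorers)

def group_relative_advantages(rewards, eps=1e-8):
    """Standardize rewards within one group: (r - mean) / (std + eps).

    Assumption: population standard deviation; implementations differ on this.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

# Toy usage: three candidate "tables" (plain lists here) and two toy scorers.
scorers = [lambda t: float(len(t)), lambda t: float(sum(t))]
candidates = [[1, 1], [1, 2, 3], [0]]
rewards = [ensemble_reward(c, scorers) for c in candidates]
advantages = group_relative_advantages(rewards)
```

Candidates scoring above the group mean receive positive advantages and those below receive negative ones, so no separate value network is needed to form the baseline.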

A Novel Automatic Framework for Speaker Drift Detection in Synthesized Speech

Apr 07, 2026

Abstract: Recent diffusion-based text-to-speech (TTS) models achieve high naturalness and expressiveness, yet often suffer from speaker drift, a subtle, gradual shift in perceived speaker identity within a single utterance. This underexplored phenomenon undermines the coherence of synthetic speech, especially in long-form or interactive settings. We introduce the first automatic framework for detecting speaker drift by formulating it as a binary classification task over utterance-level speaker consistency. Our method computes cosine similarity across overlapping segments of synthesized speech and prompts large language models (LLMs) with structured representations to assess drift. We provide theoretical guarantees for cosine-based drift detection and demonstrate that speaker embeddings exhibit meaningful geometric clustering on the unit sphere. To support evaluation, we construct a high-quality synthetic benchmark with human-validated speaker drift annotations. Experiments with multiple state-of-the-art LLMs confirm the viability of this embedding-to-reasoning pipeline. Our work establishes speaker drift as a standalone research problem and bridges geometric signal analysis with LLM-based perceptual reasoning in modern TTS.
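The cosine-similarity step described in the abstract above can be sketched as follows. This is a rough illustration only: it assumes per-segment speaker embeddings are already available as plain vectors, and it swaps the paper's LLM-based assessment for a fixed threshold. The function names, the window parameters, and the threshold value of 0.8 are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("zero-norm embedding")
    return dot / (norm_u * norm_v)

def overlapping_windows(n_frames, win, hop):
    """Return (start, end) frame indices of windows of length `win` spaced `hop` apart."""
    if win <= 0 or hop <= 0:
        raise ValueError("win and hop must be positive")
    if n_frames < win:
        return []
    return [(s, s + win) for s in range(0, n_frames - win + 1, hop)]

def similarity_profile(segment_embeddings):
    """Similarity of every later segment to the first segment of the utterance."""
    ref = segment_embeddings[0]
    return [cosine(ref, e) for e in segment_embeddings[1:]]

def has_drift(segment_embeddings, threshold=0.8):
    """Flag drift when any segment falls below the (hypothetical) threshold."""
    if len(segment_embeddings) < 2:
        return False
    return min(similarity_profile(segment_embeddings)) < threshold

# Toy 2-D "embeddings": the second utterance rotates away from its first segment.
stable = [[1.0, 0.0], [0.99, 0.1], [0.98, 0.05]]
drifting = [[1.0, 0.0], [0.9, 0.3], [0.2, 1.0]]
```

In a pipeline like the one the abstract describes, the similarity profile would be passed on in a structured form for further assessment instead of being thresholded directly.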

Attribution Upsampling should Redistribute, Not Interpolate

Mar 17, 2026

Abstract: Attribution methods in explainable AI rely on upsampling techniques that were designed for natural images, not saliency maps. Standard bilinear and bicubic interpolation systematically corrupts attribution signals through aliasing, ringing, and boundary bleeding, producing spurious high-importance regions that misrepresent model reasoning. We identify that the core issue is treating attribution upsampling as an interpolation problem that operates in isolation from the model's reasoning, rather than a mass redistribution problem where model-derived semantic boundaries must govern how importance flows. We present Universal Semantic-Aware Upsampling (USU), a principled method that reformulates upsampling through ratio-form mass redistribution operators, provably preserving attribution mass and relative importance ordering. Extending the axiomatic tradition of feature attribution to upsampling, we formalize four desiderata for faithful upsampling and prove that interpolation structurally violates three of them. These same three force any redistribution operator into a ratio form; the fourth selects the unique potential within this family, yielding USU. Controlled experiments on models with known attribution priors verify USU's formal guarantees; evaluation across ImageNet, CIFAR-10, and CUB-200 confirms consistent faithfulness improvements and qualitatively superior, semantically coherent explanations.
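To make the idea of mass-preserving redistribution concrete, here is a generic sketch in which each coarse attribution cell is split among its fine-resolution pixels in proportion to non-negative guidance weights. It is not the USU operator from the paper: the `redistribute` function, the `weights` map (standing in for some model-derived guidance), and the uniform fallback for all-zero weights are assumptions made purely for illustration.

```python
import math

def redistribute(coarse, weights, factor):
    """Upsample a coarse H x W map to (H*factor) x (W*factor).

    Each coarse value is divided among its factor x factor block of fine pixels
    as value * w_p / sum(w in block), so the total attribution is unchanged.
    `weights` is a hypothetical non-negative guidance map at fine resolution.
    """
    height, width = len(coarse), len(coarse[0])
    fine = [[0.0] * (width * factor) for _ in range(height * factor)]
    for i in range(height):
        for j in range(width):
            block = [(i * factor + di, j * factor + dj)
                     for di in range(factor) for dj in range(factor)]
            total = sum(weights[r][c] for r, c in block)
            for r, c in block:
                # Fall back to a uniform split when the block has no guidance.
                share = weights[r][c] / total if total > 0 else 1.0 / len(block)
                fine[r][c] = coarse[i][j] * share
    return fine

coarse = [[4.0, 2.0]]
weights = [[1.0, 1.0, 0.0, 1.0],
           [1.0, 1.0, 0.0, 1.0]]
fine = redistribute(coarse, weights, factor=2)
total_fine = sum(sum(row) for row in fine)
```

Because every block's shares sum to one, `total_fine` matches the sum of `coarse`, whereas bilinear or bicubic interpolation gives no such guarantee.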

MMPG: MoE-based Adaptive Multi-Perspective Graph Fusion for Protein Representation Learning

Jan 15, 2026

Abstract: Graph Neural Networks (GNNs) have been widely adopted for Protein Representation Learning (PRL), as residue interaction networks can be naturally represented as graphs. Current GNN-based PRL methods typically rely on single-perspective graph construction strategies, which capture partial properties of residue interactions, resulting in incomplete protein representations. To address this limitation, we propose MMPG, a framework that constructs protein graphs from multiple perspectives and adaptively fuses them via Mixture of Experts (MoE) for PRL. MMPG constructs graphs from physical, chemical, and geometric perspectives to characterize different properties of residue interactions. To capture both perspective-specific features and their synergies, we develop an MoE module, which dynamically routes perspectives to specialized experts, where experts learn intrinsic features and cross-perspective interactions. We quantitatively verify that MoE automatically specializes experts in modeling distinct levels of interaction from individual representations, to pairwise inter-perspective synergies, and ultimately to a global consensus across all perspectives. Through integrating this multi-level information, MMPG produces superior protein representations and achieves advanced performance on four different downstream protein tasks.

All That Glisters Is Not Gold: A Benchmark for Reference-Free Counterfactual Financial Misinformation Detection

Jan 08, 2026

Abstract: We introduce RFC Bench, a benchmark for evaluating large language models on financial misinformation under realistic news. RFC Bench operates at the paragraph level and captures the contextual complexity of financial news, where meaning emerges from dispersed cues. The benchmark defines two complementary tasks: reference-free misinformation detection and comparison-based diagnosis using paired original and perturbed inputs. Experiments reveal a consistent pattern: performance is substantially stronger when comparative context is available, while reference-free settings expose significant weaknesses, including unstable predictions and elevated invalid outputs. These results indicate that current models struggle to maintain coherent belief states without external grounding. By highlighting this gap, RFC Bench provides a structured testbed for studying reference-free reasoning and advancing more reliable financial misinformation detection in real-world settings.

FinCriticalED: A Visual Benchmark for Financial Fact-Level OCR Evaluation

Nov 19, 2025

Abstract: We introduce FinCriticalED (Financial Critical Error Detection), a visual benchmark for evaluating OCR and vision-language models on financial documents at the fact level. Financial documents contain visually dense and table-heavy layouts where numerical and temporal information is tightly coupled with structure. In high-stakes settings, small OCR mistakes such as sign inversion or shifted dates can lead to materially different interpretations, while traditional OCR metrics like ROUGE and edit distance capture only surface-level text similarity. FinCriticalED provides 500 image-HTML pairs with expert annotations covering over seven hundred numerical and temporal financial facts. It makes three key contributions. First, it establishes the first fact-level evaluation benchmark for financial document understanding, shifting evaluation from lexical overlap to domain-critical factual correctness. Second, all annotations are created and verified by financial experts with strict quality control over signs, magnitudes, and temporal expressions. Third, we develop an LLM-as-Judge evaluation pipeline that performs structured fact extraction and contextual verification for visually complex financial documents. We benchmark OCR systems, open-source vision-language models, and proprietary models on FinCriticalED. Results show that although the strongest proprietary models achieve the highest factual accuracy, substantial errors remain in visually intricate numerical and temporal contexts. Through quantitative evaluation and expert case studies, FinCriticalED provides a rigorous foundation for advancing visual factual precision in financial and other precision-critical domains.

Exploring the correlation between the type of music and the emotions evoked: A study using subjective questionnaires and EEG

Oct 30, 2025

Abstract: This work examines how different types of music affect human emotions. While participants listened to music, a subjective survey was administered and brain activity was measured using an EEG helmet. The aim is to demonstrate the impact of different music genres on emotions. The research involved a diverse group of participants of different genders and musical preferences, which captured a wide range of emotional responses to music. After the experiment, the relationship between the respondents' questionnaires and the EEG signals was analyzed. The analysis revealed connections between emotions and observed brain activity.

Self-Calibrated Consistency can Fight Back for Adversarial Robustness in Vision-Language Models

Oct 26, 2025

Abstract: Pre-trained vision-language models (VLMs) such as CLIP have demonstrated strong zero-shot capabilities across diverse domains, yet remain highly vulnerable to adversarial perturbations that disrupt image-text alignment and compromise reliability. Existing defenses typically rely on adversarial fine-tuning with labeled data, limiting their applicability in zero-shot settings. In this work, we identify two key weaknesses of current CLIP adversarial attacks -- lack of semantic guidance and vulnerability to view variations -- collectively termed semantic and viewpoint fragility. To address these challenges, we propose Self-Calibrated Consistency (SCC), an effective test-time defense. SCC consists of two complementary modules: Semantic consistency, which leverages soft pseudo-labels from counterattack warm-up and multi-view predictions to regularize cross-modal alignment and separate the target embedding from confusable negatives; and Spatial consistency, aligning perturbed visual predictions via augmented views to stabilize inference under adversarial perturbations. Together, these modules form a plug-and-play inference strategy. Extensive experiments on 22 benchmarks under diverse attack settings show that SCC consistently improves the zero-shot robustness of CLIP while maintaining accuracy, and can be seamlessly integrated with other VLMs for further gains. These findings highlight the great potential of establishing an adversarially robust paradigm from CLIP, with implications extending to broader vision-language domains such as BioMedCLIP.

Quantum Long Short-term Memory with Differentiable Architecture Search

Aug 20, 2025

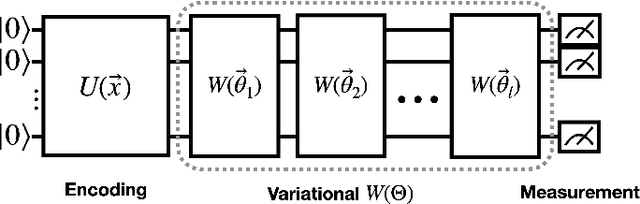

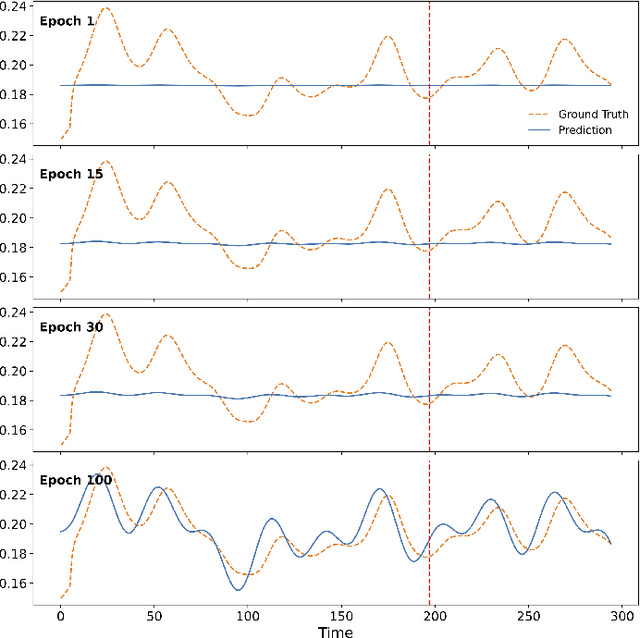

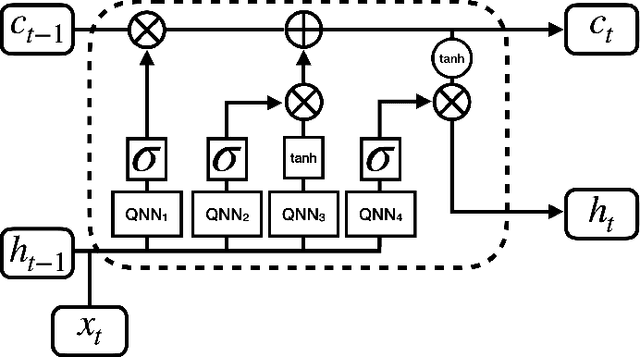

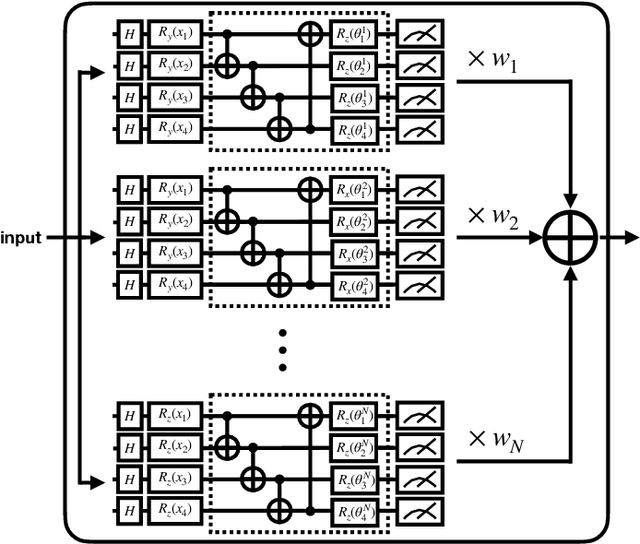

Abstract: Recent advances in quantum computing and machine learning have given rise to quantum machine learning (QML), with growing interest in learning from sequential data. Quantum recurrent models like QLSTM are promising for time-series prediction, NLP, and reinforcement learning. However, designing effective variational quantum circuits (VQCs) remains challenging and often task-specific. To address this, we propose DiffQAS-QLSTM, an end-to-end differentiable framework that optimizes both VQC parameters and architecture selection during training. Our results show that DiffQAS-QLSTM consistently outperforms handcrafted baselines, achieving lower loss across diverse test settings. This approach opens the door to scalable and adaptive quantum sequence learning.