Michael C. Yip

Safe Stochastic Explorer: Enabling Safe Goal Driven Exploration in Stochastic Environments and Safe Interaction with Unknown Objects

Jan 31, 2026Abstract:Autonomous robots operating in unstructured, safety-critical environments, from planetary exploration to warehouses and homes, must learn to safely navigate and interact with their surroundings despite limited prior knowledge. Current methods for safe control, such as Hamilton-Jacobi Reachability and Control Barrier Functions, assume known system dynamics. Meanwhile existing safe exploration techniques often fail to account for the unavoidable stochasticity inherent when operating in unknown real world environments, such as an exploratory rover skidding over an unseen surface or a household robot pushing around unmapped objects in a pantry. To address this critical gap, we propose Safe Stochastic Explorer (S.S.Explorer) a novel framework for safe, goal-driven exploration under stochastic dynamics. Our approach strategically balances safety and information gathering to reduce uncertainty about safety in the unknown environment. We employ Gaussian Processes to learn the unknown safety function online, leveraging their predictive uncertainty to guide information-gathering actions and provide probabilistic bounds on safety violations. We first present our method for discrete state space environments and then introduce a scalable relaxation to effectively extend this approach to continuous state spaces. Finally we demonstrate how this framework can be naturally applied to ensure safe physical interaction with multiple unknown objects. Extensive validation in simulation and demonstrative hardware experiments showcase the efficacy of our method, representing a step forward toward enabling reliable widespread robot autonomy in complex, uncertain environments.

Learning to Nudge: A Scalable Barrier Function Framework for Safe Robot Interaction in Dense Clutter

Jan 06, 2026Abstract:Robots operating in everyday environments must navigate and manipulate within densely cluttered spaces, where physical contact with surrounding objects is unavoidable. Traditional safety frameworks treat contact as unsafe, restricting robots to collision avoidance and limiting their ability to function in dense, everyday settings. As the number of objects grows, model-based approaches for safe manipulation become computationally intractable; meanwhile, learned methods typically tie safety to the task at hand, making them hard to transfer to new tasks without retraining. In this work we introduce Dense Contact Barrier Functions(DCBF). Our approach bypasses the computational complexity of explicitly modeling multi-object dynamics by instead learning a composable, object-centric function that implicitly captures the safety constraints arising from physical interactions. Trained offline on interactions with a few objects, the learned DCBFcomposes across arbitrary object sets at runtime, producing a single global safety filter that scales linearly and transfers across tasks without retraining. We validate our approach through simulated experiments in dense clutter, demonstrating its ability to enable collision-free navigation and safe, contact-rich interaction in suitable settings.

Characterization and Evaluation of Screw-Based Locomotion Across Aquatic, Granular, and Transitional Media

Nov 15, 2025Abstract:Screw-based propulsion systems offer promising capabilities for amphibious mobility, yet face significant challenges in optimizing locomotion across water, granular materials, and transitional environments. This study presents a systematic investigation into the locomotion performance of various screw configurations in media such as dry sand, wet sand, saturated sand, and water. Through a principles-first approach to analyze screw performance, it was found that certain parameters are dominant in their impact on performance. Depending on the media, derived parameters inspired from optimizing heat sink design help categorize performance within the dominant design parameters. Our results provide specific insights into screw shell design and adaptive locomotion strategies to enhance the performance of screw-based propulsion systems for versatile amphibious applications.

ARCSnake V2: An Amphibious Multi-Domain Screw-Propelled Snake-Like Robot

Nov 15, 2025Abstract:Robotic exploration in extreme environments such as caves, oceans, and planetary surfaces pose significant challenges, particularly in locomotion across diverse terrains. Conventional wheeled or legged robots often struggle in these contexts due to surface variability. This paper presents ARCSnake V2, an amphibious, screw propelled, snake like robot designed for teleoperated or autonomous locomotion across land, granular media, and aquatic environments. ARCSnake V2 combines the high mobility of hyper redundant snake robots with the terrain versatility of Archimedean screw propulsion. Key contributions include a water sealed mechanical design with serially linked screw and joint actuation, an integrated buoyancy control system, and teleoperation via a kinematically matched handheld controller. The robots design and control architecture enable multiple locomotion modes screwing, wheeling, and sidewinding with smooth transitions between them. Extensive experiments validate its underwater maneuverability, communication robustness, and force regulated actuation. These capabilities position ARCSnake V2 as a versatile platform for exploration, search and rescue, and environmental monitoring in multi domain settings.

Differentiable Rendering-based Pose Estimation for Surgical Robotic Instruments

Mar 07, 2025

Abstract:Robot pose estimation is a challenging and crucial task for vision-based surgical robotic automation. Typical robotic calibration approaches, however, are not applicable to surgical robots, such as the da Vinci Research Kit (dVRK), due to joint angle measurement errors from cable-drives and the partially visible kinematic chain. Hence, previous works in surgical robotic automation used tracking algorithms to estimate the pose of the surgical tool in real-time and compensate for the joint angle errors. However, a big limitation of these previous tracking works is the initialization step which relied on only keypoints and SolvePnP. In this work, we fully explore the potential of geometric primitives beyond just keypoints with differentiable rendering, cylinders, and construct a versatile pose matching pipeline in a novel pose hypothesis space. We demonstrate the state-of-the-art performance of our single-shot calibration method with both calibration consistency and real surgical tasks. As a result, this marker-less calibration approach proves to be a robust and generalizable initialization step for surgical tool tracking.

Back to Base: Towards Hands-Off Learning via Safe Resets with Reach-Avoid Safety Filters

Jan 05, 2025Abstract:Designing controllers that accomplish tasks while guaranteeing safety constraints remains a significant challenge. We often want an agent to perform well in a nominal task, such as environment exploration, while ensuring it can avoid unsafe states and return to a desired target by a specific time. In particular we are motivated by the setting of safe, efficient, hands-off training for reinforcement learning in the real world. By enabling a robot to safely and autonomously reset to a desired region (e.g., charging stations) without human intervention, we can enhance efficiency and facilitate training. Safety filters, such as those based on control barrier functions, decouple safety from nominal control objectives and rigorously guarantee safety. Despite their success, constructing these functions for general nonlinear systems with control constraints and system uncertainties remains an open problem. This paper introduces a safety filter obtained from the value function associated with the reach-avoid problem. The proposed safety filter minimally modifies the nominal controller while avoiding unsafe regions and guiding the system back to the desired target set. By preserving policy performance while allowing safe resetting, we enable efficient hands-off reinforcement learning and advance the feasibility of safe training for real world robots. We demonstrate our approach using a modified version of soft actor-critic to safely train a swing-up task on a modified cartpole stabilization problem.

Haptic Shoulder for Rendering Biomechanically Accurate Joint Limits for Human-Robot Physical Interactions

Sep 20, 2024Abstract:Human-robot physical interaction (pHRI) is a rapidly evolving research field with significant implications for physical therapy, search and rescue, and telemedicine. However, a major challenge lies in accurately understanding human constraints and safety in human-robot physical experiments without an IRB and physical human experiments. Concerns regarding human studies include safety concerns, repeatability, and scalability of the number and diversity of participants. This paper examines whether a physical approximation can serve as a stand-in for human subjects to enhance robot autonomy for physical assistance. This paper introduces the SHULDRD (Shoulder Haptic Universal Limb Dynamic Repositioning Device), an economical and anatomically similar device designed for real-time testing and deployment of pHRI planning tasks onto robots in the real world. SHULDRD replicates human shoulder motion, providing crucial force feedback and safety data. The device's open-source CAD and software facilitate easy construction and use, ensuring broad accessibility for researchers. By providing a flexible platform able to emulate infinite human subjects, ensure repeatable trials, and provide quantitative metrics to assess the effectiveness of the robotic intervention, SHULDRD aims to improve the safety and efficacy of human-robot physical interactions.

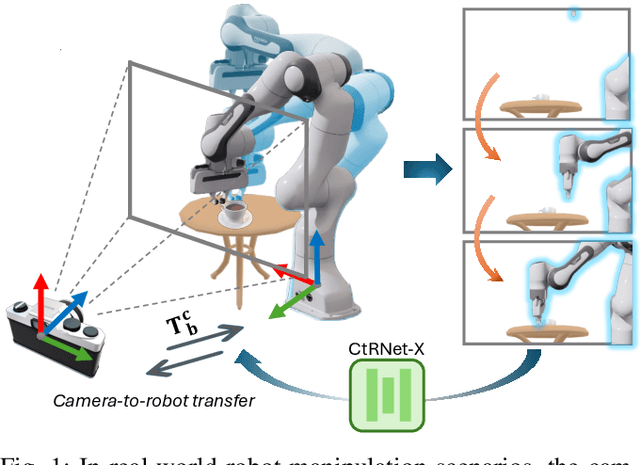

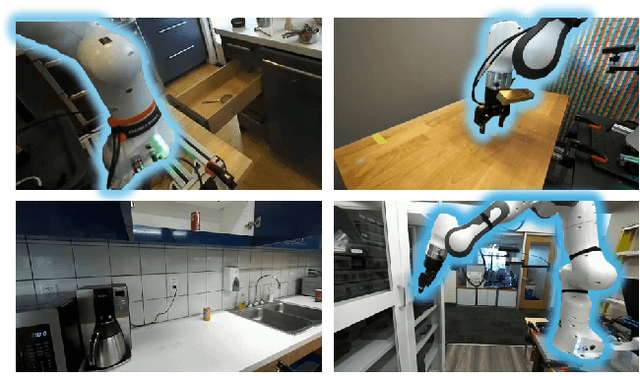

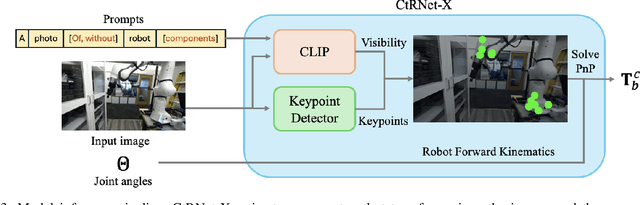

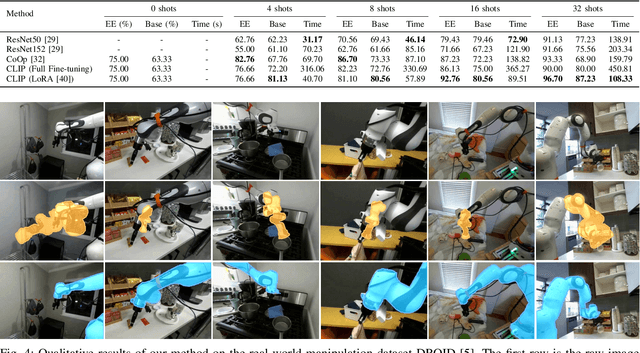

CtRNet-X: Camera-to-Robot Pose Estimation in Real-world Conditions Using a Single Camera

Sep 16, 2024

Abstract:Camera-to-robot calibration is crucial for vision-based robot control and requires effort to make it accurate. Recent advancements in markerless pose estimation methods have eliminated the need for time-consuming physical setups for camera-to-robot calibration. While the existing markerless pose estimation methods have demonstrated impressive accuracy without the need for cumbersome setups, they rely on the assumption that all the robot joints are visible within the camera's field of view. However, in practice, robots usually move in and out of view, and some portion of the robot may stay out-of-frame during the whole manipulation task due to real-world constraints, leading to a lack of sufficient visual features and subsequent failure of these approaches. To address this challenge and enhance the applicability to vision-based robot control, we propose a novel framework capable of estimating the robot pose with partially visible robot manipulators. Our approach leverages the Vision-Language Models for fine-grained robot components detection, and integrates it into a keypoint-based pose estimation network, which enables more robust performance in varied operational conditions. The framework is evaluated on both public robot datasets and self-collected partial-view datasets to demonstrate our robustness and generalizability. As a result, this method is effective for robot pose estimation in a wider range of real-world manipulation scenarios.

Autonomous Image-to-Grasp Robotic Suturing Using Reliability-Driven Suture Thread Reconstruction

Aug 29, 2024

Abstract:Automating suturing during robotically-assisted surgery reduces the burden on the operating surgeon, enabling them to focus on making higher-level decisions rather than fatiguing themselves in the numerous intricacies of a surgical procedure. Accurate suture thread reconstruction and grasping are vital prerequisites for suturing, particularly for avoiding entanglement with surgical tools and performing complex thread manipulation. However, such methods must be robust to heavy perceptual degradation resulting from heavy noise and thread feature sparsity from endoscopic images. We develop a reconstruction algorithm that utilizes quadratic programming optimization to fit smooth splines to thread observations, satisfying reliability bounds estimated from measured observation noise. Additionally, we craft a grasping policy that generates gripper trajectories that maximize the probability of a successful grasp. Our full image-to-grasp pipeline is rigorously evaluated with over 400 grasping trials, exhibiting state-of-the-art accuracy. We show that this strategy can be applied to the various techniques in autonomous suture needle manipulation to achieve autonomous surgery in a generalizable way.

JIGGLE: An Active Sensing Framework for Boundary Parameters Estimation in Deformable Surgical Environments

May 16, 2024Abstract:Surgical automation can improve the accessibility and consistency of life saving procedures. Most surgeries require separating layers of tissue to access the surgical site, and suturing to reattach incisions. These tasks involve deformable manipulation to safely identify and alter tissue attachment (boundary) topology. Due to poor visual acuity and frequent occlusions, surgeons tend to carefully manipulate the tissue in ways that enable inference of the tissue's attachment points without causing unsafe tearing. In a similar fashion, we propose JIGGLE, a framework for estimation and interactive sensing of unknown boundary parameters in deformable surgical environments. This framework has two key components: (1) a probabilistic estimation to identify the current attachment points, achieved by integrating a differentiable soft-body simulator with an extended Kalman filter (EKF), and (2) an optimization-based active control pipeline that generates actions to maximize information gain of the tissue attachments, while simultaneously minimizing safety costs. The robustness of our estimation approach is demonstrated through experiments with real animal tissue, where we infer sutured attachment points using stereo endoscope observations. We also demonstrate the capabilities of our method in handling complex topological changes such as cutting and suturing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge