Lei He

PodEval: A Multimodal Evaluation Framework for Podcast Audio Generation

Oct 01, 2025

Abstract: Recently, an increasing number of multimodal (text and audio) benchmarks have emerged, primarily focusing on evaluating models' understanding capabilities. However, the assessment of generative capabilities remains underexplored, especially for open-ended long-form content generation. Significant challenges include the absence of a standard reference answer, the lack of unified evaluation metrics, and the difficulty of controlling human judgments. In this work, we take podcast-like audio generation as a starting point and propose PodEval, a comprehensive and well-designed open-source evaluation framework. In this framework: 1) We construct a real-world podcast dataset spanning diverse topics, serving as a reference for human-level creative quality. 2) We introduce a multimodal evaluation strategy that decomposes the complex task into three dimensions (text, speech, and audio), with different evaluation emphases on "Content" and "Format". 3) For each modality, we design corresponding evaluation methods involving both objective metrics and subjective listening tests. We use representative podcast generation systems (open-source, closed-source, and human-made) in our experiments. The results offer in-depth analysis and insights into podcast generation, demonstrating the effectiveness of PodEval in evaluating open-ended long-form audio. This project is open-source to facilitate public use: https://github.com/yujxx/PodEval.

Fine-Tuning Large Multimodal Models for Automatic Pronunciation Assessment

Sep 19, 2025

Abstract: Automatic Pronunciation Assessment (APA) is critical for Computer-Assisted Language Learning (CALL), requiring evaluation across multiple granularities and aspects. Large Multimodal Models (LMMs) present new opportunities for APA, but their effectiveness in fine-grained assessment remains uncertain. This work investigates fine-tuning LMMs for APA using the Speechocean762 dataset and a private corpus. Fine-tuning significantly outperforms zero-shot settings and achieves competitive results on single-granularity tasks compared to public and commercial systems. The model performs well at the word and sentence levels, while phoneme-level assessment remains challenging. We also observe that the Pearson Correlation Coefficient (PCC) reaches 0.9, whereas Spearman's Rank Correlation Coefficient (SCC) remains around 0.6, suggesting that SCC better reflects ordinal consistency. These findings highlight both the promise and limitations of LMMs for APA and point to future work on fine-grained modeling and rank-aware evaluation.
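The gap between PCC and SCC is easy to reproduce: Pearson's r is dominated by a few extreme scores, while Spearman's rho only looks at ranks. The Python sketch below implements both from their definitions (Spearman as Pearson on average ranks, so ties are handled). The score lists are made-up illustrative numbers, not data from the paper.

```python
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r: covariance divided by the product of standard deviations."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: Pearson's r computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Illustrative (made-up) scores: one extreme item, mid-range order reversed.
human = [1.0, 2.0, 2.1, 2.2, 2.3, 2.4, 10.0]
model = [1.0, 2.4, 2.3, 2.2, 2.1, 2.0, 10.0]
print(f"PCC = {pearson(human, model):.3f}, SCC = {spearman(human, model):.3f}")
```

With these inputs PCC is above 0.99 because the single extreme pair dominates the variance, while SCC is only about 0.29 because the five mid-range items are ranked in reverse. This is the kind of ordinal disagreement that a high PCC can hide.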

TransforMARS: Fault-Tolerant Self-Reconfiguration for Arbitrarily Shaped Modular Aerial Robot Systems

Sep 17, 2025

Abstract: Modular Aerial Robot Systems (MARS) consist of multiple drone modules that are physically bound together to form a single structure for flight. By exploiting structural redundancy, MARS can be reconfigured into different formations to mitigate unit or rotor failures and maintain stable flight. Prior work on MARS self-reconfiguration has focused solely on maximizing controllability margins to tolerate a single rotor or unit fault in rectangular-shaped MARS. We propose TransforMARS, a general fault-tolerant reconfiguration framework that transforms arbitrarily shaped MARS under multiple rotor and unit faults while ensuring continuous in-air stability. Specifically, we develop algorithms that first identify and construct minimum controllable assemblies containing faulty units. We then plan feasible disassembly-assembly sequences that transport MARS units or subassemblies to form the target configuration. Our approach enables more flexible and practically feasible reconfiguration. We validate TransforMARS on challenging arbitrarily shaped MARS configurations, demonstrating substantial improvements over prior work in both the range of configurations handled and the number of faults tolerated. The videos and source code of this work are available at the anonymous repository: https://anonymous.4open.science/r/TransforMARS-1030/

SIGN: Safety-Aware Image-Goal Navigation for Autonomous Drones via Reinforcement Learning

Aug 17, 2025

Abstract: Image-goal navigation (ImageNav) tasks a robot with autonomously exploring an unknown environment and reaching a location that visually matches a given target image. While prior works primarily study ImageNav for ground robots, enabling this capability for autonomous drones is substantially more challenging due to their need for high-frequency feedback control and global localization for stable flight. In this paper, we propose a novel sim-to-real framework that leverages visual reinforcement learning (RL) to achieve ImageNav for drones. To enhance visual representation ability, our approach trains the vision backbone with auxiliary tasks, including image perturbations and future transition prediction, which results in more effective policy training. The proposed algorithm enables end-to-end ImageNav with direct velocity control, eliminating the need for external localization. Furthermore, we integrate a depth-based safety module for real-time obstacle avoidance, allowing the drone to navigate safely in cluttered environments. Unlike most existing drone navigation methods that focus solely on reference tracking or obstacle avoidance, our framework supports comprehensive navigation behaviors (autonomous exploration, obstacle avoidance, and image-goal seeking) without requiring explicit global mapping. Code and model checkpoints will be released upon acceptance.
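The abstract does not describe how the depth-based safety module works. As a generic illustration only, a common pattern is to limit the policy's forward velocity according to the nearest obstacle in the central part of the depth image. The function name, window choice, and the `stop_dist`/`slow_dist` thresholds below are hypothetical and are not taken from SIGN.

```python
from typing import List, Tuple

Velocity = Tuple[float, float, float]  # (forward, lateral, vertical) in m/s


def safe_velocity(
    depth: List[List[float]],
    v_cmd: Velocity,
    stop_dist: float = 0.5,
    slow_dist: float = 2.0,
) -> Velocity:
    """Scale forward velocity by the nearest valid depth in the image centre.

    Hypothetical generic depth-threshold filter; not the SIGN safety module.
    depth: H x W depth image in metres; non-positive values are treated as invalid.
    """
    h, w = len(depth), len(depth[0])
    # Central window: roughly the middle third of rows and columns.
    rows = range(h // 3, h - h // 3)
    cols = range(w // 3, w - w // 3)
    valid = [depth[r][c] for r in rows for c in cols if depth[r][c] > 0]
    vx, vy, vz = v_cmd
    if vx <= 0:
        return v_cmd  # not moving toward the viewed region: leave the command unchanged
    if not valid:
        return (0.0, vy, vz)  # no usable depth: conservatively stop forward motion
    nearest = min(valid)
    if nearest <= stop_dist:
        scale = 0.0
    elif nearest >= slow_dist:
        scale = 1.0
    else:
        scale = (nearest - stop_dist) / (slow_dist - stop_dist)  # linear ramp
    return (vx * scale, vy, vz)
```

Only the forward component is limited here, so lateral and vertical commands from the policy pass through and can still steer the drone around the obstacle.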

Decoupled Functional Evaluation of Autonomous Driving Models via Feature Map Quality Scoring

Aug 12, 2025

Abstract: End-to-end models are emerging as the mainstream approach in autonomous driving perception and planning. However, the lack of explicit supervision signals for intermediate functional modules leads to opaque operational mechanisms and limited interpretability, making it challenging for traditional methods to independently evaluate and train these modules. To address this issue, this study builds on an evaluation framework based on the representation similarity between feature maps and ground truth, and proposes an independent evaluation method based on the Feature Map Convergence Score (FMCS). A Dual-Granularity Dynamic Weighted Scoring System (DG-DWSS) is constructed, formulating a unified quantitative metric, the Feature Map Quality Score, to enable comprehensive evaluation of the quality of feature maps generated by functional modules. A CLIP-based Feature Map Quality Evaluation Network (CLIP-FMQE-Net) is further developed, combining feature-truth encoders and quality score prediction heads to enable real-time quality analysis of these feature maps. Experimental results on the nuScenes dataset demonstrate that integrating our evaluation module into training improves 3D object detection performance, achieving a 3.89 percent gain in NDS (nuScenes Detection Score). These results verify the effectiveness of our method in enhancing feature representation quality and overall model performance.
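The abstract names a dual-granularity, dynamically weighted score of how well a feature map matches its ground-truth representation, but gives no formula. The Python sketch below is a hypothetical stand-in: it compares a single-channel feature map with a target map by cosine similarity at two granularities (whole map and non-overlapping patches) and blends them with a fixed weight `alpha` where the paper uses a dynamic one. All names and the choice of cosine similarity are assumptions.

```python
import math
from typing import List

Matrix = List[List[float]]


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; defined as 0.0 when either vector is all zeros."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def patches(m: Matrix, p: int) -> List[List[float]]:
    """Flatten non-overlapping p x p patches (assumes H and W are divisible by p)."""
    h, w = len(m), len(m[0])
    return [
        [m[r][c] for r in range(i, i + p) for c in range(j, j + p)]
        for i in range(0, h, p)
        for j in range(0, w, p)
    ]


def dual_granularity_score(feat: Matrix, truth: Matrix, p: int = 2, alpha: float = 0.5) -> float:
    """alpha * global similarity + (1 - alpha) * mean patch similarity (hypothetical)."""
    global_sim = cosine([x for row in feat for x in row], [x for row in truth for x in row])
    local = [cosine(a, b) for a, b in zip(patches(feat, p), patches(truth, p))]
    return alpha * global_sim + (1 - alpha) * sum(local) / len(local)
```

The patch term penalises maps that match the target on average but misplace local structure, which a single global similarity can miss.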

VLM-3D: End-to-End Vision-Language Models for Open-World 3D Perception

Aug 12, 2025

Abstract: Open-set perception in complex traffic environments poses a critical challenge for autonomous driving systems, particularly in identifying previously unseen object categories, which is vital for ensuring safety. Vision-Language Models (VLMs), with their rich world knowledge and strong semantic reasoning capabilities, offer new possibilities for addressing this task. However, existing approaches typically use VLMs to extract visual features and couple them with traditional object detectors, resulting in multi-stage error propagation that hinders perception accuracy. To overcome this limitation, we propose VLM-3D, the first end-to-end framework that enables VLMs to perform 3D geometric perception in autonomous driving scenarios. VLM-3D incorporates Low-Rank Adaptation (LoRA) to efficiently adapt VLMs to driving tasks with minimal computational overhead, and introduces a joint semantic-geometric loss design: a token-level semantic loss is applied during early training to ensure stable convergence, while a 3D IoU loss is introduced in later stages to refine the accuracy of 3D bounding box predictions. Evaluations on the nuScenes dataset demonstrate that the proposed joint semantic-geometric loss in VLM-3D leads to a 12.8% improvement in perception accuracy, validating the effectiveness of our method.
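The later-stage geometric term in VLM-3D is a 3D IoU loss. The Python sketch below shows the idea in simplified form: it computes IoU for axis-aligned boxes (centre plus size, ignoring the yaw angle that nuScenes boxes carry) and adds a 1 - IoU term to the semantic loss only after a switch step. The box layout, `switch_step`, `iou_weight`, and keeping the semantic loss active after the switch are all assumptions for illustration, not details from the abstract.

```python
from typing import Tuple

Box = Tuple[float, float, float, float, float, float]  # (cx, cy, cz, length, width, height)


def iou_3d_axis_aligned(a: Box, b: Box) -> float:
    """3D IoU of two axis-aligned boxes given as centre and size (yaw ignored)."""
    inter = 1.0
    for i in range(3):  # overlap along x, y, z
        lo = max(a[i] - a[i + 3] / 2, b[i] - b[i + 3] / 2)
        hi = min(a[i] + a[i + 3] / 2, b[i] + b[i + 3] / 2)
        if hi <= lo:
            return 0.0  # no overlap on this axis means no 3D overlap
        inter *= hi - lo
    vol_a = a[3] * a[4] * a[5]
    vol_b = b[3] * b[4] * b[5]
    return inter / (vol_a + vol_b - inter)


def joint_loss(
    semantic_loss: float,
    pred: Box,
    target: Box,
    step: int,
    switch_step: int,
    iou_weight: float = 1.0,
) -> float:
    """Semantic loss throughout; the 1 - IoU term is enabled from switch_step on (hypothetical schedule)."""
    loss = semantic_loss
    if step >= switch_step:
        loss += iou_weight * (1.0 - iou_3d_axis_aligned(pred, target))
    return loss
```

Delaying the IoU term means the geometric gradient only arrives once the model already emits well-formed box tokens, which matches the abstract's motivation of stable early convergence.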

CBDES MoE: Hierarchically Decoupled Mixture-of-Experts for Functional Modules in Autonomous Driving

Aug 11, 2025

Abstract: Bird's Eye View (BEV) perception systems based on multi-sensor feature fusion have become a fundamental cornerstone for end-to-end autonomous driving. However, existing multi-modal BEV methods commonly suffer from limited input adaptability, constrained modeling capacity, and suboptimal generalization. To address these challenges, we propose a hierarchically decoupled Mixture-of-Experts architecture at the functional module level, termed Computing Brain DEvelopment System Mixture-of-Experts (CBDES MoE). CBDES MoE integrates multiple structurally heterogeneous expert networks with a lightweight Self-Attention Router (SAR) gating mechanism, enabling dynamic expert path selection and sparse, input-aware efficient inference. To the best of our knowledge, this is the first modular Mixture-of-Experts framework constructed at the functional module granularity within the autonomous driving domain. Extensive evaluations on the real-world nuScenes dataset demonstrate that CBDES MoE consistently outperforms fixed single-expert baselines in 3D object detection. Compared to the strongest single-expert model, CBDES MoE achieves a 1.6-point increase in mAP and a 4.1-point improvement in NDS, demonstrating the effectiveness and practical advantages of the proposed approach.
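The sparse, input-aware routing described above follows the general top-k mixture-of-experts pattern. A minimal Python sketch, assuming a plain linear router in place of CBDES MoE's Self-Attention Router and toy callables as experts: the router scores every expert, but only the top-k are evaluated and their outputs are combined with renormalised gate weights. `moe_forward` and `router_weights` are hypothetical names.

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[Sequence[float]], List[float]]


def softmax(z: Sequence[float]) -> List[float]:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def moe_forward(
    x: Sequence[float],
    experts: Sequence[Expert],
    router_weights: Sequence[Sequence[float]],
    k: int = 1,
) -> Tuple[List[float], List[int]]:
    """Top-k sparse mixture of experts with a linear router (stand-in for SAR)."""
    # One routing logit per expert: dot product of the input with that expert's router row.
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in router_weights]
    probs = softmax(logits)
    top = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalise gates over the selected experts
    out: List[float] = []
    for i in top:  # only the selected experts are evaluated: the sparse part
        y = experts[i](x)
        gate = probs[i] / norm
        out = [gate * v for v in y] if not out else [o + gate * v for o, v in zip(out, y)]
    return out, top
```

With `k=1` each input pays for a single expert's computation, which is where the efficiency of sparse inference comes from; a larger `k` trades compute for smoother blending between experts.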

FMCE-Net++: Feature Map Convergence Evaluation and Training

Aug 08, 2025

Abstract: Deep Neural Networks (DNNs) face interpretability challenges due to their opaque internal representations. While Feature Map Convergence Evaluation (FMCE) quantifies module-level convergence via Feature Map Convergence Scores (FMCS), it lacks experimental validation and closed-loop integration. To address this limitation, we propose FMCE-Net++, a novel training framework that integrates a pretrained, frozen FMCE-Net as an auxiliary head. This module generates FMCS predictions, which, combined with task labels, jointly supervise backbone optimization through a Representation Auxiliary Loss (RAL). The RAL dynamically balances the primary classification loss and feature convergence optimization via a tunable Representation Abstraction Factor. Extensive experiments on MNIST, CIFAR-10, FashionMNIST, and CIFAR-100 demonstrate that FMCE-Net++ consistently enhances model performance without architectural modifications or additional data. Key experimental outcomes include accuracy gains of +1.16 percentage points (ResNet-50/CIFAR-10) and +1.08 percentage points (ShuffleNet v2/CIFAR-100), validating that FMCE-Net++ can effectively raise state-of-the-art performance ceilings.
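The abstract does not give the RAL formula. A common way to balance a primary loss against an auxiliary signal is a convex combination; the sketch below assumes that form, writes the Representation Abstraction Factor as a weight $\lambda$, and uses a hypothetical auxiliary term that rewards a higher predicted convergence score $\widehat{s}$ (assumed normalised to $[0, 1]$). Here $h$ is the backbone, $f$ the classifier on top of it, and $g$ the frozen FMCE-Net head. It is an illustration, not the paper's definition.

```latex
\mathcal{L}_{\text{RAL}}
  = (1 - \lambda)\,\mathcal{L}_{\text{CE}}\big(f(h(x)),\, y\big)
  + \lambda\,\big(1 - \widehat{s}\,\big),
\qquad \widehat{s} = g\big(h(x)\big) \in [0, 1],
\quad \lambda \in [0, 1]
```

Under this assumed form, $\lambda = 0$ recovers plain cross-entropy training, and because $g$ is frozen, the gradient of the second term passes through $g$ but updates only the backbone $h$.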

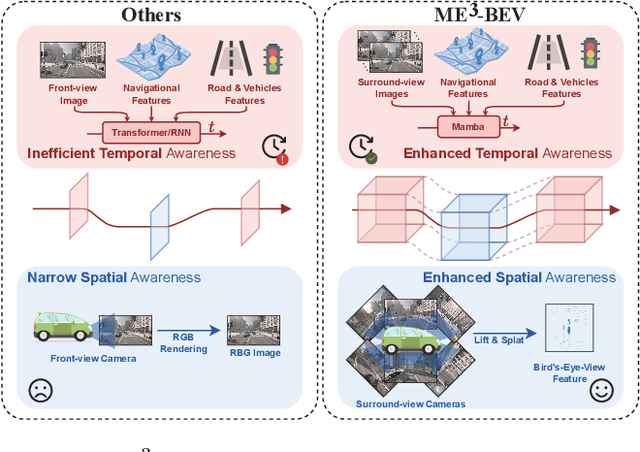

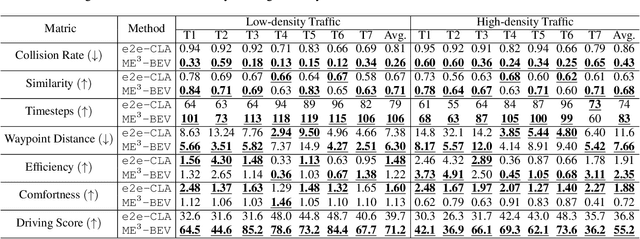

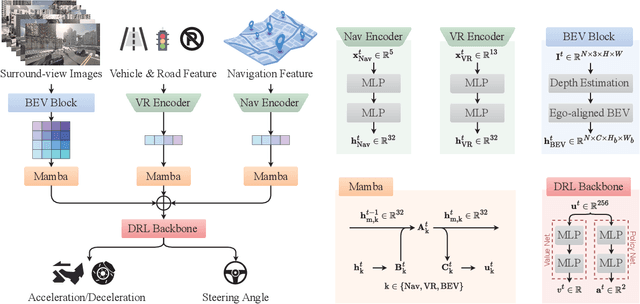

ME³-BEV: Mamba-Enhanced Deep Reinforcement Learning for End-to-End Autonomous Driving with BEV-Perception

Aug 08, 2025

Abstract: Autonomous driving systems face significant challenges in perceiving complex environments and making real-time decisions. Traditional modular approaches, while offering interpretability, suffer from error propagation and coordination issues, whereas end-to-end learning systems can simplify the design but face computational bottlenecks. This paper presents a novel approach to autonomous driving using deep reinforcement learning (DRL) that integrates bird's-eye view (BEV) perception for enhanced real-time decision-making. We introduce the Mamba-BEV model, an efficient spatio-temporal feature extraction network that combines BEV-based perception with the Mamba framework for temporal feature modeling. This integration allows the system to encode vehicle surroundings and road features in a unified coordinate system and accurately model long-range dependencies. Building on this, we propose the ME³-BEV framework, which utilizes the Mamba-BEV model as a feature input for end-to-end DRL, achieving superior performance in dynamic urban driving scenarios. We further enhance the interpretability of the model by visualizing high-dimensional features through semantic segmentation, providing insight into the learned representations. Extensive experiments on the CARLA simulator demonstrate that ME³-BEV outperforms existing models across multiple metrics, including collision rate and trajectory accuracy, offering a promising solution for real-time autonomous driving.
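Mamba's temporal modelling rests on a selective state-space scan: a linear recurrence whose discretisation step depends on the current input, so the model can decide at each step how much history to keep. The Python sketch below reduces this to one channel with a scalar state. In Mamba the B and C projections are input-dependent too, and the real layer is vectorised and hardware-aware. Parameter names are hypothetical, and this is not the Mamba-BEV architecture.

```python
import math
from typing import List, Sequence


def softplus(v: float) -> float:
    """Numerically stable log(1 + exp(v))."""
    return max(v, 0.0) + math.log1p(math.exp(-abs(v)))


def selective_scan(
    xs: Sequence[float],
    a: float = -1.0,       # continuous-time state decay (negative means stable)
    w_delta: float = 1.0,  # the step size depends on the input: the "selective" part
    b_delta: float = 0.0,
    b: float = 1.0,        # fixed input projection (input-dependent in Mamba)
    c: float = 1.0,        # fixed output projection (input-dependent in Mamba)
) -> List[float]:
    """Scalar-state, single-channel selective SSM scan (simplified Mamba-style recurrence)."""
    h = 0.0
    ys = []
    for x in xs:
        delta = softplus(w_delta * x + b_delta)  # input-dependent step size, always > 0
        a_bar = math.exp(delta * a)              # zero-order-hold discretisation of a
        h = a_bar * h + delta * b * x            # simplified (Euler) discretisation of b
        ys.append(c * h)
    return ys
```

Feeding `[1.0, 0.0, 0.0]` with the defaults shows the memory decay: once the input drops to zero the step size is ln 2, so `a_bar` is 0.5 and the state halves at each step.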

ArbiViewGen: Controllable Arbitrary Viewpoint Camera Data Generation for Autonomous Driving via Stable Diffusion Models

Aug 07, 2025

Abstract: Arbitrary viewpoint image generation holds significant potential for autonomous driving, yet remains a challenging task due to the lack of ground-truth data for extrapolated views, which hampers the training of high-fidelity generative models. In this work, we propose ArbiViewGen, a novel diffusion-based framework for the generation of controllable camera images from arbitrary points of view. To address the absence of ground-truth data in unseen views, we introduce two key components: Feature-Aware Adaptive View Stitching (FAVS) and Cross-View Consistency Self-Supervised Learning (CVC-SSL). FAVS employs a hierarchical matching strategy that first establishes coarse geometric correspondences using camera poses, then performs fine-grained alignment through improved feature matching algorithms, and identifies high-confidence matching regions via clustering analysis. Building upon this, CVC-SSL adopts a self-supervised training paradigm where the model reconstructs the original camera views from the synthesized stitched images using a diffusion model, enforcing cross-view consistency without requiring supervision from extrapolated data. Our framework requires only multi-camera images and their associated poses for training, eliminating the need for additional sensors or depth maps. To our knowledge, ArbiViewGen is the first method capable of controllable arbitrary view camera image generation in multiple vehicle configurations.
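FAVS's first stage establishes coarse correspondences from camera poses, without depth maps. The abstract does not say how; one standard pose-only tool is the epipolar constraint, which maps a pixel in one camera to the line in another camera on which its match must lie. The Python sketch below assumes pinhole intrinsics with zero skew and a relative pose given as X_b = R·X_a + t. The helper names are hypothetical and this is not ArbiViewGen's code.

```python
from typing import List, Sequence

Mat = List[List[float]]


def matmul(a: Mat, b: Mat) -> Mat:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m: Mat) -> Mat:
    return [list(row) for row in zip(*m)]


def skew(t: Sequence[float]) -> Mat:
    """Cross-product matrix [t]_x, so that [t]_x v equals t x v."""
    return [[0.0, -t[2], t[1]], [t[2], 0.0, -t[0]], [-t[1], t[0], 0.0]]


def k_inv(fx: float, fy: float, cx: float, cy: float) -> Mat:
    """Inverse of a pinhole intrinsic matrix with zero skew."""
    return [[1 / fx, 0.0, -cx / fx], [0.0, 1 / fy, -cy / fy], [0.0, 0.0, 1.0]]


def fundamental(ka_inv: Mat, kb_inv: Mat, r: Mat, t: Sequence[float]) -> Mat:
    """F = K_b^{-T} [t]_x R K_a^{-1}, for the relative pose X_b = R X_a + t."""
    essential = matmul(skew(t), r)
    return matmul(matmul(transpose(kb_inv), essential), ka_inv)


def epipolar_line(f: Mat, u: float, v: float) -> List[float]:
    """Line (l0, l1, l2) in image B: matches (u', v') satisfy l0*u' + l1*v' + l2 = 0."""
    return [sum(f[i][j] * p for j, p in enumerate((u, v, 1.0))) for i in range(3)]


K = k_inv(100.0, 100.0, 50.0, 50.0)
I3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
line = epipolar_line(fundamental(K, K, I3, [-1.0, 0.0, 0.0]), 62.5, 55.0)
```

For this pure sideways baseline (`R` = identity, `t = [-1, 0, 0]`), the line comes out horizontal, so the pixel's match lies on the same image row in the other view; a feature matcher then only needs to search along that line.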
