Jun Fang

IEEE

Compress the Easy, Explore the Hard: Difficulty-Aware Entropy Regularization for Efficient LLM Reasoning

Feb 26, 2026

Abstract:Chain-of-Thought (CoT) has substantially empowered Large Language Models (LLMs) to tackle complex reasoning tasks, yet the verbose nature of explicit reasoning steps incurs prohibitive inference latency and computational costs, limiting real-world deployment. While existing compression methods - ranging from self-training to Reinforcement Learning (RL) with length constraints - attempt to mitigate this, they often sacrifice reasoning capability for brevity. We identify a critical failure mode in these approaches: explicitly optimizing for shorter trajectories triggers rapid entropy collapse, which prematurely shrinks the exploration space and stifles the discovery of valid reasoning paths, particularly for challenging questions requiring extensive deduction. To address this issue, we propose Compress responses for Easy questions and Explore Hard ones (CEEH), a difficulty-aware approach to RL-based efficient reasoning. CEEH dynamically assesses instance difficulty to apply selective entropy regularization: it preserves a diverse search space for currently hard questions to ensure robustness, while permitting aggressive compression on easier instances where the reasoning path is well-established. In addition, we introduce a dynamic optimal-length penalty anchored to the historically shortest correct response, which effectively counteracts entropy-induced length inflation and stabilizes the reward signal. Across six reasoning benchmarks, CEEH consistently reduces response length while maintaining accuracy comparable to the base model, and improves Pass@k relative to length-only optimization.
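The two ingredients named in the abstract above, an entropy term applied only to hard questions and a length penalty anchored to the shortest correct response, can be illustrated with a minimal sketch. The abstract does not give the actual formulas, so everything here is an assumption made for illustration: the pass-rate threshold as the difficulty signal, the coefficients `beta` and `alpha`, and all function names are hypothetical.

```python
from math import log


def token_entropy(probs):
    """Shannon entropy (in nats) of a single next-token distribution."""
    return -sum(p * log(p) for p in probs if p > 0.0)


def entropy_coefficient(pass_rate, hard_threshold=0.5, beta=0.01):
    """Assumed rule: keep the entropy bonus only for currently hard questions.

    pass_rate is the fraction of sampled responses that were correct;
    the threshold and beta are illustrative values, not from the paper.
    """
    return beta if pass_rate < hard_threshold else 0.0


def length_penalty(length, shortest_correct, alpha=0.001):
    """Assumed rule: penalize only tokens beyond the shortest correct response so far."""
    return alpha * max(0, length - shortest_correct)


def sample_objective(correct, length, shortest_correct, pass_rate, mean_entropy):
    """Combine correctness, the anchored length penalty, and the selective entropy bonus."""
    value = 1.0 if correct else 0.0
    if correct:
        value -= length_penalty(length, shortest_correct)
    value += entropy_coefficient(pass_rate) * mean_entropy
    return value
```

Under these assumed rules, an easy question (high pass rate) receives no entropy bonus and is pushed only toward shorter correct responses, while a hard question keeps a nonzero bonus that discourages premature entropy collapse.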

WavBench: Benchmarking Reasoning, Colloquialism, and Paralinguistics for End-to-End Spoken Dialogue Models

Feb 13, 2026

Abstract:With the rapid integration of advanced reasoning capabilities into spoken dialogue models, the field urgently demands benchmarks that transcend simple interactions to address real-world complexity. However, current evaluations predominantly adhere to text-generation standards, overlooking the unique audio-centric characteristics of paralinguistics and colloquialisms, alongside the cognitive depth required by modern agents. To bridge this gap, we introduce WavBench, a comprehensive benchmark designed to evaluate realistic conversational abilities where prior works fall short. Uniquely, WavBench establishes a tripartite framework: 1) Pro subset, designed to rigorously challenge reasoning-enhanced models with significantly increased difficulty; 2) Basic subset, defining a novel standard for spoken colloquialism that prioritizes "listenability" through natural vocabulary, linguistic fluency, and interactive rapport, rather than rigid written accuracy; and 3) Acoustic subset, covering explicit understanding, generation, and implicit dialogue to rigorously evaluate comprehensive paralinguistic capabilities within authentic real-world scenarios. Through evaluating five state-of-the-art models, WavBench offers critical insights into the intersection of complex problem-solving, colloquial delivery, and paralinguistic fidelity, guiding the evolution of robust spoken dialogue models. The benchmark dataset and evaluation toolkit are available at https://naruto-2024.github.io/wavbench.github.io/.

Spectral Disentanglement and Enhancement: A Dual-domain Contrastive Framework for Representation Learning

Feb 09, 2026

Abstract:Large-scale multimodal contrastive learning has recently achieved impressive success in learning rich and transferable representations, yet it remains fundamentally limited by the uniform treatment of feature dimensions and the neglect of the intrinsic spectral structure of the learned features. Empirical evidence indicates that high-dimensional embeddings tend to collapse into narrow cones, concentrating task-relevant semantics in a small subspace, while the majority of dimensions remain occupied by noise and spurious correlations. Such spectral imbalance and entanglement undermine model generalization. We propose Spectral Disentanglement and Enhancement (SDE), a novel framework that bridges the gap between the geometry of the embedded spaces and their spectral properties. Our approach leverages singular value decomposition to adaptively partition feature dimensions into strong signals that capture task-critical semantics, weak signals that reflect ancillary correlations, and noise representing irrelevant perturbations. A curriculum-based spectral enhancement strategy is then applied, selectively amplifying informative components with theoretical guarantees on training stability. Building upon the enhanced features, we further introduce a dual-domain contrastive loss that jointly optimizes alignment in both the feature and spectral spaces, effectively integrating spectral regularization into the training process and encouraging richer, more robust representations. Extensive experiments on large-scale multimodal benchmarks demonstrate that SDE consistently improves representation robustness and generalization, outperforming state-of-the-art methods. SDE integrates seamlessly with existing contrastive pipelines, offering an effective solution for multimodal representation learning.

Agent-Omit: Training Efficient LLM Agents for Adaptive Thought and Observation Omission via Agentic Reinforcement Learning

Feb 04, 2026

Abstract:Managing agent thought and observation during multi-turn agent-environment interactions is an emerging strategy to improve agent efficiency. However, existing studies treat entire interaction trajectories equally, overlooking that thought necessity and observation utility vary across turns. To this end, we first conduct quantitative investigations into how thought and observation affect agent effectiveness and efficiency. Based on our findings, we propose Agent-Omit, a unified training framework that empowers LLM agents to adaptively omit redundant thoughts and observations. Specifically, we first synthesize a small amount of cold-start data, including both single-turn and multi-turn omission scenarios, to fine-tune the agent for omission behaviors. Furthermore, we introduce an omit-aware agentic reinforcement learning approach, incorporating a dual sampling mechanism and a tailored omission reward to incentivize the agent's adaptive omission capability. Theoretically, we prove that the deviation of our omission policy is upper-bounded by KL-divergence. Experimental results on five agent benchmarks show that our constructed Agent-Omit-8B obtains performance comparable to seven frontier LLM agents and achieves the best effectiveness-efficiency trade-off among seven efficient LLM agent methods. Our code and data are available at https://github.com/usail-hkust/Agent-Omit.

Darwinian Memory: A Training-Free Self-Regulating Memory System for GUI Agent Evolution

Jan 30, 2026

Abstract:Multimodal Large Language Model (MLLM) agents facilitate Graphical User Interface (GUI) automation but struggle with long-horizon, cross-application tasks due to limited context windows. While memory systems provide a viable solution, existing paradigms struggle to adapt to dynamic GUI environments, suffering from a granularity mismatch between high-level intent and low-level execution, and context pollution where the static accumulation of outdated experiences drives agents into hallucination. To address these bottlenecks, we propose the Darwinian Memory System (DMS), a self-evolving architecture that constructs memory as a dynamic ecosystem governed by the law of survival of the fittest. DMS decomposes complex trajectories into independent, reusable units for compositional flexibility, and implements Utility-driven Natural Selection to track survival value, actively pruning suboptimal paths and inhibiting high-risk plans. This evolutionary pressure compels the agent to derive superior strategies. Extensive experiments on real-world multi-app benchmarks validate that DMS boosts general-purpose MLLMs without training costs or architectural overhead, achieving average gains of 18.0% in success rate and 33.9% in execution stability, while reducing task latency, establishing it as an effective self-evolving memory system for GUI tasks.
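The idea of tracking a survival value per memory unit and pruning low-value units can be sketched roughly as follows. The abstract does not specify how utility is scored or when units are removed, so the smoothed success rate, the thresholds, and the names `MemoryUnit` and `MemoryPool` are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class MemoryUnit:
    """One reusable trajectory fragment with its usage statistics."""
    name: str
    successes: int = 0
    failures: int = 0

    @property
    def trials(self):
        return self.successes + self.failures

    @property
    def utility(self):
        # Assumed survival value: Laplace-smoothed success rate.
        return (self.successes + 1) / (self.trials + 2)


class MemoryPool:
    def __init__(self, min_utility=0.3, min_trials=3):
        self.units = {}
        self.min_utility = min_utility  # illustrative pruning threshold
        self.min_trials = min_trials    # do not judge a unit before this many uses

    def record(self, name, success):
        """Update a unit's statistics after it was used in a task."""
        unit = self.units.setdefault(name, MemoryUnit(name))
        if success:
            unit.successes += 1
        else:
            unit.failures += 1

    def prune(self):
        """Remove units that have been tried enough and still score poorly."""
        doomed = [n for n, u in self.units.items()
                  if u.trials >= self.min_trials and u.utility < self.min_utility]
        for name in doomed:
            del self.units[name]
        return doomed

    def retrieve(self, k):
        """Return the names of the k surviving units with the highest utility."""
        ranked = sorted(self.units.values(), key=lambda u: u.utility, reverse=True)
        return [u.name for u in ranked[:k]]
```

In this sketch a unit that keeps failing drops below the threshold and is removed, so later retrievals return only units that have held up in use.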

Integrated Channel Estimation and Sensing for Near-Field ELAA Systems

Jan 26, 2026

Abstract:In this paper, we study the problem of uplink channel estimation for near-field orthogonal frequency division multiplexing (OFDM) systems, where a base station (BS), equipped with an extremely large-scale antenna array (ELAA), serves multiple users over the same time-frequency resource block. A non-orthogonal pilot transmission scheme is considered to accommodate a larger number of users that can be supported by ELAA systems without incurring an excessive amount of training overhead. To facilitate efficient multi-user channel estimation, we express the received signal as a third-order low-rank tensor, which admits a canonical polyadic decomposition (CPD) model for line-of-sight (LoS) scenarios and a block term decomposition (BTD) model for non-line-of-sight (NLoS) scenarios. An alternating least squares (ALS) algorithm and a non-linear least squares (NLS) algorithm are employed to perform CPD and BTD, respectively. Channel parameters are then efficiently extracted from the recovered factor matrices. By exploiting the geometry of the propagation paths in the estimated channel, users' positions can be precisely determined in LoS scenarios. Moreover, our uniqueness analysis shows that the proposed tensor-based joint multi-user channel estimation framework is effective even when the number of pilot symbols is much smaller than the number of users, revealing its potential in training overhead reduction. Simulation results demonstrate that the proposed method achieves markedly higher channel estimation accuracy than compressed sensing (CS)-based approaches.

SafeThinker: Reasoning about Risk to Deepen Safety Beyond Shallow Alignment

Jan 23, 2026

Abstract:Despite the intrinsic risk-awareness of Large Language Models (LLMs), current defenses often result in shallow safety alignment, rendering models vulnerable to disguised attacks (e.g., prefilling) while degrading utility. To bridge this gap, we propose SafeThinker, an adaptive framework that dynamically allocates defensive resources via a lightweight gateway classifier. Based on the gateway's risk assessment, inputs are routed through three distinct mechanisms: (i) a Standardized Refusal Mechanism for explicit threats to maximize efficiency; (ii) a Safety-Aware Twin Expert (SATE) module to intercept deceptive attacks masquerading as benign queries; and (iii) a Distribution-Guided Think (DDGT) component that adaptively intervenes during uncertain generation. Experiments show that SafeThinker significantly lowers attack success rates across diverse jailbreak strategies without compromising utility, demonstrating that coordinating intrinsic judgment throughout the generation process effectively balances robustness and practicality.

Compressive Near-Field Wideband Channel Estimation for THz Extremely Large-scale MIMO Systems

Jul 30, 2025

Abstract:We consider the channel acquisition problem for a wideband terahertz (THz) communication system, where an extremely large-scale array is deployed to mitigate severe path attenuation. In channel modeling, we account for both the near-field spherical wavefront and the wideband beam-splitting phenomena, resulting in a wideband near-field channel. We propose a frequency-independent orthogonal dictionary that generalizes the standard discrete Fourier transform (DFT) matrix by introducing an additional parameter to capture the near-field property. This dictionary enables the wideband near-field channel to be efficiently represented with a two-dimensional (2D) block-sparse structure. Leveraging this specific sparse structure, the wideband near-field channel estimation problem can be effectively addressed within a customized compressive sensing framework. Numerical results demonstrate the significant advantages of our proposed 2D block-sparsity-aware method over conventional polar-domain-based approaches for near-field wideband channel estimation.

Derivative-Free Optimization-Empowered Wireless Channel Reconfiguration for 6G

Jul 03, 2025

Abstract:Reconfigurable antennas, including reconfigurable intelligent surface (RIS), movable antenna (MA), fluid antenna (FA), and other advanced antenna techniques, have been studied extensively in the context of reshaping wireless propagation environments for 6G and beyond wireless communications. Nevertheless, how to reconfigure/optimize the real-time controllable coefficients to achieve a favorable end-to-end wireless channel remains a substantial challenge, as it usually requires accurate modeling of the complex interaction between the reconfigurable devices and the electromagnetic waves, as well as knowledge of implicit channel propagation parameters. In this paper, we introduce a derivative-free optimization (a.k.a. zeroth-order (ZO) optimization) technique to directly optimize reconfigurable coefficients to shape the wireless end-to-end channel, without the need for channel modeling or estimation of the implicit environmental propagation parameters. We present the fundamental principles of ZO optimization and discuss its potential advantages in wireless channel reconfiguration. Two case studies for RIS and movable antenna-enabled single-input single-output (SISO) systems are provided to show the superiority of ZO-based methods as compared to state-of-the-art techniques. Finally, we outline promising future research directions and offer concluding insights on derivative-free optimization for reconfigurable antenna technologies.

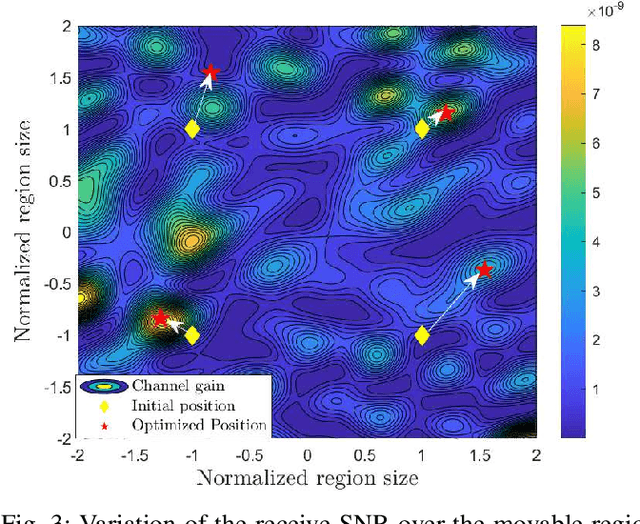

A Derivative-Free Position Optimization Approach for Movable Antenna Multi-User Communication Systems

May 25, 2025

Abstract:Movable antennas (MAs) have emerged as a disruptive technology in wireless communications for enhancing spatial degrees of freedom through continuous antenna repositioning within predefined regions, thereby creating favorable channel propagation conditions. In this paper, we study the problem of position optimization for MA-enabled multi-user MISO systems, where a base station (BS), equipped with multiple MAs, communicates with multiple users each equipped with a single fixed-position antenna (FPA). To circumvent the difficulty of acquiring the channel state information (CSI) from the transmitter to the receiver over the entire movable region, we propose a derivative-free approach for MA position optimization. The basic idea is to treat position optimization as a closed-box optimization problem and calculate the gradient of the unknown objective function using zeroth-order (ZO) gradient approximation techniques. Specifically, the proposed method does not need to explicitly estimate the global CSI. Instead, it adaptively refines its next movement based on previous measurements such that it eventually converges to an optimum or stationary solution. Simulation results show that the proposed derivative-free approach is able to achieve higher sample and computational efficiencies than the CSI estimation-based position optimization approach, particularly for challenging scenarios where the number of multi-path components (MPCs) is large or the number of pilot signals is limited.
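The zeroth-order idea described above, estimating a gradient from objective measurements alone and then moving along it, can be illustrated with a minimal sketch. This is a generic two-point random-direction estimator, not necessarily the estimator used in the paper; the objective `f` stands in for whatever quantity is measured at a given antenna position, and the step size, smoothing radius `mu`, and number of directions are illustrative assumptions.

```python
import random


def zo_gradient(f, x, mu=1e-3, num_dirs=20, rng=random):
    """Two-point zeroth-order gradient estimate of f at x.

    Only values of f are used; no analytic derivative is required.
    """
    d = len(x)
    grad = [0.0] * d
    for _ in range(num_dirs):
        # Random Gaussian probing direction.
        u = [rng.gauss(0.0, 1.0) for _ in range(d)]
        x_plus = [xi + mu * ui for xi, ui in zip(x, u)]
        x_minus = [xi - mu * ui for xi, ui in zip(x, u)]
        # Central finite difference along u approximates the directional derivative.
        slope = (f(x_plus) - f(x_minus)) / (2.0 * mu)
        for i in range(d):
            grad[i] += slope * u[i] / num_dirs
    return grad


def zo_ascent(f, x0, step=0.1, iters=200, seed=0):
    """Maximize a closed-box objective f by ascending the estimated gradient."""
    rng = random.Random(seed)
    x = list(x0)
    for _ in range(iters):
        g = zo_gradient(f, x, rng=rng)
        x = [xi + step * gi for xi, gi in zip(x, g)]
    return x


def toy_objective(x):
    """Hypothetical smooth objective with its maximum at (1, -2)."""
    return -(x[0] - 1.0) ** 2 - (x[1] + 2.0) ** 2
```

Calling `zo_ascent(toy_objective, [0.0, 0.0])` moves the iterate toward the maximizer using function evaluations only. In a real system each evaluation would be a noisy physical measurement, and the positions would also need to be kept inside the allowed movable region, which this sketch does not handle.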
