Dmytro Pavlichenko

RoboCup 2023 Humanoid AdultSize Winner NimbRo: NimbRoNet3 Visual Perception and Responsive Gait with Waveform In-walk Kicks

Jan 11, 2024

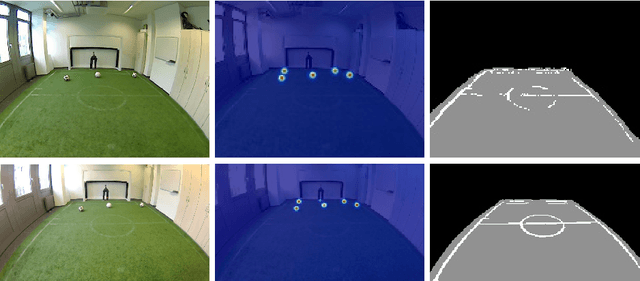

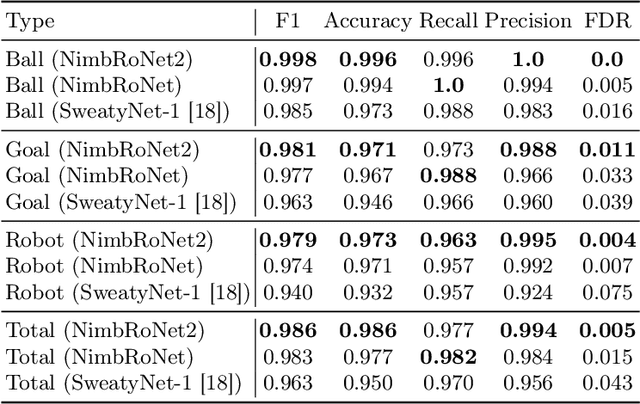

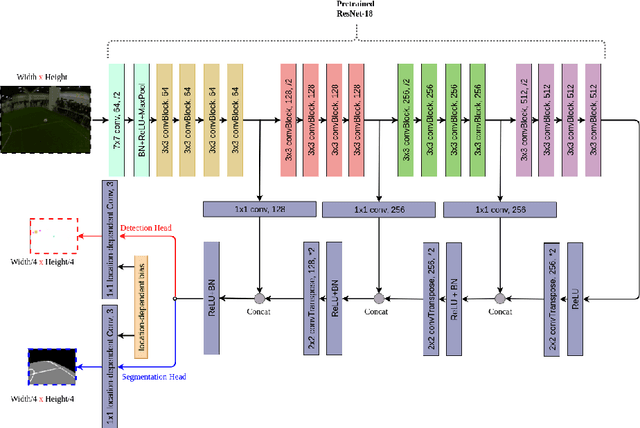

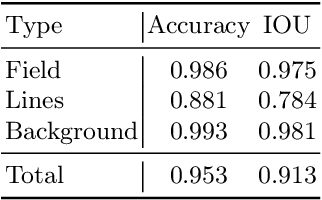

Abstract:The RoboCup Humanoid League holds annual soccer robot world championships towards the long-term objective of winning against the FIFA world champions by 2050. The participating teams continuously improve their systems. This paper presents the upgrades to our humanoid soccer system, leading our team NimbRo to win the Soccer Tournament in the Humanoid AdultSize League at RoboCup 2023 in Bordeaux, France. The mentioned upgrades consist of: an updated model architecture for visual perception, extended fused angles feedback mechanisms and an additional COM-ZMP controller for walking robustness, and parametric in-walk kicks through waveforms.

Deep Reinforcement Learning of Dexterous Pre-grasp Manipulation for Human-like Functional Categorical Grasping

Jul 31, 2023

Abstract:Many objects such as tools and household items can be used only if grasped in a very specific way - grasped functionally. Often, a direct functional grasp is not possible, though. We propose a method for learning a dexterous pre-grasp manipulation policy to achieve human-like functional grasps using deep reinforcement learning. We introduce a dense multi-component reward function that enables learning a single policy, capable of dexterous pre-grasp manipulation of novel instances of several known object categories with an anthropomorphic hand. The policy is learned purely by means of reinforcement learning from scratch, without any expert demonstrations, and implicitly learns to reposition and reorient objects of complex shapes to achieve given functional grasps. Learning is done on a single GPU in less than three hours.

RoboCup 2022 AdultSize Winner NimbRo: Upgraded Perception, Capture Steps Gait and Phase-based In-walk Kicks

Feb 07, 2023

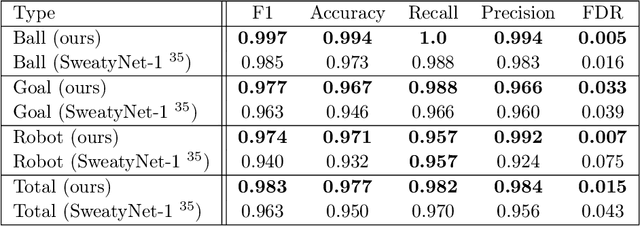

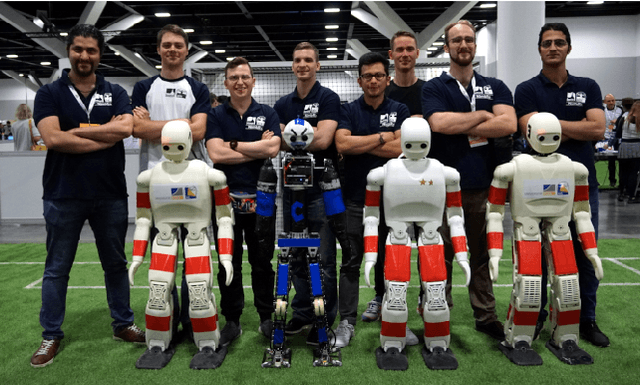

Abstract:Beating the human world champions by 2050 is an ambitious goal of the Humanoid League that provides a strong incentive for RoboCup teams to further improve and develop their systems. In this paper, we present upgrades of our system which enabled our team NimbRo to win the Soccer Tournament, the Drop-in Games, and the Technical Challenges in the Humanoid AdultSize League of RoboCup 2022. Strong performance in these competitions resulted in the Best Humanoid award in the Humanoid League. The mentioned upgrades include: hardware upgrade of the vision module, balanced walking with Capture Steps, and the introduction of phase-based in-walk kicks.

Real-Robot Deep Reinforcement Learning: Improving Trajectory Tracking of Flexible-Joint Manipulator with Reference Correction

Mar 14, 2022

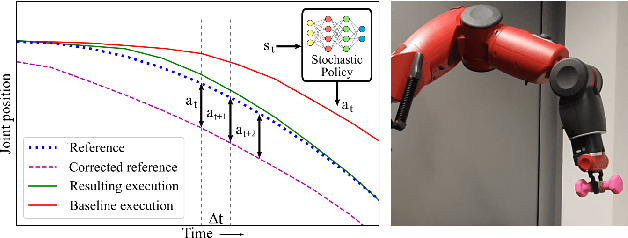

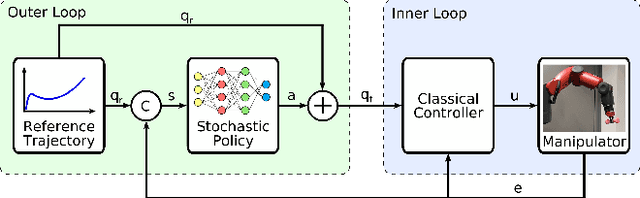

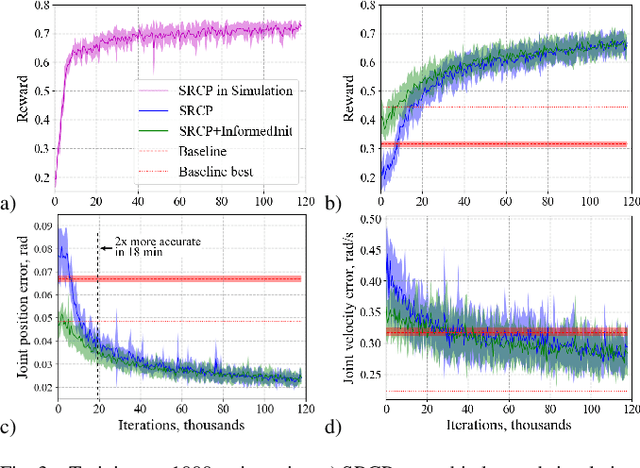

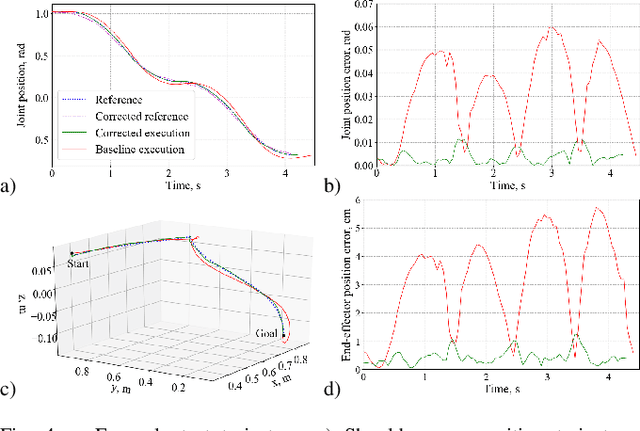

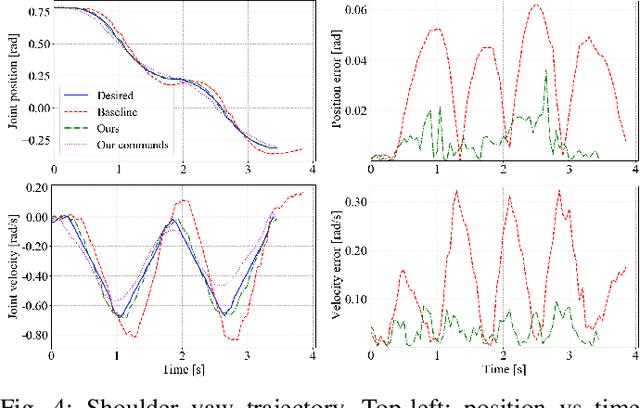

Abstract:Flexible-joint manipulators are governed by complex nonlinear dynamics, defining a challenging control problem. In this work, we propose an approach to learn an outer-loop joint trajectory tracking controller with deep reinforcement learning. The controller represented by a stochastic policy is learned in under two hours directly on the real robot. This is achieved through bounded reference correction actions and use of a model-free off-policy learning method. In addition, an informed policy initialization is proposed, where the agent is pre-trained in a learned simulation. We test our approach on the 7 DOF manipulator of a Baxter robot. We demonstrate that the proposed method is capable of consistent learning across multiple runs when applied directly on the real robot. Our method yields a policy which significantly improves the trajectory tracking accuracy in comparison to the vendor-provided controller, generalizing to an unseen payload.

Target Chase, Wall Building, and Fire Fighting: Autonomous UAVs of Team NimbRo at MBZIRC 2020

Jan 11, 2022

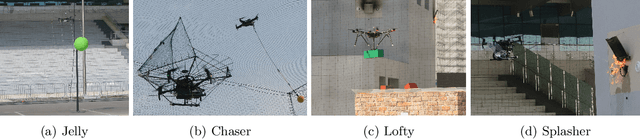

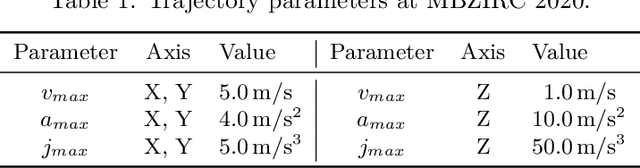

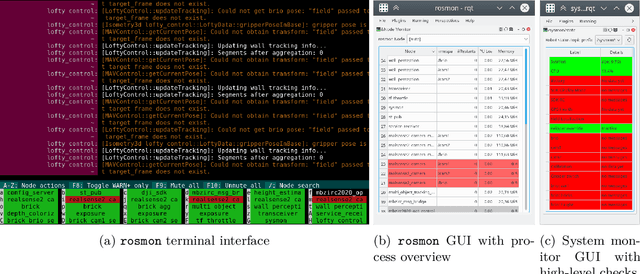

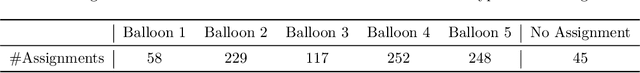

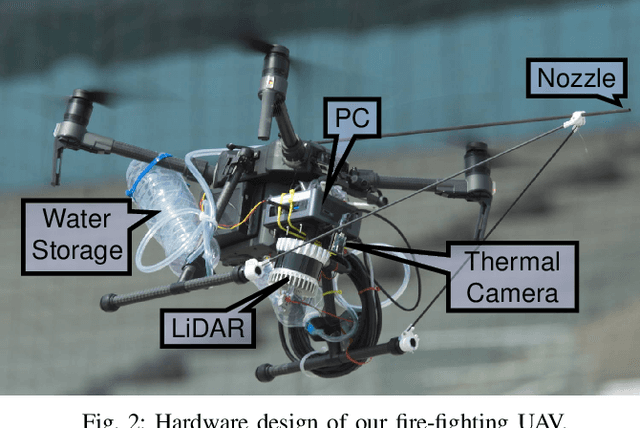

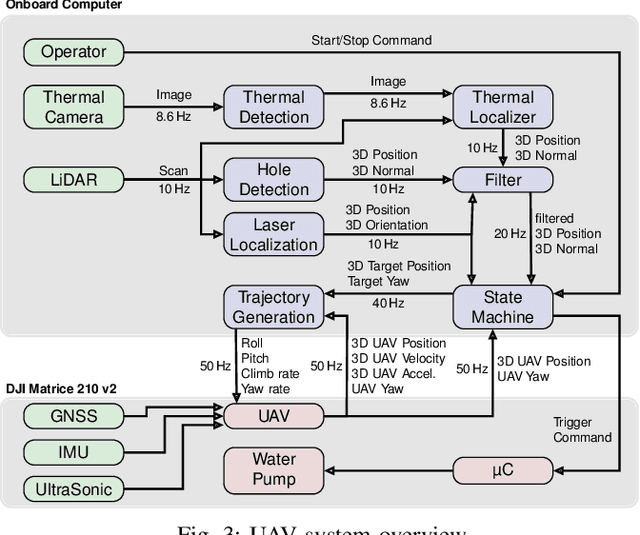

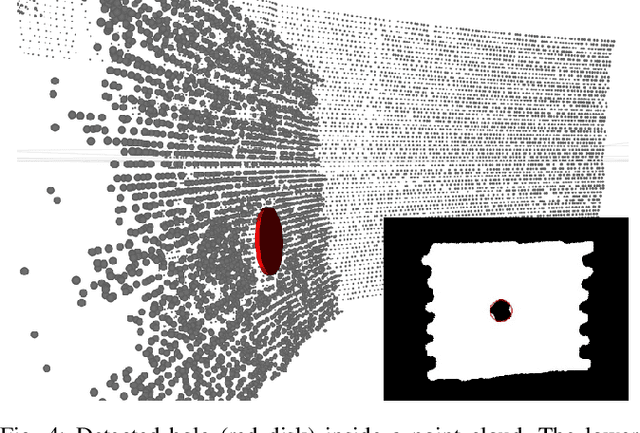

Abstract:The Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2020 posed diverse challenges for unmanned aerial vehicles (UAVs). We present our four tailored UAVs, specifically developed for individual aerial-robot tasks of MBZIRC, including custom hardware and software components. In Challenge 1, a target UAV is pursued using a high-efficiency, onboard object detection pipeline to capture a ball from the target UAV. A second UAV uses a similar detection method to find and pop balloons scattered throughout the arena. For Challenge 2, we demonstrate a larger UAV capable of autonomous aerial manipulation: Bricks are found and tracked from camera images. Subsequently, they are approached, picked, transported, and placed on a wall. Finally, in Challenge 3, our UAV autonomously finds fires using LiDAR and thermal cameras. It extinguishes the fires with an onboard fire extinguisher. While every robot features task-specific subsystems, all UAVs rely on a standard software stack developed for this particular and future competitions. We present our mostly open-source software solutions, including tools for system configuration, monitoring, robust wireless communication, high-level control, and agile trajectory generation. For solving the MBZIRC 2020 tasks, we advanced the state of the art in multiple research areas like machine vision and trajectory generation. We present our scientific contributions that constitute the foundation for our algorithms and systems and analyze the results from the MBZIRC competition 2020 in Abu Dhabi, where our systems reached second place in the Grand Challenge. Furthermore, we discuss lessons learned from our participation in this complex robotic challenge.

Flexible-Joint Manipulator Trajectory Tracking with Learned Two-Stage Model employing One-Step Future Prediction

Dec 06, 2021

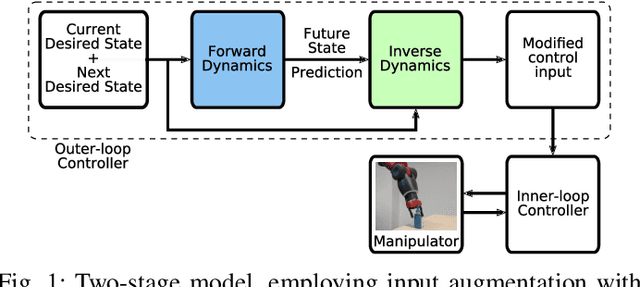

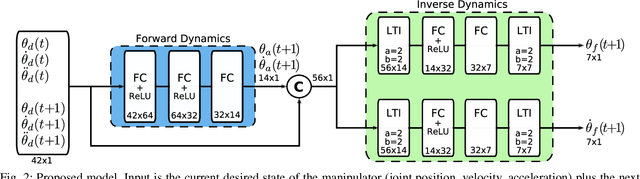

Abstract:Flexible-joint manipulators are frequently used for increased safety during human-robot collaboration and shared workspace tasks. However, joint flexibility significantly reduces the accuracy of motion, especially at high velocities and with inexpensive actuators. In this paper, we present a learning-based approach to identify the unknown dynamics of a flexible-joint manipulator and improve the trajectory tracking at high velocities. We propose a two-stage model which is composed of a one-step forward dynamics future predictor and an inverse dynamics estimator. The second part is based on linear time-invariant dynamical operators to approximate the feed-forward joint position and velocity commands. We train the model end-to-end on real-world data and evaluate it on the Baxter robot. Our experiments indicate that augmenting the input with one-step future state prediction improves the performance, compared to the same model without prediction. We compare joint position, joint velocity and end-effector position tracking accuracy against the classical baseline controller and several simpler models.

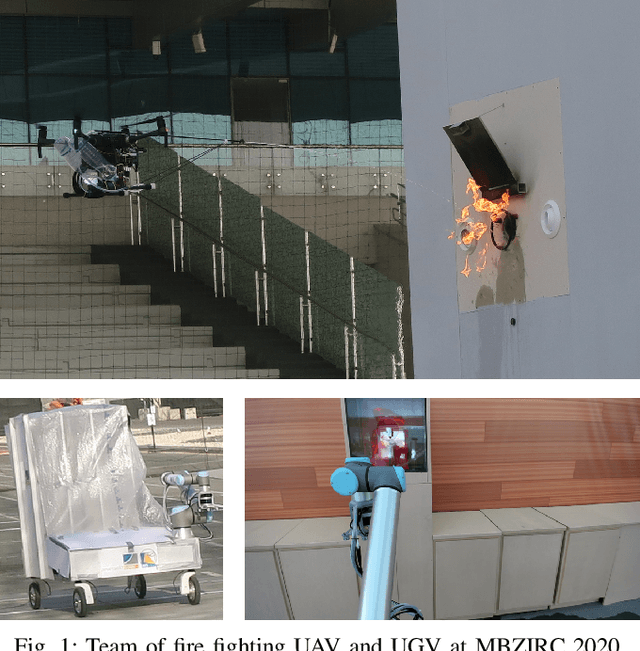

Autonomous Fire Fighting with a UAV-UGV Team at MBZIRC 2020

Jun 11, 2021

Abstract:Every day, burning buildings threaten the lives of occupants and first responders trying to save them. Quick action is of the essence, but some areas might not be accessible or too dangerous to enter. Robotic systems have become a promising addition to firefighting, but at this stage, they are mostly manually controlled, which is error-prone and requires specially trained personnel. We present two systems for autonomous firefighting from air and ground we developed for the Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2020. The systems use LiDAR for reliable localization within narrow, potentially GNSS-restricted environments while maneuvering close to obstacles. Measurements from LiDAR and thermal cameras are fused to track fires, while relative navigation ensures successful extinguishing. We analyze and discuss our successful participation during the MBZIRC 2020, present further experiments, and provide insights into our lessons learned from the competition.

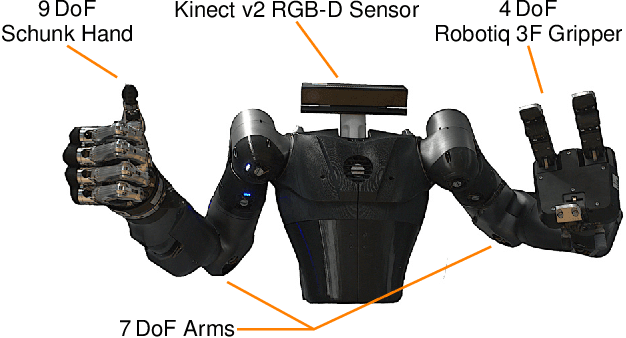

NimbRo-OP2X: Affordable Adult-sized 3D-printed Open-Source Humanoid Robot for Research

Oct 19, 2020

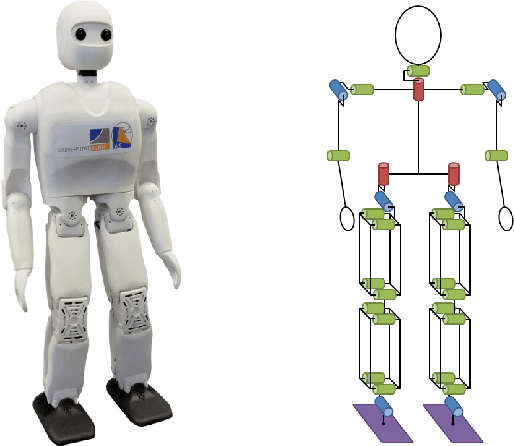

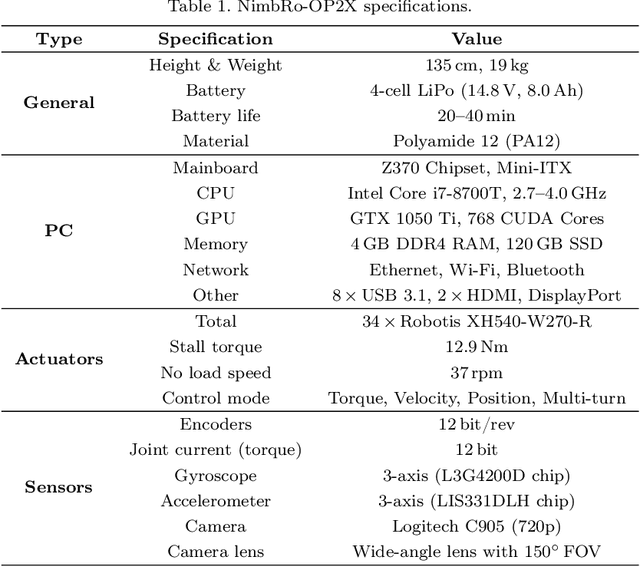

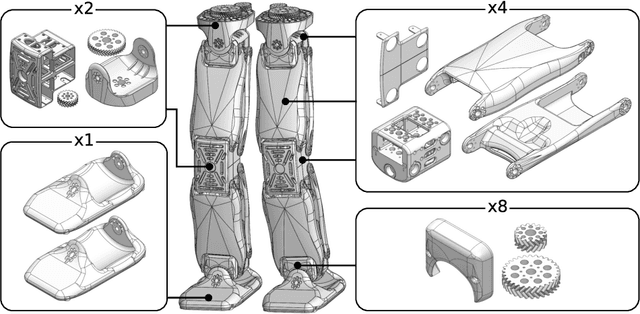

Abstract:For several years, high development and production costs of humanoid robots restricted researchers interested in working in the field. To overcome this problem, several research groups have opted to work with simulated or smaller robots, whose acquisition costs are significantly lower. However, due to scale differences and imperfect simulation replicability, results may not be directly reproducible on real, adult-sized robots. In this paper, we present the NimbRo-OP2X, a capable and affordable adult-sized humanoid platform aiming to significantly lower the entry barrier for humanoid robot research. With a height of 135 cm and weight of only 19 kg, the robot can interact in an unmodified, human environment without special safety equipment. Modularity in hardware and software allows this platform enough flexibility to operate in different scenarios and applications with minimal effort. The robot is equipped with an on-board computer with GPU, which enables the implementation of state-of-the-art approaches for object detection and human perception demanded by areas such as manipulation and human-robot interaction. Finally, the capabilities of the NimbRo-OP2X, especially in terms of locomotion stability and visual perception, are evaluated. This includes the performance at RoboCup 2018, where NimbRo-OP2X won all possible awards in the AdultSize class.

RoboCup 2019 AdultSize Winner NimbRo: Deep Learning Perception, In-Walk Kick, Push Recovery, and Team Play Capabilities

Dec 17, 2019

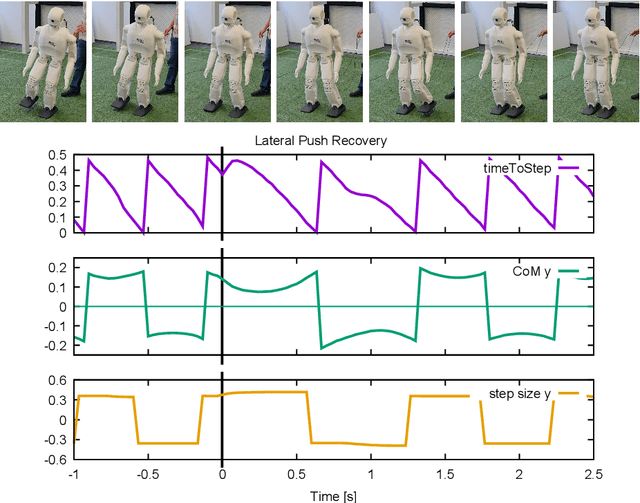

Abstract:Individual and team capabilities are challenged every year by rule changes and the increasing performance of the soccer teams at the RoboCup Humanoid League. For RoboCup 2019 in the AdultSize class, the number of players (2 vs. 2 games) and the field dimensions were increased, which demanded team coordination and robust visual perception and localization modules. In this paper, we present the latest developments that led team NimbRo to win the soccer tournament, drop-in games, technical challenges and the Best Humanoid Award of the RoboCup Humanoid League 2019 in Sydney. These developments include a deep learning vision system, in-walk kicks, step-based push-recovery, and team play strategies.

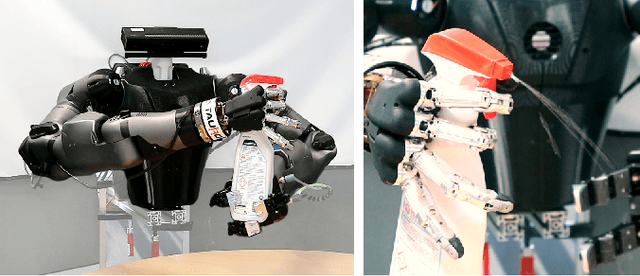

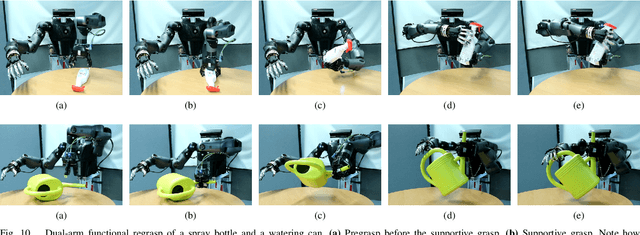

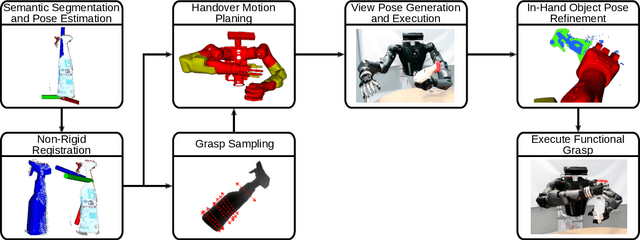

Autonomous Bimanual Functional Regrasping of Novel Object Class Instances

Oct 01, 2019

Abstract:In human-made scenarios, robots need to be able to fully operate objects in their surroundings, i.e., objects are required to be functionally grasped rather than only picked. This imposes very strict constraints on the object pose such that a direct grasp can be performed. Inspired by the anthropomorphic nature of humanoid robots, we propose an approach that first grasps an object with one hand, obtaining full control over its pose, and performs the functional grasp with the second hand subsequently. Thus, we develop a fully autonomous pipeline for dual-arm functional regrasping of novel familiar objects, i.e., objects never seen before that belong to a known object category, e.g., spray bottles. This process involves semantic segmentation, object pose estimation, non-rigid mesh registration, grasp sampling, handover pose generation and in-hand pose refinement. The latter is used to compensate for the unpredictable object movement during the first grasp. The approach is applied to a human-like upper body. To the best knowledge of the authors, this is the first system that exhibits autonomous bimanual functional regrasping capabilities. We demonstrate that our system yields reliable success rates and can be applied on-line to real-world tasks using only one off-the-shelf RGB-D sensor.
