Michael Schreiber

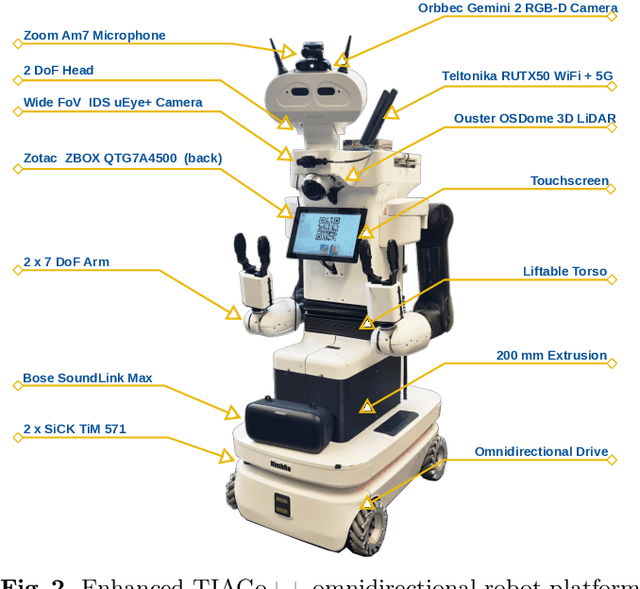

RoboCup@Home 2024 OPL Winner NimbRo: Anthropomorphic Service Robots using Foundation Models for Perception and Planning

Dec 19, 2024

Abstract: We present the approaches and contributions of the winning team NimbRo@Home at the RoboCup@Home 2024 competition in the Open Platform League held in Eindhoven, NL. Further, we describe our hardware setup and give an overview of the results for the task stages and the final demonstration. For this year's competition, we put a special emphasis on open-vocabulary object segmentation and grasping approaches that overcome the labeling overhead of supervised vision approaches, commonly used in RoboCup@Home. We successfully demonstrated that we can segment and grasp non-labeled objects by text descriptions. Further, we extensively employed LLMs for natural language understanding and task planning. Throughout the competition, our approaches showed robustness and generalization capabilities. A video of our performance can be found online.

Self-centering 3-DOF feet controller for hands-free locomotion control in telepresence and virtual reality

Aug 05, 2024

Abstract: We present a novel seated 3-DOF foot controller for locomotion control in telepresence robotics and virtual reality environments. Tilting the feet on two axes yields forward, backward, and sideways motion. In addition, a separate rotary joint allows for rotation around the vertical axis. Attached springs on all joints self-center the controller. The HTC Vive tracker is used to translate the trackers' orientation into locomotion commands. The proposed self-centering foot controller was used successfully at the ANA Avatar XPRIZE competition, where a naive operator drove the robot over a longer distance, passing obstacles while solving various interaction and manipulation tasks in between. We publicly provide the models of the mostly 3D-printed foot controller for reproduction.

HortiBot: An Adaptive Multi-Arm System for Robotic Horticulture of Sweet Peppers

Mar 22, 2024

Abstract: Horticultural tasks such as pruning and selective harvesting are labor intensive and horticultural staff are hard to find. Automating these tasks is challenging due to the semi-structured greenhouse workspaces, changing environmental conditions such as lighting, dense plant growth with many occlusions, and the need for gentle manipulation of non-rigid plant organs. In this work, we present the three-armed system HortiBot, with two arms for manipulation and a third arm as an articulated head for active perception using stereo cameras. Its perception system detects not only peppers, but also peduncles and stems in real time, and performs online data association to build a world model of pepper plants. Collision-aware online trajectory generation allows all three arms to safely track their respective targets for observation, grasping, and cutting. We integrated perception and manipulation to perform selective harvesting of peppers and evaluated the system in lab experiments. Using active perception coupled with end-effector force torque sensing for compliant manipulation, HortiBot achieves high success rates.

RoboCup 2023 Humanoid AdultSize Winner NimbRo: NimbRoNet3 Visual Perception and Responsive Gait with Waveform In-walk Kicks

Jan 11, 2024

Abstract: The RoboCup Humanoid League holds annual soccer robot world championships towards the long-term objective of winning against the FIFA world champions by 2050. The participating teams continuously improve their systems. This paper presents the upgrades to our humanoid soccer system, leading our team NimbRo to win the Soccer Tournament in the Humanoid AdultSize League at RoboCup 2023 in Bordeaux, France. The mentioned upgrades consist of: an updated model architecture for visual perception, extended fused angles feedback mechanisms and an additional COM-ZMP controller for walking robustness, and parametric in-walk kicks through waveforms.

NimbRo wins ANA Avatar XPRIZE Immersive Telepresence Competition: Human-Centric Evaluation and Lessons Learned

Aug 28, 2023

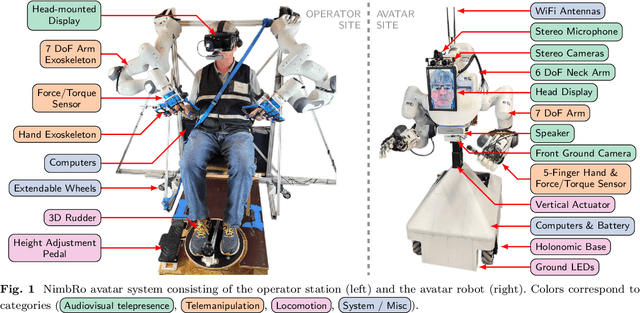

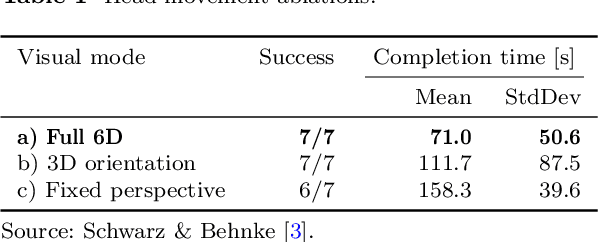

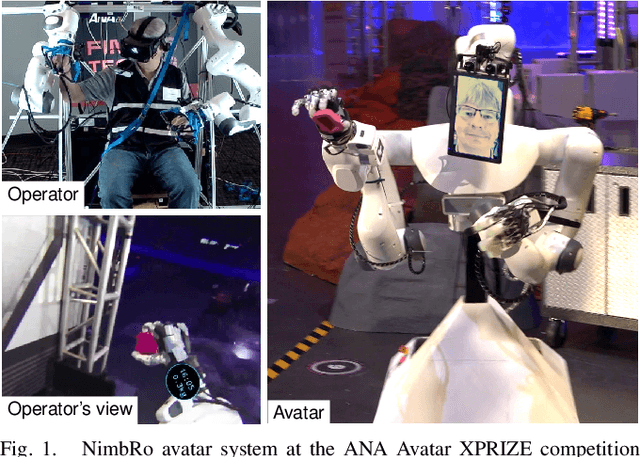

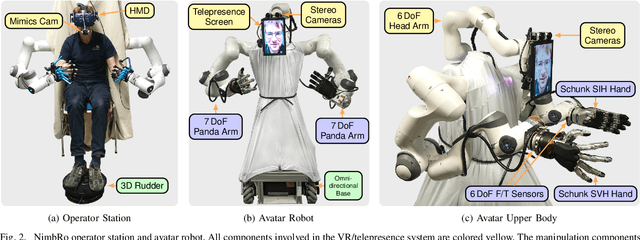

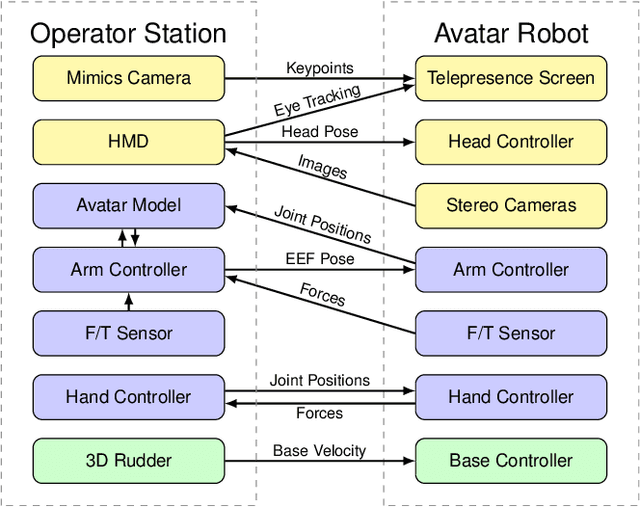

Abstract: Robotic avatar systems can enable immersive telepresence with locomotion, manipulation, and communication capabilities. We present such an avatar system, based on the key components of immersive 3D visualization and transparent force-feedback telemanipulation. Our avatar robot features an anthropomorphic upper body with dexterous hands. The remote human operator drives the arms and fingers through an exoskeleton-based operator station, which provides force feedback both at the wrist and for each finger. The robot torso is mounted on a holonomic base, providing omnidirectional locomotion on flat floors, controlled using a 3D rudder device. Finally, the robot features a 6D movable head with stereo cameras, which stream images to a VR display worn by the operator. Movement latency is hidden using spherical rendering. The head also carries a telepresence screen displaying an animated image of the operator's face, enabling direct interaction with remote persons. Our system won the $10M ANA Avatar XPRIZE competition, which challenged teams to develop intuitive and immersive avatar systems that could be operated by briefly trained judges. We analyze our successful participation in the semifinals and finals and provide insight into our operator training and lessons learned. In addition, we evaluate our system in a user study that demonstrates its intuitive and easy usability.

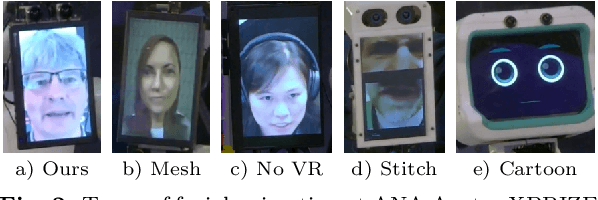

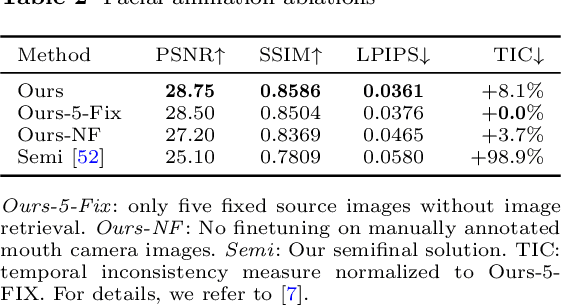

VR Facial Animation for Immersive Telepresence Avatars

Apr 24, 2023

Abstract: VR Facial Animation is necessary in applications requiring a clear view of the face, even though a VR headset is worn. In our case, we aim to animate the face of an operator who is controlling our robotic avatar system. We propose a real-time capable pipeline with very fast adaptation for specific operators. In a quick enrollment step, we capture a sequence of source images from the operator without the VR headset which contain all the important operator-specific appearance information. During inference, we then use the operator keypoint information extracted from a mouth camera and two eye cameras to estimate the target expression and head pose, to which we map the appearance of a source still image. In order to enhance the mouth expression accuracy, we dynamically select an auxiliary expression frame from the captured sequence. This selection is done by learning to transform the current mouth keypoints into the source camera space, where the alignment can be determined accurately. Furthermore, we demonstrate an eye tracking pipeline that can be trained in less than a minute, present a time-efficient way to train the whole pipeline given a dataset that includes only complete faces, show exemplary results generated by our method, and discuss performance at the ANA Avatar XPRIZE semifinals.

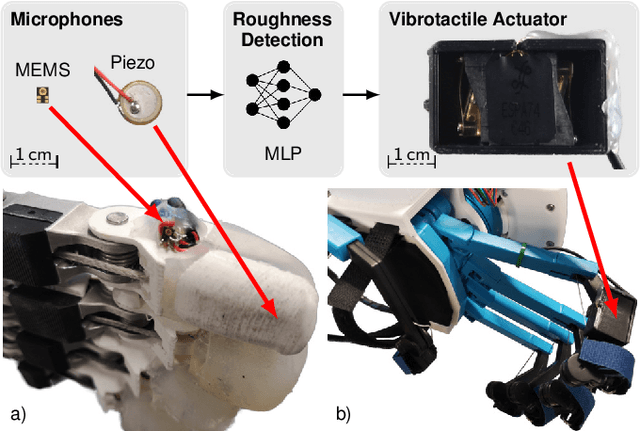

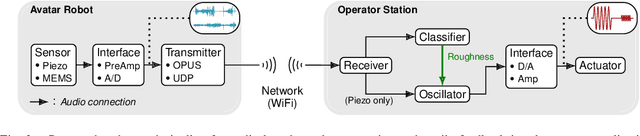

Audio-based Roughness Sensing and Tactile Feedback for Haptic Perception in Telepresence

Mar 13, 2023

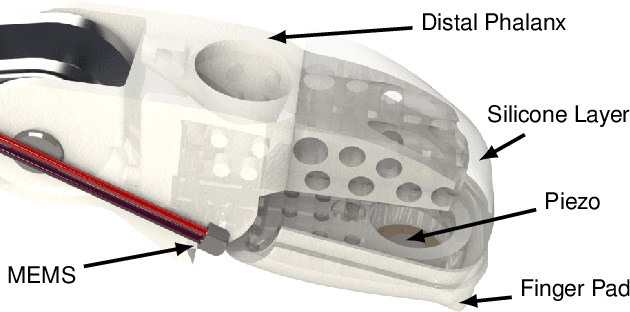

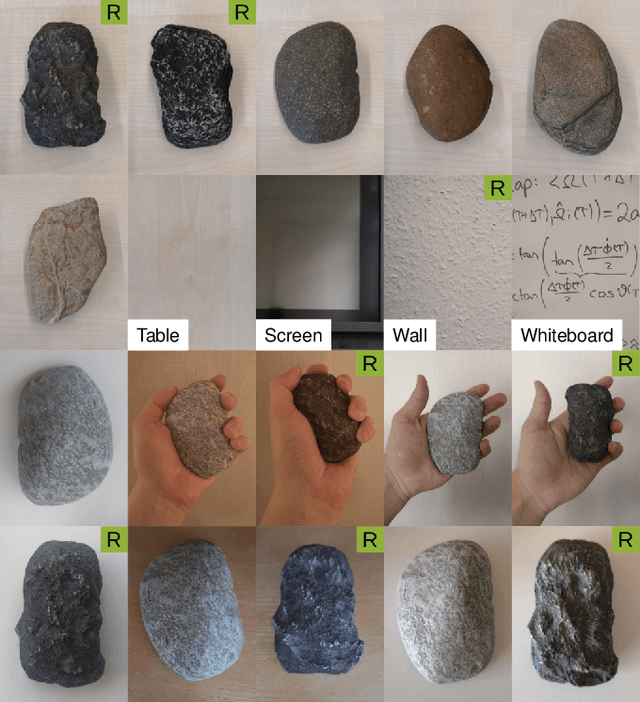

Abstract: Haptic perception is incredibly important for immersive teleoperation of robots, especially for accomplishing manipulation tasks. We propose a low-cost haptic sensing and rendering system, which is capable of detecting and displaying surface roughness. As the robot fingertip moves across a surface of interest, two microphones capture sound coupled directly through the fingertip and through the air, respectively. A learning-based detector system analyzes the data in real-time and gives roughness estimates with both high temporal resolution and low latency. Finally, an audio-based haptic actuator displays the result to the human operator. We demonstrate the effectiveness of our system through experiments and our winning entry in the ANA Avatar XPRIZE competition finals, where impartial judges solved a roughness-based selection task even without additional vision feedback. We publish our dataset used for training and evaluation together with our trained models to enable reproducibility.

Robust Immersive Telepresence and Mobile Telemanipulation: NimbRo wins ANA Avatar XPRIZE Finals

Mar 06, 2023

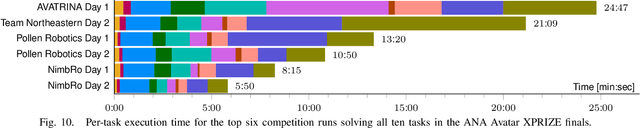

Abstract: Robotic avatar systems promise to bridge distances and reduce the need for travel. We present the updated NimbRo avatar system, winner of the $5M grand prize at the international ANA Avatar XPRIZE competition, which required participants to build intuitive and immersive telepresence robots that could be operated by briefly trained operators. We describe key improvements for the finals compared to the system used in the semifinals: To operate without a power and communications tether, we integrate a battery and a robust redundant wireless communication system. Video and audio data are compressed using low-latency HEVC and Opus codecs. We propose a new locomotion control device with tunable resistance force. To increase flexibility, the robot's upper-body height can be adjusted by the operator. We describe essential monitoring and robustness tools which enabled the success at the competition. Finally, we analyze our performance at the competition finals and discuss lessons learned.

Target Chase, Wall Building, and Fire Fighting: Autonomous UAVs of Team NimbRo at MBZIRC 2020

Jan 11, 2022

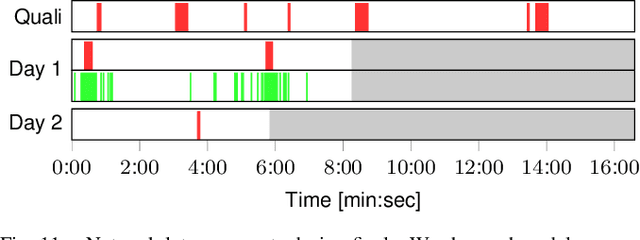

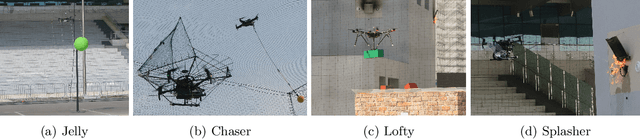

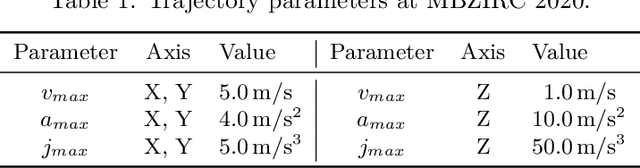

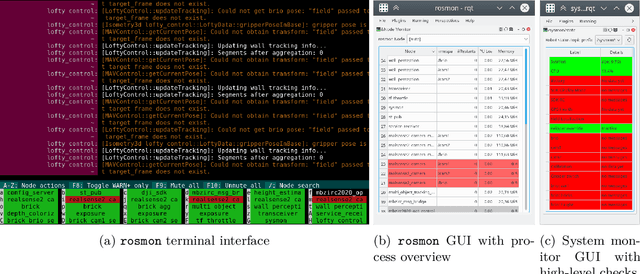

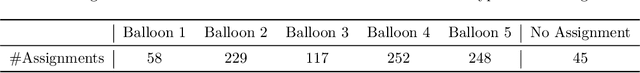

Abstract: The Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2020 posed diverse challenges for unmanned aerial vehicles (UAVs). We present our four tailored UAVs, specifically developed for individual aerial-robot tasks of MBZIRC, including custom hardware and software components. In Challenge 1, a target UAV is pursued using a high-efficiency, onboard object detection pipeline to capture a ball from the target UAV. A second UAV uses a similar detection method to find and pop balloons scattered throughout the arena. For Challenge 2, we demonstrate a larger UAV capable of autonomous aerial manipulation: Bricks are found and tracked from camera images. Subsequently, they are approached, picked, transported, and placed on a wall. Finally, in Challenge 3, our UAV autonomously finds fires using LiDAR and thermal cameras. It extinguishes the fires with an onboard fire extinguisher. While every robot features task-specific subsystems, all UAVs rely on a standard software stack developed for this particular and future competitions. We present our mostly open-source software solutions, including tools for system configuration, monitoring, robust wireless communication, high-level control, and agile trajectory generation. For solving the MBZIRC 2020 tasks, we advanced the state of the art in multiple research areas like machine vision and trajectory generation. We present our scientific contributions that constitute the foundation for our algorithms and systems and analyze the results from the MBZIRC competition 2020 in Abu Dhabi, where our systems reached second place in the Grand Challenge. Furthermore, we discuss lessons learned from our participation in this complex robotic challenge.

NimbRo Avatar: Interactive Immersive Telepresence with Force-Feedback Telemanipulation

Sep 28, 2021

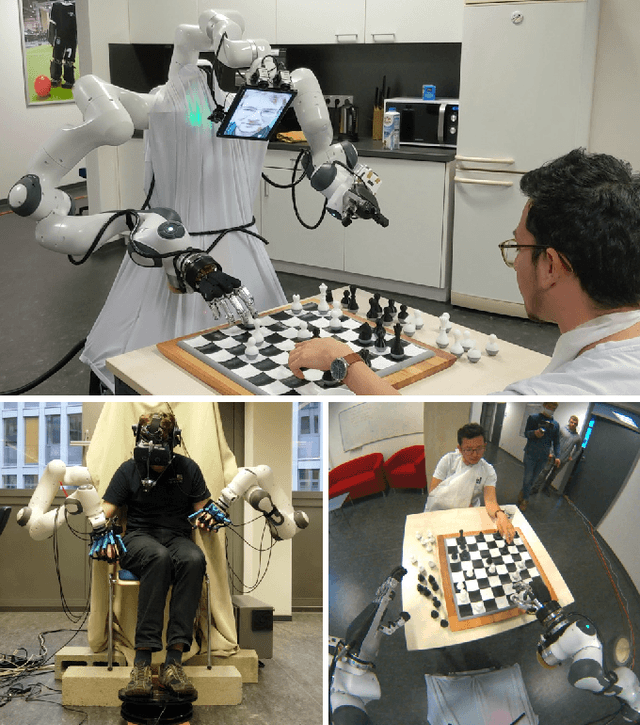

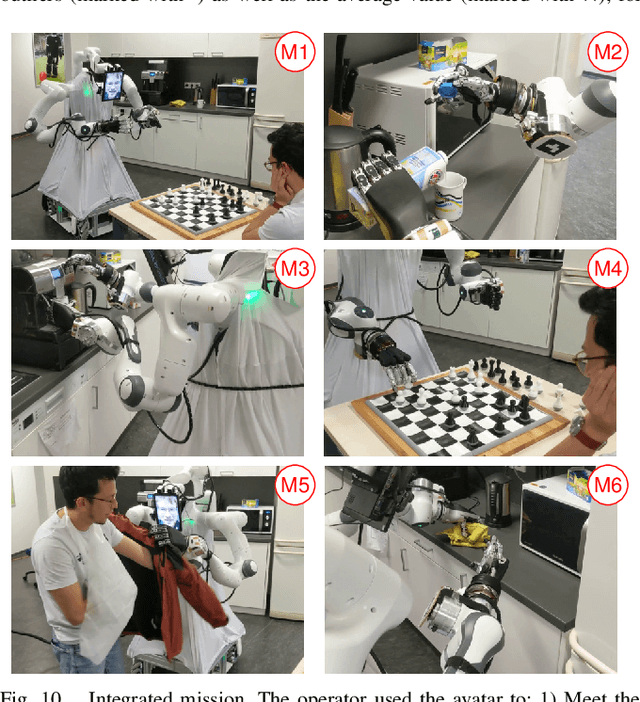

Abstract: Robotic avatars promise immersive teleoperation with human-like manipulation and communication capabilities. We present such an avatar system, based on the key components of immersive 3D visualization and transparent force-feedback telemanipulation. Our avatar robot features an anthropomorphic bimanual arm configuration with dexterous hands. The remote human operator drives the arms and fingers through an exoskeleton-based operator station, which provides force feedback both at the wrist and for each finger. The robot torso is mounted on a holonomic base, providing locomotion capability in typical indoor scenarios, controlled using a 3D rudder device. Finally, the robot features a 6D movable head with stereo cameras, which stream images to a VR HMD worn by the operator. Movement latency is hidden using spherical rendering. The head also carries a telepresence screen displaying a synthesized image of the operator with facial animation, which enables direct interaction with remote persons. We successfully evaluate our system both in a user study with untrained operators and in a longer, more complex integrated mission. We discuss lessons learned from the trials and possible improvements.
