Jan Quenzel

Aerial Assistance System for Automated Firefighting during Turntable Ladder Operations

Nov 18, 2025

Abstract: Fires in industrial facilities pose special challenges to firefighters, e.g., due to the sheer size and scale of the buildings. The resulting visual obstructions impair firefighting accuracy, which is further compounded by inaccurate assessments of the fire's location. Such imprecision both increases the overall damage and unnecessarily prolongs the fire brigade's operation. We propose an automated assistance system for firefighting that uses a motorized fire monitor on a turntable ladder with aerial support from an unmanned aerial vehicle (UAV). The UAV flies autonomously within an obstacle-free flight funnel derived from geodata, detecting and localizing heat sources. An operator supervises the operation on a handheld controller and selects a fire target within reach. After the selection, the UAV automatically plans and traverses between two triangulation poses for continued fire localization. Simultaneously, our system steers the fire monitor so that the water jet reaches the detected heat source. In preliminary tests, our assistance system successfully localized multiple heat sources and directed a water jet towards the fires.
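Localizing a heat source from two triangulation poses can be illustrated with the classic midpoint method: each pose contributes a bearing ray (camera center plus a direction towards the thermal detection), and the estimate is the midpoint of the shortest segment between the two rays. This is a minimal sketch under that assumption; the abstract does not state which estimator the system uses, and `triangulate_midpoint` and its parameters are hypothetical names.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3,
                         eps: float = 1e-9) -> Tuple[Vec3, float]:
    """Hypothetical helper: triangulate a target from two bearing rays.

    Ray i starts at camera center c_i with direction d_i (need not be unit).
    Returns the midpoint of the closest points on both rays and the gap
    between them, a simple consistency check for the two observations.
    """
    w0 = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b  # = |d1|^2 |d2|^2 sin^2(angle between rays)
    if denom <= eps * a * c:
        # Nearly parallel rays: the baseline is too small for the target distance.
        raise ValueError("rays are (nearly) parallel; move the poses apart")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = _add(c1, _scale(d1, s))
    p2 = _add(c2, _scale(d2, t))
    gap = _dot(_sub(p1, p2), _sub(p1, p2)) ** 0.5
    return _scale(_add(p1, p2), 0.5), gap
```

For example, two poses 10 m apart at 10 m altitude that both observe a heat source on the ground, `triangulate_midpoint((0, 0, 10), (5, 5, -10), (10, 0, 10), (-5, 5, -10))`, yield the point (5, 5, 0) with zero gap. The angle between the rays matters: the closer they are to parallel, the more a small bearing error shifts the estimate, which is why two well-separated poses are preferable.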

LIO-MARS: Non-uniform Continuous-time Trajectories for Real-time LiDAR-Inertial-Odometry

Nov 17, 2025

Abstract: Autonomous robotic systems heavily rely on knowledge of their environment to navigate safely. For search & rescue, a flying robot requires robust real-time perception, enabled by complementary sensors. IMU data constrains acceleration and rotation, whereas LiDAR measures accurate distances around the robot. Building upon the LiDAR odometry MARS, our LiDAR-inertial odometry (LIO) jointly aligns multi-resolution surfel maps with a Gaussian mixture model (GMM) using a continuous-time B-spline trajectory. Our new scan window uses non-uniform temporal knot placement to ensure continuity over the whole trajectory without additional scan delay. Moreover, we accelerate essential covariance and GMM computations by a factor of 3.3 using Kronecker sums and products. An unscented transform de-skews surfels, while splitting scans into intra-scan segments facilitates motion compensation during spline optimization. Complementary soft constraints on relative poses and preintegrated IMU pseudo-measurements further improve robustness and accuracy. Extensive evaluation on various handheld, ground, and aerial vehicle-based datasets showcases the state-of-the-art quality of LIO-MARS w.r.t. recent LIO systems.
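Non-uniform knots mean that knot times need not lie on a fixed grid, so they can follow irregular timestamps. As an illustration only, the sketch below evaluates a scalar B-spline with arbitrary non-decreasing knots using de Boor's algorithm. LIO-MARS optimizes a pose trajectory, and the abstract does not give its exact parameterization (e.g., how rotations are handled), so this shows only the basis evaluation; `de_boor` is a hypothetical name.

```python
from typing import Sequence


def de_boor(t: float, knots: Sequence[float], ctrl: Sequence[float],
            degree: int = 3) -> float:
    """Evaluate a scalar B-spline at time t via de Boor's algorithm.

    `knots` may be spaced arbitrarily (non-decreasing), which is what allows
    knots to be placed at irregular timestamps instead of a fixed grid.
    """
    n = len(ctrl)
    if len(knots) != n + degree + 1:
        raise ValueError("expected len(knots) == len(ctrl) + degree + 1")
    if not knots[degree] <= t <= knots[n]:
        raise ValueError("t lies outside the valid domain [knots[degree], knots[n]]")
    # Knot span k with knots[k] <= t < knots[k+1] (the last span includes its end).
    k = degree
    while k < n - 1 and t >= knots[k + 1]:
        k += 1
    # Local support: only degree+1 control points influence the value at t.
    d = [ctrl[j + k - degree] for j in range(degree + 1)]
    for r in range(1, degree + 1):
        for j in range(degree, r - 1, -1):
            left = knots[j + k - degree]
            right = knots[j + 1 + k - r]
            alpha = (t - left) / (right - left)
            d[j] = (1.0 - alpha) * d[j - 1] + alpha * d[j]
    return d[degree]
```

A quick sanity check that holds for any knot spacing: if each control value is set to its Greville abscissa (the mean of `degree` consecutive knots starting after the control point's first knot), the spline reproduces t exactly on its valid domain.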

LiDAR-based Registration against Georeferenced Models for Globally Consistent Allocentric Maps

Dec 03, 2024

Abstract: Modern unmanned aerial vehicles (UAVs) are irreplaceable in search and rescue (SAR) missions to obtain a situational overview or provide closeups without endangering personnel. However, UAVs heavily rely on global navigation satellite systems (GNSS) for localization, which works well in open spaces, but its precision drastically degrades in the vicinity of buildings. These inaccuracies hinder the aggregation of diverse data from multiple sources in a unified georeferenced frame for SAR operators. In contrast, CityGML models provide approximate building shapes with accurate georeferenced poses. Moreover, LiDAR works best in the vicinity of 3D structures. Hence, we refine coarse GNSS measurements by registering LiDAR maps against CityGML and digital elevation map (DEM) models as a prior for allocentric mapping. An intuitive plausibility score selects the best hypothesis based on occupancy using a 2D height map. Afterwards, we integrate the registration results into a continuous-time spline-based pose graph optimizer with LiDAR odometry and further sensing modalities to obtain globally consistent, georeferenced trajectories and maps. We evaluate the viability of our approach on multiple flights captured at two distinct testing sites. Our method successfully reduced GNSS offset errors from up to 16 m to below 0.5 m on multiple flights. Furthermore, we obtain maps that are globally consistent w.r.t. prior 3D geospatial models.
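One way to read an occupancy-based plausibility score over a 2D height map: rasterize the georeferenced model (building heights plus terrain) into grid cells, shift the LiDAR map by a candidate offset, and count how many points stay out of occupied space, i.e., do not fall below the model height of their cell. The sketch below is a hypothetical interpretation, not the paper's definition; translation-only hypotheses, the tolerance `tol`, and the names `plausibility` and `best_hypothesis` are all assumptions.

```python
import math
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float, float]
Cell = Tuple[int, int]


def plausibility(points: Sequence[Point], offset: Point,
                 height_map: Dict[Cell, float],
                 cell_size: float = 1.0, tol: float = 0.5) -> float:
    """Hypothetical score: share of shifted LiDAR points that do not lie inside
    occupied space, i.e., below the model height of their 2D cell.

    Points falling into cells without model coverage count as unsupported.
    """
    if not points:
        return 0.0
    supported = 0
    for x, y, z in points:
        x, y, z = x + offset[0], y + offset[1], z + offset[2]
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        h = height_map.get(cell)
        if h is not None and z >= h - tol:
            supported += 1
    return supported / len(points)


def best_hypothesis(points: Sequence[Point], hypotheses: Sequence[Point],
                    height_map: Dict[Cell, float],
                    cell_size: float = 1.0, tol: float = 0.5) -> Point:
    """Pick the offset with the highest plausibility (the first one wins ties)."""
    return max(hypotheses,
               key=lambda o: plausibility(points, o, height_map, cell_size, tol))
```

A hypothesis that pushes roof or wall points into a building volume is penalized, which mirrors the intuition that a correct registration should not place measurements inside solid structures.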

Lessons from Robot-Assisted Disaster Response Deployments by the German Rescue Robotics Center Task Force

Dec 19, 2022

Abstract: Earthquakes, fire, and floods often cause structural collapses of buildings. However, inspecting damaged buildings poses a high risk for emergency forces or is even impossible. We present three recent selected missions of the Robotics Task Force of the German Rescue Robotics Center, in which both ground and aerial robots were used to explore destroyed buildings. We describe and reflect on the missions as well as the lessons learned from them. To make robots from research laboratories fit for real operations, realistic test environments were set up for outdoor and indoor use and tested in regular exercises by researchers and emergency forces. Based on this experience, the robots and their control software were significantly improved. Furthermore, top teams of researchers and first responders were formed, each with realistic assessments of the operational and practical suitability of robotic systems.

Real-Time Multi-Modal Semantic Fusion on Unmanned Aerial Vehicles with Label Propagation for Cross-Domain Adaptation

Oct 18, 2022

Abstract: Unmanned aerial vehicles (UAVs) equipped with multiple complementary sensors have tremendous potential for fast autonomous or remote-controlled semantic scene analysis, e.g., for disaster examination. Here, we propose a UAV system for real-time semantic inference and fusion of multiple sensor modalities. Semantic segmentation of LiDAR scans and RGB images, as well as object detection on RGB and thermal images, run online onboard the UAV computer using lightweight CNN architectures and embedded inference accelerators. We follow a late fusion approach where semantic information from multiple sensor modalities augments 3D point clouds and image segmentation masks while also generating an allocentric semantic map. Label propagation on the semantic map allows for sensor-specific adaptation with cross-modality and cross-domain supervision. Our system provides augmented semantic images and point clouds at approximately 9 Hz. We evaluate the integrated system in real-world experiments in an urban environment and at a disaster test site.
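Late fusion into an allocentric map and label propagation can be pictured with a voxel map that collects class votes from every modality; the majority label of a voxel can then serve as a pseudo-label for points of another sensor or domain. This is a minimal sketch assuming simple per-voxel majority voting (the paper may fuse class probabilities differently); `SemanticVoxelMap` and its methods are hypothetical.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, Hashable, List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]
Voxel = Tuple[int, int, int]


class SemanticVoxelMap:
    """Hypothetical allocentric semantic map with per-voxel class votes."""

    def __init__(self, voxel_size: float = 0.5) -> None:
        self.voxel_size = voxel_size
        self.votes: Dict[Voxel, Counter] = defaultdict(Counter)

    def _key(self, p: Point) -> Voxel:
        s = self.voxel_size
        return (math.floor(p[0] / s), math.floor(p[1] / s), math.floor(p[2] / s))

    def integrate(self, points: Sequence[Point], labels: Sequence[Hashable]) -> None:
        """Late fusion: add one vote per labeled point from any sensor modality."""
        for p, label in zip(points, labels):
            self.votes[self._key(p)][label] += 1

    def label_at(self, p: Point) -> Optional[Hashable]:
        """Majority label of the voxel containing p, or None if unobserved."""
        counts = self.votes.get(self._key(p))  # .get avoids creating empty voxels
        if not counts:
            return None
        return counts.most_common(1)[0][0]

    def propagate(self, points: Sequence[Point]) -> List[Optional[Hashable]]:
        """Pseudo-labels for points of another sensor or domain (cross-supervision)."""
        return [self.label_at(p) for p in points]
```

The returned pseudo-labels could then act as training targets for a model of a different modality, with `None` marking points that should be ignored in the loss.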

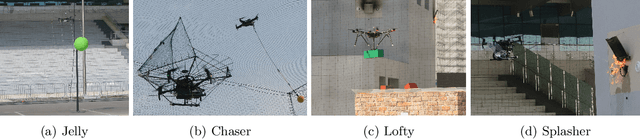

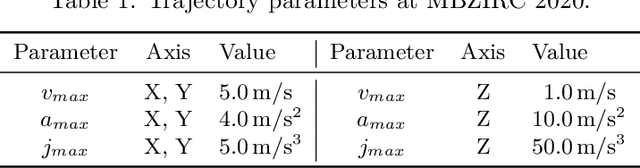

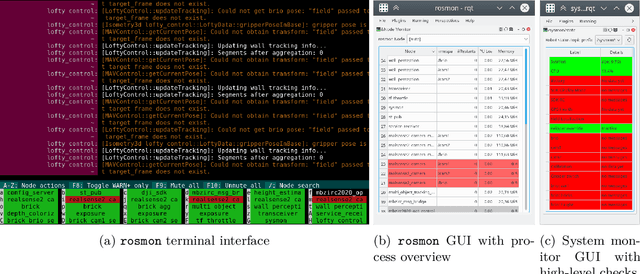

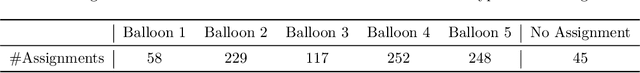

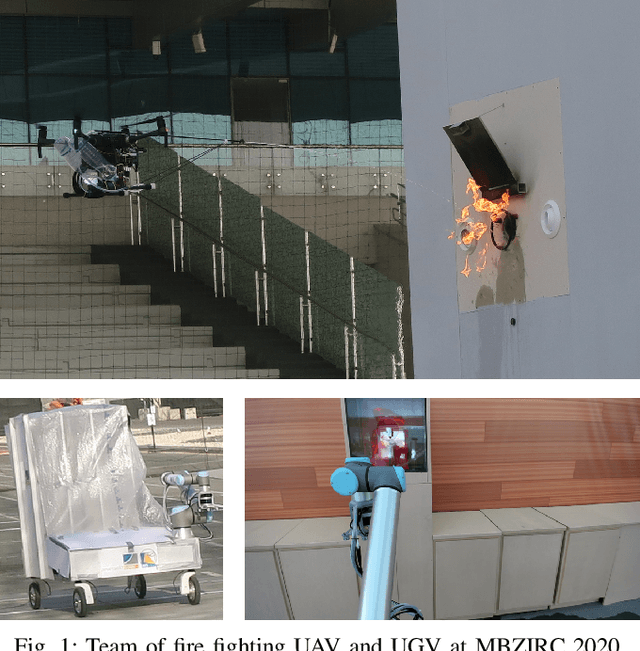

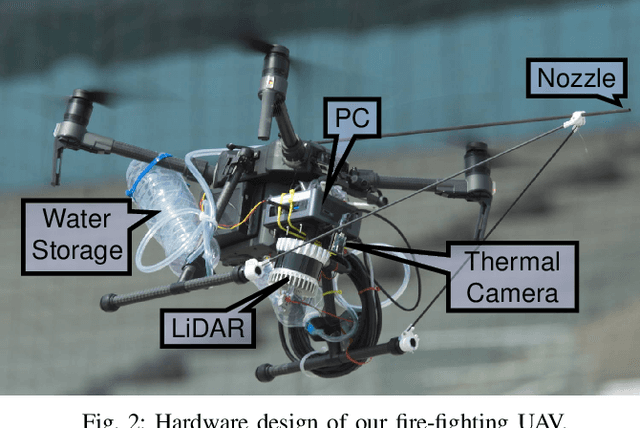

Target Chase, Wall Building, and Fire Fighting: Autonomous UAVs of Team NimbRo at MBZIRC 2020

Jan 11, 2022

Abstract: The Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2020 posed diverse challenges for unmanned aerial vehicles (UAVs). We present our four tailored UAVs, specifically developed for individual aerial-robot tasks of MBZIRC, including custom hardware and software components. In Challenge 1, a target UAV is pursued using a high-efficiency onboard object detection pipeline to capture a ball from the target UAV. A second UAV uses a similar detection method to find and pop balloons scattered throughout the arena. For Challenge 2, we demonstrate a larger UAV capable of autonomous aerial manipulation: bricks are found and tracked in camera images, then approached, picked, transported, and placed on a wall. Finally, in Challenge 3, our UAV autonomously finds fires using LiDAR and thermal cameras and extinguishes them with an onboard fire extinguisher. While every robot features task-specific subsystems, all UAVs rely on a standard software stack developed for this and future competitions. We present our mostly open-source software solutions, including tools for system configuration, monitoring, robust wireless communication, high-level control, and agile trajectory generation. To solve the MBZIRC 2020 tasks, we advanced the state of the art in multiple research areas such as machine vision and trajectory generation. We present the scientific contributions that form the foundation of our algorithms and systems and analyze the results from the MBZIRC 2020 competition in Abu Dhabi, where our systems reached second place in the Grand Challenge. Furthermore, we discuss lessons learned from our participation in this complex robotic challenge.

Real-Time Multi-Modal Semantic Fusion on Unmanned Aerial Vehicles

Aug 14, 2021

Abstract: Unmanned aerial vehicles (UAVs) equipped with multiple complementary sensors have tremendous potential for fast autonomous or remote-controlled semantic scene analysis, e.g., for disaster examination. In this work, we propose a UAV system for real-time semantic inference and fusion of multiple sensor modalities. Semantic segmentation of LiDAR scans and RGB images, as well as object detection on RGB and thermal images, run online onboard the UAV computer using lightweight CNN architectures and embedded inference accelerators. We follow a late fusion approach where semantic information from multiple modalities augments 3D point clouds and image segmentation masks while also generating an allocentric semantic map. Our system provides augmented semantic images and point clouds at approximately 9 Hz. We evaluate the integrated system in real-world experiments in an urban environment.

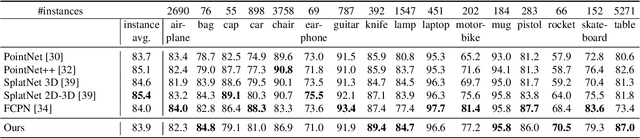

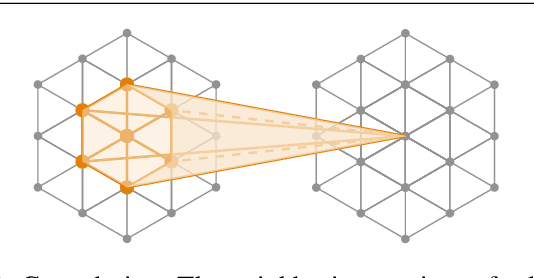

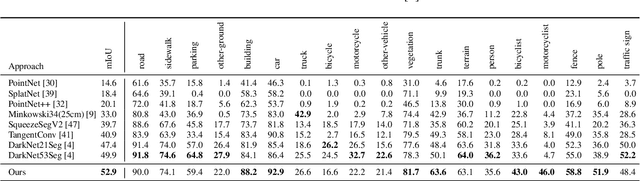

LatticeNet: Fast Spatio-Temporal Point Cloud Segmentation Using Permutohedral Lattices

Aug 09, 2021

Abstract: Deep convolutional neural networks (CNNs) have shown outstanding performance in the task of semantically segmenting images. Applying the same methods to 3D data still poses challenges due to heavy memory requirements and the lack of structured data. Here, we propose LatticeNet, a novel approach for 3D semantic segmentation, which takes raw point clouds as input. A PointNet describes the local geometry, which we embed into a sparse permutohedral lattice. The lattice allows for fast convolutions while keeping a low memory footprint. Further, we introduce DeformSlice, a novel learned data-dependent interpolation for projecting lattice features back onto the point cloud. We present results of 3D segmentation on multiple datasets, where our method achieves state-of-the-art performance. We also extend and evaluate our network for instance and dynamic object segmentation.
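The permutohedral lattice comes from Adams et al.'s work on high-dimensional filtering: a d-dimensional point is lifted onto the hyperplane of R^(d+1) whose coordinates sum to zero, and its enclosing lattice simplex (d+1 vertices) plus barycentric weights determine how features are splatted onto the lattice and sliced back. The sketch below follows that published splatting procedure with the usual per-axis position scaling omitted; LatticeNet's learned components (the PointNet embedding and DeformSlice) are not shown, and `permutohedral_embed` is a hypothetical name.

```python
import math
from typing import List, Sequence, Tuple


def permutohedral_embed(p: Sequence[float]) -> Tuple[List[float], List[Tuple[Tuple[int, ...], float]]]:
    """Embed a d-dim point into the permutohedral lattice (after Adams et al., 2010).

    Returns the elevated (d+1)-dim point and the d+1 enclosing simplex
    vertices (integer lattice keys) with their barycentric weights.
    """
    d = len(p)
    # Elevate onto H_d = {x in R^(d+1): sum(x) = 0}; feature scaling omitted.
    elevated = [0.0] * (d + 1)
    sm = 0.0
    for i in range(d, 0, -1):
        cf = p[i - 1]
        elevated[i] = sm - i * cf
        sm += cf
    elevated[0] = sm

    # Round each coordinate to the nearest multiple of d+1 (remainder-0 point).
    greedy = [0] * (d + 1)
    for i, e in enumerate(elevated):
        v = e / (d + 1)
        up = math.ceil(v) * (d + 1)
        down = math.floor(v) * (d + 1)
        greedy[i] = up if up - e < e - down else down
    s = sum(greedy) // (d + 1)  # exact: every entry is a multiple of d+1

    # Rank coordinates by their residual to identify the enclosing simplex.
    rank = [0] * (d + 1)
    for i in range(d + 1):
        for j in range(i + 1, d + 1):
            if elevated[i] - greedy[i] < elevated[j] - greedy[j]:
                rank[i] += 1
            else:
                rank[j] += 1

    # If the rounded point left H_d, move it back and shift the ranks.
    if s > 0:
        for i in range(d + 1):
            if rank[i] >= d + 1 - s:
                greedy[i] -= d + 1
                rank[i] += s - (d + 1)
            else:
                rank[i] += s
    elif s < 0:
        for i in range(d + 1):
            if rank[i] < -s:
                greedy[i] += d + 1
                rank[i] += d + 1 + s
            else:
                rank[i] += s

    # Barycentric weights from the sorted residuals.
    bary = [0.0] * (d + 2)
    for i in range(d + 1):
        delta = (elevated[i] - greedy[i]) / (d + 1)
        bary[d - rank[i]] += delta
        bary[d + 1 - rank[i]] -= delta
    bary[0] += 1.0 + bary[d + 1]

    # Vertex with remainder k: greedy point plus the canonical offset for k.
    vertices = []
    for k in range(d + 1):
        key = tuple(greedy[i] + (k if rank[i] <= d - k else k - (d + 1))
                    for i in range(d + 1))
        vertices.append((key, bary[k]))
    return elevated, vertices
```

Because every point touches only d+1 lattice vertices, which can be stored in a hash map keyed by these integer tuples, the lattice stays sparse. This is the property behind the low memory footprint mentioned in the abstract.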

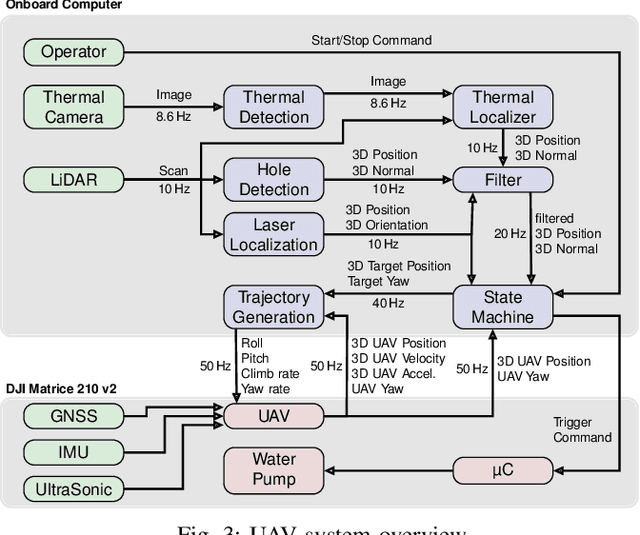

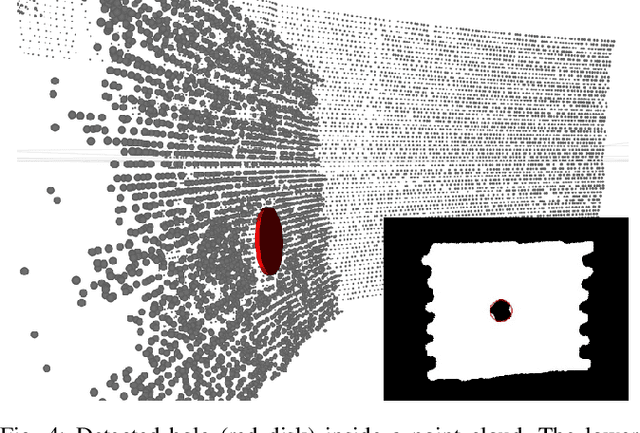

Autonomous Fire Fighting with a UAV-UGV Team at MBZIRC 2020

Jun 11, 2021

Abstract: Every day, burning buildings threaten the lives of occupants and of the first responders trying to save them. Quick action is of the essence, but some areas might be inaccessible or too dangerous to enter. Robotic systems have become a promising addition to firefighting, but at this stage, they are mostly manually controlled, which is error-prone and requires specially trained personnel. We present two systems for autonomous firefighting from the air and from the ground that we developed for the Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2020. The systems use LiDAR for reliable localization within narrow, potentially GNSS-restricted environments while maneuvering close to obstacles. Measurements from LiDAR and thermal cameras are fused to track fires, while relative navigation ensures successful extinguishing. We analyze and discuss our successful participation in MBZIRC 2020, present further experiments, and provide insights into the lessons we learned from the competition.
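A common way to fuse a thermal detection with LiDAR is to project the LiDAR points into the thermal image, take the depth of the point landing closest to the hotspot pixel, and back-project the hotspot with the pinhole model; repeated observations are then aggregated into a track. The sketch assumes calibrated intrinsics (`fx`, `fy`, `cx`, `cy`) and LiDAR points already transformed into the thermal camera frame. All helper names are hypothetical, and the running mean is a simple stand-in, not necessarily the tracking filter used by the systems described above.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def project(p: Vec3, fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float]:
    """Pinhole projection of a camera-frame point to pixel coordinates."""
    x, y, z = p
    return (fx * x / z + cx, fy * y / z + cy)


def depth_at_pixel(u: float, v: float, lidar_cam: Sequence[Vec3],
                   fx: float, fy: float, cx: float, cy: float,
                   radius_px: float = 3.0) -> Optional[float]:
    """Depth of the LiDAR point projecting closest to (u, v) within radius_px."""
    best = None
    best_d2 = radius_px ** 2
    for p in lidar_cam:
        if p[2] <= 0.0:
            continue  # behind the camera, cannot be seen
        pu, pv = project(p, fx, fy, cx, cy)
        d2 = (pu - u) ** 2 + (pv - v) ** 2
        if d2 <= best_d2:
            best, best_d2 = p[2], d2
    return best


def backproject(u: float, v: float, z: float,
                fx: float, fy: float, cx: float, cy: float) -> Vec3:
    """Inverse pinhole model: pixel (u, v) at metric depth z to a 3D point."""
    return ((u - cx) * z / fx, (v - cy) * z / fy, z)


class FireTrack:
    """Running mean of 3D fire observations (a stand-in for a proper filter)."""

    def __init__(self) -> None:
        self.n = 0
        self.mean: Tuple[float, ...] = (0.0, 0.0, 0.0)

    def update(self, p: Vec3) -> Tuple[float, ...]:
        self.n += 1
        self.mean = tuple(m + (q - m) / self.n for m, q in zip(self.mean, p))
        return self.mean
```

In practice, a tracker would also weight observations by their uncertainty and express them in a world frame, so that the relative navigation can steer towards a stable fire position.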

Team NimbRo's UGV Solution for Autonomous Wall Building and Fire Fighting at MBZIRC 2020

May 27, 2021

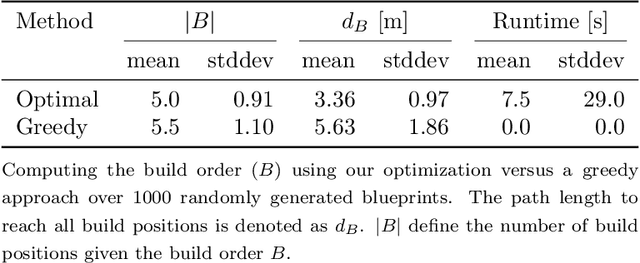

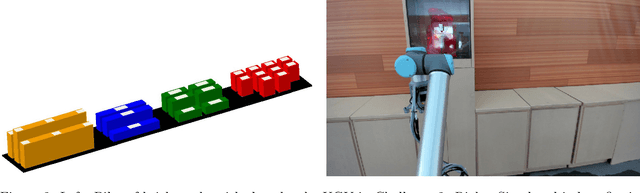

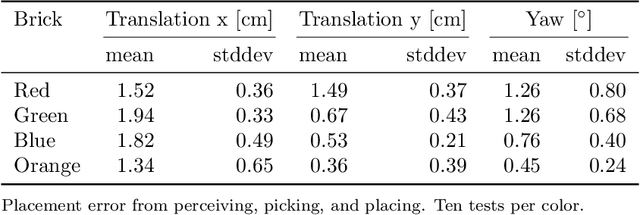

Abstract: Autonomous robotic systems for various applications, including transport, mobile manipulation, and disaster response, are becoming increasingly complex. Evaluating and analyzing such systems is challenging. Robotic competitions are designed to benchmark complete robotic systems on complex state-of-the-art tasks. Participants compete in defined scenarios under equal conditions. We present our UGV solution developed for the Mohamed Bin Zayed International Robotics Challenge 2020. Our hardware and software components addressing the challenge tasks of wall building and fire fighting are integrated into a fully autonomous system. The robot consists of a wheeled omnidirectional base, a 6 DoF manipulator arm equipped with a magnetic gripper, a highly efficient storage system to transport box-shaped objects, and a water spraying system to fight fires. The robot perceives its environment using 3D LiDAR as well as RGB and thermal camera-based perception modules. It is capable of picking box-shaped objects and constructing a predefined wall structure, as well as detecting and localizing heat sources in order to extinguish potential fires. A high-level planner solves the challenge tasks using the robot skills. We analyze and discuss our successful participation in the MBZIRC 2020 finals, present further experiments, and provide insights into our lessons learned.
